超详细从零记录Hadoop2.7.3完全分布式集群部署过程

超详细从零记录Ubuntu16.04.1 3台服务器上Hadoop2.7.3完全分布式集群部署过程。包含,Ubuntu服务器创建、远程工具连接配置、Ubuntu服务器配置、Hadoop文件配置、Hadoop格式化、启动。(首更时间2016年10月27日)

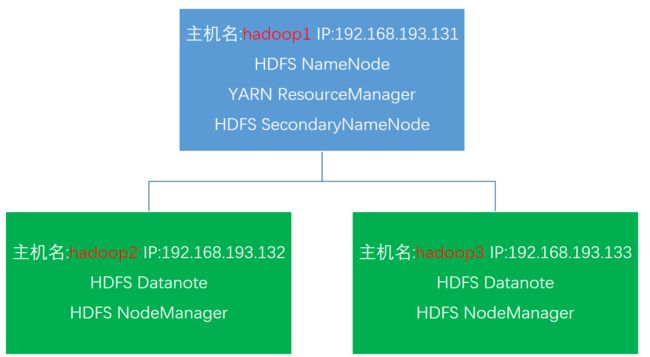

| 主机名/hostname | IP | 角色 |

|---|---|---|

| hadoop1 | 192.168.193.131 | ResourceManager/NameNode/SecondaryNameNode |

| hadoop2 | 192.168.193.132 | NodeManager/DataNode |

| hadoop3 | 192.168.193.133 | NodeManager/DataNode |

1.0.准备

1.1.目录

- 用VMware创建3个Ubuntu虚拟机

- 用mobaxterm远程连接创建好的虚拟机

- 配置Ubuntu虚拟机源、ssh无密匙登录、jdk

- 配置Hadoop集群文件(Github源码)

- 启动Hadoop集群、在Windows主机上显示集群状态。

1.2.提前准备安装包

- Windows10(宿主操作系统)

- VMware12 workstation(虚拟机)

- Ubuntu16.04.1 LTS 服务器版

- Hadoop2.7.3

- jdk1.8

- MobaXterm(远程连接工具)

- Github源码,记得start哦(CSDN博文中全部源码公开至个人github)

2. VMvare安装Ubuntu16.04.1LTS服务器版过程

2.1.注意在安装时username要一致如xiaolei,即主机用户名。而主机名hostname可不同如hadoop1,hadoop2,hadoop3.或者master,slave1,slave2.在本篇博文中用hadoop1,2,3区分hostname主机名。

2.2.VMvare安装Ubuntu16.04.1LTS桌面版过程

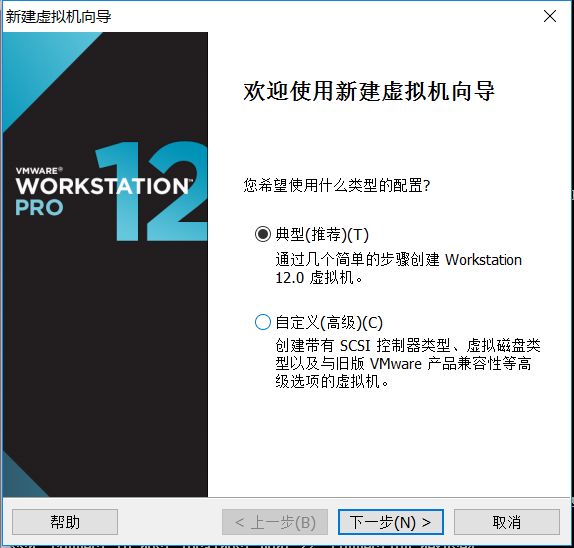

2.3.在VMvare中选择 文件然后 新建虚拟机

2.4选择典型安装

2.5.选择下载好的Ubuntu64位 16.04.1 LTS服务器版镜像

2.6.个性化Linux设置

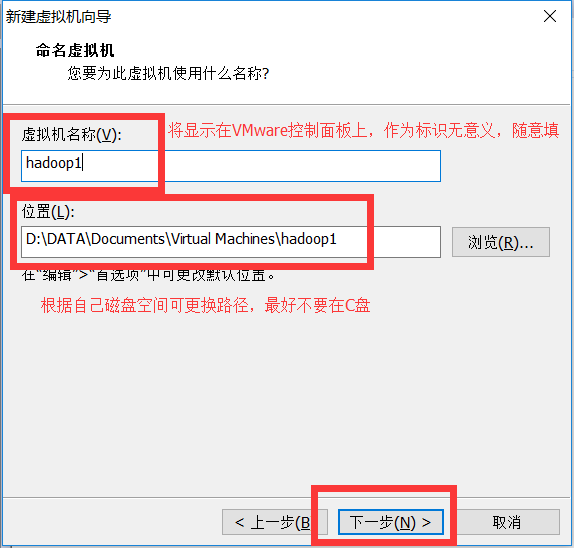

2.7.虚拟机命名及文件路径 wangxiaolei \ hadoop1等 随意可更换

2.8.磁盘分配,默认即可,磁盘大小可以根据自身硬盘空间调节(不要太小)

2.9.然后就是等待安装完成,输入登录名 xiaolei 登录密码****…

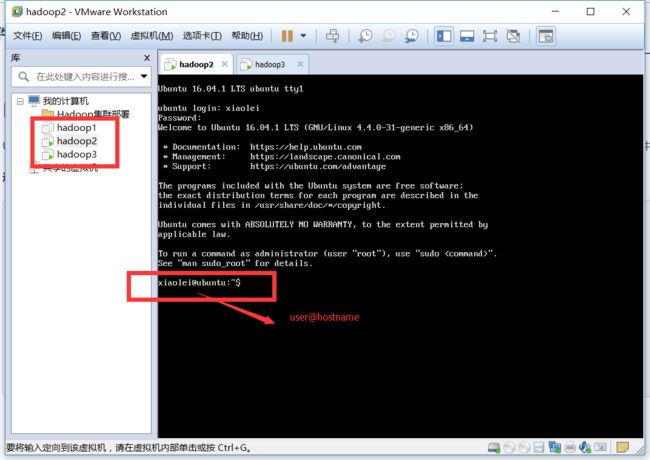

建立好的虚拟机如下

通过ipconfig命令查看服务器ip地址

IP 192.168.193.131 默认主机名ubuntu

IP 192.168.193.132 默认主机名ubuntu

IP 192.168.193.133 默认主机名ubuntu

下一步会修改主机名hostname

3. 配置Ubuntu系统(服务器版在VMware中操作不方便,通过远程在putty或者MobaXterm操作比较快捷些)

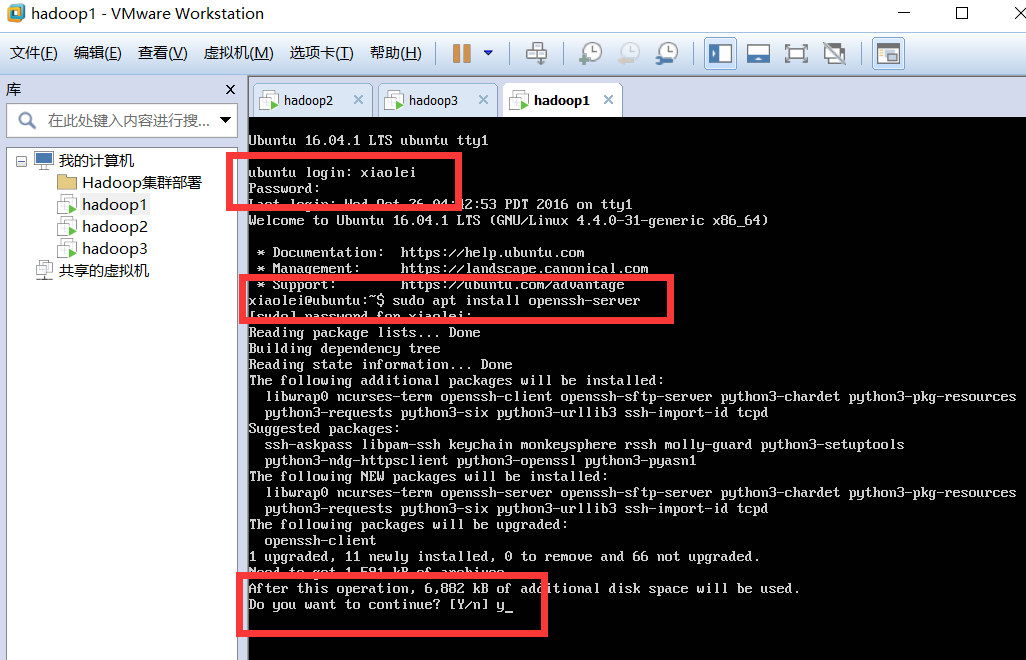

3.1 安装ssh即可。这里不需要 ssh-keygen。

打开终端或者服务器版命令行

查看是否安装(ssh)openssh-server,否则无法远程连接。

sshd

sudo apt install openssh-server

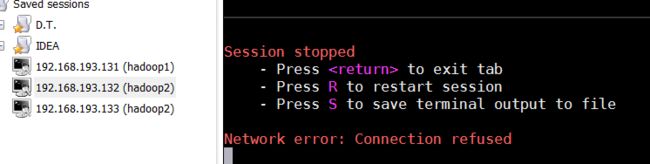

3.2.安装ssh后,可以通过工具(putty或者MobaXterm)远程连接已经建立好的服务器(Hadoop1,Hadoop2,Hadoop3)

同样三个虚拟机建立连接

3.3.更换为国内源(清华大学帮助文档)

在Hadoop1、Hadoop2、Hadoop3中

xiaolei@ubuntu:~$ sudo vi /etc/apt/sources.list

# 默认注释了源码镜像以提高 apt update 速度,如有需要可自行取消注释

deb https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial main restricted universe multiverse

# deb-src https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial main main restricted universe multiverse

deb https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-updates main restricted universe multiverse

# deb-src https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-updates main restricted universe multiverse

deb https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-backports main restricted universe multiverse

# deb-src https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-backports main restricted universe multiverse

deb https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-security main restricted universe multiverse

# deb-src https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-security main restricted universe multiverse

# 预发布软件源,不建议启用

# deb https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-proposed main restricted universe multiverse

# deb-src https://mirrors.tuna.tsinghua.edu.cn/ubuntu/ xenial-proposed main restricted universe multiverse

更新源

xiaolei@ubuntu:~$ sudo apt update

3.4.安装vim编辑器,默认自带vi编辑器

sudo apt install vim

更新系统(服务器端更新量小,桌面版Ubuntu更新量较大,可以暂时不更新)

sudo apt-get upgrade

3.5.修改Ubuntu服务器hostname主机名,主机名和ip是一一对应的。

#在192.168.193.131

xiaolei@ubuntu:~$ sudo hostname hadoop1

#在192.168.193.131

xiaolei@ubuntu:~$ sudo hostname hadoop2

#在192.168.193.131

xiaolei@ubuntu:~$ sudo hostname hadoop3

#断开远程连接,重新连接即可看到已经改变了主机名。

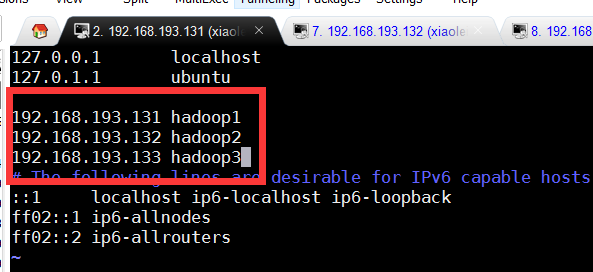

3.6.增加hosts文件中ip和主机名对应字段

在Hadoop1,2,3中

xiaolei@hadoop1:~$ sudo vim /etc/hosts

192.168.193.131 hadoop1

192.168.193.132 hadoop2

192.168.193.133 hadoop3

3.7.更改系统时区(将时间同步更改为北京时间)

xiaolei@hadoop1:~$ date

Wed Oct 26 02:42:08 PDT 2016

xiaolei@hadoop1:~$ sudo tzselect

根据提示选择Asia``````China``````Beijing Time``````yes

最后将Asia/Shanghai shell scripts 复制到/etc/localtime

xiaolei@hadoop1:~$ sudo cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

xiaolei@ubuntu:~$ date

Wed Oct 26 17:45:30 CST 2016

4. Hadoop集群完全分布式部署过程

- JDK配置

- Hadoop集群部署

4.1.安装JDK1.8 (配置源码Github,记得start哦)

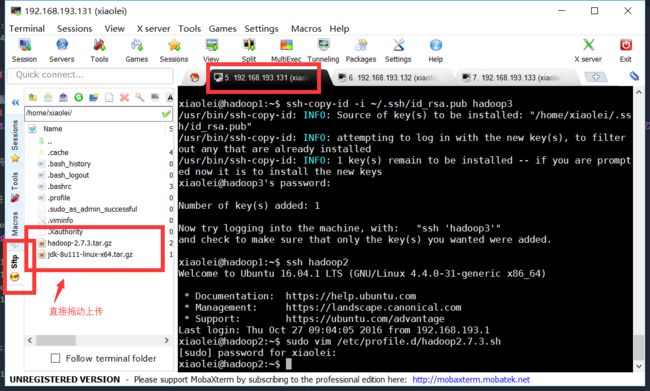

4.1.1将所需文件(Hadoop2.7.3、JDK1.8)上传至Hadoop1服务器(可以直接复制粘贴)

4.1.2.解压缩并将jdk放置/opt路径下

xiaolei@hadoop1:~$ tar -zxf jdk-8u111-linux-x64.tar.gz

hadoop1@hadoop1:~$ sudo mv jdk1.8.0_111 /opt/

[sudo] password for hadoop1:

xiaolei@hadoop1:~$

4.1.3.配置环境变量

编写环境变量脚本并使其生效

xiaolei@hadoop1:~$ sudo vim /etc/profile.d/jdk1.8.sh

输入内容(或者在我的github上下载jdk环境配置脚本源码)

#!/bin/sh

# author:wangxiaolei 王小雷

# blog:http://blog.csdn.net/dream_an

# date:20161027

export JAVA_HOME=/opt/jdk1.8.0_111

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

xiaolei@hadoop1:~$ source /etc/profile

4.1.4.验证jdk成功安装

xiaolei@hadoop1:~$ java -version

java version "1.8.0_111"

Java(TM) SE Runtime Environment (build 1.8.0_111-b14)

Java HotSpot(TM) 64-Bit Server VM (build 25.111-b14, mixed mode)

4.1.5.同样方法安装其他集群机器。

也可通过scp命令

#注意后面带 : 默认是/home/xiaolei路径下

xiaolei@hadoop1:~$ scp jdk-8u111-linux-x64.tar.gz hadoop2:

命令解析:scp远程复制 -r递归 本机文件地址app是文件,里面包含jdk、Hadoop包 远程主机名@远程主机ip:远程文件地址

4.2.集群ssh无密匙登录

4.2.1.在hadoop1,hadoop2,hadoop3中执行

sudo apt install ssh

sudo apt install rsync

xiaolei@ubuntu:~$ ssh-keygen -t rsa //一路回车就好

4.2.2.在 Hadoop1(master角色) 执行,将/.ssh/下的id_rsa.pub公私作为认证发放到hadoop1,hadoop2,hadoop3的/.ssh/下

ssh-copy-id -i ~/.ssh/id_rsa.pub hadoop1

ssh-copy-id -i ~/.ssh/id_rsa.pub hadoop2

ssh-copy-id -i ~/.ssh/id_rsa.pub hadoop3

4.2.3.然后在 Hadoop1 上登录其他Linux服务器不需要输入密码即成功。

#不需要输入密码

ssh hadoop2

5.hadoop完全分布式集群文件配置和启动

在hadoop1上配置完成后将Hadoop包直接远程复制scp到其他Linux主机即可。

Linux主机Hadoop集群完全分布式分配

5.1.Hadoop主要文件配置(Github源码地址)

5.1.1.在Hadoop1,2,3中配置Hadoop环境变量

xiaolei@hadoop2:~$ sudo vim /etc/profile.d/hadoop2.7.3.sh

输入

#!/bin/sh

# Author:wangxiaolei 王小雷

# Blog:http://blog.csdn.net/dream_an

# Github:https://github.com/wxiaolei

# Date:20161027

# Path:/etc/profile.d/hadoop2.7.3.sh

export HADOOP_HOME="/opt/hadoop-2.7.3"

export PATH="$HADOOP_HOME/bin:$PATH"

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export YARN_CONF_DIR=$HADOOP_HOME/etc/hadoop

5.1.2.配置 hadoop-env.sh 增加如下内容

export JAVA_HOME=/opt/jdk1.8.0_111

5.1.3.配置slaves文件,增加slave主机名

hadoop2

hadoop3

5.1.4.配置 core-site.xml

fs.defaultFS

hdfs://Hadoop1:9000

io.file.buffer.size

131072

hadoop.tmp.dir

/home/xiaolei/hadoop/tmp

5.1.5.配置 hdfs-site.xml

dfs.namenode.secondary.http-address

hadoop1:50090

dfs.replication

2

dfs.namenode.name.dir

file:/home/xiaolei/hadoop/hdfs/name

dfs.datanode.data.dir

file:/home/xiaolei/hadoop/hdfs/data

5.1.6.配置yarn-site.xml

yarn.nodemanager.aux-services

mapreduce_shuffle

yarn.resourcemanager.address

hadoop1:8032

yarn.resourcemanager.scheduler.address

hadoop1:8030

yarn.resourcemanager.resource-tracker.address

hadoop1:8031

yarn.resourcemanager.admin.address

hadoop1:8033

yarn.resourcemanager.webapp.address

hadoop1:8088

5.1.7.配置mapred-site.xml

mapreduce.framework.name

yarn

mapreduce.jobhistory.address

hadoop1:10020

mapreduce.jobhistory.address

hadoop1:19888

5.1.8.复制Hadoop配置好的包到其他Linux主机

xiaolei@hadoop1:~$ scp -r hadoop-2.7.3 hadoop3:

将每个Hadoop包sudo mv移动到/opt/路径下。不要sudo cp,注意权限。

xiaolei@hadoop1:sudo mv hadoop-2.7.3 /opt/

5.2.格式化节点

在hadoop1上执行

xiaolei@hadoop1:/opt/hadoop-2.7.3$ hdfs namenode -format

5.3.hadoop集群全部启动

5.3.1. 在Hadoop1上执行

xiaolei@hadoop1:/opt/hadoop-2.7.3/sbin$ ./start-all.sh

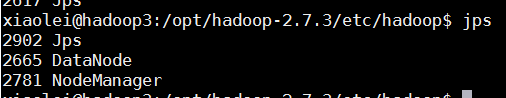

5.3.2.其他主机上jps

5.3.3.在主机上查看,博主是Windows10,直接在浏览器中输入hadoop1 集群地址即可。

http://192.168.193.131:8088/

5.3.4. Github源码位置——超详细从零记录Hadoop2.7.3完全分布式集群部署过程

5.4.可能问题:

权限问题:

chown -R xiaolei:xiaolei hadoop-2.7.3

解析:将hadoop-2.7.3文件属主、组更换为xiaolei:xiaolei

chmod 777 hadoop

解析:将hadoop文件权限变成421 421 421 可写、可读可、执行即 7 7 7

查看是否安装openssh-server

ssd

或者

ps -e|grep ssh

安装 openssh-server

sudo apt install openssh-server

问题解决:

问题

Network error: Connection refused

解决安装

Network error: Connection refused