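Cloudera provides a scalable, flexible, integrated platform that makes it easy to manage the rapidly growing volume and variety of data in an enterprise. Cloudera products and solutions let you deploy and manage Apache Hadoop and related projects, manipulate and analyze data, and keep that data secure and protected.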

Prerequisites

One CentOS 7.x host

Target

Deploy a CDH pseudo-distributed Hadoop cluster

Deployed version

[root@localhost ~]# hadoop version

Hadoop 2.6.0-cdh5.13.1

Subversion http://github.com/cloudera/hadoop -r 0061e3eb8ab164e415630bca11d299a7c2ec74fd

Compiled by jenkins on 2017-11-09T16:34Z

Compiled with protoc 2.5.0

From source with checksum 16d5272b34af2d8a4b4b7ee8f7c4cbe

This command was run using /usr/lib/hadoop/hadoop-common-2.6.0-cdh5.13.1.jar

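I happened to come across the pseudo-distributed installation tutorial on the CDH website,

so I am writing it up here as notes.

Start the deployment

(The author uses CentOS 7.x as the example.)

1.Java environment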

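#Download JDK 8u161 (1.8.0_161) from oracle.com; -O keeps the query string out of the saved file name

$ wget -O jdk-8u161-linux-x64.rpm "http://download.oracle.com/otn-pub/java/jdk/8u161-b12/2f38c3b165be4555a1fa6e98c45e0808/jdk-8u161-linux-x64.rpm?AuthParam=1516458261_e7574995a6546eeecbe0e4e901bc61a8"

#The URL above may stop working once the session expires;

#in that case, download the file again from the official site

$ rpm -ivh jdk-8u161-linux-x64.rpm

Set JAVA_HOME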

$ vim ~/.bashrc

#Add JAVA_HOME

export JAVA_HOME=/usr/java/jdk1.8.0_161

#Save and exit

$ source ~/.bashrc
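Editing ~/.bashrc by hand works, but running the steps twice easily leaves duplicate export lines. Below is a minimal sketch of a non-interactive alternative; the helper name add_export_once is made up for this note and is not part of any CDH tooling.

```shell
# Append "export NAME=VALUE" to a shell rc file only if that exact line
# is not already present.
# Usage: add_export_once NAME VALUE [RC_FILE]   (RC_FILE defaults to ~/.bashrc)
add_export_once() {
    name=$1
    value=$2
    rc_file=${3:-"$HOME/.bashrc"}
    line="export $name=$value"
    touch "$rc_file"
    # -x matches the whole line, -F treats the pattern as a fixed string
    if ! grep -qxF -- "$line" "$rc_file"; then
        printf '%s\n' "$line" >> "$rc_file"
    fi
}

# Example (not run automatically):
#   add_export_once JAVA_HOME /usr/java/jdk1.8.0_161
#   source ~/.bashrc
```

The same helper could be reused for any other variable that has to go into ~/.bashrc.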

2.Download the CDH 5 Package

$ wget http://archive.cloudera.com/cdh5/one-click-install/redhat/6/x86_64/cloudera-cdh-5-0.x86_64.rpm

$ yum --nogpgcheck localinstall cloudera-cdh-5-0.x86_64.rpm

#For instructions on how to add a CDH 5 yum repository or build your own CDH 5 yum repository, see the Cloudera CDH 5 installation documentation

3.Install CDH 5

#Add a repository key

$ rpm --import http://archive.cloudera.com/cdh5/redhat/7/x86_64/cdh/RPM-GPG-KEY-cloudera

#Install Hadoop in pseudo-distributed mode with YARN:

$ yum install hadoop-conf-pseudo -y

4.Starting Hadoop

Check where the installed files are placed by default

[root@localhost ~]# rpm -ql hadoop-conf-pseudo

/etc/hadoop/conf.pseudo

/etc/hadoop/conf.pseudo/README

/etc/hadoop/conf.pseudo/core-site.xml

/etc/hadoop/conf.pseudo/hadoop-env.sh

/etc/hadoop/conf.pseudo/hadoop-metrics.properties

/etc/hadoop/conf.pseudo/hdfs-site.xml

/etc/hadoop/conf.pseudo/log4j.properties

/etc/hadoop/conf.pseudo/mapred-site.xml

/etc/hadoop/conf.pseudo/yarn-site.xml

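No changes are needed; start the deployment

Step 1. Format the NameNode with hdfs namenode -format (Cloudera's guide runs this as the hdfs user, i.e. sudo -u hdfs hdfs namenode -format; the session below was run as root)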

[root@localhost ~]# hdfs namenode -format

18/01/21 00:13:39 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: user = root

..........................................

18/01/21 00:13:41 INFO common.Storage: Storage directory /var/lib/hadoop-hdfs/cache/root/dfs/name has been successfully formatted.

...........................................

18/01/21 00:13:41 INFO util.ExitUtil: Exiting with status 0

18/01/21 00:13:41 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at localhost/127.0.0.1

************************************************************/
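Formatting an existing NameNode directory again wipes the HDFS namespace, so it can help to check first whether the directory is already formatted. This is only a sketch: the function name is made up for this note, and it assumes the usual layout in which a formatted storage directory contains current/VERSION. The path in the usage comment is the one printed in the log above.

```shell
# Return 0 if the given NameNode storage directory looks formatted,
# i.e. it contains current/VERSION (assumed layout), and non-zero otherwise.
namenode_is_formatted() {
    name_dir=$1
    [ -f "$name_dir/current/VERSION" ]
}

# Example (not run automatically):
#   namenode_is_formatted /var/lib/hadoop-hdfs/cache/root/dfs/name \
#       || hdfs namenode -format
```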

Step 2: Start the HDFS cluster

for x in `cd /etc/init.d ; ls hadoop-hdfs-*` ; do sudo service $x start ; done

[root@localhost ~]# for x in `cd /etc/init.d ; ls hadoop-hdfs-*` ; do sudo service $x start ; done

starting datanode, logging to /var/log/hadoop-hdfs/hadoop-hdfs-datanode-localhost.out

Started Hadoop datanode (hadoop-hdfs-datanode): [ OK ]

starting namenode, logging to /var/log/hadoop-hdfs/hadoop-hdfs-namenode-localhost.out

Started Hadoop namenode: [ OK ]

starting secondarynamenode, logging to /var/log/hadoop-hdfs/hadoop-hdfs-secondarynamenode-localhost.out

Started Hadoop secondarynamenode: [ OK ]

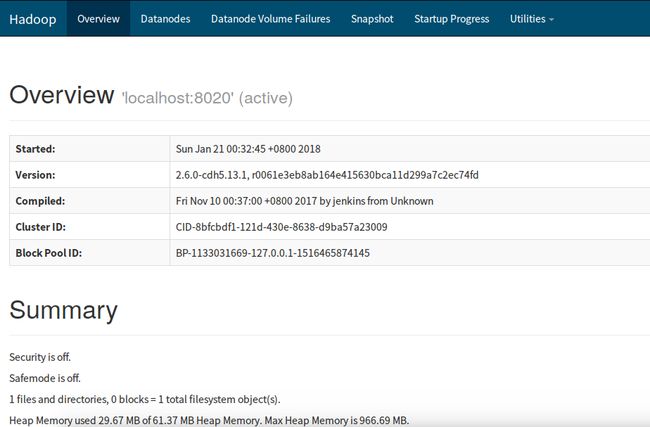

#To confirm that the services have started, use the jps command or check the web UI: http://localhost:50070
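The start loop in this step feeds ls output through backticks and keeps going even if one service fails to start. A slightly more defensive variant, sketched here with made-up helper names (list_services, start_services), globs the init scripts directly and stops at the first failure.

```shell
# Print the names of the init scripts in DIR whose names start with PREFIX.
list_services() {
    dir=$1
    prefix=$2
    for path in "$dir"/"$prefix"*; do
        # Skip the unexpanded pattern when nothing matches
        [ -e "$path" ] || continue
        basename "$path"
    done
}

# Start every matching service and stop at the first one that fails.
start_services() {
    for svc in $(list_services "$1" "$2"); do
        sudo service "$svc" start || return 1
    done
}

# Example (not run automatically):
#   start_services /etc/init.d hadoop-hdfs-
```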

Step 3: Create the directories needed for Hadoop processes.

/usr/lib/hadoop/libexec/init-hdfs.sh

[root@localhost ~]# /usr/lib/hadoop/libexec/init-hdfs.sh

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /tmp'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chmod -R 1777 /tmp'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /var'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /var/log'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chmod -R 1775 /var/log'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chown yarn:mapred /var/log'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /tmp/hadoop-yarn'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chown -R mapred:mapred /tmp/hadoop-yarn'

....................................

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /user/oozie/share/lib/sqoop'

+ ls '/usr/lib/hive/lib/*.jar'

+ ls /usr/lib/hadoop-mapreduce/hadoop-streaming-2.6.0-cdh5.13.1.jar /usr/lib/hadoop-mapreduce/hadoop-streaming.jar

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -put /usr/lib/hadoop-mapreduce/hadoop-streaming*.jar /user/oozie/share/lib/mapreduce-streaming'

+ ls /usr/lib/hadoop-mapreduce/hadoop-distcp-2.6.0-cdh5.13.1.jar /usr/lib/hadoop-mapreduce/hadoop-distcp.jar

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -put /usr/lib/hadoop-mapreduce/hadoop-distcp*.jar /user/oozie/share/lib/distcp'

+ ls '/usr/lib/pig/lib/*.jar' '/usr/lib/pig/*.jar'

+ ls '/usr/lib/sqoop/lib/*.jar' '/usr/lib/sqoop/*.jar'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chmod -R 777 /user/oozie'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chown -R oozie /user/oozie'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /var/lib/hadoop-hdfs/cache/mapred/mapred/staging'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chmod 1777 /var/lib/hadoop-hdfs/cache/mapred/mapred/staging'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chown -R mapred /var/lib/hadoop-hdfs/cache/mapred'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -mkdir -p /user/spark/applicationHistory'

+ su -s /bin/bash hdfs -c '/usr/bin/hadoop fs -chown spark /user/spark/applicationHistory'

Step 4: Verify the HDFS file structure with hadoop fs -ls -R /

[root@localhost ~]# sudo -u hdfs hadoop fs -ls -R /

drwxrwxrwx - hdfs supergroup 0 2018-01-20 16:42 /benchmarks

drwxr-xr-x - hbase supergroup 0 2018-01-20 16:42 /hbase

drwxrwxrwt - hdfs supergroup 0 2018-01-20 16:41 /tmp

drwxrwxrwt - mapred mapred 0 2018-01-20 16:42 /tmp/hadoop-yarn

drwxrwxrwt - mapred mapred 0 2018-01-20 16:42 /tmp/hadoop-yarn/staging

drwxrwxrwt - mapred mapred 0 2018-01-20 16:42 /tmp/hadoop-yarn/staging/history

drwxrwxrwt - mapred mapred 0 2018-01-20 16:42 /tmp/hadoop-yarn/staging/history/done_intermediate

drwxr-xr-x - hdfs supergroup 0 2018-01-20 16:44 /user

drwxr-xr-x - mapred supergroup 0 2018-01-20 16:42 /user/history

drwxrwxrwx - hive supergroup 0 2018-01-20 16:42 /user/hive

drwxrwxrwx - hue supergroup 0 2018-01-20 16:43 /user/hue

drwxrwxrwx - jenkins supergroup 0 2018-01-20 16:42 /user/jenkins

drwxrwxrwx - oozie supergroup 0 2018-01-20 16:43 /user/oozie

................

Step 5: Start YARN

Start the YARN ResourceManager, the NodeManager, and the MapReduce JobHistory Server

service hadoop-yarn-resourcemanager start

service hadoop-yarn-nodemanager start

service hadoop-mapreduce-historyserver start

[root@localhost ~]# service hadoop-yarn-resourcemanager start

starting resourcemanager, logging to /var/log/hadoop-yarn/yarn-yarn-resourcemanager-localhost.out

Started Hadoop resourcemanager: [ OK ]

[root@localhost ~]# service hadoop-yarn-nodemanager start

starting nodemanager, logging to /var/log/hadoop-yarn/yarn-yarn-nodemanager-localhost.out

Started Hadoop nodemanager: [ OK ]

[root@localhost ~]# service hadoop-mapreduce-historyserver start

starting historyserver, logging to /var/log/hadoop-mapreduce/mapred-mapred-historyserver-localhost.out

STARTUP_MSG: java = 1.8.0_161

Started Hadoop historyserver: [ OK ]

Use jps to check whether the services have started.

[root@localhost ~]# jps

5232 ResourceManager

3425 SecondaryNameNode

5906 Jps

5827 JobHistoryServer

3286 NameNode

5574 NodeManager

3162 DataNode
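Reading the jps listing by eye is fine for one host, but the check is easy to script. The sketch below reads jps output on stdin and prints every expected daemon that does not appear; the function name missing_daemons is made up for this note.

```shell
# Read `jps` output on stdin and print each expected daemon that is missing.
# Returns 0 when every daemon is present, 1 otherwise.
# Usage: jps | missing_daemons NAME...
missing_daemons() {
    listing=$(cat)
    status=0
    for daemon in "$@"; do
        # jps prints "<pid> <ClassName>"; compare the second field exactly so
        # that NameNode does not match SecondaryNameNode
        if ! printf '%s\n' "$listing" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            printf '%s\n' "$daemon"
            status=1
        fi
    done
    return $status
}

# Example (not run automatically):
#   jps | missing_daemons NameNode SecondaryNameNode DataNode \
#       ResourceManager NodeManager JobHistoryServer
```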

Step 6: Create a user directory

[root@localhost ~]# sudo -u hdfs hadoop fs -mkdir /taroballs/

[root@localhost ~]# hadoop fs -ls /

Found 6 items

drwxrwxrwx - hdfs supergroup 0 2018-01-20 16:42 /benchmarks

drwxr-xr-x - hbase supergroup 0 2018-01-20 16:42 /hbase

drwxr-xr-x - hdfs supergroup 0 2018-01-20 16:48 /taroballs

drwxrwxrwt - hdfs supergroup 0 2018-01-20 16:41 /tmp

drwxr-xr-x - hdfs supergroup 0 2018-01-20 16:44 /user

drwxr-xr-x - hdfs supergroup 0 2018-01-20 16:44 /var

Run a simple example on YARN

#First, create an input directory as the root user (a relative path resolves under /user/root)

[root@localhost ~]# hadoop fs -mkdir input

[root@localhost ~]# hadoop fs -ls /user/root/

Found 1 items

drwxr-xr-x - root supergroup 0 2018-01-20 17:51 /user/root/input

#Then put some files into it

[root@localhost ~]# hadoop fs -put /etc/hadoop/conf/*.xml input/

[root@localhost ~]# hadoop fs -ls input/

Found 4 items

-rw-r--r-- 1 root supergroup 2133 2018-01-20 17:54 input/core-site.xml

-rw-r--r-- 1 root supergroup 2324 2018-01-20 17:54 input/hdfs-site.xml

-rw-r--r-- 1 root supergroup 1549 2018-01-20 17:54 input/mapred-site.xml

-rw-r--r-- 1 root supergroup 2375 2018-01-20 17:54 input/yarn-site.xml

Set HADOOP_MAPRED_HOME

[root@localhost ~]# vim ~/.bashrc

#Add the HADOOP_MAPRED_HOME

export HADOOP_MAPRED_HOME=/usr/lib/hadoop-mapreduce

#Save and exit

[root@localhost ~]# source ~/.bashrc

Run the Hadoop MapReduce example

hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar grep input outputroot23 'dfs[a-z.]+'

#Run the Hadoop example

[root@localhost ~]# hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar grep input outputroot23 'dfs[a-z.]+'

18/01/20 17:55:54 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

18/01/20 17:55:55 WARN mapreduce.JobResourceUploader: No job jar file set. User classes may not be found. See Job or Job#setJar(String).

18/01/20 17:55:55 INFO input.FileInputFormat: Total input paths to process : 4

18/01/20 17:56:44 INFO mapreduce.Job: Job job_1516438047064_0004 running in uber mode : false

18/01/20 17:56:44 INFO mapreduce.Job: map 0% reduce 0%

18/01/20 17:56:51 INFO mapreduce.Job: map 100% reduce 0%

18/01/20 17:56:59 INFO mapreduce.Job: map 100% reduce 100%

18/01/20 17:56:59 INFO mapreduce.Job: Job job_1516438047064_0004 completed successfully

18/01/20 17:56:59 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=330

FILE: Number of bytes written=287357

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=599

HDFS: Number of bytes written=244

HDFS: Number of read operations=7

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=4358

Total time spent by all reduces in occupied slots (ms)=4738

Total time spent by all map tasks (ms)=4358

Total time spent by all reduce tasks (ms)=4738

Total vcore-milliseconds taken by all map tasks=4358

Total vcore-milliseconds taken by all reduce tasks=4738

Total megabyte-milliseconds taken by all map tasks=4462592

Total megabyte-milliseconds taken by all reduce tasks=4851712

Map-Reduce Framework

Map input records=10

Map output records=10

Map output bytes=304

Map output materialized bytes=330

Input split bytes=129

Combine input records=0

Combine output records=0

Reduce input groups=1

Reduce shuffle bytes=330

Reduce input records=10

Reduce output records=10

Spilled Records=20

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=161

CPU time spent (ms)=1320

Physical memory (bytes) snapshot=328933376

Virtual memory (bytes) snapshot=5055086592

Total committed heap usage (bytes)=170004480

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=470

File Output Format Counters

Bytes Written=244

Result

[root@localhost ~]# hadoop fs -ls

Found 2 items

drwxr-xr-x - root supergroup 0 2018-01-20 17:54 input

drwxr-xr-x - root supergroup 0 2018-01-20 17:56 outputroot23

[root@localhost ~]# hadoop fs -ls outputroot23

Found 2 items

-rw-r--r-- 1 root supergroup 0 2018-01-20 17:56 outputroot23/_SUCCESS

-rw-r--r-- 1 root supergroup 244 2018-01-20 17:56 outputroot23/part-r-00000

[root@localhost ~]# hadoop fs -cat outputroot23/part-r-00000

1 dfs.safemode.min.datanodes

1 dfs.safemode.extension

1 dfs.replication

1 dfs.namenode.name.dir

1 dfs.namenode.checkpoint.dir

1 dfs.domain.socket.path

1 dfs.datanode.hdfs

1 dfs.datanode.data.dir

1 dfs.client.read.shortcircuit

1 dfs.client.file

[root@localhost ~]#
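Two details matter when the example is re-run: MapReduce refuses to start if the output directory already exists, and a finished job marks success with the _SUCCESS file seen in the listing above. The sketch below wraps both checks using hadoop fs -test and hadoop fs -rm -r. The function names and the HADOOP_CMD override are made up for this note; the override only exists so the functions can be dry-run without a cluster.

```shell
# Command used to talk to HDFS; override it to dry-run without a cluster.
HADOOP_CMD=${HADOOP_CMD:-hadoop}

# Remove an HDFS output directory left over from a previous run, if present.
clear_output_dir() {
    if "$HADOOP_CMD" fs -test -d "$1"; then
        "$HADOOP_CMD" fs -rm -r "$1"
    fi
}

# Succeed only if the job wrote its _SUCCESS marker into the output directory.
job_succeeded() {
    "$HADOOP_CMD" fs -test -e "$1/_SUCCESS"
}

# Example (not run automatically):
#   clear_output_dir outputroot23
#   hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar \
#       grep input outputroot23 'dfs[a-z.]+'
#   job_succeeded outputroot23 && hadoop fs -cat outputroot23/part-r-00000
```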

All done! The CDH pseudo-distributed Hadoop cluster is up and running. If you spot any mistakes, corrections are welcome.