hadoop 2.8.5完全分布式环境搭建

zookeeper集群文档:https://zookeeper.apache.org/doc/r3.4.13/zookeeperAdmin.html

namenode HA文档:

https://hadoop.apache.org/docs/r2.8.5/hadoop-project-dist/hadoop-hdfs/HDFSHighAvailabilityWithQJM.html

1.下载zookeeper

[root@node1 ~]# wget http://mirror.bit.edu.cn/apache/zookeeper/stable/zookeeper-3.4.13.tar.gz

[root@node1 ~]# tar xvf zookeeper-3.4.13.tar.gz -C /opt/

[root@node1 ~]# cd /opt/zookeeper-3.4.13/conf/

[root@node1 conf]# vim zoo.cfg

tickTime=2000 dataDir=/opt/zookeeper-3.4.13/data clientPort=2181 initLimit=5 syncLimit=2 server.1=node1:2888:3888 server.2=node2:2888:3888 server.3=node3:2888:3888

[root@node1 conf]# mkdir /opt/zookeeper-3.4.13/data

[root@node1 conf]# cd /opt/zookeeper-3.4.13/data --myid必须要在data目录下面,否则会报错

[root@node1 data]# cat myid

1

[root@node1 zookeeper-3.4.13]# cd ..

[root@node1 opt]# scp -r zookeeper-3.4.13 node2:/opt/

[root@node1 opt]# scp -r zookeeper-3.4.13 node3:/opt/

2.在node2修改myid文件

[root@node2 opt]# cat /opt/zookeeper-3.4.13/data/myid

2

[root@node2 opt]#

3.在node3修改myid文件

[root@node3 ~]# cat /opt/zookeeper-3.4.13/data/myid

3

[root@node3 ~]# zkServer.sh start --每个节点都要启动zookeeper服务

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper-3.4.13/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[root@node3 opt]# zkCli.sh --使用客户端登陆

4.下载解压hadoop安装包(在各节点上安装jdk和hadoop)

[root@node1 ~]# wget https://download.oracle.com/otn-pub/java/jdk/8u202-b08/1961070e4c9b4e26a04e7f5a083f551e/jdk-8u202-linux-x64.rpm?AuthParam=1552723272_02cde009ff2384cfcf01e2c377d085cc

[root@node1 ~]# wget http://mirrors.shu.edu.cn/apache/hadoop/common/hadoop-2.8.5/hadoop-2.8.5.tar.gz

[root@node1 ~]# scp jdk-8u202-linux-x64.rpm node2:/root/ --将jdk传到各节点上

[root@node1 ~]# rpm -ivh jdk-8u202-linux-x64.rpm --在各节点安装jdk

[root@node1 ~]# tar xvf hadoop-2.8.5.tar.gz -C /opt/

[root@node1 ~]# cd /opt/hadoop-2.8.5/etc/hadoop/

[root@node1 hadoop]# vim hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_202-amd64/

[root@node1 hadoop]# cat slaves --配置datanode节点(datanode节点都需要)

node2 node3 node4

[root@node1 hadoop]#

5.配置环境变量

[root@node1 opt]# vim /etc/profile --其它node节点一样添加

export JAVA_HOME=/usr/java/jdk1.8.0_202-amd64

export HADOOP_HOME=/opt/hadoop-2.8.5

export ZOOKEEPER_HOME=/opt/zookeeper-3.4.13

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin

[root@node1 opt]# source /etc/profile

[root@node1 opt]# scp /etc/profile node2:/etc/

profile 100% 2037 1.4MB/s 00:00

[root@node1 opt]# scp /etc/profile node3:/etc/

profile 100% 2037 961.7KB/s 00:00

[root@node1 opt]#

5.使用zookeeper做namenode高可用

[root@node1 ~]# cd /opt/hadoop-2.8.5/etc/hadoop/

[root@node1 hadoop]# vim hdfs-site.xml

dfs.nameservices --指定集群名 mycluster dfs.ha.namenodes.mycluster --指定集群两个namenode成员 nn1,nn2 dfs.namenode.rpc-address.mycluster.nn1 --nn1成员是node1 node1:8020 dfs.namenode.rpc-address.mycluster.nn2 --nn2成员是node4 node4:8020 dfs.namenode.http-address.mycluster.nn1 node1:50070 dfs.namenode.http-address.mycluster.nn2 node4:50070 dfs.namenode.shared.edits.dir qjournal://node2:8485;node3:8485;node4:8485/mycluster dfs.client.failover.proxy.provider.mycluster org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider dfs.ha.fencing.methods sshfence dfs.ha.fencing.ssh.private-key-files --各个节点通过/root/.ssh/id_rsa文件信任关系 /root/.ssh/id_rsa dfs.ha.automatic-failover.enabled --开启HA功能 true

[root@node1 hadoop]# vim core-site.xml

fs.defaultFS hdfs://mycluster ha.zookeeper.quorum node1:2181,node2:2181,node3:2181 --定义zookeeper

[root@node1 hadoop]# scp hdfs-site.xml core-site.xml node2:/opt/hadoop-2.8.5/etc/hadoop/

[root@node1 hadoop]# scp hdfs-site.xml core-site.xml node3:/opt/hadoop-2.8.5/etc/hadoop/

[root@node1 hadoop]# scp hdfs-site.xml core-site.xml node4:/opt/hadoop-2.8.5/etc/hadoop/

6.启用journalnode(node1,2,3,4都要启动)

[root@node2 hadoop]# hadoop-daemon.sh start journalnode

starting journalnode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-journalnode-node2.out

[root@node2 hadoop]#

7.格式化磁盘和启动服务

[root@node1 hadoop]# hdfs namenode -format --在任意一个datanode就可以了

[root@node1 hadoop]# scp -r /tmp/hadoop-root/dfs node4:/tmp/hadoop-root/ --将格式化好后的数据复制到另一个namenode上(node4)

[root@node1 hadoop]# hdfs zkfc -formatZK --初使化zookeeper

[root@node1 hadoop]# start-dfs.sh --启动服务

Starting namenodes on [node1 node4]

node1: starting namenode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-namenode-node1.out

node4: starting namenode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-namenode-node4.out

node4: starting datanode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-datanode-node4.out

node2: starting datanode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-datanode-node2.out

node3: starting datanode, logging to /opt/hadoop-2.8.5/logs/hadoop-root-datanode-node3.out

Starting journal nodes [node2 node3 node4]

node2: journalnode running as process 10429. Stop it first.

node4: journalnode running as process 9923. Stop it first.

node3: journalnode running as process 10198. Stop it first.

Starting ZK Failover Controllers on NN hosts [node1 node4]

node1: starting zkfc, logging to /opt/hadoop-2.8.5/logs/hadoop-root-zkfc-node1.out

node4: starting zkfc, logging to /opt/hadoop-2.8.5/logs/hadoop-root-zkfc-node4.out

[root@node1 hadoop]# jps

12728 QuorumPeerMain --zookeeper进程

3929 DataNode --datanode进程

15707 JournalNode --JournalNode进程

16907 DFSZKFailoverController

16556 NameNode

17471 Jps

[root@node1 hadoop]#

8.datanode进程

[root@node2 hadoop]# jps

11282 DataNode

11867 Jps

10429 JournalNode

8590 QuorumPeerMain

[root@node2 hadoop]#

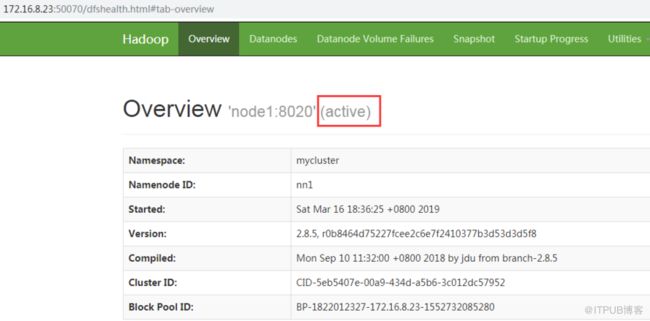

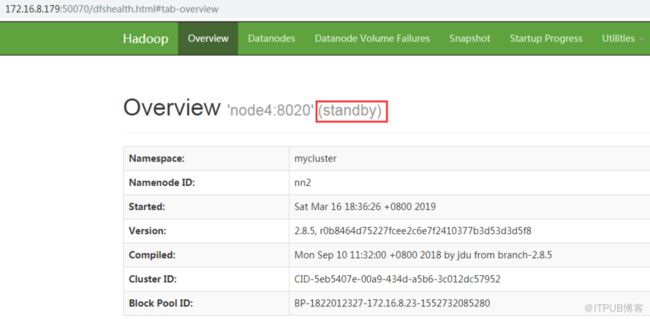

9.使用web访问

http://node1:50070

http://node4:50070

来自 “ ITPUB博客 ” ,链接:http://blog.itpub.net/25854343/viewspace-1453156/,如需转载,请注明出处,否则将追究法律责任。

转载于:http://blog.itpub.net/25854343/viewspace-1453156/