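ELK Stack is the combination of three open-source projects: Elasticsearch, Logstash, and Kibana. For real-time data search and analysis the three are usually deployed together, and all of them have, one after another, come under Elastic.co, hence the abbreviation.

Over the past two years ELK Stack has risen quickly to become the first choice in the open-source world for machine-data analysis, in other words real-time log processing.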

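ELK consists of three components:

Elasticsearch, which indexes and stores the data

Logstash, which collects and formats the logs

Kibana, which provides the front-end display of statistics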

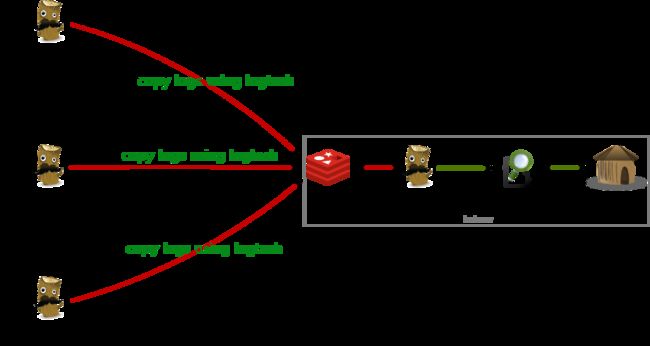

大致的架构如下:

Redis provides a queue that acts as a broker, buffering logs while they are in transit. Kafka or a similar system can be used in its place.

I. Installing Logstash

1. Installing the JDK

yum -y install java-1.8.0

[root@ELKServer ~]# java -version

openjdk version "1.8.0_101"

OpenJDK Runtime Environment (build 1.8.0_101-b13)

OpenJDK 64-Bit Server VM (build 25.101-b13, mixed mode)
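The version check can also be done in a script rather than by eye. The following is only a minimal sketch and not part of the original setup: `java_major_minor` and `java_at_least` are hypothetical helper names, and the parsing assumes the legacy `1.x.y_zz` version string shown above.

```shell
# Extract "major.minor" (e.g. 1.8) from the first line of `java -version` output.
# Hypothetical helper; only handles the legacy "1.x.y_zz" version scheme.
#   $1: a line such as: openjdk version "1.8.0_101"
java_major_minor() {
  printf '%s\n' "$1" | sed -n 's/.*version "\([0-9][0-9]*\.[0-9][0-9]*\).*/\1/p'
}

# Succeed if the version line in $1 is at least 1.$2 (legacy scheme only).
java_at_least() {
  minor=$(java_major_minor "$1" | cut -d. -f2)
  [ -n "$minor" ] && [ "$minor" -ge "$2" ]
}

# Usage (java -version writes to stderr, hence 2>&1):
#   java_at_least "$(java -version 2>&1 | head -n 1)" 8 && echo "JDK OK"
```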

2. Installing Logstash

wget https://download.elastic.co/logstash/logstash/logstash-2.3.4.zip

unzip logstash-2.3.4.zip

mv logstash-2.3.4 /usr/local/

echo 'export PATH=$PATH:/usr/local/logstash-2.3.4/bin' >> /etc/profile

source /etc/profile

3. Creating a directory for the Logstash configuration files

mkdir /usr/local/logstash-2.3.4/conf

4. Testing Logstash

[root@ELKServer logstash-2.3.4]# logstash -e "input {stdin{}} output {stdout{}}"

hello

Settings: Default pipeline workers: 1

Pipeline main started

2016-07-28T12:47:41.597Z 0.0.0.0 hello

II. Installing Redis

1. Installing Redis

wget http://download.redis.io/releases/redis-2.8.20.tar.gz

yum install tcl -y

tar -zxvf redis-2.8.20.tar.gz

mv redis-2.8.20/ /usr/local/

[root@ELKServer logstash-2.3.4]# cd /usr/local/redis-2.8.20/

[root@ELKServer redis-2.8.20]# make MALLOC=libc

[root@ELKServer redis-2.8.20]# make install

[root@ELKServer redis-2.8.20]# cd utils/

[root@ELKServer utils]# ./install_server.sh # accept the default for every option

Welcome to the redis service installer

This script will help you easily set up a running redis server

Please select the redis port for this instance: [6379]

Selecting default: 6379

Please select the redis config file name [/etc/redis/6379.conf]

Selected default - /etc/redis/6379.conf

Please select the redis log file name [/var/log/redis_6379.log]

Selected default - /var/log/redis_6379.log

Please select the data directory for this instance [/var/lib/redis/6379]

Selected default - /var/lib/redis/6379

Please select the redis executable path [/usr/local/bin/redis-server]

Selected config:

Port : 6379

Config file : /etc/redis/6379.conf

Log file : /var/log/redis_6379.log

Data dir : /var/lib/redis/6379

Executable : /usr/local/bin/redis-server

Cli Executable : /usr/local/bin/redis-cli

Is this ok? Then press ENTER to go on or Ctrl-C to abort.

Copied /tmp/6379.conf => /etc/init.d/redis_6379

Installing service...

Successfully added to chkconfig!

Successfully added to runlevels 345!

Starting Redis server...

Installation successful!

2. Checking the port Redis is listening on

[root@ELKServer utils]# netstat -tnlp |grep redis

tcp 0 0 0.0.0.0:6379 0.0.0.0:* LISTEN 2085/redis-server *

tcp 0 0 :::6379 :::* LISTEN 2085/redis-server *
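The same port check can be scripted so that it returns a clean success or failure. This is a minimal sketch, assuming the `netstat -tnlp` column layout shown above (local address in column 4, state in column 6); `port_listening` is a hypothetical helper name.

```shell
# Read `netstat -tnlp` output on stdin and succeed if any socket is
# in LISTEN state on port $1. Hypothetical helper.
port_listening() {
  awk -v port="$1" '
    $6 == "LISTEN" {
      # Local address looks like 0.0.0.0:6379 or :::6379; the port is the last field.
      n = split($4, parts, ":")
      if (parts[n] == port) found = 1
    }
    END { exit (found ? 0 : 1) }
  '
}

# Usage:
#   netstat -tnlp | port_listening 6379 && echo "Redis is listening"
```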

3. Testing whether Redis buffers data

a. Create the following Logstash configuration file:

[root@ELKServer conf]# cat output_redis.conf

input { stdin { } } # events are typed in manually

output {

stdout { codec => rubydebug } # print debug output to the console

redis {

host => '192.168.10.49'

data_type => 'list'

key => 'redis'

}

}

b. Start Logstash

[root@ELKServer conf]# logstash -f output_redis.conf --verbose

starting agent {:level=>:info}

starting pipeline {:id=>"main", :level=>:info}

Settings: Default pipeline workers: 1

Starting pipeline {:id=>"main", :pipeline_workers=>1, :batch_size=>125, :batch_delay=>5, :max_inflight=>125, :level=>:info}

Pipeline main started

hello # typed manually

{

"message" => "hello",

"@version" => "1",

"@timestamp" => "2016-07-28T13:09:42.651Z",

"host" => "0.0.0.0"

}

c. Check whether the data has arrived in Redis.

cd /usr/local/redis-2.8.20/src/

Run the command below first, then run logstash -f output_redis.conf (preferably in two separate SSH sessions).

[root@ELKServer src]# ./redis-cli monitor

OK

1469711599.811344 [0 192.168.10.49:56179] "rpush" "redis" "{\"message\":\"hello\",\"@version\":\"1\",\"@timestamp\":\"2016-07-28T13:13:18.446Z\",\"host\":\"0.0.0.0\"}"
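Besides watching `monitor`, the list itself can be inspected after the fact. The following is a minimal sketch, assuming the `redis` key from output_redis.conf and that nothing is consuming the list yet. `redis_queue_length`, `redis_peek_oldest` and the `REDIS_CLI` variable are hypothetical names; `llen` and `lrange` are standard Redis commands.

```shell
# Name or path of the redis-cli binary; overridable (an assumption of this sketch).
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# Print the number of events waiting in a Redis list.
#   $1: Redis host, $2: list key
redis_queue_length() {
  "$REDIS_CLI" -h "$1" llen "$2"
}

# Print the oldest queued event (index 0) without removing it.
# Logstash appends with rpush, so the head of the list is the oldest entry.
redis_peek_oldest() {
  "$REDIS_CLI" -h "$1" lrange "$2" 0 0
}

# Usage:
#   redis_queue_length 192.168.10.49 redis
#   redis_peek_oldest 192.168.10.49 redis
```

Each line typed into Logstash should raise the reported length by one as long as no consumer is reading from the list.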

III. Installing Elasticsearch

1. Installing and configuring Elasticsearch

wget https://download.elastic.co/elasticsearch/release/org/elasticsearch/distribution/zip/elasticsearch/2.3.4/elasticsearch-2.3.4.zip

unzip elasticsearch-2.3.4.zip

mv elasticsearch-2.3.4 /usr/local/

Edit the Elasticsearch configuration file:

vim /usr/local/elasticsearch-2.3.4/config/elasticsearch.yml

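Uncomment the parameter below and set it to the server's IP address. This is only a basic installation; tuning and clustering are covered later.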

network.host: 192.168.10.49
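If you would rather not edit the file by hand, the change can be scripted. This is a minimal sketch, assuming GNU sed (for `-i`) and that the shipped file contains the setting as a commented-out `# network.host: ...` line; `set_network_host` is a hypothetical helper name.

```shell
# Uncomment (if needed) and set network.host in an elasticsearch.yml file.
#   $1: path to elasticsearch.yml, $2: IP address to bind to
# Hypothetical helper; assumes GNU sed.
set_network_host() {
  # Rewrite an existing (possibly commented-out) network.host line in place.
  sed -i "s|^#\{0,1\}[[:space:]]*network\.host:.*|network.host: $2|" "$1"
  # If the file had no such line at all, append one.
  grep -q '^network\.host:' "$1" || printf 'network.host: %s\n' "$2" >> "$1"
}

# Usage:
#   set_network_host /usr/local/elasticsearch-2.3.4/config/elasticsearch.yml 192.168.10.49
```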

2. Starting Elasticsearch

[root@ELKServer bin]# /usr/local/elasticsearch-2.3.4/bin/elasticsearch -d

[root@ELKServer bin]# Exception in thread "main" java.lang.RuntimeException: don't run elasticsearch as root.

Elasticsearch refuses to start as root. Create an elk user, change the owner of the Elasticsearch directory to elk, and then start Elasticsearch as that user.
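The commands for creating the user and changing ownership are not shown in the steps above. One way to do it is sketched here, assuming a CentOS-style `useradd` that also creates a group with the same name. `run`, `setup_es_user` and the `DRY_RUN` variable are hypothetical names introduced for this example.

```shell
# Print the command instead of running it when DRY_RUN=1 (hypothetical helper).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi
}

# Create the service user if it does not exist yet, then hand the
# Elasticsearch install directory over to it.
#   $1: user name, $2: Elasticsearch install directory
setup_es_user() {
  id "$1" >/dev/null 2>&1 || run useradd "$1"
  run chown -R "$1:$1" "$2"
}

# Usage (as root):
#   DRY_RUN=1 setup_es_user elk /usr/local/elasticsearch-2.3.4   # preview only
#   setup_es_user elk /usr/local/elasticsearch-2.3.4
#   su - elk -c '/usr/local/elasticsearch-2.3.4/bin/elasticsearch -d'
```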

[elk@ELKServer bin]$ ./elasticsearch -d

Check whether Elasticsearch has started:

[elk@ELKServer bin]$ netstat -tnlp |grep java

tcp 0 0 ::ffff:192.168.10.49:9200 :::* LISTEN 2476/java

tcp 0 0 ::ffff:192.168.10.49:9300 :::* LISTEN 2476/java

3. Testing whether Logstash and Elasticsearch work together

a. Create the Logstash configuration file elasticsearch.conf

[root@ELKServer bin]# cd /usr/local/logstash-2.3.4/conf

[root@ELKServer conf]# cat elasticsearch.conf

input { stdin {} } # events are typed in manually

output {

elasticsearch { hosts => "192.168.10.49" }

stdout { codec=> rubydebug } # print debug output to the console

}

b. Start Logstash with the elasticsearch.conf configuration file

[root@ELKServer conf]# logstash -f elasticsearch.conf --verbose

starting agent {:level=>:info}

starting pipeline {:id=>"main", :level=>:info}

Settings: Default pipeline workers: 1

Using mapping template from {:path=>nil, :level=>:info}

Attempting to install template {:manage_template=>{"template"=>"logstash-*", "settings"=>{"index.refresh_interval"=>"5s"}, "mappings"=>{"_default_"=>{"_all"=>{"enabled"=>true, "omit_norms"=>true}, "dynamic_templates"=>[{"message_field"=>{"match"=>"message", "match_mapping_type"=>"string", "mapping"=>{"type"=>"string", "index"=>"analyzed", "omit_norms"=>true, "fielddata"=>{"format"=>"disabled"}}}}, {"string_fields"=>{"match"=>"*", "match_mapping_type"=>"string", "mapping"=>{"type"=>"string", "index"=>"analyzed", "omit_norms"=>true, "fielddata"=>{"format"=>"disabled"}, "fields"=>{"raw"=>{"type"=>"string", "index"=>"not_analyzed", "ignore_above"=>256}}}}}], "properties"=>{"@timestamp"=>{"type"=>"date"}, "@version"=>{"type"=>"string", "index"=>"not_analyzed"}, "geoip"=>{"dynamic"=>true, "properties"=>{"ip"=>{"type"=>"ip"}, "location"=>{"type"=>"geo_point"}, "latitude"=>{"type"=>"float"}, "longitude"=>{"type"=>"float"}}}}}}}, :level=>:info}

New Elasticsearch output {:class=>"LogStash::Outputs::ElasticSearch", :hosts=>["192.168.10.49"], :level=>:info}

Starting pipeline {:id=>"main", :pipeline_workers=>1, :batch_size=>125, :batch_delay=>5, :max_inflight=>125, :level=>:info}

Pipeline main started

hello elasticsearch # this line was typed manually

{

"message" => "hello elasticsearch",

"@version" => "1",

"@timestamp" => "2016-07-28T14:20:09.460Z",

"host" => "0.0.0.0"

}

c. Check whether Elasticsearch received "hello elasticsearch"

[root@ELKServer conf]# curl 'http://192.168.10.49:9200/_search?pretty'

{

"took" : 45,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 1,

"max_score" : 1.0,

"hits" : [ {

"_index" : "logstash-2016.07.28",

"_type" : "logs",

"_id" : "AVYx4H2BdePMZ0WyBMGl",

"_score" : 1.0,

"_source" : {

"message" : "hello elasticsearch",

"@version" : "1",

"@timestamp" : "2016-07-28T14:20:09.460Z",

"host" : "0.0.0.0"

}

} ]

}

}
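Searching across every index gets noisy once real logs start arriving, so it is often handier to list the indices or count the documents in one of them. This is a minimal sketch: `es_get` and the `ES_CURL` variable are hypothetical names, while `_cat/indices` and `_count` are standard Elasticsearch REST endpoints. It assumes Elasticsearch is reachable on the default HTTP port 9200.

```shell
# Name or path of the curl binary; overridable (an assumption of this sketch).
ES_CURL="${ES_CURL:-curl}"

# Send a GET request to the Elasticsearch HTTP API and print the response.
#   $1: Elasticsearch host, $2: request path without the leading slash
es_get() {
  "$ES_CURL" -s "http://$1:9200/$2"
}

# Usage:
#   es_get 192.168.10.49 '_cat/indices?v'                      # list indices
#   es_get 192.168.10.49 'logstash-2016.07.28/_count?pretty'   # count documents
```

Quoting the path keeps the shell from treating `?` and `*` as glob characters.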

4. Installing Elasticsearch plugins

Elasticsearch has many plugins: http://www.searchtech.pro/elasticsearch-plugins

Installing the elasticsearch-head plugin

[root@ELKServer lang-expression]# cd /usr/local/elasticsearch-2.3.4/bin/

[root@ELKServer bin]# ./plugin install mobz/elasticsearch-head

-> Installing mobz/elasticsearch-head...

Trying https://github.com/mobz/elasticsearch-head/archive/master.zip ...

Downloading .................DONE

Verifying https://github.com/mobz/elasticsearch-head/archive/master.zip checksums if available ...

Installed head into /usr/local/elasticsearch-2.3.4/plugins/head

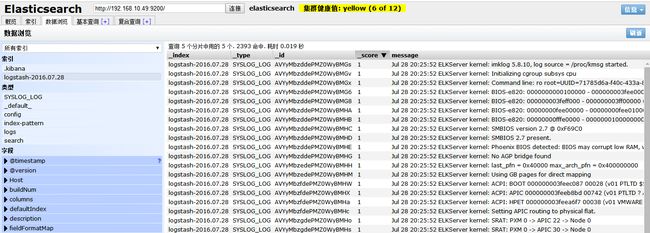

Open the page served by the elasticsearch-head plugin:

http://192.168.10.49:9200/_plugin/head/

IV. Installing Kibana

1. Installing Kibana

[root@ELKServer ~]# tar -zxvf kibana-4.5.2-linux-x64.tar.gz

[root@ELKServer ~]# mv kibana-4.5.2-linux-x64 /usr/local/

[root@ELKServer ~]# cd /usr/local/kibana-4.5.2-linux-x64/config/

[root@ELKServer config]# vim kibana.yml

Edit the Kibana configuration file and set the line below to the URL of Elasticsearch:

elasticsearch.url: "http://192.168.10.49:9200"

2. Starting Kibana

[root@ELKServer bin]# /usr/local/kibana-4.5.2-linux-x64/bin/kibana&

log [23:10:23.215] [info][status][plugin:kibana] Status changed from uninitialized to green - Ready

log [23:10:23.288] [info][status][plugin:elasticsearch] Status changed from uninitialized to yellow - Waiting for Elasticsearch

log [23:10:23.344] [info][status][plugin:kbn_vislib_vis_types] Status changed from uninitialized to green - Ready

log [23:10:23.377] [info][status][plugin:markdown_vis] Status changed from uninitialized to green - Ready

log [23:10:23.415] [info][status][plugin:metric_vis] Status changed from uninitialized to green - Ready

log [23:10:23.445] [info][status][plugin:spyModes] Status changed from uninitialized to green - Ready

log [23:10:23.464] [info][status][plugin:statusPage] Status changed from uninitialized to green - Ready

log [23:10:23.485] [info][status][plugin:table_vis] Status changed from uninitialized to green - Ready

log [23:10:23.510] [info][listening] Server running at http://0.0.0.0:5601

log [23:10:28.470] [info][status][plugin:elasticsearch] Status changed from yellow to yellow - No existing Kibana index found

log [23:10:31.442] [info][status][plugin:elasticsearch] Status changed from yellow to green - Kibana index ready
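The log shows Kibana sitting in the yellow state while it waits for Elasticsearch to answer. When the startup is scripted, one way to avoid starting Kibana too early is to poll Elasticsearch first. This is a minimal sketch; `retry` is a hypothetical helper name, and the attempt count and delay in the usage line are arbitrary values chosen for illustration.

```shell
# Run a command until it succeeds.
#   $1: maximum number of attempts, $2: seconds to sleep between attempts,
#   remaining arguments: the command to run
# Returns 0 on the first success, 1 if every attempt failed.
retry() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    i=$((i + 1))
    # Only sleep if another attempt is coming.
    [ "$i" -le "$attempts" ] && sleep "$delay"
  done
  return 1
}

# Usage: wait up to about 30 seconds for Elasticsearch, then start Kibana.
#   retry 30 1 curl -s -o /dev/null http://192.168.10.49:9200 \
#     && /usr/local/kibana-4.5.2-linux-x64/bin/kibana &
```

curl exits non-zero while the connection is refused and zero once any HTTP response comes back, which is what makes it usable as the probe here.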

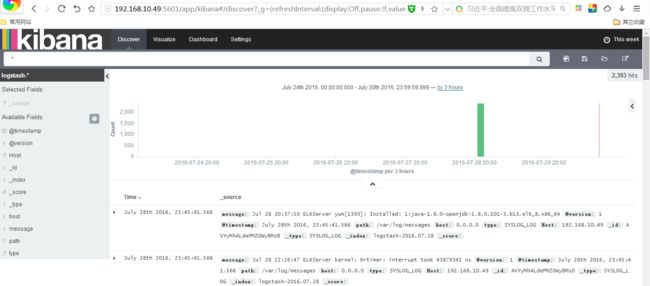

3. Testing Kibana

Open the page: http://192.168.10.49:5601/

V. An end-to-end ELK test

1. A brief overview of how data moves through ELK

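a. The Logstash client collects logs from the configured log path (input) and forwards them to the Redis server for buffering (output).

b. The Logstash server pulls the messages from the Redis server (input) and then uses filters to split and process the logs.

c. The Logstash server finally sends the processed logs to Elasticsearch (output) for storage.

d. Kibana retrieves the stored data through the Elasticsearch API and displays it on a web page.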

In this test we do not apply any filter to the logs.

2. Creating the Logstash configuration files

logstash_redis.conf, the Logstash client configuration file:

input {

file {

path => "/var/log/messages"

start_position => beginning

sincedb_write_interval => 0

add_field => {"Host"=>"192.168.10.47"}

type => "SYSLOG_LOG"

}

}

output {

redis {

host => "192.168.10.47:6379"

data_type => "list"

key => "logstash:syslog_log"

}

}

redis_elasticsearch.conf, the Logstash server configuration file:

input {

redis {

host => '192.168.10.47'

data_type => 'list'

port => "6379"

key => 'logstash:syslog_log'

type => 'redis-input'

}

}

output {

elasticsearch {

hosts => "192.168.10.47"

index => "logstash-%{+YYYY.MM.dd}"

}

}
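The `%{+YYYY.MM.dd}` part of the `index` setting is a date pattern that Logstash fills in from each event's `@timestamp` (which is in UTC), so events land in one index per day. The naming rule can be sketched as follows; `index_for_timestamp` is a hypothetical helper name, and the function only mimics the rule for ISO 8601 timestamps like the ones shown earlier.

```shell
# Derive the daily index name from an event's @timestamp (ISO 8601, UTC).
# Hypothetical helper that mirrors index => "logstash-%{+YYYY.MM.dd}".
#   $1: timestamp such as 2016-07-28T14:20:09.460Z
index_for_timestamp() {
  day=${1%%T*}   # keep the date part only: 2016-07-28
  printf 'logstash-%s\n' "$(printf '%s' "$day" | tr '-' '.')"
}

# Usage:
#   index_for_timestamp 2016-07-28T14:20:09.460Z
```

Knowing the index name ahead of time makes it easy to query a single day, for example `logstash-2016.07.28/_search`.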

3. Starting the ELK services

logstash -f logstash_redis.conf &

logstash -f redis_elasticsearch.conf &

/etc/init.d/redis_6379 start

/usr/local/elasticsearch-2.3.4/bin/elasticsearch -d    # run as the elk user

/usr/local/kibana-4.5.2-linux-x64/bin/kibana &

4. Checking that logs are flowing

a. Check the data in Elasticsearch

http://192.168.10.49:9200/_plugin/head/

b. Check whether Kibana shows the data

http://192.168.10.49:5601/

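Note: because the logs above are read from /var/log/messages starting at the first line of the file, their timestamps may be several days old.

When viewing the data in Kibana, make sure the selected time range covers them.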