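Hardware and software requirements:

1) CPU and memory: master at least 1 core / 2 GB (2 cores / 4 GB recommended); node at least 1 core / 2 GB

2) Linux: kernel 3.10 or later; CentOS 7 / RHEL 7 recommended

3) Docker: 1.9 or later; 1.12+ recommended

4) etcd: 2.0 or later; 3.0+ recommended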
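The kernel requirement above can be checked before installing anything. The following is a minimal sketch, assuming a `sort` that supports `-V` (version sort, as in GNU coreutils); the helper name `version_ge` is made up for this illustration.

```shell
#!/bin/sh
# version_ge A B: succeeds when version A is greater than or equal to version B.
# Relies on `sort -V` (version sort); the helper name is illustrative only.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Compare the running kernel against the 3.10 minimum listed above.
kernel="$(uname -r)"
if version_ge "$kernel" "3.10"; then
    echo "kernel $kernel meets the 3.10 minimum"
else
    echo "kernel $kernel is older than 3.10"
fi
```

The same helper could be pointed at the Docker or etcd version strings, but how to obtain those depends on how they are installed, so that part is left out.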

Official Kubernetes releases on GitHub: https://github.com/kubernetes/kubernetes/releases

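Node planning for a highly available cluster:

deploy node ----- x1 : the node that runs these Ansible playbooks

etcd nodes ------ x3 : the etcd cluster must have an odd number of members (1, 3, 5, 7, ...)

master nodes ---- x2 : add more as the cluster grows; a master VIP (virtual IP address) must also be planned

lb nodes -------- x2 : two load-balancer nodes running haproxy + keepalived

node (worker) --- x3 : the nodes that actually run application workloads; scale up the hardware or add nodes as needed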
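The odd-member rule for etcd follows from how quorum works: a cluster of n members needs floor(n/2) + 1 of them to agree, so an even-sized cluster tolerates no more failures than the odd-sized cluster one member smaller. A small sketch of that arithmetic (the function names are made up for this illustration):

```shell
#!/bin/sh
# Quorum size for an etcd cluster of N members: floor(N / 2) + 1.
etcd_quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Number of members that can fail while the cluster keeps quorum.
etcd_fault_tolerance() {
    echo $(( ($1 - 1) / 2 ))
}

# Print a small comparison table for cluster sizes 1 to 5.
for n in 1 2 3 4 5; do
    printf 'members=%s quorum=%s tolerated_failures=%s\n' \
        "$n" "$(etcd_quorum "$n")" "$(etcd_fault_tolerance "$n")"
done
```

With three members the cluster survives one failure; a fourth member raises the quorum to three without raising the number of tolerated failures.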

Machine plan:

| IP | Hostname | Role | OS |
|---|---|---|---|
| 192.168.2.10 | master | deploy, master1, lb1, etcd | centos7.5 x86_64 |
| 192.168.2.11 | node1 | etcd, node | |
| 192.168.2.12 | node2 | etcd, node | |
| 192.168.2.13 | node3 | node | |
| 192.168.2.14 | master2 | master2, lb2 | |
| 192.168.2.16 | vip | | |

Preparation

Install the EPEL repository and Python

Run on all six machines:

yum install epel-release

yum update

yum install python

Install and prepare Ansible on the deploy node

yum install -y python-pip git

pip install pip --upgrade -i http://mirrors.aliyun.com/pypi/simple/ --trusted-host mirrors.aliyun.com

pip install --no-cache-dir ansible -i http://mirrors.aliyun.com/pypi/simple/ --trusted-host mirrors.aliyun.com

Configure passwordless login from the deploy node

Here is the key-distribution script I have used for years:

#!/bin/bash

keypath=/root/.ssh

[ -d ${keypath} ] || mkdir -p ${keypath}

rpm -q expect &> /dev/null || yum install expect -y

ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ""

password=centos

for host in `seq 10 14`;do

expect <

Run the script; the deploy node automatically copies the key to the target hosts:

[root@master ~]# sh sshkey.sh

Orchestrate k8s from the deploy node

git clone https://github.com/gjmzj/kubeasz.git

mkdir -p /etc/ansible

mv kubeasz/* /etc/ansible/

Download the binaries from Baidu Netdisk: https://pan.baidu.com/s/1c4RFaA#list/path=%2F

Download the tar package for the version you need; I use 1.12 here.

After some effort, I finally got k8s.1-12-1.tar.gz onto the deploy node.

tar zxvf k8s.1-12-1.tar.gz

mv bin/* /etc/ansible/bin/

Example:

[root@master ~]# rz

rz waiting to receive.

Starting zmodem transfer. Press Ctrl+C to cancel.

Transferring k8s.1-12-1.tar.gz...

100% 234969 KB 58742 KB/sec 00:00:04 0 Errors

[root@master ~]# ls

anaconda-ks.cfg ifcfg-ens192.bak k8s.1-12-1.tar.gz kubeasz

[root@master ~]# tar zxf k8s.1-12-1.tar.gz

[root@master ~]# ls

anaconda-ks.cfg bin ifcfg-ens192.bak k8s.1-12-1.tar.gz kubeasz

[root@master ~]# mv bin /etc/ansible/

mv: overwrite '/etc/ansible/bin/readme.md'? y

Configure cluster parameters

cd /etc/ansible/

cp example/hosts.m-masters.example hosts  # edit the contents to match your environment

[deploy]

192.168.2.10 NTP_ENABLED=no

# 'etcd' cluster must have odd member(s) (1,3,5,...)

# variable 'NODE_NAME' is the distinct name of a member in 'etcd' cluster

[etcd]

192.168.2.10 NODE_NAME=etcd1

192.168.2.11 NODE_NAME=etcd2

192.168.2.12 NODE_NAME=etcd3

[kube-master]

192.168.2.10

192.168.2.14

# 'loadbalance' node, with 'haproxy+keepalived' installed

[lb]

192.168.2.10 LB_IF="eth0" LB_ROLE=backup # replace 'eth0' with the node's network interface

192.168.2.14 LB_IF="eth0" LB_ROLE=master

[kube-node]

192.168.2.11

192.168.2.12

192.168.2.13

[vip]

192.168.2.15

After editing hosts, test connectivity:

ansible all -m ping

[root@master ansible]# ansible all -m ping

192.168.2.11 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.2.14 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.2.12 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.2.10 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.2.13 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.2.15 | SUCCESS => {

"changed": false,

"ping": "pong"

}

Step-by-step installation:

1) Create certificates and prepare for installation

ansible-playbook 01.prepare.yml

2) Install the etcd cluster

ansible-playbook 02.etcd.yml

Check the health of the etcd nodes.

Run in bash:

for ip in 10 11 12 ; do ETCDCTL_API=3 etcdctl --endpoints=https://192.168.2.$ip:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem endpoint health; done

Output:

https://192.168.2.10:2379 is healthy: successfully committed proposal: took = 857.393µs

https://192.168.2.11:2379 is healthy: successfully committed proposal: took = 1.0619ms

https://192.168.2.12:2379 is healthy: successfully committed proposal: took = 1.19245ms

Alternatively, add /etc/ansible/bin to the PATH environment variable first:

[root@master ansible]# vim /etc/profile.d/k8s.sh

export PATH=$PATH:/etc/ansible/bin

[root@master ansible]# for ip in 10 11 12 ; do ETCDCTL_API=3 etcdctl --endpoints=https://192.168.2.$ip:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem endpoint health; done

https://192.168.2.10:2379 is healthy: successfully committed proposal: took = 861.891µs

https://192.168.2.11:2379 is healthy: successfully committed proposal: took = 1.061687ms

https://192.168.2.12:2379 is healthy: successfully committed proposal: took = 909.274µs
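The per-IP loop can also be collapsed into a single `etcdctl` call, since `--endpoints` accepts a comma-separated list. The sketch below builds that list from the last octets used in this article; the function names `etcd_endpoints` and `check_etcd` are made up for this illustration, and the certificate paths are the ones shown in the commands above.

```shell
#!/bin/sh
# Build a comma-separated endpoint list, e.g.
#   etcd_endpoints 192.168.2 10 11 12
# Arguments: network prefix, then the last octet of each etcd node.
etcd_endpoints() {
    prefix="$1"
    shift
    list=""
    for last in "$@"; do
        # Append with a comma separator, except before the first entry.
        list="${list:+$list,}https://${prefix}.${last}:2379"
    done
    printf '%s\n' "$list"
}

# Query every endpoint in one call (requires etcdctl on PATH and the
# certificates generated by the earlier playbooks).
check_etcd() {
    ETCDCTL_API=3 etcdctl \
        --endpoints="$(etcd_endpoints 192.168.2 10 11 12)" \
        --cacert=/etc/kubernetes/ssl/ca.pem \
        --cert=/etc/etcd/ssl/etcd.pem \
        --key=/etc/etcd/ssl/etcd-key.pem \
        endpoint health
}
```

Calling `check_etcd` on the deploy node should report one health line per endpoint, in the same form as the output above.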

3) Install Docker

ansible-playbook 03.docker.yml

4) Install the master nodes

ansible-playbook 04.kube-master.yml

kubectl get componentstatus  # check the cluster status

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

5) Install the node (worker) nodes

ansible-playbook 05.kube-node.yml

List the nodes:

kubectl get nodes

[root@master ansible]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.2.10 Ready,SchedulingDisabled master 112s v1.12.1

192.168.2.11 Ready node 17s v1.12.1

192.168.2.12 Ready node 17s v1.12.1

192.168.2.13 Ready node 17s v1.12.1

192.168.2.14 Ready,SchedulingDisabled master 112s v1.12.1

6) Deploy the cluster network

ansible-playbook 06.network.yml

kubectl get pod -n kube-system  # list the pods in the kube-system namespace; the flannel pods should be among them

[root@master ansible]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-5d574 1/1 Running 0 47s

kube-flannel-ds-6kpnm 1/1 Running 0 47s

kube-flannel-ds-f2nfs 1/1 Running 0 47s

kube-flannel-ds-gmbmv 1/1 Running 0 47s

kube-flannel-ds-w5st7 1/1 Running 0 47s

7) Install the cluster add-ons (dns, dashboard)

ansible-playbook 07.cluster-addon.yml

List the services in the kube-system namespace:

kubectl get svc -n kube-system

[root@master ~]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.68.0.2 53/UDP,53/TCP 10h

kubernetes-dashboard NodePort 10.68.119.108 443:35065/TCP 10h

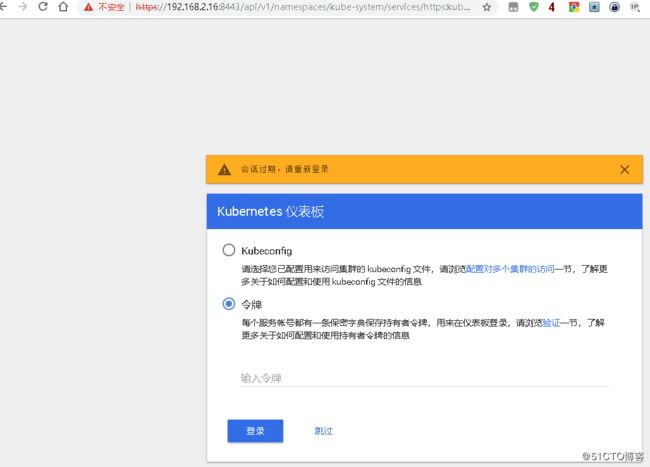

metrics-server ClusterIP 10.68.235.9 443/TCP 10h 查看admin登录dashboard的 token

到此为止,分步部署已经基本配置完毕了,下面就可以查找登录token登录dashboard了:

[root@master ~]# kubectl get secret -n kube-system|grep admin

admin-user-token-4zdgw kubernetes.io/service-account-token 3 9h

[root@master ~]# kubectl describe secret admin-user-token-4zdgw -n kube-system

Name: admin-user-token-4zdgw

Namespace: kube-system

Labels:

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: 72378c78-ee7d-11e8-a2a7-000c2931fb97

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1346 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLTR6ZGd3Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI3MjM3OGM3OC1lZTdkLTExZTgtYTJhNy0wMDBjMjkzMWZiOTciLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.J0MjCSAP00RDvQgG1xBPvAYVo1oycXfoBh0dqdCzX1ByILyCHUqqxixuQdfE-pZqP15u6UV8OF3lGI_mHs5DBvNK0pCfaRICSo4SXSihJHKl_j9Bbozq9PjQ5d7CqHOFoXk04q0mWpJ5o0rJ6JX6Psx93Ch0uaXPPMLtzL0kolIF0j1tCFnsob8moczH06hfzo3sg8h0YCXyO6Z10VT7GMuLlwiG8XgWcplm-vcPoY_AWHnLV3RwAJH0u1q0IrMprvgTCuHighTaSjPeUe2VsXMhDpocJMoHQOoHirQKmiIAnanbIm4N1TO_5R1cqh-_gH7-MH8xefgWXoSrO-fo2w

After entering the username and password, use the token above to sign in on the dashboard page.

The secret can also be queried directly:

[root@master ~]# kubectl get secret -n kube-system

NAME TYPE DATA AGE

admin-user-token-4zdgw kubernetes.io/service-account-token 3 10h

coredns-token-98zvm kubernetes.io/service-account-token 3 10h

default-token-zk5rj kubernetes.io/service-account-token 3 10h

flannel-token-4gmtz kubernetes.io/service-account-token 3 10h

kubernetes-dashboard-certs Opaque 0 10h

kubernetes-dashboard-key-holder Opaque 2 10h

kubernetes-dashboard-token-lcsd6 kubernetes.io/service-account-token 3 10h

metrics-server-token-j4s2c kubernetes.io/service-account-token 3 10h

[root@master ~]# kubectl get secret/admin-user-token-4zdgw -n kube-system -o yaml

apiVersion: v1

data:

ca.crt: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR0akNDQXA2Z0F3SUJBZ0lVVFB3YVdFR0gyT2kwaHlVeGlJWnhFSUF3UFpVd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1lURUxNQWtHQTFVRUJoTUNRMDR4RVRBUEJnTlZCQWdUQ0VoaGJtZGFhRzkxTVFzd0NRWURWUVFIRXdKWQpVekVNTUFvR0ExVUVDaE1EYXpoek1ROHdEUVlEVlFRTEV3WlRlWE4wWlcweEV6QVJCZ05WQkFNVENtdDFZbVZ5CmJtVjBaWE13SGhjTk1UZ3hNVEl5TVRZeE1EQXdXaGNOTXpNeE1URTRNVFl4TURBd1dqQmhNUXN3Q1FZRFZRUUcKRXdKRFRqRVJNQThHQTFVRUNCTUlTR0Z1WjFwb2IzVXhDekFKQmdOVkJBY1RBbGhUTVF3d0NnWURWUVFLRXdOcgpPSE14RHpBTkJnTlZCQXNUQmxONWMzUmxiVEVUTUJFR0ExVUVBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKCktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ0VCQU5zV1NweVVRcGYvWDFCaHNtUS9NUDVHVE0zcUFjWngKV3lKUjB0VEtyUDVWNStnSjNZWldjK01HSzlrY3h6OG1RUUczdldvNi9ENHIyZ3RuREVWaWxRb1dlTm0rR3hLSwpJNjkzczNlS2ovM1dIdGloOVA4TWp0RktMWnRvSzRKS09kUURYeGFHLzJNdzJEMmZnbzNJT2VDdlZzR0F3Qlc4ClYxMDh3dUVNdTIzMnhybFdSSFFWaTNyc0dmN3pJbkZzSFNOWFFDbXRMMHhubERlYnZjK2c2TWRtcWZraVZSdzIKNTFzZGxnbmV1aEFqVFJaRkYvT0lFWE4yUjIyYTJqZVZDbWNySEcvK2orU0tzTlpmeVVCb216NGRUcmRsV0JEUQpob3ZzSGkrTEtJVGNxZHBQV3MrZmxIQjlaL1FRUnM5MTZEREpxMHRWNFV6MEY0YjRsemJXaGdrQ0F3RUFBYU5tCk1HUXdEZ1lEVlIwUEFRSC9CQVFEQWdFR01CSUdBMVVkRXdFQi93UUlNQVlCQWY4Q0FRSXdIUVlEVlIwT0JCWUUKRklaN3NZczRjV0xtYnlwVUEwWUhGanc3Mk5jV01COEdBMVVkSXdRWU1CYUFGSVo3c1lzNGNXTG1ieXBVQTBZSApGanc3Mk5jV01BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ2Eyell1NmVqMlRURWcyN1VOeGh4U0ZMaFJLTHhSClg5WnoyTmtuVjFQMXhMcU8xSHRUSmFYajgvL0wxUmdkQlRpR3JEOXNENGxCdFRRMmF2djJFQzZKeXJyS0xVelUKSWNiUXNpU0h4NkQ3S1FFWjFxQnZkNWRKVDluai9mMG94SjlxNDVmZTBJbWNiUndKWnA2WDJKbWtQSWZyYjYreQo2YUFTbzhaakliTktQN1Z1WndIQ1RPQUwzeUhVR2lJTEJtT1hKNldGRDlpTWVFMytPZE95ZHIwYzNvUmRXVW1aCkI1andlN2x2MEtVc2Y1SnBTS0JCbzZ3bkViNXhMdDRSYjBMa2RxMXZLTGFOMXUvbXFFc1ltbUk3MmRuaUdLSTkKakdDdkRqNVREaW55T1RQU005Vi81RE5OTFlLQkExaDRDTmVBRjE1RWlCay9EU055SzIrUTF3TVgKLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

namespace: a3ViZS1zeXN0ZW0=

token: ZXlKaGJHY2lPaUpTVXpJMU5pSXNJbXRwWkNJNklpSjkuZXlKcGMzTWlPaUpyZFdKbGNtNWxkR1Z6TDNObGNuWnBZMlZoWTJOdmRXNTBJaXdpYTNWaVpYSnVaWFJsY3k1cGJ5OXpaWEoyYVdObFlXTmpiM1Z1ZEM5dVlXMWxjM0JoWTJVaU9pSnJkV0psTFhONWMzUmxiU0lzSW10MVltVnlibVYwWlhNdWFXOHZjMlZ5ZG1salpXRmpZMjkxYm5RdmMyVmpjbVYwTG01aGJXVWlPaUpoWkcxcGJpMTFjMlZ5TFhSdmEyVnVMVFI2WkdkM0lpd2lhM1ZpWlhKdVpYUmxjeTVwYnk5elpYSjJhV05sWVdOamIzVnVkQzl6WlhKMmFXTmxMV0ZqWTI5MWJuUXVibUZ0WlNJNkltRmtiV2x1TFhWelpYSWlMQ0pyZFdKbGNtNWxkR1Z6TG1sdkwzTmxjblpwWTJWaFkyTnZkVzUwTDNObGNuWnBZMlV0WVdOamIzVnVkQzUxYVdRaU9pSTNNak0zT0dNM09DMWxaVGRrTFRFeFpUZ3RZVEpoTnkwd01EQmpNamt6TVdaaU9UY2lMQ0p6ZFdJaU9pSnplWE4wWlcwNmMyVnlkbWxqWldGalkyOTFiblE2YTNWaVpTMXplWE4wWlcwNllXUnRhVzR0ZFhObGNpSjkuSjBNakNTQVAwMFJEdlFnRzF4QlB2QVlWbzFveWNYZm9CaDBkcWRDelgxQnlJTHlDSFVxcXhpeHVRZGZFLXBacVAxNXU2VVY4T0YzbEdJX21IczVEQnZOSzBwQ2ZhUklDU280U1hTaWhKSEtsX2o5QmJvenE5UGpRNWQ3Q3FIT0ZvWGswNHEwbVdwSjVvMHJKNkpYNlBzeDkzQ2gwdWFYUFBNTHR6TDBrb2xJRjBqMXRDRm5zb2I4bW9jekgwNmhmem8zc2c4aDBZQ1h5TzZaMTBWVDdHTXVMbHdpRzhYZ1djcGxtLXZjUG9ZX0FXSG5MVjNSd0FKSDB1MXEwSXJNcHJ2Z1RDdUhpZ2hUYVNqUGVVZTJWc1hNaERwb2NKTW9IUU9vSGlyUUttaUlBbmFuYkltNE4xVE9fNVIxY3FoLV9nSDctTUg4eGVmZ1dYb1NyTy1mbzJ3

kind: Secret

metadata:

annotations:

kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: 72378c78-ee7d-11e8-a2a7-000c2931fb97

creationTimestamp: 2018-11-22T17:38:38Z

name: admin-user-token-4zdgw

namespace: kube-system

resourceVersion: "977"

selfLink: /api/v1/namespaces/kube-system/secrets/admin-user-token-4zdgw

uid: 7239bb01-ee7d-11e8-8c5c-000c29fd1c0f

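type: kubernetes.io/service-account-token

Inspect ServiceAccounts

A ServiceAccount is a kind of account, but it is not meant for cluster users (administrators, operators, and so on); it is used by processes running inside Pods in the cluster.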

[root@master ~]# kubectl get serviceaccount --all-namespaces

NAMESPACE NAME SECRETS AGE

default default 1 10h

kube-public default 1 10h

kube-system admin-user 1 10h

kube-system coredns 1 10h

kube-system default 1 10h

kube-system flannel 1 10h

kube-system kubernetes-dashboard 1 10h

kube-system metrics-server 1 10h

[root@master ~]# kubectl describe serviceaccount/default -n kube-system

Name: default

Namespace: kube-system

Labels:

Annotations:

Image pull secrets:

Mountable secrets: default-token-zk5rj

Tokens: default-token-zk5rj

Events:

[root@master ~]# kubectl get secret/default-token-zk5rj -n kube-system

NAME TYPE DATA AGE

default-token-zk5rj kubernetes.io/service-account-token 3 10h

[root@master ~]# kubectl get secret/default-token-zk5rj -n kube-system -o yaml

apiVersion: v1

data:

ca.crt: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR0akNDQXA2Z0F3SUJBZ0lVVFB3YVdFR0gyT2kwaHlVeGlJWnhFSUF3UFpVd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1lURUxNQWtHQTFVRUJoTUNRMDR4RVRBUEJnTlZCQWdUQ0VoaGJtZGFhRzkxTVFzd0NRWURWUVFIRXdKWQpVekVNTUFvR0ExVUVDaE1EYXpoek1ROHdEUVlEVlFRTEV3WlRlWE4wWlcweEV6QVJCZ05WQkFNVENtdDFZbVZ5CmJtVjBaWE13SGhjTk1UZ3hNVEl5TVRZeE1EQXdXaGNOTXpNeE1URTRNVFl4TURBd1dqQmhNUXN3Q1FZRFZRUUcKRXdKRFRqRVJNQThHQTFVRUNCTUlTR0Z1WjFwb2IzVXhDekFKQmdOVkJBY1RBbGhUTVF3d0NnWURWUVFLRXdOcgpPSE14RHpBTkJnTlZCQXNUQmxONWMzUmxiVEVUTUJFR0ExVUVBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKCktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ0VCQU5zV1NweVVRcGYvWDFCaHNtUS9NUDVHVE0zcUFjWngKV3lKUjB0VEtyUDVWNStnSjNZWldjK01HSzlrY3h6OG1RUUczdldvNi9ENHIyZ3RuREVWaWxRb1dlTm0rR3hLSwpJNjkzczNlS2ovM1dIdGloOVA4TWp0RktMWnRvSzRKS09kUURYeGFHLzJNdzJEMmZnbzNJT2VDdlZzR0F3Qlc4ClYxMDh3dUVNdTIzMnhybFdSSFFWaTNyc0dmN3pJbkZzSFNOWFFDbXRMMHhubERlYnZjK2c2TWRtcWZraVZSdzIKNTFzZGxnbmV1aEFqVFJaRkYvT0lFWE4yUjIyYTJqZVZDbWNySEcvK2orU0tzTlpmeVVCb216NGRUcmRsV0JEUQpob3ZzSGkrTEtJVGNxZHBQV3MrZmxIQjlaL1FRUnM5MTZEREpxMHRWNFV6MEY0YjRsemJXaGdrQ0F3RUFBYU5tCk1HUXdEZ1lEVlIwUEFRSC9CQVFEQWdFR01CSUdBMVVkRXdFQi93UUlNQVlCQWY4Q0FRSXdIUVlEVlIwT0JCWUUKRklaN3NZczRjV0xtYnlwVUEwWUhGanc3Mk5jV01COEdBMVVkSXdRWU1CYUFGSVo3c1lzNGNXTG1ieXBVQTBZSApGanc3Mk5jV01BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ2Eyell1NmVqMlRURWcyN1VOeGh4U0ZMaFJLTHhSClg5WnoyTmtuVjFQMXhMcU8xSHRUSmFYajgvL0wxUmdkQlRpR3JEOXNENGxCdFRRMmF2djJFQzZKeXJyS0xVelUKSWNiUXNpU0h4NkQ3S1FFWjFxQnZkNWRKVDluai9mMG94SjlxNDVmZTBJbWNiUndKWnA2WDJKbWtQSWZyYjYreQo2YUFTbzhaakliTktQN1Z1WndIQ1RPQUwzeUhVR2lJTEJtT1hKNldGRDlpTWVFMytPZE95ZHIwYzNvUmRXVW1aCkI1andlN2x2MEtVc2Y1SnBTS0JCbzZ3bkViNXhMdDRSYjBMa2RxMXZLTGFOMXUvbXFFc1ltbUk3MmRuaUdLSTkKakdDdkRqNVREaW55T1RQU005Vi81RE5OTFlLQkExaDRDTmVBRjE1RWlCay9EU055SzIrUTF3TVgKLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

namespace: a3ViZS1zeXN0ZW0=

token: ZXlKaGJHY2lPaUpTVXpJMU5pSXNJbXRwWkNJNklpSjkuZXlKcGMzTWlPaUpyZFdKbGNtNWxkR1Z6TDNObGNuWnBZMlZoWTJOdmRXNTBJaXdpYTNWaVpYSnVaWFJsY3k1cGJ5OXpaWEoyYVdObFlXTmpiM1Z1ZEM5dVlXMWxjM0JoWTJVaU9pSnJkV0psTFhONWMzUmxiU0lzSW10MVltVnlibVYwWlhNdWFXOHZjMlZ5ZG1salpXRmpZMjkxYm5RdmMyVmpjbVYwTG01aGJXVWlPaUprWldaaGRXeDBMWFJ2YTJWdUxYcHJOWEpxSWl3aWEzVmlaWEp1WlhSbGN5NXBieTl6WlhKMmFXTmxZV05qYjNWdWRDOXpaWEoyYVdObExXRmpZMjkxYm5RdWJtRnRaU0k2SW1SbFptRjFiSFFpTENKcmRXSmxjbTVsZEdWekxtbHZMM05sY25acFkyVmhZMk52ZFc1MEwzTmxjblpwWTJVdFlXTmpiM1Z1ZEM1MWFXUWlPaUpoTkRKaE9EUmlaQzFsWlRkakxURXhaVGd0T0dNMVl5MHdNREJqTWpsbVpERmpNR1lpTENKemRXSWlPaUp6ZVhOMFpXMDZjMlZ5ZG1salpXRmpZMjkxYm5RNmEzVmlaUzF6ZVhOMFpXMDZaR1ZtWVhWc2RDSjkuSTBqQnNkVk1udUw1Q2J2VEtTTGtvcFFyd1h4NTlPNWt0YnVJUHVaemVNTjJjdmNvTE9icS1Xa0NRWWVaaDEwdUFsWVBUbnAtTkxLTFhLMUlrQVpab3dzcllKVmJsQmdQVmVOUDhtOWJ4dk5HXzlMVjcyNGNOaU1aT2pfQ0ExREJEVF91eHlXWlF0eUEwZ0RpeTBRem1zMnZrVEpaZFNHQUZ6V2NVdjA1QWlsdUxaUUhLZmMyOWpuVGJERUhxT2U1UXU2cjRXd05qLTA0SE5qUzFpMHpzUGFkbmR0bzVSaUgtcThaSTVVT3hsNGYyUXlTMlJrWmdtV0tEM2tRaVBWUHpLZDRqRmJsLWhHN3VhQjdBSUVwcHBaUzVYby1USEFhRjJTSi1SUUJfenhDTG42QUZhU0EwcVhrYWhGYmpET0s0OTlZRTVlblJrNkpIRmZVWnR0YmlB

kind: Secret

metadata:

annotations:

kubernetes.io/service-account.name: default

kubernetes.io/service-account.uid: a42a84bd-ee7c-11e8-8c5c-000c29fd1c0f

creationTimestamp: 2018-11-22T17:32:53Z

name: default-token-zk5rj

namespace: kube-system

resourceVersion: "175"

selfLink: /api/v1/namespaces/kube-system/secrets/default-token-zk5rj

uid: a42daa94-ee7c-11e8-8c5c-000c29fd1c0f

type: kubernetes.io/service-account-token
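Note that `kubectl describe secret` prints the token already decoded, while the `data` fields shown by `-o yaml` are base64-encoded. The sketch below decodes such a field by hand and wraps the lookup in a helper; the function names `decode_field` and `secret_token` are made up for this illustration, and the `namespace` value is the one from the YAML output above.

```shell
#!/bin/sh
# Decode one base64-encoded value copied from the `data:` section of a secret.
decode_field() {
    printf '%s' "$1" | base64 --decode
}

# Fetch and decode the token of a named secret in one step
# (requires kubectl with access to the cluster).
# Usage: secret_token admin-user-token-4zdgw kube-system
secret_token() {
    kubectl get secret "$1" -n "$2" -o jsonpath='{.data.token}' | base64 --decode
}

# The `namespace` field from the output above decodes to the namespace name.
decode_field 'a3ViZS1zeXN0ZW0='
echo
```

The decoded value is 11 bytes long, which matches the `namespace: 11 bytes` line printed by `kubectl describe secret` earlier.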

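One-command automated installation

This runs all of the steps together and has the same effect as the step-by-step installation:

ansible-playbook 90.setup.yml

View cluster information: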

kubectl cluster-info

[root@master ~]# kubectl cluster-info

Kubernetes master is running at https://192.168.2.16:8443

CoreDNS is running at https://192.168.2.16:8443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

kubernetes-dashboard is running at https://192.168.2.16:8443/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy

View node and pod resource usage:

kubectl top node

kubectl top pod --all-namespaces

Test DNS

a) Create an nginx service

kubectl run nginx --image=nginx --expose --port=80

b) Create an alpine test pod

kubectl run b1 -it --rm --image=alpine /bin/sh  # opens a shell inside the alpine pod

nslookup nginx.default.svc.cluster.local  # run inside the pod; the result looks like this

Address 1: 10.68.167.102 nginx.default.svc.cluster.local

Add a node

1) Set up passwordless login from the deploy node to the new node

ssh-copy-id NEW_NODE_IP

2) Edit /etc/ansible/hosts

[new-node]

192.168.2.15

3) Run the install playbook

ansible-playbook /etc/ansible/20.addnode.yml

4) Verify

kubectl get node

kubectl get pod -n kube-system -o wide

5) Follow-up

Edit /etc/ansible/hosts and move every IP in the new-node group into the kube-node group.
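The follow-up edit can be scripted. The sketch below is one possible way to do it with `awk`, assuming a plain INI-style inventory in which group headers are written exactly as `[kube-node]` and `[new-node]` at the start of a line; the function name `promote_new_nodes` is made up for this illustration. It writes the result to standard output and never modifies the inventory in place.

```shell
#!/bin/sh
# Print a copy of the inventory in which every host line under [new-node]
# has been moved to the end of the [kube-node] group.
# Usage: promote_new_nodes /etc/ansible/hosts > /tmp/hosts.new
promote_new_nodes() {
    inventory="$1"
    awk '
        # A host line is anything that is neither blank nor a comment.
        function is_host(line) {
            return line !~ /^[ \t]*#/ && line !~ /^[ \t]*$/
        }
        # Emit the host lines collected from [new-node].
        function flush_moved(   i) {
            for (i = 1; i <= n; i++) print moved[i]
        }
        # First pass over the file: remember the hosts listed under [new-node].
        NR == FNR {
            if ($0 ~ /^\[/) first = $0
            else if (first == "[new-node]" && is_host($0)) moved[++n] = $0
            next
        }
        # Second pass: when leaving [kube-node], append the remembered hosts.
        /^\[/ {
            if (current == "[kube-node]") flush_moved()
            current = $0
            print
            next
        }
        # Drop the host lines from [new-node]; they were emitted elsewhere.
        current == "[new-node]" && is_host($0) { next }
        { print }
        # Handle the case where [kube-node] is the last group in the file.
        END { if (current == "[kube-node]") flush_moved() }
    ' "$inventory" "$inventory"
}
```

Review the generated file before copying it over /etc/ansible/hosts, since the function only understands the simple layout described above.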

Add a master node (omitted)

See https://github.com/gjmzj/kubeasz/blob/master/docs/op/AddMaster.md

Upgrade the cluster

1) Back up etcd

ETCDCTL_API=3 etcdctl snapshot save backup.db

Inspect the backup file:

ETCDCTL_API=3 etcdctl --write-out=table snapshot status backup.db

2) Go to the root directory of the kubeasz project

cd /dir/to/kubeasz

Pull the latest code:

git pull origin master

3) Download the Kubernetes binary package for the target version (Baidu Netdisk: https://pan.baidu.com/s/1c4RFaA#list/path=%2F), extract it, and replace the binaries under /etc/ansible/bin/

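4) Upgrade Docker (omitted). Unless there is a specific need, upgrading Docker frequently is not recommended.

5) If a service interruption is acceptable, run:

ansible-playbook -t upgrade_k8s,restart_dockerd 22.upgrade.yml

6) If even a brief interruption is not acceptable, do this instead:

a) Run:

ansible-playbook -t upgrade_k8s 22.upgrade.yml

b) On every node, one at a time:

kubectl cordon and kubectl drain  # migrate the workload pods

systemctl restart docker

kubectl uncordon  # resume pod scheduling

Backup and restore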

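1) How backup and restore work:

Backup: save the data of the running etcd cluster to a file on disk.

Restore: load the etcd backup file back into the etcd cluster, then rebuild the whole cluster from it.

2) For a cluster created with kubeasz, back up the CA certificate files and the Ansible hosts file in addition to the etcd data.

3) Manual procedure:

mkdir -p ~/backup/k8s  # create the backup directory

ETCDCTL_API=3 etcdctl snapshot save ~/backup/k8s/snapshot.db  # back up the etcd data

cp /etc/kubernetes/ssl/ca* ~/backup/k8s/  # back up the CA certificates

Run on the deploy node:

ansible-playbook /etc/ansible/99.clean.yml  # simulate a cluster crash

Recovery steps (on the deploy node):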

a) Restore the CA certificates

mkdir -p /etc/kubernetes/ssl /backup/k8s

cp ~/backup/k8s/* /backup/k8s/

cp /backup/k8s/ca* /etc/kubernetes/ssl/

b) Rebuild the cluster

Only the first five steps are needed; everything else is stored in etcd.

cd /etc/ansible

ansible-playbook 01.prepare.yml

ansible-playbook 02.etcd.yml

ansible-playbook 03.docker.yml

ansible-playbook 04.kube-master.yml

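ansible-playbook 05.kube-node.yml

c) Restore the etcd data

Stop the service:

ansible etcd -m service -a 'name=etcd state=stopped'

Clear the data files:

ansible etcd -m file -a 'name=/var/lib/etcd/member/ state=absent'

Log in to every etcd node and, referring to that node's /etc/systemd/system/etcd.service unit file, replace the {{ }} variables in the command below with that node's values (the example shows etcd1), then run it: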

cd /backup/k8s/

ETCDCTL_API=3 etcdctl snapshot restore snapshot.db \
--name etcd1 \
--initial-cluster etcd1=https://192.168.2.10:2380,etcd2=https://192.168.2.11:2380,etcd3=https://192.168.2.12:2380 \
--initial-cluster-token etcd-cluster-0 \
--initial-advertise-peer-urls https://192.168.2.10:2380

After the step above, a {{ NODE_NAME }}.etcd directory is created.

cp -r etcd1.etcd/member /var/lib/etcd/

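systemctl restart etcd

d) Rebuild the network from the deploy node

ansible-playbook /etc/ansible/tools/change_k8s_network.yml

4) If you would rather not restore manually, Ansible can do it automatically

First take a one-command backup:

ansible-playbook /etc/ansible/23.backup.yml

Check that files exist under /etc/ansible/roles/cluster-backup/files: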

tree /etc/ansible/roles/cluster-backup/files/  # the output looks like this

├── ca # cluster CA backups

│   ├── ca-config.json

│   ├── ca.csr

│   ├── ca-csr.json

│   ├── ca-key.pem

│   └── ca.pem

├── hosts # ansible hosts backups

│   ├── hosts # most recent backup

│   └── hosts-201807231642

├── readme.md

└── snapshot # etcd data backups

    ├── snapshot-201807231642.db

    └── snapshot.db # most recent backup
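When several timestamped snapshots accumulate in that directory, it helps to see which one is the newest before restoring. The sketch below assumes the `snapshot-YYYYMMDDHHMM.db` naming shown in the tree above, where the timestamps sort chronologically as plain text; the function name `latest_snapshot` is made up for this illustration.

```shell
#!/bin/sh
# Print the path of the newest timestamped snapshot in a directory.
# Usage: latest_snapshot /etc/ansible/roles/cluster-backup/files/snapshot
latest_snapshot() {
    dir="$1"
    latest=""
    for f in "$dir"/snapshot-*.db; do
        [ -e "$f" ] || continue   # the pattern matched nothing
        latest="$f"               # patterns expand in sorted order, so the last match is the newest
    done
    [ -n "$latest" ] && printf '%s\n' "$latest"
}
```

The plain `snapshot.db` file is deliberately not matched, since the tree above marks it as a copy of the most recent backup.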

Simulate a failure:

ansible-playbook /etc/ansible/99.clean.yml

Edit /etc/ansible/roles/cluster-restore/defaults/main.yml to specify which etcd snapshot to restore; if left unchanged, the most recent one is used.

Restore:

ansible-playbook /etc/ansible/24.restore.yml

ansible-playbook /etc/ansible/tools/change_k8s_network.yml

Optional

Apply OS-level security hardening to all cluster nodes:

ansible-playbook roles/os-harden/os-harden.yml

For details, see the os-harden project.

References:

This document is based on https://github.com/gjmzj/kubeasz

Further reading: deploying a cluster with kubeadm https://blog.frognew.com/2018/08/kubeadm-install-kubernetes-1.11.html