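MongoDB Sharding + Replica Set Configuration

1: Introduction to Sharding

Sharding is a database cluster architecture that scales massive data sets horizontally: data is partitioned and stored across the nodes of the sharded cluster, and with a little configuration users can build a distributed MongoDB cluster.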

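MongoDB's unit of data partitioning is the chunk. Each chunk is a contiguous range of records in a collection. The default maximum chunk size in MongoDB 2.6 is 64 MB (older references cite 200 MB); a chunk that grows beyond the limit is split into new chunks.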
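To make the split behaviour concrete, here is a minimal JavaScript sketch of a chunk as a half-open key range that is split in two once it holds too many documents. It is a simplified model under stated assumptions, not MongoDB's implementation: `MAX_DOCS_PER_CHUNK` and `splitIfTooLarge` are hypothetical names, and a document count stands in for the real size limit in megabytes.

```javascript
// Simplified model: a chunk covers the half-open key range [min, max)
// and remembers the shard key values it currently holds.
const MAX_DOCS_PER_CHUNK = 4; // hypothetical limit standing in for the size limit

// Split a chunk at its median key when it holds too many documents.
function splitIfTooLarge(chunk) {
  if (chunk.keys.length <= MAX_DOCS_PER_CHUNK) {
    return [chunk];
  }
  const sorted = [...chunk.keys].sort((a, b) => a - b);
  const splitPoint = sorted[Math.floor(sorted.length / 2)];
  return [
    { min: chunk.min, max: splitPoint, keys: sorted.filter((k) => k < splitPoint) },
    { min: splitPoint, max: chunk.max, keys: sorted.filter((k) => k >= splitPoint) },
  ];
}

const chunk = { min: -Infinity, max: Infinity, keys: [7, 1, 9, 3, 5, 11] };
const parts = splitIfTooLarge(chunk);
console.log(parts.map((c) => `[${c.min}, ${c.max}) holds ${c.keys.length} docs`));
```

The two resulting ranges share the split point as a boundary, so together they still cover exactly the original range.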

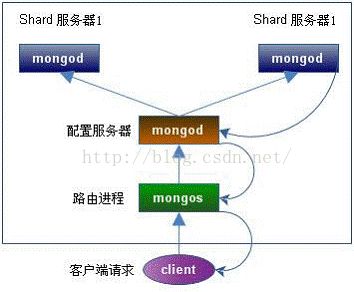

Building a MongoDB sharded cluster requires three roles:

Shard Server

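A shard stores the actual data. Each shard can be a single mongod instance or a group of mongod instances forming a replica set. To get automatic failover inside each shard, MongoDB officially recommends making every shard a replica set.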

Config Server

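To store a collection across multiple shards, you specify a shard key for it, for example {age: 1}; the shard key determines which chunk a record belongs to. The config servers store the configuration of all shard nodes, the shard key range of every chunk, the distribution of chunks across the shards, and the sharding configuration of every database and collection in the cluster.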

Route Process

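This is the front-end router (mongos) that clients connect to. It asks the config servers which shard to query or write to, connects to that shard to perform the operation, and returns the result to the client. The client simply sends the routing process the same queries and updates it would send to a mongod, without caring which shard holds the records.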
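The lookup the router performs can be sketched in a few lines of JavaScript. This is only an illustration of the idea: the chunk table, the shard names `shardA` and `shardB`, and the function `routeByShardKey` are hypothetical, and a real mongos caches this metadata from the config servers rather than holding a literal array.

```javascript
// Hypothetical chunk metadata for a collection sharded on { age: 1 }.
// Each entry maps the half-open key range [min, max) to a shard.
const chunkTable = [
  { min: -Infinity, max: 18, shard: "shardA" },
  { min: 18, max: 40, shard: "shardB" },
  { min: 40, max: Infinity, shard: "shardA" },
];

// The router's job in miniature: find the chunk whose range contains the key.
function routeByShardKey(table, key) {
  const chunk = table.find((c) => key >= c.min && key < c.max);
  if (!chunk) {
    throw new Error(`no chunk covers key ${key}`);
  }
  return chunk.shard;
}

console.log(routeByShardKey(chunkTable, 25)); // shardB
console.log(routeByShardKey(chunkTable, 40)); // shardA
```

Note that one shard can own several non-adjacent ranges, which is why the table is keyed by chunk rather than by shard.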

Below we build a simple sharded cluster on a single physical machine:

The architecture is as follows:

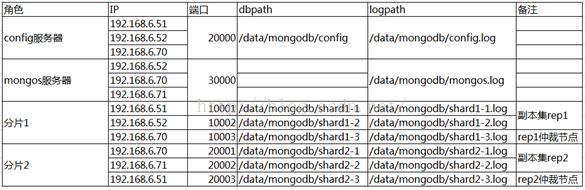

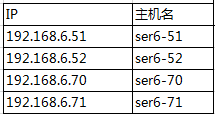

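2: Test Environment

The experiment simulates four machines:

One mongod instance is created on each of 192.168.6.51, 192.168.6.52, and 192.168.6.70; together they form replica set rep1, which serves as shard 1.

Likewise, one mongod instance is created on each of 192.168.6.70, 192.168.6.71, and 192.168.6.51; together they form replica set rep2, which serves as shard 2.

Three config servers and three mongos servers are also created, for 12 MongoDB instances in total.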
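As a quick sanity check of the instance count, the layout can be written down as data and tallied. The host assignments for the config servers and mongos servers are taken from the startup steps in section 3; the variable names are only illustrative.

```javascript
// Roles hosted on each machine in this walkthrough.
const layout = {
  "192.168.6.51": ["config server", "rep1 member", "rep2 member"],
  "192.168.6.52": ["config server", "mongos", "rep1 member"],
  "192.168.6.70": ["config server", "mongos", "rep1 member", "rep2 member"],
  "192.168.6.71": ["mongos", "rep2 member"],
};

// Count instances per host and in total.
const perHost = {};
let total = 0;
for (const [host, roles] of Object.entries(layout)) {
  perHost[host] = roles.length;
  total += roles.length;
}

console.log(perHost);
console.log(`total instances: ${total}`);
```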

3: Procedure

3.1 Download and extract MongoDB

Download and extract MongoDB on all four servers.

tar -xvf mongodb-linux-x86_64-2.6.9.tgz

# For easier management, move the installation to /data

mv mongodb-linux-x86_64-2.6.9 /data/mongodb

3.2 Create the directories and files

# Create the data directory for the config servers

[root@ser6-51 data]# mkdir -p /data/mongodb/config

[root@ser6-52 data]# mkdir -p /data/mongodb/config

[root@ser6-70 data]# mkdir -p /data/mongodb/config

# mongos server log files

[root@ser6-52 data]# touch /data/mongodb/mongos.log

[root@ser6-70 data]# touch /data/mongodb/mongos.log

[root@ser6-71 data]# touch /data/mongodb/mongos.log

# Shards

# Create the shard data directories

[root@ser6-51 data]# mkdir /data/mongodb/shard1-1

[root@ser6-52 data]# mkdir /data/mongodb/shard1-2

[root@ser6-70 data]# mkdir /data/mongodb/shard1-3

[root@ser6-70 data]# mkdir /data/mongodb/shard2-1

[root@ser6-71 data]# mkdir /data/mongodb/shard2-2

[root@ser6-51 data]# mkdir /data/mongodb/shard2-3

# Create the shard log files

[root@ser6-51 data]# touch /data/mongodb/shard1-1.log

[root@ser6-52 data]# touch /data/mongodb/shard1-2.log

[root@ser6-70 data]# touch /data/mongodb/shard1-3.log

[root@ser6-70 data]# touch /data/mongodb/shard2-1.log

[root@ser6-71 data]# touch /data/mongodb/shard2-2.log

[root@ser6-51 data]# touch /data/mongodb/shard2-3.log

3.3 Configure PATH

Configure PATH on each server, taking 192.168.6.51 as an example:

[root@ser6-51 dbs]# vi /root/.bash_profile

Append the following to the end of the PATH line:

:/data/mongodb/bin

[root@ser6-51 dbs]# source /root/.bash_profile

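3.4 Start the config servers

The config servers must be started first, because the mongos servers depend on the configuration they hold.

A config server is started just like an ordinary mongod.

Config servers do not need much space or many resources (200 MB of actual data takes roughly 1 KB of config space).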
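The rule of thumb above turns into a one-line estimate. The sketch below simply applies the stated ratio of about 1 KB of config space per 200 MB of data; `estimateConfigSpaceKB` is a hypothetical helper name, and the result is a rough guide rather than a measured figure.

```javascript
// Rule of thumb from the text: about 1 KB of config data per 200 MB of real data.
const DATA_MB_PER_CONFIG_KB = 200;

// Estimate config server space (in KB) for a given amount of data (in MB).
function estimateConfigSpaceKB(dataMB) {
  return dataMB / DATA_MB_PER_CONFIG_KB;
}

const oneTerabyteInMB = 1024 * 1024;
console.log(`${estimateConfigSpaceKB(oneTerabyteInMB).toFixed(2)} KB for 1 TB of data`);
```

Even a terabyte of data works out to only a few megabytes of config metadata under this estimate, which is why the config servers can run on modest hardware.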

Run the following on each of 192.168.6.51, 192.168.6.52, and 192.168.6.70:

mongod --dbpath=/data/mongodb/config --logpath=/data/mongodb/config.log --fork --logappend --port 20000

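# Open the firewall port

If the firewall is enabled, the port must be opened so that other servers can connect to this MongoDB server remotely.

vi /etc/sysconfig/iptables

Directly below the existing -A INPUT rules in that file,

add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 20000 -j ACCEPT

Restart the firewall: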

[root@ser6-70 mongodb]# /etc/init.d/iptables restart

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

iptables: Applying firewall rules: [ OK ]

3.5 Start the mongos servers

Start mongos on 192.168.6.52, 192.168.6.70, and 192.168.6.71:

mongos --logpath=/data/mongodb/mongos.log --configdb=192.168.6.51:20000,192.168.6.52:20000,192.168.6.70:20000 --fork --logappend --port 30000

--configdb lists the IP address and port of each config server; the three config servers are separated by commas.
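Because the same --configdb string has to be typed identically on every mongos host, it can help to build it from a single list. The following is a small sketch of that idea; the variable names are illustrative, and the flags are exactly those used in the command above.

```javascript
// Config server hosts and port used in this walkthrough.
const configServers = ["192.168.6.51", "192.168.6.52", "192.168.6.70"];
const CONFIG_PORT = 20000;

// --configdb expects a comma-separated list of host:port pairs.
const configdb = configServers.map((ip) => `${ip}:${CONFIG_PORT}`).join(",");

// Assemble the mongos command line shown in this section.
const mongosCommand = [
  "mongos",
  "--logpath=/data/mongodb/mongos.log",
  `--configdb=${configdb}`,
  "--fork",
  "--logappend",
  "--port 30000",
].join(" ");

console.log(mongosCommand);
```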

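# Open the firewall port

If the firewall is enabled, the port must be opened so that other servers can connect to this MongoDB server remotely.

vi /etc/sysconfig/iptables

Directly below the existing -A INPUT rules in that file,

add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 30000 -j ACCEPT

Restart the firewall: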

[root@ser6-51 mongodb]# /etc/init.d/iptables restart

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

iptables: Applying firewall rules: [ OK ]

3.6 Start the shards

3.6.1 Shard 1

3.6.1.1 Start the replica set members

#192.168.6.51

[root@ser6-51 data]# mongod --dbpath=/data/mongodb/shard1-1 --logpath=/data/mongodb/shard1-1.log --fork --logappend --replSet rep1 --port 10001

about to fork child process, waiting until server is ready for connections.

forked process: 15809

child process started successfully, parent exiting

#192.168.6.52

[root@ser6-52 data]# mongod --dbpath=/data/mongodb/shard1-2 --logpath=/data/mongodb/shard1-2.log --fork --logappend --replSet rep1 --port 10002

about to fork child process, waiting until server is ready for connections.

forked process: 11570

child process started successfully, parent exiting

#192.168.6.70

[root@ser6-70 data]# mongod --dbpath=/data/mongodb/shard1-3 --logpath=/data/mongodb/shard1-3.log --fork --logappend --replSet rep1 --port 10003

about to fork child process, waiting until server is ready for connections.

forked process: 15000

child process started successfully, parent exiting

3.6.1.2 Open the firewall ports

vi /etc/sysconfig/iptables

Directly below the existing -A INPUT rules in that file:

#192.168.6.51

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 10001 -j ACCEPT

#192.168.6.52

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 10002 -j ACCEPT

#192.168.6.70

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 10003 -j ACCEPT

Restart the firewall:

[root@ser6-51 mongodb]# /etc/init.d/iptables restart

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

iptables: Applying firewall rules: [ OK ]

3.6.1.3 Initialize the replica set

Connect to one of the members; the initialization command can only be run once.

[root@ser6-51 data]# mongo --port 10001

MongoDB shell version: 2.6.9

connecting to: 127.0.0.1:10001/test

> use admin;

switched to db admin

> config =

... { _id:"rep1",

... members:[ {_id:0,host:"192.168.6.51:10001"},

... {_id:1,host:"192.168.6.52:10002"}]

... }

{

"_id" : "rep1",

"members" : [

{

"_id" : 0,

"host" : "192.168.6.51:10001"

},

{

"_id" : 1,

"host" : "192.168.6.52:10002"

}

]

}

> rs.initiate(config);

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}

/*

config =

{ _id:"rep1",

members:[ {_id:0,host:"192.168.6.51:10001"},

{_id:1,host:"192.168.6.52:10002"}]

}

*/

# Add the arbiter

rep1:PRIMARY> rs.addArb("192.168.6.70:10003")

{ "ok" : 1 }
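The reason for adding an arbiter to two data-bearing members is that a primary can only be elected by a majority of the voting members. The arithmetic is sketched below; `majority` and `canElectPrimary` are hypothetical helper names, and the model ignores priorities and other election details.

```javascript
// A primary needs votes from a strict majority of the voting members.
function majority(votingMembers) {
  return Math.floor(votingMembers / 2) + 1;
}

// Can the members that are still reachable elect a primary?
function canElectPrimary(votingMembers, reachableMembers) {
  return reachableMembers >= majority(votingMembers);
}

// Two data-bearing members only: losing one leaves 1 of 2 votes.
console.log(canElectPrimary(2, 1)); // false

// Two data-bearing members plus an arbiter: losing one leaves 2 of 3 votes.
console.log(canElectPrimary(3, 2)); // true
```

The arbiter stores no data; it only contributes the third vote that lets the surviving data-bearing member take over.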

3.6.1.4 Check replication status

> db.printSlaveReplicationInfo();

source: 192.168.6.52:10002

syncedTo: Fri Jul 24 2015 15:38:54 GMT+0800 (CST)

0 secs (0 hrs) behind the primary

rep1:PRIMARY>

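3.6.1.5 Allow reads on secondaries

# By default MongoDB reads and writes through the primary; reads on secondaries are not allowed until explicitly enabled.

# Apply this setting on all nodes

Edit the .mongorc.js file in the home directory of the root and mongodb users.

For example:

vi /root/.mongorc.js

Add the line: rs.slaveOk();

After the change, log in to mongo again and the secondary becomes readable (the setting does not affect the current session; a new login is required).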

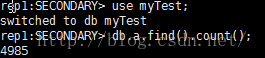

3.6.1.6 Verify replication

Create a collection on the primary and check whether it replicates to the other members.

# Primary

rep1:PRIMARY> use dba;

switched to db dba

rep1:PRIMARY> db.createCollection("a");

{ "ok" : 1 }

rep1:PRIMARY> show tables;

a

system.indexes

# Secondary

[root@ser6-52 data]# mongo --port 10002

MongoDB shell version: 2.6.9

connecting to: 127.0.0.1:10002/test

rep1:SECONDARY> show dbs;

admin (empty)

dba 0.078GB

local 1.078GB

rep1:SECONDARY> use dba;

switched to db dba

rep1:SECONDARY> show tables;

a

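system.indexes

The collection has replicated, so the replica set is configured correctly.

3.6.1.7 Verify failover

Shut down the primary (db.shutdownServer() must be run from the admin database, so switch with use admin first):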

rep1:PRIMARY> db.shutdownServer()

2015-07-24T16:43:54.645+0800 DBClientCursor::init call() failed

server should be down...

2015-07-24T16:43:54.647+0800 trying reconnect to 127.0.0.1:10001 (127.0.0.1) failed

2015-07-24T16:43:54.647+0800 reconnect 127.0.0.1:10001 (127.0.0.1) ok

2015-07-24T16:43:54.653+0800 Socket recv() errno:104 Connection reset by peer 127.0.0.1:10001

2015-07-24T16:43:54.653+0800 SocketException: remote: 127.0.0.1:10001 error: 9001 socket exception [RECV_ERROR] server [127.0.0.1:10001]

2015-07-24T16:43:54.653+0800 DBClientCursor::init call() failed

2015-07-24T16:43:54.656+0800 trying reconnect to 127.0.0.1:10001 (127.0.0.1) failed

2015-07-24T16:43:54.656+0800 warning: Failed to connect to 127.0.0.1:10001, reason: errno:111 Connection refused

2015-07-24T16:43:54.656+0800 reconnect 127.0.0.1:10001 (127.0.0.1) failed failed couldn't connect to server 127.0.0.1:10001 (127.0.0.1), connection attempt failed

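Run any command on the secondary and you can see that it has changed from secondary to primary.

After verifying, start the node that was shut down again.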

[root@ser6-51 data]# mongod --dbpath=/data/mongodb/shard1-1 --logpath=/data/mongodb/shard1-1.log --fork --logappend --replSet rep1 --port 10001

about to fork child process, waiting until server is ready for connections.

forked process: 17407

child process started successfully, parent exiting

3.6.2 Shard 2

3.6.2.1 Start the replica set members

#192.168.6.70

[root@ser6-70 ~]# mongod --dbpath=/data/mongodb/shard2-1 --logpath=/data/mongodb/shard2-1.log --fork --logappend --replSet rep2 --port 20001

about to fork child process, waiting until server is ready for connections.

forked process: 16480

child process started successfully, parent exiting

#192.168.6.71

[root@ser6-71 mongodb]# mongod --dbpath=/data/mongodb/shard2-2 --logpath=/data/mongodb/shard2-2.log --fork --logappend --replSet rep2 --port 20002

about to fork child process, waiting until server is ready for connections.

forked process: 2061

child process started successfully, parent exiting

#192.168.6.51

[root@ser6-51 data]# mongod --dbpath=/data/mongodb/shard2-3 --logpath=/data/mongodb/shard2-3.log --fork --logappend --replSet rep2 --port 20003

about to fork child process, waiting until server is ready for connections.

forked process: 17532

child process started successfully, parent exiting

3.6.2.2 Open the firewall ports

vi /etc/sysconfig/iptables

Directly below the existing -A INPUT rules in that file:

#192.168.6.70

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 20001 -j ACCEPT

#192.168.6.71

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 20002 -j ACCEPT

#192.168.6.51

Add the line:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 20003 -j ACCEPT

Restart the firewall:

[root@ser6-51 mongodb]# /etc/init.d/iptables restart

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

iptables: Applying firewall rules: [ OK ]
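With every instance now started, it is easy to lose track of which ports each host must accept. The sketch below gathers the ports used in this walkthrough per host and prints the matching iptables lines in the same form as above. The helper names `ruleFor` and `rulesForHost` are hypothetical; the rule text itself is copied from this article.

```javascript
// Ports used in this walkthrough, grouped by host.
const portsByHost = {
  "192.168.6.51": [20000, 10001, 20003], // config server, rep1 member, rep2 member
  "192.168.6.52": [20000, 30000, 10002], // config server, mongos, rep1 member
  "192.168.6.70": [20000, 30000, 10003, 20001], // config server, mongos, rep1 and rep2 members
  "192.168.6.71": [30000, 20002], // mongos, rep2 member
};

// Build the iptables rule line for one TCP port.
function ruleFor(port) {
  return `-A INPUT -m state --state NEW -m tcp -p tcp --dport ${port} -j ACCEPT`;
}

// All rule lines a given host needs in /etc/sysconfig/iptables.
function rulesForHost(host) {
  const ports = portsByHost[host];
  if (!ports) {
    throw new Error(`unknown host ${host}`);
  }
  return ports.map(ruleFor);
}

for (const host of Object.keys(portsByHost)) {
  console.log(`# ${host}`);
  rulesForHost(host).forEach((rule) => console.log(rule));
}
```

Comparing this list against each host's iptables file is a quick way to spot a forgotten port before moving on to adding the shards.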

3.6.2.3 Initialize the replica set

Connect to one of the members; the initialization command can only be run once.

[root@ser6-70 ~]# mongo --port 20001

MongoDB shell version: 2.6.9

connecting to: 127.0.0.1:20001/test

> use admin;

> config =

... { _id:"rep2",

... members:[ {_id:0,host:"192.168.6.70:20001"},

... {_id:1,host:"192.168.6.71:20002"},

... {_id: 2, host: '192.168.6.51:20003',arbiterOnly:true}]

... }

{

"_id" : "rep2",

"members" : [

{

"_id" : 0,

"host" : "192.168.6.70:20001"

},

{

"_id" : 1,

"host" : "192.168.6.71:20002"

},

{

"_id" : 2,

"host" : "192.168.6.51:20003",

"arbiterOnly" : true

}

]

}

> rs.initiate(config);

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}

/*

use admin;

config =

{ _id:"rep2",

members:[ {_id:0,host:"192.168.6.70:20001"},

{_id:1,host:"192.168.6.71:20002"},

{_id: 2, host: '192.168.6.51:20003',arbiterOnly:true}]

}

*/

3.6.2.4 Check replication status

rep2:PRIMARY> db.printSlaveReplicationInfo();

source: 192.168.6.71:20002

syncedTo: Fri Jul 24 2015 16:57:43 GMT+0800 (CST)

0 secs (0 hrs) behind the primary

3.6.2.5 Allow reads on secondaries

vi /root/.mongorc.js

Add the line: rs.slaveOk();

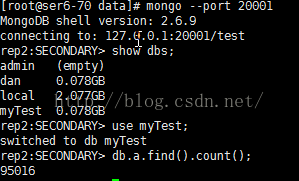

3.6.2.6 Verify replication

Create a collection on the primary and check whether it replicates to the other members.

# Primary

rep2:PRIMARY> use dan;

switched to db dan

rep2:PRIMARY> db.createCollection("b");

{ "ok" : 1 }

rep2:PRIMARY> show tables;

b

# Secondary

[root@ser6-71 mongodb]# mongo --port 20002

MongoDB shell version: 2.6.9

connecting to: 127.0.0.1:20002/test

rep2:SECONDARY> show dbs;

admin (empty)

dan 0.078GB

local 2.077GB

rep2:SECONDARY> use dan;

switched to db dan

rep2:SECONDARY> show tables;

b

system.indexes

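The collection has replicated, so the replica set is configured correctly.

After the test succeeds, drop that database.

3.6.2.7 Verify failover

Shut down the primary: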

rep2:PRIMARY> use admin;

switched to db admin

rep2:PRIMARY> db.shutdownServer()

2015-07-24T17:01:55.603+0800 DBClientCursor::init call() failed

server should be down...

2015-07-24T17:01:55.648+0800 trying reconnect to 127.0.0.1:20001 (127.0.0.1) failed

2015-07-24T17:01:55.649+0800 warning: Failed to connect to 127.0.0.1:20001, reason: errno:111 Connection refused

2015-07-24T17:01:55.649+0800 reconnect 127.0.0.1:20001 (127.0.0.1) failed failed couldn't connect to server 127.0.0.1:20001 (127.0.0.1), connection attempt failed

Run any command on the secondary and you can see that it has changed from secondary to primary.

Start the previously stopped node again:

[root@ser6-70 ~]# mongod --dbpath=/data/mongodb/shard2-1 --logpath=/data/mongodb/shard2-1.log --fork --logappend --replSet rep2 --port 20001

about to fork child process, waiting until server is ready for connections.

forked process: 16871

child process started successfully, parent exiting

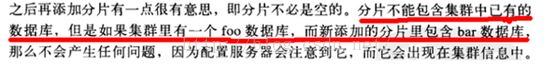

3.7 Add the shards

# Note: adding shards and sharding data are both done on a mongos server.

Log in to a mongos server.

# Add the shards

[root@ser6-52 data]# mongo --port 30000

MongoDB shell version: 2.6.9

connecting to: 127.0.0.1:30000/test

mongos> use admin;

switched to db admin

mongos> db.runCommand({addshard:"rep1/192.168.6.51:10001",allowLocal:true });

{ "shardAdded" : "rep1", "ok" : 1 }

mongos> db.runCommand({addshard:"rep2/192.168.6.70:20001",allowLocal:true });

{ "shardAdded" : "rep2", "ok" : 1 }

# List all shards

mongos> use config;

mongos> db.shards.find();

{ "_id" : "rep1", "host" : "rep1/192.168.6.51:10001,192.168.6.52:10002" }

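{ "_id" : "rep2", "host" : "rep2/192.168.6.70:20001,192.168.6.71:20002" }

3.8 Shard the data

By default, stored data is not sharded; sharding has to be enabled at both the database level and the collection level.

Here we use myTest.a as the example.

3.8.1 Enable sharding for the database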

mongos> use admin;

switched to db admin

mongos> db.runCommand({"enablesharding":"myTest"});

{ "ok" : 1 }

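3.8.2 Enable sharding for the collection

Enabling sharding on a database only makes its collections eligible for sharding; until a collection is itself sharded, it stays entirely on the database's primary shard.

To spread a single collection across shards, that collection must be sharded separately.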

mongos> db.runCommand({"shardcollection":"myTest.a","key":{"id":1}});

{ "collectionsharded" : "myTest.a", "ok" : 1 }

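Enabling sharding on the collection creates it automatically.

Note: the command must be run from the admin database.

Shard key: the key above is the shard key. MongoDB does not allow inserting a document that lacks the shard key.

However, different documents may have shard key values of different types; MongoDB defines an internal ordering across types.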
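The cross-type ordering can be illustrated with a small comparator. The ranks below follow MongoDB's documented comparison order (MinKey, Null, Numbers, Symbol/String, Object, Array, BinData, ObjectId, Boolean, Date, Timestamp, Regular Expression, MaxKey), but only the types that plain JavaScript values can represent are modelled. `typeRank` and `compareKeys` are hypothetical names, and string comparison uses JavaScript's own ordering as a stand-in.

```javascript
// Rank of a JavaScript value in MongoDB's cross-type comparison order.
// Only a subset of BSON types is covered by this sketch.
function typeRank(value) {
  if (value === null) return 2;             // Null
  if (typeof value === "number") return 3;  // Numbers
  if (typeof value === "string") return 4;  // String
  if (Array.isArray(value)) return 6;       // Array
  if (value instanceof Date) return 10;     // Date
  if (typeof value === "boolean") return 9; // Boolean
  if (typeof value === "object") return 5;  // Object (embedded document)
  throw new Error("type not covered by this sketch");
}

// Compare two shard key values: first by type rank, then within the type.
function compareKeys(a, b) {
  const rankDiff = typeRank(a) - typeRank(b);
  if (rankDiff !== 0) return rankDiff;
  if (typeof a === "number") return a - b;
  if (typeof a === "string") return a < b ? -1 : a > b ? 1 : 0;
  return 0; // ordering within other types is omitted here
}

const keys = ["abc", 42, true, null, 3.5, new Date(0)];
const sorted = [...keys].sort(compareKeys);
console.log(sorted.map((k) => (k instanceof Date ? "Date" : JSON.stringify(k))));
```

Because every value has a defined position, chunk ranges can still partition the whole key space even when documents use different types for the shard key field.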

mongos> use config;

switched to db config

mongos> db.databases.find();

{ "_id" : "admin", "partitioned" : false, "primary" : "config" }

{ "_id" : "dba", "partitioned" : false, "primary" : "rep1" }

{ "_id" : "test", "partitioned" : false, "primary" : "rep1" }

{ "_id" : "dan", "partitioned" : false, "primary" : "rep2" }

{ "_id" : "myTest", "partitioned" : true, "primary" : "rep1" }

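We can see that the myTest database is now sharded (partitioned: true).

We can also see the dba and dan databases created earlier when verifying replica set replication.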

mongos> db.chunks.find();

{ "_id" : "myTest.a-id_MinKey", "lastmod" : Timestamp(1, 0), "lastmodEpoch" : ObjectId("55b200b52c2fd90d85570ab7"), "ns" : "myTest.a", "min" : { "id" : { "$minKey" : 1 } }, "max" : { "id" : { "$maxKey" : 1 } }, "shard" : "rep1" }

Chunks: the key to understanding MongoDB's sharding mechanism is understanding chunks.

MongoDB does not store one single range per shard; each shard holds multiple ranges, and each of those ranges is a chunk.

3.9 Sharding test

mongos> use myTest;

switched to db myTest

mongos> for(i=0;i<100000;i++){ db.a.insert({"id":i,"Name":"baidandan","Date":new Date()}); }

WriteResult({ "nInserted" : 1 })

mongos> use config;

switched to db config

mongos> db.chunks.find();

{ "_id" : "myTest.a-id_MinKey", "lastmod" : Timestamp(2, 0), "lastmodEpoch" : ObjectId("55b200b52c2fd90d85570ab7"), "ns" : "myTest.a", "min" : { "id" : { "$minKey" : 1 } }, "max" : { "id" : 0 }, "shard" : "rep2" }

{ "_id" : "myTest.a-id_0.0", "lastmod" : Timestamp(3, 1), "lastmodEpoch" : ObjectId("55b200b52c2fd90d85570ab7"), "ns" : "myTest.a", "min" : { "id" : 0 }, "max" : { "id" : 4984 }, "shard" : "rep1" }

{ "_id" : "myTest.a-id_4984.0", "lastmod" : Timestamp(3, 0), "lastmodEpoch" : ObjectId("55b200b52c2fd90d85570ab7"), "ns" : "myTest.a", "min" : { "id" : 4984 }, "max" : { "id" : { "$maxKey" : 1 } }, "shard" : "rep2" }

We can see there are now three chunks: (-∞, 0) on rep2, [0, 4984) on rep1, and [4984, +∞) on rep2.

The data has been distributed across the two shards automatically.
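To see what these three ranges mean for the test data, the sketch below replays the ids 0 to 99999 against the chunk boundaries from the output above and counts how many documents each shard would hold. It assumes all 100,000 inserts completed and that no further splits or migrations happened after this snapshot; `shardFor` is a hypothetical helper, not a MongoDB API.

```javascript
// Chunk ranges copied from the db.chunks.find() output above ([min, max) on "id").
const chunks = [
  { min: -Infinity, max: 0, shard: "rep2" },
  { min: 0, max: 4984, shard: "rep1" },
  { min: 4984, max: Infinity, shard: "rep2" },
];

// Find the shard that owns a given id.
function shardFor(id) {
  return chunks.find((c) => id >= c.min && id < c.max).shard;
}

// Replay the ids inserted by the test loop and tally documents per shard.
const docsPerShard = {};
for (let id = 0; id < 100000; id++) {
  const shard = shardFor(id);
  docsPerShard[shard] = (docsPerShard[shard] || 0) + 1;
}

console.log(docsPerShard); // { rep1: 4984, rep2: 95016 }
```

Under these assumptions the distribution is still quite uneven at this point in time; the chunk ranges only say where each id lives, not that the shards hold equal amounts of data.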

Note: sh.status() gives a clear overview of the sharding state of the whole cluster, for example:

mongos> sh.status();

--- Sharding Status ---

sharding version: {

"_id" : 1,

"version" : 4,

"minCompatibleVersion" : 4,

"currentVersion" : 5,

"clusterId" : ObjectId("55b1e7592c2fd90d8557074f")

}

shards:

{ "_id" : "rep1", "host" : "rep1/192.168.6.51:10001,192.168.6.52:10002" }

{ "_id" : "rep2", "host" : "rep2/192.168.6.70:20001,192.168.6.71:20002" }

databases:

{ "_id" : "admin", "partitioned" : false, "primary" : "config" }

{ "_id" : "dba", "partitioned" : false, "primary" : "rep1" }

{ "_id" : "test", "partitioned" : false, "primary" : "rep1" }

{ "_id" : "dan", "partitioned" : false, "primary" : "rep2" }

{ "_id" : "myTest", "partitioned" : true, "primary" : "rep1" }

myTest.a

shard key: { "id" : 1 }

chunks:

rep2 2

rep1 1

{ "id" : { "$minKey" : 1 } } -->> { "id" : 0 } on : rep2 Timestamp(2, 0)

{ "id" : 0 } -->> { "id" : 4984 } on : rep1 Timestamp(3, 1)

{ "id" : 4984 } -->> { "id" : { "$maxKey" : 1 } } on : rep2 Timestamp(3, 0)

mongos>

Check the data on the shard servers:

-- Shard 1

-- Shard 2

/*

If an insert hangs and never completes, try inserting a single document:

mongos> db.a.insert({"id":1,"Name":"baidandan","Date":new Date()});

If it reports this error:

WriteResult({

"nInserted" : 0,

"writeError" : {

"code" : 82,

"errmsg" : "no progress was made executing batch write op in myTest.a after 5 rounds (0 ops completed in 6 rounds total)"

}

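})

First try restarting the MongoDB instances. If the insert still fails with this error, check that the firewall ports of all MongoDB instances are open

(this problem had me stuck for three days ~~~~(>_<)~~~~).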

*/

-- This article is based on:

http://blog.itpub.net/27000195/viewspace-1404405/ and MongoDB: The Definitive Guide.