[Python + OpenCV] Edge Extraction and Rectangle Four-Corner Detection Based on Harris Corners

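Contents

- A few things I have to say before we start

- I. References

- II. Experiment goal

- III. A note on limitations

- Now for the real content, so this heading has to be longer than the first top-level heading

- I. Approach

- (1) Based on Harris corner detection [the approach used here]

- (2) Based on the Hough transform [reference approach]

- II. Implementation

- What is my Main function doing?

- Step by step!

- (1) Correcting the image rotation

- getMAD(s): rejecting outliers with the median absolute deviation

- CalcDegree(srcImage): correcting the rotation of the image

- (2) Harris corner detection

- HarrisDetect(img)

- (3) Using a mask to restrict where corners may lie

- getMask(img, Harris_img)

- (4) Finding the four corners, marking them, and connecting them

- pointDetect(mask, imgCopy)

- Complete code

- If you just want to see the results, jump straight here

- Limitations, summarized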

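A few things I have to say before we start

I. References

- (C++) [图像处理]边缘提取以及Harris角点检测 ([Image Processing] Edge Extraction and Harris Corner Detection) [1]

- (Python) OpenCV-Python的文本透视矫正与水平矫正 (Text Perspective Correction and Horizontal Correction with OpenCV-Python) [2]

- (Python) Python - 异常值检测(绝对中位差、平均值 和 LOF) (Python - Outlier Detection: Median Absolute Deviation, Mean, and LOF) [3]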

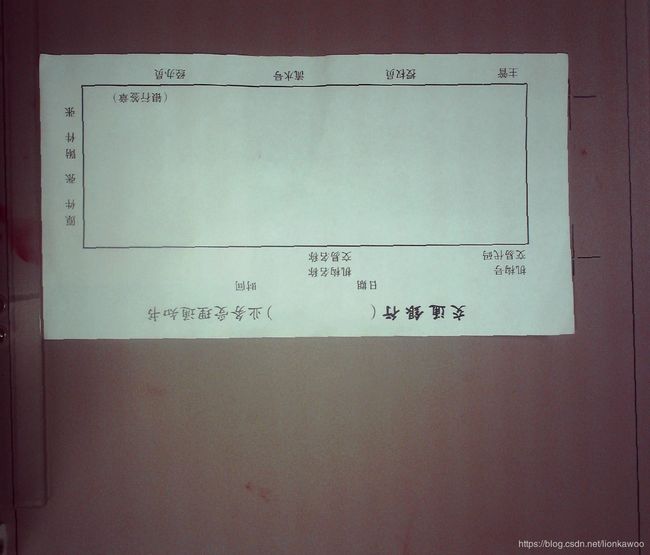

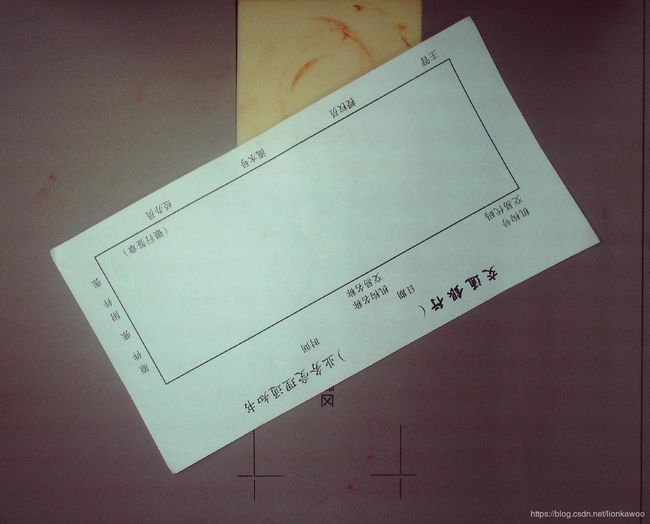

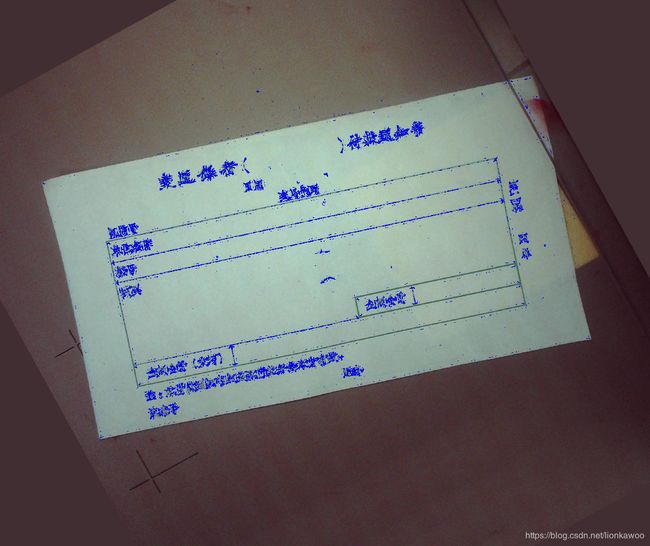

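II. Experiment goal

III. A note on limitations

This post grew out of a homework assignment in a computer vision course. Because I had so few samples, I believe the algorithm has limitations that cannot be ignored: it is robust enough for the three target images, but it is unlikely to hold up well beyond them.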

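Now for the real content, so this heading has to be longer than the first top-level heading

I. Approach

Before the experiment, our teacher gave us two ways to solve the problem: one based on the Hough transform and one based on Harris corner detection. In this post I went with the latter.

Here are both approaches:

(1) Based on Harris corner detection [the approach used here]

- Call HarrisDetect(imagefile) to find the four vertices of the receipt

- Connect the vertices to form the outer boundary of the receipt

(2) Based on the Hough transform [reference approach]

- Use the Hough transform to detect the outer boundary of the receipt

- Identify the main edges, then compute the corners from the line equations and mark them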
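For the Hough-based route, the last step (computing corners from the line equations) could look roughly like the sketch below. It is only an illustration, not the method used in this post. `intersect_hough_lines` is a hypothetical helper name, and the sketch assumes each line comes in the (rho, theta) normal form that cv2.HoughLines returns, that is, x·cos(theta) + y·sin(theta) = rho.

```python
import math


def intersect_hough_lines(line1, line2, eps=1e-9):
    """Return the (x, y) intersection of two (rho, theta) lines, or None if they are parallel."""
    rho1, theta1 = line1
    rho2, theta2 = line2
    # Each line satisfies x*cos(theta) + y*sin(theta) = rho,
    # so the intersection is the solution of a 2x2 linear system.
    a1, b1 = math.cos(theta1), math.sin(theta1)
    a2, b2 = math.cos(theta2), math.sin(theta2)
    det = a1 * b2 - a2 * b1
    if abs(det) < eps:
        return None  # parallel (or identical) lines have no single intersection
    # Cramer's rule
    x = (rho1 * b2 - rho2 * b1) / det
    y = (a1 * rho2 - a2 * rho1) / det
    return x, y


# A vertical line x = 3 (theta = 0) and a horizontal line y = 4 (theta = pi/2)
corner = intersect_hough_lines((3.0, 0.0), (4.0, math.pi / 2))
```

Intersecting each pair of adjacent edges this way would give the four corners of the rectangle.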

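While looking for material, I found two articles that implement the Hough-based approach. They are written in C++, but they are still well worth reading:

《计算机视觉与模式识别(1)—— A4纸边缘提取》 (Computer Vision and Pattern Recognition (1): A4 Paper Edge Extraction) [4]

《[计算机视觉] A4纸边缘检测》 ([Computer Vision] A4 Paper Edge Detection) [5]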

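II. Implementation

What is my Main function doing?

When I read code, I like to start with Main(). It is the fastest way to see the main flow of a program.

Why these particular steps? Each step has its own analysis further down.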

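# Load the image
filename = 'ex0301.jpg'
# filename = 'ex0302.jpg'
# filename = 'ex0303.bmp'
img = cv2.imread(filename)
if img is None:  # cv2.imread returns None instead of raising when the file cannot be read
    raise FileNotFoundError(filename)
imgCopy = img.copy()

# Correct the image rotation
degree = CalcDegree(img)  # angle between the rectangle's main direction and the x-axis
if degree != 0:  # a non-zero angle means the image needs rotating
    rotate = rotateImage(img, degree)
    imgCopy = rotate.copy()
else:  # the angle is negligible, so skip the rotation
    rotate = img.copy()

# Harris corner detection
Harris_img = HarrisDetect(rotate)

# Use a mask to restrict where corner results may lie
mask = getMask(imgCopy, Harris_img)

# Find the four corners, mark them, and connect them
pointDetect(mask, imgCopy)

cv2.waitKey(0)
cv2.destroyAllWindows()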

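Step by step!

(1) Correcting the image rotation

References: ① Text Perspective Correction and Horizontal Correction with OpenCV-Python [2]

② Python - Outlier Detection (Median Absolute Deviation, Mean, and LOF) [3]

Why do it this way?

Looking at the experiment targets, you can see that the sample images have no perspective distortion or other complicated warping. So, for these three images, making the code adaptive comes down to correcting their rotation angle.

Sample weaknesses & limitation analysis

① The rotation angles of the samples (the angle between the detected main direction and the x-axis) are all within 90°, so for 90°

I adapted the code in this part from reference ① [2]. The original article corrects the rotation of text, but its idea carries over to the situation in this post. Its main steps are listed here; for the detailed code, follow the link:

(1) Detect all straight lines in the image with the Hough line transform

(2) Compute the tilt angle of each line and take the average

(3) Rotate the image by that tilt angle to correct it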
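Step (2) has one catch: the theta that cv2.HoughLines reports is the angle of the line's normal, not of the line itself. The sketch below shows the conversion under that assumption. The function names are hypothetical and only serve this illustration. Because the image y-axis points down, whether a positive tilt looks clockwise on screen depends on your convention.

```python
import math


def tilt_from_hough_theta(theta):
    """Convert a HoughLines theta (radians, angle of the line's normal) to the line's tilt in degrees."""
    # theta = 0 describes a vertical line and theta = pi/2 a horizontal one,
    # so the line itself is tilted by (theta - 90 degrees) relative to the x-axis.
    return math.degrees(theta) - 90.0


def mean_tilt(thetas):
    """Average tilt in degrees over a list of HoughLines theta values."""
    tilts = [tilt_from_hough_theta(t) for t in thetas]
    return sum(tilts) / len(tilts)


# Two made-up lines whose normals sit at 95 and 105 degrees
average_tilt = mean_tilt([math.radians(95), math.radians(105)])
```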

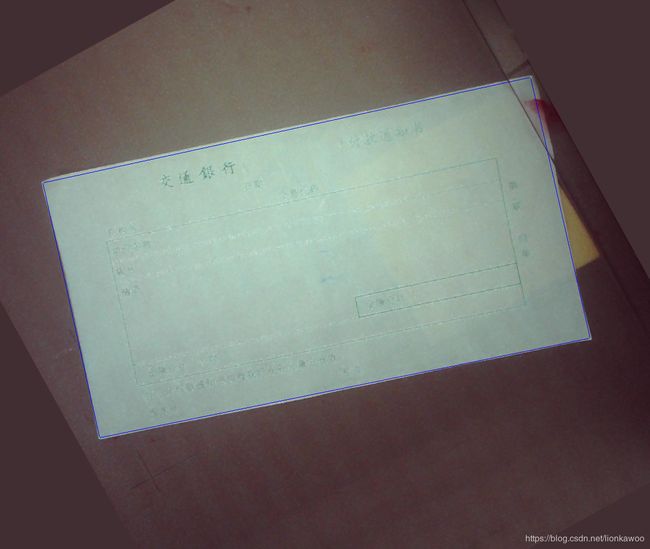

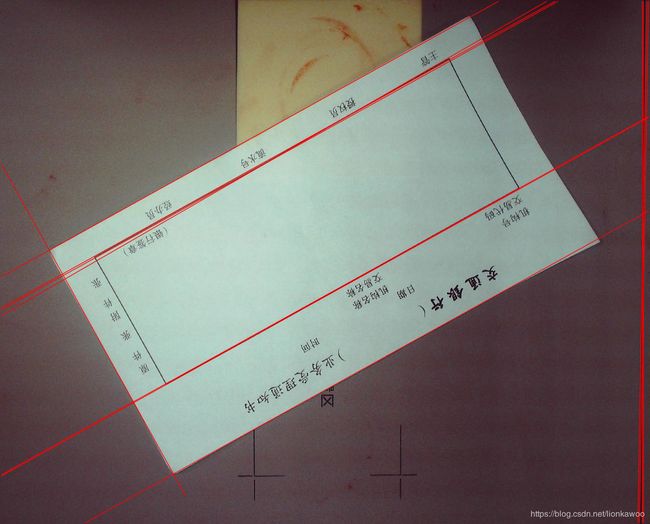

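The original approach is very neat, but because my target is different, a few small problems showed up when I actually applied it. Look at the figure:

As you can see, besides the lines along the main axis, HoughLines() also detects quite a few lines we do not want (mainly the vertical cluster on the right).

We call these unwanted results outliers: they deviate from the main axis.

In the original article, step (2) reads: "Compute the tilt angle of each line and take the average."

If outliers are averaged together with the normal values, the result will clearly end up far from the one we want.

So, before computing the average, we also have to detect and remove the outliers.

With that, the approach becomes:

- Detect all straight lines in the image with the Hough line transform

- Compute the tilt angle of each line

- Check whether each line lies along the main direction; if it does not, throw it away

- Average the tilt angles of the lines along the main axis

- Rotate the image by that tilt angle to correct it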
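To see how much a few stray lines can skew the average, here is a small standard-library sketch of the steps above. The theta values are made up for illustration, and `mad_limits` is a hypothetical stand-in for the filtering step (median ± 3 scaled MADs), not the code used in this post.

```python
import statistics


def mad_limits(values, k=3.0, b=1.4826):
    """Return (lower, upper) limits at k scaled median absolute deviations around the median."""
    med = statistics.median(values)
    mad = b * statistics.median([abs(v - med) for v in values])
    return med - k * mad, med + k * mad


# Made-up thetas in radians: a main cluster near 1.40 plus three stray lines near 0.02
thetas = [1.38, 1.39, 1.40, 1.40, 1.41, 1.41, 1.42, 0.02, 0.02, 0.03]

lower, upper = mad_limits(thetas)
inliers = [t for t in thetas if lower <= t <= upper]

naive_mean = statistics.mean(thetas)    # dragged towards the stray lines
robust_mean = statistics.mean(inliers)  # stays with the main cluster
print(round(naive_mean, 3), round(robust_mean, 3))
```

Only the seven values of the main cluster survive the filter, so the filtered mean stays close to 1.40 while the naive mean falls below 1.0.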

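getMAD(s): rejecting outliers with the median absolute deviation

We now know the outliers have to be removed, but how exactly?

After racking my brain to no avail and then a good deal of web surfing, I found that mathematicians really did invent a tool aimed squarely at "outliers". (Impressive!)

See: Python - Outlier Detection (Median Absolute Deviation, Mean, and LOF)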

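def getMAD(s):
    # Accept a plain Python list as well as a NumPy array
    s = np.asarray(s, dtype=float)
    median = np.median(s)
    # b = 1.4826 scales the MAD so that it is comparable to a standard
    # deviation for normally distributed data
    b = 1.4826
    mad = b * np.median(np.abs(s - median))
    # Values more than 3 scaled MADs away from the median count as outliers
    lower_limit = median - (3 * mad)
    upper_limit = median + (3 * mad)
    # print(mad, lower_limit, upper_limit)
    return lower_limit, upper_limit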
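Written out as a formula, the rule that getMAD(s) implements is the following, where x_i are the detected theta values and b = 1.4826 is the usual constant that makes the MAD comparable to a standard deviation for normally distributed data:

```latex
\begin{align*}
\mathrm{MAD} &= b \cdot \operatorname{median}_i \bigl( \lvert x_i - \operatorname{median}_j(x_j) \rvert \bigr),
  \qquad b = 1.4826 \\
x_i \text{ is kept} &\iff
  \operatorname{median}_j(x_j) - 3\,\mathrm{MAD} \;\le\; x_i \;\le\; \operatorname{median}_j(x_j) + 3\,\mathrm{MAD}
\end{align*}
```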

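CalcDegree(srcImage): correcting the rotation of the image

With the outliers removed, we can carry on with the original article's procedure. For the concrete steps, go straight to the code; I think my comments are detailed enough.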

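# Compute the rotation angle with the Hough transform
def CalcDegree(srcImage):
    midImage = cv2.cvtColor(srcImage, cv2.COLOR_BGR2GRAY)
    # apertureSize is passed by keyword: in Python the 4th positional
    # argument of cv2.Canny is the output array, not the aperture size
    dstImage = cv2.Canny(midImage, 50, 200, apertureSize=3)
    lineimage = srcImage.copy()

    # Detect lines with the Hough transform.
    # The 4th argument is the accumulator threshold: the higher it is, the more votes a line needs.
    # No single value suits every image: too high and no line is detected,
    # too low and there are too many lines and the search gets slow.
    lines = cv2.HoughLines(dstImage, 1, np.pi / 180, 200)
    if lines is None:  # no line reached the threshold, so there is nothing to correct
        return 0

    # Reject outliers with the median absolute deviation
    thetaList = [theta for rho, theta in lines[:, 0]]
    # print(thetaList)
    lower_limit, upper_limit = getMAD(thetaList)

    # Decide whether a rotation is needed at all
    thetaavg_List = [theta for theta in thetaList if lower_limit <= theta <= upper_limit]
    thetaAvg = np.mean(thetaavg_List)
    # print(thetaAvg)

    deviation = 0.01
    # theta is in radians, so the last range ends at pi (the original compared against 180)
    if (np.pi/2-deviation <= thetaAvg <= np.pi/2+deviation) or (0 <= thetaAvg <= deviation) or (np.pi-deviation <= thetaAvg <= np.pi):
        angle = 0
    else:
        thetaSum = 0  # renamed from `sum`, which shadowed the built-in
        # Draw every inlier line and accumulate its angle
        for rho, theta in lines[:, 0]:
            if lower_limit <= theta <= upper_limit:
                # print("theta:", theta, " rho:", rho)
                a = np.cos(theta)
                b = np.sin(theta)
                x0 = a * rho
                y0 = b * rho
                x1 = int(round(x0 + 1000 * (-b)))
                y1 = int(round(y0 + 1000 * a))
                x2 = int(round(x0 - 1000 * (-b)))
                y2 = int(round(y0 - 1000 * a))
                thetaSum += theta
                cv2.line(lineimage, (x1, y1), (x2, y2), (0, 0, 255), 1, cv2.LINE_AA)
        # cv2.imshow("Imagelines", lineimage)

        # Average the accumulated angles.
        # NOTE: as in the original, the sum of the inlier angles is divided by the
        # number of ALL lines. This is kept unchanged because the hand-tuned factors
        # in rotateImage are applied to the angle returned here.
        average = thetaSum / len(lines)
        res = DegreeTrans(average)
        if res > 45:
            angle = 90 + res
        else:  # also covers res == 45, which left `angle` undefined before
            angle = -90 + res
    return angle

# Convert radians to degrees
def DegreeTrans(theta):
    res = theta / np.pi * 180
    print(res)
    return res

# Rotate the image counter-clockwise by `degree` degrees (the output keeps the original size)
def rotateImage(src, degree):
    # The rotation center is the image center
    h, w = src.shape[:2]
    # Build the 2D rotation (affine) matrix.
    # The angle is scaled by a hand-tuned factor rather than used directly:
    # RotateMatrix = cv2.getRotationMatrix2D((w / 2.0, h / 2.0), degree, 1)
    if 65 < abs(degree) < 90:
        factor = 4 / 5
    elif 0 < abs(degree) <= 65:
        factor = 1 / 3
    else:
        # Not covered by the original branches (0, or 90 and above), which left
        # RotateMatrix undefined; falling back to the unscaled angle is an assumption
        factor = 1
    RotateMatrix = cv2.getRotationMatrix2D((w / 2.0, h / 2.0), degree * factor, 1)
    # Affine warp; the exposed border is filled with the dark BGR color (50, 46, 65), not white
    rotate = cv2.warpAffine(src, RotateMatrix, (w, h), borderValue=(50, 46, 65))
    return rotate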
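To make the rotation step less of a black box, the sketch below rebuilds in plain Python the 2x3 matrix that the OpenCV documentation gives for cv2.getRotationMatrix2D, and applies it to single points. The helper names are hypothetical, and the real code above should keep using OpenCV.

```python
import math


def rotation_matrix_2d(center, angle_deg, scale=1.0):
    """2x3 affine matrix for a rotation about `center` (positive angle = counter-clockwise on screen)."""
    cx, cy = center
    alpha = scale * math.cos(math.radians(angle_deg))
    beta = scale * math.sin(math.radians(angle_deg))
    return [
        [alpha, beta, (1 - alpha) * cx - beta * cy],
        [-beta, alpha, beta * cx + (1 - alpha) * cy],
    ]


def apply_affine(matrix, point):
    """Apply a 2x3 affine matrix to an (x, y) point."""
    x, y = point
    return (
        matrix[0][0] * x + matrix[0][1] * y + matrix[0][2],
        matrix[1][0] * x + matrix[1][1] * y + matrix[1][2],
    )


m = rotation_matrix_2d((50.0, 40.0), 90.0)
center_after = apply_affine(m, (50.0, 40.0))  # the center stays where it is
point_after = apply_affine(m, (60.0, 40.0))   # a point right of the center moves above it
```

The center maps to itself, and a point to the right of the center ends up above it (smaller y), which is what a counter-clockwise rotation looks like in image coordinates with the origin at the top-left.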

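(2) Harris corner detection

This step is simple and easy to follow, so I will not go into much detail.

One thing to note: when setting the parameters, the rule to follow is that the result must contain all four corners, with as few interfering points as possible.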
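As background for the parameters, here is a minimal sketch of the standard Harris response, R = det(M) - k·trace(M)², for a single window. It uses k = 0.02 and the 0.0001 × max threshold ratio from this post's code. The structure-tensor entries passed in are made-up numbers, and the function names are hypothetical.

```python
def harris_response(sxx, syy, sxy, k=0.02):
    """Harris response for the structure tensor M = [[sxx, sxy], [sxy, syy]]."""
    det = sxx * syy - sxy * sxy
    trace = sxx + syy
    return det - k * trace * trace


def is_marked(response, max_response, ratio=0.0001):
    """A pixel is marked as a corner when its response exceeds ratio * max response."""
    return response > ratio * max_response


corner = harris_response(10.0, 10.0, 0.0)  # strong gradients in both directions
edge = harris_response(10.0, 0.0, 0.0)     # strong gradient in one direction only
flat = harris_response(0.0, 0.0, 0.0)      # no gradient at all
```

Corners give a clearly positive response, edges a negative one, and flat regions roughly zero. A ratio as low as 0.0001 lets very weak responses through, which helps make sure all four corners are caught but also explains the many extra points that the later steps have to remove.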

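HarrisDetect(img)

def HarrisDetect(img):
    # Convert to grayscale
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    # Gaussian blur
    gray = cv2.GaussianBlur(gray, (7, 7), 0)
    # cornerHarris expects a float32 image
    gray = np.float32(gray)
    # Harris corner detection (blockSize=2, ksize=3, k=0.02)
    dst = cv2.cornerHarris(gray, 2, 3, 0.02)
    # Threshold the response and mark the corners in blue (BGR).
    # pointDetect later looks for exactly this blue value, 225.
    img[dst > 0.0001*dst.max()] = [225, 0, 0]
    cv2.imshow('Harris', img)
    return img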

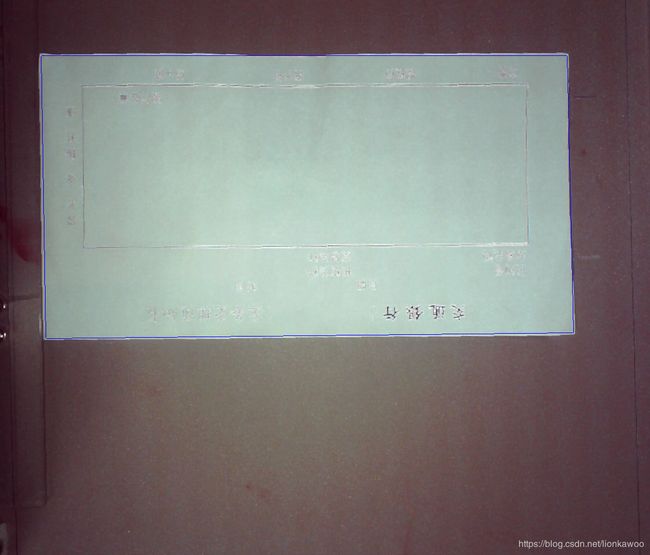

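(3) Using a mask to restrict where corners may lie

Why do it this way?

As the figure above shows, many corners were found, but a lot of them are "junk points".

I split the junk points into two kinds:

① Points outside the outline of the receipt

② Points inside the outline of the receipt

This step removes the first kind, the points outside the outline of the receipt. It makes the later steps easier, and it cannot be skipped.

It is worth mentioning that creating the mask is really just binarizing the grayscale image against a threshold.

After the grayscale conversion, the corners we marked in blue become points with a very low gray value, so binarization cannot turn them into "1". (The four vertices necessarily have the same gray value as the junk points we marked, so keeping the gray value of the four vertices would also mean keeping the gray value of those junk points.)

But as long as we erode the resulting mask appropriately, these noise points get eroded away.

See the figures.

Can you see the difference?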
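The two claims above can be checked on a toy example. This is a pure-Python sketch, not the OpenCV code used in this post. `bgr_to_gray` uses the standard BGR-to-gray weights (0.299 R + 0.587 G + 0.114 B), and `erode3x3` is a hypothetical re-implementation of binary erosion with a 3×3 square that ignores pixels outside the image.

```python
def bgr_to_gray(b, g, r):
    """Gray value from the standard luma weights used for BGR-to-gray conversion."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def threshold_binary(gray, thresh=100, maxval=255):
    """Binary threshold: maxval above the threshold, 0 otherwise."""
    return maxval if gray > thresh else 0


def erode3x3(mask):
    """A pixel stays 255 only if every in-bounds pixel of its 3x3 neighbourhood is 255."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            keep = True
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] != 255:
                        keep = False
            out[y][x] = 255 if keep else 0
    return out


# The blue marker (225, 0, 0) is dark in grayscale, so it ends up as 0 in the mask
marker_gray = bgr_to_gray(225, 0, 0)
marker_in_mask = threshold_binary(marker_gray)

# Toy mask: a 5x5 bright "receipt" block plus one isolated bright speck
mask = [[0] * 8 for _ in range(7)]
for y in range(1, 6):
    for x in range(1, 6):
        mask[y][x] = 255
mask[0][7] = 255
eroded = erode3x3(mask)
remaining = sum(row.count(255) for row in eroded)
```

The speck disappears and the block shrinks by one pixel on each side (from 5×5 to 3×3), so erosion removes small bright noise at the cost of pulling the mask slightly inside the receipt's outline.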

getMask(img, Harris_img)

def getMask(img, Harris_img):
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    # Threshold the grayscale image to create a mask
    ret, mask = cv2.threshold(gray, 100, 255, cv2.THRESH_BINARY)
    # Erode the mask to remove the noise
    kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (3, 3))
    mask = cv2.erode(mask, kernel)
    mask_inv = cv2.bitwise_not(mask)
    # Bitwise AND: the plain image outside the mask, the Harris-marked image inside it
    img1_bg = cv2.bitwise_and(img, img, mask=mask_inv)
    img2_fg = cv2.bitwise_and(Harris_img, Harris_img, mask=mask)
    dst = cv2.add(img1_bg, img2_fg)
    cv2.imshow('mask', mask)
    cv2.imshow('mask_result', dst)
    return dst

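(4) Finding the four corners, marking them, and connecting them

Reference: [Image Processing] Edge Extraction and Harris Corner Detection [1]

Limitation analysis

Here I use nested for loops to walk over the pixels of the image, with constraints on where each loop starts and stops. This serves two purposes:

① Less computation

② Removing the second kind of junk point, the ones inside the outline of the receipt

It also creates a weakness. Because the start and end values of the loops are fixed, the rotation correction has to leave each of the four corners where its loop can find it, or the vertex will not be detected.

Geometrically, this means each of the four vertices must fall inside its own one of these four colored blocks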
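The four blocks can be written down directly from the loop bounds in pointDetect. The sketch below reproduces those bounds as `range` objects. The function names are hypothetical, and the 800×600 size is only an example.

```python
def corner_search_ranges(width, height):
    """Return {corner: (x_range, y_range)} with the same bounds as the loops in pointDetect."""
    return {
        "top_left": (range(0, round(width / 2)), range(0, round(height / 2))),
        "top_right": (range(width - 1, round(width * (3 / 4)), -1), range(0, round(height / 6))),
        "bottom_left": (range(0, round(width / 2)), range(height - 1, round(height / 2), -1)),
        "bottom_right": (range(width - 1, round(width / 2), -1), range(round(height / 2), height - 1)),
    }


def corner_regions_of(x, y, width, height):
    """Names of the search regions that contain pixel (x, y)."""
    regions = corner_search_ranges(width, height)
    return [name for name, (xs, ys) in regions.items() if x in xs and y in ys]


inside = corner_regions_of(700, 50, 800, 600)  # falls in the top-right block
missed = corner_regions_of(500, 50, 800, 600)  # falls in no block at all
```

On an 800×600 image the top-right block only covers x > 600 and y < 100, so a top-right vertex at (500, 50) would never be visited by any loop. This is exactly the constraint described above.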

pointDetect(mask, imgCopy)

def pointDetect(Harris_img, img):
    # Image size
    height, width = img.shape[:2]
    # (0, 0) doubles as "not found yet" for every corner
    upLeftX = upLeftY = 0
    downLeftX = downLeftY = 0
    upRightX = upRightY = 0
    downRightX = downRightY = 0

    # Top-left vertex: scan columns from the left, each from the top down
    for i in range(0, round(width/2)):
        for j in range(0, round(height/2)):
            if upLeftX == 0 and upLeftY == 0 and Harris_img[j][i][0] == 225:
                upLeftX = i
                upLeftY = j
                break
        if upLeftX or upLeftY:
            break

    # Top-right vertex: scan columns from the right, each from the top down
    for i in range(width-1, (round(width * (3/4))), -1):
        for j in range(0, round(height/6)):
            if upRightX == 0 and upRightY == 0 and Harris_img[j][i][0] == 225:
                upRightX = i
                upRightY = j
                break
        if upRightX or upRightY:
            break

    # Bottom-left vertex: scan rows from the bottom, each from the left
    for j in range(height-1, round(height/2), -1):
        for i in range(0, round(width/2)):
            if downLeftX == 0 and downLeftY == 0 and Harris_img[j][i][0] == 225:
                downLeftX = i
                downLeftY = j
                break
        if downLeftX or downLeftY:
            break

    # Bottom-right vertex: scan columns from the right, each from the middle down
    for i in range(width-1, round(width/2), -1):
        for j in range(round(height/2), height-1):
            if downRightX == 0 and downRightY == 0 and Harris_img[j][i][0] == 225:
                downRightX = i
                downRightY = j
                break
        # The original tested downRightY twice here; X was clearly intended
        if downRightX or downRightY:
            break

    # Mark the four vertices in green (BGR); coordinates are printed as (y, x)
    img[upLeftY, upLeftX] = (0, 255, 0)
    print("Top-left (y, x):", upLeftY, upLeftX)
    img[upRightY, upRightX] = (0, 255, 0)
    print("Top-right (y, x):", upRightY, upRightX)
    img[downRightY, downRightX] = (0, 255, 0)
    print("Bottom-right (y, x):", downRightY, downRightX)
    img[downLeftY, downLeftX] = (0, 255, 0)
    print("Bottom-left (y, x):", downLeftY, downLeftX)

    # Dilate the image so the points stand out
    img = cv2.dilate(img, None)

    # Draw the outline
    cv2.line(img, (upLeftX, upLeftY), (upRightX, upRightY), (255, 0, 0), 1)
    cv2.line(img, (upRightX, upRightY), (downRightX, downRightY), (255, 0, 0), 1)
    cv2.line(img, (downRightX, downRightY), (downLeftX, downLeftY), (255, 0, 0), 1)
    cv2.line(img, (downLeftX, downLeftY), (upLeftX, upLeftY), (255, 0, 0), 1)
    cv2.imshow('result', img)

Complete code

import cv2
import numpy as np

# Harris corners
def HarrisDetect(img):
    # Convert to grayscale
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    # Gaussian blur
    gray = cv2.GaussianBlur(gray, (7, 7), 0)
    # cornerHarris expects a float32 image
    gray = np.float32(gray)
    # Harris corner detection (blockSize=2, ksize=3, k=0.02)
    dst = cv2.cornerHarris(gray, 2, 3, 0.02)
    # Dilation
    # dst = cv2.dilate(dst, None)
    # Erosion
    # dst = cv2.erode(dst, None)
    # Threshold the response and mark the corners in blue (BGR).
    # pointDetect later looks for exactly this blue value, 225.
    img[dst > 0.0001*dst.max()] = [225, 0, 0]
    cv2.imshow('Harris', img)
    return img

# Find the vertices
def pointDetect(Harris_img, img):
    # Image size
    height, width = img.shape[:2]
    # (0, 0) doubles as "not found yet" for every corner
    upLeftX = upLeftY = 0
    downLeftX = downLeftY = 0
    upRightX = upRightY = 0
    downRightX = downRightY = 0

    # Top-left vertex: scan columns from the left, each from the top down
    for i in range(0, round(width/2)):
        for j in range(0, round(height/2)):
            if upLeftX == 0 and upLeftY == 0 and Harris_img[j][i][0] == 225:
                upLeftX = i
                upLeftY = j
                break
        if upLeftX or upLeftY:
            break

    # Top-right vertex: scan columns from the right, each from the top down
    for i in range(width-1, (round(width * (3/4))), -1):
        for j in range(0, round(height/6)):
            if upRightX == 0 and upRightY == 0 and Harris_img[j][i][0] == 225:
                upRightX = i
                upRightY = j
                break
        if upRightX or upRightY:
            break

    # Bottom-left vertex: scan rows from the bottom, each from the left
    for j in range(height-1, round(height/2), -1):
        for i in range(0, round(width/2)):
            if downLeftX == 0 and downLeftY == 0 and Harris_img[j][i][0] == 225:
                downLeftX = i
                downLeftY = j
                break
        if downLeftX or downLeftY:
            break

    # Bottom-right vertex: scan columns from the right, each from the middle down
    for i in range(width-1, round(width/2), -1):
        for j in range(round(height/2), height-1):
            if downRightX == 0 and downRightY == 0 and Harris_img[j][i][0] == 225:
                downRightX = i
                downRightY = j
                break
        # The original tested downRightY twice here; X was clearly intended
        if downRightX or downRightY:
            break

    # Mark the four vertices in green (BGR); coordinates are printed as (y, x)
    img[upLeftY, upLeftX] = (0, 255, 0)
    print("Top-left (y, x):", upLeftY, upLeftX)
    img[upRightY, upRightX] = (0, 255, 0)
    print("Top-right (y, x):", upRightY, upRightX)
    img[downRightY, downRightX] = (0, 255, 0)
    print("Bottom-right (y, x):", downRightY, downRightX)
    img[downLeftY, downLeftX] = (0, 255, 0)
    print("Bottom-left (y, x):", downLeftY, downLeftX)

    # Dilation
    img = cv2.dilate(img, None)

    # Draw the outline
    cv2.line(img, (upLeftX, upLeftY), (upRightX, upRightY), (255, 0, 0), 1)
    cv2.line(img, (upRightX, upRightY), (downRightX, downRightY), (255, 0, 0), 1)
    cv2.line(img, (downRightX, downRightY), (downLeftX, downLeftY), (255, 0, 0), 1)
    cv2.line(img, (downLeftX, downLeftY), (upLeftX, upLeftY), (255, 0, 0), 1)
    cv2.imshow('result', img)

# Reject outlier theta values with the median absolute deviation
def getMAD(s):
    # Accept a plain Python list as well as a NumPy array
    s = np.asarray(s, dtype=float)
    median = np.median(s)
    # b = 1.4826 scales the MAD so that it is comparable to a standard
    # deviation for normally distributed data
    b = 1.4826
    mad = b * np.median(np.abs(s - median))
    # Values more than 3 scaled MADs away from the median count as outliers
    lower_limit = median - (3 * mad)
    upper_limit = median + (3 * mad)
    # print(mad, lower_limit, upper_limit)
    return lower_limit, upper_limit

# Compute the rotation angle with the Hough transform
def CalcDegree(srcImage):
    midImage = cv2.cvtColor(srcImage, cv2.COLOR_BGR2GRAY)
    # apertureSize is passed by keyword: in Python the 4th positional
    # argument of cv2.Canny is the output array, not the aperture size
    dstImage = cv2.Canny(midImage, 50, 200, apertureSize=3)
    lineimage = srcImage.copy()

    # Detect lines with the Hough transform.
    # The 4th argument is the accumulator threshold: the higher it is, the more votes a line needs.
    # No single value suits every image: too high and no line is detected,
    # too low and there are too many lines and the search gets slow.
    lines = cv2.HoughLines(dstImage, 1, np.pi / 180, 200)
    if lines is None:  # no line reached the threshold, so there is nothing to correct
        return 0

    # Reject outliers with the median absolute deviation
    thetaList = [theta for rho, theta in lines[:, 0]]
    # print(thetaList)
    lower_limit, upper_limit = getMAD(thetaList)

    # Decide whether a rotation is needed at all
    thetaavg_List = [theta for theta in thetaList if lower_limit <= theta <= upper_limit]
    thetaAvg = np.mean(thetaavg_List)
    # print(thetaAvg)

    deviation = 0.01
    # theta is in radians, so the last range ends at pi (the original compared against 180)
    if (np.pi/2-deviation <= thetaAvg <= np.pi/2+deviation) or (0 <= thetaAvg <= deviation) or (np.pi-deviation <= thetaAvg <= np.pi):
        angle = 0
    else:
        thetaSum = 0  # renamed from `sum`, which shadowed the built-in
        # Draw every inlier line and accumulate its angle
        for rho, theta in lines[:, 0]:
            if lower_limit <= theta <= upper_limit:
                # print("theta:", theta, " rho:", rho)
                a = np.cos(theta)
                b = np.sin(theta)
                x0 = a * rho
                y0 = b * rho
                x1 = int(round(x0 + 1000 * (-b)))
                y1 = int(round(y0 + 1000 * a))
                x2 = int(round(x0 - 1000 * (-b)))
                y2 = int(round(y0 - 1000 * a))
                thetaSum += theta
                cv2.line(lineimage, (x1, y1), (x2, y2), (0, 0, 255), 1, cv2.LINE_AA)
        cv2.imshow("Imagelines", lineimage)

        # Average the accumulated angles.
        # NOTE: as in the original, the sum of the inlier angles is divided by the
        # number of ALL lines. This is kept unchanged because the hand-tuned factors
        # in rotateImage are applied to the angle returned here.
        average = thetaSum / len(lines)
        res = DegreeTrans(average)
        if res > 45:
            angle = 90 + res
        else:  # also covers res == 45, which left `angle` undefined before
            angle = -90 + res
    return angle

# Convert radians to degrees
def DegreeTrans(theta):
    res = theta / np.pi * 180
    print(res)
    return res

# Rotate the image counter-clockwise by `degree` degrees (the output keeps the original size)
def rotateImage(src, degree):
    # The rotation center is the image center
    h, w = src.shape[:2]
    # Build the 2D rotation (affine) matrix.
    # The angle is scaled by a hand-tuned factor rather than used directly:
    # RotateMatrix = cv2.getRotationMatrix2D((w / 2.0, h / 2.0), degree, 1)
    if 65 < abs(degree) < 90:
        factor = 4 / 5
    elif 0 < abs(degree) <= 65:
        factor = 1 / 3
    else:
        # Not covered by the original branches (0, or 90 and above), which left
        # RotateMatrix undefined; falling back to the unscaled angle is an assumption
        factor = 1
    RotateMatrix = cv2.getRotationMatrix2D((w / 2.0, h / 2.0), degree * factor, 1)
    # Affine warp; the exposed border is filled with the dark BGR color (50, 46, 65), not white
    rotate = cv2.warpAffine(src, RotateMatrix, (w, h), borderValue=(50, 46, 65))
    return rotate

# Use a mask to exclude the extra points
def getMask(img, Harris_img):
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    # gray = cv2.GaussianBlur(gray, (7, 7), 0)
    # Threshold the grayscale image to create a mask
    ret, mask = cv2.threshold(gray, 100, 255, cv2.THRESH_BINARY)
    # Erode the mask to remove the noise
    kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (3, 3))
    mask = cv2.erode(mask, kernel)
    mask_inv = cv2.bitwise_not(mask)
    # Bitwise AND: the plain image outside the mask, the Harris-marked image inside it
    img1_bg = cv2.bitwise_and(img, img, mask=mask_inv)
    img2_fg = cv2.bitwise_and(Harris_img, Harris_img, mask=mask)
    dst = cv2.add(img1_bg, img2_fg)
    cv2.imshow('mask_result', dst)
    return dst

# Load the image
filename = 'ex0301.jpg'
# filename = 'ex0302.jpg'
# filename = 'ex0303.bmp'
img = cv2.imread(filename)
if img is None:  # cv2.imread returns None instead of raising when the file cannot be read
    raise FileNotFoundError(filename)
imgCopy = img.copy()

# Correct the image rotation
degree = CalcDegree(img)  # angle between the rectangle's main direction and the x-axis
if degree != 0:  # a non-zero angle means the image needs rotating
    rotate = rotateImage(img, degree)
    imgCopy = rotate.copy()
else:  # the angle is negligible, so skip the rotation
    rotate = img.copy()

# Harris corner detection
Harris_img = HarrisDetect(rotate)

# Build the mask and drop the points that lie too far from the receipt
mask = getMask(imgCopy, Harris_img)

# Find the four corners and mark them
pointDetect(mask, imgCopy)

cv2.waitKey(0)
cv2.destroyAllWindows()

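If you just want to see the results, jump straight here

Limitations, summarized

To close, I want to stress the limitations of my code once more. But not writing code with this many limitations again is exactly why we keep learning!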

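[1] [图像处理]边缘提取以及Harris角点检测 ([Image Processing] Edge Extraction and Harris Corner Detection)

[2] OpenCV-Python的文本透视矫正与水平矫正 (Text Perspective Correction and Horizontal Correction with OpenCV-Python)

[3] Python - 异常值检测(绝对中位差、平均值 和 LOF) (Python - Outlier Detection: Median Absolute Deviation, Mean, and LOF)

[4] 计算机视觉与模式识别(1)—— A4纸边缘提取 (Computer Vision and Pattern Recognition (1): A4 Paper Edge Extraction)

[5] [计算机视觉] A4纸边缘检测 ([Computer Vision] A4 Paper Edge Detection)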