目标检测 - 基于 SSD: Single Shot MultiBox Detector 的人体上下半身检测

基于 SSD 的人体上下半身检测

这里主要是通过将训练数据转换成 Pascal VOC 数据集格式来实现 SSD 检测人体上下半身.

由于没有对人体上下半身进行标注的数据集, 这里利用 MPII Human Pose Dataset 来将 Pose 数据转换成上下半身 box 数据, 故box的准确性不一定很高, 但还是可以用来测试学习使用的.

1. Pose to GTbox

将MPII Human Pose Data 转换为 json 格式 - mpii_single.txt, 其内容如下:

mpii/060111501.jpg|{"PELVIS": [904,237], "THORAX": [858,135], "NECK": [871.1877,180.4244], "HEAD": [835.8123,58.5756], "R_ANKLE": [980,322], "R_KNEE": [896,318], "R_HIP": [865,248], "L_HIP": [943,226], "L_KNEE": [948,290], "L_ANKLE": [881,349], "R_WRIST": [772,294], "R_ELBOW": [754,247], "R_SHOULDER": [792,147], "L_SHOULDER": [923,123], "L_ELBOW": [995,163], "L_WRIST": [961,223]}

mpii/002058449.jpg|{"PELVIS": [846,351], "THORAX": [738,259], "NECK": [795.2738,314.8937], "HEAD": [597.7262,122.1063], "R_ANKLE": [918,456], "R_KNEE": [659,518], "R_HIP": [713,413], "L_HIP": [979,288], "L_KNEE": [1222,453], "L_ANKLE": [974,399], "R_WRIST": [441,490], "R_ELBOW": [446,434], "R_SHOULDER": [599,270], "L_SHOULDER": [877,247], "L_ELBOW": [1112,384], "L_WRIST": [1012,489]}

mpii/029122914.jpg|{"PELVIS": [332,346], "THORAX": [325,217], "NECK": [326.2681,196.1669], "HEAD": [330.7319,122.8331], "R_ANKLE": [301,473], "R_KNEE": [302,346], "R_HIP": [362,345], "L_HIP": [367,470], "L_KNEE": [275,299], "L_ANKLE": [262,300], "R_WRIST": [278,220], "R_ELBOW": [371,213], "R_SHOULDER": [396,309], "L_SHOULDER": [393,290]}

mpii/061185289.jpg|{"PELVIS": [533,322], "THORAX": [515.0945,277.1333], "NECK": [463.9055,148.8667], "HEAD": [353,172], "R_ANKLE": [426,239], "R_KNEE": [513,288], "R_HIP": [552,355]}

mpii/013949386.jpg|{"PELVIS": [159,370], "THORAX": [189,228], "NECK": [191.1195,227.0916], "HEAD": [326.8805,168.9084], "R_ANKLE": [110,385], "R_KNEE": [208,355], "R_HIP": [367,363], "L_HIP": [254,429], "L_KNEE": [166,303], "L_ANKLE": [212,153], "R_WRIST": [319,123], "R_ELBOW": [376,39]}

....定义上下半身关节点:

upper = ['HEAD', 'NECK', 'L_SHOULDER', 'L_ELBOW', 'L_WRIST', 'R_WRIST', 'R_ELBOW', 'R_SHOULDER', 'THORAX']

lower = ['PELVIS', 'L_HIP', 'L_KNEE', 'L_ANKLE', 'R_ANKLE', 'R_KNEE', 'R_HIP']以关节点图像中的位置, 设定外扩 50 个 像素,以使得 gtbox 尽可能准确.

get_gtbox.py

#!/usr/bin/env python

import json

import cv2

from PIL import Image

import numpy as np

import matplotlib.pyplot as plt

import scipy.misc as scm

upper = ['HEAD', 'NECK', 'L_SHOULDER', 'L_ELBOW', 'L_WRIST', 'R_WRIST', 'R_ELBOW', 'R_SHOULDER', 'THORAX']

lower = ['PELVIS', 'L_HIP', 'L_KNEE', 'L_ANKLE', 'R_ANKLE', 'R_KNEE', 'R_HIP']

datas = open('mpii_single.txt').readlines()

print 'Length of datas: ', len(datas)

f = open('mpii_gtbox.txt', 'w')

for data in datas:

# print data

datasplit = data.split('|')

imgname, posedict = datasplit[0], json.loads(datasplit[1])

img = np.array(Image.open(imgname), dtype=np.uint8)

height, width, _ = np.shape(img)

if len(posedict.keys()) == 16: # only joints of full body used to get gtbox

x_upper, y_upper = [], []

for joint in upper:

x_upper.append(posedict[joint][0])

y_upper.append(posedict[joint][1])

upper_x1, upper_y1 = int(max(min(x_upper) - 50, 0)), int(max(min(y_upper) - 50, 0))

upper_x2, upper_y2 = int(min(max(x_upper) + 50, width)), int(min(max(y_upper) + 50, height))

img = cv2.rectangle(img, (upper_x1, upper_y1), (upper_x2, upper_y2), (0, 255, 0), 2)

x_lower, y_lower = [], []

for joint in lower:

x_lower.append(posedict[joint][0])

y_lower.append(posedict[joint][1])

lower_x1, lower_y1 = int(max(min(x_lower) - 50, 0)), int(max(min(y_lower) - 50, 0))

lower_x2, lower_y2 = int(min(max(x_lower) + 50, width)), int(min(max(y_lower) + 50, height))

img = cv2.rectangle(img, (lower_x1, lower_y1), (lower_x2, lower_y2), (255, 0, 0), 2)

tempstr_upper = str(upper_x1) + ',' + str(upper_y1) + ',' + str(upper_x2) + ',' + str(upper_y2) + ',upper'

tempstr_lower = str(lower_x1) + ',' + str(lower_y1) + ',' + str(lower_x2) + ',' + str(lower_y2) + ',lower'

tempstr = imgname + '|' + tempstr_upper + '|' + tempstr_lower + '\n'

f.write(tempstr)

# plt.imshow(img)

# plt.show()

f.close()

print 'Done.'2. GTbox - txt2xml

由于Pascal VOC 的 image-xml 的格式, 即一张图片对应一个 xml 标注信息, 因此这里也将得到的 人体上下半身的 gtbox 转换成 xml 标注的形式.

这里每张图片都是有两个标注信息的, 上半身 gtbox 和 下半身 gtbox.

txt2xml.py

#! /usr/bin/python

import os

from PIL import Image

datas = open("mpii_gtbox.txt").readlines()

imgpath = "mpii/"

ann_dir = 'gtboxs/'

for data in datas:

datasplit = datas.split('|')

img_name = datasplit[0]

im = Image.open(imgpath + img_name)

width, height = im.size

gts = datasplit[1:]

# write in xml file

if os.path.exists(ann_dir + os.path.dirname(img_name)):

pass

else:

os.makedirs(ann_dir + os.path.dirname(img_name))

os.mknod(ann_dir + img_name[:-4] + '.xml')

xml_file = open((ann_dir + img_name[:-4] + '.xml'), 'w')

xml_file.write('\n' )

xml_file.write(' gtbox \n')

xml_file.write(' ' + img_name + '\n')

xml_file.write(' \n' )

xml_file.write(' ' + str(width) + '\n')

xml_file.write(' ' + str(height) + '\n')

xml_file.write(' 3 \n')

xml_file.write(' \n')

# write the region of text on xml file

for img_each_label in gts:

spt = img_each_label.split(',')

xml_file.write(' )

xml_file.write(' ' + spt[4].strip() + '\n')

xml_file.write(' Unspecified \n')

xml_file.write(' 0 \n')

xml_file.write(' 0 \n')

xml_file.write(' \n' )

xml_file.write(' ' + str(spt[0]) + '\n')

xml_file.write(' ' + str(spt[1]) + '\n')

xml_file.write(' ' + str(spt[2]) + '\n')

xml_file.write(' ' + str(spt[3]) + '\n')

xml_file.write(' \n')

xml_file.write(' \n')

xml_file.write('')

xml_file.close() #

print 'Done.'gtbox - xml 内容格式如:

<annotation>

<folder>gtboxfolder>

<filename>mpii/000004812.jpgfilename>

<size>

<width>1920width>

<height>1080height>

<depth>3depth>

size>

<object>

<name>uppername>

<pose>Unspecifiedpose>

<truncated>0truncated>

<difficult>0difficult>

<bndbox>

<xmin>1408xmin>

<ymin>573ymin>

<xmax>1848xmax>

<ymax>1025ymax>

bndbox>

object>

<object>

<name>lowername>

<pose>Unspecifiedpose>

<truncated>0truncated>

<difficult>0difficult>

<bndbox>

<xmin>1310xmin>

<ymin>475ymin>

<xmax>1460xmax>

<ymax>1042ymax>

bndbox>

object>

annotation>3. Create LMDB

生成 trainval.txt 和 test.txt, 其内容格式为:

mpii/038796633.jpg gtboxs/038796633.xml

mpii/081305121.jpg gtboxs/081305121.xml

mpii/016047648.jpg gtboxs/016047648.xml

mpii/078242581.jpg gtboxs/078242581.xml

mpii/027364042.jpg gtboxs/027364042.xml

mpii/090828862.jpg gtboxs/090828862.xml

......labelmap_gtbox.prototxt 定义如下:

item {

name: "none_of_the_above"

label: 0

display_name: "background"

}

item {

name: "upper"

label: 1

display_name: "upper"

}

item {

name: "lower"

label: 2

display_name: "lower"

}

test_name_size.py 来生成 test_name_size.txt:

#! /usr/bin/python

import os

from PIL import Image

img_lists = open('test.txt').readlines()

img_lists = [item.split(' ')[0] for item in img_lists]

test_name_size = open('test_name_size.txt', 'w')

imgpath = "mpii/"

for item in img_lists:

img = Image.open(imgpath + item)

width, height = img.size

temp1, temp2 = os.path.splitext(item)

test_name_size.write(temp1 + ' ' + str(height) + ' ' + str(width) + '\n')

print 'Done.'利用 create_data.sh 创建 trainval 和 test 的 lmdb —— gtbox_trainval_lmdb 和 gtbox_test_lmdb.

cur_dir=$(cd $( dirname ${BASH_SOURCE[0]} ) && pwd )

root_dir="mpii/data"est

ssd_dir="/path/to/caffe-ssd"

cd $root_dir

redo=1

data_root_dir="mpii/"

dataset_name="gtbox"

mapfile="$root_dir/labelmap_gtbox.prototxt"

anno_type="detection"

db="lmdb"

min_dim=0

max_dim=0

width=0

height=0

extra_cmd="--encode-type=jpg --encoded"

if [ $redo ]

then

extra_cmd="$extra_cmd --redo"

fi

for subset in test trainval

do

python $ssd_dir/scripts/create_annoset.py --anno-type=$anno_type --label-map-file=$mapfile --min-dim=$min_dim --max-dim=$max_dim --resize-width=$width --resize-height=$height --check-label $extra_cmd $data_root_dir $root_dir/$subset.txt $root_dir/$dataset_name/$db/$dataset_name"_"$subset"_"$db ddbox/$dataset_name

done

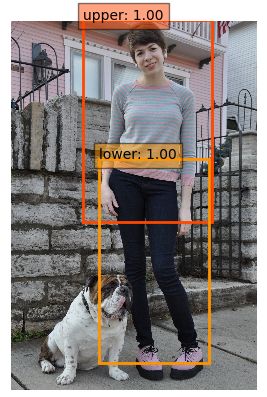

4. Train/Eval

修改 examples/ssd/ssd_pascal.py, python 运行即可.

这里的训练和测试网络为—— ssd_detect_human_body.

训练得到的测试精度接近 90%,还可以.

检测代码 —— ssd_detect.py

#!/usr/bin/env/python

import numpy as np

import matplotlib.pyplot as plt

caffe_root = '/path/to/caffe-ssd/'

import sys

sys.path.insert(0, caffe_root + 'python')

import caffe

caffe.set_device(0)

caffe.set_mode_gpu()

from google.protobuf import text_format

from caffe.proto import caffe_pb2

# load labels

labelmap_file = 'gtbox/labelmap_gtbox.prototxt'

file = open(labelmap_file, 'r')

labelmap = caffe_pb2.LabelMap()

text_format.Merge(str(file.read()), labelmap)

def get_labelname(labelmap, labels):

num_labels = len(labelmap.item)

labelnames = []

if type(labels) is not list:

labels = [labels]

for label in labels:

found = False

for i in xrange(0, num_labels):

if label == labelmap.item[i].label:

found = True

labelnames.append(labelmap.item[i].display_name)

break

assert found == True

return labelnames

model_def = 'deploy.prototxt'

model_weights = 'VGG_gtbox_SSD_300x300_iter_120000.caffemodel'

net = caffe.Net(model_def, model_weights, caffe.TEST)

image_resize = 300

net.blobs['data'].reshape(1, 3, image_resize, image_resize)

transformer = caffe.io.Transformer({'data': net.blobs['data'].data.shape})

transformer.set_transpose('data', (2, 0, 1))

transformer.set_mean('data', np.array([104,117,123])) # mean pixel

transformer.set_raw_scale('data', 255) # the reference model operates on images in [0,255] range instead of [0,1]

transformer.set_channel_swap('data', (2,1,0)) # the reference model has channels in BGR order instead of RGB

image = caffe.io.load_image('images/000000011.jpg')

transformed_image = transformer.preprocess('data', image)

net.blobs['data'].data[...] = transformed_image

# Forward pass.

detections = net.forward()['detection_out']

# Parse the outputs.

det_label = detections[0,0,:,1]

det_conf = detections[0,0,:,2]

det_xmin = detections[0,0,:,3]

det_ymin = detections[0,0,:,4]

det_xmax = detections[0,0,:,5]

det_ymax = detections[0,0,:,6]

# Get detections with confidence higher than 0.6.

top_indices = [i for i, conf in enumerate(det_conf) if conf >= 0.6]

top_conf = det_conf[top_indices]

top_label_indices = det_label[top_indices].tolist()

top_labels = get_labelname(labelmap, top_label_indices)

top_xmin = det_xmin[top_indices]

top_ymin = det_ymin[top_indices]

top_xmax = det_xmax[top_indices]

top_ymax = det_ymax[top_indices]

colors = plt.cm.hsv(np.linspace(0, 1, 21)).tolist()

plt.imshow(image)

plt.axis('off')

currentAxis = plt.gca()

for i in xrange(top_conf.shape[0]):

xmin = int(round(top_xmin[i] * image.shape[1]))

ymin = int(round(top_ymin[i] * image.shape[0]))

xmax = int(round(top_xmax[i] * image.shape[1]))

ymax = int(round(top_ymax[i] * image.shape[0]))

score = top_conf[i]

label = int(top_label_indices[i])

label_name = top_labels[i]

display_txt = '%s: %.2f'%(label_name, score)

coords = (xmin, ymin), xmax-xmin+1, ymax-ymin+1

color = colors[label]

currentAxis.add_patch(plt.Rectangle(*coords, fill=False, edgecolor=color, linewidth=2))

currentAxis.text(xmin, ymin, display_txt, bbox={'facecolor':color, 'alpha':0.5})

plt.show()

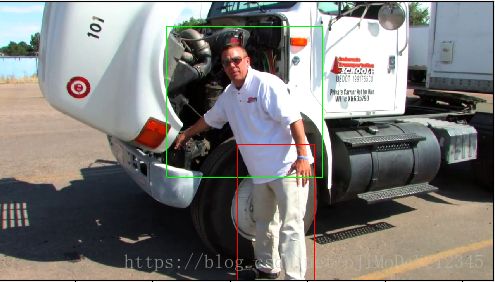

5. Results

6. Reference

[1]. [Code-SSD]

[2] - SSD: Single Shot MultiBox Detector

[3] - SSD: Signle Shot Detector 用于自然场景文字检测