Installing Sqoop on Linux (CentOS 7 + Sqoop 1.4.6 + Hadoop 2.8.0 + Hive 2.1.1)

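1 Download Sqoop

2 Upload and extract

3 Configuration

3.1 Configure environment variables

3.2 Edit the Sqoop configuration files

3.2.1 The sqoop-env.sh file

3.2.1.1 Create the file

3.2.1.2 Edit the contents

3.3 Upload the MySQL driver to Sqoop's lib directory

4 Using Sqoop

4.1 Using the help command

4.2 List MySQL tables with Sqoop

4.3 Create a Hive table from a MySQL table

4.3.1 Create

4.3.2 Test

4.4 Import MySQL data into Hive

4.4.1 Run the import command

4.4.2 Verify the import with Hive commands

5 Errors and solutions

5.1 java.net.NoRouteToHostException: No route to host (Host unreachable)

5.2 ERROR tool.ImportTool: Error during import: Import job failed!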

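Keywords: Linux, CentOS, Sqoop, Hadoop, Hive, Java

Versions: CentOS 7, Sqoop 1.4.6, Hadoop 2.8.0, Hive 2.1.1

Note: This article covers only the installation of Sqoop 1.4.6. Like Hive, Sqoop only needs to be installed on the Hadoop NameNode. The machine used here already has Hadoop 2.8.0 and Hive 2.1.1 installed. For installing Hadoop 2.8.0, see this post: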

http://blog.csdn.net/pucao_cug/article/details/71698903

For installing Hive 2.1.1, see this post:

http://blog.csdn.net/pucao_cug/article/details/71773665

1下载Sqoop

因为官方并不建议在生产环境中使用1.99.7版本,所以我们还是等2.0版本出来在用新的吧,现在依然使用1.4.6版本

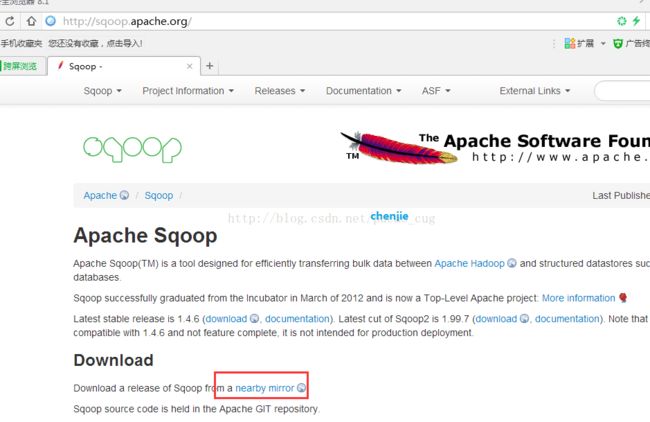

打开官网:

http://sqoop.apache.org/

如图:

点击上图的nearby mirror

相当于是直接打开:http://www.apache.org/dyn/closer.lua/sqoop/

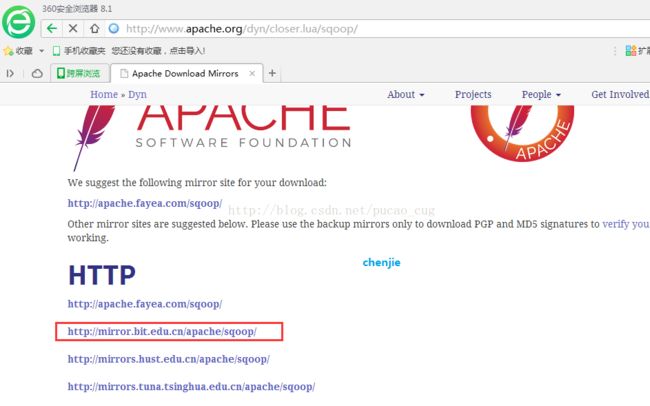

如图:

我选择的是http://mirror.bit.edu.cn/apache/sqoop/

如图:

点击1.4.6,相当于是直接打开地址:

http://mirror.bit.edu.cn/apache/sqoop/1.4.6/

如图:

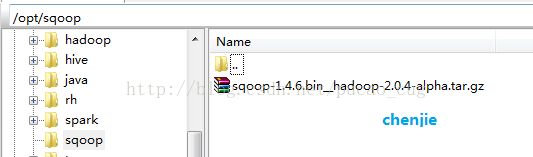

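2 Upload and extract

Create a directory named sqoop under /opt and upload the downloaded file sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz into it.

Change into that directory and extract the archive:

cd /opt/sqoop

tar -xvf sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz

When the command finishes, the directory /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha exists.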
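The extraction step can also be scripted. The following is a minimal sketch, assuming the archive is already on the server; the function name `extract_sqoop` is hypothetical, and `-z` is passed explicitly because the archive is gzip-compressed.

```shell
#!/bin/sh
# Hypothetical helper: extract a Sqoop tarball into a target directory.
# Usage: extract_sqoop <archive.tar.gz> <target-dir>
extract_sqoop() {
    archive=$1
    target=$2
    if [ ! -f "$archive" ]; then
        echo "archive not found: $archive" >&2
        return 1
    fi
    mkdir -p "$target" || return 1
    # -x extract, -z gunzip, -f archive file, -C switch to the target directory first
    tar -xzf "$archive" -C "$target"
}

# Example (paths taken from this article):
# extract_sqoop /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha.tar.gz /opt/sqoop
```

Checking for the archive first gives a clearer message than tar's own error when the upload went to a different directory.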

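3 Configuration

3.1 Configure environment variables

Edit /etc/profile: add a SQOOP_HOME variable and append $SQOOP_HOME/bin to PATH. There are many ways to edit the file; you can download it, edit it locally and upload it again, or edit it in place with vim.

Add the following: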

export JAVA_HOME=/opt/java/jdk1.8.0_121

export HADOOP_HOME=/opt/hadoop/hadoop-2.8.0

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

export HADOOP_COMMON_LIB_NATIVE_DIR=${HADOOP_HOME}/lib/native

export HADOOP_OPTS="-Djava.library.path=${HADOOP_HOME}/lib"

export HIVE_HOME=/opt/hive/apache-hive-2.1.1-bin

export HIVE_CONF_DIR=${HIVE_HOME}/conf

export SQOOP_HOME=/opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha

export CLASS_PATH=.:${JAVA_HOME}/lib:${HIVE_HOME}/lib:$CLASS_PATH

export PATH=.:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:${HIVE_HOME}/bin:${SQOOP_HOME}/bin:$PATH

After editing /etc/profile, run:

source /etc/profile
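A quick way to confirm that the PATH change took effect is to look for the Sqoop bin directory among the PATH entries. This is a minimal sketch; the helper name `on_path` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: report whether "<dir>/bin" is one of the
# colon-separated entries of PATH. Returns 0 if it is, 1 otherwise.
on_path() {
    case ":$PATH:" in
        *":$1/bin:"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example check after `source /etc/profile`:
# on_path "$SQOOP_HOME" && echo "sqoop is on PATH" || echo "PATH is missing $SQOOP_HOME/bin"
```

Wrapping PATH in colons before matching avoids false positives from entries that merely start with the same prefix.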

3.2 Edit the Sqoop configuration files

3.2.1 The sqoop-env.sh file

3.2.1.1 Create the file

Change into the /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/conf directory:

cd /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/conf

Copy sqoop-env-template.sh to a new file named sqoop-env.sh:

cp sqoop-env-template.sh sqoop-env.sh

3.2.1.2 Edit the contents

Edit the new sqoop-env.sh file, either by downloading it and editing it locally or by using vim.

Append the following settings to the end of the file:

export HADOOP_COMMON_HOME=/opt/hadoop/hadoop-2.8.0

export HADOOP_MAPRED_HOME=/opt/hadoop/hadoop-2.8.0

export HIVE_HOME=/opt/hive/apache-hive-2.1.1-bin

Note: change the paths above to your own Hadoop and Hive installation paths.

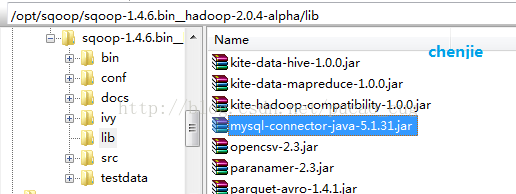
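Because a mistyped path in sqoop-env.sh only shows up later as a confusing runtime error, it can help to check that the directories exist. A minimal sketch, with a hypothetical helper name `missing_dirs`:

```shell
#!/bin/sh
# Hypothetical helper: print every argument that is not an existing directory.
# Returns 0 only if all of them exist.
missing_dirs() {
    status=0
    for dir in "$@"; do
        if [ ! -d "$dir" ]; then
            echo "missing: $dir"
            status=1
        fi
    done
    return $status
}

# Example with the paths used in this article:
# missing_dirs /opt/hadoop/hadoop-2.8.0 /opt/hive/apache-hive-2.1.1-bin
```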

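3.3 Upload the MySQL driver to Sqoop's lib directory

Upload the MySQL JDBC driver jar to the lib subdirectory of the Sqoop installation directory.

Note: neither the oldest nor the newest driver is necessarily the best choice. Version 5.1.31 worked in my testing.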
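If the jar is already on the server, copying it into place is a one-liner. The sketch below wraps it with two sanity checks; the helper name `install_driver` and the jar file name in the example are assumptions, so substitute the file you actually downloaded.

```shell
#!/bin/sh
# Hypothetical helper: copy a JDBC driver jar into a Sqoop lib directory.
# Usage: install_driver <driver.jar> <sqoop-home>
install_driver() {
    jar=$1
    lib_dir=$2/lib
    [ -f "$jar" ] || { echo "jar not found: $jar" >&2; return 1; }
    [ -d "$lib_dir" ] || { echo "no lib directory: $lib_dir" >&2; return 1; }
    cp "$jar" "$lib_dir/"
}

# Example (the jar file name is an assumption):
# install_driver ./mysql-connector-java-5.1.31-bin.jar "$SQOOP_HOME"
```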

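4 Using Sqoop

Sqoop is a tool. Once it is installed, commands that do not involve Hive or Hadoop, such as sqoop help, can be run directly without starting Hive and Hadoop first. Hive-related commands require Hive and Hadoop to be started beforehand.

For installing and starting Hadoop, see this post: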

http://blog.csdn.net/pucao_cug/article/details/71698903

For installing and starting Hive, see this post:

http://blog.csdn.net/pucao_cug/article/details/71773665

4.1 Using the help command

First, see which commands Sqoop provides. In a terminal, run:

sqoop help

The output is:

[root@hserver1 ~]# sqoop help

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hbase does not exist! HBase imports will fail.

Please set $HBASE_HOME to the root of your HBase installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

17/05/14 16:21:30 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6

usage: sqoop COMMAND [ARGS]

Available commands:

codegen            Generate code to interact with database records

create-hive-table  Import a table definition into Hive

eval               Evaluate a SQL statement and display the results

export             Export an HDFS directory to a database table

help               List available commands

import             Import a table from a database to HDFS

import-all-tables  Import tables from a database to HDFS

import-mainframe   Import datasets from a mainframe server to HDFS

job                Work with saved jobs

list-databases     List available databases on a server

list-tables        List available tables in a database

merge              Merge results of incremental imports

metastore          Run a standalone Sqoop metastore

version            Display version information

See 'sqoop help COMMAND' for information on a specific command.

[root@hserver1 ~]#

Note: HBase is not installed on this Hadoop cluster, so the HBase-related commands cannot be used, but the commands that work with the Hadoop distributed file system (HDFS) are available.

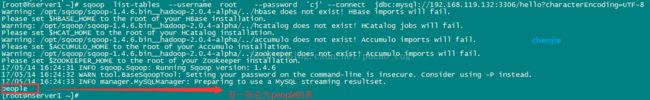

4.2 List MySQL tables with Sqoop

The following command lists the tables in a MySQL database (the command must be on a single line with no line breaks, so take care when copying and pasting):

sqoop list-tables --username root --password 'cj' --connect jdbc:mysql://192.168.27.132:3306/hello?characterEncoding=UTF-8

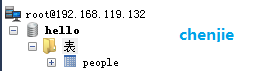
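Passing the password with --password leaves it in the shell history and the process list, and Sqoop logs a warning that suggests -P instead. Sqoop also accepts --password-file. The sketch below writes such a file; the helper name `write_password_file` and the file location are hypothetical, and the `file://` prefix is an assumption intended to point Sqoop at the local file system rather than HDFS, so verify it against your setup.

```shell
#!/bin/sh
# Hypothetical helper: store a password in a file readable only by its owner.
# printf '%s' avoids a trailing newline, which would otherwise become part
# of the password that Sqoop reads from the file.
write_password_file() {
    file=$1
    password=$2
    ( umask 077 && printf '%s' "$password" > "$file" ) || return 1
    chmod 400 "$file"
}

# Example (the file location is an assumption):
# write_password_file "$HOME/.mysql.pwd" 'cj'
# sqoop list-tables --username root --password-file "file://$HOME/.mysql.pwd" \
#     --connect 'jdbc:mysql://192.168.27.132:3306/hello?characterEncoding=UTF-8'
#
# Or let Sqoop prompt for the password interactively:
# sqoop list-tables --username root -P \
#     --connect 'jdbc:mysql://192.168.27.132:3306/hello?characterEncoding=UTF-8'
```

Quoting the JDBC URL in the examples keeps the shell from treating the `?` as a wildcard.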

4.3 基于MySQL的表创建hive表

4.3.1 创建

注意:hive是基于hadoop的HDFS的,在运行下面的导入命令请,请确保hadoop和hive都在正常运行。

现在MySQL数据库服务器上有一个数据库名为 hello,下面有一张表名为people

如图:

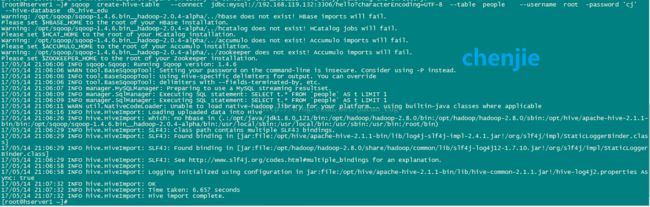

执行命令,在已经存在的db_hive_deu这个库中创建名为place的表:

sqoop create-hive-table --connect jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8 --table people --username root -password 'cj' --hive-database db_hive_edu如图:

上图中的内容是:

[root@hserver1 ~]# sqoop create-hive-table --connect jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8 --table people --username root -password 'cj' --hive-database db_hive_edu

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hbase does not exist! HBase imports will fail.

Please set $HBASE_HOME to the root of your HBase installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

17/05/14 21:06:06 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6

17/05/14 21:06:06 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

17/05/14 21:06:06 INFO tool.BaseSqoopTool: Using Hive-specific delimiters for output. You can override

17/05/14 21:06:06 INFO tool.BaseSqoopTool: delimiters with --fields-terminated-by, etc.

17/05/14 21:06:07 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

17/05/14 21:06:09 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `people` AS t LIMIT 1

17/05/14 21:06:09 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `people` AS t LIMIT 1

17/05/14 21:06:11 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/05/14 21:06:16 INFO hive.HiveImport: Loading uploaded data into Hive

17/05/14 21:06:26 INFO hive.HiveImport: which: no hbase in (.:/opt/java/jdk1.8.0_121/bin:/opt/hadoop/hadoop-2.8.0/bin:/opt/hadoop/hadoop-2.8.0/sbin:/opt/hive/apache-hive-2.1.1-bin/bin:/opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/root/bin)

17/05/14 21:06:29 INFO hive.HiveImport: SLF4J: Class path contains multiple SLF4J bindings.

17/05/14 21:06:29 INFO hive.HiveImport: SLF4J: Found binding in [jar:file:/opt/hive/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

17/05/14 21:06:29 INFO hive.HiveImport: SLF4J: Found binding in [jar:file:/opt/hadoop/hadoop-2.8.0/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

17/05/14 21:06:29 INFO hive.HiveImport: SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

17/05/14 21:06:58 INFO hive.HiveImport:

17/05/14 21:06:58 INFO hive.HiveImport: Logging initialized using configuration in jar:file:/opt/hive/apache-hive-2.1.1-bin/lib/hive-common-2.1.1.jar!/hive-log4j2.properties Async: true

17/05/14 21:07:32 INFO hive.HiveImport: OK

17/05/14 21:07:32 INFO hive.HiveImport: Time taken: 6.657 seconds

17/05/14 21:07:32 INFO hive.HiveImport: Hive import complete.

[root@hserver1 ~]#

Note: create-hive-table only creates the Hive table; it does not import any data. The command for importing data is covered in section 4.4.

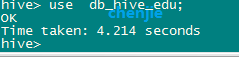

4.3.2 测试

在hive命令模式下,输入以下命令,切换到db_hive_edu数据库中,命令是:

use db_hive_edu;

如图:

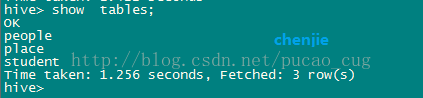

在hive命令模式下,输入以下命令:

show tables;

如图:
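The same check can be run without opening the interactive Hive shell by passing the statements to `hive -e`. A minimal sketch; the helper name `hive_check_sql` is hypothetical and only builds the HiveQL string.

```shell
#!/bin/sh
# Hypothetical helper: build the HiveQL that looks for one table in one database.
# Usage: hive_check_sql <database> <table>
hive_check_sql() {
    printf "USE %s; SHOW TABLES LIKE '%s';" "$1" "$2"
}

# Example: run the check non-interactively.
# hive -e "$(hive_check_sql db_hive_edu people)"
```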

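4.4 Import MySQL data into Hive

4.4.1 Run the import command

Note: Hive is built on Hadoop's HDFS. Before running the import command below, make sure Hadoop and Hive are both running normally. Also note that the command does not depend on section 4.3: you can run it directly, without creating the corresponding table in Hive first.

Run the following command (all on one line):

sqoop import --connect jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8 --table place --username root -password 'cj' --fields-terminated-by ',' --hive-import --hive-database db_hive_edu -m 1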
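If one long line is hard to read or paste, a backslash at the end of each line lets the shell join the lines into a single command. The sketch below wraps the import in a function; the name `import_to_hive` and the `SQOOP_BIN` dry-run switch are hypothetical, and it uses -P (interactive password prompt) in place of the inline password.

```shell
#!/bin/sh
# Hypothetical wrapper: import one MySQL table into one Hive database.
# SQOOP_BIN defaults to "sqoop"; set it to "echo" for a dry run that only
# prints the arguments instead of contacting the cluster.
# Usage: import_to_hive <jdbc-url> <mysql-table> <hive-database>
import_to_hive() {
    jdbc_url=$1
    table=$2
    hive_db=$3
    # Each trailing backslash continues the command on the next line.
    "${SQOOP_BIN:-sqoop}" import \
        --connect "$jdbc_url" \
        --table "$table" \
        --username root -P \
        --fields-terminated-by ',' \
        --hive-import \
        --hive-database "$hive_db" \
        -m 1
}

# Example:
# import_to_hive 'jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8' place db_hive_edu
```

Nothing may follow a continuation backslash on the same line, not even a space, or the shell ends the command there.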

The complete output of the import command was:

[root@hserver1 ~]# sqoop import --connect jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8 --table place --username root -password 'cj' --fields-terminated-by ',' --hive-import --hive-database db_hive_edu -m 1

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hbase does not exist! HBase imports will fail.

Please set $HBASE_HOME to the root of your HBase installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

17/05/14 20:44:07 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6

17/05/14 20:44:08 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

17/05/14 20:44:08 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

17/05/14 20:44:08 INFO tool.CodeGenTool: Beginning code generation

17/05/14 20:44:10 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `place` AS t LIMIT 1

17/05/14 20:44:10 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `place` AS t LIMIT 1

17/05/14 20:44:10 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /opt/hadoop/hadoop-2.8.0

Note: /tmp/sqoop-root/compile/382b047cc7e935d9ba3014747288b5f7/place.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

17/05/14 20:44:18 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/382b047cc7e935d9ba3014747288b5f7/place.jar

17/05/14 20:44:18 WARN manager.MySQLManager: It looks like you are importing from mysql.

17/05/14 20:44:18 WARN manager.MySQLManager: This transfer can be faster! Use the --direct

17/05/14 20:44:18 WARN manager.MySQLManager: option to exercise a MySQL-specific fast path.

17/05/14 20:44:18 INFO manager.MySQLManager: Setting zero DATETIME behavior to convertToNull (mysql)

17/05/14 20:44:18 INFO mapreduce.ImportJobBase: Beginning import of place

17/05/14 20:44:18 INFO Configuration.deprecation: mapred.job.tracker is deprecated. Instead, use mapreduce.jobtracker.address

17/05/14 20:44:20 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/05/14 20:44:20 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

17/05/14 20:44:24 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

17/05/14 20:44:25 INFO client.RMProxy: Connecting to ResourceManager at hserver1/192.168.119.128:8032

17/05/14 20:44:41 INFO db.DBInputFormat: Using read commited transaction isolation

17/05/14 20:44:42 INFO mapreduce.JobSubmitter: number of splits:1

17/05/14 20:44:43 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1494764774368_0001

17/05/14 20:44:49 INFO impl.YarnClientImpl: Submitted application application_1494764774368_0001

17/05/14 20:44:49 INFO mapreduce.Job: The url to track the job: http://hserver1:8088/proxy/application_1494764774368_0001/

17/05/14 20:44:49 INFO mapreduce.Job: Running job: job_1494764774368_0001

17/05/14 20:45:40 INFO mapreduce.Job: Job job_1494764774368_0001 running in uber mode : false

17/05/14 20:45:40 INFO mapreduce.Job: map 0% reduce 0%

17/05/14 20:46:21 INFO mapreduce.Job: map 100% reduce 0%

17/05/14 20:46:25 INFO mapreduce.Job: Job job_1494764774368_0001 completed successfully

17/05/14 20:46:25 INFO mapreduce.Job: Counters: 30

File System Counters

FILE: Number of bytes read=0

FILE: Number of bytes written=154570

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=87

HDFS: Number of bytes written=10

HDFS: Number of read operations=4

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Other local map tasks=1

Total time spent by all maps in occupied slots (ms)=34902

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=34902

Total vcore-milliseconds taken by all map tasks=34902

Total megabyte-milliseconds taken by all map tasks=35739648

Map-Reduce Framework

Map input records=2

Map output records=2

Input split bytes=87

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=190

CPU time spent (ms)=2490

Physical memory (bytes) snapshot=102395904

Virtual memory (bytes) snapshot=2082492416

Total committed heap usage (bytes)=16318464

File Input Format Counters

Bytes Read=0

File Output Format Counters

Bytes Written=10

17/05/14 20:46:25 INFO mapreduce.ImportJobBase: Transferred 10 bytes in 121.3811 seconds (0.0824 bytes/sec)

17/05/14 20:46:25 INFO mapreduce.ImportJobBase: Retrieved 2 records.

17/05/14 20:46:26 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `place` AS t LIMIT 1

17/05/14 20:46:26 INFO hive.HiveImport: Loading uploaded data into Hive

17/05/14 20:48:25 INFO hive.HiveImport: which: no hbase in (.:/opt/java/jdk1.8.0_121/bin:/opt/hadoop/hadoop-2.8.0/bin:/opt/hadoop/hadoop-2.8.0/sbin:/opt/hive/apache-hive-2.1.1-bin/bin:/opt/sqoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/root/bin)

17/05/14 20:48:28 INFO hive.HiveImport: SLF4J: Class path contains multiple SLF4J bindings.

17/05/14 20:48:28 INFO hive.HiveImport: SLF4J: Found binding in [jar:file:/opt/hive/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

17/05/14 20:48:28 INFO hive.HiveImport: SLF4J: Found binding in [jar:file:/opt/hadoop/hadoop-2.8.0/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

17/05/14 20:48:28 INFO hive.HiveImport: SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

17/05/14 20:49:48 INFO hive.HiveImport:

17/05/14 20:49:48 INFO hive.HiveImport: Logging initialized using configuration in jar:file:/opt/hive/apache-hive-2.1.1-bin/lib/hive-common-2.1.1.jar!/hive-log4j2.properties Async: true

17/05/14 20:50:16 INFO hive.HiveImport: OK

17/05/14 20:50:16 INFO hive.HiveImport: Time taken: 3.735 seconds

17/05/14 20:50:18 INFO hive.HiveImport: Loading data to table db_hive_edu.place

17/05/14 20:50:20 INFO hive.HiveImport: OK

17/05/14 20:50:20 INFO hive.HiveImport: Time taken: 4.872 seconds

17/05/14 20:50:21 INFO hive.HiveImport: Hive import complete.

17/05/14 20:50:21 INFO hive.HiveImport: Export directory is contains the _SUCCESS file only, removing the directory.

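[root@hserver1 ~]#

4.4.2 Verify the import with Hive commands

Switch back to the Hive command line (for convenience I usually keep two connections open: one as an ordinary Linux shell, and one running Hive for executing Hive commands).

At the Hive prompt, switch to the db_hive_edu database:

use db_hive_edu;

Then list the tables:

show tables;

Finally, view the data in the place table:

select * from place;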

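5 Errors and solutions

5.1 java.net.NoRouteToHostException: No route to host (Host unreachable)

Check whether the IP address in your command is wrong, in particular the address after --connect. This error usually means that address was mistyped.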
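To rule out a mistyped address, it helps to pull the host and port out of the JDBC URL and test them separately from Sqoop. A minimal sketch using only shell parameter expansion; the helper name `jdbc_host_port` is hypothetical, and the fallback to port 3306 assumes MySQL's default.

```shell
#!/bin/sh
# Hypothetical helper: print "host port" taken from a jdbc:mysql://host:port/db URL.
jdbc_host_port() {
    rest=${1#jdbc:mysql://}   # drop the scheme prefix
    hostport=${rest%%/*}      # keep everything before the first "/"
    host=${hostport%%:*}      # part before ":" (or the whole string)
    port=${hostport#*:}       # part after ":" (or the whole string)
    # No ":port" in the URL: fall back to MySQL's default port.
    [ "$port" = "$hostport" ] && port=3306
    echo "$host $port"
}

# Example: check basic reachability of the database host.
# set -- $(jdbc_host_port 'jdbc:mysql://192.168.119.132:3306/hello?characterEncoding=UTF-8')
# ping -c 1 "$1"
```

If the host answers but the error persists, a firewall between the Hadoop node and the MySQL server is another common cause worth checking.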

5.2 ERROR tool.ImportTool: Error during import: Import job failed!

This error appeared while running sqoop import to import data from MySQL into Hive. The full error output is:

java.lang.Exception: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class place not found

at org.apache.hadoop.mapred.LocalJobRunner$Job.runTasks(LocalJobRunner.java:489)

at org.apache.hadoop.mapred.LocalJobRunner$Job.run(LocalJobRunner.java:549)

Caused by: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class place not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2216)

at org.apache.sqoop.mapreduce.db.DBConfiguration.getInputClass(DBConfiguration.java:403)

at org.apache.sqoop.mapreduce.db.DataDrivenDBInputFormat.createDBRecordReader(DataDrivenDBInputFormat.java:237)

at org.apache.sqoop.mapreduce.db.DBInputFormat.createRecordReader(DBInputFormat.java:263)

at org.apache.hadoop.mapred.MapTask$NewTrackingRecordReader.

at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:758)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)

at org.apache.hadoop.mapred.LocalJobRunner$Job$MapTaskRunnable.run(LocalJobRunner.java:270)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.ClassNotFoundException: Class place not found

at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:2122)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2214)

... 12 more

17/05/14 22:17:45 INFO mapreduce.Job: Job job_local612327026_0001 failed with state FAILED due to: NA

17/05/14 22:17:45 INFO mapreduce.Job: Counters: 0

17/05/14 22:17:46 WARN mapreduce.Counters: Group FileSystemCounters is deprecated. Use org.apache.hadoop.mapreduce.FileSystemCounter instead

17/05/14 22:17:46 INFO mapreduce.ImportJobBase: Transferred 0 bytes in 19.1156 seconds (0 bytes/sec)

17/05/14 22:17:46 WARN mapreduce.Counters: Group org.apache.hadoop.mapred.Task$Counter is deprecated. Use org.apache.hadoop.mapreduce.TaskCounter instead

17/05/14 22:17:46 INFO mapreduce.ImportJobBase: Retrieved 0 records.

17/05/14 22:17:46 ERROR tool.ImportTool: Error during import: Import job failed!

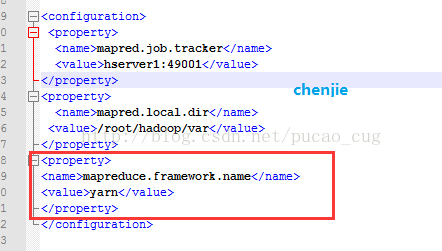

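Cause:

This is related to the Hadoop configuration: YARN needs to be enabled as the MapReduce framework.

Solution:

My Hadoop installation path is /opt/hadoop/hadoop-2.8.0/.

Open the configuration file /opt/hadoop/hadoop-2.8.0/etc/hadoop/mapred-site.xml and add the setting that selects YARN as the MapReduce framework.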
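The stack trace goes through `LocalJobRunner`, which suggests the job ran in local mode rather than on YARN. In Hadoop 2.x the framework is selected by the `mapreduce.framework.name` property. The original screenshot of the setting is not available, so the property shown in the comments below is an assumption based on that standard property name, not a copy of the author's file. The helper `uses_yarn` is hypothetical and does a plain text match, not real XML parsing.

```shell
#!/bin/sh
# The property assumed to be needed inside <configuration> in mapred-site.xml:
#   <property>
#     <name>mapreduce.framework.name</name>
#     <value>yarn</value>
#   </property>

# Hypothetical helper: succeed if the file sets mapreduce.framework.name to yarn.
# Whitespace is stripped first so the match works whether <name> and <value>
# are on one line or on separate lines.
uses_yarn() {
    [ -f "$1" ] || return 1
    tr -d ' \t\n' < "$1" \
        | grep -q '<name>mapreduce.framework.name</name><value>yarn</value>'
}

# Example:
# uses_yarn /opt/hadoop/hadoop-2.8.0/etc/hadoop/mapred-site.xml && echo "YARN enabled"
```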