Training YOLOv3 on Your Own Dataset

1. Preparing a VOC-format dataset

Annotate the images with the ImageLab tool, which produces one .xml file per image.

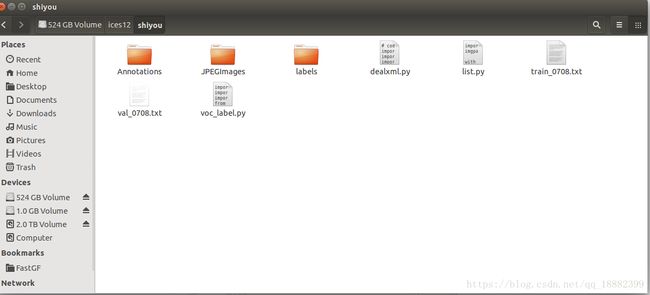

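The Annotations folder holds the XML files, JPEGImages holds the original images, and labels holds the txt files that correspond to the annotations.

deal.xml rewrites fields in the XML files (folder, filename, path, and each object's name); list.py splits the data into training and validation sets and writes the image path lists train_0708.txt and val_0708.txt; voc_label.py generates the txt label file for each annotation.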
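The two steps handled by list.py and voc_label.py can be sketched as follows. This is a minimal illustration, not the author's scripts: the function names, the 20% validation ratio, and the fixed seed are assumptions made for the example. Darknet label files contain one line per object in the form `class_id cx cy w h`, with the box center and size normalized by the image width and height.

```python
import random


def voc_box_to_yolo(img_w, img_h, xmin, ymin, xmax, ymax):
    """Convert a VOC pixel box to normalized (cx, cy, w, h)."""
    cx = (xmin + xmax) / 2.0 / img_w
    cy = (ymin + ymax) / 2.0 / img_h
    w = (xmax - xmin) / float(img_w)
    h = (ymax - ymin) / float(img_h)
    return cx, cy, w, h


def split_train_val(image_paths, val_ratio=0.2, seed=0):
    """Shuffle image paths reproducibly and split them into (train, val)."""
    paths = sorted(image_paths)
    random.Random(seed).shuffle(paths)
    n_val = int(len(paths) * val_ratio)
    return paths[n_val:], paths[:n_val]


# A 100x50 box centered in a 200x100 image.
print(voc_box_to_yolo(200, 100, 50, 25, 150, 75))

# Ten hypothetical image names, split 8/2.
train, val = split_train_val(["img%d.jpg" % i for i in range(10)])
print(len(train), len(val))
```

Writing `train` and `val` out one path per line gives files in the same shape as train_0708.txt and val_0708.txt.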

# coding=utf-8
# Rewrite the <path> and <folder> fields of every annotation XML file.
import os
import xml.dom.minidom

xmlpath = "/media/ices/d171fa47-c64c-42f5-886e-92bc88e1f6a6/ices12/shiyou/Annotations/"
imgpath = "/media/ices/d171fa47-c64c-42f5-886e-92bc88e1f6a6/ices12/shiyou/JPEGImages/"
newpath = "/media/ices/d171fa47-c64c-42f5-886e-92bc88e1f6a6/ices12/shiyou/Annotation/"

# os.listdir(path) returns the names of all files in the folder
for xmlname in os.listdir(xmlpath):  # iterate over the annotation folder
    p = xmlpath + xmlname
    dom = xml.dom.minidom.parse(p)
    root = dom.documentElement
    epath = root.getElementsByTagName('path')
    folder = root.getElementsByTagName('folder')
    # Point <path> at the matching image and set <folder> to JPEGImages
    epath[0].firstChild.data = imgpath + os.path.splitext(xmlname)[0] + '.jpg'
    folder[0].firstChild.data = 'JPEGImages'
    print(epath[0].firstChild.data)
    # Write the modified XML to the output folder under the same file name
    with open(newpath + xmlname, 'w') as fh:
        dom.writexml(fh)
    print('Wrote ' + xmlname + ' OK!')

2. Modifying the configuration files

(1) Makefile

# Set to 1 to build with GPU support, 0 for CPU only
GPU=1
# Set to 1 to use cuDNN, otherwise 0
CUDNN=1
# Set to 1 if you need OpenCV (for example to read from a camera), otherwise 0
OPENCV=0
# Set to 1 to use OpenMP, otherwise 0
OPENMP=0
# Set to 1 for a debug build, otherwise 0
DEBUG=0

CC=gcc
# Originally NVCC=nvcc; change to your own nvcc path
NVCC=/usr/local/cuda/bin/nvcc
AR=ar
ARFLAGS=rcs
OPTS=-Ofast
LDFLAGS= -lm -pthread
COMMON= -Iinclude/ -Isrc/
CFLAGS=-Wall -Wno-unused-result -Wno-unknown-pragmas -Wfatal-errors -fPIC

...

ifeq ($(GPU), 1)
# Change the CUDA include and library paths below to your own
COMMON+= -DGPU -I/usr/local/cuda/include/
CFLAGS+= -DGPU
LDFLAGS+= -L/home/hebao/cuda-9.0/lib64 -lcuda -lcudart -lcublas -lcurand
endif

(2) cfg (note: the filters value must be changed in three places!)
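The filters value that has to be changed in three places follows from the layout of a YOLOv3 output layer: each [yolo] layer uses three anchors (its mask has three entries), and every anchor predicts 4 box coordinates, 1 objectness score, and one score per class. A small sketch of that arithmetic, where the helper name `yolo_filters` is made up for illustration:

```python
def yolo_filters(num_classes, anchors_per_scale=3):
    """Filters of the convolutional layer directly before a [yolo] layer."""
    # 4 box coordinates + 1 objectness score + one score per class, per anchor
    return anchors_per_scale * (num_classes + 5)


print(yolo_filters(5))   # 5 classes, as used in this tutorial
print(yolo_filters(20))  # 20 VOC classes, as in the stock yolov3-voc.cfg
```

For 5 classes this gives 30, and for the 20 VOC classes it gives 75, which matches the before and after values in the cfg.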

[net]
# Testing
# batch=1
# subdivisions=1
# Training
batch=64
subdivisions=8

......

[convolutional]
size=1
stride=1
pad=1
# filters = (number of classes + 5) * 3 = 30 (originally 75)
filters=30
activation=linear

[yolo]
mask = 6,7,8
anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326
# originally 20
classes=5
num=9
jitter=.3
ignore_thresh = .5
truth_thresh = 1
# originally 1
random=0

......

[convolutional]
size=1
stride=1
pad=1
# originally 75
filters=30
activation=linear

[yolo]
mask = 3,4,5
anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326
# originally 20
classes=5
num=9
jitter=.3
ignore_thresh = .5
truth_thresh = 1
# originally 1
random=0

......

[convolutional]
size=1
stride=1
pad=1
# originally 75
filters=30
activation=linear

[yolo]
mask = 0,1,2
anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326
# originally 20
classes=5
num=9
jitter=.3
ignore_thresh = .5
truth_thresh = 1
# originally 1
random=0

(3) voc.data

classes= 5
train = /media/ices/d171fa47-c64c-42f5-886e-92bc88e1f6a6/ices12/shiyou/train_0708.txt
valid = /media/ices/d171fa47-c64c-42f5-886e-92bc88e1f6a6/ices12/shiyou/val_0708.txt
names = data/voc.names
backup = backup0708

(4) voc.names

car
truck
building
collapse
river

Run:

sudo ./darknet detector train /home/ices/darknet/cfg/voc.data /home/ices/darknet/cfg/yolov3-voc0605.cfg /home/ices/darknet/backup0708/yolov3-voc0605.backup -gpus 0,1
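Because a mismatch between `classes` in voc.data and the number of lines in voc.names is easy to introduce, it can be worth checking the two files against each other before a long training run. The following is a minimal sketch, not part of darknet: the helper names are hypothetical, and the demo writes throwaway copies of the two files instead of reading the real ones.

```python
import os
import tempfile


def read_data_file(path):
    """Parse a darknet-style .data file of `key = value` lines into a dict."""
    options = {}
    with open(path) as fh:
        for line in fh:
            line = line.strip()
            if not line or line.startswith("#") or "=" not in line:
                continue
            key, value = line.split("=", 1)
            options[key.strip()] = value.strip()
    return options


def read_names(path):
    """Return the non-empty lines of a .names file, one class name per line."""
    with open(path) as fh:
        return [line.strip() for line in fh if line.strip()]


# Demo with temporary files that mirror the settings shown above.
tmpdir = tempfile.mkdtemp()
names_path = os.path.join(tmpdir, "voc.names")
data_path = os.path.join(tmpdir, "voc.data")
with open(names_path, "w") as fh:
    fh.write("car\ntruck\nbuilding\ncollapse\nriver\n")
with open(data_path, "w") as fh:
    fh.write("classes= 5\nnames = %s\nbackup = backup0708\n" % names_path)

options = read_data_file(data_path)
names = read_names(options["names"])
assert int(options["classes"]) == len(names), "classes does not match voc.names"
print(len(names), names)
```

To check a real setup, pass the path of your own voc.data to `read_data_file` and run the same assertion.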

3. Testing

./darknet detector valid cfg/voc.data cfg/yolov3-voc0605.cfg backup3_1/yolov3-voc0605_final.weights -out g-
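The `valid` command writes one results file per class under results/, named with the prefix given to `-out`. Judging from how the evaluation script in this section reads those files, each line holds an image id, a confidence, and four box coordinates separated by spaces. A minimal parsing sketch, where the function name and the sample line are made up for illustration:

```python
def parse_detection_line(line):
    """Split one results line into (image_id, confidence, [xmin, ymin, xmax, ymax])."""
    fields = line.strip().split(" ")
    image_id = fields[0]
    confidence = float(fields[1])
    box = [float(v) for v in fields[2:6]]
    return image_id, confidence, box


# Hypothetical line, not taken from a real results file.
sample = "img_0001 0.912345 10.0 20.0 110.0 220.0"
print(parse_detection_line(sample))
```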

python 123.py /home/ices/darknet/results/g-1.txt /home/ices/darknet/V3/darknet/scripts/shiyou0605_val.txt 1

123.py

# --------------------------------------------------------
# Fast/er R-CNN
# Licensed under The MIT License [see LICENSE for details]
# Written by Bharath Hariharan
# --------------------------------------------------------

import xml.etree.ElementTree as ET
import os
import pickle
import numpy as np
import sys
import re

def parse_rec(filename):
    """ Parse a PASCAL VOC xml file """
    tree = ET.parse(filename)
    objects = []
    for obj in tree.findall('object'):
        obj_struct = {}
        obj_struct['name'] = obj.find('name').text
        obj_struct['pose'] = obj.find('pose').text
        obj_struct['truncated'] = int(obj.find('truncated').text)
        obj_struct['difficult'] = int(obj.find('difficult').text)
        bbox = obj.find('bndbox')
        obj_struct['bbox'] = [int(bbox.find('xmin').text),
                              int(bbox.find('ymin').text),
                              int(bbox.find('xmax').text),
                              int(bbox.find('ymax').text)]
        objects.append(obj_struct)

    return objects

def voc_ap(rec, prec, use_07_metric=False):
    """ ap = voc_ap(rec, prec, [use_07_metric])
    Compute VOC AP given precision and recall.
    If use_07_metric is true, uses the
    VOC 07 11 point method (default:False).
    """
    if use_07_metric:
        # 11 point metric
        ap = 0.
        for t in np.arange(0., 1.1, 0.1):
            if np.sum(rec >= t) == 0:
                p = 0
            else:
                p = np.max(prec[rec >= t])
            ap = ap + p / 11.
    else:
        # correct AP calculation
        # first append sentinel values at the end
        mrec = np.concatenate(([0.], rec, [1.]))
        mpre = np.concatenate(([0.], prec, [0.]))

        # compute the precision envelope
        for i in range(mpre.size - 1, 0, -1):
            mpre[i - 1] = np.maximum(mpre[i - 1], mpre[i])

        # to calculate area under PR curve, look for points
        # where X axis (recall) changes value
        i = np.where(mrec[1:] != mrec[:-1])[0]

        # and sum (\Delta recall) * prec
        ap = np.sum((mrec[i + 1] - mrec[i]) * mpre[i + 1])
    return ap

def voc_eval(detpath,
             annopath,
             imagesetfile,
             classname,
             ovthresh=0.5,
             use_07_metric=False):
    """rec, prec, ap = voc_eval(detpath,
                                annopath,
                                imagesetfile,
                                classname,
                                [ovthresh],
                                [use_07_metric])

    Top level function that does the PASCAL VOC evaluation.

    detpath: Path to detections
        detpath.format(classname) should produce the detection results file.
    annopath: Path to annotations
        annopath.format(imagename) should be the xml annotations file.
    imagesetfile: Text file containing the list of images, one image per line.
    classname: Category name (duh)
    cachedir: not a parameter here; the annotation caching code is commented out
    [ovthresh]: Overlap threshold (default = 0.5)
    [use_07_metric]: Whether to use VOC07's 11 point AP computation
        (default False)
    """
    # assumes detections are in detpath.format(classname)
    # assumes annotations are in annopath.format(imagename)
    # assumes imagesetfile is a text file with each line an image name
    # cachedir caches the annotations in a pickle file

    # first load gt
    #if not os.path.isdir(cachedir):
    #    os.mkdir(cachedir)
    #cachefile = os.path.join(cachedir, 'annots.pkl')
    # read list of images
    with open(imagesetfile, 'r') as f:
        lines = f.readlines()
    imagenames = [x.strip() for x in lines]

    #if not os.path.isfile(cachefile):
    # load annots
    recs = {}
    for i, imagename in enumerate(imagenames):
        recs[imagename] = parse_rec(annopath.format(imagename))
        if i % 100 == 0:
            print('Reading annotation for {:d}/{:d}'.format(
                i + 1, len(imagenames)))
    # save
    #print 'Saving cached annotations to {:s}'.format(cachefile)
    #with open(cachefile, 'w') as f:
    #    cPickle.dump(recs, f)
    #else:
    #    # load
    #    with open(cachefile, 'r') as f:
    #        recs = cPickle.load(f)

    # extract gt objects for this class
    class_recs = {}
    npos = 0
    for imagename in imagenames:
        R = [obj for obj in recs[imagename] if obj['name'] == classname]
        bbox = np.array([x['bbox'] for x in R])
        # np.bool was removed from recent NumPy releases; the builtin bool works everywhere
        difficult = np.array([x['difficult'] for x in R]).astype(bool)
        det = [False] * len(R)
        npos = npos + sum(~difficult)
        class_recs[imagename] = {'bbox': bbox,
                                 'difficult': difficult,
                                 'det': det}

    # read dets
    detfile = detpath.format(classname)
    with open(detfile, 'r') as f:
        lines = f.readlines()

    splitlines = [x.strip().split(' ') for x in lines]
    image_ids = [x[0] for x in splitlines]
    confidence = np.array([float(x[1]) for x in splitlines])
    BB = np.array([[float(z) for z in x[2:]] for x in splitlines])

    # sort by confidence
    sorted_ind = np.argsort(-confidence)
    sorted_scores = np.sort(-confidence)
    BB = BB[sorted_ind, :]
    image_ids = [image_ids[x] for x in sorted_ind]

    # go down dets and mark TPs and FPs
    nd = len(image_ids)
    tp = np.zeros(nd)
    fp = np.zeros(nd)
    for d in range(nd):
        R = class_recs[image_ids[d]]
        bb = BB[d, :].astype(float)
        ovmax = -np.inf
        BBGT = R['bbox'].astype(float)

        if BBGT.size > 0:
            # compute overlaps
            # intersection
            ixmin = np.maximum(BBGT[:, 0], bb[0])
            iymin = np.maximum(BBGT[:, 1], bb[1])
            ixmax = np.minimum(BBGT[:, 2], bb[2])
            iymax = np.minimum(BBGT[:, 3], bb[3])
            iw = np.maximum(ixmax - ixmin + 1., 0.)
            ih = np.maximum(iymax - iymin + 1., 0.)
            inters = iw * ih

            # union
            uni = ((bb[2] - bb[0] + 1.) * (bb[3] - bb[1] + 1.) +
                   (BBGT[:, 2] - BBGT[:, 0] + 1.) *
                   (BBGT[:, 3] - BBGT[:, 1] + 1.) - inters)

            overlaps = inters / uni
            ovmax = np.max(overlaps)
            jmax = np.argmax(overlaps)

        if ovmax > ovthresh:
            if not R['difficult'][jmax]:
                if not R['det'][jmax]:
                    tp[d] = 1.
                    R['det'][jmax] = 1
                else:
                    fp[d] = 1.
        else:
            fp[d] = 1.

    # compute precision recall
    fp = np.cumsum(fp)
    tp = np.cumsum(tp)
    rec = tp / float(npos)
    # avoid divide by zero in case the first detection matches a difficult
    # ground truth
    prec = tp / np.maximum(tp + fp, np.finfo(np.float64).eps)
    ap = voc_ap(rec, prec, use_07_metric)

    return rec, prec, ap

if __name__ == '__main__':
    if len(sys.argv) < 4:
        print('error!!!')
        print('the argv must be python 123.py [detpath] [testfile] [testname]')
    else:
        detpath = sys.argv[1]
        testfile = sys.argv[2]
        testname = sys.argv[3]
        # image id: the file name without its directory and .jpg extension
        name_id = re.compile(r'.*/(.*)\.jpg')
        # directory part of an image path, including the trailing slash
        name_xml = re.compile(r'(.*/).*\.jpg')
        #print(re.findall(name_id,'456/123.jpg'))
        with open(testfile, 'r') as f:
            lines = f.readlines()
        # write the bare image ids to a list file for voc_eval
        with open('123_testname.txt', 'w') as f:
            for i in lines:
                temp = re.findall(name_id, i)
                f.write(temp[0] + '\n')
        testfilepath = os.getcwd() + '/123_testname.txt'
        # derive the annotation path template from the first image path
        temp = re.findall(name_xml, lines[0])
        temp[0] = temp[0].replace('JPEGImages', 'Annotation')
        annopath = temp[0] + '{}.xml'
        print(voc_eval(detpath, annopath, testfilepath, testname)[2])
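To make the AP number printed by the script easier to interpret, here is a small worked example of the all-point interpolation used when use_07_metric is False. It is a self-contained sketch with made-up data (three detections, two ground-truth boxes). The function `average_precision` is a renamed copy of that branch of voc_ap so the block runs without the rest of the script.

```python
import numpy as np


def average_precision(rec, prec):
    """All-point interpolated AP (the use_07_metric=False branch of voc_ap)."""
    mrec = np.concatenate(([0.], rec, [1.]))
    mpre = np.concatenate(([0.], prec, [0.]))
    # Make precision monotonically non-increasing from right to left.
    for i in range(mpre.size - 1, 0, -1):
        mpre[i - 1] = np.maximum(mpre[i - 1], mpre[i])
    # Sum precision over the points where recall changes.
    idx = np.where(mrec[1:] != mrec[:-1])[0]
    return np.sum((mrec[idx + 1] - mrec[idx]) * mpre[idx + 1])


# Made-up ranking: detections sorted by confidence, 2 ground-truth boxes.
# Detection 1 is a true positive, 2 a false positive, 3 a true positive.
tp = np.cumsum([1., 0., 1.])
fp = np.cumsum([0., 1., 0.])
rec = tp / 2.0
prec = tp / (tp + fp)
ap = average_precision(rec, prec)
print(rec, prec, ap)
```

Recall reaches 0.5 at precision 1.0 and 1.0 at precision 2/3, so the area under the interpolated curve is 0.5 * 1.0 + 0.5 * 2/3, about 0.833.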