Python爬虫scrapy框架的源代码分析

scrapy框架流程图

推荐三个网址:官方1.5版本:https://doc.scrapy.org/en/latest/topics/architecture.html点击打开链接

官方0.24版本(中文):https://scrapy-chs.readthedocs.io/zh_CN/0.24/topics/architecture.html点击打开链接

scrapy中文网1.5版本:http://www.scrapyd.cn/doc/137.html点击打开链接

图十分的重要

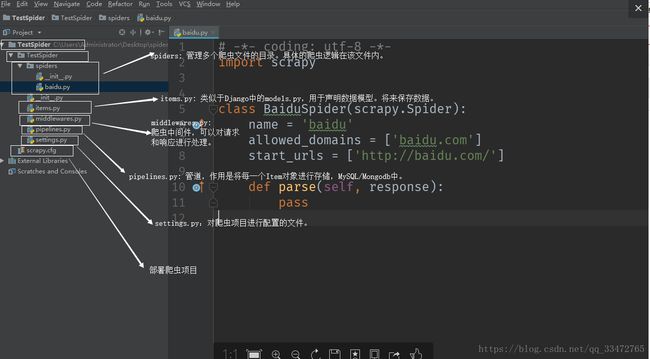

创建项目与配置环境后各部分组件:

上图主要是关于各个组件的作用!

下面是部分组件的详情:

首先主要是项目写代码部分:

项目名.py(eg:baidu.py)

项目一百度——eg:baidu.py

# -*- coding: utf-8 -*-

import scrapy

# scrapy: 是一个基于异步+多线程的方式运行爬虫的框架,内部的函数都是以回调的形式执行的,不能手动调用。

class BaiduSpider(scrapy.Spider):

# name: 自定义的爬虫名称,运行爬虫的时候就通过这个name的值运行的。name的值是唯一的。

name = 'baidu'

# allowed_domains:允许访问的网站的域名。没有设置的无法访问。

allowed_domains = ['baidu.com', 'qq.com', 'zhihu.com']

# start_urls:指定爬虫的起始url,爬虫启动之后,Engine就会从start_urls提取第一个url,然后将url构造成一个Request对象,交给调度器。

start_urls = ['https://www.baidu.com/', 'http://news.baidu.com/']

# parse()函数是在start_urls中的url请求成功以后,自动回调parse()函数。

def parse(self, response):

print('请求的url:', response.url)

print('状态码:', response.status)

print('返回的内容:', response.body)

项目二小说——eg:novel.py

# -*- coding: utf-8 -*-

import scrapy

from NovelSpider.items import NovelspiderItem

class NovelSpider(scrapy.Spider):

name = 'novel'

allowed_domains = ['readnovel.com']

start_urls = ['https://www.readnovel.com/rank/hotsales/']

number = 2

# headers = {

# 'Host':'',

# 'Referer':'',

# 'Cookie':''

# }

def parse(self, response):

self.number += 1

# 解析response对象

all_divs = response.xpath('//div[@class="book-mid-info"]')

# all_a: 保存的是Selector对象。该对象可以继续调用xpath()函数。

# print(all_a)

# xpath()返回的是

for div in all_divs:

# extract_first(默认值):尝试获取第一个元素,获取失败会采用默认值。

# href = a.xpath('@href').extract_first(default='')

# title = a.xpath('text()').extract_first(default='')

href = div.xpath('.//h4/a/@href').extract_first(default='')

detail_url = 'https://www.readnovel.com' + href

title = div.xpath('.//h4/a/text()').extract_first(default='')

author = div.xpath('.//p[@class="author"]/a[contains(@class, "name")]/text()').extract_first('')

# meta参数,可以向回调函数parse_detail_page传递参数。

# 将每一个详情页的请求对象,yield到调度器的队列中,等待被执行。

yield scrapy.Request(url=detail_url, callback=self.parse_detail_page, meta={'title': title, 'author': author}, dont_filter=False)

# novel = NovelspiderItem()

# novel["url"] = href

# novel["title"] = title

# novel["author"] = author

#

# print(href, title, author)

# yield novel

# 获取下一页的连接,然后构造一个请求对象,将这个request对象yield到调度器的队列中。

if self.number <= 3:

next_href = 'https://www.readnovel.com/rank/hotsales?pageNum={}'.format(self.number)

yield scrapy.Request(url=next_href, callback=self.parse)

def parse_detail_page(self, response):

# response.meta获取字典中的键值对。

book_img = response.xpath('//a[@id="bookImg"]/img/@src').extract_first('').strip()

book_img = 'https:'+book_img

novel = NovelspiderItem()

# print('---', response.url, response.meta['title'], response.meta['author'])

novel['url'] = response.url

novel['title'] = response.meta['title']

novel['author'] = response.meta['author']

# 需要下载的图片地址,需要是一个列表

# 如果不下载,只是将地址保存在数据库中,不需要设置列表

novel['img_url'] = [book_img]

# 需要下载的文件地址,需要是一个列表

# 如果不下载,只是将地址保存在数据库中,不需要设置列表

# novel['file_url'] = [file_url]

yield novel

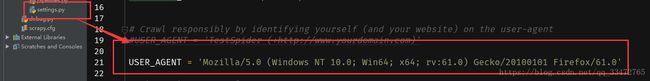

settings.py:主要是设置配置文件:

可以设置自定义配置也可以设置源代码中的操作同时也可以设置是否执行源代码操作还是自定义的操作,settings.py是一个字典!

# -*- coding: utf-8 -*-

# Scrapy settings for TestSpider project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'TestSpider'

SPIDER_MODULES = ['TestSpider.spiders']

NEWSPIDER_MODULE = 'TestSpider.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'TestSpider (+http://www.yourdomain.com)'

USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:61.0) Gecko/20100101 Firefox/61.0'

# Obey robots.txt rules

# Scrapy框架默认遵守 robots.txt 协议规则,robots规定了一个网站中,哪些地址可以请求,哪些地址不能请求。

# 默认是True,设置为False不遵守这个协议。

ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)

# 配置scrapy的请求连接数,默认会同时并发16个请求。

# CONCURRENT_REQUESTS = 10

# Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

# 下载延时,请求和请求之间的间隔,降低爬取速度,default: 0

# DOWNLOAD_DELAY = 3

# CONCURRENT_REQUESTS_PER_DOMAIN:针对网站(主域名)设置的最大请求并发数。

# CONCURRENT_REQUESTS_PER_IP:某一个IP的最大请求并发数。

# The download delay setting will honor only one of:

# CONCURRENT_REQUESTS_PER_DOMAIN = 16

# CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

# 是否启用Cookie的配置,默认是可以使用Cookie的。主要是针对一些网站是禁用Cookie的。

# COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

# 配置默认的请求头Headers.

# DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

# }

# Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

# 配置自定义爬虫中间件,scrapy也默认启用了一些爬虫中间件,可以在这个配置中关闭。

# SPIDER_MIDDLEWARES = {

# 'TestSpider.middlewares.TestspiderSpiderMiddleware': 543,

# }

# 下载中间件,配置自定义的中间件或者取消Scrapy默认启用的中间件。

# Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# DOWNLOADER_MIDDLEWARES = {

# 'TestSpider.middlewares.TestspiderDownloaderMiddleware': 543,

# }

# Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

# EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

# }

# Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

# 配置自定义的PIPELINES,或者取消PIPELINES默认启用的中间件。

# ITEM_PIPELINES = {

# 'TestSpider.pipelines.TestspiderPipeline': 300,

# }

# 限速配置

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

# 是否开启自动限速

# AUTOTHROTTLE_ENABLED = True

# The initial download delay

# 配置初始url的下载延时

# AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

# 配置最大请求时间

# AUTOTHROTTLE_MAX_DELAY = 60

# 配置请求和请求之间的下载间隔,单位是秒

# The average number of requests Scrapy should be sending in parallel to

# each remote server

# AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

# AUTOTHROTTLE_DEBUG = False

# 关于Http缓存的配置,默认是不启用。

# 对于同一个页面的请求进行数据的缓存,如果后续还有相同的请求,直接从缓存中进行获取。

# Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

scrapy爬虫框架部件示意图源代码位置:

调度器组件schedule.py源代码:

depefilters.py源代码(去重作用):

useragent.py源代码(类比后面的中间件解析):

中间件middlewares.py组件的源码以及自定义(自定义useragent下载中间件和代理ip)

middlewares.py主要是对请求进行处理可以参考流程图

注意:useragent也可以直接放在settings.py中更可以放在自己的代码里直接使用如下图:

在settings.py中配置(默认首先使用自己配置的:1.直接设置(如火狐浏览器),2.随机一个):

直接在代码里使用:

from fake_useragent import UserAgent

ua = UserAgent()

headers = {

'User-Agent': ua.random,

}

# -*- coding: utf-8 -*-

# Define here the models for your spider middleware

#

# See documentation in:

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

from scrapy import signals

from fake_useragent import UserAgent

import requests, logging

class NovelspiderSpiderMiddleware(object):

# Not all methods need to be defined. If a method is not defined,

# scrapy acts as if the spider middleware does not modify the

# passed objects.

def __init__(self):

self.logger = logging.getLogger('NovelspiderSpiderMiddleware')

@classmethod

def from_crawler(cls, crawler):

print('from_crawler 开始执行了')

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s

def process_spider_input(self, response, spider):

# Called for each response that goes through the spider

# middleware and into the spider.

# 对应的是流程图中的第6步,在response对象交给Spider爬虫进行解析前,可以对response进行处理。

# 只能返回None或者抛出一个异常。。。

# print('process_spider_input 开始执行了')

self.logger.debug('process_spider_input 开始执行了')

# if item['id'] in set():

# raise DropItem()

# Should return None or raise an exception.

return None

def process_spider_output(self, response, result, spider):

# Called with the results returned from the Spider, after

# it has processed the response.

# 对应流程图中的第7步,可以从response返回的结果中,对后续的item和request进行处理。

# 必须返回Request或者Item对象

# print('process_spider_output 开始执行了')

self.logger.debug('process_spider_output 开始执行了')

# Must return an iterable of Request, dict or Item objects.

for i in result:

yield i

def process_spider_exception(self, response, exception, spider):

# Called when a spider or process_spider_input() method

# (from other spider middleware) raises an exception.

# Should return either None or an iterable of Response, dict

# or Item objects.

pass

def process_start_requests(self, start_requests, spider):

# Called with the start requests of the spider, and works

# similarly to the process_spider_output() method, except

# that it doesn’t have a response associated.

print('process_start_requests 开始执行了')

# Must return only requests (not items).

for r in start_requests:

yield r

def spider_opened(self, spider):

print('spider_opened 开始执行了')

spider.logger.info('Spider opened: %s' % spider.name)

# DownloaderMiddleware: 可以在请求被发起之前对Request进行处理,设置代理IP或者是请求头中的一些字段。

class NovelspiderDownloaderMiddleware(object):

# Not all methods need to be defined. If a method is not defined,

# scrapy acts as if the downloader middleware does not modify the

# passed objects.

@classmethod

def from_crawler(cls, crawler):

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s

def process_request(self, request, spider):

# Called for each request that goes through the downloader

# middleware.

# Must either:

# - return None: continue processing this request, 继续执行后续中间件的process_request(),直到将request交给downloader下载器进行下载;

# - or return a Response object,如果返回Response对象,后续的中间件以及downloader下载器都不在执行,而是将Response对象返回给引擎。引擎将它交给Spider进行解析。

# - or return a Request object,一般不会返回Request对象,将这个对象又存入了调度器,调度器会对返回的request进行重新调度。

# - or raise IgnoreRequest: process_exception() methods of

# installed downloader middleware will be called

return None

def process_response(self, request, response, spider):

# Called with the response returned from the downloader.

# Must either;

# - return a Response object,继续执行后续中间件的process_response()函数,最终返回给引擎;

# - return a Request object,终止中间件的执行,会重新调度这个request;

# - or raise IgnoreRequest

return response

def process_exception(self, request, exception, spider):

# Called when a download handler or a process_request()

# (from other downloader middleware) raises an exception.

# Must either:

# - return None: continue processing this exception

# - return a Response object: stops process_exception() chain

# - return a Request object: stops process_exception() chain

pass

def spider_opened(self, spider):

spider.logger.info('Spider opened: %s' % spider.name)

class UserAgentMiddlewares(object):

"""

自定义一个UserAgent的下载中间件。

"""

def __init__(self, user_agent_type):

self.ua = UserAgent()

self.user_agent_type = user_agent_type

@classmethod

def from_crawler(cls, crawler):

obj = cls(

user_agent_type=crawler.settings.get('USER_AEGNT_TYPE', 'random')

)

return obj

def get_user_agent(self):

# getattr():通过self.ua调用self.user_agent_type

user_agent = getattr(self.ua, self.user_agent_type)

return user_agent

def get_proxy(self):

#自己开启代理池

return requests.get('http://localhost:5010/get/').text

def process_request(self, request, spider):

# 设置随机的User-Agent

request.headers.setdefault(b'User-Agent', self.get_user_agent())

# 设置代理IP

request['proxy'] = 'http://' + self.get_proxy()

return None下载图片源码:

如果自定义保存图片,在pipeline中设置如下代码(注意:settings配置)

from scrapy.http import Request

#导入源码ImagesPipelines,继承

from scrapy.pipelines.images import ImagesPipeline

class CustomImagesPipeline(ImagesPipeline):

def get_media_requests(self, item, info):

# 从item中获取要下载图片的url,根据url构造Request()对象,并返回该对象

image_url = item['img_url'][0]

yield Request(image_url, meta={'item': item})

def file_path(self, request, response=None, info=None):

# 用来自定义图片的下载路径

item = request.meta['item']

url = item['img_url'][0].split('/')[5]

return '%s.jpg'%url

def item_completed(self, results, item, info):

# 图片下载完成后,返回的结果results

print(results)

return item

#注意:如果后面还需要用到item,则必须返回(return),供后面可以使用

保存文件(主要:文档,下载文档等,注意:类比图片的保存!)源码:

如果自定义保存文件,需要在pipelines.py中设置如下代码(注意:settings.py的配置):

from scrapy.http import Request

#导入源码的FilePipeline,继承

from scrapy.pipelines.files import FilesPipeline

class CustomFilesPipeline(FilesPipeline):

def get_media_requests(self, item, info):

download_url = item['download_url'][0]

download_url = download_url.replace("'",'')

print(download_url)

yield Request(download_url, meta={'item':item})

def file_path(self, request, response=None, info=None):

item = request.meta['item']

#创建sort_name文件,在里面保存novel_name文件

return '%s/%s' % (item['sort'],item['novel_name'])

def item_completed(self, results, item, info):

print(results)

return item组件middlewares.py详解与自定义中间件(已备注和含有自定义):

# -*- coding: utf-8 -*-

# Define here the models for your spider middleware

#

# See documentation in:

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

from scrapy import signals

from fake_useragent import UserAgent

import requests, logging

class NovelspiderSpiderMiddleware(object):

# Not all methods need to be defined. If a method is not defined,

# scrapy acts as if the spider middleware does not modify the

# passed objects.

def __init__(self):

self.logger = logging.getLogger('NovelspiderSpiderMiddleware')

@classmethod

def from_crawler(cls, crawler):

print('from_crawler 开始执行了')

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s

def process_spider_input(self, response, spider):

# Called for each response that goes through the spider

# middleware and into the spider.

# 对应的是流程图中的第6步,在response对象交给Spider爬虫进行解析前,可以对response进行处理。

# 只能返回None或者抛出一个异常。。。

# print('process_spider_input 开始执行了')

self.logger.debug('process_spider_input 开始执行了')

# if item['id'] in set():

# raise DropItem()

# Should return None or raise an exception.

return None

def process_spider_output(self, response, result, spider):

# Called with the results returned from the Spider, after

# it has processed the response.

# 对应流程图中的第7步,可以从response返回的结果中,对后续的item和request进行处理。

# 必须返回Request或者Item对象

# print('process_spider_output 开始执行了')

self.logger.debug('process_spider_output 开始执行了')

# Must return an iterable of Request, dict or Item objects.

for i in result:

yield i

def process_spider_exception(self, response, exception, spider):

# Called when a spider or process_spider_input() method

# (from other spider middleware) raises an exception.

# Should return either None or an iterable of Response, dict

# or Item objects.

pass

def process_start_requests(self, start_requests, spider):

# Called with the start requests of the spider, and works

# similarly to the process_spider_output() method, except

# that it doesn’t have a response associated.

print('process_start_requests 开始执行了')

# Must return only requests (not items).

for r in start_requests:

yield r

def spider_opened(self, spider):

print('spider_opened 开始执行了')

spider.logger.info('Spider opened: %s' % spider.name)

# DownloaderMiddleware: 可以在请求被发起之前对Request进行处理,设置代理IP或者是请求头中的一些字段。

class NovelspiderDownloaderMiddleware(object):

# Not all methods need to be defined. If a method is not defined,

# scrapy acts as if the downloader middleware does not modify the

# passed objects.

@classmethod

def from_crawler(cls, crawler):

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s

def process_request(self, request, spider):

# Called for each request that goes through the downloader

# middleware.

# Must either:

# - return None: continue processing this request, 继续执行后续中间件的process_request(),直到将request交给downloader下载器进行下载;

# - or return a Response object,如果返回Response对象,后续的中间件以及downloader下载器都不在执行,而是将Response对象返回给引擎。引擎将它交给Spider进行解析。

# - or return a Request object,一般不会返回Request对象,将这个对象又存入了调度器,调度器会对返回的request进行重新调度。

# - or raise IgnoreRequest: process_exception() methods of

# installed downloader middleware will be called

return None

def process_response(self, request, response, spider):

# Called with the response returned from the downloader.

# Must either;

# - return a Response object,继续执行后续中间件的process_response()函数,最终返回给引擎;

# - return a Request object,终止中间件的执行,会重新调度这个request;

# - or raise IgnoreRequest

return response

def process_exception(self, request, exception, spider):

# Called when a download handler or a process_request()

# (from other downloader middleware) raises an exception.

# Must either:

# - return None: continue processing this exception

# - return a Response object: stops process_exception() chain

# - return a Request object: stops process_exception() chain

pass

def spider_opened(self, spider):

spider.logger.info('Spider opened: %s' % spider.name)

#######################################################################################

#以下自定义部分:

class UserAgentMiddlewares(object):

"""

自定义一个UserAgent的下载中间件。

"""

def __init__(self, user_agent_type):

self.ua = UserAgent()

self.user_agent_type = user_agent_type

@classmethod

def from_crawler(cls, crawler):

obj = cls(

user_agent_type=crawler.settings.get('USER_AEGNT_TYPE', 'random')

)

return obj

def get_user_agent(self):

# getattr():通过self.ua调用self.user_agent_type

user_agent = getattr(self.ua, self.user_agent_type)

return user_agent

def get_proxy(self):

return requests.get('http://localhost:5010/get/').text

def process_request(self, request, spider):

# 设置随机的User-Agent

request.headers.setdefault(b'User-Agent', self.get_user_agent())

# 设置代理IP

request['proxy'] = 'http://' + self.get_proxy()

return None