Preparation

Node preparation

The nodes used in this deployment are listed below:

| IP | Hostname | Roles |
|---|---|---|
| 192.168.2.131 | pinpointNode1 | HBase master; NameNode; Pinpoint collector; nginx (proxies the Pinpoint collectors) |
| 192.168.2.132 | pinpointNode2 | HBase slave; DataNode; Pinpoint collector; Pinpoint web |
| 192.168.2.133 | pinpointNode3 | HBase slave; DataNode; Pinpoint collector |

The Pinpoint cluster depends on an HBase cluster, so the HBase cluster (HBase + ZooKeeper + Hadoop) must be set up first.

Install Java

Installation

cd /home/vagrant

curl -OL http://files.saas.hand-china.com/hitoa/1.0.0/jdk-8u112-linux-x64.tar.gz

tar -xzvf jdk-8u112-linux-x64.tar.gz

Configure environment variables

vim /etc/profile

Append the following to the end of /etc/profile:

export JAVA_HOME=/home/vagrant/jdk1.8.0_112

export JRE_HOME=$JAVA_HOME/jre

export PATH=$JAVA_HOME/bin:$JRE_HOME/bin:$PATH

Apply the configuration:

source /etc/profile

Verify:

java -version

Output similar to the following indicates a successful installation:

java version "1.8.0_112"

Java(TM) SE Runtime Environment (build 1.8.0_112-b15)

Java HotSpot(TM) 64-Bit Server VM (build 25.112-b15, mixed mode)

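Configure hosts mappings

Add the hosts mappings on each of the three nodes:

vim /etc/hosts

After the change, the file looks like this:

# Comment out the following entry on each node (it maps the node's own hostname to 127.0.0.1); otherwise errors occur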

#127.0.0.1 pinpointNode1 pinpointNode1

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

# host IP  hostname

192.168.2.131 pinpointNode1

192.168.2.132 pinpointNode2

192.168.2.133 pinpointNode3
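The mapping above can be checked on each node with a small script. This is a minimal sketch, not part of the original procedure: the function name `check_hosts` and the `HOSTS_FILE` variable are made up for illustration, and the node list is copied from the table at the top of this guide.

```shell
#!/usr/bin/env bash
# Check that a hosts file maps every cluster hostname to the expected IP.
# HOSTS_FILE is a hypothetical override; the default is /etc/hosts.

check_hosts() {
    local hosts_file="$1" rc=0 ip name
    while read -r ip name; do
        # Look for a non-comment line whose first field is the IP and
        # which lists the hostname among the remaining fields.
        if awk -v ip="$ip" -v name="$name" '
                $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
                END { exit (found ? 0 : 1) }' "$hosts_file" 2>/dev/null; then
            echo "ok      $name -> $ip"
        else
            echo "missing $name -> $ip"
            rc=1
        fi
    done <<'EOF'
192.168.2.131 pinpointNode1
192.168.2.132 pinpointNode2
192.168.2.133 pinpointNode3
EOF
    return "$rc"
}

check_hosts "${HOSTS_FILE:-/etc/hosts}" || echo "fix the entries marked missing"
```

Commented-out lines are ignored because their first field starts with `#`, so an entry that was disabled by mistake shows up as missing.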

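Configure passwordless login between cluster nodes

CentOS ships with ssh installed by default; if it is missing, install it first.

The cluster is operated over passwordless ssh: each machine must be able to log in to itself without a password, and the master and each slave must be able to log in to each other without a password in both directions. Passwordless login between slaves is optional.

Passwordless login to the local machine

The steps below configure passwordless local login on pinpointNode1; repeat them on the other two nodes.

1) Generate the key pair

ssh-keygen -t rsa

2) Append the public key to the "authorized_keys" file

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

3) Set the permissions

chmod 600 ~/.ssh/authorized_keys

4) Verify that the local machine can be reached without a password

ssh pinpointNode1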

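Passwordless login from the master to the slaves

Append the public key of the master node pinpointNode1 to the authorized_keys file on pinpointNode2 and pinpointNode3.

Then test from pinpointNode1: ssh pinpointNode2, ssh pinpointNode3.

The first login may ask you to confirm with yes; after that, login is direct.

Passwordless login from the slaves to the master

Append the contents of each slave's public key id_rsa.pub to the authorized_keys file on the master.

Then test from each slave: ssh pinpointNode1.

The first login may ask you to confirm with yes; after that, login is direct.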
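Copying the keys by hand can be replaced with `ssh-copy-id`, which ships with OpenSSH. The following is a sketch under a few assumptions: the login user is `vagrant` (as in the prompts of this guide), the key is `~/.ssh/id_rsa.pub`, and the helper names `run` and `distribute_key` as well as the `DRY_RUN` and `PEERS` variables are invented for this example. By default it only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: distribute the current user's public key to the other nodes.
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to run them.
DRY_RUN="${DRY_RUN:-1}"
# On pinpointNode1 the peers are the two slaves; on a slave use PEERS=pinpointNode1.
PEERS="${PEERS:-pinpointNode2 pinpointNode3}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

distribute_key() {
    local host
    # With the default StrictModes setting, sshd rejects keys when ~/.ssh or
    # authorized_keys is writable by group or others.
    run chmod 700 "$HOME/.ssh"
    run chmod 600 "$HOME/.ssh/authorized_keys"
    for host in $PEERS; do
        # ssh-copy-id appends the public key to the remote authorized_keys file.
        run ssh-copy-id -i "$HOME/.ssh/id_rsa.pub" "vagrant@$host"
        # BatchMode makes ssh fail instead of prompting if key login does not work.
        run ssh -o BatchMode=yes "vagrant@$host" hostname
    done
}

distribute_key
```

Running it once on the master and once on each slave (with `PEERS=pinpointNode1`) covers both directions described above.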

Configure the ZooKeeper cluster

Log in to the master node pinpointNode1

Extract the package

tar -xzvf zookeeper-3.4.9.tar.gz

Edit the configuration file

[vagrant@pinpointNode1 ~]$ cd zookeeper-3.4.9/

[vagrant@pinpointNode1 zookeeper-3.4.9]$ mkdir data

[vagrant@pinpointNode1 zookeeper-3.4.9]$ mkdir logs

Edit the configuration:

[vagrant@pinpointNode1 zookeeper-3.4.9]$ vi conf/zoo.cfg

Add the following:

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/home/vagrant/zookeeper-3.4.9/data

dataLogDir=/home/vagrant/zookeeper-3.4.9/logs

autopurge.snapRetainCount=20

autopurge.purgeInterval=48

server.1=192.168.2.131:2888:3888

server.2=192.168.2.132:2888:3888

server.3=192.168.2.133:2888:3888

# the port at which the clients will connect

clientPort=2181

maxSessionTimeout=200000

Create a file named myid in the data directory and write the number 1 into it.

[vagrant@pinpointNode1 zookeeper-3.4.9]$ cd data

[vagrant@pinpointNode1 data]$ vi myid

1

Copy the configured ZooKeeper directory to the slave nodes pinpointNode2 and pinpointNode3.

[vagrant@pinpointNode1]$ scp -r zookeeper-3.4.9 pinpointNode2:/home/vagrant/

[vagrant@pinpointNode1]$ scp -r zookeeper-3.4.9 pinpointNode3:/home/vagrant/

Change myid on the slave nodes:

Log in to pinpointNode2, change to ZooKeeper's data directory, and run echo 2 > myid; log in to pinpointNode3, change to ZooKeeper's data directory, and run echo 3 > myid.
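The myid value has to match the N of the node's own `server.N=` line in zoo.cfg. Instead of typing the number on each node, it can be derived from the configuration. A minimal sketch, with the function names `zk_myid_for_ip` and `write_myid` chosen only for this example:

```shell
#!/usr/bin/env bash
# Derive a node's myid from the server.N entries in zoo.cfg.

zk_myid_for_ip() {
    # $1: path to zoo.cfg, $2: this node's IP
    # A line looks like server.N=IP:peerPort:electionPort; print N for the matching IP.
    awk -F= -v ip="$2" '
        $1 ~ /^server\.[0-9]+$/ {
            split($2, addr, ":")
            if (addr[1] == ip) { sub(/^server\./, "", $1); print $1 }
        }' "$1"
}

write_myid() {
    # Writes the derived id into <dataDir>/myid, where dataDir is read from zoo.cfg.
    local cfg="$1" ip="$2" id data_dir
    id="$(zk_myid_for_ip "$cfg" "$ip")"
    if [ -z "$id" ]; then
        echo "no server.N entry for $ip in $cfg" >&2
        return 1
    fi
    data_dir="$(awk -F= '$1 == "dataDir" { print $2 }' "$cfg")"
    echo "$id" > "$data_dir/myid"
}

# Example (run on pinpointNode2):
#   write_myid /home/vagrant/zookeeper-3.4.9/conf/zoo.cfg 192.168.2.132
```

With the zoo.cfg from this guide, the call in the comment would write 2 into /home/vagrant/zookeeper-3.4.9/data/myid.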

Start ZooKeeper

Start ZooKeeper on each node:

[vagrant@pinpointNode1 zookeeper-3.4.9]$ ./bin/zkServer.sh start

[vagrant@pinpointNode2 zookeeper-3.4.9]$ ./bin/zkServer.sh start

[vagrant@pinpointNode3 zookeeper-3.4.9]$ ./bin/zkServer.sh start

Check ZooKeeper status

[vagrant@pinpointNode1 zookeeper-3.4.9]$ ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /home/vagrant/zookeeper-3.4.9/bin/../conf/zoo.cfg

Mode: follower

[vagrant@pinpointNode2 zookeeper-3.4.9]$ ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /home/vagrant/zookeeper-3.4.9/bin/../conf/zoo.cfg

Mode: leader

[vagrant@pinpointNode3 zookeeper-3.4.9]$ ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /home/vagrant/zookeeper-3.4.9/bin/../conf/zoo.cfg

Mode: follower
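A healthy three-node ensemble reports exactly one leader and two followers, as in the output above. The check can be scripted by filtering the `Mode:` line. This is a sketch; `zk_mode` and `summarize_quorum` are hypothetical helper names, and the ssh loop in the comment assumes the passwordless login configured earlier.

```shell
#!/usr/bin/env bash
# Summarize the modes reported by `zkServer.sh status` across the ensemble.

zk_mode() {
    # stdin: output of `zkServer.sh status`; prints the value of the "Mode:" line.
    awk -F': ' '$1 == "Mode" { print $2 }'
}

summarize_quorum() {
    # stdin: one mode per line; succeeds only when exactly one leader was seen.
    awk '$1 == "leader"   { leaders++ }
         $1 == "follower" { followers++ }
         END {
             printf "leaders=%d followers=%d\n", leaders, followers
             exit (leaders == 1 ? 0 : 1)
         }'
}

# Example (run on any node):
#   for h in pinpointNode1 pinpointNode2 pinpointNode3; do
#       ssh "$h" /home/vagrant/zookeeper-3.4.9/bin/zkServer.sh status 2>/dev/null | zk_mode
#   done | summarize_quorum
```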

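After running for a while, ZooKeeper accumulates a large number of log files. They can fill the disk and bring every process on the machine to a halt, so they must be cleaned up regularly. The autopurge.snapRetainCount and autopurge.purgeInterval settings in zoo.cfg above already purge old snapshots and transaction logs (every 48 hours, keeping the 20 most recent snapshots); a procedure for any remaining log files is still to be written up.

Install the Hadoop cluster

Configure Hadoop on pinpointNode1

Extract

[vagrant@pinpointNode1 ~]$ tar -xzvf hadoop-2.6.5.tar.gz

Configure environment variables

Edit /etc/profile (vi /etc/profile) and add: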

export HADOOP_HOME=/home/vagrant/hadoop-2.6.5

export PATH=$PATH:$HADOOP_HOME/bin

Make the hadoop command available in the current terminal immediately:

source /etc/profile

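Change the Hadoop configuration

All files configured below are located in /home/vagrant/hadoop-2.6.5/etc/hadoop.

Configure core-site.xml

Create the directory /home/vagrant/hadoop-2.6.5/tmp: mkdir -p /home/vagrant/hadoop-2.6.5/tmp. Then change the configuration as follows:

    <configuration>
        <property>
            <name>hadoop.tmp.dir</name>
            <value>file:/home/vagrant/hadoop-2.6.5/tmp</value>
            <description>A base for other temporary directories.</description>
        </property>
        <property>
            <name>fs.defaultFS</name>
            <value>hdfs://192.168.2.131:9000</value>
        </property>
    </configuration>

Important: if hadoop.tmp.dir is not set, the default temporary directory is /tmp/hadoop-${user.name}. That directory is wiped on every reboot, after which the NameNode must be formatted again or errors occur.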
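Because a missing hadoop.tmp.dir only shows up after a reboot, it can be worth checking the file right after editing it. The sketch below reads a property from a Hadoop site file with awk; it assumes each `<name>` and `<value>` element sits on a single line, and the function names `site_property` and `check_tmp_dir` are invented for this example. On a node with Hadoop on the PATH, `hdfs getconf -confKey hadoop.tmp.dir` reports the effective value instead.

```shell
#!/usr/bin/env bash
# Read a property from a Hadoop *-site.xml file (line-oriented sketch, not a full XML parser).

site_property() {
    # $1: site file, $2: property name; prints the <value> that follows the matching <name>.
    awk -v want="$2" '
        /<name>/ { name = $0; gsub(/.*<name>|<\/name>.*/, "", name) }
        /<value>/ && name == want {
            value = $0; gsub(/.*<value>|<\/value>.*/, "", value)
            print value; exit
        }' "$1"
}

check_tmp_dir() {
    local value
    value="$(site_property "$1" hadoop.tmp.dir)"
    if [ -z "$value" ]; then
        echo "hadoop.tmp.dir is not set; the default under /tmp will be used"
        return 1
    fi
    echo "hadoop.tmp.dir=$value"
}

# Example:
#   check_tmp_dir /home/vagrant/hadoop-2.6.5/etc/hadoop/core-site.xml
```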

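Configure hdfs-site.xml

Create the directories:

mkdir -p /home/vagrant/hadoop-2.6.5/hdfs/name

mkdir -p /home/vagrant/hadoop-2.6.5/hdfs/data

Change the configuration:

    <configuration>
        <property>
            <name>dfs.replication</name>
            <value>2</value>
        </property>
        <property>
            <name>dfs.name.dir</name>
            <value>file:/home/vagrant/hadoop-2.6.5/hdfs/name</value>
        </property>
        <property>
            <name>dfs.data.dir</name>
            <value>file:/home/vagrant/hadoop-2.6.5/hdfs/data</value>
        </property>
    </configuration>

dfs.replication is the number of replicas kept for each block; it should generally not exceed the number of DataNodes.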
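The rule about dfs.replication can be checked against the slaves file, which lists one DataNode per line. A minimal sketch; `count_datanodes` and `check_replication` are hypothetical helper names:

```shell
#!/usr/bin/env bash
# Compare a replication factor with the number of DataNodes listed in the slaves file.

count_datanodes() {
    # Count non-empty, non-comment lines in the slaves file.
    awk 'NF && $1 !~ /^#/ { n++ } END { print n + 0 }' "$1"
}

check_replication() {
    # $1: intended dfs.replication value, $2: path to the slaves file
    local replication="$1" datanodes
    datanodes="$(count_datanodes "$2")"
    if [ "$replication" -gt "$datanodes" ]; then
        echo "dfs.replication=$replication exceeds $datanodes DataNode(s)"
        return 1
    fi
    echo "dfs.replication=$replication is fine for $datanodes DataNode(s)"
}

# Example:
#   check_replication 2 /home/vagrant/hadoop-2.6.5/etc/hadoop/slaves
```

With the two DataNodes of this cluster, a value of 2 passes and a value of 3 is flagged.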

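Configure mapred-site.xml

Copy mapred-site.xml.template to mapred-site.xml, then edit it:

cp /home/vagrant/hadoop-2.6.5/etc/hadoop/mapred-site.xml.template /home/vagrant/hadoop-2.6.5/etc/hadoop/mapred-site.xml

vi /home/vagrant/hadoop-2.6.5/etc/hadoop/mapred-site.xml

    <configuration>
        <property>
            <name>mapreduce.framework.name</name>
            <value>yarn</value>
        </property>
        <property>
            <name>mapred.job.tracker</name>
            <value>192.168.2.131:9001</value>
        </property>
    </configuration>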

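Configure yarn-site.xml

vim /home/vagrant/hadoop-2.6.5/etc/hadoop/yarn-site.xml

    <configuration>
        <property>
            <name>yarn.nodemanager.aux-services</name>
            <value>mapreduce_shuffle</value>
        </property>
        <property>
            <name>yarn.resourcemanager.hostname</name>
            <value>192.168.2.131</value>
        </property>
    </configuration>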

Edit the slaves file

Edit /home/vagrant/hadoop-2.6.5/etc/hadoop/slaves, which specifies which servers are DataNodes. Remove localhost and add the hostname or IP address of every DataNode, as shown below:

192.168.2.132

192.168.2.133

Edit hadoop-env.sh

vi /home/vagrant/hadoop-2.6.5/etc/hadoop/hadoop-env.sh

Add the JDK path; if the JDK path differs between servers, adjust it on each one:

export JAVA_HOME=/home/vagrant/jdk1.8.0_112

Configure Hadoop on the slave nodes

On pinpointNode1, copy the configured Hadoop directory to pinpointNode2 and pinpointNode3:

scp -r /home/vagrant/hadoop-2.6.5 pinpointNode2:/home/vagrant/

scp -r /home/vagrant/hadoop-2.6.5 pinpointNode3:/home/vagrant/

Add the environment variables on each slave node:

vi /etc/profile

## Contents

export HADOOP_HOME=/home/vagrant/hadoop-2.6.5

export PATH=$PATH:$HADOOP_HOME/bin

Make the hadoop command available in the current terminal immediately:

source /etc/profile

Install the HBase cluster

Extract the package

[vagrant@pinpointNode1 ~]$ tar -xzvf hbase-1.2.4-bin.tar.gz

Change the configuration

The configuration files are in /home/vagrant/hbase-1.2.4/conf.

hbase-env.sh

vi hbase-env.sh

Change the following settings:

export JAVA_HOME=/home/vagrant/jdk1.8.0_112 # adjust per node if the JDK path differs

export HBASE_CLASSPATH=/home/vagrant/hadoop-2.6.5/etc/hadoop # lets HBase find the Hadoop configuration

export HBASE_MANAGES_ZK=false # use the external ZooKeeper

hbase-site.xml

vi hbase-site.xml

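    <configuration>
        <property>
            <name>hbase.rootdir</name>
            <value>hdfs://192.168.2.131:9000/hbase</value>
        </property>
        <property>
            <name>hbase.master</name>
            <value>192.168.2.131</value>
        </property>
        <property>
            <name>hbase.cluster.distributed</name>
            <value>true</value>
        </property>
        <property>
            <name>hbase.zookeeper.quorum</name>
            <value>192.168.2.131,192.168.2.132,192.168.2.133</value>
        </property>
        <property>
            <name>hbase.zookeeper.property.clientPort</name>
            <value>2181</value>
        </property>
        <property>
            <name>zookeeper.session.timeout</name>
            <value>200000</value>
        </property>
        <property>
            <name>dfs.support.append</name>
            <value>true</value>
        </property>
    </configuration>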

regionservers

vi regionservers

Add the slave nodes to the regionservers file:

192.168.2.132

192.168.2.133

Distribute the installation

Copy the entire HBase installation directory to every slave node:

scp -r /home/vagrant/hbase-1.2.4 pinpointNode2:/home/vagrant/

scp -r /home/vagrant/hbase-1.2.4 pinpointNode3:/home/vagrant/

Start the cluster

Start ZooKeeper

Run the following command on every node to start ZooKeeper:

/home/vagrant/zookeeper-3.4.9/bin/zkServer.sh start

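Start Hadoop

On the master, change to /home/vagrant/hadoop-2.6.5 and run:

./bin/hadoop namenode -format

This formats the NameNode. Run it only before the first start; it is not needed afterwards.

Then start Hadoop:

Run the following command on the master node: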

/home/vagrant/hadoop-2.6.5/sbin/start-all.sh

Start HBase

Run the following command on the master node:

/home/vagrant/hbase-1.2.4/bin/start-hbase.sh
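The start order matters: ZooKeeper first, then Hadoop, then HBase. The sequence can be captured in one script run from the master. This is a sketch under assumptions: it relies on the passwordless ssh set up earlier, it assumes java can be resolved in non-interactive ssh sessions (which do not necessarily read /etc/profile), and `run`, `start_cluster`, `DRY_RUN`, and `NODES` are names made up for this example. By default it only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: start the stack in dependency order from the master node.
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to run them.
DRY_RUN="${DRY_RUN:-1}"
NODES="${NODES:-pinpointNode1 pinpointNode2 pinpointNode3}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

start_cluster() {
    local node
    # 1. ZooKeeper on every node.
    for node in $NODES; do
        run ssh "$node" /home/vagrant/zookeeper-3.4.9/bin/zkServer.sh start
    done
    # 2. HDFS and YARN, started from the master.
    run /home/vagrant/hadoop-2.6.5/sbin/start-all.sh
    # 3. HBase, which needs both ZooKeeper and HDFS.
    run /home/vagrant/hbase-1.2.4/bin/start-hbase.sh
}

start_cluster
```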

Process list

Once the cluster has started successfully, check the processes:

Master node

[vagrant@pinpointNode1 ~]$ jps

10929 QuorumPeerMain

18562 Jps

17033 NameNode

17356 ResourceManager

17756 HMaster

17213 SecondaryNameNode

Slave nodes

[vagrant@pinpointNode2 ~]$ jps

9955 DataNode

11076 HRegionServer

8309 QuorumPeerMain

11592 Jps

10059 NodeManager

[vagrant@pinpointNode3 ~]$ jps

10608 Jps

9878 NodeManager

10087 HRegionServer

8189 QuorumPeerMain

9774 DataNode

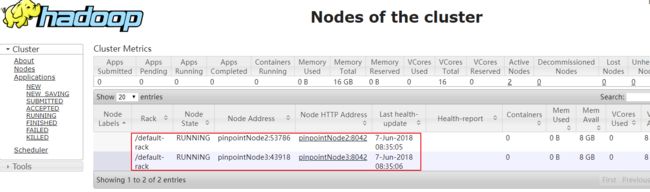

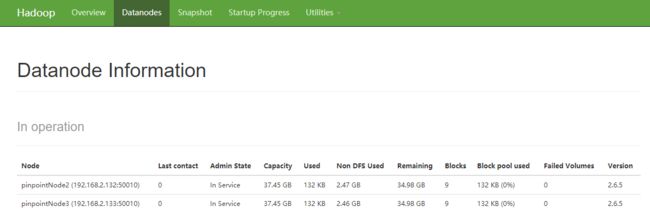
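Comparing the jps output with the expected process list can be automated. The sketch below prints the daemons that are missing; `missing_daemons` is a hypothetical helper name, and the expected names are taken from the jps listings above.

```shell
#!/usr/bin/env bash
# Report which expected daemons do not appear in a jps listing.

missing_daemons() {
    # $1: jps output ("<pid> <name>" per line); remaining arguments: expected daemon names.
    local jps_out="$1" name
    shift
    for name in "$@"; do
        echo "$jps_out" |
            awk -v n="$name" '$2 == n { found = 1 } END { exit (found ? 0 : 1) }' ||
            echo "$name"
    done
}

# On the master:
#   missing_daemons "$(jps)" QuorumPeerMain NameNode SecondaryNameNode ResourceManager HMaster
# On a slave:
#   missing_daemons "$(jps)" QuorumPeerMain DataNode NodeManager HRegionServer
```

Empty output means every expected process is running.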

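Check status in the web UIs

Hadoop

Open http://192.168.2.131:8088/cluster/nodes

HBase

Open http://192.168.2.131:16010/master-status

HDFS

Open http://192.168.2.131:50070/dfshealth.html#tab-overview

Initialize the Pinpoint table schema

First make sure HBase is running normally, then run the following command on the master node:

[vagrant@pinpointNode1 ~]$ ./hbase-1.2.4/bin/hbase shell ./hbase-create.hbase

After successful initialization, 16 tables exist in HBase.

Install the Pinpoint collector

This setup runs a Pinpoint collector cluster, so a collector is installed on all three nodes. The installation steps are described in detail below.

Both the Pinpoint Collector and Pinpoint Web are deployed on Tomcat, so download Tomcat first.

Extract Tomcat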

[vagrant@pinpointNode1 ~]$ unzip apache-tomcat-8.5.14.zip

[vagrant@pinpointNode1 ~]$ mv apache-tomcat-8.5.14 pinpoint-collector-1.6.2

[vagrant@pinpointNode1 ~]$ cd pinpoint-collector-1.6.2/webapps

[vagrant@pinpointNode1 webapps]$ rm -rf *

[vagrant@pinpointNode1 webapps]$ unzip ~/pinpoint-collector-1.6.2.war -d ROOT

Edit the configuration files

Edit server.xml

To avoid port conflicts, change the Tomcat port settings:

[vagrant@pinpointNode1 ~]$ cat pinpoint-collector-1.6.2/conf/server.xml

Edit hbase.properties

Only hbase.client.host and hbase.client.port need to be changed in this file; the file should be identical on every collector node:

[vagrant@pinpointNode1 ~]$ cat pinpoint-collector-1.6.2/webapps/ROOT/WEB-INF/classes/hbase.properties

# IP addresses of the ZooKeeper cluster servers

hbase.client.host=192.168.2.131,192.168.2.132,192.168.2.133

# ZooKeeper port

hbase.client.port=2181

# hbase default:/hbase

hbase.zookeeper.znode.parent=/hbase

# hbase timeout option==================================================================================

# hbase default:true

hbase.ipc.client.tcpnodelay=true

# hbase default:60000

hbase.rpc.timeout=10000

# hbase default:Integer.MAX_VALUE

hbase.client.operation.timeout=10000

# hbase socket read timeout. default: 200000

hbase.ipc.client.socket.timeout.read=20000

# socket write timeout. hbase default: 600000

hbase.ipc.client.socket.timeout.write=60000

# ==================================================================================

# hbase client thread pool option

hbase.client.thread.max=64

hbase.client.threadPool.queueSize=5120

# prestartAllCoreThreads

hbase.client.threadPool.prestart=false

# enable hbase async operation. default: false

hbase.client.async.enable=false

# the max number of the buffered asyncPut ops for each region. default:10000

hbase.client.async.in.queuesize=10000

# periodic asyncPut ops flush time. default:100

hbase.client.async.flush.period.ms=100

# the max number of the retry attempts before dropping the request. default:10

hbase.client.async.max.retries.in.queue=10

Edit pinpoint-collector.properties

Change the following parameters in this file. On the other two collector nodes, replace the collector-related IP addresses below with each node's own IP:

# IP address of this collector node

collector.tcpListenIp=192.168.2.131

# TCP port the collector listens on (default 9994)

collector.tcpListenPort=9994

# IP address of this collector node

collector.udpStatListenIp=192.168.2.131

# UDP port the collector listens on for stat data (default 9995)

collector.udpStatListenPort=9995

# IP address of this collector node

collector.udpSpanListenIp=192.168.2.131

# UDP port the collector listens on for span data (default 9996)

collector.udpSpanListenPort=9996

# A collector cluster is being deployed, so set this to true

cluster.enable=true

# ZooKeeper cluster addresses

cluster.zookeeper.address=192.168.2.131,192.168.2.132,192.168.2.133

cluster.zookeeper.sessiontimeout=30000

# IP address of this collector node

cluster.listen.ip=192.168.2.131

# Port the collector listens on for cluster connections

cluster.listen.port=9090

[vagrant@pinpointNode1 ~]$ cat pinpoint-collector-1.6.2/webapps/ROOT/WEB-INF/classes/pinpoint-collector.properties

# tcp listen ip and port

collector.tcpListenIp=192.168.2.131

collector.tcpListenPort=9994

# number of tcp worker threads

collector.tcpWorkerThread=8

# capacity of tcp worker queue

collector.tcpWorkerQueueSize=1024

# monitoring for tcp worker

collector.tcpWorker.monitor=true

# udp listen ip and port

collector.udpStatListenIp=192.168.2.131

collector.udpStatListenPort=9995

# configure l4 ip address to ignore health check logs

collector.l4.ip=

# number of udp statworker threads

collector.udpStatWorkerThread=8

# capacity of udp statworker queue

collector.udpStatWorkerQueueSize=64

# monitoring for udp stat worker

collector.udpStatWorker.monitor=true

collector.udpStatSocketReceiveBufferSize=4194304

# span listen port ---------------------------------------------------------------------

collector.udpSpanListenIp=192.168.2.131

collector.udpSpanListenPort=9996

# type of udp spanworker type

#collector.udpSpanWorkerType=DEFAULT_EXECUTOR

# number of udp spanworker threads

collector.udpSpanWorkerThread=32

# capacity of udp spanworker queue

collector.udpSpanWorkerQueueSize=256

# monitoring for udp span worker

collector.udpSpanWorker.monitor=true

collector.udpSpanSocketReceiveBufferSize=4194304

# change OS level read/write socket buffer size (for linux)

#sudo sysctl -w net.core.rmem_max=

#sudo sysctl -w net.core.wmem_max=

# check current values using:

#$ /sbin/sysctl -a | grep -e rmem -e wmem

# number of agent event worker threads

collector.agentEventWorker.threadSize=4

# capacity of agent event worker queue

collector.agentEventWorker.queueSize=1024

statistics.flushPeriod=1000

# -------------------------------------------------------------------------------------------------

# The cluster related options are used to establish connections between the agent, collector, and web in order to send/receive data between them in real time.

# You may enable additional features using this option (Ex : RealTime Active Thread Chart).

# -------------------------------------------------------------------------------------------------

# Usage : Set the following options for collector/web components that reside in the same cluster in order to enable this feature.

# 1. cluster.enable (pinpoint-web.properties, pinpoint-collector.properties) - "true" to enable

# 2. cluster.zookeeper.address (pinpoint-web.properties, pinpoint-collector.properties) - address of the ZooKeeper instance that will be used to manage the cluster

# 3. cluster.web.tcp.port (pinpoint-web.properties) - any available port number (used to establish connection between web and collector)

# -------------------------------------------------------------------------------------------------

# Please be aware of the following:

#1. If the network between web, collector, and the agents are not stable, it is advisable not to use this feature.

#2. We recommend using the cluster.web.tcp.port option. However, in cases where the collector is unable to establish connection to the web, you may reverse this and make the web establish connection to the collector.

# In this case, you must set cluster.connect.address (pinpoint-web.properties); and cluster.listen.ip, cluster.listen.port (pinpoint-collector.properties) accordingly.

cluster.enable=true

cluster.zookeeper.address=192.168.2.131,192.168.2.132,192.168.2.133

cluster.zookeeper.sessiontimeout=30000

cluster.listen.ip=192.168.2.131

cluster.listen.port=9090

#collector.admin.password=

#collector.admin.api.rest.active=

#collector.admin.api.jmx.active=

collector.spanEvent.sequence.limit=10000

# span.binary format compatibility = v1 or v2 or dualWrite

# span format v2 : https://github.com/naver/pinpoint/issues/1819

collector.span.format.compatibility.version=v2

# stat handling compatibility = v1 or v2 or dualWrite

# AgentStatV2 table : https://github.com/naver/pinpoint/issues/1533

collector.stat.format.compatibility.version=v2
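Since only four IP-valued keys differ between the collector nodes, the per-node copy of the file can be produced with sed instead of editing by hand. A minimal sketch; `set_collector_ip` is a hypothetical helper name, and the key list is taken from the parameters described above.

```shell
#!/usr/bin/env bash
# Rewrite the node-specific IP keys in pinpoint-collector.properties.

set_collector_ip() {
    # $1: properties file, $2: IP address of the node the file is for
    local file="$1" ip="$2" key tmp
    tmp="$(mktemp)"
    for key in collector.tcpListenIp collector.udpStatListenIp \
               collector.udpSpanListenIp cluster.listen.ip; do
        # Replace the whole "key=..." line; all other lines pass through unchanged.
        sed "s|^${key}=.*|${key}=${ip}|" "$file" > "$tmp" && cat "$tmp" > "$file"
    done
    rm -f "$tmp"
}

# Example (run on pinpointNode2):
#   set_collector_ip pinpoint-collector-1.6.2/webapps/ROOT/WEB-INF/classes/pinpoint-collector.properties 192.168.2.132
```

Shared settings such as cluster.zookeeper.address are left untouched, so the same source file can be copied to every node before the function is run there.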

Start the Pinpoint collector

Once the collectors on all three nodes are configured, start them:

[vagrant@pinpointNode1 ~]$ cd pinpoint-collector-1.6.2/bin

[vagrant@pinpointNode1 bin]$ chmod +x catalina.sh shutdown.sh startup.sh

[vagrant@pinpointNode1 bin]$ ./startup.sh

Before starting the Pinpoint Collector, make sure HBase and ZooKeeper are running normally; otherwise it fails with Connection Refused.

After startup, check the collector log:

[vagrant@pinpointNode1 ~]$ cd pinpoint-collector-1.6.2/logs

[vagrant@pinpointNode1 logs]$ tail -f catalina.out

Install Pinpoint web

Extract Tomcat

[vagrant@pinpointNode2 ~]$ unzip apache-tomcat-8.5.14.zip

[vagrant@pinpointNode2 ~]$ mv apache-tomcat-8.5.14 pinpoint-web-1.6.2

[vagrant@pinpointNode2 ~]$ cd pinpoint-web-1.6.2/webapps

[vagrant@pinpointNode2 webapps]$ rm -rf *

[vagrant@pinpointNode2 webapps]$ unzip ~/pinpoint-web-1.6.2.war -d ROOT

Edit the configuration files

Edit server.xml

To avoid port conflicts, change the Tomcat port settings:

The port is set to 19080 here; once Pinpoint web is installed, the web UI is reachable in a browser on this port.

Edit hbase.properties

Change the hbase.client.host and hbase.client.port parameters in this file:

[vagrant@pinpointNode2 ~]$ cat pinpoint-web-1.6.2/webapps/ROOT/WEB-INF/classes/hbase.properties

# IP addresses of the ZooKeeper cluster

hbase.client.host=192.168.2.131,192.168.2.132,192.168.2.133

# ZooKeeper port

hbase.client.port=2181

# hbase default:/hbase

hbase.zookeeper.znode.parent=/hbase

# hbase timeout option==================================================================================

# hbase default:true

hbase.ipc.client.tcpnodelay=true

# hbase default:60000

hbase.rpc.timeout=10000

# hbase default:Integer.MAX_VALUE

hbase.client.operation.timeout=10000

# hbase socket read timeout. default: 200000

hbase.ipc.client.socket.timeout.read=20000

# socket write timeout. hbase default: 600000

hbase.ipc.client.socket.timeout.write=30000

#==================================================================================

# hbase client thread pool option

hbase.client.thread.max=64

hbase.client.threadPool.queueSize=5120

# prestartAllCoreThreads

hbase.client.threadPool.prestart=false

#==================================================================================

# hbase parallel scan options

hbase.client.parallel.scan.enable=true

hbase.client.parallel.scan.maxthreads=64

hbase.client.parallel.scan.maxthreadsperscan=16

Edit pinpoint-web.properties

The modified configuration file looks like this:

[vagrant@pinpointNode2 ~]$ cat pinpoint-web-1.6.2/webapps/ROOT/WEB-INF/classes/pinpoint-web.properties

# -------------------------------------------------------------------------------------------------

# The cluster related options are used to establish connections between the agent, collector, and web in order to send/receive data between them in real time.

# You may enable additional features using this option (Ex : RealTime Active Thread Chart).

# -------------------------------------------------------------------------------------------------

# Usage : Set the following options for collector/web components that reside in the same cluster in order to enable this feature.

# 1. cluster.enable (pinpoint-web.properties, pinpoint-collector.properties) - "true" to enable

# 2. cluster.zookeeper.address (pinpoint-web.properties, pinpoint-collector.properties) - address of the ZooKeeper instance that will be used to manage the cluster

# 3. cluster.web.tcp.port (pinpoint-web.properties) - any available port number (used to establish connection between web and collector)

# -------------------------------------------------------------------------------------------------

# Please be aware of the following:

#1. If the network between web, collector, and the agents are not stable, it is advisable not to use this feature.

#2. We recommend using the cluster.web.tcp.port option. However, in cases where the collector is unable to establish connection to the web, you may reverse this and make the web establish connection to the collector.

# In this case, you must set cluster.connect.address (pinpoint-web.properties); and cluster.listen.ip, cluster.listen.port (pinpoint-collector.properties) accordingly.

cluster.enable=true

# TCP port of Pinpoint web (default 9997)

cluster.web.tcp.port=9997

cluster.zookeeper.address=192.168.2.131,192.168.2.132,192.168.2.133

cluster.zookeeper.sessiontimeout=30000

cluster.zookeeper.retry.interval=60000

# IP:port of each node in the Pinpoint collector cluster

cluster.connect.address=192.168.2.131:9090,192.168.2.132:9090,192.168.2.133:9090

# FIXME - should be removed for proper authentication

admin.password=admin

#log site link (guide url : https://github.com/naver/pinpoint/blob/master/doc/per-request_feature_guide.md)

#log.enable=false

#log.page.url=

#log.button.name=

# Configuration

# Flag to send usage information (button click counts/order) to Google Analytics

# https://github.com/naver/pinpoint/wiki/FAQ#why-do-i-see-ui-send-requests-to-httpwwwgoogle-analyticscomcollect

config.sendUsage=true

config.editUserInfo=true

config.openSource=true

config.show.activeThread=true

config.show.activeThreadDump=true

config.show.inspector.dataSource=true

config.enable.activeThreadDump=true

web.hbase.selectSpans.limit=500

web.hbase.selectAllSpans.limit=500

web.activethread.activeAgent.duration.days=7

# span.binary format compatibility = v1 or v2 or compatibilityMode

# span format v2 : https://github.com/naver/pinpoint/issues/1819

web.span.format.compatibility.version=compatibilityMode

# stat handling compatibility = v1 or v2 or compatibilityMode

# AgentStatV2 table : https://github.com/naver/pinpoint/issues/1533

web.stat.format.compatibility.version=compatibilityMode

Start Pinpoint web

[vagrant@pinpointNode2 ~]$ cd pinpoint-web-1.6.2/bin

[vagrant@pinpointNode2 bin]$ chmod +x catalina.sh shutdown.sh startup.sh

[vagrant@pinpointNode2 bin]$ ./startup.sh

After startup, check the web log:

[vagrant@pinpointNode2 ~]$ cd pinpoint-web-1.6.2/logs

[vagrant@pinpointNode2 logs]$ tail -f catalina.out

After a successful start, open the web UI in a browser: http://192.168.2.132:19080/

Configure nginx as a proxy for the Pinpoint collectors

Edit the nginx configuration file:

[vagrant@pinpointNode1 ~]$ vim /etc/nginx/nginx.conf

Add the following:

    stream {
        proxy_protocol_timeout 120s;

        log_format main '$remote_addr $remote_port - [$time_local] '
                        '$status $bytes_sent $protocol $server_addr $server_port '
                        '$proxy_protocol_addr $proxy_protocol_port';

        access_log /var/log/nginx/access.log main;

        upstream 9994_tcp_upstreams {
            #least_time first_byte;
            server 192.168.2.131:9994 fail_timeout=15s;
            server 192.168.2.132:9994 fail_timeout=15s;
            server 192.168.2.133:9994 fail_timeout=15s;
        }

        upstream 9995_udp_upstreams {
            #least_time first_byte;
            server 192.168.2.131:9995;
            server 192.168.2.132:9995;
            server 192.168.2.133:9995;
        }

        upstream 9996_udp_upstreams {
            #least_time first_byte;
            server 192.168.2.131:9996;
            server 192.168.2.132:9996;
            server 192.168.2.133:9996;
        }

        server {
            listen 19994;
            proxy_pass 9994_tcp_upstreams;
            #proxy_timeout 1s;
            proxy_connect_timeout 5s;
        }

        server {
            listen 19995 udp;
            proxy_pass 9995_udp_upstreams;
            proxy_timeout 1s;
            #proxy_responses 1;
        }

        server {
            listen 19996 udp;
            proxy_pass 9996_udp_upstreams;
            proxy_timeout 1s;
            #proxy_responses 1;
        }
    }

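Save and exit after adding the content.

Apply the nginx configuration:

[vagrant@pinpointNode1 ~]$ nginx -s reload

Afterwards, ports 19994 (tcp), 19995 (udp), and 19996 (udp) are open on this node; they proxy ports 9994, 9995, and 9996 of the Pinpoint collector nodes respectively.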

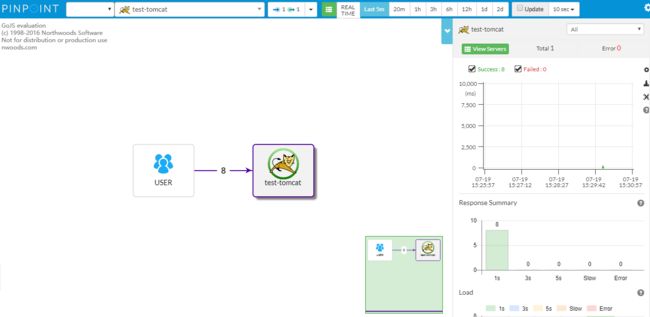
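Whether the three proxy ports are actually open can be checked from the output of `ss -lntu`. The sketch below assumes the usual column layout of that command (protocol in the first column, local address:port in the fifth); `port_listening` and `check_ports` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Check the nginx proxy ports in a captured `ss -lntu` listing.

port_listening() {
    # $1: protocol (tcp|udp), $2: port; stdin: output of `ss -lntu`
    awk -v proto="$1" -v port="$2" '
        $1 == proto {
            n = split($5, parts, ":")      # the port is the last ":"-separated part
            if (parts[n] == port) found = 1
        }
        END { exit (found ? 0 : 1) }'
}

check_ports() {
    # $1: captured `ss -lntu` output
    local ss_out="$1" spec
    for spec in tcp:19994 udp:19995 udp:19996; do
        if echo "$ss_out" | port_listening "${spec%%:*}" "${spec##*:}"; then
            echo "listening $spec"
        else
            echo "missing   $spec"
        fi
    done
}

# Example (run on pinpointNode1):
#   check_ports "$(ss -lntu)"
```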

Install the Pinpoint agent

To verify the cluster built here, a Tomcat instance on pinpointNode3 is used as the test application.

Extract the agent package

[vagrant@pinpointNode3 ~]$ mkdir pinpoint-agent-1.6.2

[vagrant@pinpointNode3 ~]$ cd pinpoint-agent-1.6.2/

[vagrant@pinpointNode3 pinpoint-agent-1.6.2]$ cp ~/pinpoint-agent-1.6.2.tar.gz .

[vagrant@pinpointNode3 pinpoint-agent-1.6.2]$ tar -xzvf pinpoint-agent-1.6.2.tar.gz

[vagrant@pinpointNode3 pinpoint-agent-1.6.2]$ rm pinpoint-agent-1.6.2.tar.gz

Configure the Pinpoint agent

Change the following entries in the agent's configuration file pinpoint.config:

###########################################################

# Collector server #

###########################################################

# nginx is used as the proxy here, so set this to the nginx IP address

profiler.collector.ip=192.168.2.131

# placeHolder support "${key}"

profiler.collector.span.ip=${profiler.collector.ip}

# nginx port that proxies collector port 9996

profiler.collector.span.port=19996

# placeHolder support "${key}"

profiler.collector.stat.ip=${profiler.collector.ip}

# nginx port that proxies collector port 9995

profiler.collector.stat.port=19995

# placeHolder support "${key}"

profiler.collector.tcp.ip=${profiler.collector.ip}

# nginx port that proxies collector port 9994

profiler.collector.tcp.port=19994

Verify with Tomcat

[vagrant@pinpointNode3 ~]$ unzip apache-tomcat-8.5.14.zip

[vagrant@pinpointNode3 ~]$ cd apache-tomcat-8.5.14/bin

[vagrant@pinpointNode3 bin]$ chmod +x catalina.sh shutdown.sh startup.sh

[vagrant@pinpointNode3 bin]$ vim catalina.sh

Add the following at the top of catalina.sh:

CATALINA_OPTS="$CATALINA_OPTS -javaagent:/home/vagrant/pinpoint-agent-1.6.2/pinpoint-bootstrap-1.6.2.jar"

CATALINA_OPTS="$CATALINA_OPTS -Dpinpoint.applicationName=test-tomcat"

CATALINA_OPTS="$CATALINA_OPTS -Dpinpoint.agentId=tomcat-01"

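The first option points to the location of the pinpoint-bootstrap jar. The second, pinpoint.applicationName, is the name of the monitored application. The third, pinpoint.agentId, is the ID of the monitored application instance. pinpoint.applicationName does not have to be unique, but pinpoint.agentId must be. Instances that share a pinpoint.applicationName but have different pinpoint.agentId values are treated as members of the same application cluster.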
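When several Tomcat instances join the same application, only the agent ID changes between them. The three options can be generated from parameters, as in the sketch below. `pinpoint_opts` is a hypothetical helper name, and the agent ID `tomcat-02` is an invented value for a second instance.

```shell
#!/usr/bin/env bash
# Build the three JVM options for the Pinpoint agent from parameters.

pinpoint_opts() {
    # $1: agent directory, $2: agent version, $3: application name, $4: unique agent ID
    printf '%s\n' \
        "-javaagent:$1/pinpoint-bootstrap-$2.jar" \
        "-Dpinpoint.applicationName=$3" \
        "-Dpinpoint.agentId=$4"
}

# Options for a second instance of the same application (agent ID is illustrative).
CATALINA_OPTS="${CATALINA_OPTS:-} $(pinpoint_opts /home/vagrant/pinpoint-agent-1.6.2 1.6.2 test-tomcat tomcat-02 | tr '\n' ' ')"
echo "$CATALINA_OPTS"
```

As an alternative to editing catalina.sh, lines like these can live in bin/setenv.sh, which catalina.sh sources when the file exists; that keeps the stock Tomcat script unmodified.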

Start Tomcat

[vagrant@pinpointNode3 ~]$ cd apache-tomcat-8.5.14/bin

[vagrant@pinpointNode3 bin]$ ./startup.sh

Startup is complete. After you access Tomcat, refresh the Pinpoint web UI to see the application's call activity.
