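MySQL high availability with corosync + pacemaker + MySQL + DRBD

====================================

1. What each component is and how they relate

2. Preparation before installing the HA cluster

3. Installing corosync + pacemaker

4. Compiling and installing MySQL

5. Installing DRBD

6. Mirroring MySQL data with DRBD

7. Configuring MySQL high availability with crmsh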

====================================

环境:

OS:Centos 6.x(redhat 6.x)

kernel:2.6.32-358.el6.x86_64

yum源:

[centos] name=sohu-centos baseurl=http://mirrors.sohu.com/centos/$releasever/os/$basearch gpgcheck=1 enable=0 gpgkey=http://mirrors.sohu.com/centos/RPM-GPG-KEY-CentOS-6 [epel] name=sohu-epel baseurl=http://mirrors.sohu.com/fedora-epel/$releasever/$basearch/ enable=1 gpgcheck=0

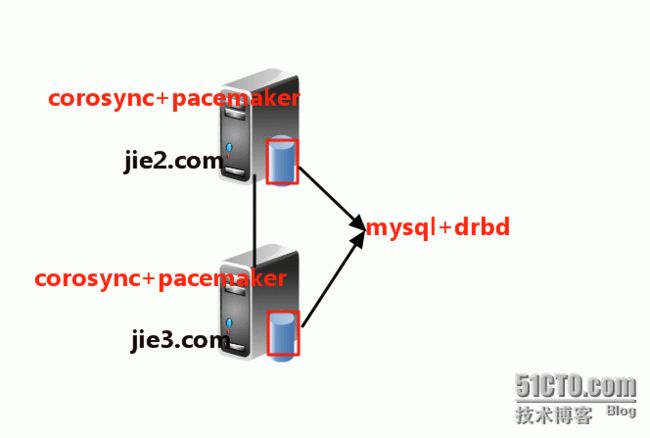

拓扑图:

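Some of the packages are uploaded as attachments; the MySQL source tarball is easy to find online.

Keep the following questions in mind while building MySQL high availability with corosync + pacemaker + MySQL + DRBD:

1. What is corosync? What is pacemaker? How do corosync and pacemaker relate?

2. How are MySQL and DRBD connected?

3. How do corosync, pacemaker, MySQL and DRBD relate to one another?

4. In a high-availability cluster, how are the relationships between resources established? Can the nodes compete for resources and end up in split-brain?

Split-brain can be resolved automatically by a fencing device.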

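1. What each component is and how they relate

corosync: corosync started as a sub-project of the OpenAIS project. It transports HA heartbeat information and is one of many packages for building HA clusters; heartbeat and corosync are the popular Messaging Layer (cluster messaging layer) tools. corosync is the newer of the two, while Heartbeat is old and mature. Each has its strengths, which are not compared here; corosync is simply the more popular choice at the moment.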

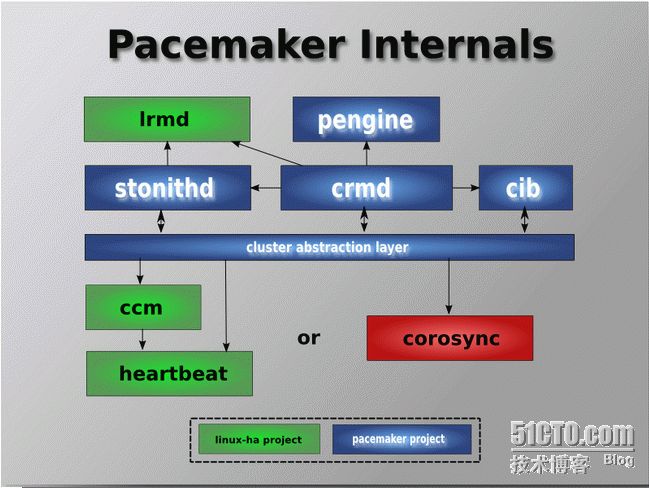

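pacemaker: one of many cluster resource managers (Cluster Resource Manager, CRM). Its main job is to act on the information delivered by the cluster messaging layer. Pacemaker is the core of the cluster: it manages the cluster and its state, and updates to the cluster information reach every node through corosync.

Common CRMs:

heartbeat v1 --> haresources

heartbeat v2 --> crm

heartbeat v3 --> pacemaker

RHCS (cman) --> rgmanager

Relationship between corosync and pacemaker: corosync carries the heartbeat and cluster messages, and pacemaker runs on top of it as the resource manager.

mysql: an open-source relational database.

drbd: DRBD (Distributed Replicated Block Device). How it works: when host A receives a write for the designated disk device, the data goes to host A's kernel; a kernel module then sends a copy of the same data to host B's kernel, and host B writes it to its own designated disk device. The two hosts stay in sync, which makes writes highly available. It mirrors data much like RAID 1. DRBD normally runs as one primary and one secondary; all reads, writes and mounts happen only on the primary, but the primary and secondary roles can be swapped.

How the components fit together: MySQL and DRBD have nothing to do with each other by themselves, but combining them matters. DRBD mirrors the data, so when the DRBD primary goes down the secondary still holds a copy and could serve it. The service does not move to the secondary on its own, though. That is where the HA cluster comes in: once these are defined as cluster resources, the cluster automatically switches them to the secondary node when the primary fails, so failover happens and service continues.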

2. Preparation before installing the HA cluster

1) hosts file

# Change the hostname to jie2.com

[root@jie2 ~]# sed -i s/`grep HOSTNAME /etc/sysconfig/network |awk -F '=' '{print $2}'`/jie2.com/g /etc/sysconfig/network

# Change the hostname to jie3.com

[root@jie3 ~]# sed -i s/`grep HOSTNAME /etc/sysconfig/network |awk -F '=' '{print $2}'`/jie3.com/g /etc/sysconfig/network

[root@jie2 ~]# cat >>/etc/hosts << EOF

>172.16.22.2 jie2.com jie2

>172.16.22.3 jie3.com jie3

>EOF

[root@jie3 ~]# cat >>/etc/hosts << EOF

>172.16.22.2 jie2.com jie2

>172.16.22.3 jie3.com jie3

>EOF

2) Passwordless SSH between the nodes

[root@jie2 ~]# ssh-keygen -t rsa -P ''

[root@jie2 ~]# ssh-copy-id -i .ssh/id_rsa.pub jie3

[root@jie3 ~]# ssh-keygen -t rsa -P ''

[root@jie3 ~]# ssh-copy-id -i .ssh/id_rsa.pub jie2

3) Disable NetworkManager

[root@jie2 ~]# chkconfig --del NetworkManager

[root@jie2 ~]# chkconfig NetworkManager off

[root@jie2 ~]# service NetworkManager stop

[root@jie3 ~]# chkconfig --del NetworkManager

[root@jie3 ~]# chkconfig NetworkManager off

[root@jie3 ~]# service NetworkManager stop

4) Time synchronization (the author uses his own NTP server)

[root@jie2 ~]# ntpdate 172.16.0.1

[root@jie3 ~]# ntpdate 172.16.0.1
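
The heredoc in step 1) appends to /etc/hosts unconditionally, so running it a second time duplicates the entries. A minimal sketch of an idempotent variant follows; `add_host_entry` is a hypothetical helper name, not part of any package, and it assumes one IP per line as in the entries above.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME...
# Appends "IP NAME..." to FILE unless a line starting with IP already exists.
# Hypothetical helper for illustration only.
add_host_entry() {
    file=$1
    ip=$2
    shift 2
    # Escape the dots so 172.16.22.2 is matched literally, and require
    # whitespace after it so it does not match 172.16.22.20.
    pattern="^[[:space:]]*$(printf '%s' "$ip" | sed 's/\./\\./g')[[:space:]]"
    if grep -Eq "$pattern" "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$*" >> "$file"
}
```

On each node this could be called as `add_host_entry /etc/hosts 172.16.22.2 jie2.com jie2` and `add_host_entry /etc/hosts 172.16.22.3 jie3.com jie3`.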

3. Installing corosync + pacemaker

1) Install corosync + pacemaker

# On node jie2.com

[root@jie2 ~]# yum -y install corosync pacemaker

# crmsh provides the crm command-line interface

[root@jie2 ~]# yum -y --nogpgcheck install crmsh-1.2.6-4.el6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm

# On node jie3.com

[root@jie3 ~]# yum -y install corosync pacemaker

[root@jie3 ~]# yum -y --nogpgcheck install crmsh-1.2.6-4.el6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm

2) Edit the configuration file and generate the authentication key

Configuration file

# On node jie2.com

[root@jie2 ~]# cd /etc/corosync/

[root@jie2 corosync]# mv corosync.conf.example corosync.conf

[root@jie2 corosync]# vim corosync.conf

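# Please read the corosync.conf.5 manual page

compatibility: whitetank

# totem: the heartbeat/messaging layer
totem {
    # config version
    version: 2
    # authenticate cluster messages; normally on
    secauth: on
    # number of threads
    threads: 0
    # interface that carries the heartbeat traffic
    interface {
        ringnumber: 0
        # network to bind to: write the network address, not a host address
        bindnetaddr: 172.16.0.0
        # multicast address
        mcastaddr: 226.94.1.1
        # multicast port
        mcastport: 5405
        # time to live
        ttl: 1
    }
}

# logging
logging {
    fileline: off
    # whether to print to the screen
    to_stderr: no
    # write to our own log file
    to_logfile: yes
    # whether syslog should also record the messages
    to_syslog: no
    # path of the log file
    logfile: /var/log/cluster/corosync.log
    debug: off
    # whether to timestamp log entries
    timestamp: on
    logger_subsys {
        subsys: AMF
        debug: off
    }
}

amf {
    mode: disabled
}

# run pacemaker as a corosync plugin
service {
    ver: 0
    name: pacemaker
}

aisexec {
    user: root
    group: root
}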

[root@jie2 corosync]# scp corosync.conf jie3:/etc/corosync/

## Copy the configuration file from jie2.com to jie3.com
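
`bindnetaddr` expects the network address rather than a node's own IP. A small sketch of deriving it from an address and netmask with plain shell arithmetic; `network_addr` is a hypothetical helper name, and it assumes dotted-quad IPv4 input.

```shell
#!/bin/sh
# network_addr IP NETMASK
# Prints the IPv4 network address (bitwise AND of each octet).
# Hypothetical helper for illustration; runs in a subshell so the
# IFS change does not leak into the caller.
network_addr() (
    IFS=.
    # Split both dotted quads into eight positional parameters.
    set -- $1 $2
    printf '%d.%d.%d.%d\n' "$(($1 & $5))" "$(($2 & $6))" "$(($3 & $7))" "$(($4 & $8))"
)
```

For the addresses used here, `network_addr 172.16.22.2 255.255.0.0` prints `172.16.0.0`, which is the value set in the configuration file.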

Authentication key

# On node jie2.com

[root@jie2 corosync]# corosync-keygen

Corosync Cluster Engine Authentication key generator.

Gathering 1024 bits for key from /dev/random.

Press keys on your keyboard to generate entropy (bits = 152).

# If it stalls here, the system is short on entropy. Keep typing random keys, or install some packages in another terminal, to generate more.

[root@jie2 corosync]# scp authkey jie3:/etc/corosync/

# Copy the authentication key to jie3.com as well

3) Start the service and check the nodes in the cluster

# On node jie2.com

[root@jie2 ~]# service corosync start

Starting Corosync Cluster Engine (corosync): [ OK ]

[root@jie2 ~]# crm status

Last updated: Thu Aug 8 14:43:13 2013

Last change: Sun Sep 1 16:41:18 2013 via crm_attribute on jie3.com

Stack: classic openais (with plugin)

Current DC: jie3.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

Online: [ jie2.com jie3.com ]

# On node jie3.com

[root@jie3 ~]# service corosync start

Starting Corosync Cluster Engine (corosync): [ OK ]

[root@jie3 ~]# crm status

Last updated: Thu Aug 8 14:43:13 2013

Last change: Sun Sep 1 16:41:18 2013 via crm_attribute on jie3.com

Stack: classic openais (with plugin)

Current DC: jie3.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

Online: [ jie2.com jie3.com ]

4. Compiling and installing MySQL (the procedure is identical on both nodes)

# On node jie2.com

# 1) Unpack, compile and install

[root@jie2 ~]# tar xf mysql-5.5.33.tar.gz

[root@jie2 ~]# yum -y groupinstall "Development tools" "Server Platform Development"

[root@jie2 ~]# cd mysql-5.5.33

[root@jie2 mysql-5.5.33]# yum -y install cmake

[root@jie2 mysql-5.5.33]# cmake . -DCMAKE_INSTALL_PREFIX=/usr/local/mysql \
    -DMYSQL_DATADIR=/mydata/data -DSYSCONFDIR=/etc \
    -DWITH_INNOBASE_STORAGE_ENGINE=1 -DWITH_ARCHIVE_STORAGE_ENGINE=1 \
    -DWITH_BLACKHOLE_STORAGE_ENGINE=1 -DWITH_READLINE=1 -DWITH_SSL=system \
    -DWITH_ZLIB=system -DWITH_LIBWRAP=0 -DMYSQL_UNIX_ADDR=/tmp/mysql.sock \
    -DDEFAULT_CHARSET=utf8 -DDEFAULT_COLLATION=utf8_general_ci

[root@jie2 mysql-5.5.33]# make && make install

# 2) Create the configuration file and the init script

[root@jie2 mysql-5.5.33]# cp /usr/local/mysql/support-files/my-large.cnf /etc/my.cnf

[root@jie2 mysql-5.5.33]# cp /usr/local/mysql/support-files/mysql.server /etc/rc.d/init.d/mysqld

[root@jie2 mysql-5.5.33]# cd /usr/local/mysql/

[root@jie2 mysql]# useradd -r -u 306 mysql

[root@jie2 mysql]# chown -R root:mysql ./*

# 3) Hook the install into the paths the system searches

[root@jie2 mysql]# echo 'export PATH=/usr/local/mysql/bin:$PATH' > /etc/profile.d/mysqld.sh

[root@jie2 mysql]# source /etc/profile.d/mysqld.sh

[root@jie2 mysql]# echo "/usr/local/mysql/lib" > /etc/ld.so.conf.d/mysqld.conf

[root@jie2 mysql]# ldconfig -v | grep mysql

[root@jie2 mysql]# ln -sv /usr/local/mysql/include /usr/include/mysql

Do not initialize the database yet. Install DRBD first, mount the DRBD device on the data directory, and only then initialize the database so that its data lands on the DRBD-backed mount.

5. Installing DRBD

When installing DRBD from RPMs, the drbd-kmdl package must match the running kernel version exactly.

1) Create a partition to serve as the DRBD backing device (on RHEL 6.x the system has to be rebooted after creating a new partition so that the kernel sees it)

# On node jie2.com

[root@jie2 ~]# fdisk /dev/sda

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 3

First cylinder (7859-15665, default 7859):

Using default value 7859

Last cylinder, +cylinders or +size{K,M,G} (7859-15665, default 15665): +5G

Command (m for help): w

# On node jie3.com

[root@jie3 ~]# fdisk /dev/sda

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 3

First cylinder (7859-15665, default 7859):

Using default value 7859

Last cylinder, +cylinders or +size{K,M,G} (7859-15665, default 15665): +5G

Command (m for help): w

2) Install DRBD and edit its configuration files

# 1) Install DRBD

# On node jie2.com

[root@jie2 ~]# rpm -ivh drbd-kmdl-2.6.32-358.el6-8.4.3-33.el6.x86_64.rpm

warning: drbd-kmdl-2.6.32-358.el6-8.4.3-33.el6.x86_64.rpm: Header V4 DSA/SHA1 Signature, key ID 66534c2b: NOKEY

Preparing... ################################# [100%]

[root@jie2 ~]# rpm -ivh drbd-8.4.3-33.el6.x86_64.rpm

warning: drbd-8.4.3-33.el6.x86_64.rpm: Header V4 DSA/SHA1 Signature, key ID 66534c2b: NOKEY

Preparing... ################################## [100%]

# On node jie3.com

[root@jie3 ~]# rpm -ivh drbd-kmdl-2.6.32-358.el6-8.4.3-33.el6.x86_64.rpm

warning: drbd-kmdl-2.6.32-358.el6-8.4.3-33.el6.x86_64.rpm: Header V4 DSA/SHA1 Signature, key ID 66534c2b: NOKEY

Preparing... ################################# [100%]

[root@jie3 ~]# rpm -ivh drbd-8.4.3-33.el6.x86_64.rpm

warning: drbd-8.4.3-33.el6.x86_64.rpm: Header V4 DSA/SHA1 Signature, key ID 66534c2b: NOKEY

Preparing... ################################## [100%]

# 2) Edit the DRBD configuration files

# On node jie2.com

[root@jie2 ~]# cd /etc/drbd.d/

# Global configuration file

[root@jie2 drbd.d]# cat global_common.conf

global {

usage-count no;

# minor-count dialog-refresh disable-ip-verification

}

common {

protocol C;

handlers {

pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

# fence-peer "/usr/lib/drbd/crm-fence-peer.sh";

# split-brain "/usr/lib/drbd/notify-split-brain.sh root";

# out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";

# before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";

# after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;

}

startup {

#wfc-timeout 120;

#degr-wfc-timeout 120;

}

disk {

on-io-error detach;

#fencing resource-only;

}

net {

cram-hmac-alg "sha1";

shared-secret "mydrbdlab";

}

syncer {

rate 1000M;

}

}

# Resource configuration file

[root@jie2 drbd.d]# cat mydata.res

resource mydata {

on jie2.com {

device /dev/drbd0;

disk /dev/sda3;

address 172.16.22.2:7789;

meta-disk internal;

}

on jie3.com {

device /dev/drbd0;

disk /dev/sda3;

address 172.16.22.3:7789;

meta-disk internal;

}

}

# Copy the configuration files to node jie3.com

[root@jie2 drbd.d]# scp global_common.conf mydata.res jie3:/etc/drbd.d/
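
DRBD matches each `on <hostname>` section against the node's own hostname as reported by `uname -n`. Editing /etc/sysconfig/network only takes effect after a reboot (or an explicit `hostname` call), so it is worth checking before creating the metadata. A minimal sketch, where `res_has_host` is a hypothetical helper name and the resource file is assumed to use the simple `on name {` layout shown above:

```shell
#!/bin/sh
# res_has_host RES_FILE [HOSTNAME]
# Succeeds if RES_FILE contains an "on HOSTNAME {" section.
# HOSTNAME defaults to the local `uname -n`.
# Hypothetical helper for illustration only.
res_has_host() {
    res_file=$1
    host=${2:-$(uname -n)}
    awk -v host="$host" '
        $1 == "on" && $2 == host { found = 1 }
        END { exit found ? 0 : 1 }
    ' "$res_file"
}
```

A possible use on each node: `res_has_host /etc/drbd.d/mydata.res || echo "hostname $(uname -n) is not listed in mydata.res"`.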

3) Initialize the DRBD resource and start the service

# On node jie2.com

# Create the DRBD resource metadata

[root@jie2 ~]# drbdadm create-md mydata

Writing meta data...

initializing activity log

NOT initializing bitmap

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

New drbd meta data block successfully created.

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

# The "successfully created" line above confirms the metadata was written

# Start the service

[root@jie2 ~]# service drbd start

Starting DRBD resources: [

create res: mydata

prepare disk: mydata

adjust disk: mydata

adjust net: mydata

]

.......... [ok]

# On node jie3.com

# Create the DRBD resource metadata

[root@jie3 ~]# drbdadm create-md mydata

Writing meta data...

initializing activity log

NOT initializing bitmap

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

New drbd meta data block successfully created.

lk_bdev_save(/var/lib/drbd/drbd-minor-0.lkbd) failed: No such file or directory

# The "successfully created" line above confirms the metadata was written

# Start the service

[root@jie3 ~]# service drbd start

Starting DRBD resources: [

create res: mydata

prepare disk: mydata

adjust disk: mydata

adjust net: mydata

]

.......... [ok]

4) Promote one node to primary and let DRBD synchronize (run this on one node only)

# Make jie2.com the DRBD primary

[root@jie2 ~]# drbdadm primary --force mydata

# Check the synchronization progress

[root@jie2 ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-05-27 04:30:21

0: cs:SyncSource ro:Primary/Secondary ds:UpToDate/Inconsistent C r---n-

ns:1897624 nr:0 dw:0 dr:1901216 al:0 bm:115 lo:0 pe:3 ua:3 ap:0 ep:1 wo:f oos:207988

[=================>..] sync'ed: 90.3% (207988/2103412)K

finish: 0:00:07 speed: 26,792 (27,076) K/sec

# This command refreshes the view every second

[root@jie2 ~]# watch -n1 'cat /proc/drbd'

[root@jie2 ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-05-27 04:30:21

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----

ns:120 nr:354 dw:435 dr:5805 al:6 bm:9 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

# When both sides show UpToDate, the two nodes are in sync
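
Instead of watching the output by eye, the "both sides UpToDate" condition can be checked from a script. The sketch below assumes the DRBD 8.4 `/proc/drbd` layout shown above; `drbd_in_sync` is a hypothetical helper name.

```shell
#!/bin/sh
# drbd_in_sync [STATUS_FILE]
# Succeeds only if STATUS_FILE (default /proc/drbd) lists at least one
# device and every device is Connected with ds:UpToDate/UpToDate.
# Hypothetical helper for illustration only.
drbd_in_sync() {
    status_file=${1:-/proc/drbd}
    awk '
        /^ *[0-9]+: cs:/ {
            devices++
            if ($0 ~ /cs:Connected/ && $0 ~ /ds:UpToDate\/UpToDate/) synced++
        }
        END { exit (devices > 0 && devices == synced) ? 0 : 1 }
    ' "$status_file"
}
```

One way to use it is a wait loop such as `until drbd_in_sync; do sleep 5; done` before moving on to the next step.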

5) Format the DRBD device (run this on the primary node)

[root@jie2 ~]# mke2fs -t ext4 /dev/drbd0

6. Mirroring MySQL data with DRBD

1) On the DRBD primary, mount the DRBD device, then initialize the database

# Create the mount point for the DRBD device

[root@jie2 ~]# mkdir /mydata

[root@jie2 ~]# mount /dev/drbd0 /mydata/

[root@jie2 ~]# mkdir /mydata/data

# Make mysql the owner and group of the data tree

[root@jie2 ~]# chown -R mysql:mysql /mydata

# Edit the MySQL configuration file; these settings go in the [mysqld] section

[root@jie2 ~]# vim /etc/my.cnf

datadir = /mydata/data

innodb_file_per_table = 1

# Initialize the database

[root@jie2 ~]# /usr/local/mysql/scripts/mysql_install_db --user=mysql --datadir=/mydata/data/ --basedir=/usr/local/mysql

[root@jie2 ~]# service mysqld start

Starting MySQL ....... [ OK ]

2) Verify that DRBD is mirroring the data

# On node jie2.com

# 1) Create a database on the DRBD primary

[root@jie2 ~]# mysql

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| test |

+--------------------+

4 rows in set (0.00 sec)

mysql> create database jie2;

Query OK, 1 row affected (0.01 sec)

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| jie2 |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.00 sec)

mysql>\q

# 2) Stop MySQL and unmount the DRBD device

[root@jie2 ~]# service mysqld stop

# Unmount the DRBD device

[root@jie2 ~]# umount /dev/drbd0

# Demote this node to DRBD secondary

[root@jie2 ~]# drbdadm secondary mydata

# On node jie3.com

# 3) Promote jie3.com to DRBD primary

[root@jie3 ~]# drbdadm primary mydata

[root@jie3 ~]# mkdir /mydata

[root@jie3 ~]# chown -R mysql:mysql /mydata

[root@jie3 ~]# mount /dev/drbd0 /mydata

[root@jie3 ~]# vim /etc/my.cnf

datadir = /mydata/data

innodb_file_per_table = 1

# No need to initialize the database on this node; just start the service

[root@jie3 ~]# service mysqld start

Starting MySQL ....... [ OK ]

[root@jie3 ~]# mysql

# The jie2 database created on the other node is visible here

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| jie2 |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.00 sec)

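7. Configuring MySQL high availability with crmsh

Both MySQL and DRBD have to be defined as cluster resources, and a resource managed by the cluster must never start on its own at boot.

1) Stop the DRBD and MySQL services

[root@jie2 ~]# service mysqld stop

[root@jie2 ~]# service drbd stop

[root@jie3 ~]# service mysqld stop

# The DRBD device was mounted on jie3.com in the previous step

[root@jie3 ~]# umount /dev/drbd0

[root@jie3 ~]# service drbd stop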
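
Stopping the services does not keep init from starting them at the next boot. A minimal sketch of turning that off with `chkconfig`, to be run on both nodes; `disable_boot_start` and the `RUN` dry-run hook are hypothetical names introduced for illustration.

```shell
#!/bin/sh
# RUN is a hypothetical dry-run hook: leave it empty to execute the
# commands, or set RUN=echo to only print them.
RUN=${RUN:-}

# disable_boot_start SERVICE...
# Turns off boot-time start for each SysV service so that only the
# cluster starts and stops it.
disable_boot_start() {
    for svc in "$@"; do
        $RUN chkconfig "$svc" off
    done
}
```

For this setup the call would be `disable_boot_start mysqld drbd`; with `RUN=echo` set beforehand it prints the two `chkconfig` commands instead of running them.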

2) Define the cluster resources

Define the DRBD resource (the DRBD resource agent is provided by linbit in the OCF class)

[root@jie2 ~]# crm

crm(live)# configure

crm(live)configure# property stonith-enabled=false

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# primitive mysqldrbd ocf:linbit:drbd params drbd_resource=mydata op monitor role=Master interval=10 timeout=20 op monitor role=Slave interval=20 timeout=20 op start timeout=240 op stop timeout=100

# verify checks the configuration for errors

crm(live)configure# verify

Define the DRBD master/slave resource

crm(live)configure# ms ms_mysqldrbd mysqldrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true

crm(live)configure# verify

Define the filesystem resource and its constraints

crm(live)configure# primitive mystore ocf:heartbeat:Filesystem params device="/dev/drbd0" directory="/mydata" fstype="ext4" op monitor interval=40 timeout=40 op start timeout=60 op stop timeout=60

crm(live)configure# verify

crm(live)configure# colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master

crm(live)configure# order ms_mysqldrbd_before_mystore mandatory: ms_mysqldrbd:promote mystore:start

crm(live)configure# verify

Define the VIP resource, the MySQL service resource, and their constraints

crm(live)configure# primitive myvip ocf:heartbeat:IPaddr params ip="172.16.22.100" op monitor interval=20 timeout=20 on-fail=restart

crm(live)configure# primitive myserver lsb:mysqld op monitor interval=20 timeout=20 on-fail=restart

crm(live)configure# verify

crm(live)configure# colocation myserver_with_mystore inf: myserver mystore

crm(live)configure# order mystore_before_myserver mandatory: mystore:start myserver:start

crm(live)configure# verify

crm(live)configure# colocation myvip_with_myserver inf: myvip myserver

crm(live)configure# order myvip_before_myserver mandatory: myvip myserver

crm(live)configure# verify

crm(live)configure# commit

Show everything that has been defined

crm(live)configure# show

node jie2.com \

attributes standby="off"

node jie3.com \

attributes standby="off"

primitive myserver lsb:mysqld \

op monitor interval="20" timeout="20" on-fail="restart"

primitive mysqldrbd ocf:linbit:drbd \

params drbd_resource="mydata" \

op monitor role="Master" interval="10" timeout="20" \

op monitor role="Slave" interval="20" timeout="20" \

op start timeout="240" interval="0" \

op stop timeout="100" interval="0"

primitive mystore ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/mydata" fstype="ext4" \

op monitor interval="40" timeout="40" \

op start timeout="60" interval="0" \

op stop timeout="60" interval="0"

primitive myvip ocf:heartbeat:IPaddr \

params ip="172.16.22.100" \

op monitor interval="20" timeout="20" on-fail="restart" \

meta target-role="Started"

ms ms_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true"

colocation myserver_with_mystore inf: myserver mystore

colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master

colocation myvip_with_myserver inf: myvip myserver

order ms_mysqldrbd_before_mystore inf: ms_mysqldrbd:promote mystore:start

order mystore_before_myserver inf: mystore:start myserver:start

order myvip_before_myserver inf: myvip myserver

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

Check the resource status: everything is running on jie3.com

[root@jie2 ~]# crm status

Last updated: Thu Aug 8 17:55:30 2013

Last change: Sun Sep 1 16:41:18 2013 via crm_attribute on jie3.com

Stack: classic openais (with plugin)

Current DC: jie3.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

5 Resources configured.

Online: [ jie2.com jie3.com ]

Master/Slave Set: ms_mysqldrbd [mysqldrbd]

Masters: [ jie3.com ]

Slaves: [ jie2.com ]

mystore (ocf::heartbeat:Filesystem): Started jie3.com

myvip (ocf::heartbeat:IPaddr): Started jie3.com

myserver (lsb:mysqld): Started jie3.com

Switch nodes and check that the resources move

# Put this node into standby

[root@jie3 ~]# crm node standby jie3.com

[root@jie3 ~]# crm status

Last updated: Mon Sep 2 01:45:07 2013

Last change: Mon Sep 2 01:44:59 2013 via crm_attribute on jie3.com

Stack: classic openais (with plugin)

Current DC: jie3.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

5 Resources configured.

Node jie3.com: standby

Online: [ jie2.com ]

Master/Slave Set: ms_mysqldrbd [mysqldrbd]

Masters: [ jie2.com ]

# The resources have moved to jie2.com

Stopped: [ mysqldrbd:1 ]

mystore (ocf::heartbeat:Filesystem): Started jie2.com

myvip (ocf::heartbeat:IPaddr): Started jie2.com

myserver (lsb:mysqld): Started jie2.com

Because of the DRBD resource constraints, the node where the Master is running cannot be demoted to DRBD secondary by hand:

[root@jie3 ~]# cat /proc/drbd

version: 8.4.3 (api:1/proto:86-101)

GIT-hash: 89a294209144b68adb3ee85a73221f964d3ee515 build by gardner@, 2013-05-27 04:30:21

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----

ns:426 nr:354 dw:741 dr:6528 al:8 bm:9 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:0

[root@jie3 ~]# drbdadm secondary mydata

0: State change failed: (-12) Device is held open by someone

Command 'drbdsetup secondary 0' terminated with exit code 11

If the myvip resource is taken down by hand, the cluster brings it back up (because the resource was defined with on-fail=restart):

[root@jie2 ~]# ifconfig | grep eth0

eth0 Link encap:Ethernet HWaddr 00:0C:29:1F:74:CF

inet addr:172.16.22.2 Bcast:172.16.255.255 Mask:255.255.0.0

inet6 addr: fe80::20c:29ff:fe1f:74cf/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2165062 errors:0 dropped:0 overruns:0 frame:0

TX packets:4109895 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:167895762 (160.1 MiB) TX bytes:5731508707 (5.3 GiB)

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:1F:74:CF

inet addr:172.16.22.100 Bcast:172.16.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

[root@jie2 ~]# ifconfig eth0:0 down

[root@jie2 ~]# ifconfig | grep eth0

eth0 Link encap:Ethernet HWaddr 00:0C:29:1F:74:CF

inet addr:172.16.22.2 Bcast:172.16.255.255 Mask:255.255.0.0

inet6 addr: fe80::20c:29ff:fe1f:74cf/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2165242 errors:0 dropped:0 overruns:0 frame:0

TX packets:4110094 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:167917669 (160.1 MiB) TX bytes:5731537035 (5.3 GiB)

[root@jie2 ~]# crm status

Last updated: Thu Aug 8 18:29:27 2013

Last change: Mon Sep 2 01:44:59 2013 via crm_attribute on jie3.com

Stack: classic openais (with plugin)

Current DC: jie3.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

5 Resources configured.

Node jie3.com: standby

Online: [ jie2.com ]

Master/Slave Set: ms_mysqldrbd [mysqldrbd]

Masters: [ jie2.com ]

Stopped: [ mysqldrbd:1 ]

mystore (ocf::heartbeat:Filesystem): Started jie2.com

myvip (ocf::heartbeat:IPaddr): Started jie2.com

myserver (lsb:mysqld): Started jie2.com

Failed actions:

myvip_monitor_20000 (node=jie2.com, call=47, rc=7, status=complete): not running

myserver_monitor_20000 (node=jie3.com, call=209, rc=7, status=complete): not running

[root@jie2 ~]# ifconfig | grep eth0

eth0 Link encap:Ethernet HWaddr 00:0C:29:1F:74:CF

inet addr:172.16.22.2 Bcast:172.16.255.255 Mask:255.255.0.0

inet6 addr: fe80::20c:29ff:fe1f:74cf/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:2165681 errors:0 dropped:0 overruns:0 frame:0

TX packets:4110535 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:168015864 (160.2 MiB) TX bytes:5731617112 (5.3 GiB)

eth0:1 Link encap:Ethernet HWaddr 00:0C:29:1F:74:CF

inet addr:172.16.22.100 Bcast:172.16.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

[root@jie2 ~]#
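
When repeating failover tests like the ones above, it can help to pull the current node of a resource out of the `crm status` text rather than reading the whole report. A minimal sketch, assuming the status line layout shown in this post (`name (agent): Started node`); `resource_node` is a hypothetical helper name.

```shell
#!/bin/sh
# resource_node RESOURCE
# Reads `crm status` text on stdin and prints the node on which
# RESOURCE is reported as Started; fails if no such line is found.
# Hypothetical helper for illustration only.
resource_node() {
    awk -v res="$1" '
        NF >= 3 && $1 == res && $(NF - 1) == "Started" {
            print $NF
            found = 1
        }
        END { exit found ? 0 : 1 }
    '
}
```

Typical use would be `crm status | resource_node myserver`, run before and after `crm node standby` to confirm that the service moved.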

That completes the MySQL high-availability setup. This post does not explain the crm command parameters.