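Project Overview

The environment has two nodes: node1 provides the MariaDB service, and node2 is node1's standby.

The database is stored on a DRBD-backed partition, so data is replicated to the standby node in real time.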

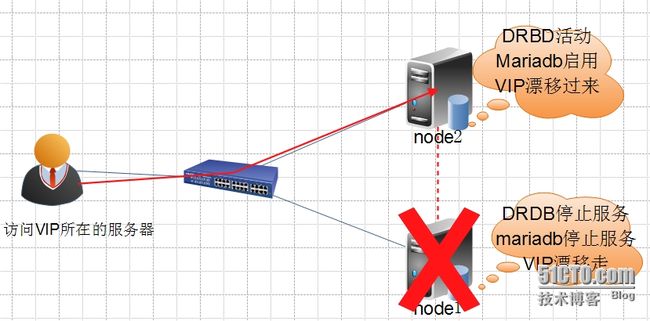

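Under normal conditions, node1 runs the VIP, DRBD, and MariaDB and serves requests. If node1 fails, node2 stops receiving node1's heartbeat, takes over DRBD, MariaDB, and the VIP, and keeps providing the MariaDB service so that the business is not interrupted.

Drawback of this architecture: both corosync and DRBD suffer from one serious problem, split-brain. If a distributed storage system is available, MariaDB can be deployed on it instead.

Topology before failure:

Topology after failure:

Environment: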

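| Node | Hostname | Interface | IP address | Purpose |
|---|---|---|---|---|
| node1 | node1.xmfb.com | eth0 | 172.16.4.100 | Primary cluster node; provides the MariaDB service |
| node2 | node2.xmfb.com | eth0 | 172.16.4.101 | Standby node; continuously checks whether the primary has failed and is ready to take over at any time |
| VIP | | | 172.16.4.1 | Address that clients use to reach the cluster |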

Preparation before configuration

(1) Time must be synchronized between the nodes; NTP is used here.

[root@node1 ~]# ntpdate 172.16.0.1 # 172.16.0.1 is the time server in my environment

[root@node2 ~]# ntpdate 172.16.0.1

(2) The nodes communicate with each other by hostname, so every hostname must resolve to an IP address.

(a) Using the hosts file for name resolution is recommended.

(b) The name used for cluster communication must match the node's own name, that is, the name printed by "uname -n" or "hostname".

[root@node1 ~]# sed -i 's@\(HOSTNAME=\).*@\1node1.xmfb.com@g' /etc/sysconfig/network

[root@node1 ~]# hostname node1.xmfb.com

[root@node1 ~]# echo "172.16.4.100 node1.xmfb.com node1" >> /etc/hosts

[root@node1 ~]# echo "172.16.4.101 node2.xmfb.com node2" >> /etc/hosts

[root@node2 ~]# sed -i 's@\(HOSTNAME=\).*@\1node2.xmfb.com@g' /etc/sysconfig/network

[root@node2 ~]# hostname node2.xmfb.com

[root@node2 ~]# echo "172.16.4.100 node1.xmfb.com node1" >> /etc/hosts

[root@node2 ~]# echo "172.16.4.101 node2.xmfb.com node2" >> /etc/hosts
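Running the echo commands above twice leaves duplicate lines in /etc/hosts. The following is a minimal sketch of an idempotent variant; the helper name `add_host_entry` is hypothetical and not part of any tool used in this article.

```shell
#!/bin/sh
# add_host_entry is a hypothetical helper for illustration only.
# Append "IP FQDN SHORTNAME" to a hosts file unless the FQDN is already listed.
# $1 = hosts file, $2 = IP address, $3 = FQDN, $4 = short name
add_host_entry() {
    hosts_file=$1; ip=$2; fqdn=$3; short=$4
    # -F: fixed string, -w: whole-word match, -q: no output
    if ! grep -qwF "$fqdn" "$hosts_file" 2>/dev/null; then
        printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$hosts_file"
    fi
}

# Example use on each node (run as root):
#   add_host_entry /etc/hosts 172.16.4.100 node1.xmfb.com node1
#   add_host_entry /etc/hosts 172.16.4.101 node2.xmfb.com node2
```

Because the check is keyed on the FQDN, the function can be run on both nodes any number of times without growing the file.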

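(3) Consider whether a quorum (arbitration) device will be needed.

(4) Set up key-based SSH authentication for the root user between the nodes.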

#ssh-keygen -t rsa -P ''

#ssh-copy-id -i /root/.ssh/id_rsa.pub root@HOSTNAME

[root@node1 ~]# ssh-keygen -t rsa -f ~/.ssh/id_rsa -P ''

[root@node1 ~]# ssh-copy-id -i .ssh/id_rsa.pub node2

[root@node2 ~]# ssh-keygen -t rsa -f ~/.ssh/id_rsa -P ''

[root@node2 ~]# ssh-copy-id -i .ssh/id_rsa.pub node1

Note: any service defined as a cluster resource must not be started automatically at boot, because it will be managed by crm.

Corosync configuration

Install corosync

[root@node1 ~]# yum -y install corosync pacemaker

[root@node2 ~]# yum -y install corosync pacemaker

Install crm

[root@node1 ~]# yum --nogpgcheck localinstall crmsh-2.1-1.6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm -y

[root@node2 ~]# yum --nogpgcheck localinstall crmsh-2.1-1.6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm -y

Copy the configuration file template

[root@node1 ~]# cp /etc/corosync/corosync.conf.example /etc/corosync/corosync.conf

Edit the configuration file

[root@node1 ~]# vim /etc/corosync/corosync.conf

compatibility: whitetank

totem {

version: 2

secauth: on

threads: 0

interface {

ringnumber: 0

bindnetaddr: 172.16.0.0

mcastaddr: 239.165.17.11

mcastport: 5405

ttl: 1

}

}

logging {

fileline: off

to_stderr: no

to_logfile: no

logfile: /var/log/cluster/corosync.log

to_syslog: yes

debug: off

timestamp: on

logger_subsys {

subsys: AMF

debug: off

}

}

service {

ver: 0

name: pacemaker

use_mgmtd: yes

}

aisexec {

user: root

group: root

}

Generate the authentication key

[root@node1 ~]# corosync-keygen

Copy the configuration file and the key to node2

[root@node1 ~]# scp /etc/corosync/{authkey,corosync.conf} node2:/etc/corosync/

authkey 100% 128 0.1KB/s 00:00

corosync.conf 100% 2790 2.7KB/s 00:00

Start corosync

[root@node1 ~]# service corosync start; ssh node2 'service corosync start'

Check the cluster status

[root@node1 ~]# crm status

Last updated: Sun May 31 17:32:28 2015

Last change: Sun May 31 17:32:22 2015

Stack: classic openais (with plugin)

Current DC: node1.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

0 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Resolve the STONITH error reported by corosync

crm(live)configure# property stonith-enabled=false

crm(live)configure# commit

Allow resources to run without quorum (a two-node cluster loses quorum as soon as one node fails)

crm(live)configure# property no-quorum-policy=ignore

Resource stickiness

crm(live)configure# property default-resource-stickiness=50

That is all for Corosync for now; next, configure DRBD.

DRBD configuration

Before configuring, prepare the partition that DRBD will use on each node, and do not format it (steps omitted here).

Install and configure DRBD

Install DRBD

rpm -ivh kmod-drbd84-8.4.5-504.1.el6.x86_64.rpm drbd84-utils-8.9.1-1.el6.elrepo.x86_64.rpm

Configure global_common.conf

[root@node1 ~]# vim /etc/drbd.d/global_common.conf

global {

usage-count no;

# minor-count dialog-refresh disable-ip-verification

}

common {

protocol C;

handlers {

pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

# fence-peer "/usr/lib/drbd/crm-fence-peer.sh";

# split-brain "/usr/lib/drbd/notify-split-brain.sh root";

# out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";

# before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";

# after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;

}

startup {

#wfc-timeout 120;

#degr-wfc-timeout 120;

}

disk {

on-io-error detach; # on a disk I/O error, detach the disk so it is no longer used for replication

#fencing resource-only;

}

net {

cram-hmac-alg "sha1"; # HMAC algorithm used to authenticate the peer

shared-secret "mydrbdlab"; # shared secret used for peer authentication

}

syncer {

rate 200M; # resynchronization rate

}

}

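Define a resource; the file name must end in .res

[root@node1 ~]# vim /etc/drbd.d/mystore.res # the file name should match the resource name

resource mystore {

on node1.xmfb.com {

device /dev/drbd0; # DRBD device

disk /dev/sda3; # local backing partition

address 172.16.4.100:7789; # address and port of this node; the nodes must be able to resolve each other

meta-disk internal; # keep the DRBD metadata on the backing partition itself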

}

on node2.xmfb.com {

device /dev/drbd0;

disk /dev/sda3;

address 172.16.4.101:7789;

meta-disk internal;

}

}

These files must be identical on both nodes, so copy everything just configured to the other node over ssh.

[root@node1 ~]# scp -r /etc/drbd.* node2:/etc/

drbd.conf 100% 133 0.1KB/s 00:00

mystore.res 100% 201 0.2KB/s 00:00

global_common.conf 100% 2197 2.2KB/s 00:00

Initialize the resource; run this on both nodes

[root@node1 ~]# drbdadm create-md mystore

initializing activity log

NOT initializing bitmap

Writing meta data...

New drbd meta data block successfully created.

[root@node2 ~]# drbdadm create-md mystore

initializing activity log

NOT initializing bitmap

Writing meta data...

New drbd meta data block successfully created.

Start DRBD

[root@node1 ~]# service drbd start

[root@node2 ~]# service drbd start

Note: the first node to start waits for the second node. Until the second node is started, startup on the first node does not complete.

As a cluster resource, DRBD must not start at boot

[root@node1 ~]# chkconfig drbd off

[root@node1 ~]# chkconfig --list drbd

drbd 0:off 1:off 2:off 3:off 4:off 5:off 6:off
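Services that the cluster manages must stay disabled at boot on both nodes. The following is a minimal sketch of a check, assuming `chkconfig --list <service>` prints runlevels in the `N:on` / `N:off` form shown above; the helper name `boot_disabled` is hypothetical.

```shell
#!/bin/sh
# boot_disabled is a hypothetical helper, not part of chkconfig.
# Reads `chkconfig --list <service>` style text on stdin and succeeds
# (exit status 0) only if no runlevel is marked "on".
boot_disabled() {
    ! grep -q ':on'
}

# Example use on a node (assumes chkconfig is installed):
#   chkconfig --list drbd | boot_disabled && echo "drbd stays off at boot"
```

Note that empty input also counts as "disabled", so the helper should only be fed output for a service that actually exists.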

Configuration after startup

Check the status. No primary has been set yet, so both nodes are Secondary.

[root@node1 ~]# cat /proc/drbd

version: 8.4.5 (api:1/proto:86-101)

GIT-hash: 1d360bde0e095d495786eaeb2a1ac76888e4db96 build by [email protected], 2015-01-02 12:06:20

0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----

ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:5252056
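The fields that matter in this output are `cs` (connection state), `ro` (local/peer roles) and `ds` (local/peer disk states). The following is a minimal sketch that extracts one of them from /proc/drbd style text, assuming a single DRBD device as in this setup; the helper name `drbd_field` is hypothetical.

```shell
#!/bin/sh
# drbd_field is a hypothetical helper, not part of the DRBD utilities.
# Reads /proc/drbd style text on stdin and prints the value of one
# field (cs, ro or ds) from the first line that contains it.
# $1 = field name
drbd_field() {
    sed -n "s/.*[[:space:]:]$1:\([^[:space:]]*\).*/\1/p" | head -n 1
}

# Example use on a node:
#   drbd_field ro < /proc/drbd
```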

Set the primary

[root@node1 ~]# drbdadm -- --overwrite-data-of-peer primary mystore

[root@node1 ~]# cat /proc/drbd

version: 8.4.5 (api:1/proto:86-101)

GIT-hash: 1d360bde0e095d495786eaeb2a1ac76888e4db96 build by [email protected], 2015-01-02 12:06:20

0: cs:SyncSource ro:Primary/Secondary ds:UpToDate/Inconsistent C r---n-

ns:160720 nr:0 dw:0 dr:164648 al:0 bm:0 lo:0 pe:9 ua:5 ap:0 ep:1 wo:f oos:5093336

[>....................] sync'ed: 3.2% (4972/5128)M

finish: 0:02:40 speed: 31,744 (31,744) K/sec

The primary and secondary roles are now set, and the data is synchronizing.
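It is safer to let the initial synchronization finish before testing a role switch. The following is a minimal sketch that polls until both disks report `UpToDate`, assuming a single DRBD device; the helper name `wait_for_drbd_sync` and its default interval and retry count are hypothetical choices for illustration.

```shell
#!/bin/sh
# wait_for_drbd_sync is a hypothetical helper, not part of the DRBD utilities.
# Returns 0 once the status file shows ds:UpToDate/UpToDate,
# or 1 if that has not happened after the given number of checks.
# $1 = status file (default /proc/drbd)
# $2 = seconds between checks (default 5)
# $3 = maximum number of checks (default 120)
wait_for_drbd_sync() {
    status_file=${1:-/proc/drbd}; interval=${2:-5}; tries=${3:-120}
    while [ "$tries" -gt 0 ]; do
        if grep -q 'ds:UpToDate/UpToDate' "$status_file" 2>/dev/null; then
            return 0
        fi
        tries=$((tries - 1))
        sleep "$interval"
    done
    return 1
}

# Example use on the primary node:
#   wait_for_drbd_sync && echo "initial sync finished"
```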

Format and mount the DRBD device

[root@node1 ~]# mkfs.ext4 -j /dev/drbd0

[root@node1 ~]# mkdir /mydata

[root@node1 ~]# mount /dev/drbd0 /mydata/

Verify DRBD replication

Create a test file on the DRBD partition of the primary node

[root@node1 ~]# cd /mydata/

[root@node1 mydata]# touch test

[root@node1 mydata]# ls

lost+found test

Swap the primary and secondary roles. Unmount the filesystem first, because the device cannot be used on both nodes at the same time.

[root@node1 ~]# umount /mydata/

[root@node1 ~]# drbdadm secondary mystore

[root@node1 ~]# drbd-overview

0:mystore/0 Connected Secondary/Secondary UpToDate/UpToDate

Mount the device on the other node. If the file created on the primary is still there, DRBD is configured correctly.

[root@node2 ~]# drbd-overview

0:drbd/0 Connected Secondary/Secondary UpToDate/UpToDate C r-----

[root@node2 ~]# drbdadm primary mystore

[root@node2 ~]# mkdir /mydata

[root@node2 ~]# mount /dev/drbd0 /mydata

[root@node2 ~]# cd /mydata

[root@node2 mydata]# ls

lost+found testdrbd

Configure corosync + DRBD high availability

Stop DRBD before configuring

[root@node1 ~]# service drbd stop

[root@node2 ~]# service drbd stop

The resource agent that lets corosync/pacemaker manage DRBD is provided by linbit

crm(live)ra# classes

lsb

ocf / heartbeat linbit pacemaker

service

stonith

crm(live)ra# info ocf:linbit:drbd # show the help for the drbd resource agent

Define the DRBD resource and its master/slave clone

crm(live)configure# primitive mystor ocf:linbit:drbd params drbd_resource="mystore" op monitor role="Master" interval=10s timeout=20s op monitor role="Slave" interval=20s timeout=20s op start timeout=240s op stop timeout=100s

crm(live)configure# ms my_mystor mystor meta clone-max="2" clone-node-max="1" master-max="1" master-node-max="1" notify="true"

Define the filesystem mount on the DRBD device

crm(live)configure# primitive mydata ocf:heartbeat:Filesystem params device="/dev/drbd0" directory="/mydata" fstype="ext4" op monitor interval=20s timeout=40s op start timeout=60s op stop timeout=60s

Set the colocation constraint (the filesystem must run on the DRBD master)

crm(live)configure# colocation mydata_with_ms_mystor_master inf: mydata my_mystor:Master

Order constraint (mount only after DRBD has been promoted)

crm(live)configure# order mydata_after_ms_mystor_master Mandatory: my_mystor:promote mydata:start

Failover test

[root@node1 /]# crm status

Last updated: Sun May 31 14:16:28 2015

Last change: Sun May 31 14:16:13 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

3 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node1.xmfb.com ]

Slaves: [ node2.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node1.xmfb.com

[root@node1 ~]# crm node standby

[root@node2 ~]# crm status

Last updated: Sun May 31 22:10:17 2015

Last change: Sun May 31 22:09:20 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

3 Resources configured

Node node1.xmfb.com: standby

Online: [ node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node2.xmfb.com ]

Stopped: [ node1.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node2.xmfb.com

The DRBD roles have followed the switch as well

[root@node2 ~]# drbd-overview

0:mystore/0 Connected Primary/Secondary UpToDate/UpToDate /mydata ext4 4.9G 11M 4.6G 1%

MariaDB configuration

Deploy MariaDB on the primary node

Create the mysql user and the data directory

[root@node1 ~]# groupadd -r -g 306 mysql

[root@node1 ~]# useradd -r -g 306 -u 306 mysql

[root@node1 ~]# mkdir /mydata/data

[root@node1 ~]# chown -R mysql.mysql /mydata/data/

Install MariaDB

tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local/

cd /usr/local/

ln -s mariadb-5.5.43-linux-x86_64/ mysql

cd mysql/

chown -R root.mysql ./*

scripts/mysql_install_db --user=mysql --datadir=/mydata/data/

Set up the init script

cp support-files/mysql.server /etc/rc.d/init.d/mysqld

chkconfig --add mysqld

chkconfig mysqld off

Set up the configuration file

[root@node1 mysql]# cp support-files/my-large.cnf /etc/my.cnf

[root@node1 mysql]# vim /etc/my.cnf

thread_concurrency = 2 # number of CPU cores multiplied by 2

datadir = /mydata/data # data directory

innodb_file_per_table = 1 # one tablespace file per InnoDB table

Add the MariaDB binaries to PATH (files in /etc/profile.d are only sourced at login if they end in .sh)

echo 'export PATH=/usr/local/mysql/bin:$PATH' >> /etc/profile.d/mysql.sh

. /etc/profile.d/mysql.sh

Start and test

[root@node1 mysql]# service mysqld start

Starting MySQL... [ OK ]

[root@node1 mysql]# mysql

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 2

Server version: 5.5.43-MariaDB-log MariaDB Server

Copyright (c) 2000, 2015, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

Allow the root user to connect remotely

MariaDB [(none)]> GRANT ALL ON *.* TO 'root'@'172.16.%.%' IDENTIFIED BY 'centos';

MariaDB [(none)]> FLUSH PRIVILEGES;

When done, stop the mysql service

service mysqld stop

Make node1 the standby node

[root@node1 ~]# crm node standby

[root@node1 ~]# crm node online

[root@node2 ~]# crm status

Last updated: Wed Jun 3 21:19:10 2015

Last change: Wed Jun 3 21:19:07 2015

Stack: classic openais (with plugin)

Current DC: node1.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

3 Resources configured

Node node1.xmfb.com: standby

Online: [ node2.xmfb.com ]

Master/Slave Set: MS_Mysql [mysqldrbd]

Masters: [ node2.xmfb.com ]

Stopped: [ node1.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node2.xmfb.com

Deploy MariaDB on the standby node

Before deploying, confirm that the files created on the primary node are now available on the standby node

[root@node2 ~]# ll /mydata/

total 20

-rw-r--r-- 1 root root 0 May 31 21:46 a.txt

drwxr-xr-x 5 306 306 4096 May 31 22:16 data

drwx------ 2 root root 16384 May 31 21:46 lost+found

[root@node2 ~]# ll /mydata/data/

total 28716

-rw-rw---- 1 306 306 16384 May 31 22:16 aria_log.00000001

-rw-rw---- 1 306 306 52 May 31 22:16 aria_log_control

-rw-rw---- 1 306 306 18874368 May 31 22:16 ibdata1

-rw-rw---- 1 306 306 5242880 May 31 22:16 ib_logfile0

-rw-rw---- 1 306 306 5242880 May 31 22:16 ib_logfile1

drwx------ 2 306 root 4096 May 31 22:15 mysql

-rw-rw---- 1 306 306 476 May 31 22:16 mysql-bin.000001

-rw-rw---- 1 306 306 19 May 31 22:16 mysql-bin.index

-rw-r----- 1 306 root 2414 May 31 22:16 node1.xmfb.com.err

drwx------ 2 306 306 4096 May 31 22:15 performance_schema

drwx------ 2 306 root 4096 May 31 22:14 test

Create the mysql user and group on node2, with the same UID and GID as on node1 so that file ownership on the shared data matches

[root@node2 ~]# groupadd -r -g 306 mysql

[root@node2 ~]# useradd -r -g 306 -u 306 mysql

Install MariaDB; no initialization is needed because the data directory already exists on the DRBD device

tar xf mariadb-5.5.43-linux-x86_64.tar.gz -C /usr/local/

cd /usr/local/

ln -s mariadb-5.5.43-linux-x86_64/ mysql

cd mysql/

chown -R root.mysql ./*

Copy the configuration file

[root@node1 ~]# scp /etc/my.cnf node2:/etc/my.cnf

Set up the init script

cp support-files/mysql.server /etc/rc.d/init.d/mysqld

chkconfig --add mysqld

chkconfig mysqld off

Add the MariaDB binaries to PATH

echo 'export PATH=/usr/local/mysql/bin:$PATH' >> /etc/profile.d/mysql.sh

. /etc/profile.d/mysql.sh

Start MariaDB

[root@node2 ~]# service mysqld start

Create a database

MariaDB [(none)]> create database testdb;

Query OK, 1 row affected (0.06 sec)

MariaDB [(none)]> show databases;

+--------------------+

| Database           |

+--------------------+

| information_schema |

| mysql              |

| performance_schema |

| test               |

| testdb             |

+--------------------+

5 rows in set (0.00 sec)

Stop the mysql service

[root@node2 ~]# service mysqld stop

Switch the primary and secondary nodes

[root@node2 ~]# crm node standby

[root@node2 ~]# crm node online

[root@node2 ~]# crm status

Last updated: Sun May 31 22:32:38 2015

Last change: Sun May 31 22:32:33 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

3 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node1.xmfb.com ]

Slaves: [ node2.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node1.xmfb.com

Start the mysql service on node1

[root@node1 ~]# service mysqld start

Log in to the database; the database created on node2 is visible

MariaDB [(none)]> show databases;

+--------------------+

| Database           |

+--------------------+

| information_schema |

| mysql              |

| performance_schema |

| test               |

| testdb             |

+--------------------+

5 rows in set (0.00 sec)

When done checking, stop the mysql service

[root@node1 ~]# service mysqld stop

Configure the MySQL high-availability cluster

Define the VIP resource

crm(live)configure# primitive myip ocf:heartbeat:IPaddr params ip="172.16.4.1" op monitor interval=10s timeout=20s

Define the mysql service as a cluster resource

crm(live)configure# primitive myserver lsb:mysqld op monitor interval=20s timeout=20s

Define the colocation constraints

crm(live)configure# colocation myip_with_ms_mystor_master inf: myip my_mystor:Master

crm(live)configure# colocation myserver_with_mydata inf: myserver mydata

Define the order constraints

crm(live)configure# order myserver_after_mydata Mandatory: mydata:start myserver:start

crm(live)configure# order myserver_after_myip Mandatory: myip:start myserver:start

When done, run commit, then check the status

[root@node1 ~]# crm status

Last updated: Sun May 31 22:48:39 2015

Last change: Sun May 31 22:48:27 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

5 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node1.xmfb.com ]

Slaves: [ node2.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node1.xmfb.com

myip (ocf::heartbeat:IPaddr): Started node1.xmfb.com

myserver (lsb:mysqld): Started node1.xmfb.com

Verification

Once this is configured, other hosts can connect to mysql through the VIP

[root@node2 ~]# mysql -u root -h 172.16.4.1 -p

MariaDB [(none)]> show databases;

+--------------------+

| Database           |

+--------------------+

| information_schema |

| mysql              |

| performance_schema |

| test               |

| testdb             |

+--------------------+

5 rows in set (0.01 sec)

Failover test

[root@node1 ~]# crm node standby

node2 has now started the VIP and mysql resources

[root@node2 ~]# crm status

Last updated: Sun May 31 22:57:34 2015

Last change: Sun May 31 22:57:23 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

5 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node2.xmfb.com ]

Slaves: [ node1.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node2.xmfb.com

myip (ocf::heartbeat:IPaddr): Started node2.xmfb.com

myserver (lsb:mysqld): Started node2.xmfb.com
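When repeating this failover test, it helps to confirm from a script where each resource ended up. The following is a minimal sketch, assuming `crm status` prints primitive resources as `name (agent): Started node`; the helper name `resource_node` is hypothetical and not a crmsh command.

```shell
#!/bin/sh
# resource_node is a hypothetical helper, not part of crmsh.
# Reads `crm status` style text on stdin and prints the node on which
# the named primitive resource is reported as Started.
# $1 = resource name (for example myip)
resource_node() {
    awk -v res="$1" 'NF >= 3 && $1 == res && $(NF-1) == "Started" { print $NF }'
}

# Example use on either node:
#   crm status | resource_node myserver
```

The helper prints nothing for a resource that is stopped or unknown, so an empty result can be treated as "not running anywhere".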

Database connections still work

[root@node2 ~]# mysql -u root -h 172.16.4.1 -p

Enter password:

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 2

Server version: 5.5.43-MariaDB-log MariaDB Server

Copyright (c) 2000, 2015, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> show databases;

+--------------------+

| Database           |

+--------------------+

| information_schema |

| mysql              |

| performance_schema |

| test               |

| testdb             |

+--------------------+

5 rows in set (0.06 sec)

Create a table

MariaDB [(none)]> use testdb;

Database changed

MariaDB [testdb]> create table t1 (id int, name varchar(20));

Query OK, 0 rows affected (0.10 sec)

MariaDB [testdb]> show tables;

+------------------+

| Tables_in_testdb |

+------------------+

| t1               |

+------------------+

1 row in set (0.00 sec)

Switch the primary and secondary nodes again

[root@node2 ~]# crm node standby

[root@node2 ~]# crm node online

The resources move back to node1

[root@node2 ~]# crm status

Last updated: Sun May 31 23:03:37 2015

Last change: Sun May 31 23:03:32 2015

Stack: classic openais (with plugin)

Current DC: node2.xmfb.com - partition with quorum

Version: 1.1.11-97629de

2 Nodes configured, 2 expected votes

5 Resources configured

Online: [ node1.xmfb.com node2.xmfb.com ]

Master/Slave Set: my_mystor [mystor]

Masters: [ node1.xmfb.com ]

Slaves: [ node2.xmfb.com ]

mydata (ocf::heartbeat:Filesystem): Started node1.xmfb.com

myip (ocf::heartbeat:IPaddr): Started node1.xmfb.com

myserver (lsb:mysqld): Started node1.xmfb.com

Database access still works

MariaDB [testdb]> show tables;

+------------------+

| Tables_in_testdb |

+------------------+

| t1               |

+------------------+

1 row in set (0.00 sec)

Final Corosync configuration

crm(live)configure# show

node node1.xmfb.com \
attributes standby=on

node node2.xmfb.com \
attributes standby=off

primitive mydata Filesystem \
params device="/dev/drbd0" directory="/mydata" fstype=ext4 \
op monitor interval=20s timeout=40s \
op start timeout=60s interval=0 \
op stop timeout=60s interval=0

primitive myip IPaddr \
params ip=172.16.4.1 \
op monitor interval=10s timeout=20s

primitive myserver lsb:mysqld \
op monitor interval=20s timeout=20s

primitive mystor ocf:linbit:drbd \
params drbd_resource=mystore \
op monitor role=Master interval=10s timeout=20s \
op monitor role=Slave interval=20s timeout=20s \
op start timeout=240s interval=0 \
op stop timeout=100s interval=0

ms my_mystor mystor \
meta clone-max=2 clone-node-max=1 master-max=1 master-node-max=1 notify=true

colocation mydata_with_ms_mystor_master inf: mydata my_mystor:Master

colocation myip_with_ms_mystor_master inf: myip my_mystor:Master

colocation myserver_with_mydata inf: myserver mydata

order mydata_after_ms_mystor_master Mandatory: my_mystor:promote mydata:start

order myserver_after_mydata Mandatory: mydata:start myserver:start

order myserver_after_myip Mandatory: myip:start myserver:start

property cib-bootstrap-options: \
dc-version=1.1.11-97629de \
cluster-infrastructure="classic openais (with plugin)" \
expected-quorum-votes=2 \
stonith-enabled=false \
no-quorum-policy=ignore \
default-resource-stickiness=50 \
last-lrm-refresh=1433085584

Colorized corosync configuration example: