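Preface: I have recently been working on a company project to containerize Kafka and ZooKeeper and manage them with Rancher. I went through the official documentation and several books. The official approach fits poorly with our company's standards, for a number of reasons. Here are the main reasons I ended up building my own images. Everything below was tested on my own machine, with Kubernetes deployed from binaries plus Kafka. For production you need to configure memory, CPU, dynamically provisioned persistent storage and so on yourself.

1. The official Dockerfile does not let you choose your own JDK.

2. The official Dockerfile and the YAML manifests are only loosely tied together, and everyone has their own way of combining them.

3. It cannot be aligned with the company's existing standardized documentation for physical-machine deployments.

.....

Below I share the deployment that took me half a month to work out. If you are interested, feel free to add me as a contact and discuss it: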

https://github.com/renzhiyuan6666666/kubernetes-docker

I. ZooKeeper cluster deployment

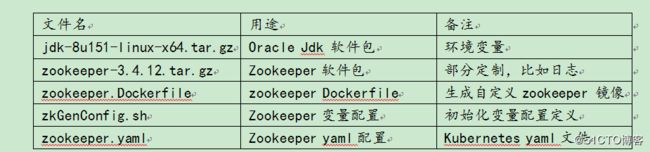

1.1) ZooKeeper file list


1.2) ZooKeeper file list explained

1.2.1) Oracle JDK package

jdk-8u151-linux-x64.tar.gz

The base image is CentOS 6.6. The JDK is installed under /app, and its environment variables are set in the Dockerfile.

1.2.2) ZooKeeper package

zookeeper-3.4.12.tar.gz

The base image is CentOS 6.6, and ZooKeeper is installed under /app.

1.2.3) ZooKeeper Dockerfile

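```dockerfile
# Base image
FROM centos:6.6

# Author information (MAINTAINER is deprecated; LABEL is the current equivalent)
LABEL maintainer="docker_user renzhiyuan"

# Standardized ZooKeeper and JDK versions
#ENV JAVA_VERSION="1.8.0_151"
#ENV ZK_VERSION="3.4.12"
ENV ZK_JDK_HOME=/app
ENV JAVA_HOME=/app/jdk1.8.0_151
ENV ZK_HOME=/app/zookeeper
ENV LANG=en_US.utf8
# /etc/profile is not read by the container's non-login shells, so put java on PATH here
ENV PATH=$JAVA_HOME/bin:$PATH

# Install basic tools
#RUN yum makecache
RUN yum install lsof yum-utils lrzsz net-tools nc -y >/dev/null 2>&1

# Create the installation directory and set ownership and permissions
RUN mkdir -p $ZK_JDK_HOME && chown -R root:root $ZK_JDK_HOME && chmod -R 755 $ZK_JDK_HOME

# Install and configure the JDK (ADD unpacks a local tar.gz archive)
ADD jdk-8u151-linux-x64.tar.gz /app
RUN echo "export JAVA_HOME=/app/jdk1.8.0_151" >>/etc/profile && \
    echo "export PATH=\$JAVA_HOME/bin:\$PATH" >>/etc/profile && \
    echo "export CLASSPATH=.:\$JAVA_HOME/lib/dt.jar:\$JAVA_HOME/lib/tools.jar" >>/etc/profile

# Install zookeeper-3.4.12; the related directories are already included in the package
ADD zookeeper-3.4.12.tar.gz /app
RUN ln -s /app/zookeeper-3.4.12 /app/zookeeper

# The config file, log rotation and JVM settings are standardized separately in zkGenConfig.sh
COPY zkGenConfig.sh /app/zookeeper/bin/
# zookeeper.yaml runs the script directly, so it must be executable
RUN chmod +x /app/zookeeper/bin/zkGenConfig.sh

# Exposed ports: client, JMX, quorum (server) and leader election
EXPOSE 2181 10052 2222 2223
```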

1.2.4)zkGenConfig.sh

```bash
#!/usr/bin/env bash
# Copyright 2016 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# ZooKeeper variables; values from the Pod environment (zookeeper.yaml) take precedence
#ZK_USER=${ZK_USER:-"root"}
#ZK_LOG_LEVEL=${ZK_LOG_LEVEL:-"INFO"}
#ZK_HOME=${ZK_HOME:-"/app/zookeeper"}
ZK_DATA_DIR=${ZK_DATA_DIR:-"/app/zookeeper/data"}
ZK_DATA_LOG_DIR=${ZK_DATA_LOG_DIR:-"/app/zookeeper/datalog"}
ZK_LOG_DIR=${ZK_LOG_DIR:-"/app/zookeeper/logs"}
ZK_CONF_DIR=${ZK_CONF_DIR:-"/app/zookeeper/conf"}
LOGGER_PROPS_FILE="$ZK_CONF_DIR/log4j.properties"
# Defaults for the values written to zoo.cfg, used if the Pod does not set them
ZK_CLIENT_PORT=${ZK_CLIENT_PORT:-2181}
ZK_SERVER_PORT=${ZK_SERVER_PORT:-2222}
ZK_ELECTION_PORT=${ZK_ELECTION_PORT:-2223}
ZK_TICK_TIME=${ZK_TICK_TIME:-3000}
ZK_INIT_LIMIT=${ZK_INIT_LIMIT:-10}
ZK_SYNC_LIMIT=${ZK_SYNC_LIMIT:-5}
ZK_MAX_CLIENT_CNXNS=${ZK_MAX_CLIENT_CNXNS:-100}
#ZK_MIN_SESSION_TIMEOUT=${ZK_MIN_SESSION_TIMEOUT:-$((ZK_TICK_TIME*2))}
#ZK_MAX_SESSION_TIMEOUT=${ZK_MAX_SESSION_TIMEOUT:-$((ZK_TICK_TIME*20))}
#ZK_SNAP_RETAIN_COUNT=${ZK_SNAP_RETAIN_COUNT:-3}
#ZK_PURGE_INTERVAL=${ZK_PURGE_INTERVAL:-0}
ID_FILE="$ZK_DATA_DIR/myid"
ZK_CONFIG_FILE="$ZK_CONF_DIR/zoo.cfg"
JAVA_ENV_FILE="$ZK_CONF_DIR/java.env"

# Number of replicas (mandatory, comes from the Pod environment)
#ZK_REPLICAS=3

# Short host name and DNS domain, e.g. zookeeper-0 and zookeeper-headless.default.svc.cluster.local
HOST=$(hostname -s)
DOMAIN=$(hostname -d)
#ipdrr=`ip a|grep eth1|grep inet|awk '{print $2}'|awk -F"/" '{print $1}'`

# Print one server.N=host:quorumPort:electionPort line per ensemble member
function print_servers() {
  for (( i=1; i<=ZK_REPLICAS; i++ )); do
    echo "server.$i=$NAME-$((i-1)).$DOMAIN:$ZK_SERVER_PORT:$ZK_ELECTION_PORT"
  done
}

# Check ZK_REPLICAS, take the ordinal suffix of the host name (0 from zookeeper-0)
# and write ordinal+1 to the myid file, so zookeeper-0 gets myid 1
function validate_env() {
  echo "Validating environment"
  if [ -z "$ZK_REPLICAS" ]; then
    echo "ZK_REPLICAS is a mandatory environment variable"
    exit 1
  fi
  if [[ $HOST =~ (.*)-([0-9]+)$ ]]; then
    NAME=${BASH_REMATCH[1]}
    ORD=${BASH_REMATCH[2]}
  else
    echo "Failed to extract ordinal from hostname $HOST"
    exit 1
  fi
  MY_ID=$((ORD+1))
  # No-op when the directories already ship in the package
  mkdir -p "$ZK_DATA_DIR" "$ZK_DATA_LOG_DIR"
  if [ ! -f "$ID_FILE" ]; then
    echo "$MY_ID" > "$ID_FILE"
  fi
  #echo "ZK_REPLICAS=$ZK_REPLICAS"
  #echo "MY_ID=$MY_ID"
  #echo "ZK_LOG_LEVEL=$ZK_LOG_LEVEL"
  #echo "ZK_DATA_DIR=$ZK_DATA_DIR"
  #echo "ZK_DATA_LOG_DIR=$ZK_DATA_LOG_DIR"
  #echo "ZK_LOG_DIR=$ZK_LOG_DIR"
  #echo "ZK_CLIENT_PORT=$ZK_CLIENT_PORT"
  #echo "ZK_SERVER_PORT=$ZK_SERVER_PORT"
  #echo "ZK_ELECTION_PORT=$ZK_ELECTION_PORT"
  #echo "ZK_TICK_TIME=$ZK_TICK_TIME"
  #echo "ZK_INIT_LIMIT=$ZK_INIT_LIMIT"
  #echo "ZK_SYNC_LIMIT=$ZK_SYNC_LIMIT"
  #echo "ZK_MAX_CLIENT_CNXNS=$ZK_MAX_CLIENT_CNXNS"
  #echo "ZK_MIN_SESSION_TIMEOUT=$ZK_MIN_SESSION_TIMEOUT"
  #echo "ZK_MAX_SESSION_TIMEOUT=$ZK_MAX_SESSION_TIMEOUT"
  #echo "ZK_HEAP_SIZE=$ZK_HEAP_SIZE"
  #echo "ZK_SNAP_RETAIN_COUNT=$ZK_SNAP_RETAIN_COUNT"
  #echo "ZK_PURGE_INTERVAL=$ZK_PURGE_INTERVAL"
  #echo "ENSEMBLE"
  #print_servers
  #echo "Environment validation successful"
}

# Append the settings to zoo.cfg (uncomment rm -f to start from an empty file)
function create_config() {
  #rm -f "$ZK_CONFIG_FILE"
  echo "dataDir=$ZK_DATA_DIR" >>"$ZK_CONFIG_FILE"
  echo "dataLogDir=$ZK_DATA_LOG_DIR" >>"$ZK_CONFIG_FILE"
  echo "tickTime=$ZK_TICK_TIME" >>"$ZK_CONFIG_FILE"
  echo "initLimit=$ZK_INIT_LIMIT" >>"$ZK_CONFIG_FILE"
  echo "syncLimit=$ZK_SYNC_LIMIT" >>"$ZK_CONFIG_FILE"
  echo "clientPort=$ZK_CLIENT_PORT" >>"$ZK_CONFIG_FILE"
  echo "maxClientCnxns=$ZK_MAX_CLIENT_CNXNS" >>"$ZK_CONFIG_FILE"
  if [ "$ZK_REPLICAS" -gt 1 ]; then
    print_servers >>"$ZK_CONFIG_FILE"
  fi
  echo "Wrote ZooKeeper configuration file to $ZK_CONFIG_FILE"
}

# Create the ZooKeeper directories (disabled: they ship in the package)
#function create_data_dirs() {
#  echo "Creating ZooKeeper data directories and setting permissions"
#  if [ ! -d $ZK_DATA_DIR ]; then
#    mkdir -p $ZK_DATA_DIR
#    chown -R $ZK_USER:$ZK_USER $ZK_DATA_DIR
#  fi
#  if [ ! -d $ZK_DATA_LOG_DIR ]; then
#    mkdir -p $ZK_DATA_LOG_DIR
#    chown -R $ZK_USER:$ZK_USER $ZK_DATA_LOG_DIR
#  fi
#  if [ ! -d $ZK_LOG_DIR ]; then
#    mkdir -p $ZK_LOG_DIR
#    chown -R $ZK_USER:$ZK_USER $ZK_LOG_DIR
#  fi
#  echo "Created ZooKeeper data directories and set permissions in $ZK_DATA_DIR"
#}

# Log configuration (disabled)
#function create_log_props () {
#  rm -f $LOGGER_PROPS_FILE
#  echo "Creating ZooKeeper log4j configuration"
#  echo "zookeeper.root.logger=CONSOLE" >> $LOGGER_PROPS_FILE
#  echo "zookeeper.console.threshold="$ZK_LOG_LEVEL >> $LOGGER_PROPS_FILE
#  echo "log4j.rootLogger=\${zookeeper.root.logger}" >> $LOGGER_PROPS_FILE
#  echo "log4j.appender.CONSOLE=org.apache.log4j.ConsoleAppender" >> $LOGGER_PROPS_FILE
#  echo "log4j.appender.CONSOLE.Threshold=\${zookeeper.console.threshold}" >> $LOGGER_PROPS_FILE
#  echo "log4j.appender.CONSOLE.layout=org.apache.log4j.PatternLayout" >> $LOGGER_PROPS_FILE
#  echo "log4j.appender.CONSOLE.layout.ConversionPattern=%d{ISO8601} [myid:%X{myid}] - %-5p [%t:%C{1}@%L] - %m%n" >> $LOGGER_PROPS_FILE
#  echo "Wrote log4j configuration to $LOGGER_PROPS_FILE"
#}

# Write java.env: JMX port and JVM flags used at startup
function create_java_env() {
  rm -f "$JAVA_ENV_FILE"
  echo "Creating JVM configuration file"
  echo '#!/bin/bash' >> "$JAVA_ENV_FILE"
  echo "export JMXPORT=10052" >> "$JAVA_ENV_FILE"
  echo "JVMFLAGS=\"\$JVMFLAGS -Xms256m -Xmx256m -Djute.maxbuffer=5000000 -Xloggc:gc.log -XX:+PrintGCApplicationStoppedTime -XX:+PrintGCApplicationConcurrentTime -XX:+PrintGC -XX:+PrintGCTimeStamps -XX:+PrintGCDetails -XX:ParallelGCThreads=8 -XX:+UseConcMarkSweepGC\"" >> "$JAVA_ENV_FILE"
  echo "Wrote JVM configuration to $JAVA_ENV_FILE"
}

validate_env && create_config && create_java_env && cat "$ZK_CONFIG_FILE" && cat "$JAVA_ENV_FILE"
```
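The myid and server.N logic is the part that most often goes wrong. It can be checked without starting a Pod. Below is a minimal sketch that reuses the script's regex and server.N format. The helper names zk_myid and zk_server_lines are hypothetical, introduced only for this check. The domain in the usage line assumes the default namespace.

```bash
#!/usr/bin/env bash
# Sketch: reproduce the myid / server.N logic of zkGenConfig.sh outside the container.
# zk_myid and zk_server_lines are hypothetical helpers, not part of ZooKeeper.

# Print ordinal+1 for a StatefulSet host name such as "zookeeper-0".
zk_myid() {
  local host=$1
  if [[ $host =~ (.*)-([0-9]+)$ ]]; then
    # 10# forces base 10 so an ordinal like "08" is not read as octal
    echo $(( 10#${BASH_REMATCH[2]} + 1 ))
  else
    echo "cannot extract ordinal from $host" >&2
    return 1
  fi
}

# Print the server.N lines for a StatefulSet name, replica count and DNS domain.
zk_server_lines() {
  local name=$1 replicas=$2 domain=$3 server_port=${4:-2222} election_port=${5:-2223}
  local i
  for (( i=1; i<=replicas; i++ )); do
    echo "server.$i=$name-$((i-1)).$domain:$server_port:$election_port"
  done
}
```

For example, zk_myid zookeeper-2 prints 3. The command zk_server_lines zookeeper 3 zookeeper-headless.default.svc.cluster.local prints the three lines that end up in zoo.cfg.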

1.2.5)zookeeper.yaml

```yaml
# Headless Service used for communication between the ZooKeeper members
apiVersion: v1
kind: Service
metadata:
  name: zookeeper-headless
  labels:
    app: zookeeper
spec:
  clusterIP: None
  publishNotReadyAddresses: true
  ports:
  - name: client
    port: 2181
    targetPort: client
  - name: server
    port: 2222
    targetPort: server
  - name: leader-election
    port: 2223
    targetPort: leader-election
  selector:
    app: zookeeper
---
# Service for access to ZooKeeper from outside the cluster
apiVersion: v1
kind: Service
metadata:
  name: zookeeper
  labels:
    app: zookeeper
spec:
  type: NodePort
  ports:
  - name: client
    port: 2181
    targetPort: 2181
    nodePort: 32181
    protocol: TCP
  selector:
    app: zookeeper
---
# PodDisruptionBudget: keep at least 2 Pods running during voluntary disruptions
# (policy/v1 needs Kubernetes 1.21+; older clusters use policy/v1beta1)
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: zk-pdb
spec:
  selector:
    matchLabels:
      app: zookeeper
  minAvailable: 2
---
# StatefulSet (apps/v1beta2 was removed in Kubernetes 1.16; apps/v1 exists since 1.9)
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zookeeper
spec:
  podManagementPolicy: OrderedReady
  replicas: 3
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: zookeeper
  serviceName: zookeeper-headless
  template:
    metadata:
      labels:
        app: zookeeper
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: "app"
                operator: In
                values:
                - zookeeper
            topologyKey: "kubernetes.io/hostname"
      containers:
      - name: zookeeper
        imagePullPolicy: Always
        image: 192.168.8.183/library/zookeeper-zyxf:3.4.12
        resources:
          requests:
            memory: "512Mi"   # "512m" would mean 0.512 bytes
            cpu: "500m"
        ports:
        - containerPort: 2181
          name: client
        - containerPort: 2222
          name: server
        - containerPort: 2223
          name: leader-election
        env:
        - name: ZK_REPLICAS
          value: "3"
        - name: ZK_DATA_DIR
          value: "/app/zookeeper/data"
        - name: ZK_DATA_LOG_DIR
          value: "/app/zookeeper/dataLog"
        - name: ZK_TICK_TIME
          value: "3000"
        - name: ZK_INIT_LIMIT
          value: "10"
        - name: ZK_SYNC_LIMIT
          value: "5"
        - name: ZK_MAX_CLIENT_CNXNS
          value: "100"
        - name: ZK_CLIENT_PORT
          value: "2181"
        - name: ZK_SERVER_PORT
          value: "2222"
        - name: ZK_ELECTION_PORT
          value: "2223"
        command:
        - sh
        - -c
        - /app/zookeeper/bin/zkGenConfig.sh && /app/zookeeper/bin/zkServer.sh start-foreground
        volumeMounts:
        - name: datadir
          mountPath: /app/zookeeper/dataLog
        # volumeMounts:
        # - name: data
        #   mountPath: /renzhiyuan/zookeeper
      volumes:
      - name: datadir
        hostPath:
          path: /zk
          type: DirectoryOrCreate
  # volumeClaimTemplates:
  # - metadata:
  #     name: data
  #   spec:
  #     accessModes: [ "ReadWriteOnce" ]
  #     storageClassName: local-storage
  #     resources:
  #       requests:
  #         storage: 3Gi
```

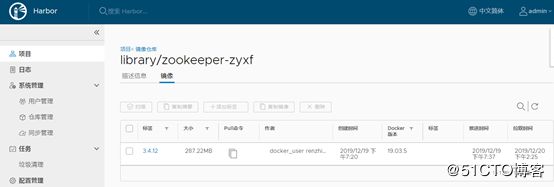

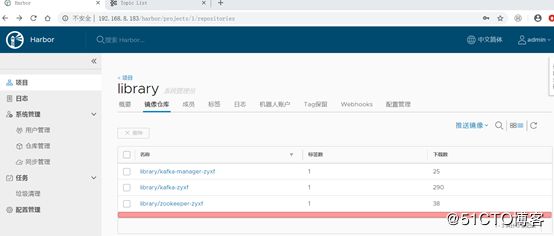

1.3) Build the ZooKeeper image and push it to the registry

1.3.1) Build the ZooKeeper image

docker build -t zookeeper:3.4.12 -f zookeeper.Dockerfile .

docker tag zookeeper:3.4.12 192.168.8.183/library/zookeeper-zyxf:3.4.12

1.3.2) Push the ZooKeeper image to the Harbor registry

docker login 192.168.8.183 -u admin -p renzhiyuan

docker push 192.168.8.183/library/zookeeper-zyxf:3.4.12

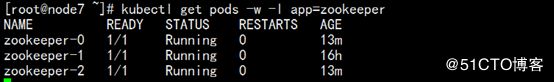

1.4) Deploy ZooKeeper


1.4.1) Apply zookeeper.yaml

kubectl apply -f zookeeper.yaml

1.4.2) Check the deployment progress

```shell
# Tab completion after "zookeeper-" lists the three Pods
[root@node7 ~]# kubectl describe pods zookeeper-
zookeeper-0  zookeeper-1  zookeeper-2
```

kubectl get pods -w -l app=zookeeper
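Instead of watching with -w, a script can also block until the StatefulSet is fully up. Below is a minimal sketch, assuming kubectl points at the cluster and the names from zookeeper.yaml. wait_for_statefulset is a hypothetical helper, and the 300-second default timeout is an arbitrary choice.

```bash
#!/usr/bin/env bash
# Sketch: wait until every Pod of a StatefulSet is updated and Ready.
# wait_for_statefulset is a hypothetical helper; the default timeout is an assumption.
wait_for_statefulset() {
  local name=$1 label=$2 timeout=${3:-300s}
  # Returns once all replicas of the current revision are ready (RollingUpdate strategy)
  kubectl rollout status "statefulset/$name" --timeout="$timeout" || return 1
  # Double-check the Ready condition of every Pod carrying the label
  kubectl wait --for=condition=Ready pod -l "$label" --timeout="$timeout"
}
```

Usage: wait_for_statefulset zookeeper app=zookeeper. The same call with kafka app=kafka works for the Kafka StatefulSet later on.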

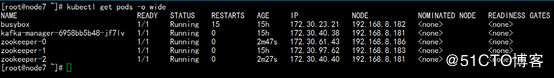

1.5) Verify the ZooKeeper cluster

1.5.1) Check how the zookeeper StatefulSet Pods are distributed across nodes

[root@node7 ~]# kubectl get pods -o wide

1.5.2) Check the host names of the zookeeper StatefulSet Pods

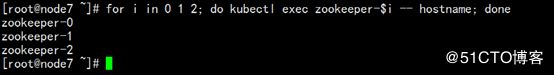

for i in 0 1 2; do kubectl exec zookeeper-$i -- hostname; done

1.5.3) Check the myid of each zookeeper StatefulSet Pod

for i in 0 1 2; do echo "myid zookeeper-$i";kubectl exec zookeeper-$i -- cat /app/zookeeper/data/myid; done

1.5.4) Check the FQDN (fully qualified domain name) of each zookeeper StatefulSet Pod

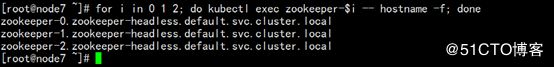

for i in 0 1 2; do kubectl exec zookeeper-$i -- hostname -f; done

1.5.5) Check DNS resolution of the zookeeper StatefulSet Pods

for i in 0 1 2; do kubectl exec -ti busybox -- nslookup zookeeper-$i.zookeeper-headless.default.svc.cluster.local; done
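The loop above assumes a Pod named busybox already exists in the default namespace; how it was created is not shown. Below is a sketch of one way to create a throwaway Pod for the lookups. The image tag busybox:1.28 is an assumption; it is often picked for DNS checks because nslookup in some newer busybox builds behaves differently. ensure_busybox is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: create a long-running busybox Pod used only for DNS lookups.
# ensure_busybox is a hypothetical helper; the image tag is an assumption.
ensure_busybox() {
  # Nothing to do if a Pod named busybox already exists
  if kubectl get pod busybox >/dev/null 2>&1; then
    return 0
  fi
  # --restart=Never makes kubectl run create a bare Pod; sleep keeps it alive for an hour
  kubectl run busybox --image=busybox:1.28 --restart=Never -- sleep 3600 &&
    kubectl wait --for=condition=Ready pod/busybox --timeout=120s
}
```

Remove the Pod afterwards with kubectl delete pod busybox.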

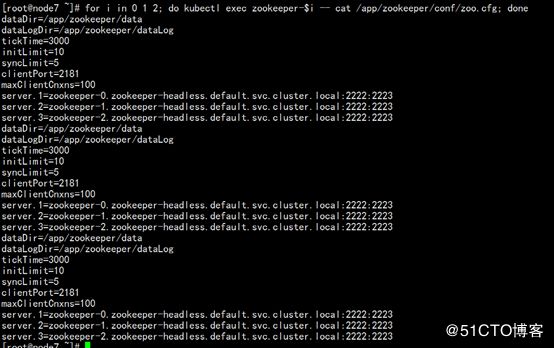

1.5.6) Check the standardized zoo.cfg of the zookeeper StatefulSet
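This step has no command above. zkGenConfig.sh builds zoo.cfg from the same environment variables on every Pod. Only myid differs, and it lives in a separate file, so the three copies should be identical. Below is a sketch that prints each copy and compares checksums. It assumes the default namespace, Pods zookeeper-0 to zookeeper-2, and the path /app/zookeeper/conf/zoo.cfg from the script. check_zoo_cfg is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch: verify that zoo.cfg is identical on every ZooKeeper Pod.
# check_zoo_cfg is a hypothetical helper; Pod names and path follow zookeeper.yaml / zkGenConfig.sh.
check_zoo_cfg() {
  local replicas=${1:-3} cfg=/app/zookeeper/conf/zoo.cfg
  local i sum first=""
  for (( i=0; i<replicas; i++ )); do
    echo "== zookeeper-$i"
    kubectl exec "zookeeper-$i" -- cat "$cfg" || return 1
    # md5sum runs inside the Pod; keep only the hash column
    sum=$(kubectl exec "zookeeper-$i" -- md5sum "$cfg" | awk '{print $1}')
    [ -n "$sum" ] || return 1
    if [ -z "$first" ]; then
      first=$sum
    elif [ "$sum" != "$first" ]; then
      echo "zoo.cfg on zookeeper-$i differs from zookeeper-0" >&2
      return 1
    fi
  done
  echo "zoo.cfg identical on $replicas Pods"
}
```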

1.5.7) Check the zookeeper StatefulSet cluster status

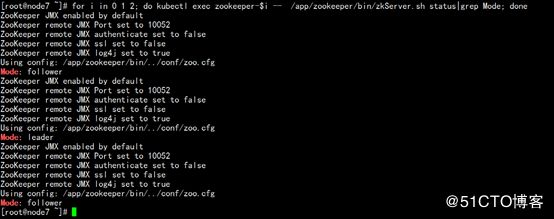

for i in 0 1 2; do kubectl exec zookeeper-$i -- /app/zookeeper/bin/zkServer.sh status|grep Mode; done
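With three members, the Mode lines should show exactly one leader and two followers. Below is a sketch that turns the loop above into a pass/fail check. It relies on zkServer.sh status printing "Mode: leader" or "Mode: follower", as the 3.4 line does. check_zk_modes is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch: check that the ensemble has exactly one leader and replicas-1 followers.
# check_zk_modes is a hypothetical helper; Pod names follow zookeeper.yaml.
check_zk_modes() {
  local replicas=${1:-3} i modes=""
  for (( i=0; i<replicas; i++ )); do
    # Keep only the word after "Mode:" (leader, follower, standalone)
    modes+=$(kubectl exec "zookeeper-$i" -- /app/zookeeper/bin/zkServer.sh status 2>/dev/null |
      awk '/^Mode:/ {print $2}')$'\n'
  done
  local leaders followers
  leaders=$(grep -c '^leader$' <<<"$modes")
  followers=$(grep -c '^follower$' <<<"$modes")
  echo "leaders=$leaders followers=$followers"
  [ "$leaders" -eq 1 ] && [ "$followers" -eq $((replicas - 1)) ]
}
```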

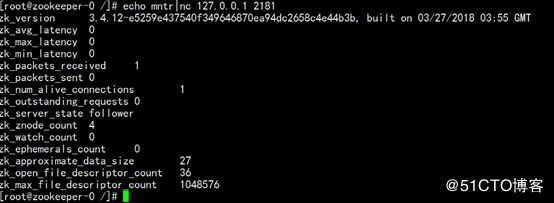

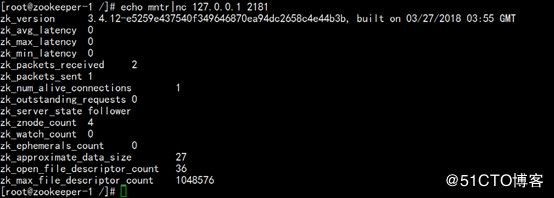

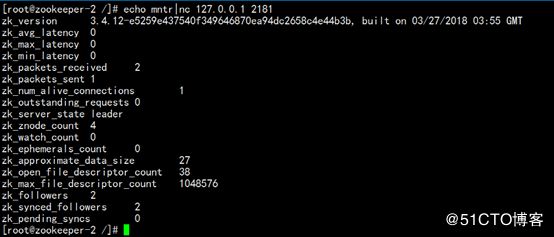

1.5.8) Check the zookeeper StatefulSet with the four-letter-word commands
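This step has no command above. ZooKeeper 3.4.x answers four-letter words such as ruok (a healthy server replies imok) and srvr on the client port. The Dockerfile installs nc, so the check can run inside each Pod. Below is a sketch. It assumes the four-letter words are not restricted in zoo.cfg (the 3.4 line allows them by default) and that nc is on the PATH in the image. zk_4lw is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: send a ZooKeeper four-letter word to every Pod through nc inside the container.
# zk_4lw is a hypothetical helper; port 2181 matches ZK_CLIENT_PORT in zookeeper.yaml.
zk_4lw() {
  local cmd=${1:-ruok} replicas=${2:-3} i
  for (( i=0; i<replicas; i++ )); do
    printf 'zookeeper-%d %s: ' "$i" "$cmd"
    # The word is passed to sh -c as $1, so it needs no extra quoting
    kubectl exec "zookeeper-$i" -- sh -c 'echo "$1" | nc localhost 2181' _ "$cmd" || return 1
    echo
  done
}
```

zk_4lw ruok should print imok for each Pod; zk_4lw srvr shows the mode, latency and connection counts.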

1.6) Scaling out the ZooKeeper cluster
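ZooKeeper 3.4 has no dynamic reconfiguration. The server.N list written by zkGenConfig.sh is therefore fixed at startup from ZK_REPLICAS. After a plain kubectl scale, the new Pods would still run with ZK_REPLICAS=3, so their own myid would be missing from the server list.

Below is a hedged sketch, assuming the StatefulSet and the container are both named zookeeper as in zookeeper.yaml. It changes replicas and ZK_REPLICAS in one strategic-merge patch, so the changed Pod template also rolls the existing members onto the new list. scale_zk is a hypothetical helper.

Keep the following in mind:
- The ensemble may briefly lose quorum during the rolling restart.
- The anti-affinity rule needs at least as many schedulable nodes as replicas.
- minAvailable in zk-pdb should be raised to keep a majority (for example 3 for 5 members).

Try this outside production first.

```bash
#!/usr/bin/env bash
# Sketch: grow the ZooKeeper StatefulSet together with its static server list.
# scale_zk is a hypothetical helper; names follow zookeeper.yaml.
scale_zk() {
  local replicas=$1
  # A ZooKeeper ensemble should have an odd number of members
  if ! [[ $replicas =~ ^[0-9]+$ ]] || (( replicas % 2 == 0 )); then
    echo "replica count must be an odd number, got '$replicas'" >&2
    return 1
  fi
  # Strategic merge patch: containers and env entries are merged by their "name" key
  local patch
  patch=$(printf '{"spec":{"replicas":%d,"template":{"spec":{"containers":[{"name":"zookeeper","env":[{"name":"ZK_REPLICAS","value":"%d"}]}]}}}}' "$replicas" "$replicas")
  kubectl patch statefulset zookeeper -p "$patch" || return 1
  kubectl rollout status statefulset/zookeeper
}
```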

II. Kafka cluster deployment

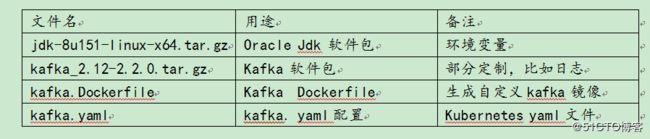

2.1) Kafka file list

2.2) Kafka file list explained

2.2.1) Oracle JDK package

jdk-8u151-linux-x64.tar.gz

The base image is CentOS 6.6. The JDK is installed under /app, and its environment variables are set in the Dockerfile.

2.2.2) Kafka package

kafka_2.12-2.2.0.tar.gz

The base image is CentOS 6.6, and Kafka is installed under /app.

2.2.3) Kafka Dockerfile

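```dockerfile
# Base image
FROM centos:6.6

# Author information (MAINTAINER is deprecated; LABEL is the current equivalent)
LABEL maintainer="docker_user"

# Standardized Kafka and JDK versions
ENV JAVA_VERSION="1.8.0_151"
ENV KAFKA_VERSION="2.2.0"
ENV KAFKA_JDK_HOME=/app
ENV JAVA_HOME=/app/jdk1.8.0_151
ENV KAFKA_HOME=/app/kafka
ENV LANG=en_US.utf8
# /etc/profile is not read by the container's non-login shells, so put java on PATH here
ENV PATH=$JAVA_HOME/bin:$PATH

# Install basic tools
#RUN yum makecache
RUN yum install lsof yum-utils lrzsz net-tools nc -y >/dev/null 2>&1

# Create the installation directory and set ownership and permissions
RUN mkdir -p $KAFKA_JDK_HOME && chown -R root:root $KAFKA_JDK_HOME && chmod -R 755 $KAFKA_JDK_HOME

# Install and configure the JDK (ADD unpacks a local tar.gz archive)
ADD jdk-8u151-linux-x64.tar.gz /app
RUN echo "export JAVA_HOME=/app/jdk1.8.0_151" >>/etc/profile && \
    echo "export PATH=\$JAVA_HOME/bin:\$PATH" >>/etc/profile && \
    echo "export CLASSPATH=.:\$JAVA_HOME/lib/dt.jar:\$JAVA_HOME/lib/tools.jar" >>/etc/profile

# Install Kafka and link it to /app/kafka
ADD kafka_2.12-2.2.0.tar.gz /app
RUN ln -s /app/kafka_2.12-2.2.0 /app/kafka

# Config file, log rotation and JVM settings are meant to be standardized in kafkaGenConfig.sh;
# no such script is copied in this version, the broker settings are passed with --override in kafka.yaml

# Exposed ports
EXPOSE 9092 9999
```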

2.2.4)kafka.yaml

```yaml
# Headless Service used for communication between the Kafka brokers
apiVersion: v1
kind: Service
metadata:
  name: kafka-headless
  labels:
    app: kafka
spec:
  type: ClusterIP
  clusterIP: None
  ports:
  - name: kafka
    port: 9092
    targetPort: kafka
  selector:
    app: kafka
---
# Service for access to Kafka from outside the cluster
apiVersion: v1
kind: Service
metadata:
  name: kafka
  labels:
    app: kafka
spec:
  type: NodePort
  ports:
  - name: kafka
    port: 9092
    targetPort: 9092
    nodePort: 32192
    protocol: TCP
  selector:
    app: kafka
---
# PodDisruptionBudget: keep at least 2 Pods running during voluntary disruptions
# (policy/v1 needs Kubernetes 1.21+; older clusters use policy/v1beta1)
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: kafka-pdb
spec:
  selector:
    matchLabels:
      app: kafka
  minAvailable: 2
---
# StatefulSet (apps/v1beta2 was removed in Kubernetes 1.16; apps/v1 exists since 1.9)
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  podManagementPolicy: OrderedReady
  replicas: 3
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: kafka
  serviceName: kafka-headless
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: "app"
                operator: In
                values:
                - kafka
            topologyKey: "kubernetes.io/hostname"
      containers:
      - name: kafka
        imagePullPolicy: Always
        image: 192.168.8.183/library/kafka-zyxf:2.2.0
        resources:
          requests:
            memory: "500Mi"   # "500m" would mean 0.5 bytes
            cpu: "256m"
        ports:
        - containerPort: 9092
          name: kafka
        env:
        - name: KAFKA_HEAP_OPTS
          value: "-Xmx256M -Xms256M"
        command:
        - sh
        - -c
        - "/app/kafka/bin/kafka-server-start.sh /app/kafka/config/server.properties \
          --override broker.id=${HOSTNAME##*-} \
          --override zookeeper.connect=zookeeper:2181 \
          --override listeners=PLAINTEXT://:9092 \
          --override advertised.listeners=PLAINTEXT://:9092 \
          --override broker.id.generation.enable=false \
          --override auto.create.topics.enable=false \
          --override min.insync.replicas=2 \
          --override log.dir= \
          --override log.dirs=/app/kafka/kafka-logs \
          --override offsets.retention.minutes=10080 \
          --override default.replication.factor=3 \
          --override queued.max.requests=2000 \
          --override num.network.threads=8 \
          --override num.io.threads=16 \
          --override socket.send.buffer.bytes=1048576 \
          --override socket.receive.buffer.bytes=1048576 \
          --override num.replica.fetchers=4 \
          --override replica.fetch.max.bytes=5242880 \
          --override replica.socket.receive.buffer.bytes=1048576"
        volumeMounts:
        - name: datadir
          mountPath: /app/kafka/kafka-logs
        # volumeMounts:
        # - name: data
        #   mountPath: /renzhiyuan/kafka
      volumes:
      - name: datadir
        hostPath:
          path: /kafka
          type: DirectoryOrCreate
        # emptyDir: {}
  # volumeClaimTemplates:
  # - metadata:
  #     name: data
  #   spec:
  #     accessModes: [ "ReadWriteOnce" ]
  #     storageClassName: local-storage
  #     resources:
  #       requests:
  #         storage: 3Gi
```
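Two details of the Kafka start command are easy to miss:

- Kubernetes only expands $(VAR) references in command, so ${HOSTNAME##*-} is left for sh to expand at runtime. It strips everything up to the last "-" of the Pod host name, so kafka-0 gets broker.id 0. ZooKeeper's myid, by contrast, is ordinal+1.
- The YAML string is double-quoted, so each trailing backslash escapes the line break and the whole block reaches sh as one line.

Below is a small sketch of the ID derivation that runs anywhere. kafka_broker_id is a hypothetical name, and the numeric check is an addition that the original command does not perform.

```bash
#!/usr/bin/env bash
# Sketch: derive broker.id the same way as the StatefulSet command in kafka.yaml.
# kafka_broker_id is a hypothetical helper; the numeric check is an extra safeguard.
kafka_broker_id() {
  local host=${1:-$HOSTNAME}
  local id=${host##*-}   # text after the last '-'
  # Reject host names without a numeric ordinal suffix
  [[ $id =~ ^[0-9]+$ ]] || { echo "no ordinal in hostname '$host'" >&2; return 1; }
  echo "$id"
}
```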

2.3) Build the Kafka image and push it to the registry

2.3.1) Build the Kafka image

docker build -t kafka:2.2.0 -f kafka.Dockerfile .

docker tag kafka:2.2.0 192.168.8.183/library/kafka-zyxf:2.2.0

2.3.2) Push the Kafka image to the Harbor registry

docker login 192.168.8.183 -u admin -p renzhiyuan

docker push 192.168.8.183/library/kafka-zyxf:2.2.0

2.4) Deploy Kafka

2.4.1) Apply kafka.yaml

kubectl apply -f kafka.yaml

2.4.2) Check the deployment progress

```shell
# Tab completion after "kafka-" lists the three Pods
[root@node7 ~]# kubectl describe pods kafka-
kafka-0  kafka-1  kafka-2
```

kubectl get pods -w -l app=kafka

2.5) Verify the Kafka cluster

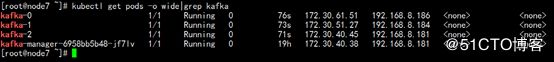

2.5.1) Check how the kafka StatefulSet Pods are distributed across nodes

2.5.2) Check the host names of the kafka StatefulSet Pods

for i in 0 1 2; do kubectl exec kafka-$i -- hostname ; done

2.5.3) Check the FQDN (fully qualified domain name) of each kafka StatefulSet Pod

for i in 0 1 2; do kubectl exec kafka-$i -- hostname -f; done

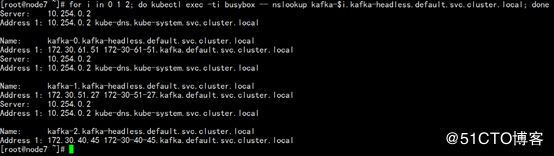

2.5.4) Check DNS resolution of the kafka StatefulSet Pods

for i in 0 1 2; do kubectl exec -ti busybox -- nslookup kafka-$i.kafka-headless.default.svc.cluster.local; done

2.5.5) Check the standardized server.properties settings of the kafka StatefulSet

for i in 0 1 2; do echo kafka-$i; kubectl exec kafka-$i -- cat /app/kafka/logs/server.log | grep "auto.create.topics.enable = false"; done

2.5.6) Verify the kafka StatefulSet cluster

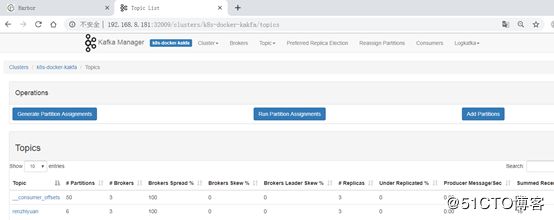

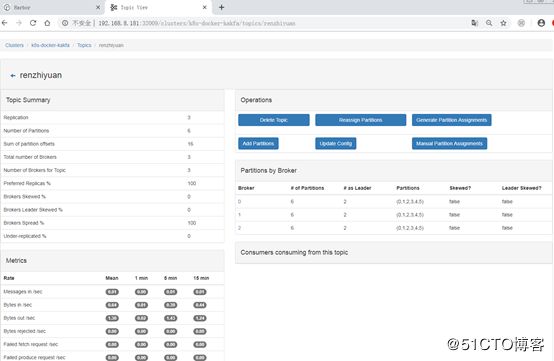

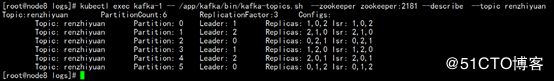

Create a topic

kubectl exec kafka-1 -- /app/kafka/bin/kafka-topics.sh --create --zookeeper zookeeper:2181 --replication-factor 3 --partitions 6 --topic renzhiyuan

Check the topic details

kubectl exec kafka-1 -- /app/kafka/bin/kafka-topics.sh --describe --zookeeper zookeeper:2181 --topic renzhiyuan

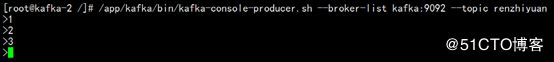

Produce messages (from a shell inside one of the Kafka Pods):

/app/kafka/bin/kafka-console-producer.sh --broker-list kafka:9092 --topic renzhiyuan

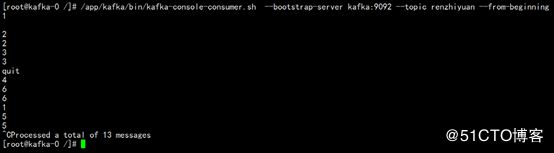

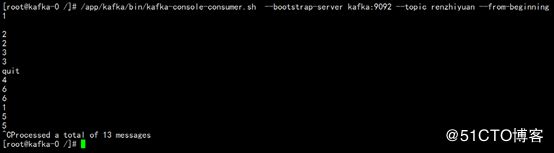

Consume messages

/app/kafka/bin/kafka-console-consumer.sh --bootstrap-server kafka:9092 --from-beginning --topic renzhiyuan
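The two console commands are interactive. For a scripted smoke test, one message can be produced through one broker and read back through another. Below is a sketch, assuming the topic renzhiyuan already exists and the Pod names and paths from kafka.yaml. kafka_roundtrip is a hypothetical helper, and the 20-second consumer timeout is arbitrary.

```bash
#!/usr/bin/env bash
# Sketch: produce one unique message via kafka-0 and read it back via kafka-1.
# kafka_roundtrip is a hypothetical helper; the timeout value is an assumption.
kafka_roundtrip() {
  local topic=${1:-renzhiyuan} msg
  msg="smoke-$(date +%s)-$RANDOM"
  # -i forwards stdin to the console producer, which exits when stdin is closed
  echo "$msg" | kubectl exec -i kafka-0 -- /app/kafka/bin/kafka-console-producer.sh \
    --broker-list kafka:9092 --topic "$topic" || return 1
  # Read from the beginning; the consumer exits after 20 s without new messages
  kubectl exec kafka-1 -- /app/kafka/bin/kafka-console-consumer.sh \
    --bootstrap-server kafka:9092 --topic "$topic" --from-beginning --timeout-ms 20000 |
    grep -qxF "$msg" && echo "round trip OK: $msg"
}
```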

2.6) Scaling out the Kafka cluster

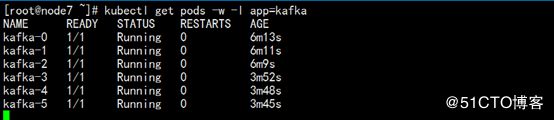

2.6.1) Scale Kafka out to 6 instances

```shell
[root@node7 ~]# kubectl scale --replicas=6 StatefulSet/kafka
statefulset.apps/kafka scaled
```

2.6.2) Check the scale-out progress

```shell
# Tab completion after "kafka-" now lists six Pods
[root@node7 ~]# kubectl describe pods kafka-
kafka-0  kafka-2  kafka-4
kafka-1  kafka-3  kafka-5
```

kubectl get pods -w -l app=kafka

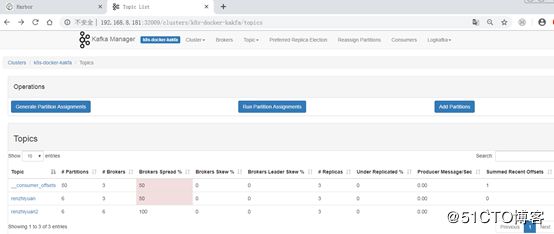

Create a topic

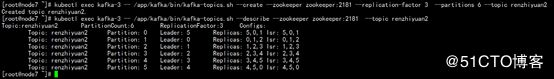

kubectl exec kafka-3 -- /app/kafka/bin/kafka-topics.sh --create --zookeeper zookeeper:2181 --replication-factor 3 --partitions 6 --topic renzhiyuan2

Check the topic details

kubectl exec kafka-3 -- /app/kafka/bin/kafka-topics.sh --describe --zookeeper zookeeper:2181 --topic renzhiyuan2
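Scaling the StatefulSet only adds brokers. New topics such as renzhiyuan2 can be placed on all six, but Kafka does not move the partitions of existing topics (such as renzhiyuan) onto kafka-3 to kafka-5 by itself. In Kafka 2.2, kafka-reassign-partitions.sh can propose a plan for that.

Below is a sketch that only generates the plan and moves nothing. It assumes:
- Broker IDs 0 to 5, which follow from the host-name-derived broker.id.
- /tmp/topics.json as an arbitrary scratch path inside the Pod.

plan_reassignment is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: generate (not execute) a partition reassignment plan after scaling Kafka out.
# plan_reassignment is a hypothetical helper; /tmp/topics.json is an arbitrary path in the Pod.
plan_reassignment() {
  local topic=${1:-renzhiyuan} brokers=${2:-0,1,2,3,4,5}
  # Write the topics-to-move JSON inside the Pod
  printf '{"topics":[{"topic":"%s"}],"version":1}\n' "$topic" |
    kubectl exec -i kafka-0 -- sh -c 'cat > /tmp/topics.json' || return 1
  # --generate prints the current and a proposed assignment; nothing is moved yet
  kubectl exec kafka-0 -- /app/kafka/bin/kafka-reassign-partitions.sh \
    --zookeeper zookeeper:2181 --topics-to-move-json-file /tmp/topics.json \
    --broker-list "$brokers" --generate
}
```

After reviewing the proposal, save it to a file and apply it with --reassignment-json-file and --execute, then check it with --verify.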

III. Kafka Manager deployment

3.1) Kafka Manager file list

3.2) Kafka Manager file list explained

3.3) Build the Kafka Manager image and push it to the registry

3.3.1) Build the Kafka Manager image

docker build -t kafka-manager:1.3.3.18 -f manager.Dockerfile .

docker tag kafka-manager:1.3.3.18 192.168.8.183/library/kafka-manager-zyxf:1.3.3.18

3.3.2) Push the Kafka Manager image to the Harbor registry

docker login 192.168.8.183 -u admin -p renzhiyuan

docker push 192.168.8.183/library/kafka-manager-zyxf:1.3.3.18

3.4) Deploy Kafka Manager

[root@node7 ~]# kubectl apply -f manager.yaml

3.5) Verify Kafka Manager

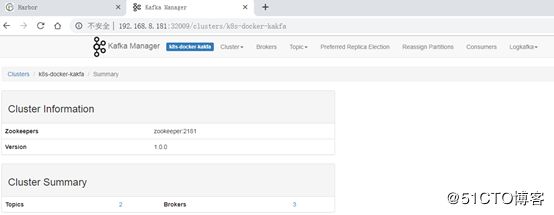

http://192.168.8.181:32009/

3.5.1) Verification before scaling out