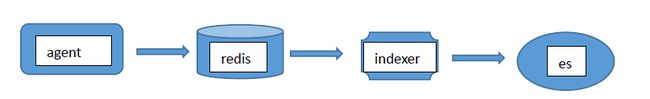

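1. Topology:

Roles:

Agent: Logstash, IP: 192.168.10.7

Redis queue: IP: 192.168.10.100

Indexer: Logstash, IP: 192.168.10.205

ES + Kibana: hosted on 192.168.10.100 (in a large logging environment they can be deployed on separate hosts)

Note: the nginx log format used on one of the log servers is as follows: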

log_format backend '$http_x_forwarded_for [$time_local] '

'"$host" "$request" $status $body_bytes_sent '

'"$http_referer" "$http_user_agent"';

1. Agent configuration on 192.168.10.7:

[luohui@BJ-huasuan-h-web-07 ~]$ cat /home/luohui/logstash-5.0.0/etc/logstash-nginx.conf

input {

file {

path => ["/home/data/logs/access.log"]

type => "nginx_access"

}

}

output {

if [type] == "nginx_access"{

redis {

host => ["192.168.10.100:6379"]

data_type =>"list"

key => "nginx"

}

}

}

## Note: the agent only ships logs, so its performance impact is small. It reads the access.log file and sends each line to the remote Redis.
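The hand-off between agent and indexer can be pictured with a small Python sketch. It assumes the usual behaviour of Logstash's Redis plugins with `data_type => "list"`: the output appends each event, serialized as JSON, to the tail of the list, and the input pops events from the head. `FakeRedisList`, `ship` and `drain` are hypothetical names, and the class is an in-memory stand-in, not a real Redis client.

```python
import json
from collections import deque


class FakeRedisList:
    """In-memory stand-in for a single Redis list key (illustration only)."""

    def __init__(self):
        self._items = deque()

    def rpush(self, value):
        # Append to the tail, like Redis RPUSH; return the new length.
        self._items.append(value)
        return len(self._items)

    def lpop(self):
        # Pop from the head, like Redis LPOP; None when the list is empty.
        return self._items.popleft() if self._items else None


def ship(queue, line, log_type="nginx_access"):
    """Agent side: wrap a raw log line in an event and enqueue it as JSON."""
    event = {"message": line, "type": log_type}
    return queue.rpush(json.dumps(event))


def drain(queue):
    """Indexer side: dequeue and decode events in arrival order."""
    events = []
    raw = queue.lpop()
    while raw is not None:
        events.append(json.loads(raw))
        raw = queue.lpop()
    return events
```

Because the list is consumed first-in, first-out, the indexer can be stopped and restarted while the agent keeps queueing lines, as long as Redis has room to hold them.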

2. Indexer configuration on 192.168.10.205:

[root@mail etc]# cat logstash_nginx.conf

input {

redis {

host => "192.168.10.100"

port => 6379

data_type => "list"

key => "nginx"

}

}

filter {

grok {

match =>

{"message" => "%{IPORHOST:clientip} \[%{HTTPDATE:timestamp}\] %{NOTSPACE:http_name} \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})\" %{NUMBER:response} (?:%{NUMBER:bytes:float}|-) %{QS:referrer} %{QS:agent}"

}

}

date {

match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]

}

geoip {

source => "clientip"

target => "geoip"

database => "/test/logstash-5.0.0/GeoLite2-City.mmdb"

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

}

mutate {

convert => [ "[geoip][coordinates]", "float"]

}

}

output {

elasticsearch {

action => "index"

hosts =>"192.168.10.100:9200"

index => "logstash-nginx-%{+yyyy.MM.dd}"

}

}

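## Note: the indexer reads the events stored in Redis under the key nginx, then parses each line with the grok regular expression to extract fields.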
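To make the grok and date steps more concrete, here is a rough Python equivalent for the `backend` format. It is only a sketch: the regular expression approximates the grok pattern rather than reproducing it (for example, it strips the quotes that `%{QS}` would keep), the sample line in the usage note is invented, and `parse_line` is a hypothetical name.

```python
import re
from datetime import datetime, timezone

# Approximation of the grok pattern used by the indexer for the backend format.
LINE_RE = re.compile(
    r'(?P<clientip>\S+) \[(?P<timestamp>[^\]]+)\] (?P<http_name>\S+) '
    r'"(?P<verb>\w+) (?P<request>\S+)(?: HTTP/(?P<httpversion>[\d.]+))?" '
    r'(?P<response>\d+) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def parse_line(line):
    """Return a dict of fields, or None when the line does not match."""
    m = LINE_RE.match(line)
    if m is None:
        return None
    event = m.groupdict()
    # Like the date filter: turn the nginx time string into a UTC timestamp.
    local = datetime.strptime(event["timestamp"], "%d/%b/%Y:%H:%M:%S %z")
    event["@timestamp"] = local.astimezone(timezone.utc)
    # Like bytes:float in grok; "-" means no body was sent.
    event["bytes"] = None if event["bytes"] == "-" else float(event["bytes"])
    return event
```

For an invented line such as `203.0.113.9 [10/Oct/2016:13:55:36 +0800] "www.example.com" "GET /index.html HTTP/1.1" 200 612 "-" "Mozilla/5.0"`, the function yields `clientip`, `verb`, `request`, `response` and the other fields, with `@timestamp` converted to UTC.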

The geoip filter reads a database that we downloaded locally. On Linux the current one is GeoLite2-City.mmdb, which can be downloaded online.
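The geoip block in the configuration above also builds `[geoip][coordinates]`: adding the same field twice turns it into a two-element array, and the mutate filter then converts the interpolated strings to floats. A minimal Python sketch of that effect follows; `add_coordinates` is a hypothetical helper and the event layout is assumed from the configuration, not taken from real output.

```python
def add_coordinates(event):
    """Mimic the two add_field lines plus mutate/convert on one event dict."""
    geo = event.get("geoip")
    if not geo or "longitude" not in geo or "latitude" not in geo:
        # Lookup failed or the address is private: leave the event unchanged.
        return event
    # add_field interpolates values as strings; repeating the name makes a list.
    as_strings = [str(geo["longitude"]), str(geo["latitude"])]
    # mutate { convert => ... "float" } turns each element back into a number.
    geo["coordinates"] = [float(value) for value in as_strings]
    return event
```

Note the order: longitude first, then latitude, which is the order Elasticsearch expects when a geo point is given as an array.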

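Remark: almost all of the real work is Logstash configuration; the other components install with their defaults. Remember to start Elasticsearch and Redis so that their ports are listening, and start Logstash last to import the data.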

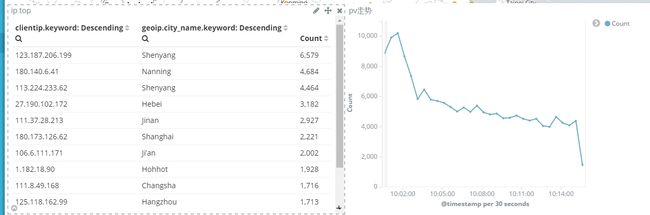

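This part is simple: once the City database is in use, choose the Tile map visualization:

This is the map bundled with Kibana, which shows city names in English. We switch to AutoNavi (Gaode) map tiles so that city names are displayed in Chinese.

3. Edit kibana.yml and add the following URL: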

tilemap.url: "http://webrd02.is.autonavi.com/appmaptile?lang=zh_cn&size=1&scale=1&style=8&x={x}&y={y}&z={z}"
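The `{x}`, `{y}` and `{z}` placeholders are filled in by the map client for every tile it requests. The Python sketch below shows how such a template expands, assuming the standard Web Mercator XYZ tile numbering; `lonlat_to_tile` and `tile_url` are hypothetical helpers, and the query string is written with the `?` that separates it from the `appmaptile` path.

```python
import math

TILE_URL = ("http://webrd02.is.autonavi.com/appmaptile"
            "?lang=zh_cn&size=1&scale=1&style=8&x={x}&y={y}&z={z}")


def lonlat_to_tile(lon, lat, zoom):
    """Return the (x, y) tile indices covering a point at the given zoom."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    return x, y


def tile_url(lon, lat, zoom):
    """Fill the URL template for the tile that contains the point."""
    x, y = lonlat_to_tile(lon, lat, zoom)
    return TILE_URL.format(x=x, y=y, z=zoom)
```

Kibana performs this substitution itself; the sketch is only meant to show why the three placeholders must be kept literally in kibana.yml.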

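4. Restart Kibana and the map appears as follows:

5. At this point the setup is mostly complete. The remaining charts can be built with Kibana's aggregations once you are familiar with it.

6. Some nginx installations use the following default format: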

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

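7. The grok pattern below handles this format. Logstash already ships a set of default patterns, which can be found under:

vendor/bundle/jruby/1.9/gems/logstash-patterns-core-4.0.2/patterns

8. Change into this directory and create the pattern file: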

[root@controller logstash-5.0.0]# cd vendor/bundle/jruby/1.9/gems/logstash-patterns-core-4.0.2/patterns

[root@controller patterns]# cat nginx

NGUSERNAME [a-zA-Z\.\@\-\+_%]+

NGUSER %{NGUSERNAME}

NGINXACCESS %{IPORHOST:clientip} - %{NGUSER:remote_user} \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})\" %{NUMBER:response} (?:%{NUMBER:bytes:float}|-) %{QS:referrer} %{QS:agent} %{QS:http_x_forwarded_for}
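The pattern file works by composition: `NGUSER` refers to `NGUSERNAME`, and `NGINXACCESS` refers to both custom and built-in patterns. The following Python sketch imitates that expansion with a tiny pattern table. It is not how grok is implemented, only an illustration; `PATTERNS`, `expand` and `grok_match` are hypothetical names, and the `NOTSPACE` entry is a simplified stand-in for the built-in pattern.

```python
import re

# A tiny pattern library; NGUSERNAME is copied from the nginx pattern file.
PATTERNS = {
    "NGUSERNAME": r"[a-zA-Z\.\@\-\+_%]+",
    "NGUSER": r"%{NGUSERNAME}",
    "NOTSPACE": r"\S+",
}

# Matches %{NAME} and %{NAME:field}.
TOKEN = re.compile(r"%\{(\w+)(?::(\w+))?\}")


def expand(pattern):
    """Recursively replace %{NAME[:field]} references with plain regex."""
    def repl(match):
        name, field = match.group(1), match.group(2)
        body = expand(PATTERNS[name])
        # A field name becomes a named group; otherwise group without capture.
        return f"(?P<{field}>{body})" if field else f"(?:{body})"
    return TOKEN.sub(repl, pattern)


def grok_match(pattern, line):
    """Return the captured fields as a dict, or None when nothing matches."""
    m = re.search(expand(pattern), line)
    return m.groupdict() if m else None
```

For example, `grok_match("%{NOTSPACE:clientip} - %{NGUSER:remote_user} ", "10.0.0.1 - alice ")` captures `clientip` and `remote_user`, the same way the named fields of `NGINXACCESS` end up on the Logstash event.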

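9. Then reference the pattern directly:

### At this point the work is essentially done; what remains is collecting data and building aggregated charts in Kibana.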

[root@controller etc]# cat nginx.conf

input {

redis {

host => "192.168.10.100"

port => 6379

data_type => "list"

key => "nginx"

}

}

filter {

grok {

match => { "message" => "%{NGINXACCESS}" }

}

date {

match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ]

}

geoip {

source => "clientip"

target => "geoip"

database => "/test/logstash-5.0.0/GeoLite2-City.mmdb"

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

}

mutate {

convert => [ "[geoip][coordinates]", "float"]

}

}

output {

stdout{codec=>rubydebug}

elasticsearch {

action => "index"

hosts => "192.168.63.235:9200"

index => "logstash-nginx-%{+yyyy.MM.dd}"

}

}
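The `index` setting creates one index per day from the event's `@timestamp`. As far as I know Logstash formats that date in UTC, so events from late evening or early morning local time can land in the neighbouring day's index. A small Python sketch of the naming rule, with `daily_index` as a hypothetical helper:

```python
from datetime import datetime, timedelta, timezone


def daily_index(timestamp, prefix="logstash-nginx-"):
    """Build the index name the way %{+yyyy.MM.dd} would, using UTC."""
    utc = timestamp.astimezone(timezone.utc)
    return prefix + utc.strftime("%Y.%m.%d")


# 07:30 on 10 October in UTC+8 is still 9 October in UTC.
beijing = timezone(timedelta(hours=8))
print(daily_index(datetime(2016, 10, 10, 7, 30, tzinfo=beijing)))
```

Keeping this in mind helps when an index pattern in Kibana seems to be missing the first hours of a day.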

10. One possible improvement: have nginx write its log in JSON format in the first place, so that CPU-heavy grok parsing is no longer needed:

log_format json '{"@timestamp":"$time_iso8601",'

'"host":"$server_addr",'

'"clientip":"$remote_addr",'

'"size":$body_bytes_sent,'

'"responsetime":$request_time,'

'"upstreamtime":"$upstream_response_time",'

'"upstreamhost":"$upstream_addr",'

'"http_host":"$host",'

'"url":"$uri",'

'"xff":"$http_x_forwarded_for",'

'"referer":"$http_referer",'

'"agent":"$http_user_agent",'

'"status":"$status"}';

access_log /etc/nginx/logs/access_nginx.json json;
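To see what a line written in this format decodes to, here is a short Python sketch. The sample values in the usage note are invented and `decode_line` is a hypothetical helper. It also illustrates two details of the format: `size` and `responsetime` are unquoted and therefore decode as numbers, while `upstreamtime` is quoted, which keeps the line valid JSON when nginx writes `-` for a request that had no upstream.

```python
import json


def decode_line(line):
    """Decode one JSON access-log line; return None if it is not valid JSON."""
    try:
        return json.loads(line)
    except json.JSONDecodeError:
        # For example, an unescaped double quote inside the user agent.
        return None
```

With an invented line such as `{"clientip":"203.0.113.9","size":612,"responsetime":0.005,"upstreamtime":"-","status":"200"}`, `size` comes back as the integer 612 and `responsetime` as the float 0.005, while `status` stays a string because the format quotes it.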

11. This removes most of the parsing work; the events are simply decoded as JSON.

[root@controller logstash-5.0.0]# cat etc/nginx_json.conf

input {

file { # read from the nginx log

type => "nginx-access"

path => "/etc/nginx/logs/access_nginx.json"

start_position => "beginning"

codec => "json" # decode each line with the json codec

}

}

filter {

if [type] == "nginx-access"{

geoip {

source => "clientip"

target => "geoip"

database => "/test/logstash-5.0.0/GeoLite2-City.mmdb"

add_field => [ "[geoip][coordinates]", "%{[geoip][longitude]}" ]

add_field => [ "[geoip][coordinates]", "%{[geoip][latitude]}" ]

}

}

}

output {

if [type] == "nginx-access" {

stdout{codec=>rubydebug}

elasticsearch {

action => "index"

hosts => "192.168.63.235:9200"

index => "logstash-nginx-%{+yyyy.MM.dd}"

}

}

}

Much cleaner, and that wraps it up. Note: use the GeoLite2-City.mmdb database. I previously used the .dat one, and the map would not render.
