kafka的分区消费模型

分区消费模型是kafka的消费者编程模型。其模型如下所示:

主要是一个consumer对应一个分区。而分区消费的伪代码如下所示:

kafka的组消费模型

kafka按照组进行消费的时候一个kafka组中的消费者可以获取到kafka集群中的所有数据以供消费。

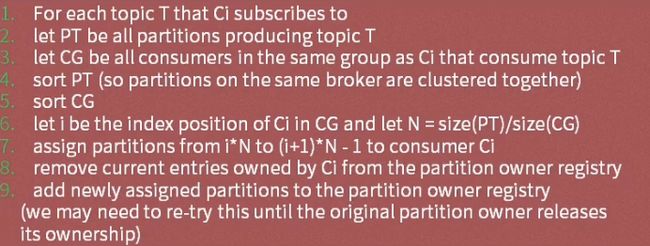

组消费模型的伪代码描述如下:

- 上面的流数代表每个consumer组里面包含的consumer实例个数。

kafka Topic的分配算法如下所示:

消费模型的对比:

- 分区消费模型:较为灵活,但需要自己处理各种异常情况;且需要自己管理offset以实现消息传递的其他语义。

- 组消费模型:更加简单,但不灵活,不需要自己处理异常,不需要自己管理offset,其只能实现kafka默认的最少一次消息传递语义(可能会发生重复)。

- 消息传递语义有三种:最少一次(消费者收到的消息可能会重复),最多一个(消费者可能收不到这条消息),有且仅有一次(不会发生重复也不会丢失)

分区消费模型的python实现

eversilver@debian:~/silverTest/kafka/kafka/projects/consumer/partition$ cat partition_consumer.py

#!/usr/bin/env python

# coding=utf-8

import threading

from kafka.client import KafkaClient

from kafka.consumer import SimpleConsumer

class Consumer(threading.Thread):

daemon=True

def __init__(self, partition_index):

threading.Thread.__init__(self)

self.part = [partition_index]

self.__offset = 0

def run(self):

client = KafkaClient("192.168.128.128:19092,192.168.128.129:19092")

consumer=SimpleConsumer(client,"test-group","myTest",auto_commit=False,partitions=self.part)

consumer.seek(0,0)

while True:

message = consumer.get_message(True, 60)

self.__offset = message.offset

print (message.message.value)

eversilver@debian:~/silverTest/kafka/kafka/projects/consumer/partition$ cat main.py

#!/usr/bin/env python

# coding=utf-8

import logging, time

import partition_consumer

def main():

threads = []

partition = 3

for index in range(partition):

threads.append(partition_consumer.Consumer(index))

for t in threads:

t.start()

time.sleep(50000)

if __name__ == '__main__':

main()

组消费模型的python实现

eversilver@debian:~/silverTest/kafka/kafka/projects/consumer/group$ cat group_consumer.py

#!/usr/bin/env python

# coding=utf-8

import threading

from kafka.client import KafkaClient

from kafka.consumer import SimpleConsumer

class Consumer(threading.Thread):

daemon = True

def run(self):

client = KafkaClient("192.168.128.128:19092,192.168.128.129:19092,192.168.128.130:19092")

consumer = SimpleConsumer(client, "test-group", "mytest")

for message in consumer:

print(message.message.value)

eversilver@debian:~/silverTest/kafka/kafka/projects/consumer/group$ cat main.py

#!/usr/bin/env python

# coding=utf-8

import group_consumer

import time

def main():

consumer_thread = group_consumer.Consumer()

consumer_thread.start()

time.sleep(500000)

if __name__ == '__main__':

main()

python客户端参数调优

- fetch_size_bytes:从服务器获取得到的单个包的大小

- buffer_size:kafka客户端缓冲区大小(一次最多可以从服务器获取的数据大小)

- Group:分组消费的分组名

- auto_commit:offset是否自动进行提交(一般用于分区消费模型)

分组消费模式java实现

package kafka.consumer.group;

import kafka.consumer.ConsumerIterator;

import kafka.consumer.KafkaStream;

public class ConsumerTest implements Runnable {

private KafkaStream m_stream;

private int m_threadNumber;

public ConsumerTest(KafkaStream a_stream, int a_threadNumber) {

m_threadNumber = a_threadNumber;

m_stream = a_stream;

}

public void run() {

ConsumerIterator it = m_stream.iterator();

while (it.hasNext()){

System.out.println("Thread " + m_threadNumber + ": " + new String(it.next().message()));

}

System.out.println("Shutting down Thread: " + m_threadNumber);

}

}

package kafka.consumer.group;

import kafka.consumer.ConsumerConfig;

import kafka.consumer.KafkaStream;

import kafka.javaapi.consumer.ConsumerConnector;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Properties;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

import java.util.concurrent.TimeUnit;

public class GroupConsumerTest extends Thread {

private final ConsumerConnector consumer;

private final String topic;

private ExecutorService executor;

public GroupConsumerTest(String a_zookeeper, String a_groupId, String a_topic){

consumer = kafka.consumer.Consumer.createJavaConsumerConnector(

createConsumerConfig(a_zookeeper, a_groupId));

this.topic = a_topic;

}

public void shutdown() {

if (consumer != null) consumer.shutdown();

if (executor != null) executor.shutdown();

try {

if (!executor.awaitTermination(Long.MAX_VALUE, TimeUnit.MILLISECONDS)) {

System.out.println("Timed out waiting for consumer threads to shut down, exiting uncleanly");

}

} catch (InterruptedException e) {

System.out.println("Interrupted during shutdown, exiting uncleanly");

}

}

public void run(int a_numThreads) {

Map topicCountMap = new HashMap();

topicCountMap.put(topic, new Integer(a_numThreads));

Map>> consumerMap = consumer.createMessageStreams(topicCountMap);

List> streams = consumerMap.get(topic);

// now launch all the threads

//

executor = Executors.newFixedThreadPool(a_numThreads);

// now create an object to consume the messages

//

int threadNumber = 0;

for (final KafkaStream stream : streams) {

executor.submit(new ConsumerTest(stream, threadNumber));

threadNumber++;

}

}

private static ConsumerConfig createConsumerConfig(String a_zookeeper, String a_groupId) {

Properties props = new Properties();

props.put("zookeeper.connect", a_zookeeper);

props.put("group.id", a_groupId);

props.put("zookeeper.session.timeout.ms", "40000");

props.put("zookeeper.sync.time.ms", "2000");

props.put("auto.commit.interval.ms", "1000");

return new ConsumerConfig(props);

}

public static void main(String[] args) {

if(args.length < 1){

System.out.println("Please assign partition number.");

}

String zooKeeper = "10.206.216.13:12181,10.206.212.14:12181,10.206.209.25:12181";

String groupId = "jikegrouptest";

String topic = "jiketest";

int threads = Integer.parseInt(args[0]);

GroupConsumerTest example = new GroupConsumerTest(zooKeeper, groupId, topic);

example.run(threads);

try {

Thread.sleep(Long.MAX_VALUE);

} catch (InterruptedException ie) {

}

example.shutdown();

}

}

分区消费模式的java实现

package kafka.consumer.partition;

import kafka.api.FetchRequest;

import kafka.api.FetchRequestBuilder;

import kafka.api.PartitionOffsetRequestInfo;

import kafka.common.ErrorMapping;

import kafka.common.TopicAndPartition;

import kafka.javaapi.*;

import kafka.javaapi.consumer.SimpleConsumer;

import kafka.message.MessageAndOffset;

import java.nio.ByteBuffer;

import java.util.ArrayList;

import java.util.Collections;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

public class PartitionConsumerTest {

public static void main(String args[]) {

PartitionConsumerTest example = new PartitionConsumerTest();

long maxReads = Long.MAX_VALUE;

String topic = "jiketest";

if(args.length < 1){

System.out.println("Please assign partition number.");

}

List seeds = new ArrayList();

String hosts="10.206.216.13,10.206.212.14,10.206.209.25";

String[] hostArr = hosts.split(",");

for(int index = 0;index < hostArr.length;index++){

seeds.add(hostArr[index].trim());

}

int port = 19092;

int partLen = Integer.parseInt(args[0]);

for(int index=0;index < partLen;index++){

try {

example.run(maxReads, topic, index/*partition*/, seeds, port);

} catch (Exception e) {

System.out.println("Oops:" + e);

e.printStackTrace();

}

}

}

private List m_replicaBrokers = new ArrayList();

public PartitionConsumerTest() {

m_replicaBrokers = new ArrayList();

}

public void run(long a_maxReads, String a_topic, int a_partition, List a_seedBrokers, int a_port) throws Exception {

// find the meta data about the topic and partition we are interested in

//

PartitionMetadata metadata = findLeader(a_seedBrokers, a_port, a_topic, a_partition);

if (metadata == null) {

System.out.println("Can't find metadata for Topic and Partition. Exiting");

return;

}

if (metadata.leader() == null) {

System.out.println("Can't find Leader for Topic and Partition. Exiting");

return;

}

String leadBroker = metadata.leader().host();

String clientName = "Client_" + a_topic + "_" + a_partition;

SimpleConsumer consumer = new SimpleConsumer(leadBroker, a_port, 100000, 64 * 1024, clientName);

long readOffset = getLastOffset(consumer,a_topic, a_partition, kafka.api.OffsetRequest.EarliestTime(), clientName);

int numErrors = 0;

while (a_maxReads > 0) {

if (consumer == null) {

consumer = new SimpleConsumer(leadBroker, a_port, 100000, 64 * 1024, clientName);

}

FetchRequest req = new FetchRequestBuilder()

.clientId(clientName)

.addFetch(a_topic, a_partition, readOffset, 100000) // Note: this fetchSize of 100000 might need to be increased if large batches are written to Kafka

.build();

FetchResponse fetchResponse = consumer.fetch(req);

if (fetchResponse.hasError()) {

numErrors++;

// Something went wrong!

short code = fetchResponse.errorCode(a_topic, a_partition);

System.out.println("Error fetching data from the Broker:" + leadBroker + " Reason: " + code);

if (numErrors > 5) break;

if (code == ErrorMapping.OffsetOutOfRangeCode()) {

// We asked for an invalid offset. For simple case ask for the last element to reset

readOffset = getLastOffset(consumer,a_topic, a_partition, kafka.api.OffsetRequest.LatestTime(), clientName);

continue;

}

consumer.close();

consumer = null;

leadBroker = findNewLeader(leadBroker, a_topic, a_partition, a_port);

continue;

}

numErrors = 0;

long numRead = 0;

for (MessageAndOffset messageAndOffset : fetchResponse.messageSet(a_topic, a_partition)) {

long currentOffset = messageAndOffset.offset();

if (currentOffset < readOffset) {

System.out.println("Found an old offset: " + currentOffset + " Expecting: " + readOffset);

continue;

}

readOffset = messageAndOffset.nextOffset();

ByteBuffer payload = messageAndOffset.message().payload();

byte[] bytes = new byte[payload.limit()];

payload.get(bytes);

System.out.println(String.valueOf(messageAndOffset.offset()) + ": " + new String(bytes, "UTF-8"));

numRead++;

a_maxReads--;

}

if (numRead == 0) {

try {

Thread.sleep(1000);

} catch (InterruptedException ie) {

}

}

}

if (consumer != null) consumer.close();

}

public static long getLastOffset(SimpleConsumer consumer, String topic, int partition,

long whichTime, String clientName) {

TopicAndPartition topicAndPartition = new TopicAndPartition(topic, partition);

Map requestInfo = new HashMap();

requestInfo.put(topicAndPartition, new PartitionOffsetRequestInfo(whichTime, 1));

kafka.javaapi.OffsetRequest request = new kafka.javaapi.OffsetRequest(

requestInfo, kafka.api.OffsetRequest.CurrentVersion(), clientName);

OffsetResponse response = consumer.getOffsetsBefore(request);

if (response.hasError()) {

System.out.println("Error fetching data Offset Data the Broker. Reason: " + response.errorCode(topic, partition) );

return 0;

}

long[] offsets = response.offsets(topic, partition);

return offsets[0];

}

private String findNewLeader(String a_oldLeader, String a_topic, int a_partition, int a_port) throws Exception {

for (int i = 0; i < 3; i++) {

boolean goToSleep = false;

PartitionMetadata metadata = findLeader(m_replicaBrokers, a_port, a_topic, a_partition);

if (metadata == null) {

goToSleep = true;

} else if (metadata.leader() == null) {

goToSleep = true;

} else if (a_oldLeader.equalsIgnoreCase(metadata.leader().host()) && i == 0) {

// first time through if the leader hasn't changed give ZooKeeper a second to recover

// second time, assume the broker did recover before failover, or it was a non-Broker issue

//

goToSleep = true;

} else {

return metadata.leader().host();

}

if (goToSleep) {

try {

Thread.sleep(1000);

} catch (InterruptedException ie) {

}

}

}

System.out.println("Unable to find new leader after Broker failure. Exiting");

throw new Exception("Unable to find new leader after Broker failure. Exiting");

}

private PartitionMetadata findLeader(List a_seedBrokers, int a_port, String a_topic, int a_partition) {

PartitionMetadata returnMetaData = null;

loop:

for (String seed : a_seedBrokers) {

SimpleConsumer consumer = null;

try {

consumer = new SimpleConsumer(seed, a_port, 100000, 64 * 1024, "leaderLookup");

List topics = Collections.singletonList(a_topic);

TopicMetadataRequest req = new TopicMetadataRequest(topics);

kafka.javaapi.TopicMetadataResponse resp = consumer.send(req);

List metaData = resp.topicsMetadata();

for (TopicMetadata item : metaData) {

for (PartitionMetadata part : item.partitionsMetadata()) {

if (part.partitionId() == a_partition) {

returnMetaData = part;

break loop;

}

}

}

} catch (Exception e) {

System.out.println("Error communicating with Broker [" + seed + "] to find Leader for [" + a_topic

+ ", " + a_partition + "] Reason: " + e);

} finally {

if (consumer != null) consumer.close();

}

}

if (returnMetaData != null) {

m_replicaBrokers.clear();

for (kafka.cluster.Broker replica : returnMetaData.replicas()) {

m_replicaBrokers.add(replica.host());

}

}

return returnMetaData;

}

}

java客户端的参数调优

- fetchSize:从服务器获取的单包大小

- bufferSize:kafka客户端缓冲区大小

- group.id:分组消费分组名(用于实现复制消费,每个分组都能取得全量的数)