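- Integrating Quartz with Spring Boot

m0_67402564

interview, learning roadmap, Alibaba, android, frontend, backend

Contents: `Quartz`; Introduction to `Quartz`; Advantages of `Quartz`; Core concepts; `Quartz` job store types; `Cron` expressions; `Cron` syntax; Explanation of each time field in `Cron` syntax; Special characters in `Cron` syntax; Online `Cron` expression generator; Integrating `Quartz` with `Springboot`; Preparing the database tables; Main `Maven` dependencies; Configuration files; `quartz.properties`; `application.properties`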

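- A Guide to Spark Livy, with Livy Deployment and Access in Practice

house.zhang

Big Data - Spark, Big Data

Background: Apache Spark is a popular big data framework widely used in data processing, data analysis, and machine learning. It offers two ways to process data. The first is interactive processing: for example, a user writes interactive code in spark-shell, which is compiled into Spark jobs and submitted to the cluster for execution. The second is batch processing: a packaged Spark application jar is submitted to the cluster with spark-submit. Both modes require installing a Spark client, configuring the YARN cluster information, and opening up the cluster net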

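- Learning Big Data (4): Installing and Configuring Livy and Running pyspark Sessions

猪笨是念来过倒

Big Data, pyspark

Livy is an open-source REST service built on Spark that can submit code snippets or serialized binary code to a Spark cluster for execution over REST. It provides the following basic features: submitting Scala, Python, or R code snippets to a remote Spark cluster for execution; submitting Spark jobs written in Java, Scala, or Python to a remote Spark cluster for execution; and submitting batch applications to run in the cluster. From these basic features you can see that Livy covers the native Spar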

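- RHCE Assignment 1

岩魈云散

servers, linux, networking

Experiment 4: NFS automounting. Principle: the system mounts the NFS file system automatically only when the client needs it, and unmounts it automatically once the client has finished using it. Services used: nfs, autofs. Demonstration: automounting requires two virtual machines, one acting as the client and one as the server. A new network interface was created for this experiment instead of using the default Linux interface. 1. Configure the IP address of the new interface. Check the IP: the new interface ens224 has no IP initially, so one must be configured first [ro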

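- [2024 Huawei OD Coding Test] (Paper B, 100 points) - Pipelines (Java & JS & Python & C/C++)

妄北y

algorithm collection and summary, Huawei OD, java, javascript, games, C++, C, python

I. Problem. Problem description: a factory has m pipelines that work in parallel to complete n independent jobs. The factory's scheduling system always runs the job with the shortest processing time first. Given the number of pipelines m, the number of jobs n, and the processing times t1, t2, ..., tn of the jobs, write a program that computes how long it takes to finish all the jobs. When n > m, the m shortest jobs enter the pipelines first and the rest wait; whenever a job finishes, the shortest remaining job takes its place. Input description: the first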
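The scheduling rule described above can be sketched with a min-heap of pipeline finish times. This is only an illustrative sketch, not the original article's solution: the function name `total_time` and its argument layout are assumptions, and the input parsing from the truncated problem statement is left out.

```python
import heapq


def total_time(m, times):
    """Return when the last job finishes under shortest-job-first scheduling.

    m     -- number of pipelines (hypothetical parameter name)
    times -- processing time of each job
    """
    if m <= 0:
        raise ValueError("m must be positive")
    # One heap entry per pipeline: the time at which that pipeline becomes free.
    free_at = [0] * m
    heapq.heapify(free_at)
    # Jobs are taken in ascending order of processing time.
    for t in sorted(times):
        earliest = heapq.heappop(free_at)      # pipeline that frees up first
        heapq.heappush(free_at, earliest + t)  # it now runs this job
    return max(free_at)


print(total_time(3, [8, 4, 3, 2, 10]))  # 13
```

With 3 pipelines and jobs of length 8, 4, 3, 2, 10, the jobs of length 2, 3, 4 start at time 0, the job of length 8 starts at time 2, and the job of length 10 starts at time 3, so everything finishes at time 13.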

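- Algorithm Design and Analysis: Chapter 1 Homework

小毛头~

algorithms

Chapter 1. I. Single-choice questions. 1 [Single choice] Subroutines (including functions and methods) exist to be called. Recursion refers to: A. a programming technique in which different subroutines call each other directly or indirectly; B. a programming technique in which a subroutine calls itself directly or indirectly; C. a programming technique in which a subroutine returns a result to the code that called it; D. a programming technique in which a subroutine returns no result to the code that called it. Correct answer: B. My answer: B. Score: 4.0. 2 [Single choice] The knapsack problem: n items and 1 knapsack. Item i has value vi and weight wi, and the knapsack has capacity W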

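- Learning Python, Day 14: BBS Features and a Chat Room

weixin_30725467

json, database, frontend, View, UI

Created on May 15, 2017 @author: louts. Lesson 1: homework review and using decorators, 28 minutes. def check(func): def rec(request, *args, **kargs): return func(request, *args, **kargs) return rec @check def index(request,): print request. Lesson 2: extending custom decorators, 18 minutes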
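The flattened decorator in the excerpt above is Python 2 code. A minimal Python 3 sketch of the same pass-through pattern might look as follows; the use of `functools.wraps` and the string returned by `index` are additions for illustration and are not in the original.

```python
import functools


def check(func):
    """Wrap a view function; a real check (login, logging, ...) would go in rec."""
    @functools.wraps(func)  # keep the wrapped function's name and docstring
    def rec(request, *args, **kwargs):
        # Placeholder: the original simply forwards the call unchanged.
        return func(request, *args, **kwargs)
    return rec


@check
def index(request):
    # Hypothetical body; the original just printed the request.
    return f"index called with {request!r}"


print(index("req"))
```

Because of `functools.wraps`, `index.__name__` is still `"index"` after decoration, which helps when frameworks look up views by name.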

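- [Chapter 10: Data Visualization, Building Maps] [Latest! Notes for the Heima Programmer Self-Study Python Course] Lecture Notes + Example Source + Homework Source

嗯哈!

information visualization, python, notes, pycharm

Chapter 10: Data Visualization, Building Maps. 10.1 Data visualization: maps: basic map usage. Note!!! Current versions require the suffixes 省 (province) and 市 (city). """Demonstrates basic map visualization""" from pyecharts.charts import Map from pyecharts.options import VisualMapOpts # Prepare the map object map = Map() # Prepare the data data = [("北京市", 9), ("上海市", 8), ("湖南省", 5), ("台湾省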

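- Competition in the Low-Altitude Economy Is Fierce: How Can Drone R&D Companies Break Through?

无人机技术圈

drone technology, drones

The low-altitude economy is a comprehensive economic form centered on civil manned and unmanned aircraft, driven by low-altitude flight activities across many scenarios such as passenger transport, cargo transport, and other operations, and spurring the integrated development of related fields. In terms of application scenarios, it spans military, government, commercial, and civilian use. In terms of products, it mainly comprises aircraft that fly at low altitude, such as drones, private planes, and eVTOLs. In terms of industry composition, it mainly includes low-altitude manufacturing, low-altitude flight, low-altitude support, low-altitude infrastructure, and integrated services. Against this backdrop of fierce competition in the low-altitude economy, drone R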

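- A LabVIEW Monitoring System for Precision Vegetable Seeding

LabVIEW开发

LabVIEW development case studies

Current vegetable seeding faces a number of problems. On one hand, seeding precision falls short of the high standards of modern agriculture, so seeds are distributed unevenly, which affects crop growth and final yield. On the other hand, traditional monitoring methods cannot effectively monitor small-diameter seeds, which makes quality control during seeding difficult. To overcome these problems, a single-seed precision seeding monitoring system based on fiber-optic sensors and LabVIEW was designed. The system makes full use of the sensing capability of high-precision sensors and the data processing and control capabilities of advanced software, significantly improving the seeding operation's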

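- An In-Depth Look at the JIT Model: Predicting Precisely and Executing Efficiently

team collaboration

JIT (Just-In-Time), or just-in-time production, is a production management method that aims to reduce waste and improve efficiency. A detailed breakdown follows. I. Definition of JIT. JIT is a management model that, based on precise measurement of operating efficiency at each step of the production process, plans accurately according to orders, with the goal of eliminating all ineffective work and waste. It emphasizes delivering the right raw materials and parts, in the right quantity, to the right place, at the right time, to meet the needs of production or customers. This production method is also called zero inventory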

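- Winter Break 4 (Jan 15)

2401_88126894

algorithms, data structures

Today I did two of the homework problems, watched the online lecture on pointers and now understand pointers better, and memorized CET-4 vocabulary. 1. Analysis: first, the arrays that will be needed are defined, along with the variables used later. A loop reads in the n numbers, and then another for loop is used. while(a[i]>=a[q[r]]&&r>0): here r is probably an index into the array q; its initial value is not given explicitly, and the code may assume it is 0. While a[i] is greater than or equal to a[q[r]] and r is greater than 0, r is decremented by 1. The purpose of this while loop is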

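- How Do Flink Batch Jobs Recover Their Progress After the Master Node Fails and Restarts?

flink, big data

Abstract: this article was written by 李俊睿 (昕程), an R&D engineer at Alibaba Cloud, and introduces the batch job progress recovery after JM failover that was added in Flink 1.20. It has four parts: background, approach, results, and how to enable it. I. Background. Before Flink 1.20, if Flink's JobMaster (JM) failed and was terminated, one of two things happened: if high availability (HA) was not enabled for the job, the job failed; if HA was enabled, the JM was automatically restarted (JM fai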

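- The Most Complete Collection of Java Project Source Code + LW on the Web

程序猿麦小七

graduation project, Java backend, JavaWeb, java, springboot, programming languages, choosing a graduation project topic

Description: the semester is ending, or your graduation project is due. Are you still working on Java network programming or a final assignment, and do the instructor's requirements feel like too much? Not sure what to do for your graduation project? Too many web features to build? No suitable type or system? If you have a topic, I have a project, with installation and a successful run guaranteed. The document is large, so please be patient; open it on a computer and use CTRL+F to search for your topic keywords. Part 1: SpringBoot project source code (800+). SpringBoot 1-300. springboot001 Based on Sp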

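- Unity Study Notes: UI Design

XiaoChen04_3

unity, learning, ui

Unity Study Notes: UI Design. Preface: this article is Assignment 8 for the 2020 class of 3D Game Programming and Design at the School of Software Engineering, Sun Yat-sen University. Programming task: build a health bar. 1. Resources. The character model in this project comes from Starter Assets - Third Person Character Controller | Essentials | Unity Asset Store. The PlayerArmature prefab at the following path is used here; this prefab character can walk, run, jump, and so on, which makes it well suited to demonstrating a health bar. Because this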

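- Learn C and Fly | Arrays | Variable-Length Arrays | String Handling Functions | Two-Dimensional Arrays

Drill_

Learn C and Fly, C

Contents: I. Arrays: 1. Arrays; 2. Array homework. II. Variable-length arrays: 1. Variable-length arrays. III. String handling functions: 1. String handling functions; 2. String handling function homework. IV. Two-dimensional arrays: 1. Two-dimensional arrays; 2. Two-dimensional array homework. I. Arrays. 1. Arrays. Sometimes we need to store a large amount of data of the same type, and that is when arrays come in. Definition of an array: a place that stores a batch of data of the same type. Array syntax: type name[element count]. The element count must be a constant or a constant expression. int a[6]; cha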

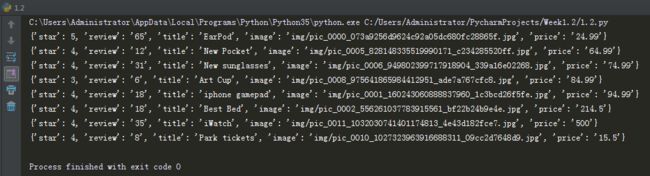

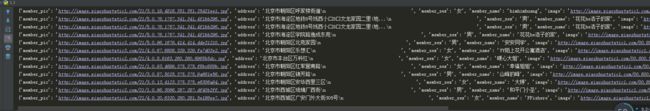

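- A Very Detailed Python Scraper for Taobao Product Information (Title, Sales, Region, Shop, and More)

芝士胡椒粉

python, web scraping, database, data visualization

Introduction: the final project for my data visualization course required scraping our own data, and I decided to scrape Taobao. Most of the articles I found either did not work or were out of date, so I put this code together while learning and reading as I went. It is partly a record for myself and partly for anyone who needs it. First, here is what the scraped data looks like: Code. Importing third-party libraries: import pymysql from selenium import webdriver from selenium.common.exceptions import Ti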

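- 11 Beautiful Web Pages: Final Project for a Web Frontend Development Course, Final Exam, Simple Web Pages With Dreamweaver

web学生网页设计

web frontend, web design, web design and production, web page homework

Example HTML page code, suitable for students who are new to HTML. The example includes CSS styling and a div-based layout, and is fairly comprehensive, which helps with learning. This article shows how to practice design by designing a personal website from scratch and turning it into code. Recommended columns ❤ [Author home page: get more quality source code] ❤ [Web frontend final projects: 1000 selected hands-on graduation project examples] Contents: I. About the page. I. Page preview. II. Code: 1. HTML code 2. CSS co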

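- Web Assignment 1

feng68_

frontend

Contents: task; html code (index, login, register); css code (base, index, login, register); results (index, login, register). Task: implement a login page, a registration page, and a home page. - Login page: `login.html` - Registration page: `register.html` - Home page: `index.html`. Requirements: - On the home page, clicking **Register** or **Log in** opens the corresponding pa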

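- Shell Assignment 2

捣蛋大队

operations, servers

1. Write a shell script that determines which IP addresses in the 192.168.242.0/24 network are currently online and prints them. Script: #!/bin/bash # Network prefix NETWORK="192.168.242" echo "Scanning network $NETWORK.0/24 for active hosts..." # Iterate over host addresses for i in {1..254} do # IP address IP="${NETWORK}.${i}" # Use ping to check whether the host is online, sending only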
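For comparison, the same scan can be sketched in Python with the standard `ipaddress` and `subprocess` modules. This is a sketch under assumptions, not the assignment's script: the helper names are made up, and the `ping -c 1 -W 1` flags assume the Linux iputils `ping`, so they may need adjusting on other systems.

```python
import ipaddress
import subprocess


def host_addresses(cidr):
    """List the usable host addresses of a network, e.g. .1 to .254 for a /24."""
    return [str(ip) for ip in ipaddress.ip_network(cidr).hosts()]


def is_online(ip, timeout_s=1):
    """Send one ping and report success (assumes Linux iputils flags -c and -W)."""
    result = subprocess.run(
        ["ping", "-c", "1", "-W", str(timeout_s), ip],
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return result.returncode == 0


def scan(cidr):
    """Return the addresses in the network that answered a ping."""
    return [ip for ip in host_addresses(cidr) if is_online(ip)]
```

Calling `scan("192.168.242.0/24")` probes the hosts one by one, like the `for i in {1..254}` loop in the shell version; a full sweep can take several minutes when most hosts are down.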

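- Designing a Cache Strategy That Dynamically Caches Hot Data

[Account closed]

brain teasers & scenario questions, caching, database, java, leaderboard

A note up front: our recent course project needs a hot-item ranking feature. Since we use Elasticsearch to store the data, our first idea was to implement the ranking in ES. After thinking it through, though, using ES for this in our project would be a poor choice, because it is only a very small ranking, so in the end we used Redis to implement it. Use LRU? LRU is a common algorithm. Suppose we want the TOP 10 hot items; then we could set the LRU capa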

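- GitLab Pipeline Configuration

Because of formatting and image-parsing issues, the original article offers a better reading experience. The pipeline works as follows: after code is pushed, GitLab looks for the .gitlab-ci.yml file in the project root and builds automatically according to the pipeline defined there. The configuration file's format can be validated in GitLab. The file uses YAML; one YAML file is one pipeline and defines multiple jobs. Example: stages: - install - build - deploy install_job: stage: i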

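- Python Programming Final Project: Python Project Code in 100 Lines

chatgpt001

artificial intelligence

Hello everyone. Today I will answer the following questions: a 200-line annotated Python final project, and a Python programming final project. Let's take a look! # Task: implement a calculator in Python that can handle decimals, complex numbers, and so on import re def calculator(string): # Function that strips parentheses def get_grouping(string): flag = False ret = re.findall('\(([^()]+)\)', stri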

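- [Ops Automation: Job Platform] How to Use Namespace-Type Global Variables

Namespace-type global variables are mainly for cases where the same batch of hosts needs to pass independent variable values between steps, such as the internal IP or hostname, where each host has a different value. A string variable, by contrast, has the same value globally for all hosts and all steps. Hands-on example: define a namespace variable local_ip, target two machines, add two script-execution steps, and see how the variable is rendered. 1. Add the namespace variable local_ip. 2. Add two script-execution steps (step 1) (step 2). 3. Debug run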

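- ArgoWorkflow Tutorial (1): Another Option for DevOps? A First Look at Cloud-Native CI/CD

This article records how to quickly deploy the cloud-native workflow engine ArgoWorkflow on k8s. What is ArgoWorkflow? Argo Workflows is an open-source, cloud-native workflow engine for orchestrating parallel jobs on Kubernetes. Argo Workflows is implemented as a Kubernetes CRD. Define workflows in which each step is a container. Model multi-step workflows as a sequence of tasks, or use a DAG to capture the dependencies between tasks. With Argo you can, in a short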

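- Python EduCoder Lab 5 Homework_3.1 (hbut)

树先生.

python, programming languages

Level 1: Checking a train ticket seat. ## Level 1: Checking a train ticket seat seat = input() try: letter = seat[-1] line = int(seat[:len(seat)-1]) if line < 1 or line > 17 or (letter not in ['A','a','B','b','C','c','D','d','F','f']): print("输入错误") elif letter in ['A','a','F','f']: print("窗口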

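- 2018-07-23 - Hypnosis Day Homework - #A Different 31 Days# - 66小鹿

小鹿_33

Prophecy day: people always meet their fate unexpectedly on the very road they take to escape it. Psychology has a well-known term, the self-fulfilling prophecy; economics has a well-known law, Murphy's law; and spiritual practice has a well-known principle, the law of attraction. These terms from three fields look different, but they all describe the same phenomenon: the more you worry about something, the more likely it is to happen. By the same logic, the more you want something, the more actively you should work to create it. Whether it is the self-fulfilling prophecy, Murphy's law, or the law of attraction, each affects people in both a positive and a negative dimension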

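- My Worries

余建梅

My worries. My daughter asked me, "What essay topic did you give your students?" "'My Worries.'" "They're so grown up. Do you think they still have worries?" "Of course! Everyone has worries of their own." "I don't believe it. Grown-ups don't have worries. If you must have one, yours is about me doing homework, and it's a small one. Not like me: I get nagged by you every day, and with a mom like this the worries never end," my daughter said indignantly. Everyone has their own worries. At an age with elders above and children below to care for, the worries are too many to count. I want to do my job well and raise my child well, to be a good child to my parents and also to manage my own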

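- Gratitude Journal 133

马姐读书

I appreciate myself for buying a robot vacuum today; it frees me up to read more while this smart little robot does the work for me. I appreciate my child's big progress lately: going to school on time every day, paying attention in class, studying recitations carefully, and completing the teacher's homework on his own initiative. I appreciate myself for recognizing that a certain person easily affects my mood; whenever that happens I ease off, practice appreciation, detach as quickly as I can, and think of the good side. I appreciate my son: when I reminded him of something today, he said, "Thank you for the reminder, Mom, I understand," instead of calling me a nag who meddles. He is more sensible and more grateful. Projecting onto parents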

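- Building a Web Page With DIV+CSS+JavaScript (Travel-Themed Web Design and Production): Dali, Yunnan

STU学生网页设计

web design, final web page assignment, html static page, html5 final project, web design, web final project

Recommended columns. Author home page: [Go to the home page: get more source code]. Web frontend final projects: [HTML5 final web page assignments (1000 sets)]. Fun ways for programmers to confess their love: [HTML Qixi Valentine's Day confession pages (110 sets)]. Contents: II. About the site. III. Site preview: ▶️1. Video demo 2. Screenshot demo. IV. Site code: HTML structure, CSS styles. V. More source code. II. About the site. Layout: the plan is to use a float-based page layout, which is mainstream, compatible with all major browsers, and renders consistently. Site pro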

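- Java Basics

灵静志远

bitwise operations, loading, Date, string pool, overriding

I. Class initialization order

1 (static variables, static initializer blocks) --> (instance variables, instance initializer blocks) --> constructor

Items inside the same parentheses run in the order they appear in the program. The above applies within a single class. With inheritance, initialization alternates between parent and child: parent static members, then child static members, then the parent's instance members and constructor, then the child's instance members and constructor.

II. String

1 String a = "abc";

The JVM first looks in the string pool for an existing object with the value "abc", and then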

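- Redis Master-Slave High Availability With keepalived

bylijinnan

redis

Overview of the approach

Two machines (call them A and B) serve clients through a single shared VIP

1. Normally both A and B are running, and B replicates A's data (B is slave of A)

2. When A goes down, the VIP floats to B; B's keepalived tells redis to run slaveof no one, and B takes over serving

3. When A comes back up, the VIP does not switch back and stays on B; A's keepalived tells redis to run slaveof B, and it starts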

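- A Complete Guide to Java File Operations

0624chenhong

java

I recently saw a fairly comprehensive article on file operations on cnblogs (博客园) and am reposting it here for reference.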

http://www.cnblogs.com/zhuocheng/archive/2011/12/12/2285290.html

Reposted from http://blog.sina.com.cn/s/blog_4a9f789a0100ik3p.html

I. Reading user input from the console

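- Android Learning Tasks

不懂事的小屁孩

work

Tasks

Status. Understand how to use popupwindows with and without an arrow: done. Become proficient with popupwindows and alertdialog, and understand the difference between them: done. Become proficient with Android's thread handler, and write sample code: in progress. Understand the flow of the game 2048 and finish its code: in progress, a few actionbar items left. Study Android animation effects and write an example: done. Review fragem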

- zoom.js

换个号韩国红果果

oom

It is based on bootstrap

https://raw.github.com/twbs/bootstrap/master/js/transition.js Load order for the transition.js module

<link rel="stylesheet" href="style/zoom.css">

<script src="

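- A Detailed Look at Oracle's Cloud Operating System, Solaris 11.2

蓝儿唯美

Solaris

When Oracle released Solaris 11, it called its operating system the first cloud-oriented operating system. With Solaris 11.2, Oracle continued its cloud-centric message. These claims, however, do not tell us why Solaris is worthy of the cloud. Fortunately, we do not have to wait long. Solaris 11.2 has four important technologies that can play a major role in an effective cloud implementation: OpenStack, Kernel Zones, Unified Archives (UA), and the Elastic Virtual Switch (EVS).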

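- Learning Spring: Spring MVC (1)

a-john

springMVC

Spring MVC is built on the Model-View-Controller (MVC) pattern and helps us build web applications that are as flexible and loosely coupled as the Spring framework itself.

1. Following a request through Spring MVC

The first stop for a request is Spring's DispatcherServlet. Like most Java-based web frameworks, Spring MVC routes every request through a single front controller servlet. The fro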

- hdu4342 History repeat itself ------- Multi-University Joint Training 5

aijuans

number theory

It's an easy problem, so there is not much to say.

#include <iostream>

#include <cstdlib>

#include <stdio.h>

#define ll __int64

using namespace std;

int main(){ int t; ll n; scanf("%d",&t); while(t--)

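- The Difference Between EJB and JavaBean

asia007

bean, ejb

An EJB is not an ordinary JavaBean; it is an Enterprise JavaBean. There are three kinds of EJB: entity beans, message-driven beans, and session beans. Writing an EJB requires following certain specifications; consult the relevant documentation for details. In addition, running EJBs requires an EJB container such as Weblogic or Jboss, whereas a JavaBean does not need one; installing Tomcat is enough.

1. EJB is used for server-side application development, while JavaBeans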

- A Summary of Struts Action and Result

百合不是茶

struts, Action configuration, Result configuration

I. Action configuration in detail:

Below is an empty Struts.xml configuration file in Struts

<?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE struts PUBLIC

"

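- How to Lead Your Own Team Well

bijian1013

project management, team management, team

I came across a comment online on the blog post "How do you get team members to work well?" and thought it was well written. The original is as follows: I have been managing teams for a few years now, and here are the points I consider key to leading a team well:

1. Integrity

With team members, whether in technical research, communication, or discussing problems, keep an attitude of good faith as far as possible and put your heart into it; your team will feel it. 2. Strive to impr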

- Java Code Obfuscation Tools

sunjing

ProGuard

Open Source Obfuscators

ProGuard

http://java-source.net/open-source/obfuscators/proguard

ProGuard is a free Java class file shrinker and obfuscator. It can detect and remove unused classes, fields, m

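- [Redis 3] Master-Slave Replication With Automatic Failover Using Redis Sentinel

bit1129

redis

Part 2 set up master-slave replication with 2.8.17, but that setup has a single point of failure at the master. To address this, Redis introduced sentinel starting with 2.6 to monitor and manage a Redis replication environment and perform automatic failover: when the master goes down, sentinel automatically elects a new master from the slaves so that the replication cluster keeps working. If the old master comes back and rejoins the cluster, it can only work as a slave.

What is Sentine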

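- Automatic Transactions in the Hibernate DAO Layer Using a Proxy

白糖_

DAO, spring, AOP, framework, Hibernate

Everyone says Spring's AOP-based automatic transaction handling is excellent, but when Hibernate is the only framework in use, opening sessions and managing transactions ourselves tends to be tedious.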

public void save(Object obj){

Session session = this.getSession();

Transaction tran = session.beginTransaction();

try

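- Reading Notes on Maven 3 in Action

braveCS

maven3

Introduction to Maven

What is it?

Is a software project management and comprehension tool (a project management tool).

It is based on the concept of a POM (Project Object Model)

[Duplicated design, duplicated code, duplicated documentation, duplicated builds: Maven eliminates as much build duplication as possible]

[Compared with XP: simplicity, communication, and feedback; test-driven development, ten-minute build, continuous integration, informative workspace]

Features: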

- The Beauty of Programming: Maximum Product of a Subarray

bylijinnan

The Beauty of Programming

public class MaxProduct {

/**

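* The Beauty of Programming: maximum product of a subarray

* Problem: given an integer array of length N, and using only multiplication (no division), find the combination of any N-1 of the numbers with the largest product, and state the algorithm's time complexity.

* The program below implements the two methods from the book to obtain the product of "the group with the largest product"; both can overflow.

* According to the problem statement, however, what is required is the subarray itself, not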
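One common division-free way to solve the problem stated above is to precompute prefix and suffix products, so that the product of all elements except `nums[i]` is `prefix[i] * suffix[i + 1]`. The sketch below is written in Python for brevity and is not the original author's Java code; the function name is an assumption. It runs in O(N) time and also returns the index of the excluded element, so the chosen group can be recovered.

```python
def max_product_excluding_one(nums):
    """Return (best product of N-1 elements, index of the excluded element)."""
    n = len(nums)
    if n < 2:
        raise ValueError("need at least two numbers")
    # prefix[i] = product of nums[0..i-1]; prefix[0] is the empty product 1.
    prefix = [1] * (n + 1)
    for i in range(n):
        prefix[i + 1] = prefix[i] * nums[i]
    # suffix[i] = product of nums[i..n-1]; suffix[n] is the empty product 1.
    suffix = [1] * (n + 1)
    for i in range(n - 1, -1, -1):
        suffix[i] = suffix[i + 1] * nums[i]
    # Excluding index i leaves prefix[i] * suffix[i + 1]; no division needed.
    best = max(range(n), key=lambda i: prefix[i] * suffix[i + 1])
    return prefix[best] * suffix[best + 1], best


print(max_product_excluding_one([-2, 3, 4]))  # (12, 0)
```

Python integers do not overflow, so the overflow caveat in the comment above applies to fixed-width types such as Java's `int` or `long`, not to this sketch.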

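- Reading Notes 2

chengxuyuancsdn

reading notes

1. Reflection

2. Oracle year-month-day hour:minute:second

3. Creating Oracle functions with and without parameters

4. Oracle rows to columns

5. Struts2 interceptors

6. Filter (web.xml)

1. Reflection

(1) Inspecting the structure of a class

The java.lang.reflect package has three classes, Field, Method, and Constructor, which describe a class's fields, methods, and constructors respectively.

2. Oracle year, month, day, hour, minute, second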

s

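- [Schooling and Real Estate] Choose an IT Training School Carefully

comsci

it

We will not discuss the teaching or the teachers at training schools here; what I mainly care about is this question:

the environment and stability of the training school's classroom buildings and dormitories

As we all know, buildings are expensive, especially the kind that can serve as classrooms...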

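- The Relationship Between the Channel (CHANNEL) Parameters PARALLELISM and FILESPERSET in RMAN Configuration

daizj

oracle, rman, filesperset, PARALLELISM

The Relationship Between the Channel (CHANNEL) Parameters PARALLELISM and FILESPERSET in RMAN Configuration (reposted)

PARALLELISM ---

We can also use the parallelism parameter to specify how many channels are created "automatically" at the same time:

RMAN > configure device type disk parallelism 3 ;

This starts three channels, which can speed up backup and recovery.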

- Simple Sorting: Bubble Sort

dieslrae

bubble sort

public void bubbleSort(int[] array){

for(int i=1;i<array.length;i++){

for(int k=0;k<array.length-i;k++){

if(array[k] > array[k+1]){
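The Java excerpt above is cut off at the comparison. A complete bubble sort with the same loop bounds can be sketched in Python as follows; the swap inside the `if` is an assumption about what the truncated code does next, and the early exit when a pass makes no swaps is an addition that is not in the original.

```python
def bubble_sort(array):
    """Sort the list in place in ascending order and return it."""
    for i in range(1, len(array)):
        swapped = False
        # After pass i, the last i elements are already in their final place.
        for k in range(len(array) - i):
            if array[k] > array[k + 1]:
                # Assumed body of the truncated if: swap the adjacent pair.
                array[k], array[k + 1] = array[k + 1], array[k]
                swapped = True
        if not swapped:
            break  # no swaps in this pass: the list is already sorted
    return array


print(bubble_sort([5, 1, 4, 2, 8]))  # [1, 2, 4, 5, 8]
```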

- Hard-to-Remember Words for the First Semester of Grade 8 (3)

dcj3sjt126com

sciet

concert 音乐会

tonight 今晚

famous 有名的;著名的

song 歌曲

thousand 千

accident 事故;灾难

careless 粗心的,大意的

break 折断;断裂;破碎

heart 心(脏)

happen 偶尔发生,碰巧

tourist 旅游者;观光者

science (自然)科学

marry 结婚

subject 题目;

- I. Installing Memcache 1. Install the dependency libevent: Memcache requires libevent, so you may need to run the following before installing (Shell code)

dcj3sjt126com

redis

wget http://download.redis.io/redis-stable.tar.gz

tar xvzf redis-stable.tar.gz

cd redis-stable

make

The first three steps should go smoothly; the main problem is that errors occurred when running make.

Error 1:

make[2]: cc: Command not found

Cause: missing installation of g

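- Concurrent Containers

shuizhaosi888

concurrent containers

Concurrent containers improve on the performance of synchronized containers. Synchronized containers achieve thread safety by serializing all access to the container's state, which severely reduces concurrency; when multiple threads access the container, throughput drops sharply.

The concurrent container ConcurrentHashMap

Replaces synchronized hash-based Maps; access is controlled through locks.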

- Spring Security (12): The Remember-Me Feature

234390216

Spring Security, Remember Me

The Remember-Me Feature

Contents

1.1 Overview

1.2 The simple encrypted-token approach

1.3 The persistent token approach

1.4 Remember-Me interfaces and implementations

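- Bitwise Operations

焦志广

bitwise operations

I. Bitwise operators. C provides six bitwise operators:

& bitwise AND

| bitwise OR

^ bitwise XOR

~ bitwise NOT

<< left shift

>> right shift

1. Bitwise AND. The bitwise AND operator "&" is a binary operator. It ANDs the corresponding binary bits of its two operands: a result bit is 1 only when both corresponding bits are 1, and 0 otherwise. The operands take part in the operation in two's complement form.

For example: 9&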
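The original example is cut off after `9&`. As an illustration only, the sketch below applies all six operators in Python, whose bitwise operators behave like C's on these small values; the second operand 5 is an assumption, since the excerpt does not show which number was used.

```python
def bitwise_demo(a, b):
    """Apply the six bitwise operators to a (and b where binary)."""
    return {
        "and": a & b,   # 1 only where both bits are 1
        "or": a | b,    # 1 where either bit is 1
        "xor": a ^ b,   # 1 where the bits differ
        "not_a": ~a,    # flips every bit; equals -a - 1 in two's complement
        "shl": a << 1,  # shift left by one: multiply by 2
        "shr": a >> 1,  # shift right by one: floor-divide by 2
    }


# 9 is 0b1001 and 5 is 0b0101 (5 is a hypothetical second operand).
print(bitwise_demo(9, 5))
```

For 9 and 5 the AND result is 0b0001, that is 1, because only the lowest bit is set in both operands.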

- nodejs Database Connections: mongodb, mysql

liguangsong

mongodb, mysql, node, database connection

1. mysql connection

Add the following to dependencies in package.json

"mysql":"~2.7.0"

Run npm install

Create the file database.js under config

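- Dynamic Compilation in Java

olive6615

java, HotSpot, jvm, dynamic compilation

Two techniques are crucial in the HotSpot virtual machine: dynamic compilation and profiling.

How does HotSpot dynamically compile Java bytecode? Java bytecode is loaded into the virtual machine in interpreted mode. HotSpot has a runtime monitor, the Profile Monitor, dedicated to monitoring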

- Cluster Deployment, Configuration, and Tuning for Storm 0.9.5

roadrunners

tuning, storm.yaml

nimbus node configuration (storm.yaml):

# Licensed to the Apache Software Foundation (ASF) under one

# or more contributor license agreements. See the NOTICE file

# distributed with this work for additional inf

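- 101 Tips for Tuning and Optimizing MySQL

tomcat_oracle

mysql

1. Have enough physical memory to load the entire InnoDB file into memory; accessing a file in memory is much faster than accessing it on disk. 2. Avoid swap at all costs: swapping reads from disk, which is slow. 3. Use battery-backed RAM (note: RAM is random-access memory). 4. Use advanced RAID (note: Redundant Arrays of Inexpensive Disks, i.e. disk arrays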

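- zoj 3829 Known Notation (greedy)

阿尔萨斯

ZOJ

Problem link: zoj 3829 Known Notation

Problem summary: given an incomplete postfix expression and two kinds of operations, make the expression complete using as few operations as possible.

Approach: greedy. The number of digits must be one more than the number of ∗; if there are too few, adding the missing digits at the front is optimal. Then scan the string once: add 1 for a digit and subtract 1 for a ∗, keeping the digit count at least 1. If there is not enough to subtract, swap with the last digit (a stack makes this very convenient), since adding and swapping both cost 1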