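1. Background

When crawling web pages, you sometimes need to submit data to the target site (a form submission) with scrapy.FormRequest. Following the official Scrapy documentation, the standard form is: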

    # Request headers
    unicornHeader = {
        'Host': 'www.example.com',
        'Referer': 'http://www.example.com/',
    }

    # Data to submit with the form
    myFormData = {'name': 'John Doe', 'age': '27'}

    # Custom data passed along to the callback via response.meta
    customerData = {'key1': 'value1', 'key2': 'value2'}

    yield scrapy.FormRequest(
        url = "http://www.example.com/post/action",
        headers = unicornHeader,
        method = 'POST',              # GET or POST
        formdata = myFormData,        # form data to submit
        meta = customerData,          # custom data, available as response.meta
        callback = self.after_post,
        errback = self.error_handle,
        # When the same URL is submitted more than once, dont_filter=True is
        # needed so the duplicate filter does not drop the request
        dont_filter = True
    )

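But how should the request be written when the form data myFormData contains a dictionary nested inside a dictionary?

2. Case study: a parameter whose value is a dictionary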

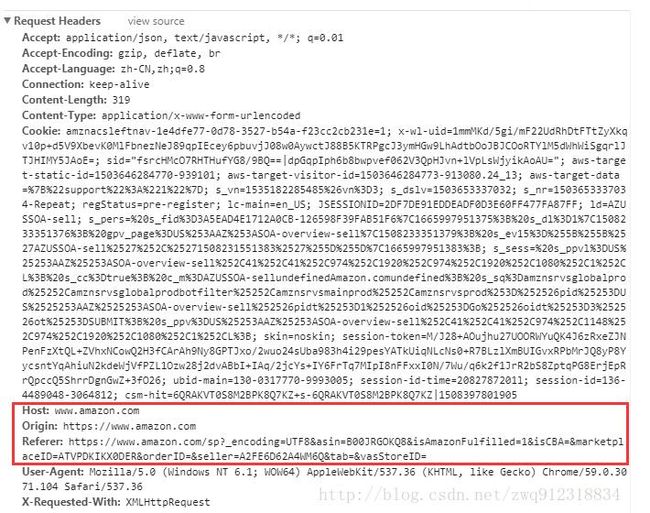

While crawling Amazon, I found that the product list inside a seller's storefront is loaded through an AJAX request that returns JSON.

The request looks like this:

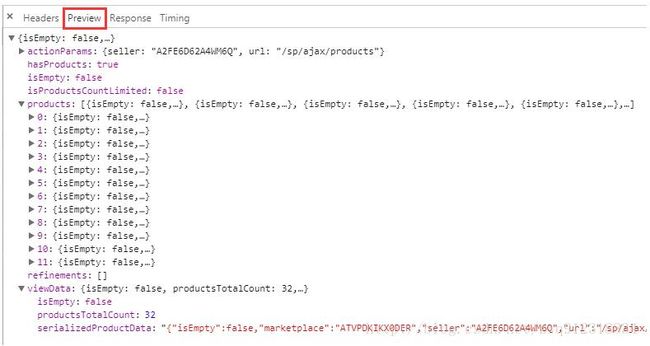

The response looks like this:

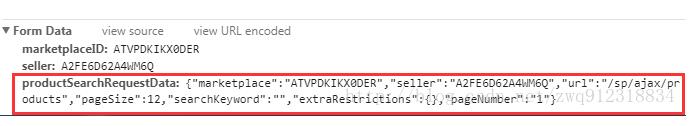

As the screenshot above shows, one of the Form Data fields holds a dictionary:

marketplaceID:ATVPDKIKX0DER

seller:A2FE6D62A4WM6Q

productSearchRequestData:{"marketplace":"ATVPDKIKX0DER","seller":"A2FE6D62A4WM6Q","url":"/sp/ajax/products","pageSize":12,"searchKeyword":"","extraRestrictions":{},"pageNumber":"1"}

    # The form data must be built like this:
    myFormData = {
        'marketplaceID' : 'ATVPDKIKX0DER',
        'seller' : 'A2FE6D62A4WM6Q',
        # Note the next line: the inner dictionary is passed as a single string
        'productSearchRequestData' :'{"marketplace":"ATVPDKIKX0DER","seller":"A2FE6D62A4WM6Q","url":"/sp/ajax/products","pageSize":12,"searchKeyword":"","extraRestrictions":{},"pageNumber":"1"}'
    }

The construction actually used for Amazon is:

    def sendRequestForProducts(self, response):
        ajaxParam = response.meta
        for pageIdx in range(1, ajaxParam['totalPageNum']+1):
            ajaxParam['isFirstAjax'] = False
            ajaxParam['pageNumber'] = pageIdx
            unicornHeader = {
                'Host': 'www.amazon.com',
                'Origin': 'https://www.amazon.com',
                'Referer': ajaxParam['referUrl'],
            }
            '''
            Form data observed in the browser:
            marketplaceID:ATVPDKIKX0DER
            seller:AYZQAQRQKEXRP
            productSearchRequestData:{"marketplace":"ATVPDKIKX0DER","seller":"AYZQAQRQKEXRP","url":"/sp/ajax/products","pageSize":12,"searchKeyword":"","extraRestrictions":{},"pageNumber":1}
            '''
            # The nested dictionary is sent as a single JSON string
            productSearchRequestData = '{"marketplace": "ATVPDKIKX0DER", "seller": "' + f'{ajaxParam["sellerID"]}' + '","url": "/sp/ajax/products", "pageSize": 12, "searchKeyword": "","extraRestrictions": {}, "pageNumber": "' + str(pageIdx) + '"}'
            formdataProduct = {
                'marketplaceID': ajaxParam['marketplaceID'],
                'seller': ajaxParam['sellerID'],
                'productSearchRequestData': productSearchRequestData
            }
            productAjaxMeta = ajaxParam
            # Request the seller's product list
            yield scrapy.FormRequest(
                url = 'https://www.amazon.com/sp/ajax/products',
                headers = unicornHeader,
                formdata = formdataProduct,
                method = 'POST',
                meta = productAjaxMeta,
                callback = self.solderProductAjax,
                errback = self.error,   # handle HTTP errors
                dont_filter = True,     # required here: the same URL is requested for every page
            )
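Building the JSON string by hand is easy to get wrong, so the concatenation above could also be written with json.dumps. The following is a minimal sketch under stated assumptions: the helper name build_product_formdata and its parameters are hypothetical and not part of the original spider, and it assumes the endpoint accepts the compact JSON form shown in the captured request.

```python
import json


def build_product_formdata(marketplace_id, seller_id, page_number, page_size=12):
    """Return a flat formdata dict whose nested part is one JSON string.

    Hypothetical helper for illustration; the field names are the ones
    shown in the captured request.
    """
    search_request = {
        "marketplace": marketplace_id,
        "seller": seller_id,
        "url": "/sp/ajax/products",
        "pageSize": page_size,
        "searchKeyword": "",
        "extraRestrictions": {},
        "pageNumber": str(page_number),
    }
    return {
        "marketplaceID": marketplace_id,
        "seller": seller_id,
        # Serialize the inner dictionary so that every formdata value is a str
        "productSearchRequestData": json.dumps(search_request, separators=(",", ":")),
    }


formdata = build_product_formdata("ATVPDKIKX0DER", "A2FE6D62A4WM6Q", 1)
print(formdata["productSearchRequestData"])
```

The returned dictionary contains only string values, so it can be passed directly as the formdata argument of scrapy.FormRequest.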

3. How it works

As an example, take the following data:

formdata = {

'Field': {"pageIdx":99, "size":"10"},

'func': 'nextPage',

}

In the browser, the request data looks like this:

Field=%7B%22pageIdx%22%3A99%2C%22size%22%3A%2210%22%7D&func=nextPage
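To see what the server actually receives, the captured body can be decoded with the standard library. This small sketch only uses urllib.parse and json; the variable names are illustrative.

```python
import json
from urllib.parse import parse_qs

# The request body exactly as captured in the browser
body = "Field=%7B%22pageIdx%22%3A99%2C%22size%22%3A%2210%22%7D&func=nextPage"

# parse_qs reverses the percent-encoding and groups values by field name
fields = parse_qs(body)
field_json = fields["Field"][0]

# The Field value is plain JSON text, which decodes back to a dictionary
payload = json.loads(field_json)

print(field_json)
print(payload)
```

The Field value arrives as one JSON string, not as separate form fields, which is why the client has to send it as a string too.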

First approach: sending the request as follows gives the correct result:

    yield scrapy.FormRequest(
        url = 'https://www.example.com/sp/ajax',
        headers = unicornHeader,
        formdata = {
            # The inner dictionary is written as one string
            'Field': '{"pageIdx":99,"size":"10"}',
            'func': 'nextPage',
        },
        method = 'POST',
        callback = self.handleFunc,
    )

    # Request data sent: Field=%7B%22pageIdx%22%3A99%2C%22size%22%3A%2210%22%7D&func=nextPage

Second approach: sending the request as follows is wrong and does not return the expected data:

    yield scrapy.FormRequest(
        url = 'https://www.example.com/sp/ajax',
        headers = unicornHeader,
        formdata = {
            # Wrong: the inner value is a real dictionary, not a string
            'Field': {"pageIdx":99, "size":"10"},
            'func': 'nextPage',
        },
        method = 'POST',
        callback = self.handleFunc,
    )

    # After the faulty encoding, the request sent is: Field=size&Field=pageIdx&func=nextPage

Let's trace through the Scrapy source code:

    # E:/Miniconda/Lib/site-packages/scrapy/http/request/form.py

    # FormRequest
    class FormRequest(Request):

        def __init__(self, *args, **kwargs):
            formdata = kwargs.pop('formdata', None)
            if formdata and kwargs.get('method') is None:
                kwargs['method'] = 'POST'

            super(FormRequest, self).__init__(*args, **kwargs)

            if formdata:
                items = formdata.items() if isinstance(formdata, dict) else formdata
                querystr = _urlencode(items, self.encoding)
                if self.method == 'POST':
                    self.headers.setdefault(b'Content-Type', b'application/x-www-form-urlencoded')
                    self._set_body(querystr)
                else:
                    self._set_url(self.url + ('&' if '?' in self.url else '?') + querystr)

    # The key function: _urlencode
    def _urlencode(seq, enc):
        values = [(to_bytes(k, enc), to_bytes(v, enc))
                  for k, vs in seq
                  for v in (vs if is_listlike(vs) else [vs])]
        return urlencode(values, doseq=1)

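The analysis goes as follows.

Step 1: items = formdata.items() if isinstance(formdata, dict) else formdata

After items() runs, the original dict becomes the following sequence of (key, value) pairs:

    dict_items([('func', 'nextPage'), ('Field', {'size': '10', 'pageIdx': 99})])

Step 2: _urlencode then turns items into:

    [(b'func', b'nextPage'), (b'Field', b'size'), (b'Field', b'pageIdx')]

The problem arises inside _urlencode. is_listlike treats the inner dictionary as a list-like value, and iterating over a dictionary yields only its keys. Each key of the inner dictionary is therefore sent as a separate value of Field, and the inner values are lost.

Solution: treat the dictionary as an ordinary string, which is then encoded to bytes and transmitted. The server decodes it back into the dictionary string and parses it as a dictionary.

Extension: other special data types can be packed into a string and passed the same way.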
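The behaviour described above can be reproduced without Scrapy. The sketch below re-implements the logic of _urlencode using only the standard library; is_listlike and to_bytes here are simplified stand-ins written for this example (assumptions, not the Scrapy functions themselves), and urlencode_like_scrapy is a hypothetical name.

```python
from urllib.parse import urlencode


def is_listlike(value):
    # Simplified stand-in: any iterable that is not str/bytes counts as list-like
    return hasattr(value, "__iter__") and not isinstance(value, (str, bytes))


def to_bytes(text, encoding="utf-8"):
    # Simplified stand-in: accept only str or bytes, as the real check does
    if isinstance(text, bytes):
        return text
    if not isinstance(text, str):
        raise TypeError("to_bytes must receive a str or bytes object, got %s"
                        % type(text).__name__)
    return text.encode(encoding)


def urlencode_like_scrapy(formdata, encoding="utf-8"):
    # Same shape as _urlencode: list-like values are expanded element by element
    values = [(to_bytes(k, encoding), to_bytes(v, encoding))
              for k, vs in formdata.items()
              for v in (vs if is_listlike(vs) else [vs])]
    return urlencode(values, doseq=True)


# Inner dictionary passed as a dict: iterating it yields only the keys
nested = {"Field": {"pageIdx": 99, "size": "10"}, "func": "nextPage"}
wrong_body = urlencode_like_scrapy(nested)

# Inner dictionary passed as a string: the whole JSON text is encoded
flat = {"Field": '{"pageIdx":99,"size":"10"}', "func": "nextPage"}
right_body = urlencode_like_scrapy(flat)

print(wrong_body)
print(right_body)
```

On Python 3.7 and later, dictionaries keep insertion order, so the keys appear as pageIdx then size rather than in the order shown in the older log above.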

4. Supplement 1: parameter types

Every value in formdata must be a unicode, str or bytes object; it cannot be an integer.

Example:

    yield FormRequest(
        url = 'https://www.amztracker.com/unicorn.php',
        headers = unicornHeader,
        # formdata values must be strings; the integer 10 below triggers the error
        formdata={'rank': 10, 'category': productDetailInfo['topCategory']},
        method = 'GET',
        meta = {'productDetailInfo': productDetailInfo},
        callback = self.amztrackerSale,
        errback = self.error,   # in this project, most errback calls are triggered here
        dont_filter = True,     # strictly speaking, this parameter should not be needed
    )

    # This raises the following error:

Traceback (most recent call last):

File "E:\Miniconda\lib\site-packages\scrapy\utils\defer.py", line 102, in iter_errback

yield next(it)

File "E:\Miniconda\lib\site-packages\scrapy\spidermiddlewares\offsite.py", line 29, in process_spider_output

for x in result:

File "E:\Miniconda\lib\site-packages\scrapy\spidermiddlewares\referer.py", line 339, in

return (_set_referer(r) for r in result or ())

File "E:\Miniconda\lib\site-packages\scrapy\spidermiddlewares\urllength.py", line 37, in

return (r for r in result or () if _filter(r))

File "E:\Miniconda\lib\site-packages\scrapy\spidermiddlewares\depth.py", line 58, in

return (r for r in result or () if _filter(r))

File "E:\PyCharmCode\categorySelectorAmazon1\categorySelectorAmazon1\spiders\categorySelectorAmazon1Clawer.py", line 224, in parseProductDetail

dont_filter = True,

File "E:\Miniconda\lib\site-packages\scrapy\http\request\form.py", line 31, in __init__

querystr = _urlencode(items, self.encoding)

File "E:\Miniconda\lib\site-packages\scrapy\http\request\form.py", line 66, in _urlencode

for k, vs in seq

File "E:\Miniconda\lib\site-packages\scrapy\http\request\form.py", line 67, in

for v in (vs if is_listlike(vs) else [vs])]

File "E:\Miniconda\lib\site-packages\scrapy\utils\python.py", line 117, in to_bytes

'object, got %s' % type(text).__name__)

TypeError: to_bytes must receive a unicode, str or bytes object, got int

    # Correct form:
    formdata = {'rank': str(productDetailInfo['topRank']), 'category': productDetailInfo['topCategory']},

The underlying source code:

    # Stage 1: the dictionary is broken up into items
    if formdata:
        items = formdata.items() if isinstance(formdata, dict) else formdata
        querystr = _urlencode(items, self.encoding)

    # Stage 2: to_bytes is called on every key and value
    def _urlencode(seq, enc):
        values = [(to_bytes(k, enc), to_bytes(v, enc))
                  for k, vs in seq
                  for v in (vs if is_listlike(vs) else [vs])]
        return urlencode(values, doseq=1)

    # Stage 3: to_bytes accepts only bytes or str and raises TypeError otherwise
    def to_bytes(text, encoding=None, errors='strict'):
        """Return the binary representation of `text`. If `text`
        is already a bytes object, return it as-is."""
        if isinstance(text, bytes):
            return text
        if not isinstance(text, six.string_types):
            raise TypeError('to_bytes must receive a unicode, str or bytes '
                            'object, got %s' % type(text).__name__)
        if encoding is None:
            encoding = 'utf-8'
        return text.encode(encoding, errors)
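Since to_bytes rejects anything that is not str or bytes, one option is to normalize the dictionary before handing it to FormRequest. The helper below is a hypothetical sketch (stringify_formdata is not a Scrapy function): it leaves str and bytes untouched, serializes nested dictionaries as JSON on the assumption that the server expects JSON, and converts everything else with str().

```python
import json


def stringify_formdata(data):
    """Return a copy of `data` whose values are safe to pass as formdata."""
    result = {}
    for key, value in data.items():
        if isinstance(value, (str, bytes)):
            # Already acceptable to to_bytes
            result[key] = value
        elif isinstance(value, dict):
            # Nested dictionary: send it as one JSON string
            result[key] = json.dumps(value, separators=(",", ":"))
        elif isinstance(value, (list, tuple)):
            # A list means several values for one field; stringify each element
            result[key] = [v if isinstance(v, (str, bytes)) else str(v) for v in value]
        else:
            # int, float and other scalars
            result[key] = str(value)
    return result


clean = stringify_formdata({"rank": 10, "category": "Toys", "filter": {"page": 1}})
print(clean)
```

Note that str() on a boolean gives 'True' or 'False', which may not be what a given server expects, so such fields are better converted explicitly.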

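5. Supplement 2: Chinese-language parameters

As noted above, every value in formdata must be a unicode, str or bytes object, so a value that has already been encoded to bytes is accepted.

Take a product search on 1688.com as an example.

The search request is shown below (the search keyword is 动漫周边, "anime merchandise"):

In the request, 动漫周边 appears as %B6%AF%C2%FE%D6%DC%B1%DF, which is the percent-encoding of its GBK bytes.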

    # In Scrapy, this request is built as follows
    unicornHeaders = {
        ':authority': 's.1688.com',
        'Referer': 'https://www.1688.com/',
    }

    # In Python 3, every str is Unicode, so encode the keyword to GBK bytes first
    # Percent-encoded, the GBK bytes of 动漫周边 are %B6%AF%C2%FE%D6%DC%B1%DF
    formatStr = "动漫周边".encode('gbk')
    print(f"formatStr = {formatStr}")

    yield FormRequest(
        url = 'https://s.1688.com/selloffer/offer_search.htm',
        headers = unicornHeaders,
        formdata = {'keywords': formatStr, 'n': 'y', 'spm': 'a260k.635.1998096057.d1'},
        method = 'GET',
        meta={},
        callback = self.parseCategoryPage,
        errback = self.error,   # in this project, most errback calls are triggered here
        dont_filter = True,     # strictly speaking, this parameter should not be needed
    )

    # Log output:

formatStr = b'\xb6\xaf\xc2\xfe\xd6\xdc\xb1\xdf'

2017-11-16 15:11:02 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (302) to from

# https://s.1688.com/selloffer/offer_search.htm?keywords=%B6%AF%C2%FE%D6%DC%B1%DF&n=y&spm=a260k.635.1998096057.d1
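The encoding step can be checked on its own with urllib.parse. This sketch assumes nothing beyond the standard library; it shows that percent-encoding the GBK bytes gives the string seen in the request, while the default UTF-8 encoding gives a different one.

```python
from urllib.parse import quote, unquote

keyword = "动漫周边"

# Percent-encode the GBK bytes, which is what the site expects
gbk_encoded = quote(keyword.encode("gbk"))

# Percent-encode the UTF-8 bytes for comparison
utf8_encoded = quote(keyword.encode("utf-8"))

# Decoding with the matching charset restores the original keyword
decoded = unquote(gbk_encoded, encoding="gbk")

print(gbk_encoded)
print(utf8_encoded)
print(decoded)
```

Passing the keyword as a plain str would make Scrapy encode it with the request's encoding (UTF-8 by default), so encoding it to GBK bytes beforehand is what keeps the query string in the form the site uses.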
