Hadoop_16_flume

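Table of contents

- flume

- Installing and deploying flume

- Collection examples

- Collecting a directory into HDFS with flume

- Monitoring changes to a file with flume

- Chaining multiple flume agents

- Installing flume on node02

- Configuring flume on node02

- Script on node02 that appends data to the file

- flume configuration file on node03

- Startup order

- flume failover (high availability)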

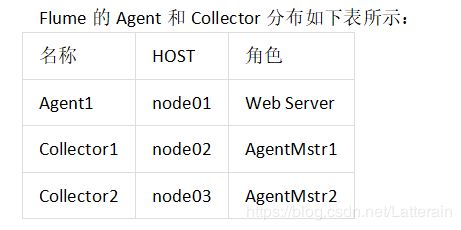

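- Role assignment

- Installing and configuring flume on node01 and copying the script files

- Configuring the flume collectors on node02 and node03

- Startup commands, in order

- Failover test

- flume load balancing (load balancer)

- Role assignment

- flume configuration for node01

- flume configuration for node02

- flume configuration for node03

- Starting the flume services

- Running the script on node01 to generate data

- Using flume's static interceptor to tag the data type

- Data flow analysis

- Implementation

- Custom flume interceptors

- Implementation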

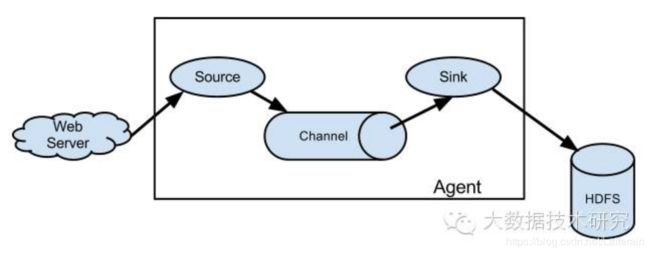

flume

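Flume is a distributed, reliable, and highly available system for collecting, aggregating, and transporting large volumes of log data.

See the official documentation for details.

Flume can collect source data in many forms, such as files, directories, socket packets, and Kafka, and it can deliver (sink) the collected data to external storage systems such as HDFS, HBase, Hive, and Kafka.

How flume runs: three components

source: the collection component; it connects to the data source

channel: the pipe; a buffer that connects the source to the sink

sink: the delivery component; it connects to the destination and determines where the data is written.

Together these three components form one running flume instance, which is called an agent.

Think of the channel as a pipe that carries everything the source collects to the sink's destination.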
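The division of labour among the three components can be sketched in a few lines of Python. This is a toy model only, not Flume's implementation: `MemoryChannel` and `run_agent` are hypothetical names, and a real agent runs its source and sink concurrently and uses transactions.

```python
from collections import deque


class MemoryChannel:
    """Toy stand-in for a channel: a bounded FIFO buffer between source and sink."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.events = deque()

    def put(self, event):
        # The source side calls put(); a full buffer rejects the event.
        if len(self.events) >= self.capacity:
            raise OverflowError("channel is full")
        self.events.append(event)

    def take(self):
        # The sink side calls take(); None means the buffer is empty.
        return self.events.popleft() if self.events else None


def run_agent(source_lines, channel, sink_output):
    """Move every line from the source, through the channel, to the sink."""
    for line in source_lines:
        channel.put(line)
    while (event := channel.take()) is not None:
        sink_output.append(event)
    return sink_output


delivered = run_agent(["line 1", "line 2"], MemoryChannel(capacity=1000), [])
```

The capacity argument plays the role that `capacity` plays for the memory channel in the configuration files that follow.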

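Installing and deploying flume

Prerequisite: a working Hadoop environment. Upload the installation package to the node where the data source lives.

- Download, extract, and edit the configuration file

Here we install flume on the third machine (node03).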

tar -zxvf flume-ng-1.6.0-cdh5.14.0.tar.gz -C /export/servers/

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

cp flume-env.sh.template flume-env.sh

vim flume-env.sh

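export JAVA_HOME=/export/servers/jdk1.8.0_141

- Write the configuration file

Describe the collection plan in a configuration file according to your collection requirements (the file name is arbitrary).

This first example configures collection from the network.

Create a new configuration file (the collection plan) in flume's conf directory:

vim /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf/netcat-logger.conf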

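# Name the components of this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe and configure the source component: r1

a1.sources.r1.type = netcat

# Bind to the address of the machine running this agent (node03 in this setup)

a1.sources.r1.bind = 192.168.190.130

a1.sources.r1.port = 44444

# Describe and configure the sink component: k1

a1.sinks.k1.type = logger

# Describe and configure the channel component; here events are buffered in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Wire the source and the sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1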
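A common mistake in files like this one is to declare a component but forget to bind it to a channel. The following Python sketch checks that wiring before the agent is started. It is not part of flume: `parse_properties` and `wiring_problems` are hypothetical helpers, and `CONFIG` is a shortened copy of the settings above.

```python
CONFIG = """
a1.sources = r1
a1.sinks = k1
a1.channels = c1
a1.sources.r1.type = netcat
a1.sinks.k1.type = logger
a1.channels.c1.type = memory
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
"""


def parse_properties(text):
    """Parse 'key = value' lines, skipping blank lines and # comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def wiring_problems(props, agent):
    """List sources and sinks that are not bound to a declared channel."""
    problems = []
    channels = set(props.get(f"{agent}.channels", "").split())
    for source in props.get(f"{agent}.sources", "").split():
        bound = set(props.get(f"{agent}.sources.{source}.channels", "").split())
        if not bound or not (bound <= channels):
            problems.append(f"source {source} is not bound to a declared channel")
    for sink in props.get(f"{agent}.sinks", "").split():
        if props.get(f"{agent}.sinks.{sink}.channel") not in channels:
            problems.append(f"sink {sink} is not bound to a declared channel")
    return problems


problems = wiring_problems(parse_properties(CONFIG), "a1")
```

Note the asymmetry the check relies on: a source uses the plural key `channels`, while a sink uses the singular key `channel`.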

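- Start the agent

Start the flume agent on the appropriate node, pointing it at the collection plan's configuration file.

Start with the simplest possible example to check that the environment works.

Start the agent to collect data:

bin/flume-ng agent -c conf -f conf/netcat-logger.conf -n a1 -Dflume.root.logger=INFO,console

-c conf: the directory containing flume's own configuration files

-f conf/netcat-logger.conf: the collection plan we wrote

-n a1: the name of this agent

- Install telnet for testing

Install the telnet client on node02 to simulate sending data:

yum -y install telnet

telnet node03 44444 # use telnet to simulate sending data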

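Collection examples

Collecting a directory into HDFS with flume

Requirement: monitor all files in a directory; whenever a file appears in the directory, collect its content and upload it to HDFS.

flume: the source watches a directory for new files and, when one appears, collects its content and sends it to HDFS.

sink: HDFS sink

source:

channel: memory channel

Controlling how often flume rolls files: two strategies

- Roll when the file reaches a given size, for example 127.9 MB (size-based)

- Roll every two hours (time-based)

HDFS sink roll policy: when writing to HDFS, avoid producing a large number of small files by controlling how often flume rolls to a new file:

How long to wait before rolling (time)

How large a file may grow before rolling (size)

The settings below control both the time-based and the size-based policy. The values are deliberately tiny so that results show up quickly in a demo.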

a1.sinks.k1.hdfs.round = true

a1.sinks.k1.hdfs.roundValue = 10

a1.sinks.k1.hdfs.roundUnit = minute

# Roll policy: by time (seconds), by size (bytes), and by event count

a1.sinks.k1.hdfs.rollInterval = 3

a1.sinks.k1.hdfs.rollSize = 20

a1.sinks.k1.hdfs.rollCount = 5

a1.sinks.k1.hdfs.batchSize = 1

a1.sinks.k1.hdfs.useLocalTimeStamp = true
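How the three roll settings interact can be sketched as follows. This is a simplified model rather than the sink's actual code: `RollPolicy` and `should_roll` are hypothetical names, and the sketch assumes only that a value of 0 disables a trigger and that the first trigger reached closes the current file.

```python
from dataclasses import dataclass


@dataclass
class RollPolicy:
    roll_interval: int  # seconds (hdfs.rollInterval); 0 disables this trigger
    roll_size: int      # bytes (hdfs.rollSize); 0 disables this trigger
    roll_count: int     # events (hdfs.rollCount); 0 disables this trigger


def should_roll(policy, age_seconds, bytes_written, events_written):
    """Return True as soon as any enabled trigger is reached."""
    by_time = policy.roll_interval > 0 and age_seconds >= policy.roll_interval
    by_size = policy.roll_size > 0 and bytes_written >= policy.roll_size
    by_count = policy.roll_count > 0 and events_written >= policy.roll_count
    return by_time or by_size or by_count


# The demo values used in this section: 3 seconds, 20 bytes, 5 events.
demo = RollPolicy(roll_interval=3, roll_size=20, roll_count=5)
```

With these demo values a file is closed after only 20 bytes, which is why the example produces many small files; larger values produce fewer, larger files.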

The flume configuration file

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

mkdir -p /export/servers/dirfile

vim spooldir.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

## Note: do not drop a file with the same name into the monitored directory more than once

a1.sources.r1.type = spooldir

a1.sources.r1.spoolDir = /export/servers/dirfile

a1.sources.r1.fileHeader = true

# Describe the sink

a1.sinks.k1.type = hdfs

a1.sinks.k1.channel = c1

a1.sinks.k1.hdfs.path = hdfs://node01:8020/spooldir/files/%y-%m-%d/%H%M/

a1.sinks.k1.hdfs.filePrefix = events-

a1.sinks.k1.hdfs.round = true

a1.sinks.k1.hdfs.roundValue = 10

a1.sinks.k1.hdfs.roundUnit = minute

a1.sinks.k1.hdfs.rollInterval = 3

a1.sinks.k1.hdfs.rollSize = 20

a1.sinks.k1.hdfs.rollCount = 5

a1.sinks.k1.hdfs.batchSize = 1

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# File type to generate. The default is SequenceFile; DataStream produces plain text

a1.sinks.k1.hdfs.fileType = DataStream

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1
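The `round`, `roundValue`, and `roundUnit` settings decide which directory an event lands in: the timestamp is rounded down to a multiple of 10 minutes before the `%y-%m-%d/%H%M` escapes in `hdfs.path` are filled in. A minimal Python sketch of that calculation, assuming minute-level rounding only (`bucket_path` is a hypothetical helper, not a flume API):

```python
from datetime import datetime


def bucket_path(timestamp, round_value_minutes):
    """Floor the timestamp to a multiple of round_value_minutes, then format it."""
    floored_minute = timestamp.minute - timestamp.minute % round_value_minutes
    floored = timestamp.replace(minute=floored_minute, second=0, microsecond=0)
    # strftime's %y, %m, %d, %H and %M mean the same as the escapes used in hdfs.path.
    return floored.strftime("/spooldir/files/%y-%m-%d/%H%M/")


example = bucket_path(datetime(2018, 1, 1, 12, 37, 45), 10)
```

Every event stamped between 12:30:00 and 12:39:59 therefore shares one directory, so a new directory appears at most every 10 minutes.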

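Start flume

bin/flume-ng agent -c ./conf -f ./conf/spooldir.conf -n a1 -Dflume.root.logger=INFO,console

Upload a file into /export/servers/dirfile and flume collects its content and writes it to HDFS (hdfs://node01:8020).

For example, once hello.txt (a plain English text file) has been uploaded to that directory and collected, it is renamed to hello.txt.COMPLETED.

You can write a script that runs periodically and checks whether the source data has decreased and whether the destination data has increased.

If it has not, kill flume and restart it.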
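The source-side half of such a periodic check could look like the Python sketch below. It assumes that a collected file is renamed with the `.COMPLETED` suffix, as in the hello.txt example; `pending_files` and `looks_stalled` are hypothetical helpers, and the destination-side check against HDFS is left out.

```python
import tempfile
from pathlib import Path


def pending_files(spool_dir):
    """Names of files in spool_dir that have not been marked .COMPLETED."""
    return sorted(
        entry.name
        for entry in Path(spool_dir).iterdir()
        if entry.is_file() and not entry.name.endswith(".COMPLETED")
    )


def looks_stalled(previous_pending, current_pending):
    """True if some file was pending at both checks, so nothing collected it in between."""
    return bool(set(previous_pending) & set(current_pending))


# Demo on a temporary directory instead of /export/servers/dirfile.
with tempfile.TemporaryDirectory() as demo_dir:
    (Path(demo_dir) / "hello.txt.COMPLETED").write_text("done")
    (Path(demo_dir) / "new.txt").write_text("waiting")
    demo_pending = pending_files(demo_dir)
```

A scheduler such as cron could call `pending_files` at a fixed interval and restart the agent when `looks_stalled` returns True for two consecutive runs.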

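Monitoring changes to a file with flume

Requirement: a business system writes logs with log4j, and the log content keeps growing. The data appended to the log file must be collected into HDFS in real time.

Based on this requirement, first define the following three elements:

- Source: monitors updates to the file content: exec 'tail -F file'

- Sink: the HDFS file system: hdfs sink

- Channel between source and sink: either a file channel or a memory channel

Define the flume configuration file

Write the configuration file on node03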

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim tail-file.conf

agent1.sources = source1

agent1.sinks = sink1

agent1.channels = channel1

# Describe/configure tail -F source1

agent1.sources.source1.type = exec

agent1.sources.source1.command = tail -F /export/servers/taillogs/access_log

agent1.sources.source1.channels = channel1

#configure host for source

#agent1.sources.source1.interceptors = i1

#agent1.sources.source1.interceptors.i1.type = host

#agent1.sources.source1.interceptors.i1.hostHeader = hostname

# Describe sink1

agent1.sinks.sink1.type = hdfs

#a1.sinks.k1.channel = c1

agent1.sinks.sink1.hdfs.path = hdfs://node01:8020/weblog/flume-collection/%y-%m-%d/%H-%M

agent1.sinks.sink1.hdfs.filePrefix = access_log

agent1.sinks.sink1.hdfs.maxOpenFiles = 5000

agent1.sinks.sink1.hdfs.batchSize= 100

agent1.sinks.sink1.hdfs.fileType = DataStream

agent1.sinks.sink1.hdfs.writeFormat =Text

agent1.sinks.sink1.hdfs.rollSize = 102400

agent1.sinks.sink1.hdfs.rollCount = 1000000

agent1.sinks.sink1.hdfs.rollInterval = 60

agent1.sinks.sink1.hdfs.round = true

agent1.sinks.sink1.hdfs.roundValue = 10

agent1.sinks.sink1.hdfs.roundUnit = minute

agent1.sinks.sink1.hdfs.useLocalTimeStamp = true

# Use a channel which buffers events in memory

agent1.channels.channel1.type = memory

agent1.channels.channel1.keep-alive = 120

agent1.channels.channel1.capacity = 500000

agent1.channels.channel1.transactionCapacity = 600

# Bind the source and sink to the channel

agent1.sources.source1.channels = channel1

agent1.sinks.sink1.channel = channel1

Write a shell script that periodically appends to the file

mkdir -p /export/servers/shells/

cd /export/servers/shells/

vim tail-file.sh

#!/bin/bash

while true

do

date >> /export/servers/taillogs/access_log;

sleep 0.5;

done

Create the directory

mkdir -p /export/servers/taillogs

Start the shell script

sh /export/servers/shells/tail-file.sh

Start flume

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -c conf -f conf/tail-file.conf -n agent1 -Dflume.root.logger=INFO,console

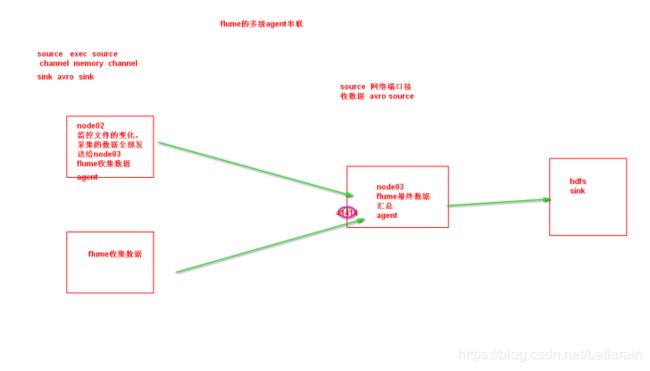

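Chaining multiple flume agents

The first agent collects data from a file and sends it over the network to the second agent. The second agent receives that data and saves it to HDFS.

Installing flume on node02

Copy the extracted flume directory from node03 to node02 (run on node03):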

cd /export/servers

scp -r apache-flume-1.6.0-cdh5.14.0-bin/ node02:$PWD

Configuring flume on node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim tail-avro-avro-logger.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /export/servers/taillogs/access_log

a1.sources.r1.channels = c1

# Describe the sink

## The avro sink acts as the data sender

a1.sinks = k1

a1.sinks.k1.type = avro

a1.sinks.k1.channel = c1

a1.sinks.k1.hostname = 192.168.190.130

a1.sinks.k1.port = 4141

a1.sinks.k1.batch-size = 10

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

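Script on node02 that appends data to the file

Copy the script and log directories from node03 to node02 (run on node03):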

cd /export/servers

scp -r shells/ taillogs/ node02:$PWD

flume configuration file on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim avro-hdfs.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

## The avro source acts as the receiving service

a1.sources.r1.type = avro

a1.sources.r1.channels = c1

a1.sources.r1.bind = 192.168.190.130

a1.sources.r1.port = 4141

# Describe the sink

a1.sinks.k1.type = hdfs

a1.sinks.k1.hdfs.path = hdfs://node01:8020/avro/hdfs/%y-%m-%d/%H%M/

a1.sinks.k1.hdfs.filePrefix = events-

a1.sinks.k1.hdfs.round = true

a1.sinks.k1.hdfs.roundValue = 10

a1.sinks.k1.hdfs.roundUnit = minute

a1.sinks.k1.hdfs.rollInterval = 3

a1.sinks.k1.hdfs.rollSize = 20

a1.sinks.k1.hdfs.rollCount = 5

a1.sinks.k1.hdfs.batchSize = 1

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# File type to generate. The default is SequenceFile; DataStream produces plain text

a1.sinks.k1.hdfs.fileType = DataStream

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

Startup order

Start the flume process on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -c conf -f conf/avro-hdfs.conf -n a1 -Dflume.root.logger=INFO,console

Start the flume process on node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/

bin/flume-ng agent -c conf -f conf/tail-avro-avro-logger.conf -n a1 -Dflume.root.logger=INFO,console

Start the shell script on node02 to generate data

cd /export/servers/shells

sh tail-file.sh
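The order matters because the avro sink on node02 connects to the avro source on node03, so the receiver has to be listening first. One way to confirm this before starting the upstream agent is a plain TCP probe, sketched here in Python with the standard library only (`port_is_open` is a hypothetical helper, not part of flume):

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names.
        return False
```

For the setup above the call would be `port_is_open("node03", 4141)`, run on node02 before starting its agent.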

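flume failover (high availability)

With flume's failover mechanism, collected data is sent to downstream agents, and the downstream tier is made highly available through flume configuration.

Role assignment

Installing and configuring flume on node01 and copying the script files

Copy the flume installation and the two file-generation directories from node03 to node01.

Run the following commands on node03: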

cd /export/servers

scp -r apache-flume-1.6.0-cdh5.14.0-bin/ node01:$PWD

scp -r shells/ taillogs/ node01:$PWD

Write the agent configuration file on node01

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim agent.conf

#agent1 name

agent1.channels = c1

agent1.sources = r1

agent1.sinks = k1 k2

#

##set group

agent1.sinkgroups = g1

#

##set channel

agent1.channels.c1.type = memory

agent1.channels.c1.capacity = 1000

agent1.channels.c1.transactionCapacity = 100

#

agent1.sources.r1.channels = c1

agent1.sources.r1.type = exec

agent1.sources.r1.command = tail -F /export/servers/taillogs/access_log

#

agent1.sources.r1.interceptors = i1 i2

agent1.sources.r1.interceptors.i1.type = static

agent1.sources.r1.interceptors.i1.key = Type

agent1.sources.r1.interceptors.i1.value = LOGIN

agent1.sources.r1.interceptors.i2.type = timestamp

#

## set sink1

agent1.sinks.k1.channel = c1

agent1.sinks.k1.type = avro

agent1.sinks.k1.hostname = node02

agent1.sinks.k1.port = 52020

#

## set sink2

agent1.sinks.k2.channel = c1

agent1.sinks.k2.type = avro

agent1.sinks.k2.hostname = node03

agent1.sinks.k2.port = 52020

#

##set sink group

agent1.sinkgroups.g1.sinks = k1 k2

#

##set failover

agent1.sinkgroups.g1.processor.type = failover

agent1.sinkgroups.g1.processor.priority.k1 = 10

agent1.sinkgroups.g1.processor.priority.k2 = 1

agent1.sinkgroups.g1.processor.maxpenalty = 10000

#

Configuring the flume collectors on node02 and node03

Edit the configuration file on node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim collector.conf

#set Agent name

a1.sources = r1

a1.channels = c1

a1.sinks = k1

#

##set channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

#

## other node,nna to nns

a1.sources.r1.type = avro

a1.sources.r1.bind = node02

a1.sources.r1.port = 52020

a1.sources.r1.interceptors = i1

a1.sources.r1.interceptors.i1.type = static

a1.sources.r1.interceptors.i1.key = Collector

a1.sources.r1.interceptors.i1.value = node02

a1.sources.r1.channels = c1

#

##set sink to hdfs

a1.sinks.k1.type=hdfs

a1.sinks.k1.hdfs.path= hdfs://node01:8020/flume/failover/

a1.sinks.k1.hdfs.fileType=DataStream

a1.sinks.k1.hdfs.writeFormat=TEXT

a1.sinks.k1.hdfs.rollInterval=10

a1.sinks.k1.channel=c1

a1.sinks.k1.hdfs.filePrefix=%Y-%m-%d

#

Edit the configuration file on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim collector.conf

#set Agent name

a1.sources = r1

a1.channels = c1

a1.sinks = k1

#

##set channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

#

## other node,nna to nns

a1.sources.r1.type = avro

a1.sources.r1.bind = node03

a1.sources.r1.port = 52020

a1.sources.r1.interceptors = i1

a1.sources.r1.interceptors.i1.type = static

a1.sources.r1.interceptors.i1.key = Collector

a1.sources.r1.interceptors.i1.value = node03

a1.sources.r1.channels = c1

#

##set sink to hdfs

a1.sinks.k1.type=hdfs

a1.sinks.k1.hdfs.path= hdfs://node01:8020/flume/failover/

a1.sinks.k1.hdfs.fileType=DataStream

a1.sinks.k1.hdfs.writeFormat=TEXT

a1.sinks.k1.hdfs.rollInterval=10

a1.sinks.k1.channel=c1

a1.sinks.k1.hdfs.filePrefix=%Y-%m-%d

Startup commands, in order

Start flume on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n a1 -c conf -f conf/collector.conf -Dflume.root.logger=DEBUG,console

Start flume on node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n a1 -c conf -f conf/collector.conf -Dflume.root.logger=DEBUG,console

Start flume on node01

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n agent1 -c conf -f conf/agent.conf -Dflume.root.logger=DEBUG,console

Start the file-generating script on node01

cd /export/servers/shells

sh tail-file.sh

Failover test

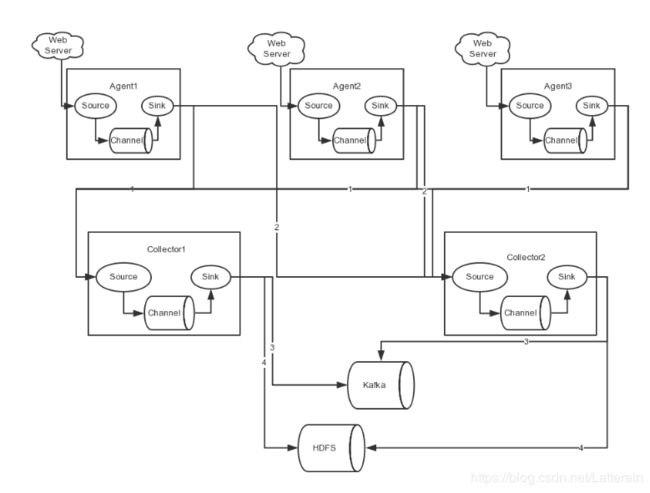

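This tests the high availability (failover) of the Flume NG cluster.

Scenario: we upload files on the Agent1 node. Because Collector1 is configured with a higher priority than Collector2, Collector1 collects the data and uploads it to the storage system first. We then kill Collector1, and Collector2 takes over log collection and upload. After that we manually restore the flume service on Collector1 and upload files on Agent1 again; Collector1 resumes collecting at its higher priority.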

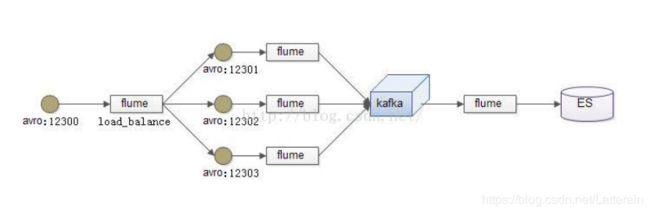
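The behaviour seen in this test follows from the priorities in agent.conf: among the sinks that are currently reachable, the one with the highest priority wins. A simplified Python sketch of that rule (`pick_sink` is a hypothetical name; the real processor additionally keeps a failed sink out of rotation for a backoff period capped by `maxpenalty`):

```python
def pick_sink(priorities, alive):
    """Return the live sink with the highest priority."""
    candidates = [name for name in priorities if name in alive]
    if not candidates:
        raise RuntimeError("no sink available")
    return max(candidates, key=lambda name: priorities[name])


# Same values as processor.priority.k1 and processor.priority.k2 in agent.conf.
priorities = {"k1": 10, "k2": 1}

normal = pick_sink(priorities, {"k1", "k2"})     # both collectors up
degraded = pick_sink(priorities, {"k2"})         # Collector1 killed
recovered = pick_sink(priorities, {"k1", "k2"})  # Collector1 restored
```

The three calls mirror the three phases of the test: normal operation, failure of the preferred collector, and recovery.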

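flume load balancing (load balancer)

Load balancing addresses the case where a single machine (a single process) cannot handle all requests. The Load balancing Sink Processor provides this capability. In this setup Agent1 is a routing node that distributes the Events buffered in its Channel across multiple Sinks, and each Sink connects to a separate downstream Agent. An example configuration follows.

Role assignment

Three machines are used here to simulate flume load balancing.

The three machines are planned as follows:

node01: collects data and sends it to node02 and node03

node02: receives part of node01's data

node03: receives part of node01's data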

flume configuration for node01

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim load_banlancer_client.conf

#agent name

a1.channels = c1

a1.sources = r1

a1.sinks = k1 k2

#set group

a1.sinkgroups = g1

#set channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

a1.sources.r1.channels = c1

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /export/servers/taillogs/access_log

# set sink1

a1.sinks.k1.channel = c1

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = node02

a1.sinks.k1.port = 52020

# set sink2

a1.sinks.k2.channel = c1

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = node03

a1.sinks.k2.port = 52020

#set sink group

a1.sinkgroups.g1.sinks = k1 k2

#set load balancing

a1.sinkgroups.g1.processor.type = load_balance

a1.sinkgroups.g1.processor.backoff = true

a1.sinkgroups.g1.processor.selector = round_robin

a1.sinkgroups.g1.processor.selector.maxTimeOut=10000
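With `selector = round_robin` the sinks in the group take turns. The Python sketch below shows the resulting distribution for two sinks. It is an illustration only: `distribute` is a hypothetical name, the sketch rotates per event for readability, and it ignores the backoff that `processor.backoff = true` applies to a failing sink.

```python
from itertools import cycle


def distribute(events, sinks):
    """Assign events to sinks in round-robin order; returns {sink: [events]}."""
    assignment = {sink: [] for sink in sinks}
    for event, sink in zip(events, cycle(sinks)):
        assignment[sink].append(event)
    return assignment


result = distribute(["e1", "e2", "e3", "e4", "e5"], ["k1", "k2"])
```

Here k1 (node02) and k2 (node03) each end up with roughly half of the data, which matches the role assignment above.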

flume configuration for node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim load_banlancer_server.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = avro

a1.sources.r1.channels = c1

a1.sources.r1.bind = node02

a1.sources.r1.port = 52020

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

flume configuration for node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim load_banlancer_server.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = avro

a1.sources.r1.channels = c1

a1.sources.r1.bind = node03

a1.sources.r1.port = 52020

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

Starting the flume services

Start the flume service on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n a1 -c conf -f conf/load_banlancer_server.conf -Dflume.root.logger=DEBUG,console

Start the flume service on node02

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n a1 -c conf -f conf/load_banlancer_server.conf -Dflume.root.logger=DEBUG,console

Start the flume service on node01

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -n a1 -c conf -f conf/load_banlancer_client.conf -Dflume.root.logger=DEBUG,console

Running the script on node01 to generate data

cd /export/servers/shells

sh tail-file.sh

Using flume's static interceptor to tag the data type

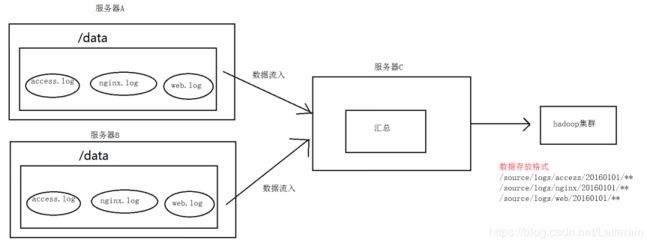

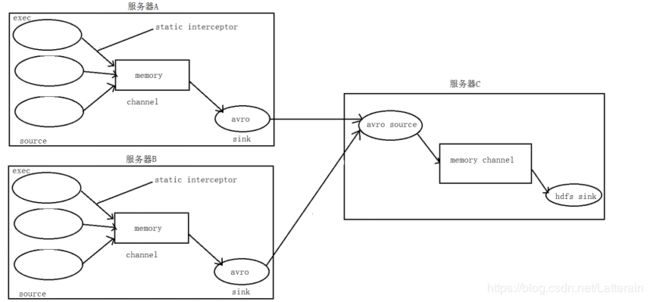

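Two log servers, A and B, produce logs in real time. The main log types are access.log, nginx.log, and web.log.

Requirement: collect access.log, nginx.log, and web.log from machines A and B onto machine C, and from there write everything to HDFS.

The required directory layout in HDFS is:

/source/logs/access/20180101/xx

/source/logs/nginx/20180101/xx

/source/logs/web/20180101/xx

Data flow analysis

Implementation

Server A has IP 192.168.190.3

Server B has IP 192.168.190.120

Server C has IP 192.168.190.130

Collection-side configuration file

Write the same flume configuration file on node01 and node02.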

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim exec_source_avro_sink.conf

# Name the components on this agent

a1.sources = r1 r2 r3

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /export/servers/taillogs/access.log

a1.sources.r1.interceptors = i1

a1.sources.r1.interceptors.i1.type = static

## The static interceptor inserts a user-defined key-value pair into the header of each collected event

a1.sources.r1.interceptors.i1.key = type

a1.sources.r1.interceptors.i1.value = access

a1.sources.r2.type = exec

a1.sources.r2.command = tail -F /export/servers/taillogs/nginx.log

a1.sources.r2.interceptors = i2

a1.sources.r2.interceptors.i2.type = static

a1.sources.r2.interceptors.i2.key = type

a1.sources.r2.interceptors.i2.value = nginx

a1.sources.r3.type = exec

a1.sources.r3.command = tail -F /export/servers/taillogs/web.log

a1.sources.r3.interceptors = i3

a1.sources.r3.interceptors.i3.type = static

a1.sources.r3.interceptors.i3.key = type

a1.sources.r3.interceptors.i3.value = web

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = node03

a1.sinks.k1.port = 41414

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 20000

a1.channels.c1.transactionCapacity = 10000

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sources.r2.channels = c1

a1.sources.r3.channels = c1

a1.sinks.k1.channel = c1

Server-side configuration file

Write the flume configuration file on node03

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim avro_source_hdfs_sink.conf

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Define the source

a1.sources.r1.type = avro

a1.sources.r1.bind = 192.168.190.130

a1.sources.r1.port =41414

# Add a timestamp interceptor

a1.sources.r1.interceptors = i1

a1.sources.r1.interceptors.i1.type = org.apache.flume.interceptor.TimestampInterceptor$Builder

# Define the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 20000

a1.channels.c1.transactionCapacity = 10000

# Define the sink

a1.sinks.k1.type = hdfs

a1.sinks.k1.hdfs.path=hdfs://192.168.190.3:8020/source/logs/%{type}/%Y%m%d

a1.sinks.k1.hdfs.filePrefix =events

a1.sinks.k1.hdfs.fileType = DataStream

a1.sinks.k1.hdfs.writeFormat = Text

# Use the local time for the time escapes

a1.sinks.k1.hdfs.useLocalTimeStamp = true

# Do not roll files by event count

a1.sinks.k1.hdfs.rollCount = 0

# Roll files by time (seconds)

a1.sinks.k1.hdfs.rollInterval = 30

# Roll files by size (bytes)

a1.sinks.k1.hdfs.rollSize = 10485760

# Number of events written to HDFS per batch

a1.sinks.k1.hdfs.batchSize = 10000

# Number of threads flume uses for HDFS operations (open, write, and so on)

a1.sinks.k1.hdfs.threadsPoolSize=10

# Timeout for HDFS operations (milliseconds)

a1.sinks.k1.hdfs.callTimeout=30000

# Wire the source, channel, and sink together

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1
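The link between the two configuration files is the `type` header: the static interceptor on the collection side sets it, and `%{type}` in `hdfs.path` on the server side reads it back. A Python sketch of how such a path template resolves, assuming only the `%{header}` and date escapes used here (`expand_path` is a hypothetical helper, not a flume API):

```python
import re
from datetime import datetime


def expand_path(template, headers, timestamp):
    """Replace %{name} with the header value, then expand the date escapes."""
    with_headers = re.sub(r"%\{(\w+)\}", lambda match: headers[match.group(1)], template)
    return timestamp.strftime(with_headers)


path = expand_path(
    "/source/logs/%{type}/%Y%m%d",
    {"type": "access"},  # header added by the static interceptor on source r1
    datetime(2018, 1, 1),
)
```

Events from r2 and r3 carry `type` values of nginx and web, so they resolve to the other two directories in the required layout.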

Collection-side file-generating script

Write a shell script on node01 and node02 to simulate data generation

cd /export/servers/shells

vim server.sh

#!/bin/bash

while true

do

date >> /export/servers/taillogs/access.log;

date >> /export/servers/taillogs/web.log;

date >> /export/servers/taillogs/nginx.log;

sleep 0.5;

done

Start the services in order

Start flume on node03 to receive the data

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -c conf -f conf/avro_source_hdfs_sink.conf -n a1 -Dflume.root.logger=DEBUG,console

Start flume on node01 and node02 to monitor the log files

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -c conf -f conf/exec_source_avro_sink.conf -n a1 -Dflume.root.logger=DEBUG,console

Start the file-generating script on node01 and node02

cd /export/servers/shells

sh server.sh

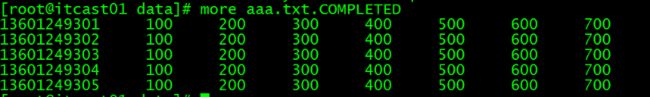

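Custom flume interceptors

Data masking

Sensitive information, such as phone numbers, bank card numbers, and card balances, must be encrypted.

The raw data has eight fields. After processing, only five fields are kept and the first field is encrypted; this has to happen while the data is being collected.

A custom flume interceptor performs the masking: the data is processed before it is delivered, so everything that lands in HDFS is already masked.

After the source reads the data, the interceptor filters out the unneeded fields and encrypts the first field, and only then is the data saved to HDFS.

Comparison of the raw data and the processed data:

Raw data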

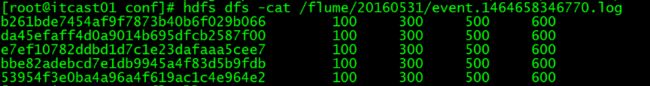

Processed data as collected in HDFS

Fields 1, 2, 4, 6, and 7 are kept (five fields)

The first field is encrypted
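The transformation described above can be sketched in Python as follows. This shows only the intended effect, not the interceptor's actual Java code. Three assumptions are not stated in the source: fields are separated by tabs on input and output, SHA-256 stands in for the unnamed encryption algorithm, and `mask_line` is a hypothetical name.

```python
import hashlib


def mask_line(line, separator="\t", keep_indexes=(0, 1, 3, 5, 6), encrypted_index=0):
    """Keep only the wanted fields and replace one of them with a digest."""
    fields = line.split(separator)
    kept = []
    for index in keep_indexes:
        value = fields[index]
        if index == encrypted_index:
            # Assumption: SHA-256 is a placeholder; the source does not name the algorithm.
            value = hashlib.sha256(value.encode("utf-8")).hexdigest()
        kept.append(value)
    return separator.join(kept)


masked = mask_line("13601249301\t100\t200\t300\t400\t500\t600\t700")
```

The zero-based indexes 0, 1, 3, 5, 6 correspond to fields 1, 2, 4, 6, and 7 of the eight-field record.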

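Implementation

- Write the custom interceptor, package it as a jar, and put it in flume's lib directory

- Write the flume configuration file

Write the flume configuration file on the third machine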

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin/conf

vim spool-interceptor-hdfs.conf

a1.channels = c1

a1.sources = r1

a1.sinks = s1

#channel

a1.channels.c1.type = memory

a1.channels.c1.capacity=100000

a1.channels.c1.transactionCapacity=50000

#source

a1.sources.r1.channels = c1

a1.sources.r1.type = spooldir

a1.sources.r1.spoolDir = /export/servers/intercept

a1.sources.r1.batchSize= 50

a1.sources.r1.inputCharset = UTF-8

a1.sources.r1.interceptors =i1 i2

a1.sources.r1.interceptors.i1.type =cn.itcast.iterceptor.CustomParameterInterceptor$Builder

a1.sources.r1.interceptors.i1.fields_separator=\\u0009

a1.sources.r1.interceptors.i1.indexs =0,1,3,5,6

a1.sources.r1.interceptors.i1.indexs_separator =\\u002c

a1.sources.r1.interceptors.i1.encrypted_field_index =0

a1.sources.r1.interceptors.i2.type = org.apache.flume.interceptor.TimestampInterceptor$Builder

#sink

a1.sinks.s1.channel = c1

a1.sinks.s1.type = hdfs

a1.sinks.s1.hdfs.path =hdfs://192.168.52.100:8020/flume/intercept/%Y%m%d

a1.sinks.s1.hdfs.filePrefix = event

a1.sinks.s1.hdfs.fileSuffix = .log

a1.sinks.s1.hdfs.rollSize = 10485760

a1.sinks.s1.hdfs.rollInterval =20

a1.sinks.s1.hdfs.rollCount = 0

a1.sinks.s1.hdfs.batchSize = 1500

a1.sinks.s1.hdfs.round = true

a1.sinks.s1.hdfs.roundUnit = minute

a1.sinks.s1.hdfs.threadsPoolSize = 25

a1.sinks.s1.hdfs.useLocalTimeStamp = true

a1.sinks.s1.hdfs.minBlockReplicas = 1

a1.sinks.s1.hdfs.fileType =DataStream

a1.sinks.s1.hdfs.writeFormat = Text

a1.sinks.s1.hdfs.callTimeout = 60000

a1.sinks.s1.hdfs.idleTimeout =60

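- Upload the test data

Put the test data into /export/servers/intercept, creating the directory first if it does not exist:

mkdir -p /export/servers/intercept

The test data is shown below. The configuration sets fields_separator to \\u0009 (tab), so the fields in the file should be separated by tabs: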

13601249301 100 200 300 400 500 600 700

13601249302 100 200 300 400 500 600 700

13601249303 100 200 300 400 500 600 700

13601249304 100 200 300 400 500 600 700

13601249305 100 200 300 400 500 600 700

13601249306 100 200 300 400 500 600 700

13601249307 100 200 300 400 500 600 700

13601249308 100 200 300 400 500 600 700

13601249309 100 200 300 400 500 600 700

13601249310 100 200 300 400 500 600 700

13601249311 100 200 300 400 500 600 700

13601249312 100 200 300 400 500 600 700

- Start flume

cd /export/servers/apache-flume-1.6.0-cdh5.14.0-bin

bin/flume-ng agent -c conf -f conf/spool-interceptor-hdfs.conf -n a1 -Dflume.root.logger=DEBUG,console