Installing Kubernetes 1.16.0 on CentOS 7.6 with kubeadm

References:

https://blog.csdn.net/fenglailea/article/details/88745642

https://www.kubernetes.org.cn/5551.html

Kubernetes v1.16.0

CentOS 7.6

docker-ce 19.03.2

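I. Server setup

System environment

OS: CentOS 7.6

Kernel: 5.2.11-1.el7.elrepo.x86_64

1. Show the running kernel

Output of uname -a:

Linux iZwz9d75c59ll4waz4y8ctZ 3.10.0-693.2.2.el7.x86_64 #1 SMP Tue Sep 12 22:26:13 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

2. Show the kernel build information

Output of cat /proc/version:

Linux version 3.10.0-693.2.2.el7.x86_64 ([email protected]) (gcc version 4.8.5 20150623 (Red Hat 4.8.5-16) (GCC) ) #1 SMP Tue Sep 12 22:26:13 UTC 2017

3. Show the distribution release

Output of cat /etc/redhat-release:

CentOS Linux release 7.4.1708 (Core)

Upgrade the kernel

# Update the system with yum. A kernel of 4.10 or later is preferable, because Docker can then use the overlay2 storage driver.

# On my own CentOS 7.6 virtual machine, overlay2 worked after the kernel upgrade.

# On a server where the kernel could not be upgraded for various reasons, the deployment also succeeded on 3.10, but with the overlay storage driver.

# Note: yum update on its own keeps CentOS 7 on the 3.10 kernel series; the 5.2.11 elrepo kernel listed above is an ELRepo build that has to be installed separately.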

yum update -y
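The choice between overlay2 and overlay described above depends only on the kernel version, so it can be checked with a small script. The following is a minimal sketch, assuming GNU sort with the -V option is available; kernel_at_least and pick_storage_driver are illustrative helper names, not part of Docker or CentOS, and the 4.10 threshold is simply the one named in this guide.

```shell
# Sketch: decide which Docker storage driver this guide would use for a kernel.
# kernel_at_least and pick_storage_driver are hypothetical helper names.

# Succeeds when kernel release $1 (e.g. the output of `uname -r`) is >= version $2.
kernel_at_least() {
  current="${1%%-*}"      # drop a suffix such as "-693.2.2.el7.x86_64"
  required="$2"
  lowest="$(printf '%s\n%s\n' "$required" "$current" | sort -V | head -n 1)"
  [ "$lowest" = "$required" ]
}

# Prints overlay2 for kernels >= 4.10 (the threshold used in this guide), else overlay.
pick_storage_driver() {
  if kernel_at_least "$1" 4.10; then
    echo overlay2
  else
    echo overlay
  fi
}

# Typical use on the machine itself:
#   pick_storage_driver "$(uname -r)"
```

The function only compares version strings; it does not inspect which filesystems the running kernel really supports.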

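# Install tools

yum install -y wget curl vim

System configuration

Set the hostname (k8s-master on the master, k8s-node1 on the worker node, so that the names match the /etc/hosts entries below)

hostnamectl set-hostname k8s-master

Name resolution must be configured on every machine (master and nodes alike)

cat <<EOF >> /etc/hosts
192.168.43.88 k8s-master
192.168.43.39 k8s-node1
EOF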
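Appending to /etc/hosts a second time duplicates the entries. The following is a minimal sketch of an idempotent variant, assuming a POSIX shell and awk; ensure_host_entry is a hypothetical helper name, and the optional third argument exists only so the function can be tried on a scratch file.

```shell
# ensure_host_entry IP NAME [FILE]: append "IP NAME" unless NAME is already mapped.
# Hypothetical helper; FILE defaults to /etc/hosts.
ensure_host_entry() {
  ip="$1"; name="$2"; hosts_file="${3:-/etc/hosts}"
  # Look for NAME as a whole whitespace-delimited field on a non-comment line.
  if awk -v n="$name" '
       /^[ \t]*#/ { next }
       { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit (found ? 0 : 1) }' "$hosts_file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Typical use as root on every machine:
#   ensure_host_entry 192.168.43.88 k8s-master
#   ensure_host_entry 192.168.43.39 k8s-node1
```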

Disable the firewall, SELinux and swap

systemctl disable firewalld --now

setenforce 0

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

swapoff -a

echo "vm.swappiness = 0">> /etc/sysctl.conf

sed -i 's/.*swap.*/#&/' /etc/fstab

sysctl -p

Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
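The net.bridge.* keys only exist once the br_netfilter kernel module is loaded, so it helps to load the module and sanity-check the file before applying it. The following is a minimal sketch, assuming grep with -E support; missing_k8s_sysctls is a hypothetical helper name.

```shell
# missing_k8s_sysctls FILE: print each required key that FILE does not set to 1.
# Hypothetical helper; prints nothing when all three keys are present.
missing_k8s_sysctls() {
  conf="$1"
  for key in net.bridge.bridge-nf-call-ip6tables \
             net.bridge.bridge-nf-call-iptables \
             net.ipv4.ip_forward; do
    # Accept "key = 1" with optional spaces around the equals sign.
    if ! grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$conf"; then
      echo "$key"
    fi
  done
}

# Typical use as root, before the settings are applied:
#   modprobe br_netfilter
#   missing_k8s_sysctls /etc/sysctl.d/k8s.conf
```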

sysctl --system

II. Configure package repositories

1. Point the yum base repo at the Aliyun mirror

cd /etc/yum.repos.d

mv CentOS-Base.repo CentOS-Base.repo.bak

mv epel.repo epel.repo.bak

curl https://mirrors.aliyun.com/repo/Centos-7.repo -o CentOS-Base.repo

sed -i 's/gpgcheck=1/gpgcheck=0/g' /etc/yum.repos.d/CentOS-Base.repo

curl https://mirrors.aliyun.com/repo/epel-7.repo -o epel.repo

gpgcheck=0 means that RPM packages downloaded from this repo are not signature-checked.

2. Configure the Docker repo

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

3. Point the Kubernetes repo at the Aliyun mirror

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64: the Kubernetes repo served from the Aliyun mirror

gpgcheck=0: RPM packages downloaded from this repo are not signature-checked

repo_gpgcheck=0: the signature of the repository metadata is not checked. Some hardened configurations enable repo_gpgcheck globally in /etc/yum.conf so that the signed repository metadata is verified.

If checking is enabled (value 1), yum performs the verification and fails with the error below, which is why 0 is used here

repomd.xml signature could not be verified for kubernetes

4. Update the cache

yum clean all && yum makecache && yum repolist

III. Install Docker

List the installable versions

yum list docker-ce --showduplicates | sort -r

Install

yum install -y docker-ce

Or install a specific version

yum install -y docker-ce-19.03.5-3.el7

Start Docker

systemctl enable docker && systemctl start docker

Check the Docker version

docker version

Configure a registry mirror for Docker; here I use the China-based mirror https://www.docker-cn.com

vim /etc/docker/daemon.json

{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-file": "3",
    "max-size": "100m"
  },
  "storage-driver": "overlay2",
  "storage-opts": [
    "overlay2.override_kernel_check=true"
  ],
  "registry-mirrors": ["https://www.docker-cn.com"]
}

Note: native.cgroupdriver=systemd is the officially recommended setting; see https://kubernetes.io/docs/setup/cri/

Reload the daemon configuration and restart Docker

systemctl daemon-reload && systemctl restart docker

If Docker fails to restart with the message "start request repeated too quickly for docker.service", the likely cause is that the CentOS kernel is too old (3.x) and does not support the overlay2 storage driver. In that case remove the following settings (and the comma that precedes them, so the JSON stays valid):

"storage-driver": "overlay2",
"storage-opts": [
  "overlay2.override_kernel_check=true"
]

IV. Install kubeadm, kubelet and kubectl

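kubeadm does not install or manage kubelet and kubectl, so all three have to be installed.

List the available kubelet versions

yum list kubelet --showduplicates | sort -r

Install kubelet, kubeadm and kubectl

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

kubelet is the node agent: it communicates with the rest of the cluster and manages the lifecycle of the pods and containers on its own node.

kubeadm is the automated deployment tool for Kubernetes; it makes a deployment easier and faster.

kubectl is the command-line tool for managing a Kubernetes cluster.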
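The unversioned install takes whatever release is newest in the repo, which may no longer be 1.16.0, while the initialisation later in this guide asks for v1.16.0. Pinning the packages keeps the two in step. The following is a minimal sketch; k8s_packages is a hypothetical helper name, and the name-version form (for example kubelet-1.16.0) is the usual yum package spec.

```shell
# k8s_packages VERSION: print the three package names pinned to VERSION.
# Hypothetical helper; it only builds the package list and installs nothing.
k8s_packages() {
  version="$1"
  printf 'kubelet-%s kubeadm-%s kubectl-%s\n' "$version" "$version" "$version"
}

# Typical use as root (word splitting of the output is intentional):
#   yum install -y $(k8s_packages 1.16.0) --disableexcludes=kubernetes
```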

Finally, enable and start kubelet:

systemctl enable kubelet --now

V. Deploy the Kubernetes master

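Before initialising the cluster, kubeadm config images list shows which images the initialisation needs

kubeadm config images list

The Docker images required for initialisation are:

k8s.gcr.io/kube-apiserver:v1.16.0
k8s.gcr.io/kube-controller-manager:v1.16.0
k8s.gcr.io/kube-scheduler:v1.16.0
k8s.gcr.io/kube-proxy:v1.16.0
k8s.gcr.io/pause:3.1
k8s.gcr.io/etcd:3.3.15-0
k8s.gcr.io/coredns:1.6.2

The images can be pulled manually on each machine beforehand with kubeadm config images pull; they are used when the master is initialised and when a node joins the cluster.

If kubeadm config images pull is not run first, kubeadm init on the master and kubeadm join on a node pull the images themselves before doing anything else.

I did not pull the images in advance; they were pulled automatically during kubeadm init on the master and kubeadm join on the node.

kubeadm config images pull

1. Initialise with kubeadm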

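kubeadm init \
--apiserver-advertise-address=192.168.43.88 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.16.0 \
--service-cidr=10.1.0.0/16 \
--pod-network-cidr=10.244.0.0/16

None of the parameters above should be omitted.

--kubernetes-version: the Kubernetes version to deploy.

--apiserver-advertise-address: the IP address that kube-apiserver advertises and listens on, that is, the master's own IP address (192.168.43.88 in this setup; replace it with yours).

--pod-network-cidr: the pod network range, 10.244.0.0/16 here. It has to match the Network value in the net-conf.json section of kube-flannel.yml used later.

--service-cidr: the Service network range.

--image-repository: the address of the Aliyun image registry.

This last parameter is essential: by default kubeadm downloads the required images from the official registry k8s.gcr.io, which is not reachable from mainland China, so --image-repository has to point at the Aliyun registry instead.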
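The same settings can be kept in a kubeadm configuration file instead of a long command line, which is easier to review and to keep under version control. The following is a minimal sketch, assuming the kubeadm.k8s.io/v1beta2 config API that kubeadm 1.16 reads; render_kubeadm_config and the file name kubeadm-config.yaml are illustrative choices, not something the original setup used.

```shell
# render_kubeadm_config ADVERTISE_IP: print a kubeadm config that mirrors the
# flags used in this guide. Hypothetical helper; it only writes to stdout.
render_kubeadm_config() {
  advertise_ip="$1"
  cat <<EOF
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${advertise_ip}
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.16.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.1.0.0/16
  podSubnet: 10.244.0.0/16
EOF
}

# Typical use on the master:
#   render_kubeadm_config 192.168.43.88 > kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml
```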

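Note: read the English messages printed during initialisation, because they show promptly why an initialisation fails. The Hostname warnings below, for example, say that the host name is wrong and cannot be resolved.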

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 19.03.2. Latest validated version: 18.09

[WARNING Hostname]: hostname "bogon" could not be reached

[WARNING Hostname]: hostname "bogon": lookup bogon on 192.168.2.1:53: no such host

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

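[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

Initialisation takes a while, roughly one minute. The output below indicates that it has finished (which does not by itself mean that the cluster is running correctly):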

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.43.88:6443 --token m16ado.6ne248sk47nln0jj \
--discovery-token-ca-cert-hash sha256:09cda974fb18e716219bf08ef9d7a4eaa76bfe59ec91d0930b4ccfbd111276de

2. Run the commands from the prompt

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

3. Deploy a pod network to the cluster

Download the kube-flannel.yml file

curl -O https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The downloaded file:

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
    - min: 0
      max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
  - kind: ServiceAccount
    name: flannel
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "cniVersion": "0.2.0",
      "name": "cbr0",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-amd64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-amd64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
            - --iface=eth0
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-arm64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-arm64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-arm
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-arm
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-ppc64le
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-ppc64le
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-s390x
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-s390x
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

Install the flannel network add-on

kubectl apply -f ./kube-flannel.yml

Note that the flannel image referenced in kube-flannel.yml is version 0.11.0: quay.io/coreos/flannel:v0.11.0-amd64

Output like the following shows that the flannel resources were created:

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created

如果flannel创建失败的,则可能是服务器存在多个网卡,目前需要在kube-flannel.yml中使用–iface参数指定集群主机内网网卡的名称,否则可能会出现dns无法解析。需要将kube-flannel.yml下载到本地,flanneld启动参数加上–iface=

注意:kube-flannel.yml文件里面有多个containers,要在quay.io/coreos/flannel:v0.11.0-amd64这个containers的启动参数里面设

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-amd64

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

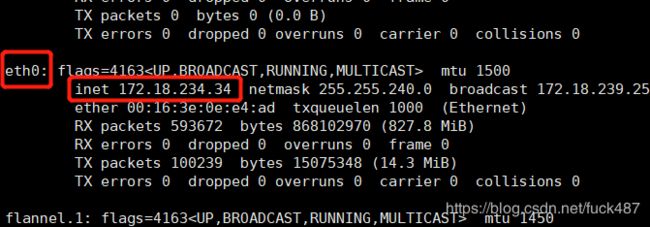

- --iface=eth0containers下的args指定参数iface=eth0,前提先ifconfig查看一下本地ip使用的网卡名称:eth0

修改之前下载的kube-flannel.yml,修改containers下的args启动参数,指定网卡名称
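The manual edit can also be scripted. The following is a minimal sketch, assuming a freshly downloaded manifest that does not yet contain an --iface argument; add_flannel_iface is a hypothetical helper name. It adds the argument only inside the kube-flannel-ds-amd64 document and copies the indentation of the neighbouring list item, so the YAML stays valid.

```shell
# add_flannel_iface IFACE FILE: print FILE with "- --iface=IFACE" added after
# "- --kube-subnet-mgr" in the kube-flannel-ds-amd64 DaemonSet only.
# Hypothetical helper; the result goes to stdout, FILE is not modified.
add_flannel_iface() {
  iface="$1"; manifest="$2"
  awk -v iface="$iface" '
    /^---/ { in_amd64 = 0 }                        # a new YAML document starts
    /name: kube-flannel-ds-amd64/ { in_amd64 = 1 }
    { print }
    in_amd64 && /^[ \t]*- --kube-subnet-mgr[ \t]*$/ {
      indent = $0
      sub(/-.*/, "", indent)                       # keep only the leading whitespace
      print indent "- --iface=" iface
    }
  ' "$manifest"
}

# Typical use:
#   add_flannel_iface eth0 kube-flannel.yml > kube-flannel-iface.yml
```

Writing to a new file rather than editing in place keeps the downloaded manifest available for comparison.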

After editing kube-flannel.yml, first delete the previously deployed network

kubectl delete -f ./kube-flannel.yml

Then create it again

kubectl apply -f ./kube-flannel.yml

Afterwards, check the node and pod status

kubectl get nodes

NAME                      STATUS   ROLES    AGE   VERSION
izwz9d75c59ll4waz4y8ctz   Ready    master   71m   v1.16.0

kubectl get pod -n kube-system

NAME                                              READY   STATUS    RESTARTS   AGE
coredns-58cc8c89f4-2d6q9                          1/1     Running   0          72m
coredns-58cc8c89f4-rxs6n                          1/1     Running   0          72m
etcd-izwz9d75c59ll4waz4y8ctz                      1/1     Running   0          71m
kube-apiserver-izwz9d75c59ll4waz4y8ctz            1/1     Running   0          71m
kube-controller-manager-izwz9d75c59ll4waz4y8ctz   1/1     Running   0          71m
kube-flannel-ds-amd64-55fzx                       1/1     Running   0          71m
kube-proxy-5x9wv                                  1/1     Running   0          72m
kube-scheduler-izwz9d75c59ll4waz4y8ctz            1/1     Running   0          71m

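If a node stays NotReady, kubectl get pod -n kube-system usually reveals the problem: typically the flannel image failed to download (ImagePullBackOff) and coredns stays Pending.

In that case check whether docker image ls lists the image quay.io/coreos/flannel:v0.11.0-amd64; if it does not, try

docker pull quay.io/coreos/flannel:v0.11.0-amd64

The flannel image has to be present before kube-flannel-ds-amd64 reaches the Running state.

My virtual machine could not pull quay.io/coreos/flannel:v0.11.0-amd64 no matter what I tried, so I pulled it elsewhere, exported it with docker save, and loaded it into the VM's Docker with docker load.

Note: the image quay.io/coreos/flannel:v0.11.0-amd64 must be present on both the master and every worker node.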
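The save and load workaround can be wrapped in a few helper functions. The following is a minimal sketch, assuming Docker is installed on both machines and the tarball is copied between them by some other means (for example scp); image_tarball_name, export_image and import_image are hypothetical helper names.

```shell
# image_tarball_name IMAGE: derive a file name such as
# quay.io_coreos_flannel_v0.11.0-amd64.tar from an image reference.
image_tarball_name() {
  printf '%s.tar\n' "$(printf '%s' "$1" | tr '/:' '__')"
}

# export_image IMAGE: run on a machine that can reach the registry.
export_image() {
  docker pull "$1" && docker save -o "$(image_tarball_name "$1")" "$1"
}

# import_image IMAGE: run on the offline machine, in the directory that
# holds the copied tarball.
import_image() {
  docker load -i "$(image_tarball_name "$1")"
}

# Typical use:
#   export_image quay.io/coreos/flannel:v0.11.0-amd64   # machine with access
#   import_image quay.io/coreos/flannel:v0.11.0-amd64   # master and each node
```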

VI. Join worker nodes to the cluster

1. First make sure the flannel image quay.io/coreos/flannel:v0.11.0-amd64 is present on the node

2. Run the kubeadm join command to join the cluster

kubeadm join 192.168.43.88:6443 --token ep9bne.6at6gds2o05dgutd \
--discovery-token-ca-cert-hash sha256:b2f75a6e5a49e66e467392d7d237548664ba8a28aafe98bdb18a7dd63ecc4aa8

Then check the node status on the master; all nodes show Ready

[root@k8s-master ~]# kubectl get node
NAME         STATUS   ROLES    AGE     VERSION
k8s-master   Ready    master   3h31m   v1.16.0
k8s-node1    Ready    <none>   57m     v1.16.0
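The join command printed by kubeadm init embeds a bootstrap token, and such tokens expire (after 24 hours by default), so it is worth keeping the init output or knowing how to regenerate the command. The following is a minimal sketch that pulls the join command out of saved init output; extract_join_command and the file name init.log are illustrative assumptions.

```shell
# extract_join_command FILE: print the kubeadm join command found in saved
# `kubeadm init` output as a single line. Hypothetical helper.
extract_join_command() {
  awk '
    /^[ \t]*kubeadm join / { collecting = 1 }
    collecting {
      line = $0
      sub(/^[ \t]+/, "", line)              # drop leading indentation
      cont = sub(/[ \t]*\\$/, "", line)     # 1 when the line ended in a backslash
      printf "%s%s", sep, line
      sep = " "
      if (!cont) { print ""; exit }
    }
  ' "$1"
}

# Typical use on the master:
#   kubeadm init ... | tee init.log
#   extract_join_command init.log
# When the token has expired, a fresh command can be printed with:
#   kubeadm token create --print-join-command
```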

VII. Miscellaneous

1. Create a pod to verify that the cluster works

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port=80 --type=NodePort

kubectl get pod,svc

The pod and service listing shows that nginx is ready:

NAME                         READY   STATUS    RESTARTS   AGE
pod/nginx-86c57db685-kgfn2   1/1     Running   0          67s

NAME                 TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
service/kubernetes   ClusterIP   10.1.0.1       <none>        443/TCP        3h49m
service/nginx        NodePort    10.1.143.205   <none>        80:32597/TCP   12s

Opening the master's IP address with the NodePort 32597 in a browser shows the nginx welcome page.
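The same check can be done from a shell instead of a browser. The following is a minimal sketch; node_port_from is a hypothetical helper that parses a PORT(S) value copied from the listing, and the commented commands assume the master IP 192.168.43.88 used elsewhere in this guide.

```shell
# node_port_from PORTS: extract the node port from a PORT(S) value such as
# 80:32597/TCP. Prints nothing for values without a node port (e.g. 443/TCP).
node_port_from() {
  printf '%s\n' "$1" | sed -n 's|^[0-9]*:\([0-9]*\)/.*$|\1|p'
}

# Typical use on any machine that can reach the master:
#   port="$(kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}')"
#   curl -sI "http://192.168.43.88:${port}" | head -n 1
```

Reading the port with jsonpath avoids parsing table output altogether; the helper is only useful when all that is available is the printed listing.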

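2. Remove a node

On the node, run

kubeadm reset

This undoes kubeadm join. Afterwards, manually rm the configuration directories named in its output.

On the master, run kubectl delete node <node name> (kubectl get node lists the node names)

kubectl delete node k8s-node1

3. Set up a private Docker registry

This is covered in a separate article: https://blog.csdn.net/fuck487/article/details/102147110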