Handwritten digit recognition with a two-layer convolutional network in TensorFlow

tf.nn.conv2d(input, filter, strides, padding, use_cudnn_on_gpu=None, name=None)

Apart from name, which only names the operation, five parameters control the convolution:

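First parameter, input: the images to convolve. It must be a 4-D Tensor of shape [batch, in_height, in_width, in_channels], that is, [number of images in a training batch, image height, image width, number of image channels], with a floating-point dtype such as float32 or float64.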

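Second parameter, filter: the convolution kernel of the CNN. It must be a Tensor of shape [filter_height, filter_width, in_channels, out_channels], that is, [kernel height, kernel width, number of image channels, number of kernels], with the same dtype as input. Note that its third dimension, in_channels, must have the same size as the fourth dimension of input; the sizes have to agree, while the values stored along them are unrelated. out_channels is the number of kernels, and in_channels means that each kernel has as many channels as the image. During convolution, a single kernel is applied to each input channel with that channel's own weights, and the in_channels per-channel results are summed to give that kernel's feature map. tf.nn.conv2d does not add a bias itself; the bias is added to this sum afterwards, as conv2d(x_image, W_conv1) + b_conv1 does in the script below.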

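Third parameter, strides: the step of the sliding window along each dimension of input. It is a 1-D vector of length 4, one entry per input dimension; for the [batch, height, width, channels] layout used here it usually has the form [1, stride_height, stride_width, 1].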

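Fourth parameter, padding: a string, either "SAME" or "VALID", which selects the padding scheme. "VALID" adds no padding, so the kernel only visits positions where it fits entirely inside the image; "SAME" zero-pads the borders so that, with a stride of 1, the output has the same height and width as the input.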

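Fifth parameter, use_cudnn_on_gpu: a bool that controls whether cuDNN is used for acceleration on the GPU. It defaults to True; the None in the signature above means "use the default".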

The result is a Tensor, the feature map, whose shape is again of the form [batch, height, width, channels], where channels equals out_channels of the filter.
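To make the relationship between the parameters and the returned shape concrete, here is a small sketch in plain Python. The helper name conv2d_output_shape is hypothetical (it is not a TensorFlow function); it only applies the output-size rules TensorFlow documents for "SAME" and "VALID" padding, assuming the [batch, height, width, channels] layout.

```python
import math


def conv2d_output_shape(input_shape, filter_shape, strides, padding):
    """Return [batch, out_height, out_width, out_channels] (hypothetical helper)."""
    batch, in_h, in_w, in_c = input_shape
    k_h, k_w, filter_in_c, out_c = filter_shape
    if in_c != filter_in_c:
        raise ValueError("filter in_channels must equal input in_channels")
    _, s_h, s_w, _ = strides
    if padding == "SAME":
        # Output size depends only on the input size and the stride.
        out_h = math.ceil(in_h / s_h)
        out_w = math.ceil(in_w / s_w)
    elif padding == "VALID":
        # The kernel must fit entirely inside the image.
        out_h = math.ceil((in_h - k_h + 1) / s_h)
        out_w = math.ceil((in_w - k_w + 1) / s_w)
    else:
        raise ValueError("padding must be 'SAME' or 'VALID'")
    return [batch, out_h, out_w, out_c]


# First convolution of the script below: 50 MNIST images, 32 kernels of 5x5.
print(conv2d_output_shape([50, 28, 28, 1], [5, 5, 1, 32], [1, 1, 1, 1], "SAME"))
# → [50, 28, 28, 32]
print(conv2d_output_shape([50, 28, 28, 1], [5, 5, 1, 32], [1, 1, 1, 1], "VALID"))
# → [50, 24, 24, 32]
```

With "SAME" and a stride of 1 the spatial size is unchanged, which is why the script can stack two convolutions without tracking border effects.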

So how does TensorFlow actually compute a convolution? A few examples help:

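1. The simplest case: a 3×3 single-channel image (shape [1, 3, 3, 1]) convolved with one 1×1 kernel (shape [1, 1, 1, 1]) gives a 3×3 feature map.

2. Increase the number of channels: a 3×3 five-channel image (shape [1, 3, 3, 5]) convolved with one 1×1 kernel (shape [1, 1, 5, 1], since in_channels must match the image) still gives a single 3×3 feature map. At every pixel, the kernel multiplies each of the five channel values by its own weight and sums the products.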

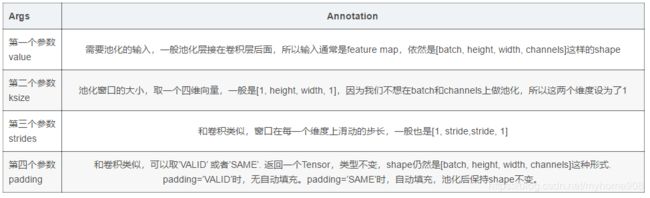
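The two examples can be reproduced with a few nested loops. The following is a plain-Python sketch, not TensorFlow's implementation: conv2d_single is a hypothetical helper that handles one image and one kernel with "VALID" padding and a stride of 1, and the pixel and weight values are made up for illustration. Like tf.nn.conv2d, it slides the kernel without flipping it.

```python
def conv2d_single(image, kernel, bias=0.0):
    """VALID, stride-1 convolution of one image with one kernel.

    image:  nested list indexed [row][col][channel]
    kernel: nested list indexed [k_row][k_col][channel]
    Returns a 2-D list, the feature map produced by this kernel.
    """
    in_h, in_w, in_c = len(image), len(image[0]), len(image[0][0])
    k_h, k_w = len(kernel), len(kernel[0])
    out = []
    for i in range(in_h - k_h + 1):
        row = []
        for j in range(in_w - k_w + 1):
            total = bias
            for di in range(k_h):
                for dj in range(k_w):
                    # Sum the contributions of all input channels.
                    for c in range(in_c):
                        total += image[i + di][j + dj][c] * kernel[di][dj][c]
            row.append(total)
        out.append(row)
    return out


# Example 1: 3x3 image with 1 channel, 1x1 kernel with weight 2.
image1 = [[[float(r * 3 + c)] for c in range(3)] for r in range(3)]
kernel1 = [[[2.0]]]
print(conv2d_single(image1, kernel1))
# → [[0.0, 2.0, 4.0], [6.0, 8.0, 10.0], [12.0, 14.0, 16.0]]

# Example 2: 3x3 image with 5 channels (all ones), 1x1 kernel with 5 weights.
image5 = [[[1.0] * 5 for _ in range(3)] for _ in range(3)]
kernel5 = [[[1.0] * 5]]
print(conv2d_single(image5, kernel5))
# → [[5.0, 5.0, 5.0], [5.0, 5.0, 5.0], [5.0, 5.0, 5.0]]
```

In the second example every output value is 5.0 because the five channel products are added together, so five input channels still yield one feature map per kernel.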

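tf.nn.max_pool(value, ksize, strides, padding, name=None)

value is the 4-D feature map to pool, usually a conv2d output of shape [batch, height, width, channels]. ksize is the size of the pooling window along each dimension, typically [1, height, width, 1], and strides and padding have the same meaning as in tf.nn.conv2d. It returns a Tensor of the same type whose shape is again of the form [batch, height, width, channels].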
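As an illustration of what a 2×2 window with a stride of 2 does to one channel, here is a plain-Python sketch. max_pool_2x2_same is a hypothetical helper operating on a single 2-D feature map, and the input values are made up; it assumes that with "SAME" padding the windows at the right and bottom edges are simply clipped to the cells that exist.

```python
import math


def max_pool_2x2_same(feature_map):
    """2x2 max pooling with stride 2 on one 2-D feature map (list of rows)."""
    in_h, in_w = len(feature_map), len(feature_map[0])
    out_h, out_w = math.ceil(in_h / 2), math.ceil(in_w / 2)
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Collect the cells of the window, clipped at the borders.
            window = [
                feature_map[r][c]
                for r in range(2 * i, min(2 * i + 2, in_h))
                for c in range(2 * j, min(2 * j + 2, in_w))
            ]
            row.append(max(window))
        out.append(row)
    return out


fmap = [
    [1, 3, 2, 4],
    [5, 6, 7, 8],
    [9, 2, 1, 0],
    [3, 4, 5, 6],
]
print(max_pool_2x2_same(fmap))
# → [[6, 8], [9, 6]]
```

Each output cell keeps only the largest value of its window, so height and width are halved while the number of channels is unchanged.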

    # coding: utf-8
    # Written against the TensorFlow 1.x API (tf.placeholder,
    # tf.InteractiveSession, tensorflow.examples.tutorials.mnist).
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data


    def weight_variable(shape):
        # tf.truncated_normal(shape, mean, stddev): shape is the shape of the
        # generated tensor, mean is the mean, stddev is the standard deviation.
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)


    def bias_variable(shape):
        # Constant tensor whose shape is given by shape.
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)


    def conv2d(x, W):
        return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')


    def max_pool_2x2(x):
        return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],
                              strides=[1, 2, 2, 1], padding='SAME')


    if __name__ == '__main__':
        # Load the data.
        mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

        # x is the placeholder for training images, y_ for their labels.
        x = tf.placeholder(tf.float32, [None, 784])
        y_ = tf.placeholder(tf.float32, [None, 10])

        # A convolutional network works on 2-D images, so the input cannot
        # stay a 784-dimensional vector. Reshape each image back to 28x28.
        # The -1 in [-1, 28, 28, 1] lets the first dimension be inferred from x.
        x_image = tf.reshape(x, [-1, 28, 28, 1])

        # First convolutional layer.
        W_conv1 = weight_variable([5, 5, 1, 32])
        b_conv1 = bias_variable([32])
        h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
        h_pool1 = max_pool_2x2(h_conv1)

        # Second convolutional layer.
        W_conv2 = weight_variable([5, 5, 32, 64])
        b_conv2 = bias_variable([64])
        h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
        h_pool2 = max_pool_2x2(h_conv2)

        # Fully connected layer; the output is a 1024-dimensional vector.
        W_fc1 = weight_variable([7 * 7 * 64, 1024])
        b_fc1 = bias_variable([1024])
        h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
        h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

        # Dropout. keep_prob is a placeholder: 0.5 for training, 1 for testing.
        keep_prob = tf.placeholder(tf.float32)
        h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

        # Map the 1024-dimensional vector to 10 values, one per class.
        W_fc2 = weight_variable([1024, 10])
        b_fc2 = bias_variable([10])
        y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

        # Instead of applying softmax and then computing the cross-entropy,
        # compute both in one step with tf.nn.softmax_cross_entropy_with_logits.
        cross_entropy = tf.reduce_mean(
            tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))

        # Define train_step.
        train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

        # Define the accuracy used for evaluation.
        correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        # Create the session and initialize the variables.
        sess = tf.InteractiveSession()
        sess.run(tf.global_variables_initializer())

        # Train for 20000 steps.
        for i in range(20000):
            batch = mnist.train.next_batch(50)
            # Every 100 steps, report the accuracy on the current training batch.
            if i % 100 == 0:
                train_accuracy = accuracy.eval(feed_dict={
                    x: batch[0], y_: batch[1], keep_prob: 1.0})
                print("step %d, training accuracy %g" % (i, train_accuracy))
            train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

        # After training, report the accuracy on the test set.
        print("test accuracy %g" % accuracy.eval(feed_dict={
            x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
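The 7 * 7 * 64 used for the fully connected layer follows from the layer shapes in the script: both convolutions use "SAME" padding with a stride of 1 and keep the spatial size, while each 2×2 pooling with a stride of 2 halves it. The sketch below retraces that arithmetic in plain Python and also counts the trainable parameters; same_out and the layers dictionary are illustrative names, not part of the script.

```python
import math


def same_out(size, stride):
    """Spatial size after a SAME-padded operation with the given stride."""
    return math.ceil(size / stride)


size = 28                    # MNIST images are 28x28
size = same_out(size, 1)     # conv1, stride 1: 28
size = same_out(size, 2)     # pool1, stride 2: 14
size = same_out(size, 1)     # conv2, stride 1: 14
size = same_out(size, 2)     # pool2, stride 2: 7
flat = size * size * 64      # 64 feature maps after the second convolution
print(size, flat)
# → 7 3136

# Weight shape and bias length of each layer, copied from the script.
layers = {
    "conv1": ([5, 5, 1, 32], 32),
    "conv2": ([5, 5, 32, 64], 64),
    "fc1": ([flat, 1024], 1024),
    "fc2": ([1024, 10], 10),
}
total = 0
for name, (weight_shape, bias_len) in layers.items():
    count = math.prod(weight_shape) + bias_len
    total += count
    print(name, count)
print("total", total)
# → total 3274634
```

Almost all of the parameters sit in the first fully connected layer, which is the layer the script regularizes with dropout.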

Extracting MNIST_data/train-images-idx3-ubyte.gz

Extracting MNIST_data/train-labels-idx1-ubyte.gz

Extracting MNIST_data/t10k-images-idx3-ubyte.gz

Extracting MNIST_data/t10k-labels-idx1-ubyte.gz

E:\anacondaInstall\lib\site-packages\tensorflow\python\client\session.py:1735: UserWarning: An interactive session is already active. This can cause out-of-memory errors in some cases. You must explicitly call `InteractiveSession.close()` to release resources held by the other session(s).

warnings.warn('An interactive session is already active. This can '

step 0, training accuracy 0.02

step 100, training accuracy 0.9

step 200, training accuracy 0.92

step 300, training accuracy 0.92

step 400, training accuracy 0.96

step 500, training accuracy 1

step 600, training accuracy 0.96

step 700, training accuracy 0.98

step 800, training accuracy 0.96

step 900, training accuracy 0.96

step 1000, training accuracy 0.94

step 1100, training accuracy 0.98

step 1200, training accuracy 0.98

step 1300, training accuracy 0.94

step 1400, training accuracy 0.96

step 1500, training accuracy 0.98

step 1600, training accuracy 1

step 1700, training accuracy 0.94

step 1800, training accuracy 1

step 1900, training accuracy 0.96

step 2000, training accuracy 0.94

step 2100, training accuracy 1

step 2200, training accuracy 1

step 2300, training accuracy 0.9

step 2400, training accuracy 1

step 2500, training accuracy 0.98

step 2600, training accuracy 0.94

step 2700, training accuracy 0.98

step 2800, training accuracy 1

step 2900, training accuracy 0.96

step 3000, training accuracy 1

step 3100, training accuracy 1

step 3200, training accuracy 1

step 3300, training accuracy 0.96

step 3400, training accuracy 0.98

step 3500, training accuracy 0.98

step 3600, training accuracy 1

step 3700, training accuracy 1

step 3800, training accuracy 1

step 3900, training accuracy 0.94

step 4000, training accuracy 0.98

step 4100, training accuracy 1

step 4200, training accuracy 1

step 4300, training accuracy 1

step 4400, training accuracy 0.98

step 4500, training accuracy 0.96

step 4600, training accuracy 0.92

step 4700, training accuracy 1

step 4800, training accuracy 0.98

step 4900, training accuracy 1

step 5000, training accuracy 1

step 5100, training accuracy 1

step 5200, training accuracy 0.98

step 5300, training accuracy 1

step 5400, training accuracy 0.98

step 5500, training accuracy 1

step 5600, training accuracy 1

step 5700, training accuracy 0.98

step 5800, training accuracy 1

step 5900, training accuracy 1

step 6000, training accuracy 0.96

step 6100, training accuracy 0.98

step 6200, training accuracy 1

step 6300, training accuracy 1

step 6400, training accuracy 1

step 6500, training accuracy 0.98

step 6600, training accuracy 0.98

step 6700, training accuracy 1

step 6800, training accuracy 0.98

step 6900, training accuracy 1

step 7000, training accuracy 0.96

step 7100, training accuracy 1

step 7200, training accuracy 1

step 7300, training accuracy 0.98

step 7400, training accuracy 0.96

step 7500, training accuracy 0.98

step 7600, training accuracy 1

step 7700, training accuracy 1

step 7800, training accuracy 1

step 7900, training accuracy 0.98

step 8000, training accuracy 1

step 8100, training accuracy 1

step 8200, training accuracy 1

step 8300, training accuracy 0.96

step 8400, training accuracy 1

step 8500, training accuracy 0.98

step 8600, training accuracy 1

step 8700, training accuracy 1

step 8800, training accuracy 1

step 8900, training accuracy 1

step 9000, training accuracy 1

step 9100, training accuracy 1

step 9200, training accuracy 1

step 9300, training accuracy 1

step 9400, training accuracy 1

step 9500, training accuracy 1

step 9600, training accuracy 1

step 9700, training accuracy 1

step 9800, training accuracy 1

step 9900, training accuracy 1

step 10000, training accuracy 1

step 10100, training accuracy 1

step 10200, training accuracy 1

step 10300, training accuracy 1

step 10400, training accuracy 1

step 10500, training accuracy 1

step 10600, training accuracy 1

step 10700, training accuracy 1

step 10800, training accuracy 0.98

step 10900, training accuracy 1

step 11000, training accuracy 1

step 11100, training accuracy 1

step 11200, training accuracy 1

step 11300, training accuracy 1

step 11400, training accuracy 0.98

step 11500, training accuracy 1

step 11600, training accuracy 1

step 11700, training accuracy 0.98

step 11800, training accuracy 1

step 11900, training accuracy 1

step 12000, training accuracy 1

step 12100, training accuracy 1

step 12200, training accuracy 1

step 12300, training accuracy 1

step 12400, training accuracy 1

step 12500, training accuracy 1

step 12600, training accuracy 1

step 12700, training accuracy 1

step 12800, training accuracy 1

step 12900, training accuracy 1

step 13000, training accuracy 1

step 13100, training accuracy 1

step 13200, training accuracy 1

step 13300, training accuracy 1

step 13400, training accuracy 1

step 13500, training accuracy 1

step 13600, training accuracy 1

step 13700, training accuracy 1

step 13800, training accuracy 1

step 13900, training accuracy 1

step 14000, training accuracy 1

step 14100, training accuracy 1

step 14200, training accuracy 1

step 14300, training accuracy 1

step 14400, training accuracy 1

step 14500, training accuracy 1

step 14600, training accuracy 1

step 14700, training accuracy 1

step 14800, training accuracy 1

step 14900, training accuracy 1

step 15000, training accuracy 1

step 15100, training accuracy 1

step 15200, training accuracy 1

step 15300, training accuracy 1

step 15400, training accuracy 1

step 15500, training accuracy 1

step 15600, training accuracy 1

step 15700, training accuracy 1

step 15800, training accuracy 0.98

step 15900, training accuracy 1

step 16000, training accuracy 1

step 16100, training accuracy 1

step 16200, training accuracy 1

step 16300, training accuracy 1

step 16400, training accuracy 1

step 16500, training accuracy 1

step 16600, training accuracy 1

step 16700, training accuracy 1

step 16800, training accuracy 1

step 16900, training accuracy 1

step 17000, training accuracy 1

step 17100, training accuracy 1

step 17200, training accuracy 1

step 17300, training accuracy 1

step 17400, training accuracy 1

step 17500, training accuracy 1

step 17600, training accuracy 1

step 17700, training accuracy 1

step 17800, training accuracy 1

step 17900, training accuracy 1

step 18000, training accuracy 1

step 18100, training accuracy 1

step 18200, training accuracy 1

step 18300, training accuracy 1

step 18400, training accuracy 1

step 18500, training accuracy 1

step 18600, training accuracy 1

step 18700, training accuracy 1

step 18800, training accuracy 1

step 18900, training accuracy 1

step 19000, training accuracy 1

step 19100, training accuracy 1

step 19200, training accuracy 1

step 19300, training accuracy 1

step 19400, training accuracy 0.98

step 19500, training accuracy 1

step 19600, training accuracy 1

step 19700, training accuracy 1

step 19800, training accuracy 1

step 19900, training accuracy 1

test accuracy 0.9926