hadoop Flume worked examples (monitoring port data, streaming a local file to HDFS in real time, streaming a directory's files to HDFS in real time)

Environment:

Linux, Ubuntu 16.04

hadoop 2.7.2 (one master and two slaves: slave1 and slave2)

java jdk1.8.0_181

Flume 1.7.0

Flume setup tutorial: hadoop Flume installation environment

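Case 1: Monitoring port data

Goal: Flume listens on a port in one console; messages sent from a second console appear in the first console in real time.

Steps:

1) Install the telnet tool (Ubuntu ships with it; on CentOS it has to be installed, for example from the packages below)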

$ sudo rpm -ivh xinetd-2.3.14-40.el6.x86_64.rpm

$ sudo rpm -ivh telnet-0.17-48.el6.x86_64.rpm

$ sudo rpm -ivh telnet-server-0.17-48.el6.x86_64.rpm

2) Create a folder named job under conf, and inside job create the Flume agent configuration file flume-telnet.conf

mkdir /home/hadoop/apache-flume-1.7.0-bin/conf/job

vim /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-telnet.conf

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

3) Check whether port 44444 is already in use

netstat -tunlp | grep 44444
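A plain grep for 44444 also matches lines where those digits show up somewhere else, such as a PID or a longer port number. The sketch below is a slightly stricter check that only looks at the local-address column. The function name `port_listed` is made up for this illustration, and the column position assumes the Linux net-tools `netstat` layout.

```shell
#!/usr/bin/env bash
# Succeeds if netstat-style input on stdin has a local address ending in :PORT.
# Assumes the local address is the 4th column, as in `netstat -tunlp` on Linux.
port_listed() {
  awk -v port="$1" '$4 ~ (":" port "$") { found = 1 } END { exit !found }'
}

# Only run the live check when netstat is available.
if command -v netstat >/dev/null 2>&1; then
  if netstat -tunl 2>/dev/null | port_listed 44444; then
    echo "port 44444 is already in use"
  else
    echo "port 44444 looks free"
  fi
fi
```

If the port is reported as in use, either stop the process holding it or pick another value for a1.sources.r1.port.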

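4) Start the Flume agent that listens on the port

flume-ng agent --conf conf/ --name a1 --conf-file /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-telnet.conf -Dflume.root.logger=INFO,console

Note: the relative path "conf/" in the command above works only when the Flume environment variables are configured; otherwise use an absolute path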
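To avoid depending on the current directory, the command can be assembled from absolute paths. This is a minimal sketch: `FLUME_HOME` is an assumed variable (its default below is the install path used in this post) and `flume_agent_cmd` is a hypothetical helper, not something Flume provides. It only prints the command, so it is safe to run anywhere.

```shell
#!/usr/bin/env bash
# FLUME_HOME is an assumption; the default matches the install path used in this post.
FLUME_HOME="${FLUME_HOME:-/home/hadoop/apache-flume-1.7.0-bin}"

# flume_agent_cmd AGENT_NAME JOB_FILE
# Prints the flume-ng command for an agent whose config lives under conf/job.
flume_agent_cmd() {
  printf 'flume-ng agent --conf %s/conf --name %s --conf-file %s/conf/job/%s -Dflume.root.logger=INFO,console\n' \
    "$FLUME_HOME" "$1" "$FLUME_HOME" "$2"
}

flume_agent_cmd a1 flume-telnet.conf
```

The same helper covers the later cases by changing the agent name and file, for example `flume_agent_cmd a2 flume-hdfs.conf`.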

5) Open another terminal and use telnet to send content to port 44444 on the local machine

telnet localhost 44444

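6) Check the result: each line typed into the telnet session should appear as an event in the console where the Flume agent is running

Case 2: Streaming a local file to HDFS in real time

Goal: monitor a log file in real time (a Hive log in the original scenario; this walkthrough tails run_job.log) and upload it to HDFS

Steps:

1) Copy the required Hadoop jars into Flume's lib directory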

cp /home/hadoop/hadoop-2.7.2/share/hadoop/common/lib/hadoop-auth-2.7.2.jar /home/hadoop/apache-flume-1.7.0-bin/lib/

cp /home/hadoop/hadoop-2.7.2/share/hadoop/common/lib/commons-configuration-1.6.jar /home/hadoop/apache-flume-1.7.0-bin/lib/

cp /home/hadoop/hadoop-2.7.2/share/hadoop/common/hadoop-common-2.7.2.jar /home/hadoop/apache-flume-1.7.0-bin/lib/

cp /home/hadoop/hadoop-2.7.2/share/hadoop/hdfs/hadoop-hdfs-2.7.2.jar /home/hadoop/apache-flume-1.7.0-bin/lib/

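Notes:

1. File paths may differ slightly between Hadoop versions; locate the jars yourself if they are not where shown

2. The jars below are ones that Flume version 1.99 must reference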

$ cp ./share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar ./lib/

$ cp ./share/hadoop/hdfs/lib/commons-io-2.4.jar ./lib/
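Because the jar locations vary between Hadoop versions, it can help to copy them in a loop that reports anything it cannot find. The sketch below uses the six relative paths listed in this step for hadoop 2.7.2; the function name `copy_hadoop_jars` is hypothetical.

```shell
#!/usr/bin/env bash
# copy_hadoop_jars HADOOP_HOME FLUME_LIB
# Copies the jars listed in this step and reports missing ones on stderr.
# The return status is the number of jars that were not found.
copy_hadoop_jars() {
  hadoop_home=$1
  flume_lib=$2
  missing=0
  for jar in \
    share/hadoop/common/lib/hadoop-auth-2.7.2.jar \
    share/hadoop/common/lib/commons-configuration-1.6.jar \
    share/hadoop/common/hadoop-common-2.7.2.jar \
    share/hadoop/hdfs/hadoop-hdfs-2.7.2.jar \
    share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar \
    share/hadoop/hdfs/lib/commons-io-2.4.jar
  do
    if [ -f "$hadoop_home/$jar" ]; then
      cp "$hadoop_home/$jar" "$flume_lib/"
    else
      echo "missing: $hadoop_home/$jar" >&2
      missing=$((missing + 1))
    fi
  done
  return "$missing"
}

# Example call with the paths used in this post (skipped if they do not exist).
if [ -d /home/hadoop/hadoop-2.7.2 ] && [ -d /home/hadoop/apache-flume-1.7.0-bin/lib ]; then
  copy_hadoop_jars /home/hadoop/hadoop-2.7.2 /home/hadoop/apache-flume-1.7.0-bin/lib \
    || echo "some jars were not copied" >&2
fi
```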

2) Create the flume-hdfs.conf file

vim /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-hdfs.conf

# Name the components on this agent

a2.sources = r2

a2.sinks = k2

a2.channels = c2

# Describe/configure the source

a2.sources.r2.type = exec

a2.sources.r2.command = tail -F /home/hadoop/run_job.log

a2.sources.r2.shell = /bin/bash -c

# Describe the sink

a2.sinks.k2.type = hdfs

a2.sinks.k2.hdfs.path = hdfs://192.168.1.188:9000/flume/%Y%m%d/%H

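# Prefix for uploaded files

a2.sinks.k2.hdfs.filePrefix = run-job-

# Whether to roll folders by time (the timestamp is rounded down)

a2.sinks.k2.hdfs.round = true

# Number of time units after which a new folder is created

a2.sinks.k2.hdfs.roundValue = 5

# Time unit used for rounding

a2.sinks.k2.hdfs.roundUnit = minute

# Whether to use the local timestamp

a2.sinks.k2.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a2.sinks.k2.hdfs.batchSize = 5

# File type; compressed types are also supported

a2.sinks.k2.hdfs.fileType = DataStream

# How often (in seconds) a new file is started

a2.sinks.k2.hdfs.rollInterval = 1

# Roll size of each file (in bytes, roughly 128 MB)

a2.sinks.k2.hdfs.rollSize = 134217700

# File rolling does not depend on the number of events

a2.sinks.k2.hdfs.rollCount = 0

# Minimum number of block replicas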

a2.sinks.k2.hdfs.minBlockReplicas = 1

# Use a channel which buffers events in memory

a2.channels.c2.type = memory

a2.channels.c2.capacity = 1000

a2.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r2.channels = c2

a2.sinks.k2.channel = c2
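The round, roundValue and roundUnit settings round the event timestamp down before the % escapes in hdfs.path are expanded. The sketch below approximates that with epoch arithmetic to show the effect; `round_down` is a hypothetical helper and the `date -d @...` calls assume GNU date, as shipped with Ubuntu.

```shell
#!/usr/bin/env bash
# round_down EPOCH_SECONDS BUCKET_SECONDS
# Prints the largest multiple of the bucket that does not exceed the timestamp.
round_down() {
  echo $(( $1 - $1 % $2 ))
}

ts=1700000000                               # 2023-11-14 22:13:20 UTC
rounded=$(round_down "$ts" $((5 * 60)))     # roundValue = 5, roundUnit = minute
echo "$rounded"                             # 1699999800, i.e. 22:10:00 UTC

# Expand the rounded timestamp with the escapes from hdfs.path, then with %M added.
TZ=UTC date -d "@$rounded" +/flume/%Y%m%d/%H
TZ=UTC date -d "@$rounded" +/flume/%Y%m%d/%H%M
```

Since the path in this configuration stops at %H, rounding to 5 minutes does not change the directory name; a new folder every 5 minutes would need a minute escape such as %M in hdfs.path.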

3) The run_job.log file is produced by running run_job.sh. Create run_job.sh under /home/hadoop/ with the following content:

#!/usr/bin/env bash

#source /home/hadoop/.profile

while : ;

do starttime=$(date +%Y-%m-%d\ %H:%M:%S); echo $starttime + "Hello world!." >> /home/hadoop/run_job.log;sleep 5;

done;

Make run_job.sh executable:

chmod +x run_job.sh

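4) Start the Hadoop cluster with start-all.sh, then run the agent with the run_job.log monitoring configuration so that the results are uploaded to the cluster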

flume-ng agent --conf conf/ --name a2 --conf-file /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-hdfs.conf

5) Start run_job.sh with ./run_job.sh, wait a moment, then open a new terminal to check the result

tail -f run_job.log

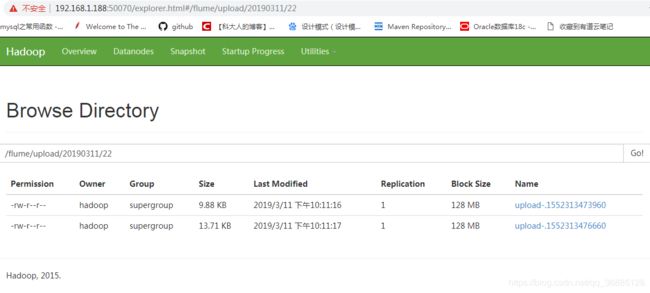

Open master:50070 in a browser and browse the file system to see the uploaded log files
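The upload can also be checked from a terminal. This is a small sketch, assuming the `hdfs` client is on the PATH of the machine where it runs; `flume_hour_dir` is a hypothetical helper that rebuilds the %Y%m%d/%H directory layout from hdfs.path for the current local hour.

```shell
#!/usr/bin/env bash
# flume_hour_dir BASE
# Prints the HDFS directory the sink writes to for the current local hour.
flume_hour_dir() {
  printf '%s/%s\n' "$1" "$(date +%Y%m%d/%H)"
}

dir=$(flume_hour_dir /flume)
echo "$dir"

# List the uploaded files only when the hdfs client is available.
if command -v hdfs >/dev/null 2>&1; then
  hdfs dfs -ls "$dir" || echo "nothing under $dir yet" >&2
fi
```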

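Case 3: Streaming a directory's files to HDFS in real time

Goal: use Flume to monitor all files in a directory

Steps:

1) As in the previous case, create the configuration file flume-dir.conf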

vim /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-dir.conf

# Name the components on this agent

a3.sources = r3

a3.sinks = k3

a3.channels = c3

# Describe/configure the source

a3.sources.r3.type = spooldir

a3.sources.r3.spoolDir = /home/hadoop/apache-flume-1.7.0-bin/upload

a3.sources.r3.fileSuffix = .COMPLETED

a3.sources.r3.fileHeader = true

# Ignore all files ending in .tmp; they are not uploaded

a3.sources.r3.ignorePattern = ([^ ]*\.tmp)

# Describe the sink

a3.sinks.k3.type = hdfs

a3.sinks.k3.hdfs.path = hdfs://192.168.1.188:9000/flume/upload/%Y%m%d/%H

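# Prefix for uploaded files

a3.sinks.k3.hdfs.filePrefix = upload-

# Whether to roll folders by time (the timestamp is rounded down)

a3.sinks.k3.hdfs.round = true

# Number of time units after which a new folder is created

a3.sinks.k3.hdfs.roundValue = 2

# Time unit used for rounding

a3.sinks.k3.hdfs.roundUnit = minute

# Whether to use the local timestamp

a3.sinks.k3.hdfs.useLocalTimeStamp = true

# Number of events to accumulate before flushing to HDFS

a3.sinks.k3.hdfs.batchSize = 2

# File type; compressed types are also supported

a3.sinks.k3.hdfs.fileType = DataStream

# How often (in seconds) a new file is started

a3.sinks.k3.hdfs.rollInterval = 1

# Roll size of each file, roughly 128 MB (in bytes)

a3.sinks.k3.hdfs.rollSize = 134217700

# File rolling does not depend on the number of events

a3.sinks.k3.hdfs.rollCount = 0

# Minimum number of block replicas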

a3.sinks.k3.hdfs.minBlockReplicas = 1

# Use a channel which buffers events in memory

a3.channels.c3.type = memory

a3.channels.c3.capacity = 1000

a3.channels.c3.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r3.channels = c3

a3.sinks.k3.channel = c3
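To see which file names the ignorePattern value in this configuration skips, the regular expression can be tried out in the shell. The sketch assumes the pattern has to match the whole file name, which `grep -x` imitates; `is_ignored` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# is_ignored NAME
# Succeeds if NAME fully matches the ignorePattern from flume-dir.conf.
is_ignored() {
  printf '%s\n' "$1" | grep -Eqx '([^ ]*\.tmp)'
}

for name in data.tmp data.log "my file.tmp" data.tmp.bak; do
  if is_ignored "$name"; then
    echo "$name: ignored"
  else
    echo "$name: picked up"
  fi
done
```

Under that assumption only data.tmp is ignored. A name containing a space is not matched, because [^ ]* cannot span the space.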

2) The files in the upload directory are produced by running run_job_dir.sh. Create run_job_dir.sh under /home/hadoop/ with the following content:

#!/usr/bin/env bash

#source /home/hadoop/.profile

while : ;

do starttime=$(date +%Y-%m-%d\_%H:%M:%S); file_time=$(date +%Y-%m-%d\_%H:%M:00);echo $starttime + "Hello world!." >> /home/hadoop/apache-flume-1.7.0-bin/upload/+$file_time+_test.log;sleep 0.1;

done;

Make run_job_dir.sh executable:

chmod +x run_job_dir.sh
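The Flume user guide warns that a file must not be written to after it has been placed in the spooling directory, whereas the script above keeps appending to the same file for up to a minute. One common workaround is to write under a .tmp name, which the ignorePattern setting skips, and rename the file once it is complete. This is a sketch of that idea, not the original author's script; `publish_batch` and the file naming are made up for the illustration.

```shell
#!/usr/bin/env bash
# publish_batch SPOOL_DIR LINES
# Writes LINES log lines to a .tmp file, then renames it to .log so that the
# spooling source only ever sees a finished file.
publish_batch() {
  spool_dir=$1
  lines=$2
  name="$(date +%Y-%m-%d_%H-%M-%S)_$$_test"   # timestamp plus PID as a simple unique name
  tmp="$spool_dir/$name.tmp"
  i=0
  while [ "$i" -lt "$lines" ]; do
    echo "$(date '+%Y-%m-%d_%H:%M:%S') Hello world!." >> "$tmp"
    i=$((i + 1))
  done
  mv "$tmp" "$spool_dir/$name.log"            # rename within the same directory
}

# Demo against a temporary directory instead of the real upload folder.
demo_dir=$(mktemp -d)
publish_batch "$demo_dir" 3
ls "$demo_dir"
```

Pointing the first argument at the directory configured as spoolDir would feed the agent one finished file per call.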

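3) Start the Hadoop cluster with start-all.sh, then run the agent with the upload folder monitoring configuration so that the results are uploaded to the cluster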

flume-ng agent --conf conf/ --name a3 --conf-file /home/hadoop/apache-flume-1.7.0-bin/conf/job/flume-dir.conf

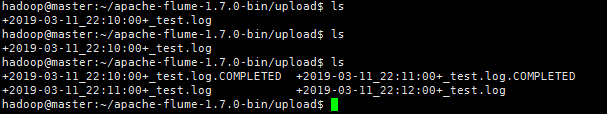

4) Start run_job_dir.sh with ./run_job_dir.sh, wait a moment, then open a new terminal to check the result

ls /home/hadoop/apache-flume-1.7.0-bin/upload/

Open master:50070 in a browser and browse the file system to see the uploaded log files