SGAN 简介与代码实战

1.介绍

SGAN来源于论文Semi-Supervised Learning with Generative Adversarial Networks ,而这个半监督和gan又有什么关系呢?在判别器网络中,网络不仅要判断类别(有监督),也要判断真假(无监督),所以。。。

2.模型结构

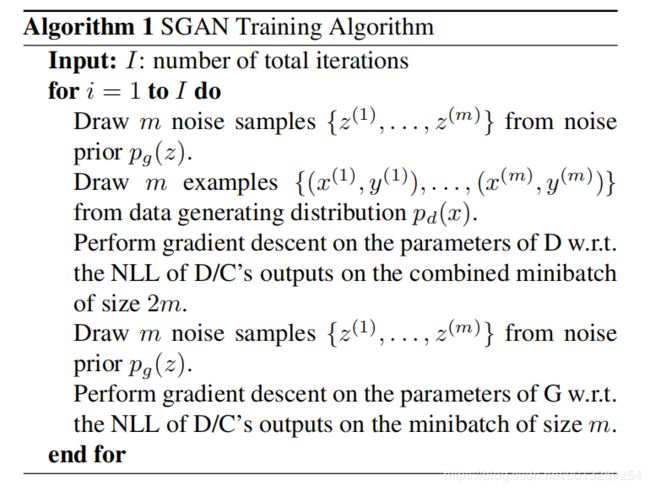

由于论文没有网络结构的图,我在这里也就不展示了,下图为SGAN的整个算法流程,其实和大部分gan的算法流程差不多,其中D/C( [CLASS-1, CLASS-2, . . . CLASS-N, FAKE]. In this case, D can also act as C. We call this network D/C.)

3.模型特点

为什么要结合分类(结合D和C替换普通判别器网络)?

从比较直观的视角来看,如果判别器网络D不能判断出那个是真图片那个是生成图片,那么这个生成图片就更容易被分类网络C分类正确(因为生成的图片更真实,如果很假不合逻辑,我相信人的眼睛也不能分类出),D和C之间有着相互促进的机制。

4.代码实现 keras

class SGAN:

def __init__(self):

self.img_rows = 28

self.img_cols = 28

self.channels = 1

self.img_shape = (self.img_rows, self.img_cols, self.channels)

self.num_classes = 10

self.latent_dim = 100

optimizer = Adam(0.0002, 0.5)

# Build and compile the discriminator

self.discriminator = self.build_discriminator()

self.discriminator.compile(

loss=['binary_crossentropy', 'categorical_crossentropy'],

loss_weights=[0.5, 0.5],

optimizer=optimizer,

metrics=['accuracy']

)

# Build the generator

self.generator = self.build_generator()

# The generator takes noise as input and generates imgs

noise = Input(shape=(100,))

img = self.generator(noise)

# For the combined model we will only train the generator

self.discriminator.trainable = False

# The valid takes generated images as input and determines validity

valid, _ = self.discriminator(img)

# The combined model (stacked generator and discriminator)

# Trains generator to fool discriminator

self.combined = Model(noise, valid)

self.combined.compile(loss=['binary_crossentropy'], optimizer=optimizer)

def build_generator(self):

model = Sequential()

model.add(Dense(128 * 7 * 7, activation="relu", input_dim=self.latent_dim))

model.add(Reshape((7, 7, 128)))

model.add(BatchNormalization(momentum=0.8))

model.add(UpSampling2D())

model.add(Conv2D(128, kernel_size=3, padding="same"))

model.add(Activation("relu"))

model.add(BatchNormalization(momentum=0.8))

model.add(UpSampling2D())

model.add(Conv2D(64, kernel_size=3, padding="same"))

model.add(Activation("relu"))

model.add(BatchNormalization(momentum=0.8))

model.add(Conv2D(1, kernel_size=3, padding="same"))

model.add(Activation("tanh"))

model.summary()

noise = Input(shape=(self.latent_dim,))

img = model(noise)

return Model(noise, img)

def build_discriminator(self):

model = Sequential()

model.add(Conv2D(32, kernel_size=3, strides=2, input_shape=self.img_shape, padding="same"))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Conv2D(64, kernel_size=3, strides=2, padding="same"))

model.add(ZeroPadding2D(padding=((0,1),(0,1))))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(BatchNormalization(momentum=0.8))

model.add(Conv2D(128, kernel_size=3, strides=2, padding="same"))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(BatchNormalization(momentum=0.8))

model.add(Conv2D(256, kernel_size=3, strides=1, padding="same"))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Flatten())

model.summary()

img = Input(shape=self.img_shape)

features = model(img)

valid = Dense(1, activation="sigmoid")(features)

label = Dense(self.num_classes+1, activation="softmax")(features)

return Model(img, [valid, label])

def train(self, epochs, batch_size=128, sample_interval=50):

# Load the dataset

(X_train, y_train), (_, _) = mnist.load_data()

# Rescale -1 to 1

X_train = (X_train.astype(np.float32) - 127.5) / 127.5

X_train = np.expand_dims(X_train, axis=3)

y_train = y_train.reshape(-1, 1)

# Class weights:

# To balance the difference in occurences of digit class labels.

# 50% of labels that the discriminator trains on are 'fake'.

# Weight = 1 / frequency

half_batch = batch_size // 2

cw1 = {0: 1, 1: 1}

cw2 = {i: self.num_classes / half_batch for i in range(self.num_classes)}

cw2[self.num_classes] = 1 / half_batch

# Adversarial ground truths

valid = np.ones((batch_size, 1))

fake = np.zeros((batch_size, 1))

for epoch in range(epochs):

# ---------------------

# Train Discriminator

# ---------------------

# Select a random batch of images

idx = np.random.randint(0, X_train.shape[0], batch_size)

imgs = X_train[idx]

# Sample noise and generate a batch of new images

noise = np.random.normal(0, 1, (batch_size, self.latent_dim))

gen_imgs = self.generator.predict(noise)

# One-hot encoding of labels

labels = to_categorical(y_train[idx], num_classes=self.num_classes+1)

fake_labels = to_categorical(np.full((batch_size, 1), self.num_classes), num_classes=self.num_classes+1)

# Train the discriminator

d_loss_real = self.discriminator.train_on_batch(imgs, [valid, labels], class_weight=[cw1, cw2])

d_loss_fake = self.discriminator.train_on_batch(gen_imgs, [fake, fake_labels], class_weight=[cw1, cw2])

d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

# ---------------------

# Train Generator

# ---------------------

g_loss = self.combined.train_on_batch(noise, valid, class_weight=[cw1, cw2])

# Plot the progress

print ("%d [D loss: %f, acc: %.2f%%, op_acc: %.2f%%] [G loss: %f]" % (epoch, d_loss[0], 100*d_loss[3], 100*d_loss[4], g_loss))

# If at save interval => save generated image samples

if epoch % sample_interval == 0:

self.sample_images(epoch)

def sample_images(self, epoch):

r, c = 5, 5

noise = np.random.normal(0, 1, (r * c, self.latent_dim))

gen_imgs = self.generator.predict(noise)

# Rescale images 0 - 1

gen_imgs = 0.5 * gen_imgs + 0.5

fig, axs = plt.subplots(r, c)

cnt = 0

for i in range(r):

for j in range(c):

axs[i,j].imshow(gen_imgs[cnt, :,:,0], cmap='gray')

axs[i,j].axis('off')

cnt += 1

fig.savefig("images/mnist_%d.png" % epoch)

plt.close()