Running our Spring Boot services on k8s: installing k8s 1.16.0

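- Introduction

- Versions

- Server list

- Hostname mapping

- Basic configuration

- Disable the firewall and SELinux

- Disable swap

- Time synchronization

- Master node

- Slave nodes

- Install Docker

- Install kubelet, kubeadm, and kubectl

- Run on the master

- Install the flannel network

- Slave nodes

- Check cluster status

- Enable IPVS in kube-proxy

- Removing nodes and the cluster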

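Introduction

In my experience, k8s makes managing and releasing services much easier. This post covers installing k8s on CentOS, as the base platform on which the various services will be deployed and managed.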

Versions

docker-ce-19.03.1-3.el7

centos7

k8s 1.16.0

Server list

One server acts as the master and the other two as slave nodes

192.168.1.181 k8s-master

192.168.1.182 k8s-slave1

192.168.1.183 k8s-slave2

Follow the steps in order, otherwise things will go wrong

Hostname mapping

Run on every server

#vi /etc/hosts

192.168.1.181 k8s-master

192.168.1.182 k8s-slave1

192.168.1.183 k8s-slave2
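The mapping above can also be applied with a small script instead of editing the file by hand. The sketch below is one possible approach and not part of the original procedure: the names HOSTS_FILE, add_host_entry, and add_cluster_hosts are hypothetical, and it assumes an entry should be appended only when no existing line already ends with that hostname.

```shell
#!/bin/bash
# Sketch: idempotently add the three cluster entries to a hosts file.
# HOSTS_FILE, add_host_entry and add_cluster_hosts are illustrative names.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

add_host_entry() {
    local ip="$1" name="$2"
    # Append only if no existing line already ends with this hostname.
    if ! grep -qE "[[:space:]]${name}\$" "$HOSTS_FILE" 2>/dev/null; then
        echo "${ip} ${name}" >> "$HOSTS_FILE"
    fi
}

add_cluster_hosts() {
    add_host_entry 192.168.1.181 k8s-master
    add_host_entry 192.168.1.182 k8s-slave1
    add_host_entry 192.168.1.183 k8s-slave2
}
```

Calling add_cluster_hosts as root on each server has the same effect as the manual edit. Pointing HOSTS_FILE at a scratch file first is a safe way to check the result.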

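Basic configuration

On all hosts: stop the firewall, disable swap, disable SELinux, set kernel parameters, add the Kubernetes yum repo, install dependencies, and configure NTP (a reboot after configuration is recommended)

Disable the firewall, install iptables, and set up NTP time synchronization

Disable the firewall and SELinux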

#systemctl stop firewalld && systemctl disable firewalld

#sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config && setenforce 0

Disable swap

#swapoff -a

#yes | cp /etc/fstab /etc/fstab_bak

#cat /etc/fstab_bak |grep -v swap > /etc/fstab
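The commands above drop every line containing "swap" from /etc/fstab. An alternative, shown here only as a sketch, is to comment the swap entries out so they are easy to restore later. The function name disable_swap_in_fstab is hypothetical, and the sketch assumes GNU sed (as shipped with CentOS 7) and swap entries whose "swap" field is surrounded by whitespace.

```shell
#!/bin/bash
# Sketch: comment out active swap entries instead of deleting them.
# disable_swap_in_fstab is an illustrative name, not from the original post.
disable_swap_in_fstab() {
    local fstab="${1:-/etc/fstab}"
    # -i.bak keeps a backup next to the file; only lines that are not
    # already commented and contain a whitespace-delimited "swap" field change.
    sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# On a real node (as root), something like:
#   swapoff -a && disable_swap_in_fstab
```

The backup lands in /etc/fstab.bak, so the original file can be restored by copying it back.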

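Time synchronization

Note: the clocks on all nodes must be synchronized, otherwise errors will occur

Master node

#Install chrony:

#yum install -y chrony

#Comment out the default NTP servers

#sed -i 's/^server/#&/' /etc/chrony.conf

#Point to upstream public NTP servers and allow the other nodes to synchronize from this host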

#cat >> /etc/chrony.conf << EOF

server 0.asia.pool.ntp.org iburst

server 1.asia.pool.ntp.org iburst

server 2.asia.pool.ntp.org iburst

server 3.asia.pool.ntp.org iburst

allow all

EOF

#Restart chronyd and enable it at boot:

systemctl enable chronyd && systemctl restart chronyd

#Enable network time synchronization

timedatectl set-ntp true

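Slave nodes

#Install chrony:

yum install -y chrony

#Comment out the default servers

sed -i 's/^server/#&/' /etc/chrony.conf

#Use the master node on the internal network as the upstream NTP server

echo server 192.168.1.181 iburst >> /etc/chrony.conf

#Restart the service and enable it at boot: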

systemctl enable chronyd && systemctl restart chronyd

Install Docker

Run on both master and slave nodes

#Configure the Docker yum repo

#yum -y install yum-utils

#yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

#Install a specific version; 19.03.1-3.el7 is used here because k8s 1.16.0 requires it

#yum list docker-ce --showduplicates | sort -r

#yum install -y docker-ce-19.03.1-3.el7

#systemctl start docker && systemctl enable docker

Modify parameters

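vi /etc/docker/daemon.json

The recommended cgroup driver is "systemd". The insecure-registries entry lets Docker use the registry at 192.168.1.181:5000 without HTTPS. JSON does not allow comments, so keep these notes out of the file itself:

{

"exec-opts": ["native.cgroupdriver=systemd"],

"insecure-registries": ["192.168.1.181:5000"]

}

#systemctl restart docker && systemctl enable docker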

Modify kernel parameters

#vi /etc/sysctl.conf

net.ipv4.ip_forward=1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-arptables = 1

vm.swappiness=0

Disable swap

#swapoff -a

Disable SELinux

#vi /etc/selinux/config

SELINUX=disabled

Apply the kernel parameter changes

#sysctl -p
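The kernel settings above can also be kept in a dedicated drop-in file rather than in /etc/sysctl.conf. The following is a minimal sketch under stated assumptions: the function name write_k8s_sysctl and the path /etc/sysctl.d/k8s.conf are choices made for illustration, and the net.bridge.* keys are assumed to become available only once the br_netfilter kernel module is loaded.

```shell
#!/bin/bash
# Sketch: write the Kubernetes-related kernel parameters to a drop-in file.
# write_k8s_sysctl and the default path are illustrative, not from the original post.
write_k8s_sysctl() {
    local conf="${1:-/etc/sysctl.d/k8s.conf}"
    cat > "$conf" <<'EOF'
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-arptables = 1
vm.swappiness = 0
EOF
}

# On a real node (as root), something like:
#   modprobe br_netfilter    # makes the net.bridge.* keys available
#   write_k8s_sysctl
#   sysctl --system          # reloads all sysctl configuration files
```

If sysctl reports that a net.bridge key does not exist, the module is probably not loaded yet.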

Install kubelet, kubeadm, and kubectl

Run on both master and slave nodes

#vi /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes Repo

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

enabled=1

#yum update

#yum install -y kubelet-1.16.0 kubeadm-1.16.0 kubectl-1.16.0

Enable kubelet at boot

systemctl start kubelet

systemctl enable kubelet.service

Check that both of the following files contain 1

#cat /proc/sys/net/bridge/bridge-nf-call-ip6tables

1

#cat /proc/sys/net/bridge/bridge-nf-call-iptables

1

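Run on the master

List the required images:

#kubeadm config images list

Because of network restrictions, we use a script to pull the required Docker images from the Alibaba Cloud mirror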

#mkdir /home/spark/k8s

#cd /home/spark/k8s

#vi k8s.sh

#!/bin/bash

#Pull the images from the domestic mirror, then retag them with the Google (k8s.gcr.io) names

set -e

KUBE_VERSION=v1.16.0

KUBE_PAUSE_VERSION=3.1

ETCD_VERSION=3.3.15-0

CORE_DNS_VERSION=1.6.2

GCR_URL=k8s.gcr.io

ALIYUN_URL=registry.cn-hangzhou.aliyuncs.com/google_containers

images=(kube-proxy:${KUBE_VERSION}

kube-scheduler:${KUBE_VERSION}

kube-controller-manager:${KUBE_VERSION}

kube-apiserver:${KUBE_VERSION}

pause:${KUBE_PAUSE_VERSION}

etcd:${ETCD_VERSION}

coredns:${CORE_DNS_VERSION})

for imageName in ${images[@]} ; do

docker pull $ALIYUN_URL/$imageName

docker tag $ALIYUN_URL/$imageName $GCR_URL/$imageName

docker rmi $ALIYUN_URL/$imageName

done

#Run the script to download the images; this may take a while

#chmod +x k8s.sh && ./k8s.sh

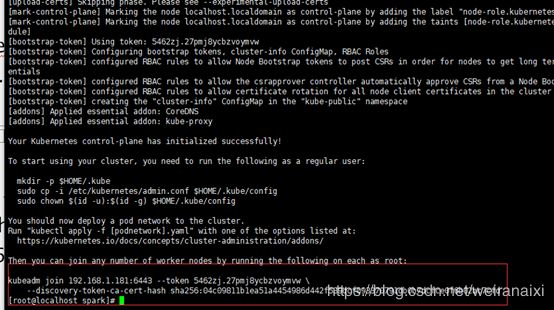

Install

#kubeadm init --kubernetes-version=v1.16.0 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.1.181

Copy and save the join command with its token from the output; it is needed later to add the slave nodes. You can also skip this, because the command can be regenerated later

kubeadm join 192.168.1.181:6443 --token syjco0.js9a67cyst38vwht --discovery-token-ca-cert-hash sha256:4b5f2fc80cc09ef97eedb0042338dfc709d6a1b94163174ec31b95e02dffd21a
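If the join command was not saved, the value passed to --discovery-token-ca-cert-hash can be recomputed from the cluster CA certificate. The sketch below follows the openssl pipeline commonly used for this purpose. The function name ca_cert_hash is hypothetical, and the sketch assumes the CA uses an RSA key and sits at kubeadm's default path /etc/kubernetes/pki/ca.crt.

```shell
#!/bin/bash
# Sketch: compute the SHA-256 hash of the CA public key (DER-encoded),
# which is the value kubeadm expects after the "sha256:" prefix.
# ca_cert_hash is an illustrative name, not from the original post.
ca_cert_hash() {
    local ca="${1:-/etc/kubernetes/pki/ca.crt}"
    openssl x509 -pubkey -noout -in "$ca" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# On the master, something like:
#   echo "sha256:$(ca_cert_hash)"
```

Existing tokens can be listed on the master with kubeadm token list; a token that has expired has to be recreated.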

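The following three commands are the ones shown in the screenshot above

#mkdir -p $HOME/.kube

#sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

#sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the result

#kubectl get pod -n kube-system -o wide

As you can see, CoreDNS and the other pods that depend on the network are in the Pending state, meaning scheduling failed. This is expected, because the network on this master node is not ready yet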

Install the flannel network

Again because of network restrictions, pull the images with a script

#vi flanneld.sh

#!/bin/bash

set -e

FLANNEL_VERSION=v0.11.0

#Change the mirror here

QUAY_URL=quay.io/coreos

QINIU_URL=quay-mirror.qiniu.com/coreos

images=(flannel:${FLANNEL_VERSION}-amd64

flannel:${FLANNEL_VERSION}-arm64

flannel:${FLANNEL_VERSION}-arm

flannel:${FLANNEL_VERSION}-ppc64le

flannel:${FLANNEL_VERSION}-s390x)

for imageName in ${images[@]} ; do

docker pull $QINIU_URL/$imageName

docker tag $QINIU_URL/$imageName $QUAY_URL/$imageName

docker rmi $QINIU_URL/$imageName

done

#chmod +x flanneld.sh && ./flanneld.sh

#vi kube-flannel.yml

---

apiVersion: policy/v1beta1

kind: PodSecurityPolicy

metadata:

name: psp.flannel.unprivileged

annotations:

seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default

seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default

apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default

apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default

spec:

privileged: false

volumes:

- configMap

- secret

- emptyDir

- hostPath

allowedHostPaths:

- pathPrefix: "/etc/cni/net.d"

- pathPrefix: "/etc/kube-flannel"

- pathPrefix: "/run/flannel"

readOnlyRootFilesystem: false

# Users and groups

runAsUser:

rule: RunAsAny

supplementalGroups:

rule: RunAsAny

fsGroup:

rule: RunAsAny

# Privilege Escalation

allowPrivilegeEscalation: false

defaultAllowPrivilegeEscalation: false

# Capabilities

allowedCapabilities: ['NET_ADMIN']

defaultAddCapabilities: []

requiredDropCapabilities: []

# Host namespaces

hostPID: false

hostIPC: false

hostNetwork: true

hostPorts:

- min: 0

max: 65535

# SELinux

seLinux:

# SELinux is unused in CaaSP

rule: 'RunAsAny'

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: flannel

rules:

- apiGroups: ['extensions']

resources: ['podsecuritypolicies']

verbs: ['use']

resourceNames: ['psp.flannel.unprivileged']

- apiGroups:

- ""

resources:

- pods

verbs:

- get

- apiGroups:

- ""

resources:

- nodes

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes/status

verbs:

- patch

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: flannel

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: flannel

subjects:

- kind: ServiceAccount

name: flannel

namespace: kube-system

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: flannel

namespace: kube-system

---

kind: ConfigMap

apiVersion: v1

metadata:

name: kube-flannel-cfg

namespace: kube-system

labels:

tier: node

app: flannel

data:

cni-conf.json: |

{

"name": "cbr0",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "flannel",

"delegate": {

"hairpinMode": true,

"isDefaultGateway": true

}

},

{

"type": "portmap",

"capabilities": {

"portMappings": true

}

}

]

}

net-conf.json: |

{

"Network": "10.244.0.0/16",

"Backend": {

"Type": "vxlan"

}

}

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds-amd64

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: beta.kubernetes.io/os

operator: In

values:

- linux

- key: beta.kubernetes.io/arch

operator: In

values:

- amd64

hostNetwork: true

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.11.0-amd64

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-amd64

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds-arm64

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: beta.kubernetes.io/os

operator: In

values:

- linux

- key: beta.kubernetes.io/arch

operator: In

values:

- arm64

hostNetwork: true

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.11.0-arm64

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-arm64

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds-arm

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: beta.kubernetes.io/os

operator: In

values:

- linux

- key: beta.kubernetes.io/arch

operator: In

values:

- arm

hostNetwork: true

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.11.0-arm

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-arm

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds-ppc64le

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: beta.kubernetes.io/os

operator: In

values:

- linux

- key: beta.kubernetes.io/arch

operator: In

values:

- ppc64le

hostNetwork: true

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.11.0-ppc64le

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-ppc64le

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds-s390x

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: beta.kubernetes.io/os

operator: In

values:

- linux

- key: beta.kubernetes.io/arch

operator: In

values:

- s390x

hostNetwork: true

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.11.0-s390x

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.11.0-s390x

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg

#kubectl apply -f kube-flannel.yml

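At this point the Kubernetes master node is deployed. If all you need is a single-node Kubernetes, it is ready to use. By default, however, the master node does not run user pods

If the installation fails and you need to reinstall, clean up the environment with the following commands

#Reset kubeadm

kubeadm reset

#Reset iptables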

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

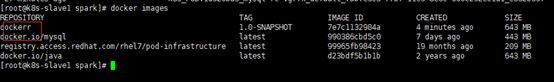

Slave nodes

vi k8s-slave.sh

#!/bin/bash

##Pull the images from the domestic mirror, then retag them with the Google (k8s.gcr.io) names

set -e

KUBE_VERSION=v1.16.0

KUBE_PAUSE_VERSION=3.1

CORE_DNS_VERSION=1.6.2

GCR_URL=k8s.gcr.io

ALIYUN_URL=registry.cn-hangzhou.aliyuncs.com/google_containers

images=(kube-proxy:${KUBE_VERSION}

pause:${KUBE_PAUSE_VERSION}

coredns:${CORE_DNS_VERSION})

for imageName in ${images[@]} ; do

docker pull $ALIYUN_URL/$imageName

docker tag $ALIYUN_URL/$imageName $GCR_URL/$imageName

docker rmi $ALIYUN_URL/$imageName

done

#chmod +x k8s-slave.sh && ./k8s-slave.sh

#yum install -y kubelet-1.16.0 kubeadm-1.16.0 kubectl-1.16.0

#systemctl start kubelet

#systemctl enable kubelet

#systemctl status kubelet

On the master, print the command that slave nodes use to join the cluster

#kubeadm token create --print-join-command

kubeadm join 192.168.1.181:6443 --token wowvwv.0drdeh4f6n97e10h --discovery-token-ca-cert-hash sha256:c9b2808367a08b846af8774bfd70ef12588c0598374c622fdedda46232eb5780

Copy the command above and run it on each slave node

#kubeadm join 192.168.1.181:6443 --token wowvwv.0drdeh4f6n97e10h --discovery-token-ca-cert-hash sha256:c9b2808367a08b846af8774bfd70ef12588c0598374c622fdedda46232eb5780

Troubleshooting

#journalctl -xefu kubelet

If the installation fails and you need to reinstall, clean up the environment with the following commands

#Reset kubeadm

kubeadm reset

#Reset iptables

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

Error handling:

cni.go:259] Error adding network: failed to set bridge addr: "cni0" already has an IP address different from 10.16.2.1/24

#kubeadm reset

#systemctl stop kubelet

#systemctl stop docker

#rm -rf /var/lib/cni/

#rm -rf /var/lib/kubelet/*

#rm -rf /etc/cni/

#ifconfig cni0 down

#ifconfig flannel.1 down

#ifconfig docker0 down

#ip link delete cni0

#ip link delete flannel.1

#systemctl start docker

Resolving errors

The error cni config uninitialized Addresses may mean that the node failed to pull the flannel image; set up a private Docker registry and try again

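Check cluster status

Give it a moment

Run on a slave (this form relies on the API server's insecure port 8080, which kubeadm disables by default; if the connection is refused, copy /etc/kubernetes/admin.conf from the master to $HOME/.kube/config on the slave and run kubectl get nodes instead)

#kubectl -s http://k8s-master:8080 get node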

NAME STATUS AGE

k8s-node-1 Ready 3m

k8s-node-2 Ready 16s

Run on the master

#kubectl get nodes

NAME STATUS AGE

k8s-node-1 Ready 3m

k8s-node-2 Ready 43s

#kubectl get pod --all-namespaces -o wide

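At this point, a Kubernetes cluster is up and running.

#kubectl describe pod kube-controller-manager-k8s-master --namespace=kube-system

For security reasons, Kubernetes does not schedule pods onto the master node by default. Check the default value of the Taints field:

#kubectl describe node k8s-master

To use k8s-master as a regular node as well, run the following command, where k8s-master is the hostname of the master:

#kubectl taint node k8s-master node-role.kubernetes.io/master-

Check the Taints field after the change:

#kubectl describe node k8s-master

To restore the master-only state, run: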

kubectl taint node k8s-master node-role.kubernetes.io/master=:NoSchedule

Enable IPVS in kube-proxy

Run the following script on all Kubernetes nodes (slave1 and slave2):

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

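The script creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots. Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that the required kernel modules were loaded correctly.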
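The lsmod check can be turned into a small helper that prints only the modules that are still missing. This is a sketch and not part of the original procedure: the function name missing_ipvs_modules is hypothetical, and the module list assumes the CentOS 7 kernel used in this post, where nf_conntrack_ipv4 exists as a separate module.

```shell
#!/bin/bash
# Sketch: read lsmod output on stdin and print each required module
# that does not appear in it. missing_ipvs_modules is an illustrative name.
missing_ipvs_modules() {
    local m loaded
    local required="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4"
    # The first column of lsmod output is the module name; skip the header row.
    loaded="$(awk 'NR > 1 { print $1 }')"
    for m in $required; do
        if ! printf '%s\n' "$loaded" | grep -qx "$m"; then
            echo "$m"
        fi
    done
}

# On a real node, something like:
#   lsmod | missing_ipvs_modules
```

No output means every module in the list is loaded.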

Install the ipset package on all nodes

yum install ipset -y

To make it easier to inspect the IPVS rules, install ipvsadm (optional)

yum install ipvsadm -y

Edit config.conf in the kube-system/kube-proxy ConfigMap and set mode: "ipvs"

#kubectl edit cm kube-proxy -n kube-system

Then restart the kube-proxy pod on every node:

#kubectl get pod -n kube-system | grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'

#kubectl get pod -n kube-system | grep kube-proxy
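The restart step selects pods by name from the kubectl listing. The sketch below isolates that selection in a helper so it can be checked before anything is deleted. The function name kube_proxy_pod_names is hypothetical, and the sketch assumes the pod name is the first column of the kubectl get pod output.

```shell
#!/bin/bash
# Sketch: read `kubectl get pod -n kube-system` output on stdin and
# print the names of the kube-proxy pods, one per line.
# kube_proxy_pod_names is an illustrative name, not from the original post.
kube_proxy_pod_names() {
    awk 'NR > 1 && $1 ~ /^kube-proxy-/ { print $1 }'
}

# On the master, something like:
#   kubectl get pod -n kube-system | kube_proxy_pod_names \
#       | xargs -r kubectl delete pod -n kube-system
```

The DaemonSet recreates each deleted pod with the new configuration. If the pods carry the label k8s-app=kube-proxy, as is assumed for a kubeadm-built cluster, kubectl delete pod -n kube-system -l k8s-app=kube-proxy should achieve the same result in one step.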

Check the logs:

#kubectl logs kube-proxy-6qlgv -n kube-system

If the log contains Using ipvs Proxier, IPVS mode is enabled.

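Removing nodes and the cluster

Removing a node from the Kubernetes cluster

Taking k8s-slave2 as an example, run on the master node:

kubectl drain k8s-slave2 --delete-local-data --force --ignore-daemonsets

kubectl delete node k8s-slave2

After the two commands above complete, run the cleanup command on k8s-slave2 to reset the kubeadm installation state:

kubeadm reset

Deleting the node on the master does not clean up the containers running on k8s-slave2; the cleanup command has to be run manually on the removed node.

To reconfigure the cluster, simply run kubeadm init or kubeadm join again with the new parameters.

This completes the 3-node cluster. From here you can add more slave nodes or deploy components such as Prometheus and alertmanager (these can be combined with istio). You can also deploy flink, Spring Boot services, and so on

Prevent pods from being scheduled on the master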

kubectl taint nodes k8s-master node-role.kubernetes.io/master=true:NoSchedule

For all of the above, see link