scrapy-redis 安装 及使用 结合例子解释

scrapy-redis安装及配置

scrapy-redis 的安装

pip install scrapy-redis

easy_install scrapy-redis

下载

http://redis.io/download

版本推荐

stable 3.0.2

运行redis

redis-server redis.conf

清空缓存

redis-cli flushdb

scrapy配置redis

settings.py配置redis(在scrapy-redis 自带的例子中已经配置好)

SCHEDULER = "scrapy_redis.scheduler.Scheduler"

SCHEDULER_PERSIST = True

SCHEDULER_QUEUE_CLASS = 'scrapy_redis.queue.SpiderPriorityQueue'

REDIS_URL = None # 一般情况可以省去

REDIS_HOST = '127.0.0.1' # 也可以根据情况改成 localhost

REDIS_PORT = 6379

在scrapy中使用scrapy-redis

spider 继承RedisSpider

class tempSpider(RedisSpider)

name = "temp"

redis_key = ''temp:start_url"

启动redis

在redis的src目录下,执行 ./redis-server启动服务器

执行 ./redis-cli 启动客户端

设置好setting.py的redis 的ip和端口

启动scropy-redis的代码;

如启动name= "lhy",start_urls="lhy:start_urls" 的spider。

如果在redis中没有 主键为lhy:start_urls 的list,则爬虫已只监听等待。

此时,在redis客户端执行:lpush lhy:start_urls http://blog.csdn.net/u013378306/article/details/53888173

可以看到爬虫开始抓取。在 redis客户端下输入 keys *,查看所有主键

原来的 lhy:start_urls 已经被自动删除,并新建了 一个lhy:dupefilter (set),一个 lhy:items (list), 一个 lhy:requests(zset)

lhy:dupefilter用来存储 已经requests 过的url的hash值,分布式去重时使用到, lhy:items是分布式生成的items,lhy:requests是新生成的 url封装后的requests。

理论上,lhy:dupefilter 等于已经request的数量,一直增加

lhy:items 是经过 spider prase生成的

lhy:requests 是有序集合ZSET,scrapy-redis 重新吧他封装成了一个队列,requests是spider 解析生成新url后重新封装,如果不载有新的url产生,则随着spider的prase,一直减少。总之取request时出队列,新的url会重新封装成request后增加进来,入队列。

scrapy-redis 原理及解释

scrapy-redis 重写了scrapy的多个类,具体请看http://blog.csdn.net/u013378306/article/details/53992707

并且在setting.py中 配置了这些类,所以当运行scrapy-redis例子时,自动使用了scrapy-redis 重写的类。

下载scrapy-redis源代码 https://github.com/rolando/scrapy-Redis

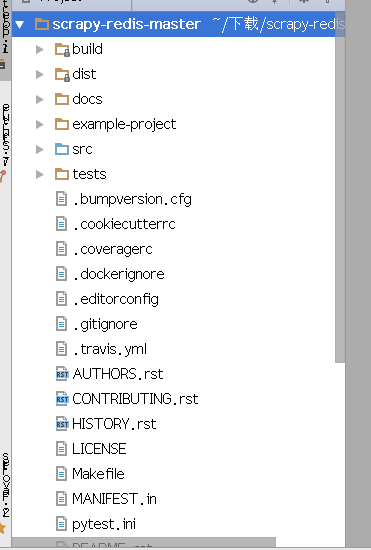

文档结构如下:

其中,src 中是scrapy-redis的源代码。

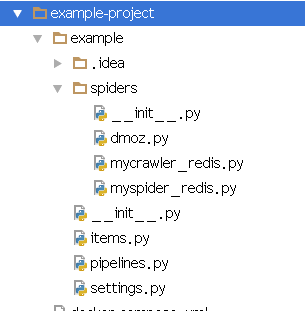

example-redis 是写好的例子,其中有三个例子

(1)domz.py (2) mycrawler_redis.py (3) myspider.py

文档中队者三个例子的解释如下

* **dmoz**

This spider simply scrapes dmoz.org.

* **myspider_redis**

This spider uses redis as a shared requests queue and uses

``myspider:start_urls`` as start URLs seed. For each URL, the spider outputs

one item.

* **mycrawler_redis**

This spider uses redis as a shared requests queue and uses

``mycrawler:start_urls`` as start URLs seed. For each URL, the spider follows

are links.domz.py :此例子仅仅是抓取一个网站下的数据,没有用分布式

from scrapy.linkextractors import LinkExtractor

from scrapy.spiders import CrawlSpider, Rule

class DmozSpider(CrawlSpider):

"""Follow categories and extract links."""

name = 'dmoz'

allowed_domains = ['dmoz.org']

start_urls = ['http://www.dmoz.org/']

rules = [

Rule(LinkExtractor(

restrict_css=('.top-cat', '.sub-cat', '.cat-item')

), callback='parse_directory', follow=True),

]

def parse_directory(self, response):

for div in response.css('.title-and-desc'):

yield {

'name': div.css('.site-title::text').extract_first(),

'description': div.css('.site-descr::text').extract_first().strip(),

'link': div.css('a::attr(href)').extract_first(),

}mycrawler_redis.py

此例子 使用 RedisCrawlSpider类,支持分布式去重爬取,并且 可以定义抓取连接的rules

from scrapy.spiders import Rule

from scrapy.linkextractors import LinkExtractor

from scrapy_redis.spiders import RedisCrawlSpider

class MyCrawler(RedisCrawlSpider):

"""Spider that reads urls from redis queue (myspider:start_urls)."""

name = 'mycrawler_redis'

redis_key = 'mycrawler:start_urls'

rules = (

# follow all links

Rule(LinkExtractor(), callback='parse_page', follow=True),

)

def __init__(self, *args, **kwargs):

# Dynamically define the allowed domains list.

domain = kwargs.pop('domain', '')

self.allowed_domains = filter(None, domain.split(','))

super(MyCrawler, self).__init__(*args, **kwargs)

def parse_page(self, response):

return {

'name': response.css('title::text').extract_first(),

'url': response.url,

}myspider.py 此例子支持分布式去重爬取,但不支持定义规则抓取url

from scrapy_redis.spiders import RedisSpider

class MySpider(RedisSpider):

"""Spider that reads urls from redis queue (myspider:start_urls)."""

name = 'myspider_redis'

redis_key = 'myspider:start_urls'

def __init__(self, *args, **kwargs):

# Dynamically define the allowed domains list.

domain = kwargs.pop('domain', '')

self.allowed_domains = filter(None, domain.split(','))

super(MySpider, self).__init__(*args, **kwargs)

def parse(self, response):

return {

'name': response.css('title::text').extract_first(),

'url': response.url,

}