Elasticsearch 7.6 分词器使用

1.创建索引

1.1使用ik分词器

适用于中文分词器,若是对邮箱/用户名等进行分词, 只能按着标点符号进行分割,颗粒度太大,不太适用,这种情况可以考虑下面的自定义分词器

{

"settings":{

"number_of_shards": 3,

"number_of_replicas": 1,

"analysis":{

"analyzer":{

"ik":{

"tokenizer":"ik_max_word"

}

}

}

},

"mappings":{

"properties":{

"id": {

"type": "keyword"

},

"shopcode":{

"type":"text",

"analyzer":"ik",

"search_analyzer":"ik",

"fields":{

"keyword":{

"type":"keyword",

"ignore_above":256

}

}

}

}

}

}

创建索引

**测试邮箱分词效果 **

1…2使用自定义分词器

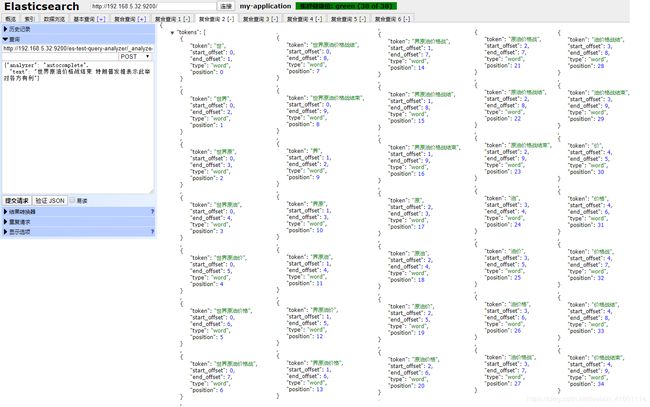

可以自定义分词的颗粒度,对邮箱/用户名密码等数据进行分词时,可以从单个字母开始分词,分词颗粒度可以自定义,弊端是创建出来的倒排索引会非常多,但是如果数据量够大的话,倒排索引的数量不会成线性增长,所以更适合大量数据的索引,千万级别或者亿级别的数据量

创建索引,自定义分词器

{

"settings":{

"number_of_shards": 5,

"number_of_replicas": 1,

"index.max_ngram_diff":100,

"index.max_result_window":1000000000,

"analysis":{

"analyzer":{

"autocomplete":{

"tokenizer":"autocomplete",

"filter":[

"lowercase"

]

},

"autocomplete_search":{

"tokenizer":"lowercase"

}

},

"tokenizer":{

"autocomplete":{

"type":"ngram",

"min_gram":1,

"max_gram":100,

"token_chars":[

"letter",

"digit",

"symbol"

]

}

}

}

},

"mappings":{

"properties":{

"shopcode":{

"type":"text",

"analyzer":"autocomplete",

"search_analyzer":"autocomplete_search",

"fields":{

"keyword":{

"type":"keyword",

"ignore_above":256

}

}

}

}

}

}

"index.max_result_window":1000000000属性用于设置查询页面返回的数组总数,默认为10000,没有此设置的话,分页查询时, 查询的数量超过10000就会报错

org.elasticsearch.ElasticsearchException: Elasticsearch exception [type=illegal_argument_exception, reason=Result window is too large, from + size must be less than or equal to: [10000] but was [60000].

See the scroll api for a more efficient way to request large data sets. This limit can be set by changing the [index.max_result_window] index level setting.]

index.max_ngram_diff参数表示最大允许的分词间隔

做设置的话,会提示如下报错信息

The difference between max_gram and min_gram in NGram Tokenizer must be less than

or equal to: [1] but was [3]. This limit can be set by changing the [index.max_ngram_diff]

index level setting

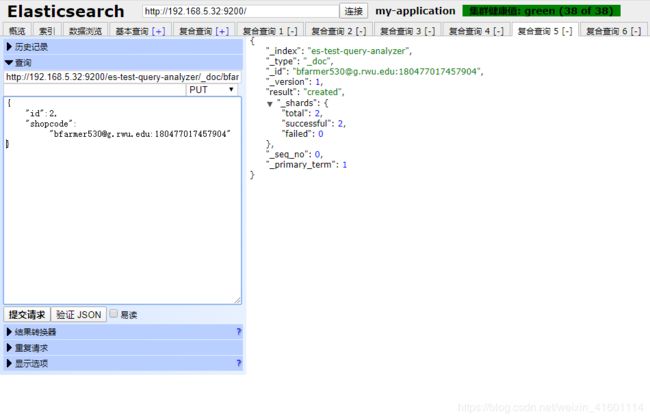

设置之后即可解决报错问题, 注意Elasticsearch7以后的版本, 在创建document时不需要指定type类型,默认为_doc

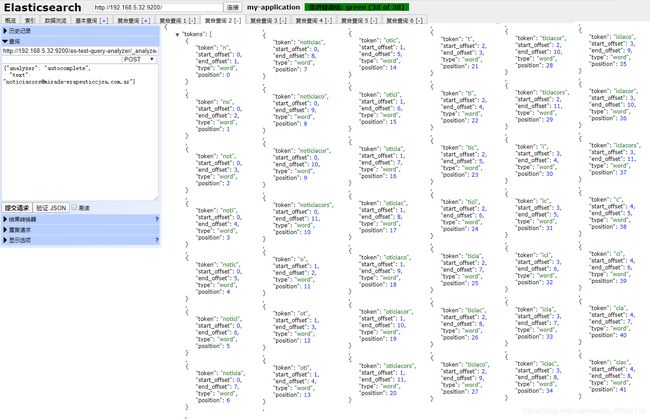

自定义的分词器是针对邮箱/英文用户名/特殊符号和数字组合的数据内容进行分词操作,从单个字符开始拆分,越来越多的进行分词创建索引

对于中文文本的分词是比较累赘的, 对于邮箱/用户名等英文数字符号等组成的文本进行分词,这里是比较适合的

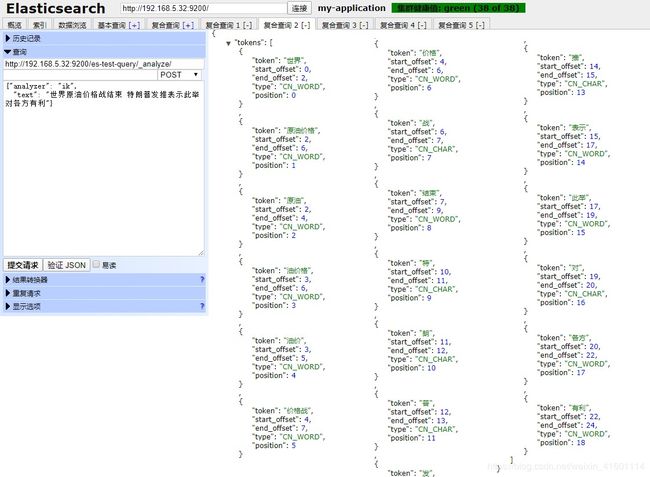

分词器对中文文本的分词效果

http://192.168.5.32:9200/es-test-query-analyzer/_doc/bfarmer530@g.rwu.edu:180477017457904/

{

"id":2,

"shopcode":"[email protected]:180477017457904"

}

搜索数据

{

"query": {

"match": {

"shopcode": "far"

}

}

}