Python Practice 5: Scraping "Fishtail Skirt" (鱼尾裙) Product Listings from Taobao

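Once you have learned the basics of web scraping in Python, you will probably want to try a site with a lot of data. Taobao is a good target: it has a large volume of data across many product categories, and it is not too hard to scrape, which makes it a good fit for beginners. The whole process is described below: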

Step 1: Build the request URL

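    # Build the request URL and query parameters
    goods = "鱼尾裙"            # search keyword ("fishtail skirt")
    page = 10                   # number of result pages to fetch
    infoList = []               # collects one row per product
    url = 'https://s.taobao.com/search'
    for i in range(page):
        s_num = str(44*i+1)     # value of the 's' (start offset) parameter; each page holds 44 items
        num = 44*i              # serial number of the last product before this page
        data = {'q': goods, 's': s_num}

Step 2: Fetch the page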
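Before sending any request, it can help to preview the URLs that the `q` and `s` parameters from Step 1 produce. The following is only a sketch: `build_search_urls`, `BASE_URL`, and `ITEMS_PER_PAGE` are names made up for this illustration, and it uses the standard library's `urlencode` to approximate the query string that `requests` builds from `params`.

```python
from urllib.parse import urlencode, parse_qs

BASE_URL = "https://s.taobao.com/search"
ITEMS_PER_PAGE = 44  # page size assumed throughout this article


def build_search_urls(keyword, pages):
    """Return one search URL per page, using the same offsets as the Step 1 loop."""
    urls = []
    for i in range(pages):
        params = {"q": keyword, "s": str(ITEMS_PER_PAGE * i + 1)}
        # urlencode percent-encodes the UTF-8 bytes of the non-ASCII keyword
        urls.append(BASE_URL + "?" + urlencode(params))
    return urls


urls = build_search_urls("鱼尾裙", 3)
for u in urls:
    print(u)
```

Each printed URL differs only in its `s` value, which grows by 44 per page.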

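    import requests

    def getHTMLText(url, data):
        """Fetch the search page and return its HTML, or "" if the request fails."""
        try:
            rsq = requests.get(url, params=data, timeout=30)
            rsq.raise_for_status()   # raise on 4xx/5xx status codes
            return rsq.text
        except requests.RequestException:
            print("Page not found")
            return ""

Step 3: Extract the data with regular expressions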
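The product data sits in the page source as JSON-like text, so each field can be pulled out with a pattern of the form `"name":"value"`. The sketch below tries that idea on a hand-written sample string. The sample is not real Taobao output, and the example.com URLs and the `extract_field` helper are made up for illustration. It captures the quoted value and decodes it with `json.loads`, which avoids calling `eval` on text that came from the network and also copes with values that contain a colon.

```python
import json
import re

# Hand-written sample imitating the embedded JSON; not real Taobao output.
sample_html = (
    '{"raw_title":"鱼尾裙 A:新款","pic_url":"//img.example.com/a.jpg",'
    '"detail_url":"//detail.example.com/item.htm?id\\u003d1",'
    '"view_price":"128.00","view_sales":"300人付款"}'
)


def extract_field(name, text):
    """Return every value of a quoted JSON field, decoded as a JSON string."""
    pattern = r'"%s":(".*?")' % re.escape(name)
    return [json.loads(raw) for raw in re.findall(pattern, text)]


prices = extract_field("view_price", sample_html)
titles = extract_field("raw_title", sample_html)
links = extract_field("detail_url", sample_html)
print(prices, links)
```

Note that `json.loads` also turns the `\u003d` escape in the sample link back into `=`.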

    import json
    import re

    def parasePage(ilt, html, goods_id):
        """Append one [id, price, sales, title, pic_url, detail_url] row per product."""
        # Each pattern captures the quoted JSON value of one field
        plt = re.findall(r'"view_price":("[\d.]*")', html)
        slt = re.findall(r'"view_sales":(".*?")', html)
        tlt = re.findall(r'"raw_title":(".*?")', html)
        ult = re.findall(r'"pic_url":(".*?")', html)
        dlt = re.findall(r'"detail_url":(".*?")', html)
        if not plt:
            print("No matching products found!")
        # zip stops at the shortest list, so mismatched lengths cannot raise IndexError
        for fields in zip(plt, slt, tlt, ult, dlt):
            try:
                # json.loads decodes the quoted values safely, unlike eval
                price, sales, title, pic, detail = (json.loads(v) for v in fields)
            except ValueError:
                continue   # skip a product whose value is not valid JSON
            goods_id += 1
            ilt.append([goods_id, price, sales, title, "https:" + pic, "https:" + detail])
        return ilt

Step 4: Save the data to a CSV file
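Two details are easy to miss when writing CSV files that contain Chinese text: opening the file with `newline=''` so that the `csv` module controls line endings, and choosing an explicit encoding. The sketch below uses made-up rows and a temporary file (`goods_demo.csv` is a hypothetical name) and reads the file back to check the round trip. The `utf-8-sig` encoding is one option that spreadsheet programs such as Excel tend to detect correctly.

```python
import csv
import os
import tempfile

# Hypothetical sample row in the same column order as the article's list.
rows = [
    [1, "128.00", "300人付款", "鱼尾裙 A", "https://img.example.com/a.jpg",
     "https://detail.example.com/item.htm?id=1"],
]
header = ["id", "price", "sales", "title", "pic_url", "detail_url"]

path = os.path.join(tempfile.mkdtemp(), "goods_demo.csv")

# newline='' avoids blank rows on Windows; utf-8-sig writes a BOM at the start.
with open(path, "w", newline="", encoding="utf-8-sig") as f:
    writer = csv.writer(f)
    writer.writerow(header)
    writer.writerows(rows)

# Read the file back; csv.reader returns every cell as a string.
with open(path, newline="", encoding="utf-8-sig") as f:
    read_back = list(csv.reader(f))
```

Because `csv.reader` returns strings, the numeric ID comes back as `"1"` and would need converting if it is used in arithmetic.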

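    import csv

    def saveGoodsList(ilt):
        """Write all collected rows to goods.csv."""
        # newline='' prevents blank rows on Windows; utf-8-sig helps Excel read the Chinese text
        with open('goods.csv', 'w', newline='', encoding='utf-8-sig') as f:
            writer = csv.writer(f)
            writer.writerow(["Serial No.", "Price", "Sales", "Title", "Image URL", "Detail URL"])
            writer.writerows(ilt)

Here is the complete code: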

import csv

import requests

import re

#获取网页信息

def getHTMLText(url,data):

try:

rsq = requests.get(url,params=data,timeout=30)

rsq.raise_for_status()

return rsq.text

except:

return "没找到页面"

#正则获取所需数据

def parasePage(ilt, html,goods_id):

try:

plt = re.findall(r'\"view_price\"\:\"[\d\.]*\"', html)

slt = re.findall(r'\"view_sales\"\:\".*?\"', html)

tlt = re.findall(r'\"raw_title\"\:\".*?\"', html)

ult = re.findall(r'\"pic_url\"\:\".*?\"', html)

dlt = re.findall(r'\"detail_url\"\:\".*?\"', html)

for i in range(len(plt)):

goods_id += 1

price = eval(plt[i].split(':')[1])

sales = eval(slt[i].split(':')[1])

title = eval(tlt[i].split(':')[1])

pic_url = "https:" + eval(ult[i].split(':')[1])

detail_url = "https:" + eval(dlt[i].split(':')[1])

ilt.append([goods_id,price,sales,title,pic_url,detail_url])

return ilt

except:

print("没找到您所需的商品!")

#数据保存到csv文件

def saveGoodsList(ilt):

with open('goods.csv','w') as f:

writer = csv.writer(f)

writer.writerow(["序列号", "价格", "成交量", "商品名称","商品图片网址","商品详情网址"])

for info in ilt:

writer.writerow(info)

#运行程序

if __name__ == '__main__':

#构建访问的url

goods = "鱼尾裙"

page = 10

infoList = []

url = 'https://s.taobao.com/search'

for i in range(page):

s_num = str(44*i+1)

num = 44*i

data = {'q':goods,'s':s_num}

try:

html = getHTMLText(url,data)

ilt = parasePage(infoList, html,num)

saveGoodsList(ilt)

except:

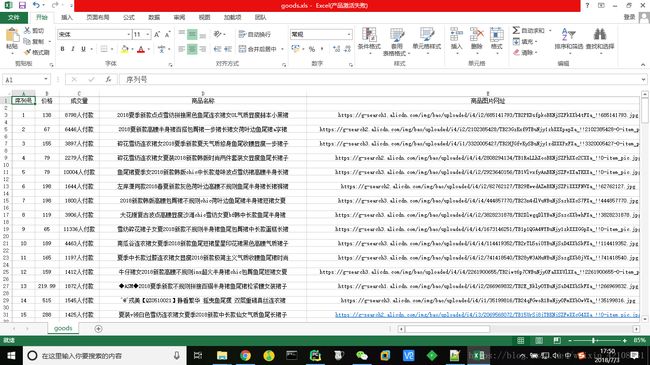

continue结果如下图: