Fetching MySQL Data with Canal

Background

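Early on, Alibaba's B2B company ran data centers in both Hangzhou and the United States, which created a business need for cross-data-center synchronization. Incremental changes were captured mainly with triggers. Starting in 2010, the company gradually moved to parsing database logs to capture incremental changes for synchronization. This gave rise to incremental subscription and consumption services and opened a new chapter. (GitHub project: alibaba/canal)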

The current canal supports these source MySQL versions: 5.1.x, 5.5.x, 5.6.x, 5.7.x and 8.0.x.

Services built on log-based incremental subscription and consumption include:

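- Database mirroring

- Real-time database backup

- Index building and real-time maintenance (split heterogeneous indexes, inverted indexes, etc.)

- Business cache refresh

- Incremental data processing with business logic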

Project overview

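Name: canal [kə'næl]

Meaning: waterway / pipe / channel

Positioning: incremental data subscription and consumption based on parsing the database's incremental logs

Keywords: MySQL binlog parser / real-time / queue&topic / index build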

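How it works

How MySQL master-slave replication works

- The MySQL master writes data changes to its binary log (binlog). The records in it are called binary log events and can be viewed with show binlog events.

- The MySQL slave copies the master's binary log events into its relay log.

- The MySQL slave replays the events in the relay log, applying the data changes to its own data.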

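How canal works

- canal emulates the MySQL slave interaction protocol: it presents itself as a MySQL slave and sends a dump request to the MySQL master.

- The MySQL master receives the dump request and starts pushing the binary log to the slave (that is, canal).

- canal parses the binary log objects (which arrive as a raw byte stream).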
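To make the dump request above more concrete, here is a minimal sketch of the COM_BINLOG_DUMP packet a replica (or canal, posing as one) sends to the master. It follows the MySQL client/server protocol layout: a 4-byte header (3-byte little-endian payload length plus a 1-byte sequence id), then the command byte 0x12, a 4-byte binlog position, 2-byte flags, a 4-byte server id and the binlog file name. The class and method names are hypothetical. This is not canal's own code, and it leaves out the handshake and authentication that happen before the request is sent.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

/** Hypothetical sketch of a COM_BINLOG_DUMP request (not canal's implementation). */
public class BinlogDumpPacket {

    static final int COM_BINLOG_DUMP = 0x12;

    /** Writes the lowest {@code bytes} bytes of {@code value} in little-endian order. */
    static void writeLE(ByteArrayOutputStream out, long value, int bytes) {
        for (int i = 0; i < bytes; i++) {
            out.write((int) (value >>> (8 * i)) & 0xFF);
        }
    }

    /** Header (3-byte payload length + 1-byte sequence id) followed by the payload. */
    static byte[] build(long binlogPos, long serverId, String binlogFile) {
        ByteArrayOutputStream payload = new ByteArrayOutputStream();
        payload.write(COM_BINLOG_DUMP);          // command byte
        writeLE(payload, binlogPos, 4);          // position to start streaming from
        writeLE(payload, 0, 2);                  // flags: 0 = block and wait for new events
        writeLE(payload, serverId, 4);           // the "slave" id; must not clash with other servers
        byte[] name = binlogFile.getBytes(StandardCharsets.US_ASCII);
        payload.write(name, 0, name.length);     // file name runs to the end of the packet

        byte[] body = payload.toByteArray();
        ByteArrayOutputStream packet = new ByteArrayOutputStream();
        writeLE(packet, body.length, 3);         // payload length
        packet.write(0);                         // sequence id: a new command starts at 0
        packet.write(body, 0, body.length);
        return packet.toByteArray();
    }

    public static void main(String[] args) {
        // Position 4 is the first event in a binlog file (right after the 4-byte magic header).
        byte[] p = build(4, 1234, "mysql-bin.000001");
        System.out.println("packet length = " + p.length); // prints "packet length = 31"
    }
}
```

The server id sent here plays the role of canal.instance.mysql.slaveId. That is why the configuration below insists it must not collide with the MySQL server_id.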

ClientSample

Use the canal.example project directly.

Deploying canal

Preparation

For a self-hosted MySQL, first enable binlog writing and set binlog-format to ROW. Configure my.cnf as follows:

    [mysqld]
    log-bin=mysql-bin # enable binlog
    binlog-format=ROW # use ROW mode
    server_id=1 # required for MySQL replication; must not clash with canal's slaveId
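Before pointing canal at the server, you can confirm that these settings took effect by running SHOW VARIABLES LIKE 'log_bin', SHOW VARIABLES LIKE 'binlog_format' and SHOW VARIABLES LIKE 'server_id'. The sketch below checks such values after they have been read into a map. The class and method names, and the way the values are collected, are hypothetical. The query itself is left out so the example runs without a database.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Hypothetical pre-flight check of the binlog settings canal relies on (not part of canal). */
public class BinlogPreflight {

    /**
     * @param vars    variable name to value, e.g. collected from SHOW VARIABLES
     * @param slaveId the canal.instance.mysql.slaveId canal will use
     * @return human-readable problems; empty if the settings look usable
     */
    static List<String> check(Map<String, String> vars, long slaveId) {
        List<String> problems = new ArrayList<>();
        if (!"ON".equalsIgnoreCase(vars.get("log_bin"))) {
            problems.add("log_bin is not ON: add log-bin under [mysqld]");
        }
        if (!"ROW".equalsIgnoreCase(vars.get("binlog_format"))) {
            problems.add("binlog_format must be ROW, got " + vars.get("binlog_format"));
        }
        String serverId = vars.get("server_id");
        if (serverId == null || Long.parseLong(serverId.trim()) == 0) {
            problems.add("server_id is missing or 0");
        } else if (Long.parseLong(serverId.trim()) == slaveId) {
            problems.add("server_id clashes with canal's slaveId " + slaveId);
        }
        return problems;
    }

    public static void main(String[] args) {
        // Map.of needs Java 9+.
        Map<String, String> vars = Map.of("log_bin", "ON", "binlog_format", "ROW", "server_id", "1");
        System.out.println(check(vars, 1234)); // prints []
    }
}
```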

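Note: Alibaba Cloud RDS for MySQL has binlog enabled by default, and its accounts have the binlog dump privilege by default. No privilege or binlog setup is needed there, so you can skip this step.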

Grant the MySQL account that canal connects with the privileges it needs to act as a MySQL slave. If you already have an account, just run the GRANT:

    CREATE USER canal IDENTIFIED BY 'canal';
    GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%';
    -- GRANT ALL PRIVILEGES ON *.* TO 'canal'@'%' ;
    FLUSH PRIVILEGES;

Startup

Download canal: open the release page and pick the package you need, for example version 1.0.17:

    wget https://github.com/alibaba/canal/releases/download/canal-1.0.17/canal.deployer-1.0.17.tar.gz

Extract:

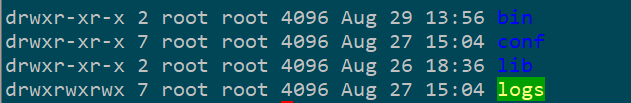

    mkdir /tmp/canal
    tar zxvf canal.deployer-1.0.17.tar.gz -C /tmp/canal

Edit the configuration

vi conf/example/instance.properties

A few of the most important and commonly used parameters:

    #################################################
    ## mysql serverId , v1.0.26+ will autoGen
    # canal.instance.mysql.slaveId=0
    # canal poses as a slave: give it an id that does not duplicate any existing slave (or the master)
    canal.instance.mysql.slaveId=1234
    # address of the MySQL instance to follow
    canal.instance.master.address=192.168.1.1:3306
    # run "show master status;" in mysql to get the File name and Position
    canal.instance.master.journal.name=mysql-bin.xxxx
    canal.instance.master.position=xxxx
    canal.instance.master.timestamp=
    # username/password
    canal.instance.dbUsername=userName
    canal.instance.dbPassword=password
    canal.instance.connectionCharset = UTF-8
    # enable druid Decrypt database password
    canal.instance.enableDruid=false
    canal.instance.parser.parallel=true
    # table regex
    # only these schemas/tables are monitored
    canal.instance.filter.regex=.*\\..*
    # table black regex
    # blacklist: if set, these schemas/tables are not scanned
    canal.instance.filter.black.regex=

- canal.instance.connectionCharset is the database encoding, given as the corresponding Java charset name, e.g. UTF-8, GBK, ISO-8859-1.

- If the machine has only one CPU, set canal.instance.parser.parallel to false.
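Both notes can be checked mechanically. The sketch below reads instance.properties with java.util.Properties and checks connectionCharset with Charset.isSupported. It also uses the CPU count (in main, Runtime.availableProcessors()) to decide whether canal.instance.parser.parallel should be false. The class, method names and warning texts are hypothetical. canal does not ship such a check, and treating a missing parallel value as true is an assumption of this sketch.

```java
import java.io.IOException;
import java.io.StringReader;
import java.nio.charset.Charset;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

/** Hypothetical sanity check for conf/example/instance.properties (not part of canal). */
public class InstanceConfigCheck {

    /** True if Java knows a charset by this name (UTF-8, GBK, ISO-8859-1, ...). */
    static boolean isKnownCharset(String name) {
        try {
            return Charset.isSupported(name);
        } catch (IllegalArgumentException e) { // illegal or null charset name
            return false;
        }
    }

    static List<String> check(Properties props, int cpus) {
        List<String> warnings = new ArrayList<>();
        String charset = props.getProperty("canal.instance.connectionCharset", "").trim();
        if (!isKnownCharset(charset)) {
            warnings.add("unknown connectionCharset: '" + charset + "'");
        }
        // A missing value is treated as true here (an assumption of this sketch).
        boolean parallel = Boolean.parseBoolean(
                props.getProperty("canal.instance.parser.parallel", "true").trim());
        if (cpus == 1 && parallel) {
            warnings.add("1 CPU: set canal.instance.parser.parallel=false");
        }
        return warnings;
    }

    public static void main(String[] args) throws IOException {
        Properties props = new Properties();
        // In practice you would load conf/example/instance.properties with a FileReader.
        props.load(new StringReader(
                "canal.instance.connectionCharset = UTF-8\n"
                + "canal.instance.parser.parallel=true\n"));
        System.out.println(check(props, Runtime.getRuntime().availableProcessors()));
    }
}
```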

Start

sh bin/startup.sh

View the log

tail -f logs/canal/canal.log

Stop

sh bin/stop.sh

Creating a project from scratch

- Add the dependency

    <dependency>
        <groupId>com.alibaba.otter</groupId>
        <artifactId>canal.client</artifactId>
        <version>1.1.0</version>
    </dependency>

- ClientSample code

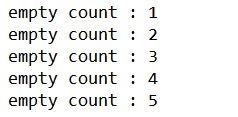

    import com.alibaba.otter.canal.client.CanalConnector;
    import com.alibaba.otter.canal.client.CanalConnectors;
    import com.alibaba.otter.canal.protocol.CanalEntry.*;
    import com.alibaba.otter.canal.protocol.Message;

    import java.net.InetSocketAddress;
    import java.util.List;

    /**
     * @author GPF
     * @FileName Demo
     * @create 2019/7/27 14:57
     * @since 1.0.0
     */
    public class Demo {

        public static void main(String[] args) {
            // Create the connection: canal server at localhost:11111, destination "example"
            CanalConnector connector = CanalConnectors.newSingleConnector(
                    new InetSocketAddress("localhost", 11111), "example", "canal", "canal");
            int batchSize = 20;
            int emptyCount = 0;
            try {
                connector.connect();
                connector.subscribe(".*\\..*");
                connector.rollback();
                int totalEmptyCount = 300;
                while (emptyCount < totalEmptyCount) {
                    Message message = connector.getWithoutAck(batchSize); // fetch up to batchSize entries
                    long batchId = message.getId();
                    int size = message.getEntries().size();
                    if (batchId == -1 || size == 0) {
                        emptyCount++;
                        System.out.println("empty count : " + emptyCount);
                        try {
                            Thread.sleep(1000);
                        } catch (InterruptedException e) {
                            Thread.currentThread().interrupt(); // keep the interrupt flag
                            return;                             // finally still disconnects
                        }
                    } else {
                        emptyCount = 0;
                        // System.out.printf("message[batchId=%s,size=%s] \n", batchId, size);
                        printEntry(message.getEntries());
                    }
                    connector.ack(batchId); // confirm the batch
                    // connector.rollback(batchId); // on processing failure, roll the batch back
                }
                System.out.println("empty too many times, exit");
            } finally {
                connector.disconnect();
            }
        }

        private static void printEntry(List<Entry> entries) {
            for (Entry entry : entries) {
                if (entry.getEntryType() == EntryType.TRANSACTIONBEGIN
                        || entry.getEntryType() == EntryType.TRANSACTIONEND) {
                    continue;
                }
                RowChange rowChange = null;
                try {
                    rowChange = RowChange.parseFrom(entry.getStoreValue());
                } catch (Exception e) {
                    throw new RuntimeException("ERROR ## parser of eromanga-event has an error , data:" + entry.toString(), e);
                }
                EventType eventType = rowChange.getEventType();
                System.out.println(String.format("================> binlog[%s:%s] , name[%s,%s] , eventType : %s",
                        entry.getHeader().getLogfileName(), entry.getHeader().getLogfileOffset(),
                        entry.getHeader().getSchemaName(), entry.getHeader().getTableName(),
                        eventType));
                for (RowData rowData : rowChange.getRowDatasList()) {
                    if (eventType == EventType.DELETE) {
                        printColumn(rowData.getBeforeColumnsList());   // DELETE: old row image only
                    } else if (eventType == EventType.INSERT) {
                        printColumn(rowData.getAfterColumnsList());    // INSERT: new row image only
                    } else {
                        // UPDATE (and any other row event): print both images
                        System.out.println("-------> before");
                        printColumn(rowData.getBeforeColumnsList());
                        System.out.println("-------> after");
                        printColumn(rowData.getAfterColumnsList());
                    }
                }
            }
        }

        private static void printColumn(List<Column> columns) {
            for (Column column : columns) {
                System.out.println(column.getName() + "[" + column.getMysqlType() + "]"
                        + " : " + column.getValue() + " update=" + column.getUpdated());
            }
        }
    }

Run the client

Alibaba Cloud RDS

Alibaba Cloud RDS needs special handling: set the table filter explicitly, for example:

canal.instance.filter.regex=userdb.tshop,shj_cate_db.tdishes,shj_cate_db.tdishestype,shj_cate_db.tattachindishes

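Normally canal runs fine without this parameter, but it fails with an error when the MySQL server is Alibaba Cloud RDS. At startup, the relevant canal source code does the following: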

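1. show databases lists every schema in MySQL.

2. show tables from `" + schema + "`, inside a for loop, lists all tables of each schema.

3. show create table `" + schema + "`.`" + table + "`; fetches each table's CREATE statement (canal works on the principle of MySQL master-slave replication).

4. The CREATE statements obtained in step 3 are then applied to canal's local table metadata (memoryTableMeta.apply in the source below), recreating these tables locally.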

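The problem is that, without canal.instance.filter.regex, step 2 returns every table on the MySQL master, including views. On RDS, running show create table against a view fails with a permission error. Adjusting the user's privileges does not help either, because RDS does not grant that privilege at all.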

With canal.instance.filter.regex set, the for loop in step 2 filters out tables not listed in the parameter, so only the tables the business cares about are processed.
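The filtering in step 2 comes down to matching each schema.table name against the comma-separated patterns in canal.instance.filter.regex, and skipping anything matched by canal.instance.filter.black.regex. The sketch below reproduces that idea with java.util.regex. It is a simplified, hypothetical stand-in rather than canal's own filter class, and details such as case sensitivity may differ in canal. Note that an unescaped "." in a pattern matches any character, so "userdb.tshop" would also match a name like "userdbXtshop".

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Simplified, hypothetical stand-in for canal's white/black table filters. */
public class TableFilterSketch {

    private final List<Pattern> patterns = new ArrayList<>();

    /** @param regexList comma-separated regexes, e.g. "userdb.tshop,shj_cate_db.tdishes" */
    TableFilterSketch(String regexList) {
        for (String p : regexList.split(",")) {
            if (!p.trim().isEmpty()) {
                patterns.add(Pattern.compile(p.trim()));
            }
        }
    }

    boolean isEmpty() {
        return patterns.isEmpty();
    }

    /** True if the whole "schema.table" name matches at least one pattern. */
    boolean matches(String fullName) {
        for (Pattern p : patterns) {
            if (p.matcher(fullName).matches()) {
                return true;
            }
        }
        return false;
    }

    /**
     * Same shape as the check in dumpTableMeta: drop blacklisted tables, then keep
     * only whitelisted ones. An empty whitelist keeps everything in this sketch.
     */
    static boolean keep(String fullName, TableFilterSketch white, TableFilterSketch black) {
        if (black.matches(fullName)) {
            return false;
        }
        return white.isEmpty() || white.matches(fullName);
    }

    public static void main(String[] args) {
        TableFilterSketch white = new TableFilterSketch(
                "userdb.tshop,shj_cate_db.tdishes,shj_cate_db.tdishestype,shj_cate_db.tattachindishes");
        TableFilterSketch black = new TableFilterSketch(""); // empty blacklist
        for (String t : new String[] {"userdb.tshop", "userdb.v_report", "shj_cate_db.tdishes"}) {
            // userdb.v_report stands in for a hypothetical view: it is skipped, so no
            // "show create table" would be issued for it.
            System.out.println(t + " -> " + keep(t, white, black));
        }
    }
}
```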

The source code:

    /**
     * Dump the table structures once during initialization
     */
    private boolean dumpTableMeta(MysqlConnection connection, final CanalEventFilter filter) {
        try {
            ResultSetPacket packet = connection.query("show databases"); // 1
            List<String> schemas = new ArrayList<String>();
            for (String schema : packet.getFieldValues()) {
                schemas.add(schema);
            }
            for (String schema : schemas) {
                packet = connection.query("show tables from `" + schema + "`"); // 2
                List<String> tables = new ArrayList<String>();
                for (String table : packet.getFieldValues()) {
                    String fullName = schema + "." + table;
                    if (blackFilter == null || !blackFilter.filter(fullName)) {
                        if (filter == null || filter.filter(fullName)) {
                            tables.add(table);
                        }
                    }
                }
                if (tables.isEmpty()) {
                    continue;
                }
                StringBuilder sql = new StringBuilder();
                for (String table : tables) {
                    sql.append("show create table `" + schema + "`.`" + table + "`;"); // 3
                }
                List<ResultSetPacket> packets = connection.queryMulti(sql.toString());
                for (ResultSetPacket onePacket : packets) {
                    if (onePacket.getFieldValues().size() > 1) {
                        String oneTableCreateSql = onePacket.getFieldValues().get(1);
                        memoryTableMeta.apply(INIT_POSITION, schema, oneTableCreateSql, null); // 4
                    }
                }
            }
            return true;
        } catch (IOException e) {
            throw new CanalParseException(e);
        }
    }

Once everything is configured, run startup.sh in the bin directory and canal is up and running.