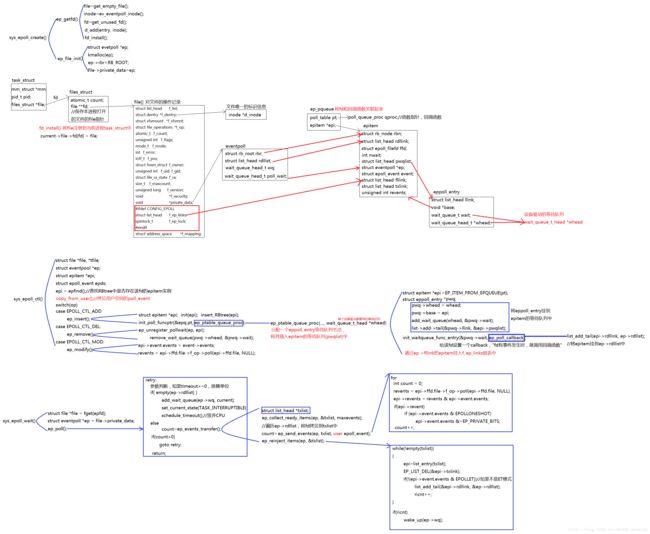

epoll Source Code Analysis

The epoll implementation is built mainly on a small internal file system, eventpoll.

Using epoll involves three key functions: epoll_create(), epoll_ctl(), and epoll_wait().
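Before reading the kernel side, it may help to see the three calls from user space. The following is a minimal sketch, assuming a Linux system; the helper name `demo_pipe_ready` and the use of a pipe as the monitored fd are illustrative choices, not part of the original article.

```c
#include <sys/epoll.h>
#include <unistd.h>

/* Hypothetical helper: registers a pipe's read end, writes one byte to the
 * write end, and returns how many ready events epoll_wait() reports.
 * Returns -1 if any step fails. */
int demo_pipe_ready(void)
{
    int pipefd[2];
    if (pipe(pipefd) < 0)
        return -1;

    int epfd = epoll_create(1);              /* size only has to be > 0 */
    if (epfd < 0) {
        close(pipefd[0]);
        close(pipefd[1]);
        return -1;
    }

    struct epoll_event ev = { .events = EPOLLIN, .data.fd = pipefd[0] };
    struct epoll_event out[4];
    int n = -1;

    if (epoll_ctl(epfd, EPOLL_CTL_ADD, pipefd[0], &ev) == 0 &&
        write(pipefd[1], "x", 1) == 1) {
        n = epoll_wait(epfd, out, 4, 1000);  /* wait at most one second */
        if (n == 1 && out[0].data.fd != pipefd[0])
            n = -1;                          /* unexpected fd reported */
    }

    close(epfd);
    close(pipefd[0]);
    close(pipefd[1]);
    return n;
}
```

Calling `demo_pipe_ready()` exercises the whole path discussed in this article: creation of the eventpoll object, insertion of one item, and delivery of one ready event.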

epoll relies on four key data structures: struct eventpoll, struct epitem, struct epoll_event, and struct eppoll_entry.

struct eventpoll

{

rwlock_t lock;

struct rw_semaphore sem;

wait_queue_head_t wq;

wait_queue_head_t poll_wait;

// Ready list: links the rdllink member of every epitem whose fd has ready events.

struct list_head rdllist;

// Root of the red-black tree that holds the epitems of all fds monitored by this epoll instance.

struct rb_root rbr;

};

struct epitem

{

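// Red-black tree node that links this epitem into eventpoll->rbr (the root lives in struct eventpoll), so the item can be looked up and removed quickly.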

struct rb_node rbn;

// Node for the ready list. When the fd behind this epitem has ready I/O events, ep_poll_callback links this node into the circular list eventpoll->rdllist, which chains all ready epitems together.

struct list_head rdllink;

// Binds the fd to its struct file.

struct epoll_filefd ffd;

int nwait;

// List of all poll wait queue entries that belong to this epitem; on insert, each eppoll_entry->llink is linked here.

struct list_head pwqlist;

// Back pointer to the owning eventpoll. Every epitem has one, so the eventpoll can always be reached from an epitem.

struct eventpoll *ep;

// Copy of the epoll_event passed in from user space.

struct epoll_event event;

atomic_t usecnt;

// Node used to link this epitem into the target file's list of epoll items (file->f_ep_links).

struct list_head fllink;

// Node used to link this epitem into the transfer list (txlist).

struct list_head txlink;

// Events actually reported; used to decide whether the item is re-inserted into rdllist (ET vs. LT).

unsigned int revents;

};
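The relationship between `eventpoll.rdllist` and `epitem.rdllink` is an intrusive list: the list node is embedded in the item, and the item is recovered from the node by pointer arithmetic. The sketch below models this in user space. The `mini_` types are hypothetical, heavily reduced stand-ins, and the list helpers are simplified re-implementations written for this example rather than the kernel's own headers.

```c
#include <stddef.h>

struct list_head { struct list_head *next, *prev; };

static void init_list_head(struct list_head *h) { h->next = h; h->prev = h; }

/* Append node just before head, i.e. at the tail of the circular list. */
static void list_add_tail(struct list_head *node, struct list_head *head)
{
    node->prev = head->prev;
    node->next = head;
    head->prev->next = node;
    head->prev = node;
}

/* Recover the enclosing structure from a pointer to its embedded member. */
#define list_entry(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

struct mini_epitem {
    int fd;
    struct list_head rdllink;   /* node in mini_eventpoll.rdllist */
};

struct mini_eventpoll {
    struct list_head rdllist;   /* head of the ready list */
};

/* Walk the ready list and copy the fds out in order; returns the count. */
int collect_ready_fds(struct mini_eventpoll *ep, int *out, int max)
{
    int n = 0;
    for (struct list_head *p = ep->rdllist.next;
         p != &ep->rdllist && n < max; p = p->next)
        out[n++] = list_entry(p, struct mini_epitem, rdllink)->fd;
    return n;
}

/* Queue two items and read them back; returns the count, or -1 on mismatch. */
int demo_ready_list(void)
{
    struct mini_eventpoll ep;
    struct mini_epitem a = { .fd = 5 }, b = { .fd = 9 };
    int fds[4];

    init_list_head(&ep.rdllist);
    list_add_tail(&a.rdllink, &ep.rdllist);
    list_add_tail(&b.rdllink, &ep.rdllist);

    int n = collect_ready_fds(&ep, fds, 4);
    return (n == 2 && fds[0] == 5 && fds[1] == 9) ? n : -1;
}
```

Because the node lives inside the item, linking an item into the ready list needs no allocation, which matters for a callback that runs from a driver's wake-up path.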

struct epoll_event

{

__u32 events; // events of interest, e.g. EPOLLIN / EPOLLOUT / EPOLLONESHOT

__u64 data; // user data, typically the fd

};

// Wait queue node

struct eppoll_entry

{

/* List header used to link this structure to the "struct epitem" */

struct list_head llink;

// Points to the owning epitem.

void *base;

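// Wait queue entry. On insert it is added to the device driver's wait queue with ep_poll_callback as its wake-up function. When the device state changes because an event is ready, the driver invokes the callback, which links epi->rdllink into ep->rdllist.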

wait_queue_t wait;

/* The wait queue head that linked the "wait" wait queue item */

wait_queue_head_t *whead;

};

1. epoll_create

This function creates a set of data structures and links them together, in preparation for epoll_ctl.

It allocates an fd, an inode, and a file structure, initializes them, and then links the file to the inode (file->dentry->inode) and the task_struct to the file (files->fd[fd] = file).

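It then allocates the eventpoll object, initializes the two wait queues wq and poll_wait, the ready list rdllist, and the red-black tree root rbr, and links the file to the eventpoll (file->private_data = ep).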

In epoll_create, size only has to be greater than 0 (the code below rejects size <= 0); beyond that check the value is not used.
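The size check can be observed from user space. This is a small sketch, assuming a Linux system where epoll_create() still rejects non-positive sizes with EINVAL, as the epoll_create(2) manual page documents; the helper name `create_errno` is made up for this example.

```c
#include <errno.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Returns 0 if epoll_create(size) succeeds, otherwise the errno it set. */
int create_errno(int size)
{
    int epfd = epoll_create(size);
    if (epfd < 0)
        return errno;
    close(epfd);
    return 0;
}
```

With this helper, `create_errno(0)` and `create_errno(-1)` are expected to yield EINVAL, while any positive size succeeds regardless of its magnitude.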

asmlinkage long sys_epoll_create(int size)

{

int error, fd;

struct inode *inode;

struct file *file;

if (size <= 0)

goto eexit_1;

// Obtain the fd, inode, and file structures, initialize them, and link them together.

error= ep_getfd(&fd, &inode, &file);

// Allocate and initialize the eventpoll object, then set file->private_data = ep.

error= ep_file_init(file);

return fd;

}

Below is the implementation of ep_getfd(). I have commented the important parts; interested readers can work through it on their own.

This function allocates the fd, inode, and file structures, initializes them, and links the file to the inode and the task_struct to the file.

static int ep_getfd(int *efd, struct inode **einode, struct file **efile)

{

struct qstr this;

char name[32];

struct dentry *dentry;

struct inode *inode;

struct file *file;

int error, fd;

/* Get an ready to use file */

error = -ENFILE;

// Allocate the file structure.

file = get_empty_filp();

if (!file)

goto eexit_1;

/* Allocates an inode from the eventpoll file system */

// Allocate the inode structure.

inode = ep_eventpoll_inode();

error = PTR_ERR(inode);

if (IS_ERR(inode))

goto eexit_2;

/* Allocates a free descriptor to plug the file onto */

// Obtain an unused file descriptor.

error = get_unused_fd();

if (error < 0)

goto eexit_3;

fd = error;

/*

* Link the inode to a directory entry by creating a unique name

* using the inode number.

*/

error = -ENOMEM;

sprintf(name, "[%lu]", inode->i_ino);

this.name = name;

this.len = strlen(name);

this.hash = inode->i_ino;

// A file can only be connected to its inode through a dentry; here the dentry serves as little more than that bridge.

dentry = d_alloc(eventpoll_mnt->mnt_sb->s_root, &this);

if (!dentry)

goto eexit_4;

dentry->d_op = &eventpollfs_dentry_operations;

//dentry->d_inode=inode

d_add(dentry, inode);

// Initialize the file structure.

file->f_vfsmnt = mntget(eventpoll_mnt);

file->f_dentry = dentry;

file->f_mapping = inode->i_mapping;

file->f_pos = 0;

file->f_flags = O_RDONLY;

file->f_op = &eventpoll_fops;

file->f_mode = FMODE_READ;

file->f_version = 0;

file->private_data = NULL;

/* Install the new setup file into the allocated fd. */

// Link the task_struct to the file: current->files->fd[fd] = file

fd_install(fd, file);

*efd = fd;

*einode = inode;

*efile = file;

return 0;

eexit_4:

put_unused_fd(fd);

eexit_3:

iput(inode);

eexit_2:

put_filp(file);

eexit_1:

return error;

}

// ep_file_init() allocates the eventpoll object, initializes the wait queues wq and poll_wait, the ready list rdllist, and the red-black tree root rbr, and links the file to the eventpoll (file->private_data = ep).

static int ep_file_init(struct file *file)

{

struct eventpoll *ep;

// Allocate the eventpoll object with kmalloc.

if (!(ep = kmalloc(sizeof(struct eventpoll), GFP_KERNEL)))

return -ENOMEM;

// Initialize the eventpoll.

memset(ep, 0, sizeof(*ep));

rwlock_init(&ep->lock);

init_rwsem(&ep->sem);

init_waitqueue_head(&ep->wq);

init_waitqueue_head(&ep->poll_wait);

INIT_LIST_HEAD(&ep->rdllist);

ep->rbr = RB_ROOT;

// Link the file to the eventpoll.

file->private_data = ep;

DNPRINTK(3, (KERN_INFO "[%p] eventpoll: ep_file_init() ep=%p\n",

current, ep));

return 0;

}

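2. epoll_ctl(int epfd, int op, int fd, struct epoll_event *event)

This function supports three operations: EPOLL_CTL_ADD (register events for an fd), EPOLL_CTL_DEL (remove the events registered for an fd), and EPOLL_CTL_MOD (change the events registered for an fd).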

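<1> Copy the epoll_event from user space into kernel space. The copy happens once, in epoll_ctl, instead of on every wait.

<2> Use epfd to find the eventpoll to operate on.

<3> Look up the fd in eventpoll->rbr.

<4> Depending on op, perform an insert, remove, or modify.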
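The lookup in step <3> determines which error code the caller sees. The sketch below probes the error paths that sys_epoll_ctl() takes (ENOENT, EEXIST, EINVAL) from user space. It assumes a Linux system; the helper names `ctl_errno` and `demo_ctl_errors` are hypothetical.

```c
#include <errno.h>
#include <stdint.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Returns 0 on success, otherwise the errno set by epoll_ctl(). */
int ctl_errno(int epfd, int op, int fd, uint32_t events)
{
    struct epoll_event ev = { .events = events, .data.fd = fd };
    return epoll_ctl(epfd, op, fd, &ev) == 0 ? 0 : errno;
}

/* Returns 0 if every operation produced the expected result,
 * 1 if any differed, -1 if the setup itself failed. */
int demo_ctl_errors(void)
{
    int p[2];
    if (pipe(p) < 0)
        return -1;
    int epfd = epoll_create(1);
    if (epfd < 0) {
        close(p[0]);
        close(p[1]);
        return -1;
    }

    int ok =
        /* not registered yet, so the tree lookup finds nothing */
        ctl_errno(epfd, EPOLL_CTL_DEL, p[0], 0) == ENOENT &&
        ctl_errno(epfd, EPOLL_CTL_ADD, p[0], EPOLLIN) == 0 &&
        /* second ADD finds the existing item */
        ctl_errno(epfd, EPOLL_CTL_ADD, p[0], EPOLLIN) == EEXIST &&
        ctl_errno(epfd, EPOLL_CTL_MOD, p[0], EPOLLIN | EPOLLET) == 0 &&
        ctl_errno(epfd, EPOLL_CTL_DEL, p[0], 0) == 0 &&
        /* an epoll fd may not be added to itself */
        ctl_errno(epfd, EPOLL_CTL_ADD, epfd, EPOLLIN) == EINVAL;

    close(epfd);
    close(p[0]);
    close(p[1]);
    return ok ? 0 : 1;
}
```

Each expected value corresponds to one branch of the switch statement in sys_epoll_ctl() or to the `file == tfile` check that precedes it.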

asmlinkage long sys_epoll_ctl(int epfd, int op, int fd, struct epoll_event __user *event)

{

int error;

struct file *file, *tfile;

struct eventpoll *ep;

struct epitem *epi;

struct epoll_event epds;

DNPRINTK(3, (KERN_INFO "[%p] eventpoll: sys_epoll_ctl(%d, %d, %d, %p)\n",current, epfd, op, fd, event));

error = -EFAULT;

// Copy the epoll_event from user space into kernel space.

if (EP_OP_HASH_EVENT(op) &&

copy_from_user(&epds, event, sizeof(struct epoll_event)))

goto eexit_1;

/* Get the "struct file *" for the eventpoll file */

error = -EBADF;

// Get the file structure for epfd.

file = fget(epfd);

if (!file)

goto eexit_1;

/* Get the "struct file *" for the target file */

// Get the file structure for the target fd.

tfile = fget(fd);

if (!tfile)

goto eexit_2;

/* The target file descriptor must support poll */

error = -EPERM;

if (!tfile->f_op || !tfile->f_op->poll)

goto eexit_3;

/*

* We have to check that the file structure underneath the file descriptor

* the user passed to us _is_ an eventpoll file. And also we do not permit

* adding an epoll file descriptor inside itself.

*/

error = -EINVAL;

if (file == tfile || !IS_FILE_EPOLL(file))

goto eexit_3;

/*

* At this point it is safe to assume that the "private_data" contains

* our own data structure.

*/

// Get the eventpoll structure behind epfd.

ep = file->private_data;

down_write(&ep->sem);

/* Try to lookup the file inside our hash table */

// Look up the fd in eventpoll->rbr.

epi = ep_find(ep, tfile, fd);

error = -EINVAL;

switch (op)

{

case EPOLL_CTL_ADD:

if (!epi)

{

epds.events |= POLLERR | POLLHUP;

// Register the epoll_event for this fd.

error = ep_insert(ep, &epds, tfile, fd);

}

else

error = -EEXIST;

break;

case EPOLL_CTL_DEL:

if (epi)

// Remove the events registered for this fd.

error = ep_remove(ep, epi);

else

error = -ENOENT;

break;

case EPOLL_CTL_MOD:

if (epi)

{

epds.events |= POLLERR | POLLHUP;

// Modify the events registered for this fd.

error = ep_modify(ep, epi, &epds);

}

else

error = -ENOENT;

break;

}

/*

* The function ep_find() increments the usage count of the structure

* so, if this is not NULL, we need to release it.

*/

if (epi)

ep_release_epitem(epi);

up_write(&ep->sem);

eexit_3:

fput(tfile);

eexit_2:

fput(file);

eexit_1:

DNPRINTK(3, (KERN_INFO "[%p] eventpoll: sys_epoll_ctl(%d, %d, %d, %p) = %d\n",

current, epfd, op, fd, event, error));

return error;

}

ep_insert()

This function introduces the ep_pqueue structure, whose main purpose is to bind a callback to the epitem.

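<1> First an epitem is allocated and its list heads are initialized. epitem->ep is pointed at the eventpoll, and the event passed in by the user is copied into epitem->event. EP_SET_FFD then associates the target file with the epitem, so the epitem, the eventpoll, and the file are all linked.

<2> Next, a callback is registered for the epitem through the poll table. When the target file's poll() invokes that callback (ep_ptable_queue_proc), it allocates an eppoll_entry wait queue node, initializes it, links it into the epitem, and installs ep_poll_callback as the fd's wake-up function. The device driver calls ep_poll_callback when an event occurs on the fd.

<3> Finally, the epitem is linked into the target file's list (file->f_ep_links) and inserted into the eventpoll's red-black tree. If a requested event is already pending at insert time, the epitem is added to rdllist and the waiters on the epoll wait queue are woken up.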
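The "already pending at insert time" case is easy to observe from user space. The following is a minimal sketch, assuming a Linux system; `ready_at_insert` is a hypothetical helper name. Data is written to a pipe before the read end is registered, and a non-blocking epoll_wait() still reports it, because the insert path checks the current event bits returned by poll().

```c
#include <sys/epoll.h>
#include <unistd.h>

/* Returns the number of events reported by a zero-timeout epoll_wait()
 * for an fd that was already readable when it was added; -1 on failure. */
int ready_at_insert(void)
{
    int p[2];
    if (pipe(p) < 0)
        return -1;
    int epfd = epoll_create(1);
    if (epfd < 0) {
        close(p[0]);
        close(p[1]);
        return -1;
    }

    struct epoll_event ev = { .events = EPOLLIN, .data.fd = p[0] };
    struct epoll_event out;
    int n = -1;

    if (write(p[1], "x", 1) == 1 &&                      /* data arrives first */
        epoll_ctl(epfd, EPOLL_CTL_ADD, p[0], &ev) == 0)  /* registered afterwards */
        n = epoll_wait(epfd, &out, 1, 0);                /* timeout 0: never sleeps */

    close(epfd);
    close(p[0]);
    close(p[1]);
    return n;
}
```

Without the check in step <3>, the event would be lost until the next write, since no wake-up callback fires for data that arrived before registration.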

static int ep_insert(struct eventpoll *ep, struct epoll_event *event, struct file *tfile, int fd)

{

int error, revents, pwake = 0;

unsigned long flags;

struct epitem *epi;

struct ep_pqueue epq;

error = -ENOMEM;

if (!(epi = EPI_MEM_ALLOC()))

goto eexit_1;

/* Item initialization follow here ... */

// Initialize the epitem node.

EP_RB_INITNODE(&epi->rbn);

INIT_LIST_HEAD(&epi->rdllink);

INIT_LIST_HEAD(&epi->fllink);

INIT_LIST_HEAD(&epi->txlink);

INIT_LIST_HEAD(&epi->pwqlist);

// Link the epitem to its eventpoll.

epi->ep = ep;

// Store the events requested by the user in the epitem.

epi->event = *event;

// Associate the target file with the epitem.

EP_SET_FFD(&epi->ffd, tfile, fd);

atomic_set(&epi->usecnt, 1);

epi->nwait = 0;

/* Initialize the poll table ck */

epq.epi = epi;

// Set the poll table callback.

init_poll_funcptr(&epq.pt, ep_ptable_queue_proc);

/*

* Attach the item to the poll hooks and get current event bits.

* We can safely use the file* here because its usage count has

* been increased by the caller of this function.

*/

// Call the target file's poll(); it invokes the callback registered above.

revents = tfile->f_op->poll(tfile, &epq.pt);

if (epi->nwait < 0)

goto eexit_2;

spin_lock(&tfile->f_ep_lock);

// Link the epitem into the target file's list (file->f_ep_links).

list_add_tail(&epi->fllink, &tfile->f_ep_links);

spin_unlock(&tfile->f_ep_lock);

write_lock_irqsave(&ep->lock, flags);

// Insert the epitem into the eventpoll's red-black tree (ep->rbr).

ep_rbtree_insert(ep, epi);

if ((revents & event->events) && !EP_IS_LINKED(&epi->rdllink))

{

list_add_tail(&epi->rdllink, &ep->rdllist);

if (waitqueue_active(&ep->wq))

wake_up(&ep->wq);

if (waitqueue_active(&ep->poll_wait))

pwake++;

}

write_unlock_irqrestore(&ep->lock, flags);

/* We have to call this outside the lock */

if (pwake)

ep_poll_safewake(&psw, &ep->poll_wait);

DNPRINTK(3, (KERN_INFO "[%p] eventpoll: ep_insert(%p, %p, %d)\n",current, ep, tfile, fd));

return 0;

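}

// ep_ptable_queue_proc is the callback registered in ep_insert.

// When it runs, it allocates an eppoll_entry wait queue node, initializes it, links it into the epitem, and installs ep_poll_callback as the wake-up function for the fd.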

static void ep_ptable_queue_proc(struct file *file, wait_queue_head_t *whead, poll_table *pt)

{

struct epitem *epi = EP_ITEM_FROM_EPQUEUE(pt);

struct eppoll_entry *pwq;

if (epi->nwait >= 0 && (pwq = PWQ_MEM_ALLOC())) {

// Set the wake-up callback.

init_waitqueue_func_entry(&pwq->wait, ep_poll_callback);

// Initialize the eppoll_entry.

pwq->whead = whead;

pwq->base = epi;

add_wait_queue(whead, &pwq->wait);

// Link the eppoll_entry into the epitem.

list_add_tail(&pwq->llink, &epi->pwqlist);

epi->nwait++;

} else {

/* We have to signal that an error occurred */

epi->nwait = -1;

}

}

3. sys_epoll_wait()

sys_epoll_wait() first obtains ep = file->private_data and then calls ep_poll(), which does the real work of epoll_wait.

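In ep_poll:

<1> The timeout, given in milliseconds, is converted to jiffies (jtimeout). A timeout of -1, or one too large to convert, becomes MAX_SCHEDULE_TIMEOUT.

<2> The function checks whether eventpoll's rdllist holds any ready events. If rdllist is empty, the current process is added to eventpoll->wq, its state is set to TASK_INTERRUPTIBLE, and schedule_timeout is called to give up the CPU.

<3> If rdllist is not empty, ep_events_transfer() is called to deliver the ready events on rdllist to user space.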
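The conversion in step <1> rounds up, so a short non-zero timeout never becomes zero jiffies. The sketch below reproduces the expression from ep_poll() as a plain function. Passing HZ as a parameter and the name `ms_to_jiffies` are assumptions made so the arithmetic can be tried in user space; MAX_SCHEDULE_TIMEOUT is defined here as LONG_MAX, which is the value the kernel uses.

```c
#include <limits.h>

#define MAX_SCHEDULE_TIMEOUT LONG_MAX

/* Convert a millisecond timeout to jiffies the way ep_poll() does:
 * -1 or an overflowing value means "sleep without a time limit",
 * everything else is rounded up to the next whole jiffy. */
long ms_to_jiffies(long timeout_ms, long hz)
{
    if (timeout_ms == -1 || timeout_ms > (MAX_SCHEDULE_TIMEOUT - 1000) / hz)
        return MAX_SCHEDULE_TIMEOUT;
    return (timeout_ms * hz + 999) / 1000;
}
```

For example, with HZ = 250 a 10 ms timeout gives (2500 + 999) / 1000 = 3 jiffies (12 ms) rather than 2, and a timeout of 0 stays 0, which makes ep_poll() return without sleeping.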

static int ep_poll(struct eventpoll *ep, struct epoll_event __user *events, int maxevents, long timeout)

{

int res, eavail;

unsigned long flags;

long jtimeout;

wait_queue_t wait;

jtimeout = timeout == -1 || timeout > (MAX_SCHEDULE_TIMEOUT - 1000) / HZ ? MAX_SCHEDULE_TIMEOUT: (timeout * HZ + 999) / 1000;

retry:

write_lock_irqsave(&ep->lock, flags);

res = 0;

// No ready events on ep->rdllist right now.

if (list_empty(&ep->rdllist))

{

/*

* We don't have any available event to return to the caller.

* We need to sleep here, and we will be wake up by

* ep_poll_callback() when events become available.

*/

// Add the current process to ep->wq.

init_waitqueue_entry(&wait, current);

add_wait_queue(&ep->wq, &wait);

for (;;)

{

/*

* We don't want to sleep if the ep_poll_callback() sends us

* a wakeup in between. That's why we set the task state

* to TASK_INTERRUPTIBLE before doing the checks.

*/

// Set the current process state to TASK_INTERRUPTIBLE.

set_current_state(TASK_INTERRUPTIBLE);

if (!list_empty(&ep->rdllist) || !jtimeout)

break;

if (signal_pending(current))

{

res = -EINTR;

break;

}

write_unlock_irqrestore(&ep->lock, flags);

// Call schedule_timeout to give up the CPU.

jtimeout = schedule_timeout(jtimeout);

write_lock_irqsave(&ep->lock, flags);

}

remove_wait_queue(&ep->wq, &wait);

set_current_state(TASK_RUNNING);

}

/* Is it worth to try to dig for events ? */

eavail = !list_empty(&ep->rdllist);

write_unlock_irqrestore(&ep->lock, flags);

/*

* Try to transfer events to user space. In case we get 0 events and

* there's still timeout left over, we go trying again in search of

* more luck.

*/

// Call ep_events_transfer to deliver the ready events to user space.

if (!res && eavail &&!(res = ep_events_transfer(ep, events, maxevents)) && jtimeout)

goto retry;

return res;

}

The ep_events_transfer function

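<1> Declare a local list_head, txlist.

<2> ep_collect_ready_items() moves the ready items from ep->rdllist onto txlist.

<3> ep_send_events() delivers the events on txlist to user space.

<4> ep_reinject_items() decides what goes back onto rdllist. In ET mode a reported item stays off the list; in LT mode it is re-inserted if it still has events of interest.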
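The effect of step <4> is visible from user space as the difference between level-triggered and edge-triggered mode. The sketch below assumes a Linux system; `second_wait_count` is a hypothetical helper. It makes a pipe readable, calls epoll_wait() twice without draining the pipe, and returns what the second call reports.

```c
#include <stdint.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Registers a pipe's read end with EPOLLIN | mode_flags, makes it readable,
 * and calls epoll_wait() twice without reading the data.
 * Returns the event count of the second call, or -1 on failure. */
int second_wait_count(uint32_t mode_flags)
{
    int p[2];
    if (pipe(p) < 0)
        return -1;
    int epfd = epoll_create(1);
    if (epfd < 0) {
        close(p[0]);
        close(p[1]);
        return -1;
    }

    struct epoll_event ev = { .events = EPOLLIN | mode_flags, .data.fd = p[0] };
    struct epoll_event out;
    int second = -1;

    if (epoll_ctl(epfd, EPOLL_CTL_ADD, p[0], &ev) == 0 &&
        write(p[1], "x", 1) == 1 &&
        epoll_wait(epfd, &out, 1, 0) == 1)      /* first report */
        second = epoll_wait(epfd, &out, 1, 0);  /* nothing was read in between */

    close(epfd);
    close(p[0]);
    close(p[1]);
    return second;
}
```

`second_wait_count(0)` (LT) is expected to report the fd again, because the item was put back on the ready list, while `second_wait_count(EPOLLET)` reports nothing until new data arrives.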

static int ep_events_transfer(struct eventpoll *ep,

struct epoll_event __user *events, int maxevents)

{

int eventcnt = 0;

struct list_head txlist;

INIT_LIST_HEAD(&txlist);

/*

* We need to lock this because we could be hit by

* eventpoll_release_file() and epoll_ctl(EPOLL_CTL_DEL).

*/

down_read(&ep->sem);

/* Collect/extract ready items */

if (ep_collect_ready_items(ep, &txlist, maxevents) > 0) {

/* Build result set in userspace */

eventcnt = ep_send_events(ep, &txlist, events);

/* Reinject ready items into the ready list */

ep_reinject_items(ep, &txlist);

}

up_read(&ep->sem);

return eventcnt;

}

static int ep_collect_ready_items(struct eventpoll *ep, struct list_head *txlist, int maxevents)

{

int nepi;

unsigned long flags;

struct list_head *lsthead = &ep->rdllist, *lnk;

struct epitem *epi;

write_lock_irqsave(&ep->lock, flags);

for (nepi = 0, lnk = lsthead->next; lnk != lsthead && nepi < maxevents;)

{

epi = list_entry(lnk, struct epitem, rdllink);

lnk = lnk->next;

/* If this file is already in the ready list we exit soon */

if (!EP_IS_LINKED(&epi->txlink))

{

/*

* This is initialized in this way so that the default

* behaviour of the reinjecting code will be to push back

* the item inside the ready list.

*/

epi->revents = epi->event.events;

/* Link the ready item into the transfer list */

list_add(&epi->txlink, txlist);

nepi++;

/*

* Unlink the item from the ready list.

*/

EP_LIST_DEL(&epi->rdllink);

}

}

write_unlock_irqrestore(&ep->lock, flags);

return nepi;

}

static int ep_send_events(struct eventpoll *ep, struct list_head *txlist, struct epoll_event __user *events)

{

int eventcnt = 0;

unsigned int revents;

struct list_head *lnk;

struct epitem *epi;

/*

* We can loop without lock because this is a task private list.

* The test done during the collection loop will guarantee us that

* another task will not try to collect this file. Also, items

* cannot vanish during the loop because we are holding "sem".

*/

list_for_each(lnk, txlist)

{

epi = list_entry(lnk, struct epitem, txlink);

/*

* Get the ready file event set. We can safely use the file

* because we are holding the "sem" in read and this will

* guarantee that both the file and the item will not vanish.

*/

revents = epi->ffd.file->f_op->poll(epi->ffd.file, NULL);

/*

* Set the return event set for the current file descriptor.

* Note that only the task task was successfully able to link

* the item to its "txlist" will write this field.

*/

epi->revents = revents & epi->event.events;

if (epi->revents)

{

if (__put_user(epi->revents,

&events[eventcnt].events) ||

__put_user(epi->event.data,

&events[eventcnt].data))

return -EFAULT;

if (epi->event.events & EPOLLONESHOT)

epi->event.events &= EP_PRIVATE_BITS;

eventcnt++;

}

}

return eventcnt;

}

static void ep_reinject_items(struct eventpoll *ep, struct list_head *txlist)

{

int ricnt = 0, pwake = 0;

unsigned long flags;

struct epitem *epi;

write_lock_irqsave(&ep->lock, flags);

while (!list_empty(txlist))

{

epi = list_entry(txlist->next, struct epitem, txlink);

/* Unlink the current item from the transfer list */

EP_LIST_DEL(&epi->txlink);

/*

* If the item is no more linked to the interest set, we don't

* have to push it inside the ready list because the following

* ep_release_epitem() is going to drop it. Also, if the current

* item is set to have an Edge Triggered behaviour, we don't have

* to push it back either.

*/

if (EP_RB_LINKED(&epi->rbn) && !(epi->event.events & EPOLLET) &&

(epi->revents & epi->event.events) && !EP_IS_LINKED(&epi->rdllink)) {

list_add_tail(&epi->rdllink, &ep->rdllist);

ricnt++;

}

}

if (ricnt)

{

/*

* Wake up ( if active ) both the eventpoll wait list and the ->poll()

* wait list.

*/

if (waitqueue_active(&ep->wq))

wake_up(&ep->wq);

if (waitqueue_active(&ep->poll_wait))

pwake++;

}

write_unlock_irqrestore(&ep->lock, flags);

/* We have to call this outside the lock */

if (pwake)

ep_poll_safewake(&psw, &ep->poll_wait);

}

4. ep_poll_callback

When events become ready on an fd, the device driver triggers this callback. It links the epitem into ep->rdllist (through epitem->rdllink) and wakes up the processes sleeping on ep->wq.
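The article does not list the source of ep_poll_callback, so the following is only a user-space model of the mechanism described above, not the kernel code. All `mini_` names are hypothetical, the ready list is reduced to a singly linked list, and a counter stands in for wake_up(&ep->wq). The model assumes the callback wakes waiters on every event while linking the item at most once.

```c
#include <stddef.h>

struct mini_item;

/* Reduced stand-in for eppoll_entry: a wait entry carrying a wake function. */
struct mini_wait {
    void (*func)(struct mini_wait *w);  /* plays the role of ep_poll_callback */
    struct mini_item *base;             /* owning item, like eppoll_entry.base */
};

struct mini_poll {
    struct mini_item *ready_head;       /* simplified ready list */
    int wakeups;                        /* counts simulated wake-ups of wq */
};

struct mini_item {
    int fd;
    int linked;                         /* already on the ready list? */
    struct mini_item *next_ready;
    struct mini_poll *ep;
    struct mini_wait wait;
};

static void mini_poll_callback(struct mini_wait *w)
{
    struct mini_item *it = w->base;
    if (!it->linked) {                  /* never queue the same item twice */
        it->linked = 1;
        it->next_ready = it->ep->ready_head;
        it->ep->ready_head = it;
    }
    it->ep->wakeups++;                  /* stands in for wake_up(&ep->wq) */
}

/* What a "driver" does when the device state changes. */
static void mini_driver_event(struct mini_wait *w) { w->func(w); }

/* Fires two events on one item; returns the queued fd, or -1 on mismatch. */
int demo_callback(void)
{
    struct mini_poll ep = { NULL, 0 };
    struct mini_item it = { .fd = 7, .ep = &ep };
    it.wait.func = mini_poll_callback;
    it.wait.base = &it;

    mini_driver_event(&it.wait);
    mini_driver_event(&it.wait);        /* item is already queued here */

    int queued_once = ep.ready_head == &it && it.next_ready == NULL;
    return (queued_once && ep.wakeups == 2) ? ep.ready_head->fd : -1;
}
```

The point of the model is the indirection: the driver knows nothing about epoll and simply calls the function stored in the wait entry, which is how one callback can serve every kind of pollable file.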