Study notes: Learning Big Data from Scratch, 42. Comprehensive Practice 4: Building a Real-Time Analytics Dashboard with Spark + Kafka

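This exercise follows a case study from Lin Ziyu's team at Xiamen University. I expected it to be easy, but it took two days to get it working. The main problem was a wrong Spark version: after a lot of debugging, it only ran once I downloaded the right version. Here is a record of the steps.

Tutorial: http://dblab.xmu.edu.cn/post/8274/ (Spark course lab case: building a real-time analytics dashboard with Spark + Kafka, freely shared)

This case study covers: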

I. Download the data and test Kafka data handling

1. Download the dataset: the data_format.zip dataset.

2. Install the required modules with pip

- pip3 install flask

- pip3 install flask-socketio

- pip3 install kafka-python

- pip3 install kafka

3. Start Kafka

cd /home/linbin/software/kafka_2.12-2.1.0

bin/zookeeper-server-start.sh config/zookeeper.properties &

bin/kafka-server-start.sh config/server.properties &
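Before moving on, it can help to confirm that both services are actually accepting connections. The following is a minimal sketch, not part of the tutorial: `port_open` is a hypothetical helper, and the ports 2181 (ZooKeeper) and 9092 (Kafka) are taken from the addresses used later in these notes, assuming the default configuration files were not changed.

```python
import socket


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # 2181 and 9092 are the ports used elsewhere in these notes (assumed defaults).
    for name, port in (("ZooKeeper", 2181), ("Kafka", 9092)):
        state = "up" if port_open("127.0.0.1", port) else "down"
        print(f"{name} on port {port}: {state}")
```

A successful TCP connection only shows that something is listening on the port; it does not prove the broker is healthy.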

4. Create producer.py, a producer that sends data continuously

# coding: utf-8
import csv
import time

from kafka import KafkaProducer

# Create a KafkaProducer instance for publishing messages to Kafka
producer = KafkaProducer(bootstrap_servers='localhost:9092')

# Open the data file and build a reader for the csv content
with open("../data/user_log.csv", "r") as csvfile:
    reader = csv.reader(csvfile)
    for line in reader:
        gender = line[9]  # gender is the field at index 9 of each log row
        if gender == 'gender':
            continue  # skip the header row
        time.sleep(0.1)  # send one row every 0.1 seconds
        # Send the gender value to the topic 'sex'
        producer.send('sex', gender.encode('utf8'))

5. Create consumer.py, a consumer used to test that the data arrives

from kafka import KafkaConsumer

consumer = KafkaConsumer('sex')
for msg in consumer:
    print(msg.value.decode('utf8'))

6. Test

In one terminal, run python3 producer.py

In another terminal, run python3 consumer.py

If the consumer terminal shows a continuous stream of data, the setup works.
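The row filtering in producer.py can be checked without a running broker. The sketch below is only an illustration: `gender_messages` is a hypothetical helper, and the in-memory sample is made-up data whose only assumption is that the gender field sits at index 9, as in producer.py.

```python
import csv
import io


def gender_messages(rows, column=9):
    """Yield the gender field of each data row as UTF-8 bytes, skipping the header."""
    for row in rows:
        value = row[column]
        if value == "gender":  # header row
            continue
        yield value.encode("utf8")


# Hypothetical sample with the gender field at index 9.
sample = io.StringIO(
    "c0,c1,c2,c3,c4,c5,c6,c7,c8,gender,c10\n"
    "a,b,c,d,e,f,g,h,i,1,k\n"
    "a,b,c,d,e,f,g,h,i,0,k\n"
)
messages = list(gender_messages(csv.reader(sample)))
print(messages)
```

Each yielded value is what producer.py would pass to producer.send('sex', ...).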

II. Real-time processing with Spark Streaming (Python version)

1. Check that the following file exists; if not, download it

~/spark-2.2.0-bin-hadoop2.6/jars/spark-streaming-kafka-0-8-assembly_2.11-2.2.0.jar

2. Edit spark-env.sh

cd ~/spark-2.2.0-bin-hadoop2.6/conf

nano spark-env.sh

Add a line: export PYSPARK_PYTHON=/usr/bin/python3

Change a line: export SPARK_DIST_CLASSPATH=$(/home/linbin/software/hadoop-2.6.0-cdh5.15.1/bin/hadoop classpath):/home/linbin/software/kafka_2.12-2.1.0/libs/*

3. Edit the file ~/spark-2.2.0-bin-hadoop2.6/bin/pyspark

Find PYSPARK_PYTHON=python and change it to PYSPARK_PYTHON=python3

4. Create the directory ~/spark-2.2.0-bin-hadoop2.6/mycode/kafka

Create the file kafka_test.py in that directory

from kafka import KafkaProducer
from pyspark.streaming import StreamingContext
from pyspark.streaming.kafka import KafkaUtils
from pyspark import SparkConf, SparkContext
import json
import sys

def KafkaWordCount(zkQuorum, group, topics, numThreads):
    spark_conf = SparkConf().setAppName("KafkaWordCount")
    sc = SparkContext(conf=spark_conf)
    sc.setLogLevel("ERROR")
    ssc = StreamingContext(sc, 1)
    # The checkpoint files are written to the distributed file system HDFS, so Hadoop must be running
    ssc.checkpoint(".")
    #ssc.checkpoint("file:///home/linbin/software/spark-1.6.0-cdh5.15.1/mycode/kafka//checkpoint")
    topicAry = topics.split(",")
    # Convert the topics into a hashmap; a Python dict is a kind of hashmap
    topicMap = {}
    for topic in topicAry:
        topicMap[topic] = numThreads
    lines = KafkaUtils.createStream(ssc, zkQuorum, group, topicMap).map(lambda x : x[1])
    words = lines.flatMap(lambda x : x.split(" "))
    wordcount = words.map(lambda x : (x, 1)).reduceByKeyAndWindow((lambda x,y : x+y), (lambda x,y : x-y), 1, 1, 1)
    wordcount.foreachRDD(lambda x : sendmsg(x))
    ssc.start()
    ssc.awaitTermination()

# Format conversion: turn [["1", 3], ["0", 4], ["2", 3]] into [{'1': 3}, {'0': 4}, {'2': 3}], so the code from the fourth tutorial needs no changes
def Get_dic(rdd_list):
    res = []
    for elm in rdd_list:
        tmp = {elm[0]: elm[1]}
        res.append(tmp)
    return json.dumps(res)

def sendmsg(rdd):
    if rdd.count() != 0:
        msg = Get_dic(rdd.collect())
        # Create a KafkaProducer instance for publishing messages to Kafka
        producer = KafkaProducer(bootstrap_servers='localhost:9092')
        producer.send("result", msg.encode('utf8'))
        # Very important: without flush() the result is not updated
        producer.flush()

if __name__ == '__main__':
    # The four arguments are:
    # 1. zkQuorum: the ZooKeeper address
    # 2. group: the consumer group
    # 3. topics: the topics this consumer subscribes to
    # 4. numThreads: the number of threads consuming the topics
    if (len(sys.argv) < 5):
        print("Usage: KafkaWordCount <zkQuorum> <group> <topics> <numThreads>")
        sys.exit(1)
    zkQuorum = sys.argv[1]
    group = sys.argv[2]
    topics = sys.argv[3]
    numThreads = int(sys.argv[4])
    print(group, topics)
    KafkaWordCount(zkQuorum, group, topics, numThreads)

5. Start HDFS

start-all.sh
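Checkpointing matters here because kafka_test.py calls reduceByKeyAndWindow with an inverse function (the x-y lambda), and Spark Streaming requires checkpointing to be enabled for that form. With an inverse function the window is updated incrementally: the batch entering the window is added and the batch leaving it is subtracted. The following plain-Python sketch only imitates that idea with made-up batches; `slide_window` is a hypothetical helper and none of this is Spark code.

```python
from collections import Counter


def slide_window(previous, entering, leaving):
    """Update windowed counts incrementally: add the entering batch, subtract the leaving one."""
    current = Counter(previous)
    for key, n in entering.items():
        current[key] += n  # plays the role of lambda x, y: x + y
    for key, n in leaving.items():
        current[key] -= n  # plays the role of lambda x, y: x - y
    return dict(current)


# Hypothetical one-second batches of gender values.
batches = [["1", "0", "1"], ["0", "0"], ["2"]]
window = {}
previous_batch = Counter()
for batch in batches:
    counts = Counter(batch)
    # With a 1-second window and a 1-second slide, the previous batch
    # leaves the window exactly when the new batch enters it.
    window = slide_window(window, counts, previous_batch)
    previous_batch = counts
    print(window)
```

In this sketch, keys whose count drops to zero stay in the dictionary rather than being removed.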

6. Create the file startup_py.sh

/home/linbin/software/spark-2.2.0-bin-hadoop2.6/bin/spark-submit /home/linbin/software/spark-2.2.0-bin-hadoop2.6/mycode/kafka/kafka_test.py 127.0.0.1:2181 1 sex 1

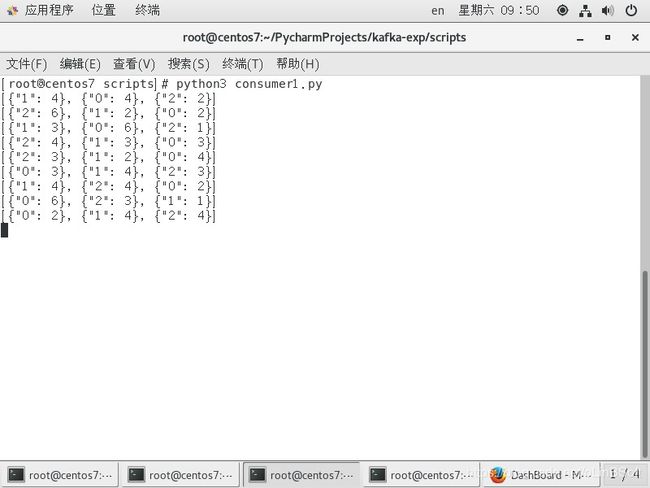
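The four values at the end of the spark-submit command map onto the parameters of KafkaWordCount. As a rough sketch of that mapping (`parse_args` is a hypothetical helper, not a function in kafka_test.py):

```python
def parse_args(argv):
    """Split the four positional arguments and build the topic -> thread-count map."""
    zk_quorum, group, topics, num_threads = argv[:4]
    topic_map = {topic: int(num_threads) for topic in topics.split(",")}
    return zk_quorum, group, topic_map


# The same arguments that startup_py.sh passes after the script path.
zk_quorum, group, topic_map = parse_args(["127.0.0.1:2181", "1", "sex", "1"])
print(zk_quorum, group, topic_map)
```

So 127.0.0.1:2181 is the ZooKeeper address, 1 is the consumer group, sex is the topic to read, and the final 1 is the thread count for that topic.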

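7. Modify the consumer.py consumer program above

Change consumer = KafkaConsumer('sex') to consumer = KafkaConsumer('result'), i.e. monitor the other topic

8. Open three terminals and run, one command per terminal:

python3 producer.py # continuously sends data to the Kafka topic sex at a fixed interval

sh startup_py.sh # receives data from the Kafka topic sex, aggregates it with Spark Streaming, and sends the result back to the Kafka topic result

python3 consumer.py # monitors the data continuously arriving on the Kafka topic result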
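To make the data flow concrete, here is a small sketch of what one batch looks like on the result topic: the values received from sex are counted, and the pairs are serialized as a JSON list of single-key dictionaries, mirroring Get_dic. The batch contents are made up and `to_payload` is a hypothetical name; the real job does the counting in Spark.

```python
import json
from collections import Counter


def to_payload(pairs):
    """Turn [(key, count), ...] into the JSON list-of-dicts string sent to 'result'."""
    return json.dumps([{key: count} for key, count in pairs])


# Hypothetical one-second batch of messages from the 'sex' topic.
batch = ["1", "0", "1", "2", "0", "1"]
pairs = sorted(Counter(batch).items())
payload = to_payload(pairs)
print(payload)
```

The consumer on result receives one such string per batch, encoded as UTF-8.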

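III. Displaying the data on the web

1. Because the data is dynamic and produced continuously, we

use Flask-SocketIO to push the data in real time

use socket.io.js to receive the data in real time

use highcharts.js to display the data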

Directory structure:

kafka-exp
├── app.py
├── static
│   └── js
│       ├── exporting.js
│       ├── highcharts.js
│       ├── jquery-3.1.1.min.js
│       ├── socket.io.js
│       └── socket.io.js.map
└── templates
    └── index.html

2. The source code can be downloaded from the Baidu Cloud drive provided by the Xiamen University Big Data Lab.

The file app.py:

import json
from flask import Flask, render_template
from flask_socketio import SocketIO
from kafka import KafkaConsumer

# flask was installed in Part I, so it can be imported here
app = Flask(__name__)
app.config['SECRET_KEY'] = 'secret!'
socketio = SocketIO(app)
thread = None

# Create a consumer that receives messages from the topic 'result'
consumer = KafkaConsumer('result')

# Background thread: keeps receiving Kafka messages and pushes them to the client browser
def background_thread():
    girl = 0
    boy = 0
    for msg in consumer:
        data_json = msg.value.decode('utf8')
        data_list = json.loads(data_json)
        for data in data_list:
            if '0' in data.keys():
                girl = data['0']
            elif '1' in data.keys():
                boy = data['1']
            else:
                continue
        result = str(girl) + ',' + str(boy)
        print(result)
        socketio.emit('test_message',{'data':result})
        socketio.sleep(1)

# Handler for the connect event sent by the client
@socketio.on('test_connect')
def connect(message):
    print(message)
    global thread
    if thread is None:
        # Start a separate thread that sends data to the client
        thread = socketio.start_background_task(target=background_thread)
    socketio.emit('connected', {'data': 'Connected'})

# index.html is served at http://127.0.0.1:5000/
@app.route("/")
def handle_mes():
    return render_template("index.html")

# Entry point
if __name__ == '__main__':
    socketio.run(app,debug=True)

index.html

DashBoard

Girl:

Boy:

The other files are standard JS libraries downloaded from the web.

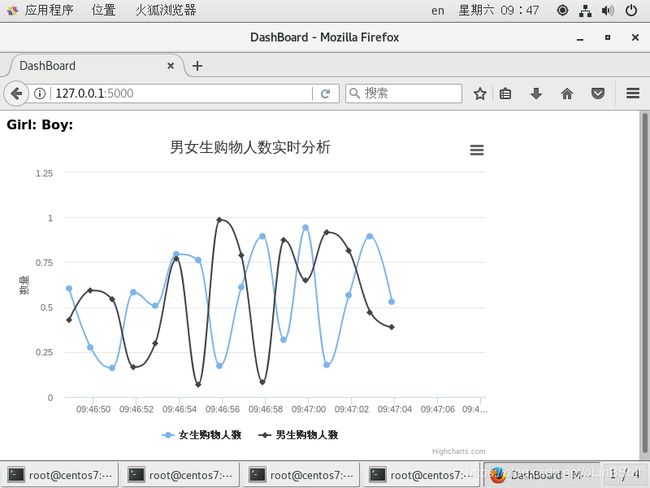

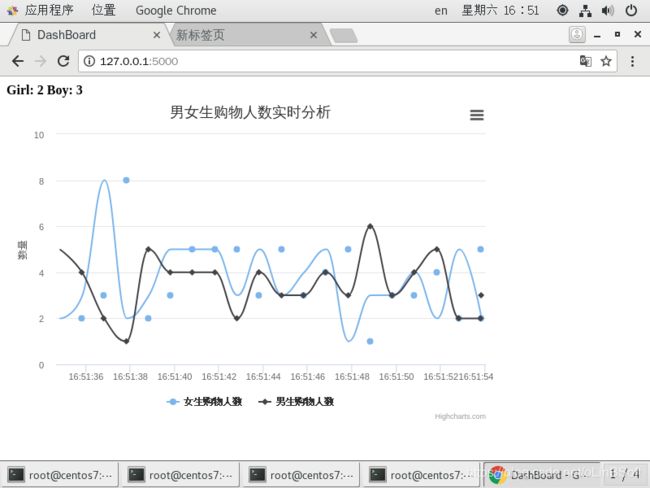

3. Run

Open a new terminal (the fourth one)

python3 app.py

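4. Open http://127.0.0.1:5000 in a browser to check the resulting chart

At first the data on the page did not update, and this took another half day to debug. A similar Flask-SocketIO example at https://blog.csdn.net/sigmarising/article/details/90763114 worked correctly. Comparing the two showed that adding socketio.sleep(1) after socketio.emit('test_message',{'data':result}) in app.py makes the page update normally.

Perhaps my computer was overloaded and could not handle data arriving this fast, so the client never received anything.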
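When debugging the page, it also helps to check the aggregation logic of background_thread separately from Flask and Kafka. The sketch below reproduces only that logic on made-up messages; `update_counts` is a hypothetical helper, and the payloads assume the list-of-dicts JSON format produced by kafka_test.py.

```python
import json


def update_counts(payload, girl, boy):
    """Return updated (girl, boy) counts after reading one JSON message from 'result'."""
    for entry in json.loads(payload):
        if "0" in entry:
            girl = entry["0"]
        elif "1" in entry:
            boy = entry["1"]
    return girl, boy


girl, boy = 0, 0
# Hypothetical messages; the second one has no '1' key, so boy keeps its last value.
for payload in ('[{"1": 3}, {"0": 4}, {"2": 3}]', '[{"0": 5}]'):
    girl, boy = update_counts(payload, girl, boy)
    print(str(girl) + "," + str(boy))
```

The printed string has the same "girl,boy" form that app.py emits in the test_message event.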

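IV. Real-time processing with Spark Streaming (Scala version)

The Spark Streaming processing in Part II above can be replaced with a Scala version.

Create the directory ~/spark-2.2.0-bin-hadoop2.6/mycode/kafka/src/main/scala

In that directory, create the files StreamingExamples.scala and KafkaTest.scala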

StreamingExamples.scala

package org.apache.spark.examples.streaming

import org.apache.spark.internal.Logging
import org.apache.log4j.{Level, Logger}

/** Utility functions for Spark Streaming examples. */
object StreamingExamples extends Logging {
  /** Set reasonable logging levels for streaming if the user has not configured log4j. */
  def setStreamingLogLevels() {
    val log4jInitialized = Logger.getRootLogger.getAllAppenders.hasMoreElements
    if (!log4jInitialized) {
      // We first log something to initialize Spark's default logging, then we override the
      // logging level.
      logInfo("Setting log level to [WARN] for streaming example." +
        " To override add a custom log4j.properties to the classpath.")
      Logger.getRootLogger.setLevel(Level.WARN)
    }
  }
}

KafkaTest.scala

package org.apache.spark.examples.streaming

import java.util.HashMap
import org.apache.kafka.clients.producer.{KafkaProducer, ProducerConfig, ProducerRecord}
import org.json4s._
import org.json4s.jackson.Serialization
import org.json4s.jackson.Serialization.write
import org.apache.spark.SparkConf
import org.apache.spark.streaming._
import org.apache.spark.streaming.Interval
import org.apache.spark.streaming.kafka._

object KafkaWordCount {
  implicit val formats = DefaultFormats // needed when serializing the data
  def main(args: Array[String]): Unit = {
    if (args.length < 4) {
      System.err.println("Usage: KafkaWordCount <zkQuorum> <group> <topics> <numThreads>")
      System.exit(1)
    }
    StreamingExamples.setStreamingLogLevels()
    /* The four arguments are:
     * 1. zkQuorum: the ZooKeeper address
     * 2. group: the consumer group
     * 3. topics: the topics this consumer subscribes to
     * 4. numThreads: the number of threads consuming the topics
     */
    val Array(zkQuorum, group, topics, numThreads) = args
    val sparkConf = new SparkConf().setAppName("KafkaWordCount")
    val ssc = new StreamingContext(sparkConf, Seconds(1))
    ssc.checkpoint(".") // the checkpoint files are written to the distributed file system HDFS, so Hadoop must be running
    // Convert topics into a topic -> numThreads hash map
    val topicMap = topics.split(",").map((_, numThreads.toInt)).toMap
    // Create the consumer connection to Kafka
    val lines = KafkaUtils.createStream(ssc, zkQuorum, group, topicMap).map(_._2)
    val words = lines.flatMap(_.split(" ")) // split each input line on spaces into words
    // Reduce the input of each second, then send the reduced data to Kafka
    val wordCounts = words.map(x => (x, 1L))
      .reduceByKeyAndWindow(_+_,_-_, Seconds(1), Seconds(1), 1).foreachRDD(rdd => {
        if(rdd.count !=0 ){
          val props = new HashMap[String, Object]()
          props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092")
          props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,
            "org.apache.kafka.common.serialization.StringSerializer")
          props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG,
            "org.apache.kafka.common.serialization.StringSerializer")
          // Create a Kafka producer
          val producer = new KafkaProducer[String, String](props)
          // rdd.collect turns the RDD data into an array; write then converts it to JSON
          val str = write(rdd.collect)
          // Wrap it in a Kafka message for the topic "result"
          val message = new ProducerRecord[String, String]("result", null, str)
          // Send the message to Kafka
          producer.send(message)
        }
      })
    ssc.start()
    ssc.awaitTermination()
  }
}

In the ~/spark-2.2.0-bin-hadoop2.6/mycode/kafka directory, create the files simple.sbt and startup_scala.sh

simple.sbt

name := "Simple Project"

version := "1.0"

scalaVersion := "2.11.8"

libraryDependencies += "org.apache.spark" %% "spark-core" % "2.1.0"

libraryDependencies += "org.apache.spark" % "spark-streaming_2.11" % "2.1.0"

libraryDependencies += "org.apache.spark" % "spark-streaming-kafka-0-8_2.11" % "2.1.0"

libraryDependencies += "org.json4s" %% "json4s-jackson" % "3.2.11"

startup_scala.sh

cat startup_scala.sh

/home/linbin/software/spark-2.2.0-bin-hadoop2.6/bin/spark-submit --class "org.apache.spark.examples.streaming.KafkaWordCount" /home/linbin/software/spark-2.2.0-bin-hadoop2.6/mycode/kafka/target/scala-2.11/simple-project_2.11-1.0.jar 127.0.0.1:2181 1 sex 1

Compile with sbt (sbt must be installed first)

cd /home/linbin/software/spark-2.2.0-bin-hadoop2.6/mycode/kafka

/root/sbt/sbt package

After a successful build, run sh startup_scala.sh

The exercise completed successfully. Thanks again to the Xiamen University Big Data Lab!