人工智能与机器学习——SVM算法深入探究

人工智能与机器学习——SVM算法深入探究

- 一、原理介绍

- 1. 支持向量机

- 2. 超平面

- 二、Soft Margin SVM

- 1. 加载鸢尾花数据集并查看散点图分布

- 2. 绘制决策边界

- 3. 再次实例化SVC,重新传入一个较小的C

- 三、使用多项式与核函数

- 1. 加载月亮数据集

- 2. 绘制散点图

- 3. 加入噪声点

- 4. 通过多项式特征的SVM进行分类

- 5. 使用核技巧来对数据进行处理

- 四、核函数

- 1. 产生测试点以及绘制散点图

- 2. 将数据升为二维

- 五、超参数γ

- 1. 加载月亮数据集

- 2. 定义一个RBF核的SVM

- 2. 修改γ值

- ① γ=100

- ② γ=10

- ③ γ=1

- ③ γ=0.1

- 六. 回归问题

一、原理介绍

1. 支持向量机

支持向量机是一类按监督学习方式对数据进行二元分类的广义线性分类器,其决策边界是对学习样本求解的最大边距超平面。

支持向量机的基本思想是SVM从线性可分情况下的最优分类面发展而来。最优分类面就是要求分类线不但能将两类正确分开(训练错误率为0),且使分类间隔最大。SVM考虑寻找一个满足分类要求的超平面,并且使训练集中的点距离分类面尽可能的远,也就是寻找一个分类面使它两侧的空白区域(margin)最大。(SVM算法就是为找到距分类样本点间隔最大的分类超平面(ω,b)过两类样本中离分类面最近的点,且平行于最优分类面的超平面上H1,H2的训练样本就叫支持向量。)

2. 超平面

在超平面wx+b=0确定的情况下,|wx+b|能够表示点x到距离超平面的远近,而通过观察wx+b的符号与类标记y的符号是否一致可判断分类是否正确,所以,可以用(y(w*x+b))的正负性来判定或表示分类的正确性。于此,我们便引出了函数间隔(functional margin)的概念。定义函数间隔(用γ表示)为:

![]()

二、Soft Margin SVM

1. 加载鸢尾花数据集并查看散点图分布

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

from sklearn.preprocessing import StandardScaler

from sklearn.svm import LinearSVC

iris = datasets.load_iris()

X = iris.data

y = iris.target

X = X [y<2,:2] #只取y<2的类别,也就是0 1 并且只取前两个特征

y = y[y<2] # 只取y<2的类别

# 分别画出类别0和1的点

plt.scatter(X[y==0,0],X[y==0,1],color='red')

plt.scatter(X[y==1,0],X[y==1,1],color='blue')

plt.show()

# 标准化

standardScaler = StandardScaler()

standardScaler.fit(X) #计算训练数据的均值和方差

X_standard = standardScaler.transform(X) #再用scaler中的均值和方差来转换X,使X标准化

svc = LinearSVC(C=1e9) #线性SVM分类器

svc.fit(X_standard,y) # 训练svm

2. 绘制决策边界

def plot_decision_boundary(model, axis):

x0, x1 = np.meshgrid(

np.linspace(axis[0], axis[1], int((axis[1]-axis[0])*100)).reshape(-1,1),

np.linspace(axis[2], axis[3], int((axis[3]-axis[2])*100)).reshape(-1,1)

)

X_new = np.c_[x0.ravel(), x1.ravel()]

y_predict = model.predict(X_new)

zz = y_predict.reshape(x0.shape)

from matplotlib.colors import ListedColormap

custom_cmap = ListedColormap(['#EF9A9A','#FFF59D','#90CAF9'])

plt.contourf(x0, x1, zz, linewidth=5, cmap=custom_cmap)

# 绘制决策边界

plot_decision_boundary(svc,axis=[-3,3,-3,3]) # x,y轴都在-3到3之间

# 绘制原始数据即散点图

plt.scatter(X_standard[y==0,0],X_standard[y==0,1],color='red')

plt.scatter(X_standard[y==1,0],X_standard[y==1,1],color='blue')

plt.show()

3. 再次实例化SVC,重新传入一个较小的C

#C越小容错空间越大

svc2 = LinearSVC(C=0.01)

svc2.fit(X_standard,y)

plot_decision_boundary(svc2,axis=[-3,3,-3,3]) # x,y轴都在-3到3之间

# 绘制原始数据

plt.scatter(X_standard[y==0,0],X_standard[y==0,1],color='red')

plt.scatter(X_standard[y==1,0],X_standard[y==1,1],color='blue')

plt.show()

三、使用多项式与核函数

1. 加载月亮数据集

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

#月亮数据集

X, y = datasets.make_moons() #使用生成的数据

print(X.shape) # (100,2)

print(y.shape) # (100,)

2. 绘制散点图

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

3. 加入噪声点

X, y = datasets.make_moons(noise=0.15,random_state=777) #随机生成噪声点,random_state是随机种子,noise是方差

#分类

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

4. 通过多项式特征的SVM进行分类

from sklearn.preprocessing import PolynomialFeatures,StandardScaler

from sklearn.svm import LinearSVC

from sklearn.pipeline import Pipeline

def PolynomialSVC(degree,C=1.0):

return Pipeline([

("poly",PolynomialFeatures(degree=degree)),#生成多项式

("std_scaler",StandardScaler()),#标准化

("linearSVC",LinearSVC(C=C))#最后生成svm

])

poly_svc = PolynomialSVC(degree=3)

poly_svc.fit(X,y)

plot_decision_boundary(poly_svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

5. 使用核技巧来对数据进行处理

from sklearn.svm import SVC

def PolynomialKernelSVC(degree,C=1.0):

return Pipeline([

("std_scaler",StandardScaler()),

("kernelSVC",SVC(kernel="poly")) # poly代表多项式特征

])

poly_kernel_svc = PolynomialKernelSVC(degree=3)

poly_kernel_svc.fit(X,y)

plot_decision_boundary(poly_kernel_svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

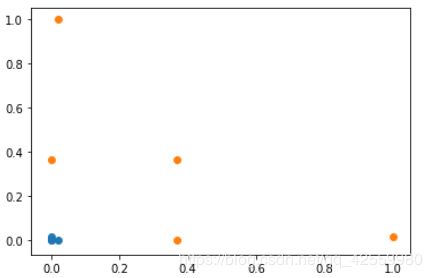

四、核函数

1. 产生测试点以及绘制散点图

import numpy as np

import matplotlib.pyplot as plt

x = np.arange(-4,5,1)#生成测试数据

y = np.array((x >= -2 ) & (x <= 2),dtype='int')

plt.scatter(x[y==0],[0]*len(x[y==0]))# x取y=0的点, y取0,有多少个x,就有多少个y

plt.scatter(x[y==1],[0]*len(x[y==1]))

plt.show()

2. 将数据升为二维

# 高斯核函数

def gaussian(x,l):

gamma = 1.0

return np.exp(-gamma * (x -l)**2)

l1,l2 = -1,1

X_new = np.empty((len(x),2)) #len(x) ,2

for i,data in enumerate(x):

X_new[i,0] = gaussian(data,l1)

X_new[i,1] = gaussian(data,l2)

plt.scatter(X_new[y==0,0],X_new[y==0,1])

plt.scatter(X_new[y==1,0],X_new[y==1,1])

plt.show()

五、超参数γ

1. 加载月亮数据集

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

#月亮数据集

X,y = datasets.make_moons(noise=0.15,random_state=777)

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

2. 定义一个RBF核的SVM

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.pipeline import Pipeline

def RBFKernelSVC(gamma=1.0):

return Pipeline([

('std_scaler',StandardScaler()),

('svc',SVC(kernel='rbf',gamma=gamma))

])

svc = RBFKernelSVC()

svc.fit(X,y)

plot_decision_boundary(svc,axis=[-1.5,2.5,-1.0,1.5])

plt.scatter(X[y==0,0],X[y==0,1])

plt.scatter(X[y==1,0],X[y==1,1])

plt.show()

2. 修改γ值

① γ=100

② γ=10

③ γ=1

③ γ=0.1

六. 回归问题

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

boston = datasets.load_boston()

X = boston.data

y = boston.target

from sklearn.model_selection import train_test_split

X_train,X_test,y_train,y_test = train_test_split(X,y,random_state=777) # 把数据集拆分成训练数据和测试数据

from sklearn.svm import LinearSVR

from sklearn.svm import SVR

from sklearn.preprocessing import StandardScaler

def StandardLinearSVR(epsilon=0.1):

return Pipeline([

('std_scaler',StandardScaler()),

('linearSVR',LinearSVR(epsilon=epsilon))

])

svr = StandardLinearSVR()

svr.fit(X_train,y_train)

svr.score(X_test,y_test) #0.6989278257702748

运行结果

![]()