- 浅谈MapReduce

Android路上的人

Hadoop分布式计算mapreduce分布式框架hadoop

从今天开始,本人将会开始对另一项技术的学习,就是当下炙手可热的Hadoop分布式就算技术。目前国内外的诸多公司因为业务发展的需要,都纷纷用了此平台。国内的比如BAT啦,国外的在这方面走的更加的前面,就不一一列举了。但是Hadoop作为Apache的一个开源项目,在下面有非常多的子项目,比如HDFS,HBase,Hive,Pig,等等,要先彻底学习整个Hadoop,仅仅凭借一个的力量,是远远不够的。

- Hadoop

傲雪凌霜,松柏长青

后端大数据hadoop大数据分布式

ApacheHadoop是一个开源的分布式计算框架,主要用于处理海量数据集。它具有高度的可扩展性、容错性和高效的分布式存储与计算能力。Hadoop核心由四个主要模块组成,分别是HDFS(分布式文件系统)、MapReduce(分布式计算框架)、YARN(资源管理)和HadoopCommon(公共工具和库)。1.HDFS(HadoopDistributedFileSystem)HDFS是Hadoop生

- hbase介绍

CrazyL-

云计算+大数据hbase

hbase是一个分布式的、多版本的、面向列的开源数据库hbase利用hadoophdfs作为其文件存储系统,提供高可靠性、高性能、列存储、可伸缩、实时读写、适用于非结构化数据存储的数据库系统hbase利用hadoopmapreduce来处理hbase、中的海量数据hbase利用zookeeper作为分布式系统服务特点:数据量大:一个表可以有上亿行,上百万列(列多时,插入变慢)面向列:面向列(族)的

- Spark集群的三种模式

MelodyYN

#Sparksparkhadoopbigdata

文章目录1、Spark的由来1.1Hadoop的发展1.2MapReduce与Spark对比2、Spark内置模块3、Spark运行模式3.1Standalone模式部署配置历史服务器配置高可用运行模式3.2Yarn模式安装部署配置历史服务器运行模式4、WordCount案例1、Spark的由来定义:Hadoop主要解决,海量数据的存储和海量数据的分析计算。Spark是一种基于内存的快速、通用、可

- HBase介绍

mingyu1016

数据库

概述HBase是一个分布式的、面向列的开源数据库,源于google的一篇论文《bigtable:一个结构化数据的分布式存储系统》。HBase是GoogleBigtable的开源实现,它利用HadoopHDFS作为其文件存储系统,利用HadoopMapReduce来处理HBase中的海量数据,利用Zookeeper作为协同服务。HBase的表结构HBase以表的形式存储数据。表有行和列组成。列划分为

- Hadoop windows intelij 跑 MR WordCount

piziyang12138

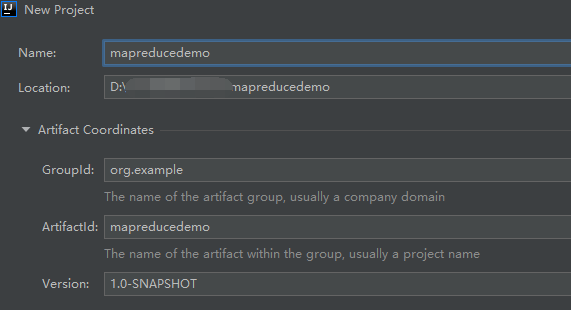

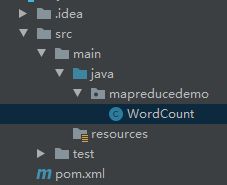

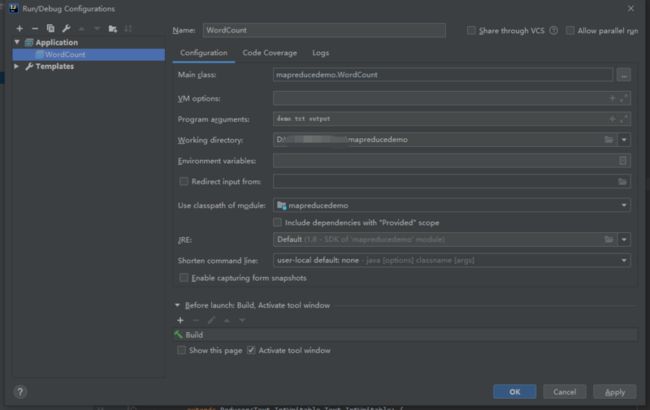

一、软件环境我使用的软件版本如下:IntellijIdea2017.1Maven3.3.9Hadoop分布式环境二、创建maven工程打开Idea,file->new->Project,左侧面板选择maven工程。(如果只跑MapReduce创建java工程即可,不用勾选Creatfromarchetype,如果想创建web工程或者使用骨架可以勾选)image.png设置GroupId和Artif

- ArcGIS地图切片原理与算法

数智侠

GIS

ArcGIS地图切图系列之(一)切片原理解析点击打开链接ArcGIS地图切图系列之(二)JAVA实现点击打开链接ArcGIS地图切图系列之(三)MapReduce实现点击打开链接

- 数据中台建设方案-基于大数据平台(下)

FRDATA1550333

大数据数据库架构数据库开发数据库

数据中台建设方案-基于大数据平台(下)1数据中台建设方案1.1总体建设方案1.2大数据集成平台1.3大数据计算平台1.3.1数据计算层建设计算层技术含量最高,最为活跃,发展也最为迅速。计算层主要实现各类数据的加工、处理和计算,为上层应用提供良好和充分的数据支持。大数据基础平台技术能力的高低,主要依赖于该层组件的发展。本建设方案满足甲方对于数据计算层建设的基本要求:利用了MapReduce、Spar

- MIT6.824 课程-MapReduce

余为民同志

6.824mapreduce分布式6.824

MapReduce:在大型集群上简化数据处理概要MapReduce是一种编程模型,它是一种用于处理和生成大型数据集的实现。用户通过指定一个用来处理键值对(Key/Value)的map函数来生成一个中间键值对集合。然后,再指定一个reduce函数,它用来合并所有的具有相同中间key的中间value。现实生活中有许多任务可以通过该模型进行表达,具体案例会在论文中展现出来。以这种函数式风格编写的程序能够

- Hadoop之mapreduce -- WrodCount案例以及各种概念

lzhlizihang

hadoopmapreduce大数据

文章目录一、MapReduce的优缺点二、MapReduce案例--WordCount1、导包2、Mapper方法3、Partitioner方法(自定义分区器)4、reducer方法5、driver(main方法)6、Writable(手机流量统计案例的实体类)三、关于片和块1、什么是片,什么是块?2、mapreduce启动多少个MapTask任务?四、MapReduce的原理五、Shuffle过

- Yarn介绍 - 大数据框架

why do not

大数据hadoop

YARN的概述YARN是一个资源调度平台,负责为运算程序提供服务器运算资源,相当于一个分布式的操作系统平台,而MapReduce等运算程序则相当于运行于操作系统之上的应用程序YARN是Hadoop2.x版本中的一个新特性。它的出现其实是为了解决第一代MapReduce编程框架的不足,提高集群环境下的资源利用率,这些资源包括内存,磁盘,网络,IO等。Hadoop2.X版本中重新设计的这个YARN集群

- 浅析大数据Hadoop之YARN架构

haotian1685

python数据清洗人工智能大数据大数据学习深度学习大数据大数据学习YARNhadoop

1.YARN本质上是资源管理系统。YARN提供了资源管理和资源调度等机制1.1原HadoopMapReduce框架对于业界的大数据存储及分布式处理系统来说,Hadoop是耳熟能详的卓越开源分布式文件存储及处理框架,对于Hadoop框架的介绍在此不再累述,读者可参考Hadoop官方简介。使用和学习过老Hadoop框架(0.20.0及之前版本)的同仁应该很熟悉如下的原MapReduce框架图:1.2H

- Hive的优势与使用场景

傲雪凌霜,松柏长青

后端大数据hivehadoop数据仓库

Hive的优势Hive作为一个构建在Hadoop上的数据仓库工具,具有许多优势,特别是在处理大规模数据分析任务时。以下是Hive的主要优势:1.与Hadoop生态系统的紧密集成Hive构建在Hadoop分布式文件系统(HDFS)之上,能够处理海量数据并进行分布式计算。它利用Hadoop的MapReduce或Spark来执行查询,具备高度扩展性,适合大数据处理。2.支持SQL-like查询语言(Hi

- Spark分布式计算原理

NightFall丶

#Sparkapachesparkspark

目录一、RDD依赖与DAG原理1.1RDD的转换一、RDD依赖与DAG原理Spark根据计算逻辑中的RDD的转换与动作生成RDD的依赖关系,同时这个计算链也形成了逻辑上的DAG。1.1RDD的转换e.g.(以wordcount为例)packagesparkimportorg.apache.spark.{SparkConf,SparkContext}objectWordCount{defmain(a

- Spark概念知识笔记

kuntoria

最近总结了个人的各项能力,发现在大数据这方面几乎没有涉及,因此想补充这方面的知识,丰富自己的知识体系,大数据生态主要包含:Hadoop和Spark两个部分,Spark作用相当于MapReduceMapReduce和Spark对比如下磁盘由于其物理特性现在,速度提升非常困难,远远跟不上CPU和内存的发展速度。近几十年来,内存的发展一直遵循摩尔定律,价格在下降,内存在增加。现在主流的服务器,几百GB或

- 【Hadoop】- MapReduce & YARN 初体验[9]

星星法术嗲人

hadoophadoopmapreduce

目录提交MapReduce程序至YARN运行1、提交wordcount示例程序1.1、先准备words.txt文件上传到hdfs,文件内容如下:1.2、在hdfs中创建两个文件夹,分别为/input、/output1.3、将创建好的words.txt文件上传到hdfs中/input1.4、提交MapReduce程序至YARN1.5、可通过node1:8088查看1.6、返回我们的服务器,检查输出文

- DAG (directed acyclic graph) 作为大数据执行引擎的优点

joeywen

分布式计算StormSparkStorm杂谈StormsparkDAG

TL;DR-ConceptuallyDAGmodelisastrictgeneralizationofMapReducemodel.DAG-basedsystemslikeSparkandTezthatareawareofthewholeDAGofoperationscandobetterglobaloptimizationsthansystemslikeHadoopMapReducewhicha

- Hadoop组件

静听山水

Hadoophadoop

这张图片展示了Hadoop生态系统的一些主要组件。Hadoop是一个开源的大数据处理框架,由Apache基金会维护。以下是每个组件的简短介绍:HBase:一个分布式、面向列的NoSQL数据库,基于GoogleBigTable的设计理念构建。HBase提供了实时读写访问大量结构化和半结构化数据的能力,非常适合大规模数据存储。Pig:一种高级数据流语言和执行引擎,用于编写MapReduce任务。Pig

- Hadoop-MapReduce机制原理

H.S.T不想卷

大数据hadoopmapreduce大数据

MapReduce机制原理1、MapReduce概述2、MapReduce特点3、MapReduce局限性4、MapTask5、Map阶段步骤:6、Reduce阶段步骤:7、MapReduce阶段图1、MapReduce概述 HadoopMapReduce是一个分布式计算框架,用于轻松编写分布式应用程序,这些应用程序以可靠,容错的方式并行处理大型硬件集群(数千个节点)上的大量数据(多TB数据集)

- EMR组件部署指南

ivwdcwso

运维EMR大数据开源运维

EMR(ElasticMapReduce)是一个大数据处理和分析平台,包含了多个开源组件。本文将详细介绍如何部署EMR的主要组件,包括:JDK1.8ElasticsearchKafkaFlinkZookeeperHBaseHadoopPhoenixScalaSparkHive准备工作所有操作都在/data目录下进行。首先安装JDK1.8:yuminstalljava-1.8.0-openjdk部署

- hive学习记录

2302_80695227

hive学习hadoop

一、Hive的基本概念定义:Hive是基于Hadoop的一个数据仓库工具,可以将结构化的数据文件映射为一张表,并提供类SQL查询功能。Hive将HQL(HiveQueryLanguage)转化成MapReduce程序或其他分布式计算引擎(如Tez、Spark)的任务进行计算。数据存储:Hive处理的数据存储在HDFS(HadoopDistributedFileSystem)上。执行引擎:Hive的

- Mapreduce是什么

whisky丶

简单来说,MapReduce是一个编程模型,用以进行大数据量的计算。HadoopMapReduce是一个软件框架,基于该框架能够容易地编写应用程序,这些应用程序能够运行在由上千个商用机器组成的大集群上,并以一种可靠的,具有容错能力的方式并行地处理上TB级别的海量数据集。Mapreduce的特点:软件框架并行处理可靠且容错大规模集群海量数据集

- Hadoop之MapReduce

qq_43198449

1.MapReduce解决的问题1)数据问题:10G的TXT文件2)生活问题:统计分类上海市的图书馆的书2.MapReduce是什么MapReduce是一种分布式的离线计算框架,是一种编程模型,用于大规模数据集(大于1TB)的并行运算将自己的程序运行在分布式系统上。概念是:Map(映射)"和"Reduce(归约)指定一个Map(映射)函数,用来把一组键值对映射成一组新的键值对,指定并发的Reduc

- 生产环境中MapReduce的最佳实践

大数据深度洞察

Hadoopmapreduce大数据

目录MapReduce跑的慢的原因MapReduce常用调优参数1.MapTask相关参数2.ReduceTask相关参数3.总体调优参数4.其他重要参数调优策略MapReduce数据倾斜问题1.数据预处理2.自定义Partitioner3.调整Reduce任务数4.小文件问题处理5.二次排序6.使用桶表7.使用随机前缀8.参数调优实施步骤MapReduce跑的慢的原因MapReduce程序效率的

- Hive 运行在 Tez 上

爱吃酸梨

大数据

Tez介绍Tez是一种基于内存的计算框架,速度比MapReduce要快解释:浅蓝色方块表示Map任务,绿色方块表示Reduce任务,蓝色边框的云朵表示中间结果落地磁盘。Tez下载Tez官网Tez在Hive上的运用前提要有Hadoop集群上传Tez压缩包到Hive节点上tar-zxvfapache-tez-0.9.1-bin.tar.gz-C/opt/module/tez-0.9.1修改$HIVE_

- 经验笔记:Hadoop

漆黑的莫莫

随手笔记笔记hadoop大数据

Hadoop经验笔记一、Hadoop概述Hadoop是一个开源软件框架,用于分布式存储和处理大规模数据集。其设计目的是为了在商用硬件上运行,具备高容错性和可扩展性。Hadoop的核心是HadoopDistributedFileSystem(HDFS)和YARN(YetAnotherResourceNegotiator),这两个组件加上MapReduce编程模型,构成了Hadoop的基本架构。二、H

- 大数据毕业设计hadoop+spark+hive微博舆情情感分析 知识图谱微博推荐系统

qq_79856539

javaweb大数据hadoop课程设计

(一)Selenium自动化Python爬虫工具采集新浪微博评论、热搜、文章等约10万条存入.csv文件作为数据集;(二)使用pandas+numpy或MapReduce对数据进行数据清洗,生成最终的.csv文件并上传到hdfs;(三)使用hive数仓技术建表建库,导入.csv数据集;(四)离线分析采用hive_sql完成,实时分析利用Spark之Scala完成;(五)统计指标使用sqoop导入m

- Data-Intensive Text Processing with MapReduce

西二旗小码农

自然语言处理(NLP)mapreduceprocessing算法integerhadooppair

大量高效的MapReduce程序因为它简单的编写方法而产生:除了准备输入数据之外,程序员只需要实现mapper和ruducer接口,或加上合并器(combiner)和分配器(partitioner)。所有其他方面的执行都透明地控制在由一个节点到上千个节点组成的,数据级别达到GB到PB级别的集群的执行框架中。然而,这就意味着程序员想在上面实现的算法必须表现为一些严格定义的组件,必须用特殊的方法把它们

- 双十一云起实验室体验专场,七大场景,体验有礼

阿里云天池

体验场景活动云计算大数据容器云原生

云起实验室云起实验室是阿里云为开发者打造的一站式体验学习平台,在这里你可以了解并亲自动手体验各类云产品和云计算基础,无需关注资源开通和底层产品,无需任何费用。只要有一颗想要了解云、学习云、体验云的心,这里就是你的上云第一站。场景介绍此次体验《双十一云起实验室体验专场》,涉及七大技术场景实践体验,云上实践,云上成长。\大数据计算场景《基于EMR离线数据分析》E-MapReduce(简称“EMR”)是

- 小白学习大数据测试之hadoop hdfs和MapReduce小实战

大数据学习02

转发是对小编的最大支持在湿货|大数据测试之hadoop单机环境搭建(超级详细版)这个基础上,我们来运行一个官网的MapReducedemo程序来看看效果和处理过程。大致步骤如下:新建一个文件test.txt,内容为HelloHadoopHelloxiaoqiangHellotestingbangHellohttp://xqtesting.sxl.cn将test.txt上传到hdfs的根目录/usr

- JAVA中的Enum

周凡杨

javaenum枚举

Enum是计算机编程语言中的一种数据类型---枚举类型。 在实际问题中,有些变量的取值被限定在一个有限的范围内。 例如,一个星期内只有七天 我们通常这样实现上面的定义:

public String monday;

public String tuesday;

public String wensday;

public String thursday

- 赶集网mysql开发36条军规

Bill_chen

mysql业务架构设计mysql调优mysql性能优化

(一)核心军规 (1)不在数据库做运算 cpu计算务必移至业务层; (2)控制单表数据量 int型不超过1000w,含char则不超过500w; 合理分表; 限制单库表数量在300以内; (3)控制列数量 字段少而精,字段数建议在20以内

- Shell test命令

daizj

shell字符串test数字文件比较

Shell test命令

Shell中的 test 命令用于检查某个条件是否成立,它可以进行数值、字符和文件三个方面的测试。 数值测试 参数 说明 -eq 等于则为真 -ne 不等于则为真 -gt 大于则为真 -ge 大于等于则为真 -lt 小于则为真 -le 小于等于则为真

实例演示:

num1=100

num2=100if test $[num1]

- XFire框架实现WebService(二)

周凡杨

javawebservice

有了XFire框架实现WebService(一),就可以继续开发WebService的简单应用。

Webservice的服务端(WEB工程):

两个java bean类:

Course.java

package cn.com.bean;

public class Course {

private

- 重绘之画图板

朱辉辉33

画图板

上次博客讲的五子棋重绘比较简单,因为只要在重写系统重绘方法paint()时加入棋盘和棋子的绘制。这次我想说说画图板的重绘。

画图板重绘难在需要重绘的类型很多,比如说里面有矩形,园,直线之类的,所以我们要想办法将里面的图形加入一个队列中,这样在重绘时就

- Java的IO流

西蜀石兰

java

刚学Java的IO流时,被各种inputStream流弄的很迷糊,看老罗视频时说想象成插在文件上的一根管道,当初听时觉得自己很明白,可到自己用时,有不知道怎么代码了。。。

每当遇到这种问题时,我习惯性的从头开始理逻辑,会问自己一些很简单的问题,把这些简单的问题想明白了,再看代码时才不会迷糊。

IO流作用是什么?

答:实现对文件的读写,这里的文件是广义的;

Java如何实现程序到文件

- No matching PlatformTransactionManager bean found for qualifier 'add' - neither

林鹤霄

java.lang.IllegalStateException: No matching PlatformTransactionManager bean found for qualifier 'add' - neither qualifier match nor bean name match!

网上找了好多的资料没能解决,后来发现:项目中使用的是xml配置的方式配置事务,但是

- Row size too large (> 8126). Changing some columns to TEXT or BLOB

aigo

column

原文:http://stackoverflow.com/questions/15585602/change-limit-for-mysql-row-size-too-large

异常信息:

Row size too large (> 8126). Changing some columns to TEXT or BLOB or using ROW_FORMAT=DYNAM

- JS 格式化时间

alxw4616

JavaScript

/**

* 格式化时间 2013/6/13 by 半仙

[email protected]

* 需要 pad 函数

* 接收可用的时间值.

* 返回替换时间占位符后的字符串

*

* 时间占位符:年 Y 月 M 日 D 小时 h 分 m 秒 s 重复次数表示占位数

* 如 YYYY 4占4位 YY 占2位<p></p>

* MM DD hh mm

- 队列中数据的移除问题

百合不是茶

队列移除

队列的移除一般都是使用的remov();都可以移除的,但是在昨天做线程移除的时候出现了点问题,没有将遍历出来的全部移除, 代码如下;

//

package com.Thread0715.com;

import java.util.ArrayList;

public class Threa

- Runnable接口使用实例

bijian1013

javathreadRunnablejava多线程

Runnable接口

a. 该接口只有一个方法:public void run();

b. 实现该接口的类必须覆盖该run方法

c. 实现了Runnable接口的类并不具有任何天

- oracle里的extend详解

bijian1013

oracle数据库extend

扩展已知的数组空间,例:

DECLARE

TYPE CourseList IS TABLE OF VARCHAR2(10);

courses CourseList;

BEGIN

-- 初始化数组元素,大小为3

courses := CourseList('Biol 4412 ', 'Psyc 3112 ', 'Anth 3001 ');

--

- 【httpclient】httpclient发送表单POST请求

bit1129

httpclient

浏览器Form Post请求

浏览器可以通过提交表单的方式向服务器发起POST请求,这种形式的POST请求不同于一般的POST请求

1. 一般的POST请求,将请求数据放置于请求体中,服务器端以二进制流的方式读取数据,HttpServletRequest.getInputStream()。这种方式的请求可以处理任意数据形式的POST请求,比如请求数据是字符串或者是二进制数据

2. Form

- 【Hive十三】Hive读写Avro格式的数据

bit1129

hive

1. 原始数据

hive> select * from word;

OK

1 MSN

10 QQ

100 Gtalk

1000 Skype

2. 创建avro格式的数据表

hive> CREATE TABLE avro_table(age INT, name STRING)STORE

- nginx+lua+redis自动识别封解禁频繁访问IP

ronin47

在站点遇到攻击且无明显攻击特征,造成站点访问慢,nginx不断返回502等错误时,可利用nginx+lua+redis实现在指定的时间段 内,若单IP的请求量达到指定的数量后对该IP进行封禁,nginx返回403禁止访问。利用redis的expire命令设置封禁IP的过期时间达到在 指定的封禁时间后实行自动解封的目的。

一、安装环境:

CentOS x64 release 6.4(Fin

- java-二叉树的遍历-先序、中序、后序(递归和非递归)、层次遍历

bylijinnan

java

import java.util.LinkedList;

import java.util.List;

import java.util.Stack;

public class BinTreeTraverse {

//private int[] array={ 1, 2, 3, 4, 5, 6, 7, 8, 9 };

private int[] array={ 10,6,

- Spring源码学习-XML 配置方式的IoC容器启动过程分析

bylijinnan

javaspringIOC

以FileSystemXmlApplicationContext为例,把Spring IoC容器的初始化流程走一遍:

ApplicationContext context = new FileSystemXmlApplicationContext

("C:/Users/ZARA/workspace/HelloSpring/src/Beans.xml&q

- [科研与项目]民营企业请慎重参与军事科技工程

comsci

企业

军事科研工程和项目 并非要用最先进,最时髦的技术,而是要做到“万无一失”

而民营科技企业在搞科技创新工程的时候,往往考虑的是技术的先进性,而对先进技术带来的风险考虑得不够,在今天提倡军民融合发展的大环境下,这种“万无一失”和“时髦性”的矛盾会日益凸显。。。。。。所以请大家在参与任何重大的军事和政府项目之前,对

- spring 定时器-两种方式

cuityang

springquartz定时器

方式一:

间隔一定时间 运行

<bean id="updateSessionIdTask" class="com.yang.iprms.common.UpdateSessionTask" autowire="byName" />

<bean id="updateSessionIdSchedule

- 简述一下关于BroadView站点的相关设计

damoqiongqiu

view

终于弄上线了,累趴,戳这里http://www.broadview.com.cn

简述一下相关的技术点

前端:jQuery+BootStrap3.2+HandleBars,全站Ajax(貌似对SEO的影响很大啊!怎么破?),用Grunt对全部JS做了压缩处理,对部分JS和CSS做了合并(模块间存在很多依赖,全部合并比较繁琐,待完善)。

后端:U

- 运维 PHP问题汇总

dcj3sjt126com

windows2003

1、Dede(织梦)发表文章时,内容自动添加关键字显示空白页

解决方法:

后台>系统>系统基本参数>核心设置>关键字替换(是/否),这里选择“是”。

后台>系统>系统基本参数>其他选项>自动提取关键字,这里选择“是”。

2、解决PHP168超级管理员上传图片提示你的空间不足

网站是用PHP168做的,反映使用管理员在后台无法

- mac 下 安装php扩展 - mcrypt

dcj3sjt126com

PHP

MCrypt是一个功能强大的加密算法扩展库,它包括有22种算法,phpMyAdmin依赖这个PHP扩展,具体如下:

下载并解压libmcrypt-2.5.8.tar.gz。

在终端执行如下命令: tar zxvf libmcrypt-2.5.8.tar.gz cd libmcrypt-2.5.8/ ./configure --disable-posix-threads --

- MongoDB更新文档 [四]

eksliang

mongodbMongodb更新文档

MongoDB更新文档

转载请出自出处:http://eksliang.iteye.com/blog/2174104

MongoDB对文档的CURD,前面的博客简单介绍了,但是对文档更新篇幅比较大,所以这里单独拿出来。

语法结构如下:

db.collection.update( criteria, objNew, upsert, multi)

参数含义 参数

- Linux下的解压,移除,复制,查看tomcat命令

y806839048

tomcat

重复myeclipse生成webservice有问题删除以前的,干净

1、先切换到:cd usr/local/tomcat5/logs

2、tail -f catalina.out

3、这样运行时就可以实时查看运行日志了

Ctrl+c 是退出tail命令。

有问题不明的先注掉

cp /opt/tomcat-6.0.44/webapps/g

- Spring之使用事务缘由(3-XML实现)

ihuning

spring

用事务通知声明式地管理事务

事务管理是一种横切关注点。为了在 Spring 2.x 中启用声明式事务管理,可以通过 tx Schema 中定义的 <tx:advice> 元素声明事务通知,为此必须事先将这个 Schema 定义添加到 <beans> 根元素中去。声明了事务通知后,就需要将它与切入点关联起来。由于事务通知是在 <aop:

- GCD使用经验与技巧浅谈

啸笑天

GC

前言

GCD(Grand Central Dispatch)可以说是Mac、iOS开发中的一大“利器”,本文就总结一些有关使用GCD的经验与技巧。

dispatch_once_t必须是全局或static变量

这一条算是“老生常谈”了,但我认为还是有必要强调一次,毕竟非全局或非static的dispatch_once_t变量在使用时会导致非常不好排查的bug,正确的如下: 1

- linux(Ubuntu)下常用命令备忘录1

macroli

linux工作ubuntu

在使用下面的命令是可以通过--help来获取更多的信息1,查询当前目录文件列表:ls

ls命令默认状态下将按首字母升序列出你当前文件夹下面的所有内容,但这样直接运行所得到的信息也是比较少的,通常它可以结合以下这些参数运行以查询更多的信息:

ls / 显示/.下的所有文件和目录

ls -l 给出文件或者文件夹的详细信息

ls -a 显示所有文件,包括隐藏文

- nodejs同步操作mysql

qiaolevip

学习永无止境每天进步一点点mysqlnodejs

// db-util.js

var mysql = require('mysql');

var pool = mysql.createPool({

connectionLimit : 10,

host: 'localhost',

user: 'root',

password: '',

database: 'test',

port: 3306

});

- 一起学Hive系列文章

superlxw1234

hiveHive入门

[一起学Hive]系列文章 目录贴,入门Hive,持续更新中。

[一起学Hive]之一—Hive概述,Hive是什么

[一起学Hive]之二—Hive函数大全-完整版

[一起学Hive]之三—Hive中的数据库(Database)和表(Table)

[一起学Hive]之四-Hive的安装配置

[一起学Hive]之五-Hive的视图和分区

[一起学Hive

- Spring开发利器:Spring Tool Suite 3.7.0 发布

wiselyman

spring

Spring Tool Suite(简称STS)是基于Eclipse,专门针对Spring开发者提供大量的便捷功能的优秀开发工具。

在3.7.0版本主要做了如下的更新:

将eclipse版本更新至Eclipse Mars 4.5 GA

Spring Boot(JavaEE开发的颠覆者集大成者,推荐大家学习)的配置语言YAML编辑器的支持(包含自动提示,