Integrating Kafka with Spring Boot for simple message production and consumption

Kafka is installed locally; the operating system is Windows.

1. Add the dependency:

<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
</dependency>

2. Configure application.properties:

# Kafka configuration
kafka.consumer.zookeeper.connect=localhost:2181
kafka.consumer.servers=localhost:9092
kafka.consumer.enable.auto.commit=true
kafka.consumer.session.timeout=6000
kafka.consumer.auto.commit.interval=100
kafka.consumer.auto.offset.reset=latest
kafka.consumer.topic=test
kafka.consumer.group.id=test
kafka.consumer.concurrency=10
kafka.producer.servers=localhost:9092
kafka.producer.retries=0
kafka.producer.batch.size=4096
kafka.producer.linger=1
kafka.producer.buffer.memory=40960

3. Create a Message class to make the result easy to inspect:

package com.example.spring_kafka_demo.model;

import java.util.Date;

public class Message {

    private Long id;
    private String msg;
    private Date sendTime;

    public void setId(Long id) {
        this.id = id;
    }

    public void setMsg(String msg) {
        this.msg = msg;
    }

    public void setSendTime(Date sendTime) {
        this.sendTime = sendTime;
    }

    @Override
    public String toString() {
        return "Message{" +
                "id=" + id +
                ", msg='" + msg + '\'' +
                ", sendTime=" + sendTime +
                '}';
    }
}
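
The controller in step 5 returns `message.toString()` as the HTTP response, so it helps to see what that string looks like. The following is a minimal sketch that repeats the Message class (made package-private so everything fits in one file) and prints one instance. The `MessageDemo` class and the fixed timestamp are assumptions added for illustration only.

```java
import java.util.Date;

// Copy of the tutorial's Message class, package-private for a single-file demo.
class Message {
    private Long id;
    private String msg;
    private Date sendTime;

    public void setId(Long id) { this.id = id; }
    public void setMsg(String msg) { this.msg = msg; }
    public void setSendTime(Date sendTime) { this.sendTime = sendTime; }

    @Override
    public String toString() {
        return "Message{" + "id=" + id + ", msg='" + msg + '\''
                + ", sendTime=" + sendTime + '}';
    }
}

// Hypothetical demo class, not part of the original project.
class MessageDemo {
    public static void main(String[] args) {
        Message message = new Message();
        message.setId(1L);
        message.setMsg("hello");
        message.setSendTime(new Date(0L)); // fixed timestamp, chosen only for the demo
        // Prints Message{id=1, msg='hello', sendTime=...}; the date text depends on the JVM time zone.
        System.out.println(message);
    }
}
```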

4. Initialize the producer:

package com.example.spring_kafka_demo.producer;

import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.common.serialization.StringSerializer;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.annotation.EnableKafka;
import org.springframework.kafka.core.DefaultKafkaProducerFactory;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.core.ProducerFactory;

import java.util.HashMap;
import java.util.Map;

@Configuration
@EnableKafka
public class KafkaProducerConfig {

    @Value("${kafka.producer.servers}")
    private String servers;

    @Value("${kafka.producer.retries}")
    private int retries;

    @Value("${kafka.producer.batch.size}")
    private int batchSize;

    @Value("${kafka.producer.linger}")
    private int linger;

    @Value("${kafka.producer.buffer.memory}")
    private int bufferMemory;

    // Copies the kafka.producer.* properties into the Kafka client settings.
    public Map<String, Object> producerConfigs() {
        Map<String, Object> props = new HashMap<>();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, servers);
        props.put(ProducerConfig.RETRIES_CONFIG, retries);
        props.put(ProducerConfig.BATCH_SIZE_CONFIG, batchSize);
        props.put(ProducerConfig.LINGER_MS_CONFIG, linger);
        props.put(ProducerConfig.BUFFER_MEMORY_CONFIG, bufferMemory);
        // Keys and values are both sent as plain strings.
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
        return props;
    }

    public ProducerFactory<String, String> producerFactory() {
        return new DefaultKafkaProducerFactory<>(producerConfigs());
    }

    @Bean
    public KafkaTemplate<String, String> kafkaTemplate() {
        return new KafkaTemplate<>(producerFactory());
    }
}
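
The custom `kafka.producer.*` names in application.properties are not Kafka settings themselves; the configuration class translates them into the client's own keys. As a rough sketch of that translation using only the JDK, the literal strings below are the key names behind the `ProducerConfig` constants. The class name `ProducerPropsSketch` and its helper methods are hypothetical and exist only for this illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Properties;

// Hypothetical helper, not part of the original project.
class ProducerPropsSketch {

    // Loads the producer section of application.properties from an inline string.
    static Properties sampleAppProperties() throws IOException {
        Properties app = new Properties();
        app.load(new StringReader(String.join("\n",
                "kafka.producer.servers=localhost:9092",
                "kafka.producer.retries=0",
                "kafka.producer.batch.size=4096",
                "kafka.producer.linger=1",
                "kafka.producer.buffer.memory=40960")));
        return app;
    }

    private static int intValue(Properties app, String key) {
        return Integer.parseInt(app.getProperty(key).trim());
    }

    // Builds the same kind of map producerConfigs() returns, with literal Kafka key names.
    static Map<String, Object> toKafkaProducerProps(Properties app) {
        Map<String, Object> props = new LinkedHashMap<>();
        props.put("bootstrap.servers", app.getProperty("kafka.producer.servers"));
        props.put("retries", intValue(app, "kafka.producer.retries"));
        props.put("batch.size", intValue(app, "kafka.producer.batch.size"));
        props.put("linger.ms", intValue(app, "kafka.producer.linger"));
        props.put("buffer.memory", intValue(app, "kafka.producer.buffer.memory"));
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) throws IOException {
        toKafkaProducerProps(sampleAppProperties())
                .forEach((key, value) -> System.out.println(key + " = " + value));
    }
}
```

Note that `kafka.producer.linger` ends up as `linger.ms`, so the value 1 means one millisecond.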

5. For convenience, create a controller that sends the message:

package com.example.spring_kafka_demo.controller;

import com.example.spring_kafka_demo.model.Message;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestMethod;
import org.springframework.web.bind.annotation.RestController;

import javax.servlet.http.HttpServletRequest;
import javax.servlet.http.HttpServletResponse;
import java.util.Date;

@RestController
@RequestMapping("/kafka")
public class CollectController {

    protected Logger logger = LoggerFactory.getLogger(this.getClass());

    @Autowired
    private KafkaTemplate<String, String> kafkaTemplate;

    @RequestMapping(value = "/send", method = RequestMethod.GET)
    public String sendKafka(HttpServletRequest request, HttpServletResponse response) {
        String msg = request.getParameter("message");
        Message message = new Message();
        message.setId(1L);
        message.setSendTime(new Date());
        try {
            // send() is asynchronous: failures reported later by the broker arrive
            // through the returned future and are not caught by this block.
            kafkaTemplate.send("test", "key", msg);
            message.setMsg(msg + "--->sent successfully!");
        } catch (Exception e) {
            logger.error("Failed to send message to Kafka", e);
            message.setMsg(msg + "--->send failed!");
        }
        return message.toString();
    }
}
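
One caveat about the controller: `KafkaTemplate.send(...)` hands the record to the producer and returns a future (a `CompletableFuture` in Spring for Apache Kafka 3.x, a `ListenableFuture` in 2.x), so a try/catch around the call only sees errors thrown before the record is queued. The sketch below illustrates the pattern with plain JDK classes. `fakeSend` is a hypothetical stand-in for the template and is not a Spring API; the `brokerUp` flag exists only to simulate both outcomes.

```java
import java.util.concurrent.CompletableFuture;

// Hypothetical demo class, not part of the original project.
class AsyncSendSketch {

    // Stand-in for an asynchronous send: the outcome is delivered through the future.
    static CompletableFuture<String> fakeSend(String topic, String key, String value, boolean brokerUp) {
        CompletableFuture<String> future = new CompletableFuture<>();
        if (brokerUp) {
            future.complete(topic + "/" + key); // pretend acknowledgement
        } else {
            future.completeExceptionally(new IllegalStateException("broker unreachable"));
        }
        return future;
    }

    // Turns the eventual outcome into the status text the controller wants to return.
    static String describe(CompletableFuture<String> future, String msg) {
        return future
                .handle((ack, error) -> error == null
                        ? msg + "--->sent"
                        : msg + "--->failed: " + error.getMessage())
                .join(); // blocks until the outcome is known
    }

    public static void main(String[] args) {
        System.out.println(describe(fakeSend("test", "key", "hi", true), "hi"));
        System.out.println(describe(fakeSend("test", "key", "hi", false), "hi"));
    }
}
```

Blocking with `join()` keeps the demo simple; a real endpoint might instead log the failure in a callback and return immediately.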

6. With the above in place, initialize the consumer. First create a listener class that listens on the given topic:

package com.example.spring_kafka_demo.listener;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.kafka.annotation.KafkaListener;

public class Listener {

    protected final Logger logger = LoggerFactory.getLogger(Listener.class);

    @KafkaListener(topics = {"test"})
    public void listen(ConsumerRecord<?, ?> record) {
        // String concatenation handles a null value, so no explicit toString() is needed.
        logger.info("kafka-message: key-->" + record.key() + ",value-->" + record.value());
    }
}

7. Initialize the consumer:

package com.example.spring_kafka_demo.consumer;

import com.example.spring_kafka_demo.listener.Listener;
import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.annotation.EnableKafka;
import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
import org.springframework.kafka.config.KafkaListenerContainerFactory;
import org.springframework.kafka.core.ConsumerFactory;
import org.springframework.kafka.core.DefaultKafkaConsumerFactory;
import org.springframework.kafka.listener.ConcurrentMessageListenerContainer;

import java.util.HashMap;
import java.util.Map;

@Configuration
@EnableKafka
public class KafkaConsumerConfig {

    @Value("${kafka.consumer.servers}")
    private String servers;

    @Value("${kafka.consumer.enable.auto.commit}")
    private boolean enableAutoCommit;

    @Value("${kafka.consumer.session.timeout}")
    private String sessionTimeout;

    @Value("${kafka.consumer.auto.commit.interval}")
    private String autoCommitInterval;

    @Value("${kafka.consumer.group.id}")
    private String groupId;

    @Value("${kafka.consumer.auto.offset.reset}")
    private String autoOffsetReset;

    @Value("${kafka.consumer.concurrency}")
    private int concurrency;

    // Copies the kafka.consumer.* properties into the Kafka client settings.
    public Map<String, Object> consumerConfigs() {
        Map<String, Object> props = new HashMap<>();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, servers);
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, enableAutoCommit);
        props.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, autoCommitInterval);
        props.put(ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG, sessionTimeout);
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        props.put(ConsumerConfig.GROUP_ID_CONFIG, groupId);
        props.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, autoOffsetReset);
        return props;
    }

    public ConsumerFactory<String, String> consumerFactory() {
        return new DefaultKafkaConsumerFactory<>(consumerConfigs());
    }

    @Bean
    public KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, String>> kafkaListenerContainerFactory() {
        ConcurrentKafkaListenerContainerFactory<String, String> factory = new ConcurrentKafkaListenerContainerFactory<>();
        factory.setConsumerFactory(consumerFactory());
        // Apply the configured number of consumer threads (the field was otherwise unused).
        factory.setConcurrency(concurrency);
        factory.getContainerProperties().setPollTimeout(1500);
        return factory;
    }

    @Bean
    public Listener listener() {
        return new Listener();
    }
}
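
A note on `kafka.consumer.concurrency=10`: when this value is passed to the container factory with `setConcurrency`, the container starts that many consumers in the same group. Within a group each partition is read by at most one consumer, so any consumers beyond the partition count of the `test` topic stay idle. The following sketch shows the arithmetic for a range-style split of a single topic. The class `ConcurrencySketch` is hypothetical, the partition count of 3 is an assumed example value, and the actual assignment depends on the assignor configured for the consumer.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical demo class, not part of the original project.
class ConcurrencySketch {

    // Range-style split: every consumer gets partitions / consumers,
    // and the first (partitions % consumers) consumers get one extra.
    static List<Integer> partitionsPerConsumer(int partitions, int consumers) {
        List<Integer> counts = new ArrayList<>();
        int base = partitions / consumers;
        int extra = partitions % consumers;
        for (int i = 0; i < consumers; i++) {
            counts.add(base + (i < extra ? 1 : 0));
        }
        return counts;
    }

    // Counts the consumers that receive no partition at all.
    static long idleConsumers(int partitions, int consumers) {
        return partitionsPerConsumer(partitions, consumers).stream()
                .filter(count -> count == 0)
                .count();
    }

    public static void main(String[] args) {
        int partitions = 3;   // assumed partition count of the "test" topic
        int concurrency = 10; // value from application.properties
        System.out.println(partitionsPerConsumer(partitions, concurrency));
        System.out.println("idle consumers: " + idleConsumers(partitions, concurrency));
    }
}
```

For a local single-partition topic, a concurrency of 1 would be enough; higher values only pay off once the topic has at least that many partitions.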

8. Run the SpringKafkaDemoApplication main class:

package com.example.spring_kafka_demo;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication
public class SpringKafkaDemoApplication {

    public static void main(String[] args) {
        SpringApplication.run(SpringKafkaDemoApplication.class, args);
    }
}

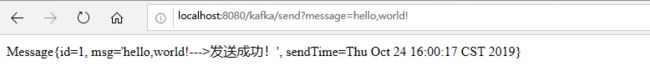

Test URL: http://localhost:8080/kafka/send?message=hello,world!
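
Browsers usually tolerate the comma and exclamation mark in that URL, but when calling the endpoint from code it is safer to encode the query value. Below is a minimal sketch using the JDK HTTP client, assuming Java 11 or later. The class `SendUrlSketch` and the `--send` flag are hypothetical, and the request is only sent when the flag is given, since it needs the application running on port 8080.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class, not part of the original project.
class SendUrlSketch {

    // Builds the test URL with the message encoded as a query parameter.
    static URI sendUri(String message) {
        String encoded = URLEncoder.encode(message, StandardCharsets.UTF_8);
        return URI.create("http://localhost:8080/kafka/send?message=" + encoded);
    }

    // Performs the GET request and returns the controller's response body.
    static String call(URI uri) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(uri).GET().build();
        return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    public static void main(String[] args) throws Exception {
        URI uri = sendUri("hello,world!");
        System.out.println(uri);
        if (args.length > 0 && args[0].equals("--send")) {
            System.out.println(call(uri)); // requires the Spring Boot app to be running
        }
    }
}
```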

Console: the listener logs the key and value of the received message.

Done!