Coursera-Machine-Learning-ex1

warmUpExercise

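This part is mainly about getting familiar with how to run the program: simply return a 5x5 identity matrix as required. The code in warmUpExercise.m is as follows: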

function A = warmUpExercise()

%WARMUPEXERCISE Example function in octave

% A = WARMUPEXERCISE() is an example function that returns the 5x5 identity matrix

% ============= YOUR CODE HERE ==============

% Instructions: Return the 5x5 identity matrix

% In octave, we return values by defining which variables

% represent the return values (at the top of the file)

% and then set them accordingly.

A = eye(5);

% ===========================================

end

ex1

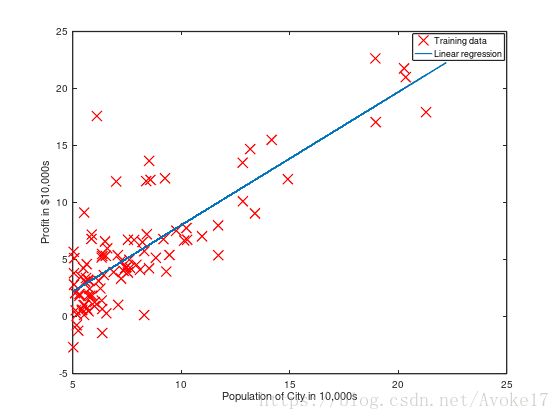

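The first programming assignment asks us to use univariate linear regression to predict the profit of a food truck. The dataset is stored in ex1data1.txt: the first column is the population of a city and the second column is the food truck's profit in that city, where a negative value indicates a loss. The following code loads the data and initializes some values we will need, such as the number of training examples m: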

%% ======================= Part 2: Plotting =======================

fprintf('Plotting Data ...\n')

data = load('ex1data1.txt');

X = data(:, 1); y = data(:, 2);

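m = length(y); % number of training examples

We need to complete plotData.m to draw the scatter plot. The code is already given in the assignment PDF, so we only need to fill it into the file: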

function plotData(x, y)

%PLOTDATA Plots the data points x and y into a new figure

% PLOTDATA(x,y) plots the data points and gives the figure axes labels of

% population and profit.

figure; % open a new figure window

% ====================== YOUR CODE HERE ======================

% Instructions: Plot the training data into a figure using the

% "figure" and "plot" commands. Set the axes labels using

% the "xlabel" and "ylabel" commands. Assume the

% population and revenue data have been passed in

% as the x and y arguments of this function.

%

% Hint: You can use the 'rx' option with plot to have the markers

% appear as red crosses. Furthermore, you can make the

% markers larger by using plot(..., 'rx', 'MarkerSize', 10);

plot(x,y,'rx','MarkerSize',10);

ylabel('Profit in $10,000s');

xlabel('Population of City in 10,000s');

% ============================================================

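end

Next we implement the gradient descent part. In the initialization above, X was loaded as the training data. Because of the θ0x0 term (with x0 = 1), we add a column of ones to X, so the parameter vector θ is initialized as a 2x1 column vector. The number of iterations is set to 1500 and the learning rate to 0.01: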

X = [ones(m, 1), data(:,1)]; % Add a column of ones to x

theta = zeros(2, 1); % initialize fitting parameters

% Some gradient descent settings

iterations = 1500;

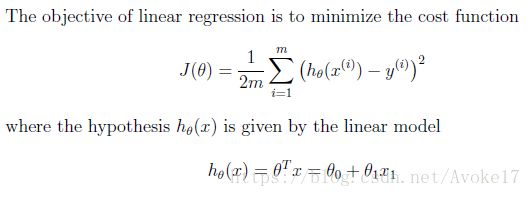

alpha = 0.01;接下来我们需要完成computeCost.m中对代价函数的计算部分,公式在上面已经给出过:
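For reference, the cost function can be written out as below. This is a reconstruction from the expression used in computeCost.m, `J = 1/(2*m)*sum((X*theta-y).^2)`, and it assumes the usual hypothesis $h_\theta(x) = \theta^T x$ with $x_0 = 1$, so treat it as a sketch rather than a quotation of the assignment handout:

$$
J(\theta) = \frac{1}{2m}\sum_{i=1}^{m}\left(h_\theta(x^{(i)}) - y^{(i)}\right)^2 = \frac{1}{2m}\,(X\theta - y)^T (X\theta - y)
$$

The second form is the vectorized version: $X\theta - y$ is the m x 1 vector of residuals, and its squared norm equals the sum of squared errors.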

Since X is an m x 2 matrix, θ is a 2x1 vector, and y is an m x 1 vector, the hypothesis h(x) is simply X*θ. The implementation is as follows:

function J = computeCost(X, y, theta)

%COMPUTECOST Compute cost for linear regression

% J = COMPUTECOST(X, y, theta) computes the cost of using theta as the

% parameter for linear regression to fit the data points in X and y

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost of a particular choice of theta

% You should set J to the cost.

J = 1/(2*m)*sum((X*theta-y).^2);

% =========================================================================

end

In gradient descent, each iteration updates the parameters θ from their current values, and all elements of θ are updated simultaneously.
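The update rule can be sketched as follows. It is reconstructed from the line `theta = theta - alpha * (1/m) * (X' * (X*theta-y));` in gradientDescent.m, again assuming $h_\theta(x) = \theta^T x$:

$$
\theta_j := \theta_j - \alpha\,\frac{1}{m}\sum_{i=1}^{m}\left(h_\theta(x^{(i)}) - y^{(i)}\right)x_j^{(i)} \qquad \text{(simultaneously for all } j\text{)}
$$

Stacking the updates for all $j$ into one vector gives the vectorized form:

$$
\theta := \theta - \frac{\alpha}{m}\,X^T (X\theta - y)
$$

Because the whole right-hand side is evaluated before the assignment, the vectorized form performs the simultaneous update automatically.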

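In code, θ is a vector, so updating the vector updates all of its elements at once. h(x)-y is an m x 1 vector, X is an m x 2 matrix, and θ is a 2x1 vector, so the product needed here is the transpose of X times (h(x)-y), which yields a 2x1 result. The full code of gradientDescent.m is as follows: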

function [theta, J_history] = gradientDescent(X, y, theta, alpha, num_iters)

%GRADIENTDESCENT Performs gradient descent to learn theta

% theta = GRADIENTDESCENT(X, y, theta, alpha, num_iters) updates theta by

% taking num_iters gradient steps with learning rate alpha

% Initialize some useful values

m = length(y); % number of training examples

J_history = zeros(num_iters, 1);

for iter = 1:num_iters

theta = theta - alpha * (1/m) * (X' * (X*theta-y));

% ====================== YOUR CODE HERE ======================

% Instructions: Perform a single gradient step on the parameter vector

% theta.

%

% Hint: While debugging, it can be useful to print out the values

% of the cost function (computeCost) and gradient here.

%

% ============================================================

% Save the cost J in every iteration

J_history(iter) = computeCost(X, y, theta);

end

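end

At this point, ex1 displays the scatter plot of the data, the fitted univariate linear regression line, and the contour plot of the cost function.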
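A quick way to sanity-check the update rule and the cost expression above is to run them on data whose answer is known in advance. The sketch below is not part of the assignment: it uses a small synthetic dataset (an assumption, not ex1data1.txt) generated exactly from y = 2 + 3x, inlines the same two expressions used in gradientDescent.m and computeCost.m, and uses a larger iteration count than the assignment so that theta has time to converge.

```octave
% Synthetic data generated exactly from y = 2 + 3*x (hypothetical values,
% not taken from ex1data1.txt)
x = (1:10)';
y = 2 + 3 * x;
m = length(y);

X = [ones(m, 1), x];      % add the column of ones for the intercept term
theta = zeros(2, 1);      % 2x1 column vector of parameters
alpha = 0.01;             % same learning rate as in the post
num_iters = 20000;        % more iterations than the assignment, for convergence
J_history = zeros(num_iters, 1);

for iter = 1:num_iters
    % Same vectorized update as in gradientDescent.m
    theta = theta - alpha * (1/m) * (X' * (X*theta - y));
    % Same cost expression as in computeCost.m
    J_history(iter) = 1/(2*m) * sum((X*theta - y).^2);
end

disp(theta');             % should be close to the generating values 2 and 3
```

If the learning rate is suitable, J_history should never increase from one iteration to the next, which is a useful check while debugging.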

Optional Exercises

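This part extends univariate linear regression to multiple variables. In ex1data2.txt, the first column is the size of the house, the second column is the number of bedrooms, and the third column is the price of the house. First we load the data and initialize the relevant parameters: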

%% Load Data

data = load('ex1data2.txt');

X = data(:, 1:2);

y = data(:, 3);

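m = length(y);

Because the features differ greatly in scale, we apply feature normalization so that gradient descent converges faster. We use μ for the mean and σ for the standard deviation of each feature, with the standard deviation taking the place of the range max-min as the scaling factor. The implementation in featureNormalize.m is as follows: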

function [X_norm, mu, sigma] = featureNormalize(X)

%FEATURENORMALIZE Normalizes the features in X

% FEATURENORMALIZE(X) returns a normalized version of X where

% the mean value of each feature is 0 and the standard deviation

% is 1. This is often a good preprocessing step to do when

% working with learning algorithms.

% You need to set these values correctly

X_norm = X;

mu = zeros(1, size(X, 2));

sigma = zeros(1, size(X, 2));

% ====================== YOUR CODE HERE ======================

% Instructions: First, for each feature dimension, compute the mean

% of the feature and subtract it from the dataset,

% storing the mean value in mu. Next, compute the

% standard deviation of each feature and divide

% each feature by its standard deviation, storing

% the standard deviation in sigma.

%

% Note that X is a matrix where each column is a

% feature and each row is an example. You need

% to perform the normalization separately for

% each feature.

%

% Hint: You might find the 'mean' and 'std' functions useful.

%

mu = mean(X);

sigma = std(X);

row_no = size(X,1);

mu_s = mu(ones(row_no,1),:);

sigma_s = sigma(ones(row_no,1),:);

X_norm = (X - mu_s) ./ sigma_s;

% ============================================================

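end

Because our earlier code already supports X being an m x n matrix with θ a corresponding n x 1 vector, the cost computation and the gradient descent update need no changes for the multivariate case. computeCostMulti.m and gradientDescentMulti.m can be filled in by following the code above.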
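The two points above, normalization and the unchanged vectorized update, can be illustrated with a minimal sketch. The data here is hypothetical (made up for illustration, not taken from ex1data2.txt), `bsxfun` is used as one alternative to the index-replication trick in featureNormalize.m, and the learning rate and iteration count are arbitrary choices for this small example.

```octave
% Hypothetical dataset: columns are house size and number of bedrooms
X = [1000 2; 1500 3; 2000 3; 2500 4; 3000 5];
y = [200; 280; 340; 420; 500];
m = size(X, 1);

% Normalize each feature (column) separately
mu = mean(X);
sigma = std(X);
X_norm = bsxfun(@rdivide, bsxfun(@minus, X, mu), sigma);

% Add the intercept column: Xb is m x 3, so theta is 3 x 1
Xb = [ones(m, 1), X_norm];
theta = zeros(3, 1);
J0 = 1/(2*m) * sum((Xb*theta - y).^2);   % cost before any update

alpha = 0.1;
for iter = 1:50
    % Exactly the same update expression as in the univariate case
    theta = theta - alpha * (1/m) * (Xb' * (Xb*theta - y));
end
J = 1/(2*m) * sum((Xb*theta - y).^2);    % cost after 50 steps
```

After normalization every column of X_norm has mean 0 and standard deviation 1, and the same update line handles three parameters without modification.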

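As the last step, we solve for the best-fitting parameters with the normal equation, which does not require feature scaling. The formula $\theta = (X^T X)^{-1} X^T y$ gives θ directly. The code in normalEqn.m is as follows: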

function [theta] = normalEqn(X, y)

%NORMALEQN Computes the closed-form solution to linear regression

% NORMALEQN(X,y) computes the closed-form solution to linear

% regression using the normal equations.

theta = zeros(size(X, 2), 1);

% ====================== YOUR CODE HERE ======================

% Instructions: Complete the code to compute the closed form solution

% to linear regression and put the result in theta.

%

% ---------------------- Sample Solution ----------------------

theta = pinv(X' * X) * X' * y;

% -------------------------------------------------------------

% ============================================================

end

This completes all of ex1.