Compiling, Installing and Basic Usage of Hue

Introduction to Hue

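Hue is an open-source web UI for Apache Hadoop. It evolved from Cloudera Desktop, was later contributed by Cloudera to the Hadoop community of the Apache Software Foundation, and is built with the Python web framework Django. With Hue you can interact with a Hadoop cluster from a web console in the browser to analyze and process data. For example, you can work with data on HDFS, run MapReduce jobs, execute Hive SQL statements and browse HBase.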

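For work I recently had to study Hue's installation, configuration, usage and debugging. There is not much material about it online, so I am recording the steps I actually followed and sharing them here, in the hope that they help anyone who needs them.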

Installation environment

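Disclaimer: I ran the whole installation as root, which is not really appropriate. A regular user such as hue should be used instead.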

centos-7.6

mysql-8.0.17

hadoop-2.8.5

JDK:jdk-8u171-linux-x64

Maven:apache-maven-3.6.1-bin

Python-2.7.5

Hue: CDH 5.16.2 (roughly equivalent to Hue 4.x)

Install dependencies

yum install ant asciidoc cyrus-sasl-devel cyrus-sasl-gssapi cyrus-sasl-plain gcc gcc-c++ krb5-devel libffi-devel libxml2-devel libxslt-devel make mysql mysql-devel openldap-devel python-devel sqlite-devel gmp-devel -y

Download, extract and compile

Hue download page:

https://github.com/cloudera/hue/releases

Download the zip archive.

Extract:

unzip .....

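After entering the hue directory you can start the build. The build requires a Python 2.7 environment, and CentOS 7 ships with Python 2.7.5.

You can also upgrade Python yourself:

Download the Python source package: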

wget https://www.python.org/ftp/python/2.7.14/Python-2.7.14.tgz

Extract:

tar -xzvf Python-2.7.14.tgz

Install the build dependencies:

yum -y install gcc gcc-c++ libstdc++-devel

Compile and install:

cd Python-2.7.14

./configure

make all

make install

make clean

make distclean

Check whether it succeeded:

python --version
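Python 2 prints its `--version` output to stderr rather than stdout, so a script that captures it needs `2>&1`. Below is a minimal sketch of a pre-build check for the Python 2.7 requirement mentioned above. It assumes a POSIX shell, and `is_py27` is a hypothetical helper name, not part of Hue:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the given version string is Python 2.7.x,
# the interpreter version the Hue build in this article expects.
is_py27() {
  case "$1" in
    "Python 2.7."*) return 0 ;;
    *) return 1 ;;
  esac
}

# Python 2 writes "--version" output to stderr, so merge it into stdout.
ver="$(python --version 2>&1)"
if is_py27 "$ver"; then
  echo "OK: $ver"
else
  echo "Hue build expects Python 2.7, found: $ver" >&2
fi
```

If `python` resolves to a different interpreter than you expect, run `command -v python` to see which one is first on the PATH.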

Compile Hue

In the hue directory, run:

make clean

make apps

Then wait. The build takes about ten minutes.
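When `make apps` finishes, one quick way to confirm the build worked is to look for the launcher that the configuration file's own comments refer to (`build/env/bin/hue`, as in `build/env/bin/hue config_help`). Below is a minimal sketch, assuming you run it from the hue source directory or pass that directory as an argument. `check_hue_build` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: check that a Hue source tree contains the built launcher.
# Argument: path to the hue source directory (defaults to the current directory).
check_hue_build() {
  local root="${1:-.}"
  local launcher="$root/build/env/bin/hue"
  if [ -x "$launcher" ]; then
    echo "Hue launcher found: $launcher"
    return 0
  fi
  echo "Missing $launcher; 'make apps' probably failed, check the build output" >&2
  return 1
}
```

For example, `check_hue_build && build/env/bin/hue config_help` prints the configuration documentation only if the launcher exists.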

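Errors encountered during the build:

Missing my_config.h header file

https://blog.csdn.net/qq_38924171/article/details/101426144#commentBox

Solved!

Maven plugin error

Two files need to be deleted. The fix is described at the end of this article.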

Configuration file:

It is located at /hue/desktop/conf/pseudo-distributed.ini

I only configured MySQL, Hive, HDFS and the ResourceManager.
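Before starting Hue, it can save time to confirm that each service configured below is reachable from the Hue host. The sketch below makes these assumptions:

- It needs bash, because it relies on bash's `/dev/tcp` redirection.
- It needs the coreutils `timeout` command.
- The helper names are hypothetical.
- The host/port list is copied from the author's configuration, so replace it with your own values.

A successful TCP connect only shows that something is listening on the port. It does not show that credentials or protocols are correct.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if a TCP connection to host $1, port $2 opens
# within 3 seconds. Uses bash's /dev/tcp redirection and coreutils `timeout`.
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Hypothetical helper: report each HOST:PORT argument as reachable or not.
check_endpoints() {
  for ep in "$@"; do
    if port_open "${ep%:*}" "${ep##*:}"; then
      echo "reachable:   $ep"
    else
      echo "UNREACHABLE: $ep"
    fi
  done
}

# Values copied from the configuration below (assumption: adjust to your cluster):
#   192.168.159.200:3306  [[database]] MySQL
#   localhost:10000       [beeswax] HiveServer2
#   localhost:50070       [[hdfs_clusters]] WebHDFS
#   192.168.159.200:8032  [[yarn_clusters]] ResourceManager IPC
#   192.168.159.200:8088  [[yarn_clusters]] ResourceManager REST API
ENDPOINTS="192.168.159.200:3306 localhost:10000 localhost:50070 192.168.159.200:8032 192.168.159.200:8088"
```

After sourcing the script, run `check_endpoints $ENDPOINTS`. Leave the variable unquoted so it splits into one argument per endpoint.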

#####################################

# DEVELOPMENT EDITION

#####################################

# Hue configuration file

# ===================================

#

# For complete documentation about the contents of this file, run

# $ /build/env/bin/hue config_help

#

# All .ini files under the current directory are treated equally. Their

# contents are merged to form the Hue configuration, which can

# be viewed on the Hue at

# http://:/dump_config

###########################################################################

# General configuration for core Desktop features (authentication, etc)

###########################################################################

[desktop]

# Set this to a random string, the longer the better.

# This is used for secure hashing in the session store.

secret_key=qwoiyut&^@fsdjaf,&$^%$osjfsafhdg

# Execute this script to produce the Django secret key. This will be used when

# 'secret_key' is not set.

## secret_key_script=

# Webserver listens on this address and port

http_host=0.0.0.0

http_port=8000

# Choose whether to enable the new Hue 4 interface.

## is_hue_4=true

# Choose whether to still allow users to enable the old Hue 3 interface.

## disable_hue_3=false

# Choose whether the Hue pages are embedded or not. This will improve the rendering of Hue when added inside a

# container element.

## is_embedded=false

# A comma-separated list of available Hue load balancers

## hue_load_balancer=

# Time zone name

time_zone=Asia/Shanghai

# Enable or disable Django debug mode.

django_debug_mode=false

# Enable development mode, where notably static files are not cached.

dev=true

# Enable embedded development mode, where the page will be rendered inside a container div element.

## dev_embedded=false

# Enable or disable database debug mode.

## database_logging=false

# Whether to send debug messages from JavaScript to the server logs.

send_dbug_messages=true

# Enable or disable backtrace for server error

http_500_debug_mode=false

# Enable or disable memory profiling.

## memory_profiler=false

# Enable or disable instrumentation. If django_debug_mode is True, this is automatically enabled

## instrumentation=false

# Server email for internal error messages

## django_server_email='[email protected]'

# Email backend

## django_email_backend=django.core.mail.backends.smtp.EmailBackend

# Set to true to use CherryPy as the webserver, set to false

# to use Gunicorn as the webserver. Defaults to CherryPy if

# key is not specified.

## use_cherrypy_server=true

# Gunicorn work class: gevent or eventlet, gthread or sync.

## gunicorn_work_class=eventlet

# The number of Gunicorn worker processes. If not specified, it uses: (number of CPU * 2) + 1.

## gunicorn_number_of_workers=None

# Webserver runs as this user

server_user=root

server_group=root

# This should be the Hue admin and proxy user

## default_user=root

# This should be the hadoop cluster admin

default_hdfs_superuser=root

# If set to false, runcpserver will not actually start the web server.

# Used if Apache is being used as a WSGI container.

## enable_server=yes

# Number of threads used by the CherryPy web server

## cherrypy_server_threads=50

# This property specifies the maximum size of the receive buffer in bytes in thrift sasl communication,

# default value is 2097152 (2 MB), which equals to (2 * 1024 * 1024)

## sasl_max_buffer=2097152

# Filename of SSL Certificate

## ssl_certificate=

# Filename of SSL RSA Private Key

## ssl_private_key=

# Filename of SSL Certificate Chain

## ssl_certificate_chain=

# SSL certificate password

## ssl_password=

# Execute this script to produce the SSL password. This will be used when 'ssl_password' is not set.

## ssl_password_script=

# X-Content-Type-Options: nosniff This is a HTTP response header feature that helps prevent attacks based on MIME-type confusion.

## secure_content_type_nosniff=true

# X-Xss-Protection: \"1; mode=block\" This is a HTTP response header feature to force XSS protection.

## secure_browser_xss_filter=true

# Content-Security-Policy: HTTP response header that restricts the sources from which scripts, styles, images, frames and other content may be loaded.

## secure_content_security_policy="script-src 'self' 'unsafe-inline' 'unsafe-eval' *.google-analytics.com *.doubleclick.net data:;img-src 'self' *.google-analytics.com *.doubleclick.net http://*.tile.osm.org *.tile.osm.org *.gstatic.com data:;style-src 'self' 'unsafe-inline' fonts.googleapis.com;connect-src 'self';frame-src *;child-src 'self' data: *.vimeo.com;object-src 'none'"

# Strict-Transport-Security HTTP Strict Transport Security(HSTS) is a policy which is communicated by the server to the user agent via HTTP response header field name "Strict-Transport-Security". HSTS policy specifies a period of time during which the user agent(browser) should only access the server in a secure fashion(https).

## secure_ssl_redirect=False

## secure_redirect_host=0.0.0.0

## secure_redirect_exempt=[]

## secure_hsts_seconds=31536000

## secure_hsts_include_subdomains=true

# List of allowed and disallowed ciphers in cipher list format.

# See http://www.openssl.org/docs/apps/ciphers.html for more information on

# cipher list format. This list is from

# https://wiki.mozilla.org/Security/Server_Side_TLS v3.7 intermediate

# recommendation, which should be compatible with Firefox 1, Chrome 1, IE 7,

# Opera 5 and Safari 1.

## ssl_cipher_list=ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA:ECDHE-RSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-RSA-AES256-SHA256:DHE-RSA-AES256-SHA:ECDHE-ECDSA-DES-CBC3-SHA:ECDHE-RSA-DES-CBC3-SHA:EDH-RSA-DES-CBC3-SHA:AES128-GCM-SHA256:AES256-GCM-SHA384:AES128-SHA256:AES256-SHA256:AES128-SHA:AES256-SHA:DES-CBC3-SHA:!DSS:!DH:!ADH

# Path to default Certificate Authority certificates.

## ssl_cacerts=/etc/hue/cacerts.pem

# Choose whether Hue should validate certificates received from the server.

## ssl_validate=true

# Default LDAP/PAM/.. username and password of the hue user used for authentications with other services.

# Inactive if password is empty.

# e.g. LDAP pass-through authentication for HiveServer2 or Impala. Apps can override them individually.

## auth_username=hue

## auth_password=

# Default encoding for site data

## default_site_encoding=utf-8

# Help improve Hue with anonymous usage analytics.

# Use Google Analytics to see how many times an application or specific section of an application is used, nothing more.

## collect_usage=true

# Tile layer server URL for the Leaflet map charts

# Read more on http://leafletjs.com/reference.html#tilelayer

# Make sure you add the tile domain to the img-src section of the 'secure_content_security_policy' configuration parameter as well.

## leaflet_tile_layer=http://{s}.tile.osm.org/{z}/{x}/{y}.png

# The copyright message for the specified Leaflet maps Tile Layer

## leaflet_tile_layer_attribution='© OpenStreetMap contributors'

# All the map options accordingly to http://leafletjs.com/reference-0.7.7.html#map-options

# To change CRS, just use the name, ie. "EPSG4326"

## leaflet_map_options='{}'

# All the tile layer options, accordingly to http://leafletjs.com/reference-0.7.7.html#tilelayer

## leaflet_tile_layer_options='{}'

# X-Frame-Options HTTP header value. Use 'DENY' to deny framing completely

## http_x_frame_options=SAMEORIGIN

# Enable X-Forwarded-Host header if the load balancer requires it.

## use_x_forwarded_host=true

# Support for HTTPS termination at the load-balancer level with SECURE_PROXY_SSL_HEADER.

## secure_proxy_ssl_header=false

# Comma-separated list of Django middleware classes to use.

# See https://docs.djangoproject.com/en/1.4/ref/middleware/ for more details on middlewares in Django.

## middleware=desktop.auth.backend.LdapSynchronizationBackend

# Comma-separated list of regular expressions, which match the redirect URL.

# For example, to restrict to your local domain and FQDN, the following value can be used:

# ^\/.*$,^http:\/\/www.mydomain.com\/.*$

## redirect_whitelist=^(\/[a-zA-Z0-9]+.*|\/)$

# Comma separated list of apps to not load at server startup.

# e.g.: pig,zookeeper

## app_blacklist=

# Id of the cluster where Hue is located.

## cluster_id='default'

# Choose whether to show the new SQL editor.

## use_new_editor=true

# Global setting to allow or disable end user downloads in all Hue.

# e.g. Query result in Editors and Dashboards, file in File Browser...

## enable_download=true

# Choose whether to enable the new SQL syntax checker or not

## enable_sql_syntax_check=true

# Choose whether to show the improved assist panel and the right context panel

## use_new_side_panels=false

# Choose whether to use new charting library across the whole Hue.

## use_new_charts=false

# Editor autocomplete timeout (ms) when fetching columns, fields, tables etc.

# To disable this type of autocompletion set the value to 0.

## editor_autocomplete_timeout=30000

# Enable saved default configurations for Hive, Impala, Spark, and Oozie.

## use_default_configuration=false

# The directory where to store the auditing logs. Auditing is disable if the value is empty.

# e.g. /var/log/hue/audit.log

## audit_event_log_dir=

# Size in KB/MB/GB for audit log to rollover.

## audit_log_max_file_size=100MB

# Timeout in seconds for REST calls.

## rest_conn_timeout=120

# A json file containing a list of log redaction rules for cleaning sensitive data

# from log files. It is defined as:

#

# {

# "version": 1,

# "rules": [

# {

# "description": "This is the first rule",

# "trigger": "triggerstring 1",

# "search": "regex 1",

# "replace": "replace 1"

# },

# {

# "description": "This is the second rule",

# "trigger": "triggerstring 2",

# "search": "regex 2",

# "replace": "replace 2"

# }

# ]

# }

#

# Redaction works by searching a string for the [TRIGGER] string. If found,

# the [REGEX] is used to replace sensitive information with the

# [REDACTION_MASK]. If specified with 'log_redaction_string', the

# 'log_redaction_string' rules will be executed after the

# 'log_redaction_file' rules.

#

# For example, here is a file that would redact passwords and social security numbers:

# {

# "version": 1,

# "rules": [

# {

# "description": "Redact passwords",

# "trigger": "password",

# "search": "password=\".*\"",

# "replace": "password=\"???\""

# },

# {

# "description": "Redact social security numbers",

# "trigger": "",

# "search": "\d{3}-\d{2}-\d{4}",

# "replace": "XXX-XX-XXXX"

# }

# ]

# }

## log_redaction_file=

# Comma separated list of strings representing the host/domain names that the Hue server can serve.

# e.g.: localhost,domain1,*

## allowed_hosts="*"

# Administrators

# ----------------

[[django_admins]]

## [[[admin1]]]

## name=john

## [email protected]

# UI customizations

# -------------------

[[custom]]

# Top banner HTML code

# e.g. Test Lab A2 Hue Services

## banner_top_html='This is Hue 4 Beta! - Please feel free to email any feedback / questions to [email protected] or @gethue.'

# Login splash HTML code

# e.g. WARNING: You are required to have authorization before you proceed

## login_splash_html=GetHue.com WARNING: You have accessed a computer managed by GetHue. You are required to have authorization from GetHue before you proceed.

# Cache timeout in milliseconds for the assist, autocomplete, etc.

# defaults to 10 days, set to 0 to disable caching

## cacheable_ttl=864000000

# SVG code to replace the default Hue logo in the top bar and sign in screen

# e.g. for the parameter

# For use when using LdapBackend for Hue authentication

## ldap_username_pattern="uid=,ou=People,dc=mycompany,dc=com"

# Create users in Hue when they try to login with their LDAP credentials

# For use when using LdapBackend for Hue authentication

## create_users_on_login = true

# Synchronize a users groups when they login

## sync_groups_on_login=false

# Ignore the case of usernames when searching for existing users in Hue.

## ignore_username_case=true

# Force usernames to lowercase when creating new users from LDAP.

# Takes precedence over force_username_uppercase

## force_username_lowercase=true

# Force usernames to uppercase, cannot be combined with force_username_lowercase

## force_username_uppercase=false

# Use search bind authentication.

## search_bind_authentication=true

# Choose which kind of subgrouping to use: nested or suboordinate (deprecated).

## subgroups=suboordinate

# Define the number of levels to search for nested members.

## nested_members_search_depth=10

# Whether or not to follow referrals

## follow_referrals=false

# Enable python-ldap debugging.

## debug=false

# Sets the debug level within the underlying LDAP C lib.

## debug_level=255

# Possible values for trace_level are 0 for no logging, 1 for only logging the method calls with arguments,

# 2 for logging the method calls with arguments and the complete results and 9 for also logging the traceback of method calls.

## trace_level=0

[[[users]]]

# Base filter for searching for users

## user_filter="objectclass=*"

# The username attribute in the LDAP schema

## user_name_attr=sAMAccountName

[[[groups]]]

# Base filter for searching for groups

## group_filter="objectclass=*"

# The group name attribute in the LDAP schema

## group_name_attr=cn

# The attribute of the group object which identifies the members of the group

## group_member_attr=members

[[[ldap_servers]]]

## [[[[mycompany]]]]

# The search base for finding users and groups

## base_dn="DC=mycompany,DC=com"

# URL of the LDAP server

## ldap_url=ldap://auth.mycompany.com

# The NT domain used for LDAP authentication

## nt_domain=mycompany.com

# A PEM-format file containing certificates for the CA's that

# Hue will trust for authentication over TLS.

# The certificate for the CA that signed the

# LDAP server certificate must be included among these certificates.

# See more here http://www.openldap.org/doc/admin24/tls.html.

## ldap_cert=

## use_start_tls=true

# Distinguished name of the user to bind as -- not necessary if the LDAP server

# supports anonymous searches

## bind_dn="CN=ServiceAccount,DC=mycompany,DC=com"

# Password of the bind user -- not necessary if the LDAP server supports

# anonymous searches

## bind_password=

# Execute this script to produce the bind user password. This will be used

# when 'bind_password' is not set.

## bind_password_script=

# Pattern for searching for usernames -- Use for the parameter

# For use when using LdapBackend for Hue authentication

## ldap_username_pattern="uid=,ou=People,dc=mycompany,dc=com"

## Use search bind authentication.

## search_bind_authentication=true

# Whether or not to follow referrals

## follow_referrals=false

# Enable python-ldap debugging.

## debug=false

# Sets the debug level within the underlying LDAP C lib.

## debug_level=255

# Possible values for trace_level are 0 for no logging, 1 for only logging the method calls with arguments,

# 2 for logging the method calls with arguments and the complete results and 9 for also logging the traceback of method calls.

## trace_level=0

## [[[[[users]]]]]

# Base filter for searching for users

## user_filter="objectclass=Person"

# The username attribute in the LDAP schema

## user_name_attr=sAMAccountName

## [[[[[groups]]]]]

# Base filter for searching for groups

## group_filter="objectclass=groupOfNames"

# The group name attribute in the LDAP schema

## group_name_attr=cn

# Configuration options for specifying the Source Version Control.

# ----------------------------------------------------------------

[[vcs]]

## [[[git-read-only]]]

## Base URL to Remote Server

# remote_url=https://github.com/cloudera/hue/tree/master

## Base URL to Version Control API

# api_url=https://api.github.com

## [[[github]]]

## Base URL to Remote Server

# remote_url=https://github.com/cloudera/hue/tree/master

## Base URL to Version Control API

# api_url=https://api.github.com

# These will be necessary when you want to write back to the repository.

## Client ID for Authorized Application

# client_id=

## Client Secret for Authorized Application

# client_secret=

## [[[svn]]]

## Base URL to Remote Server

# remote_url=https://github.com/cloudera/hue/tree/master

## Base URL to Version Control API

# api_url=https://api.github.com

# These will be necessary when you want to write back to the repository.

## Client ID for Authorized Application

# client_id=

## Client Secret for Authorized Application

# client_secret=

# Configuration options for specifying the Desktop Database. For more info,

# see http://docs.djangoproject.com/en/1.4/ref/settings/#database-engine

# ------------------------------------------------------------------------

[[database]]

# Database engine is typically one of:

# postgresql_psycopg2, mysql, sqlite3 or oracle.

#

# Note that for sqlite3, 'name', below is a path to the filename. For other backends, it is the database name.

# Note for Oracle, options={"threaded":true} must be set in order to avoid crashes.

# Note for Oracle, you can use the Oracle Service Name by setting "host=" and "port=" and then "name=:/".

# Note for MariaDB use the 'mysql' engine.

engine=mysql

host=192.168.159.200

port=3306

user=root

password=123456

name=hue

# conn_max_age option to make database connection persistent value in seconds

# https://docs.djangoproject.com/en/1.9/ref/databases/#persistent-connections

## conn_max_age=0

# Execute this script to produce the database password. This will be used when 'password' is not set.

## password_script=/path/script

## name=desktop/desktop.db

## options={}

# Configuration options for specifying the Desktop session.

# For more info, see https://docs.djangoproject.com/en/1.4/topics/http/sessions/

# ------------------------------------------------------------------------

[[session]]

# The name of the cookie to use for sessions.

# This can have any value that is not used by the other cookie names in your application.

## cookie_name=sessionid

# The cookie containing the users' session ID will expire after this amount of time in seconds.

# Default is 2 weeks.

## ttl=1209600

# The cookie containing the users' session ID and csrf cookie will be secure.

# Should only be enabled with HTTPS.

## secure=false

# The cookie containing the users' session ID and csrf cookie will use the HTTP only flag.

## http_only=true

# Use session-length cookies. Logs out the user when she closes the browser window.

## expire_at_browser_close=false

# If set, limits the number of concurrent user sessions. 1 represents 1 session per user. Default: 0 (unlimited sessions per user)

## concurrent_user_session_limit=0

# Configuration options for connecting to an external SMTP server

# ------------------------------------------------------------------------

[[smtp]]

# The SMTP server information for email notification delivery

host=localhost

port=25

user=

password=

# Whether to use a TLS (secure) connection when talking to the SMTP server

tls=no

# Default email address to use for various automated notification from Hue

## default_from_email=hue@localhost

# Configuration options for Kerberos integration for secured Hadoop clusters

# ------------------------------------------------------------------------

[[kerberos]]

# Path to Hue's Kerberos keytab file

## hue_keytab=

# Kerberos principal name for Hue

## hue_principal=hue/hostname.foo.com

# Frequency in seconds with which Hue will renew its keytab

## keytab_reinit_frequency=3600

# Path to keep Kerberos credentials cached

## ccache_path=/var/run/hue/hue_krb5_ccache

# Path to kinit

## kinit_path=/path/to/kinit

# Mutual authentication from the server, attaches HTTP GSSAPI/Kerberos Authentication to the given Request object

## mutual_authentication="OPTIONAL" or "REQUIRED" or "DISABLED"

# Configuration options for using OAuthBackend (Core) login

# ------------------------------------------------------------------------

[[oauth]]

# The Consumer key of the application

## consumer_key=XXXXXXXXXXXXXXXXXXXXX

# The Consumer secret of the application

## consumer_secret=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX

# The Request token URL

## request_token_url=https://api.twitter.com/oauth/request_token

# The Access token URL

## access_token_url=https://api.twitter.com/oauth/access_token

# The Authorize URL

## authenticate_url=https://api.twitter.com/oauth/authorize

# Configuration options for Metrics

# ------------------------------------------------------------------------

[[metrics]]

# Enable the metrics URL "/desktop/metrics"

## enable_web_metrics=True

# If specified, Hue will write metrics to this file.

## location=/var/log/hue/metrics.json

# Time in milliseconds on how frequently to collect metrics

## collection_interval=30000

###########################################################################

# Settings to configure the snippets available in the Notebook

###########################################################################

[notebook]

## Show the notebook menu or not

# show_notebooks=true

## Flag to enable the selection of queries from files, saved queries into the editor or as snippet.

# enable_external_statements=true

## Flag to enable the bulk submission of queries as a background task through Oozie.

# enable_batch_execute=false

## Flag to turn on the SQL indexer.

# enable_sql_indexer=false

## Flag to turn on the Presentation mode of the editor.

# enable_presentation=true

## Flag to enable the SQL query builder of the table assist.

# enable_query_builder=true

## Flag to enable the creation of a coordinator for the current SQL query.

# enable_query_scheduling=false

## Main flag to override the automatic starting of the DBProxy server.

# enable_dbproxy_server=true

## Classpath to be appended to the default DBProxy server classpath.

# dbproxy_extra_classpath=

## Comma separated list of interpreters that should be shown on the wheel. This list takes precedence over the

## order in which the interpreter entries appear. Only the first 5 interpreters will appear on the wheel.

# interpreters_shown_on_wheel=

# One entry for each type of snippet.

[[interpreters]]

# Define the name and how to connect and execute the language.

[[[hive]]]

# The name of the snippet.

name=Hive

# The backend connection to use to communicate with the server.

interface=hiveserver2

[[[impala]]]

name=Impala

interface=hiveserver2

# [[[sparksql]]]

# name=SparkSql

# interface=hiveserver2

[[[spark]]]

name=Scala

interface=livy

[[[pyspark]]]

name=PySpark

interface=livy

[[[r]]]

name=R

interface=livy

[[[jar]]]

name=Spark Submit Jar

interface=livy-batch

[[[py]]]

name=Spark Submit Python

interface=livy-batch

[[[text]]]

name=Text

interface=text

[[[markdown]]]

name=Markdown

interface=text

[[[mysql]]]

name = MySQL

interface=rdbms

[[[sqlite]]]

name = SQLite

interface=rdbms

[[[postgresql]]]

name = PostgreSQL

interface=rdbms

[[[oracle]]]

name = Oracle

interface=rdbms

[[[solr]]]

name = Solr SQL

interface=solr

## Name of the collection handler

# options='{"collection": "default"}'

[[[pig]]]

name=Pig

interface=oozie

[[[java]]]

name=Java

interface=oozie

[[[spark2]]]

name=Spark

interface=oozie

[[[mapreduce]]]

name=MapReduce

interface=oozie

[[[sqoop1]]]

name=Sqoop1

interface=oozie

[[[distcp]]]

name=Distcp

interface=oozie

[[[shell]]]

name=Shell

interface=oozie

# [[[mysql]]]

# name=MySql JDBC

# interface=jdbc

# ## Specific options for connecting to the server.

# ## The JDBC connectors, e.g. mysql.jar, need to be in the CLASSPATH environment variable.

# ## If 'user' and 'password' are omitted, they will be prompted in the UI.

# options='{"url": "jdbc:mysql://localhost:3306/hue", "driver": "com.mysql.jdbc.Driver", "user": "root", "password": "root"}'

###########################################################################

# Settings to configure your Analytics Dashboards

###########################################################################

[dashboard]

# Activate the Dashboard link in the menu.

## is_enabled=true

# Activate the SQL Dashboard (beta).

## has_sql_enabled=false

# Activate the Query Builder (beta).

## has_query_builder_enabled=false

# Activate the static report layout (beta).

## has_report_enabled=false

# Activate the new grid layout system.

## use_gridster=true

# Activate the widget filter and comparison (beta).

## has_widget_filter=false

[[engines]]

# [[[solr]]]

# Requires Solr 6+

## analytics=false

## nesting=false

# [[[sql]]]

## analytics=true

## nesting=false

###########################################################################

# Settings to configure your Hadoop cluster.

###########################################################################

[hadoop]

# Configuration for HDFS NameNode

# ------------------------------------------------------------------------

[[hdfs_clusters]]

# HA support by using HttpFs

[[[default]]]

# Enter the filesystem uri

fs_defaultfs=hdfs://localhost:9000

# NameNode logical name.

## logical_name=

# Use WebHdfs/HttpFs as the communication mechanism.

# Domain should be the NameNode or HttpFs host.

# Default port is 14000 for HttpFs.

webhdfs_url=http://localhost:50070/webhdfs/v1

# Change this if your HDFS cluster is Kerberos-secured

## security_enabled=false

# In secure mode (HTTPS), if SSL certificates from YARN Rest APIs

# have to be verified against certificate authority

## ssl_cert_ca_verify=True

# Directory of the Hadoop configuration

hadoop_conf_dir=/usr/hadoop/etc/hadoop

hadoop_bin=/usr/hadoop/bin

hadoop_hdfs_home=/usr/hadoop

# Configuration for YARN (MR2)

# ------------------------------------------------------------------------

[[yarn_clusters]]

[[[default]]]

# Enter the host on which you are running the ResourceManager

resourcemanager_host=192.168.159.200

# The port where the ResourceManager IPC listens on

resourcemanager_port=8032

# Whether to submit jobs to this cluster

submit_to=True

# Resource Manager logical name (required for HA)

## logical_name=

# Change this if your YARN cluster is Kerberos-secured

## security_enabled=false

# URL of the ResourceManager API

resourcemanager_api_url=http://192.168.159.200:8088

# URL of the ProxyServer API

proxy_api_url=http://hadoop:8088

# URL of the HistoryServer API

history_server_api_url=http://hadoop:19888

# URL of the Spark History Server

## spark_history_server_url=http://hadoop:18088

# In secure mode (HTTPS), if SSL certificates from YARN Rest APIs

# have to be verified against certificate authority

## ssl_cert_ca_verify=True

# HA support by specifying multiple clusters.

# Redefine different properties there.

# e.g.

# [[[ha]]]

# Resource Manager logical name (required for HA)

## logical_name=my-rm-name

# Un-comment to enable

## submit_to=True

# URL of the ResourceManager API

## resourcemanager_api_url=http://localhost:8088

# ...

# Configuration for MapReduce (MR1)

# ------------------------------------------------------------------------

[[mapred_clusters]]

[[[default]]]

# Enter the host on which you are running the Hadoop JobTracker

## jobtracker_host=localhost

# The port where the JobTracker IPC listens on

## jobtracker_port=8021

# JobTracker logical name for HA

## logical_name=

# Thrift plug-in port for the JobTracker

## thrift_port=9290

# Whether to submit jobs to this cluster

submit_to=False

# Change this if your MapReduce cluster is Kerberos-secured

## security_enabled=false

# HA support by specifying multiple clusters

# e.g.

# [[[ha]]]

# Enter the logical name of the JobTrackers

## logical_name=my-jt-name

###########################################################################

# Settings to configure Beeswax with Hive

###########################################################################

[beeswax]

# Host where HiveServer2 is running.

# If Kerberos security is enabled, use fully-qualified domain name (FQDN).

hive_server_host=localhost

# Port where HiveServer2 Thrift server runs on.

hive_server_port=10000

# Hive configuration directory, where hive-site.xml is located

hive_conf_dir=/usr/hive/conf

# Timeout in seconds for thrift calls to Hive service

## server_conn_timeout=120

# Choose whether to use the old GetLog() thrift call from before Hive 0.14 to retrieve the logs.

# If false, use the FetchResults() thrift call from Hive 1.0 or more instead.

## use_get_log_api=false

# Limit the number of partitions that can be listed.

## list_partitions_limit=10000

# The maximum number of partitions that will be included in the SELECT * LIMIT sample query for partitioned tables.

## query_partitions_limit=10

# A limit to the number of rows that can be downloaded from a query before it is truncated.

# A value of -1 means there will be no limit.

## download_row_limit=100000

# Hue will try to close the Hive query when the user leaves the editor page.

# This will free all the query resources in HiveServer2, but also make its results inaccessible.

## close_queries=false

# Hue will use at most this many HiveServer2 sessions per user at a time.

# For Tez, increase the number to more if you need more than one query at the time, e.g. 2 or 3 (Tez has a maximum of 1 query by session).

## max_number_of_sessions=1

# Thrift version to use when communicating with HiveServer2.

# New column format is from version 7.

## thrift_version=7

# A comma-separated list of white-listed Hive configuration properties that users are authorized to set.

## config_whitelist=hive.map.aggr,hive.exec.compress.output,hive.exec.parallel,hive.execution.engine,mapreduce.job.queuename

# Override the default desktop username and password of the hue user used for authentications with other services.

# e.g. Used for LDAP/PAM pass-through authentication.

## auth_username=hue

## auth_password=

[[ssl]]

# Path to Certificate Authority certificates.

## cacerts=/etc/hue/cacerts.pem

# Choose whether Hue should validate certificates received from the server.

## validate=true

###########################################################################

# Settings to configure Metastore

###########################################################################

[metastore]

# Flag to turn on the new version of the create table wizard.

## enable_new_create_table=true

# Flag to force all metadata calls (e.g. list tables, table or column details...) to happen via HiveServer2 if available instead of Impala.

## force_hs2_metadata=false

###########################################################################

# Settings to configure Impala

###########################################################################

[impala]

# Host of the Impala Server (one of the Impalad)

## server_host=localhost

# Port of the Impala Server

## server_port=21050

# Kerberos principal

## impala_principal=impala/hostname.foo.com

# Turn on/off impersonation mechanism when talking to Impala

## impersonation_enabled=False

# Number of initial rows of a result set to ask Impala to cache in order

# to support re-fetching them for downloading them.

# Set to 0 for disabling the option and backward compatibility.

## querycache_rows=50000

# Timeout in seconds for thrift calls

## server_conn_timeout=120

# Hue will try to close the Impala query when the user leaves the editor page.

# This will free all the query resources in Impala, but also make its results inaccessible.

## close_queries=true

# If > 0, the query will be timed out (i.e. cancelled) if Impala does not do any work

# (compute or send back results) for that query within QUERY_TIMEOUT_S seconds.

## query_timeout_s=600

# If > 0, the session will be timed out (i.e. cancelled) if Impala does not do any work

# (compute or send back results) for that session within SESSION_TIMEOUT_S seconds (default 30 min).

## session_timeout_s=1800

# Override the desktop default username and password of the hue user used for authentications with other services.

# e.g. Used for LDAP/PAM pass-through authentication.

## auth_username=hue

## auth_password=

# Username and password for Impala Daemon Web interface for getting Impala queries in JobBrowser

# Set when webserver_htpassword_user and webserver_htpassword_password are set for Impala

## daemon_api_username=

## daemon_api_password=

# Execute this script to produce the password to avoid entering in clear text

## daemon_api_password_script=

# A comma-separated list of white-listed Impala configuration properties that users are authorized to set.

## config_whitelist=debug_action,explain_level,mem_limit,optimize_partition_key_scans,query_timeout_s,request_pool

# Path to the impala configuration dir which has impalad_flags file

## impala_conf_dir=${HUE_CONF_DIR}/impala-conf

[[ssl]]

# SSL communication enabled for this server.

## enabled=false

# Path to Certificate Authority certificates.

## cacerts=/etc/hue/cacerts.pem

# Choose whether Hue should validate certificates received from the server.

## validate=true

###########################################################################

# Settings to configure the Spark application.

###########################################################################

[spark]

# Host address of the Livy Server.

## livy_server_host=localhost

# Port of the Livy Server.

## livy_server_port=8998

# Configure Livy to start in local 'process' mode, or 'yarn' workers.

## livy_server_session_kind=yarn

# Whether Livy requires client to perform Kerberos authentication.

## security_enabled=false

# Host of the Sql Server

## sql_server_host=localhost

# Port of the Sql Server

## sql_server_port=10000

###########################################################################

# Settings to configure the Oozie app

###########################################################################

[oozie]

# Location on local FS where the examples are stored.

## local_data_dir=..../examples

# Location on local FS where the data for the examples is stored.

## sample_data_dir=...thirdparty/sample_data

# Location on HDFS where the oozie examples and workflows are stored.

# Parameters are $TIME and $USER, e.g. /user/$USER/hue/workspaces/workflow-$TIME

## remote_data_dir=/user/hue/oozie/workspaces
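The comment above says the workspace path may contain the parameters $USER and $TIME. As an illustration only, the expansion can be pictured with Python's string.Template; this is not Hue's own code, and the timestamp format used for $TIME is an assumption.

```python
from datetime import datetime
from string import Template


def expand_workspace(pattern: str, user: str, when: datetime) -> str:
    """Substitute $USER and $TIME in a workspace path pattern (illustrative only)."""
    # Assumed timestamp format; Hue may render $TIME differently.
    return Template(pattern).substitute(USER=user, TIME=when.strftime("%Y%m%d%H%M%S"))


print(expand_workspace("/user/$USER/hue/workspaces/workflow-$TIME", "hue",
                       datetime(2019, 10, 1, 12, 0, 0)))
```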

# Maximum number of Oozie workflows or coordinators to retrieve in one API call.

## oozie_jobs_count=100

# Use Cron format for defining the frequency of a Coordinator instead of the old frequency number/unit.

## enable_cron_scheduling=true

# Flag to enable the saved Editor queries to be dragged and dropped into a workflow.

## enable_document_action=true

# Flag to enable Oozie backend filtering instead of doing it at the page level in Javascript. Requires Oozie 4.3+.

## enable_oozie_backend_filtering=true

# Flag to enable the Impala action.

## enable_impala_action=false

###########################################################################

# Settings to configure the Filebrowser app

###########################################################################

[filebrowser]

# Location on local filesystem where the uploaded archives are temporarily stored.

## archive_upload_tempdir=/tmp

# Show Download Button for HDFS file browser.

## show_download_button=false

# Show Upload Button for HDFS file browser.

## show_upload_button=false

# Flag to enable the extraction of an uploaded archive in HDFS.

## enable_extract_uploaded_archive=true

###########################################################################

# Settings to configure Pig

###########################################################################

[pig]

# Location of piggybank.jar on local filesystem.

## local_sample_dir=/usr/share/hue/apps/pig/examples

# Location piggybank.jar will be copied to in HDFS.

## remote_data_dir=/user/hue/pig/examples

###########################################################################

# Settings to configure Sqoop2

###########################################################################

[sqoop]

# For autocompletion, fill out the librdbms section.

# Sqoop server URL

server_url=http://localhost:12000/sqoop

# Path to configuration directory

sqoop_conf_dir=/usr/sqoop/conf

###########################################################################

# Settings to configure Proxy

###########################################################################

[proxy]

# Comma-separated list of regular expressions,

# which match 'host:port' of requested proxy target.

## whitelist=(localhost|127\.0\.0\.1):(50030|50070|50060|50075)
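To see which proxy targets the default whitelist admits, the regex can be tried directly against 'host:port' strings. This is a sketch under one assumption: that a pattern must match the whole string (re.fullmatch). Hue's own matching rule may be looser, so treat the result as a guide rather than a guarantee.

```python
import re

# Default whitelist from the [proxy] section above, already split into a list.
WHITELIST = [r"(localhost|127\.0\.0\.1):(50030|50070|50060|50075)"]


def is_whitelisted(host_port: str, patterns=WHITELIST) -> bool:
    """Return True if 'host:port' fully matches any whitelist regex (assumed rule)."""
    return any(re.fullmatch(p, host_port) for p in patterns)


print(is_whitelisted("localhost:50070"))
print(is_whitelisted("127.0.0.1:8088"))
```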

# Comma-separated list of regular expressions,

# which match any prefix of 'host:port/path' of requested proxy target.

# This does not support matching GET parameters.

## blacklist=

###########################################################################

# Settings to configure HBase Browser

###########################################################################

[hbase]

# Comma-separated list of HBase Thrift servers for clusters in the format of '(name|host:port)'.

# Use full hostname with security.

# If using Kerberos we assume GSSAPI SASL, not PLAIN.

## hbase_clusters=(Cluster|localhost:9090)
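The hbase_clusters value packs several clusters into one string in the '(name|host:port)' format. A small sketch of how that format breaks down into a name-to-address mapping; the parser is hypothetical and only follows the format described in the comment, not Hue's implementation.

```python
import re


def parse_hbase_clusters(value: str) -> dict:
    """Parse '(name|host:port),(name2|host2:port2)' into {name: (host, port)}."""
    clusters = {}
    # Each entry is '(' name '|' host ':' port ')'; entries are comma-separated.
    for name, host, port in re.findall(r"\(([^|()]+)\|([^:()]+):(\d+)\)", value):
        clusters[name.strip()] = (host.strip(), int(port))
    return clusters


print(parse_hbase_clusters("(Cluster|localhost:9090)"))
```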

# HBase configuration directory, where hbase-site.xml is located.

## hbase_conf_dir=/etc/hbase/conf

# Hard limit of rows or columns per row fetched before truncating.

## truncate_limit = 500

# Should come from hbase-site.xml, do not set. 'framed' is used to chunk up responses, used with the nonblocking server in Thrift but is not supported in Hue.

# 'buffered' used to be the default of the HBase Thrift Server. Default is buffered when not set in hbase-site.xml.

## thrift_transport=buffered

###########################################################################

# Settings to configure Solr Search

###########################################################################

[search]

# URL of the Solr Server

## solr_url=http://localhost:8983/solr/

# Requires FQDN in solr_url if enabled

## security_enabled=false

# Query sent when no term is entered

## empty_query=*:*

###########################################################################

# Settings to configure Solr API lib

###########################################################################

[libsolr]

# Choose whether Hue should validate certificates received from the server.

## ssl_cert_ca_verify=true

# Default path to Solr in ZooKeeper.

## solr_zk_path=/solr

###########################################################################

# Settings to configure Solr Indexer

###########################################################################

[indexer]

# Location of the solrctl binary.

## solrctl_path=/usr/bin/solrctl

# Flag to turn on the Morphline Solr indexer.

## enable_scalable_indexer=false

# Oozie workspace template for indexing.

## config_indexer_libs_path=/tmp/smart_indexer_lib

# Flag to turn on the new metadata importer.

## enable_new_importer=false

# Flag to turn on sqoop.

## enable_sqoop=false

###########################################################################

# Settings to configure Job Designer

###########################################################################

[jobsub]

# Location on local FS where examples and template are stored.

## local_data_dir=..../data

# Location on local FS where sample data is stored

## sample_data_dir=...thirdparty/sample_data

###########################################################################

# Settings to configure Job Browser.

###########################################################################

[jobbrowser]

# Share submitted jobs information with all users. If set to false,

# submitted jobs are visible only to the owner and administrators.

## share_jobs=true

# Whether to disable the job kill button for all users in the jobbrowser

## disable_killing_jobs=false

# Offset in bytes where a negative offset will fetch the last N bytes for the given log file (default 1MB).

## log_offset=-1000000
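The log_offset semantics (a negative offset means "the last N bytes") can be illustrated on a local file. This is only a sketch of the offset rule: Job Browser fetches its logs from the cluster, not from a local path, and read_log is a hypothetical helper.

```python
import os


def read_log(path: str, offset: int = -1000000) -> bytes:
    """Read a log from `offset`; a negative offset returns the last abs(offset) bytes."""
    with open(path, "rb") as f:
        if offset < 0:
            size = f.seek(0, os.SEEK_END)   # seek returns the new absolute position
            f.seek(max(0, size + offset))   # clamp so short files are read whole
        else:
            f.seek(offset)
        return f.read()
```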

# Maximum number of jobs to fetch and display when pagination is not supported for the type.

## max_job_fetch=500

# Show the version 2 of app which unifies all the past browsers into one.

## enable_v2=true

# Show the query section for listing and showing more troubleshooting information.

## enable_query_browser=true

###########################################################################

# Settings to configure Sentry / Security App.

###########################################################################

[security]

# Use Sentry API V1 for Hive.

## hive_v1=true

# Use Sentry API V2 for Hive.

## hive_v2=false

# Use Sentry API V2 for Solr.

## solr_v2=true

###########################################################################

# Settings to configure the Zookeeper application.

###########################################################################

[zookeeper]

[[clusters]]

[[[default]]]

# Zookeeper ensemble. Comma separated list of Host/Port.

# e.g. localhost:2181,localhost:2182,localhost:2183

## host_ports=localhost:2181

# The URL of the REST contrib service (required for znode browsing).

## rest_url=http://localhost:9998

# Name of Kerberos principal when using security.

## principal_name=zookeeper

###########################################################################

# Settings for the User Admin application

###########################################################################

[useradmin]

# Default home directory permissions

## home_dir_permissions=0755

# The name of the default user group that users will be a member of

## default_user_group=default

[[password_policy]]

# Set password policy to all users. The default policy requires password to be at least 8 characters long,

# and contain both uppercase and lowercase letters, numbers, and special characters.

## is_enabled=false

## pwd_regex="^(?=.*?[A-Z])(?=(.*[a-z]){1,})(?=(.*[\d]){1,})(?=(.*[\W_]){1,}).{8,}$"

## pwd_hint="The password must be at least 8 characters long, and must contain both uppercase and lowercase letters, at least one number, and at least one special character."

## pwd_error_message="The password must be at least 8 characters long, and must contain both uppercase and lowercase letters, at least one number, and at least one special character."
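Before enabling the password policy, the default pwd_regex can be checked against a few sample passwords. This sketch uses Python's re module, which supports the same lookahead syntax; the sample passwords are made up for illustration.

```python
import re

# pwd_regex copied from the [[password_policy]] section above.
PWD_REGEX = r"^(?=.*?[A-Z])(?=(.*[a-z]){1,})(?=(.*[\d]){1,})(?=(.*[\W_]){1,}).{8,}$"


def meets_policy(password: str) -> bool:
    """True if the password has 8+ chars with upper, lower, digit and special char."""
    return re.match(PWD_REGEX, password) is not None


for pw in ["Hue2019!", "hue2019!", "Hue!2"]:
    print(pw, meets_policy(pw))
```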

###########################################################################

# Settings to configure liboozie

###########################################################################

[liboozie]

# The URL where the Oozie service runs on. This is required in order for

# users to submit jobs. Empty value disables the config check.

## oozie_url=http://localhost:11000/oozie

# Requires FQDN in oozie_url if enabled

## security_enabled=false

# Location on HDFS where the workflows/coordinator are deployed when submitted.

## remote_deployement_dir=/user/hue/oozie/deployments

###########################################################################

# Settings for the AWS lib

###########################################################################

[aws]

[[aws_accounts]]

# Default AWS account

## [[[default]]]

# AWS credentials

## access_key_id=

## secret_access_key=

## security_token=

# Execute this script to produce the AWS access key ID.

## access_key_id_script=/path/access_key_id.sh

# Execute this script to produce the AWS secret access key.

## secret_access_key_script=/path/secret_access_key.sh

# Allow to use either environment variables or

# EC2 InstanceProfile to retrieve AWS credentials.

## allow_environment_credentials=yes

# AWS region to use, if no region is specified, will attempt to connect to standard s3.amazonaws.com endpoint

## region=us-east-1

# Endpoint overrides

## host=

# Endpoint overrides

## proxy_address=

## proxy_port=8080

## proxy_user=

## proxy_pass=

# Secure connections are the default, but this can be explicitly overridden:

## is_secure=true

# The default calling format uses https://.s3.amazonaws.com but

# this may not make sense if DNS is not configured in this way for custom endpoints.

# e.g. Use boto.s3.connection.OrdinaryCallingFormat for https://s3.amazonaws.com/

## calling_format=boto.s3.connection.OrdinaryCallingFormat

###########################################################################

# Settings for the Azure lib

###########################################################################

[azure]

[[azure_accounts]]

# Default Azure account

[[[default]]]

# Azure credentials

## client_id=

# Execute this script to produce the ADLS client id.

## client_id_script=/path/client_id.sh

## client_secret=

# Execute this script to produce the ADLS client secret.

## client_secret_script=/path/client_secret.sh

## tenant_id=

# Execute this script to produce the ADLS tenant id.

## tenant_id_script=/path/tenant_id.sh

[[adls_clusters]]

# Default ADLS cluster

[[[default]]]

## fs_defaultfs=adl://.azuredatalakestore.net

## webhdfs_url=https://.azuredatalakestore.net/webhdfs/v1

###########################################################################

# Settings for the Sentry lib

###########################################################################

[libsentry]

# Hostname or IP of server.

## hostname=localhost

# Port the sentry service is running on.

## port=8038

# Sentry configuration directory, where sentry-site.xml is located.

## sentry_conf_dir=/etc/sentry/conf

# Number of seconds when the privilege list of a user is cached.

## privilege_checker_caching=300

###########################################################################

# Settings to configure the ZooKeeper Lib

###########################################################################

[libzookeeper]

# ZooKeeper ensemble. Comma separated list of Host/Port.

# e.g. localhost:2181,localhost:2182,localhost:2183

## ensemble=localhost:2181

# Name of Kerberos principal when using security.

## principal_name=zookeeper

###########################################################################

# Settings for the RDBMS application

###########################################################################

[librdbms]

# The RDBMS app can have any number of databases configured in the databases

# section. A database is known by its section name

# (IE sqlite, mysql, psql, and oracle in the list below).

[[databases]]

# sqlite configuration.

## [[[sqlite]]]

# Name to show in the UI.

## nice_name=SQLite

# For SQLite, name defines the path to the database.

## name=/tmp/sqlite.db

# Database backend to use.

## engine=sqlite

# Database options to send to the server when connecting.

# https://docs.djangoproject.com/en/1.4/ref/databases/

## options={}

# mysql, oracle, or postgresql configuration.

[[[mysql]]]

# Name to show in the UI.

nice_name="MySQL"

# For MySQL and PostgreSQL, name is the name of the database.

# For Oracle, name is the instance of the Oracle server. For express edition

# this is 'xe' by default.

##name=hue

# Database backend to use. This can be:

# 1. mysql

# 2. postgresql

# 3. oracle

engine=mysql

# IP or hostname of the database to connect to.

host=localhost

# Port the database server is listening to. Defaults are:

# 1. MySQL: 3306

# 2. PostgreSQL: 5432

# 3. Oracle Express Edition: 1521

port=3306

# Username to authenticate with when connecting to the database.

user=root

# Password matching the username to authenticate with when

# connecting to the database.

password=123456

# Database options to send to the server when connecting.

# https://docs.djangoproject.com/en/1.4/ref/databases/

## options={}

# Database schema, to be used only when public schema is revoked in postgres

## schema=public
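Since only MySQL, Hive, HDFS and ResourceManager were configured, it is handy to list just the settings that are actually active (uncommented) in the file. Hue's ini uses nested [section], [[sub]] and [[[subsub]]] headers, which Python's configparser cannot read, so this sketch tracks the bracket depth itself. It assumes that any line starting with '#' is a comment or a disabled ('##') setting.

```python
import re

SECTION = re.compile(r"^(\[+)\s*([^\[\]]+?)\s*(\]+)$")


def active_settings(text: str) -> dict:
    """Map 'section/sub/key' -> raw value for every uncommented key=value line."""
    path = []      # current section stack, one entry per bracket depth
    result = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank line, comment, or '##'-disabled setting
        m = SECTION.match(line)
        if m:
            depth = len(m.group(1))           # '[' = 1, '[[' = 2, '[[[' = 3
            path = path[: depth - 1] + [m.group(2)]
            continue
        if "=" in line:
            key, value = line.split("=", 1)
            result["/".join(path + [key.strip()])] = value.strip()
    return result
```

For example, `active_settings(open("desktop/conf/pseudo-distributed.ini").read())` run from the hue directory returns entries such as `librdbms/databases/mysql/engine`.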

###########################################################################

# Settings to configure SAML

###########################################################################

[libsaml]

# Xmlsec1 binary path. This program should be executable by the user running Hue.

## xmlsec_binary=/usr/local/bin/xmlsec1

# Entity ID for Hue acting as service provider.

# Can also accept a pattern where '' will be replaced with server URL base.

## entity_id="/saml2/metadata/"

# Create users from SSO on login.

## create_users_on_login=true

# Required attributes to ask for from IdP.

# This requires a comma separated list.

## required_attributes=uid

# Optional attributes to ask for from IdP.

# This requires a comma separated list.

## optional_attributes=

# IdP metadata in the form of a file. This is generally an XML file containing metadata that the Identity Provider generates.

## metadata_file=

# Private key to encrypt metadata with.

## key_file=

# Signed certificate to send along with encrypted metadata.

## cert_file=

# Path to a file containing the password private key.

## key_file_password=/path/key

# Execute this script to produce the private key password. This will be used when 'key_file_password' is not set.

## key_file_password_script=/path/pwd.sh

# A mapping from attributes in the response from the IdP to django user attributes.

## user_attribute_mapping={'uid': ('username', )}

# Have Hue initiated authn requests be signed and provide a certificate.

## authn_requests_signed=false

# Have Hue initiated logout requests be signed and provide a certificate.

## logout_requests_signed=false

# Username can be sourced from 'attributes' or 'nameid'.

## username_source=attributes

# Performs the logout or not.

## logout_enabled=true

###########################################################################

# Settings to configure OpenID

###########################################################################

[libopenid]

# (Required) OpenId SSO endpoint url.

## server_endpoint_url=https://www.google.com/accounts/o8/id

# OpenId 1.1 identity url prefix to be used instead of SSO endpoint url

# This is only supported if you are using an OpenId 1.1 endpoint

## identity_url_prefix=https://app.onelogin.com/openid/your_company.com/

# Create users from OPENID on login.

## create_users_on_login=true

# Use email for username

## use_email_for_username=true

###########################################################################

# Settings to configure OAuth

###########################################################################

[liboauth]

# NOTE:

# To work, each of the active (i.e. uncommented) services must have

# applications created on the social network.

# Then the "consumer key" and "consumer secret" must be provided here.

#

# The addresses where to do so are:

# Twitter: https://dev.twitter.com/apps

# Google+ : https://cloud.google.com/

# Facebook: https://developers.facebook.com/apps

# Linkedin: https://www.linkedin.com/secure/developer

#

# Additionally, the following must be set in the application settings:

# Twitter: Callback URL (aka Redirect URL) must be set to http://YOUR_HUE_IP_OR_DOMAIN_NAME/oauth/social_login/oauth_authenticated

# Google+ : CONSENT SCREEN must have email address

# Facebook: Sandbox Mode must be DISABLED

# Linkedin: "In OAuth User Agreement", r_emailaddress is REQUIRED

# The Consumer key of the application

## consumer_key_twitter=

## consumer_key_google=

## consumer_key_facebook=

## consumer_key_linkedin=

# The Consumer secret of the application

## consumer_secret_twitter=

## consumer_secret_google=

## consumer_secret_facebook=

## consumer_secret_linkedin=

# The Request token URL

## request_token_url_twitter=https://api.twitter.com/oauth/request_token

## request_token_url_google=https://accounts.google.com/o/oauth2/auth

## request_token_url_linkedin=https://www.linkedin.com/uas/oauth2/authorization

## request_token_url_facebook=https://graph.facebook.com/oauth/authorize

# The Access token URL

## access_token_url_twitter=https://api.twitter.com/oauth/access_token

## access_token_url_google=https://accounts.google.com/o/oauth2/token

## access_token_url_facebook=https://graph.facebook.com/oauth/access_token

## access_token_url_linkedin=https://api.linkedin.com/uas/oauth2/accessToken

# The Authenticate URL

## authenticate_url_twitter=https://api.twitter.com/oauth/authorize

## authenticate_url_google=https://www.googleapis.com/oauth2/v1/userinfo?access_token=

## authenticate_url_facebook=https://graph.facebook.com/me?access_token=

## authenticate_url_linkedin=https://api.linkedin.com/v1/people/~:(email-address)?format=json&oauth2_access_token=

# Username Map. Json Hash format.

# Replaces username parts in order to simplify usernames obtained

# Example: {"@sub1.domain.com":"_S1", "@sub2.domain.com":"_S2"}

# converts 'email@sub1.domain.com' to 'email_S1'

## username_map={}
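The username_map example above can be reproduced as plain substring replacement. This is a sketch of the documented behavior only; apply_username_map is a hypothetical helper, and Hue's actual implementation may apply the map differently.

```python
import json


def apply_username_map(username: str, mapping_json: str) -> str:
    """Replace each mapped substring in the username (illustrative reading of username_map)."""
    for old, new in json.loads(mapping_json).items():
        username = username.replace(old, new)
    return username


MAP = '{"@sub1.domain.com":"_S1", "@sub2.domain.com":"_S2"}'
print(apply_username_map("email@sub1.domain.com", MAP))
```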

# Whitelisted domains (only applies to Google OAuth). CSV format.

## whitelisted_domains_google=

###########################################################################

# Settings to configure Metadata

###########################################################################

[metadata]

[[optimizer]]

# Hostname of the Optimizer API or compatible service.

## hostname=navoptapi.us-west-1.optimizer.altus.cloudera.com

# The name of the key of the service.

## auth_key_id=e0819f3a-1e6f-4904-be69-5b704bacd1245

# The private part of the key associated with the auth_key.

## auth_key_secret='-----BEGIN PRIVATE KEY....'

# Execute this script to produce the auth_key secret. This will be used when `auth_key_secret` is not set.

## auth_key_secret_script=/path/to/script.sh

# The name of the workload where queries are uploaded and optimizations are calculated from. Automatically guessed from auth_key and cluster_id if not specified.

## tenant_id=

# Perform Sentry privilege filtering.

# Default to true automatically if the cluster is secure.

## apply_sentry_permissions=False

# Cache timeout in milliseconds for the Optimizer metadata used in assist, autocomplete, etc.

# Defaults to 10 days, set to 0 to disable caching.

## cacheable_ttl=864000000
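cacheable_ttl is given in milliseconds, so 10 days is 10 × 24 × 3600 × 1000 = 864000000 ms. If a different cache lifetime is wanted, a small conversion helper like this sketch avoids arithmetic slips:

```python
from datetime import timedelta


def days_to_ms(days: float) -> int:
    """Convert days to milliseconds, the unit cacheable_ttl expects."""
    return int(timedelta(days=days).total_seconds() * 1000)


print(days_to_ms(10))                     # 864000000
print(timedelta(milliseconds=864000000))  # 10 days, 0:00:00
```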

# Automatically upload queries after their execution in order to improve recommendations.

## auto_upload_queries=true

# Automatically upload queried tables DDL in order to improve recommendations.

## auto_upload_ddl=true

# Automatically upload queried tables and columns stats in order to improve recommendations.

## auto_upload_stats=false

# Allow admins to upload the last N executed queries in the quick start wizard. Use 0 to disable.

## query_history_upload_limit=10000

[[navigator]]

# Navigator API URL (without version suffix).

## api_url=http://localhost:7187/api

# Which authentication to use: CM or external via LDAP or SAML.

## navmetadataserver_auth_type=CMDB

# Username of the CM user used for authentication.

## navmetadataserver_cmdb_user=hue

# CM password of the user used for authentication.

## navmetadataserver_cmdb_password=

# Execute this script to produce the CM password. This will be used when the plain password is not set.

## navmetadataserver_cmdb_password_script=

# Username of the LDAP user used for authentication.

## navmetadataserver_ldap_user=hue

# LDAP password of the user used for authentication.

## navmetadataserver_ldap_ppassword=

# Execute this script to produce the LDAP password. This will be used when the plain password is not set.

## navmetadataserver_ldap_password_script=

# Username of the SAML user used for authentication.

## navmetadataserver_saml_user=hue

# SAML password of the user used for authentication.

## navmetadataserver_saml_password=

# Execute this script to produce the SAML password. This will be used when the plain password is not set.

## navmetadataserver_saml_password_script=

# Perform Sentry privilege filtering.

# Default to true automatically if the cluster is secure.

## apply_sentry_permissions=False

# Max number of items to fetch in one call in object search.

## fetch_size_search=450

# Max number of items to fetch in one call in object search autocomplete.

## fetch_size_search_interactive=450

# If metadata search is enabled, also show the search box in the left assist.

## enable_file_search=false

Troubleshooting:

error: command 'gcc' failed with exit status 1

make[2]: *** [/opt/hue/desktop/core/build/cryptography-1.3.1/egg.stamp] Error 1

make[2]: Leaving directory `/opt/hue/desktop/core'

make[1]: *** [.recursive-env-install/core] Error 2

make[1]: Leaving directory `/opt/hue/desktop'

make: *** [desktop] Error 2

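Cause: missing dependencies. Fix:

yum install gcc libffi-devel python-devel openssl-devel

Most of these were already installed earlier (openssl-devel was not in the original list), but they still had to be installed again to clear this error.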

-------------------------------------------------------------------------

[ERROR] Failed to execute goal on project hue-plugins: Could not resolve dependencies for project com.cloudera.hue:hue-plugins:jar:3.12.0-SNAPSHOT: Could not transfer artifact org.apache.hadoop:hadoop-hdfs:jar:2.6.0-cdh5.5.0 from/to cdh.releases.repo (https://repository.cloudera.com/content/groups/cdh-releases-rcs): GET request of: org/apache/hadoop/hadoop-hdfs/2.6.0-cdh5.5.0/hadoop-hdfs-2.6.0-cdh5.5.0.jar from cdh.releases.repo failed: SSL peer shut down incorrectly -> [Help 1]

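Edit the pom file:

# vim /opt/hue/maven/pom.xml

Set both Hadoop version properties to 2.8.5 (the Hadoop version used here).

Change hadoop-core to hadoop-common:

<artifactId>hadoop-common</artifactId>

Change the version of hadoop-test to 1.2.1:

<artifactId>hadoop-test</artifactId>

<version>1.2.1</version>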
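If the pom has to be patched repeatedly (for example after re-downloading the source), the two artifact edits (hadoop-core to hadoop-common, hadoop-test version to 1.2.1) can be scripted. This is a sketch assuming the pom uses the standard Maven namespace and Python 3.8+ (for insert_comments); the version-property edit is left to be done by hand.

```python
import xml.etree.ElementTree as ET

NS = "http://maven.apache.org/POM/4.0.0"  # standard Maven POM namespace (assumed)
ET.register_namespace("", NS)             # write it back as the default namespace


def q(tag: str) -> str:
    """Qualify a tag name with the POM namespace."""
    return f"{{{NS}}}{tag}"


def patch_pom(xml_text: str) -> str:
    """Rename hadoop-core to hadoop-common and set hadoop-test's version to 1.2.1."""
    # insert_comments keeps XML comments instead of silently dropping them.
    parser = ET.XMLParser(target=ET.TreeBuilder(insert_comments=True))
    root = ET.fromstring(xml_text, parser=parser)
    for dep in root.iter(q("dependency")):
        artifact = dep.find(q("artifactId"))
        if artifact is None:
            continue
        name = (artifact.text or "").strip()
        if name == "hadoop-core":
            artifact.text = "hadoop-common"
        elif name == "hadoop-test":
            version = dep.find(q("version"))
            if version is not None:
                version.text = "1.2.1"
    return ET.tostring(root, encoding="unicode")
```

The returned string has no XML declaration, so keep a backup of the original pom.xml before writing the result back.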

Delete the two ThriftJobTrackerPlugin.java files:

# rm -rf /opt/hue/desktop/libs/hadoop/java/src/main/java/org/apache/hadoop/thriftfs/ThriftJobTrackerPlugin.java

# rm -rf /opt/hue/desktop/libs/hadoop/java/src/main/java/org/apache/hadoop/mapred/ThriftJobTrackerPlugin.java

----------------------------------------------------------

Startup fails when running:

# build/env/bin/supervisor

KeyError: "Couldn't get user id for user hue"

Fix: create the hue user:

# adduser hue
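The KeyError presumably comes from supervisor looking up the local hue account. A pre-flight check with Python's pwd module (Unix only) catches the missing user before starting Hue; this is a sketch, not part of Hue.

```python
import pwd


def user_exists(name: str) -> bool:
    """True if a local account with this name exists (Unix only)."""
    try:
        pwd.getpwnam(name)
        return True
    except KeyError:
        return False


if __name__ == "__main__":
    if not user_exists("hue"):
        print("User 'hue' is missing; create it with: adduser hue")
```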

-----------------------------------------------

Accessing port 8000 then fails with: OperationalError: attempt to write a readonly database

The cause is that /opt/hue/desktop/desktop.db is read-only. Fix:

# chmod +777 desktop.db

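Accessing it again fails with: OperationalError: unable to open database file

The cause is that the directory containing desktop.db is also read-only for the hue user. SQLite creates its journal file next to the database, so the directory itself must be writable too. Fix: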

[root@hadoop01 hue]# chown -R hue:root *

Hue now starts successfully.
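Both database errors come down to permissions on desktop.db and on its directory. A sketch of a check for both conditions; os.access tests the real uid of the running process, so run it as the hue user (e.g. with sudo -u hue) for a meaningful answer. The default path is the one used in this install.

```python
import os


def sqlite_write_problems(db_path: str) -> list:
    """List reasons the current user could not write to a SQLite database file."""
    problems = []
    # The file itself must be writable ("attempt to write a readonly database").
    if not os.access(db_path, os.W_OK):
        problems.append(f"{db_path} is missing or not writable")
    # The directory must be writable and searchable for journal files
    # ("unable to open database file").
    parent = os.path.dirname(os.path.abspath(db_path))
    if not os.access(parent, os.W_OK | os.X_OK):
        problems.append(f"directory {parent} is not writable")
    return problems


if __name__ == "__main__":
    import sys
    path = sys.argv[1] if len(sys.argv) > 1 else "/opt/hue/desktop/desktop.db"
    print(sqlite_write_problems(path) or "OK")
```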

Error:

Cannot connect to the HDFS file system.

Fix: add the following to Hadoop's core-site.xml:

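<property>
  <name>hadoop.proxyuser.hue.hosts</name>
  <value>*</value>
</property>
<property>
  <name>hadoop.proxyuser.hue.groups</name>
  <value>*</value>
</property>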

Add one such pair for every user, since each of them needs access to the HDFS interface; "user" here means a user that Hue operates as.
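Because one hosts/groups pair is needed per user, the property blocks can be generated instead of typed by hand. A sketch using xml.etree.ElementTree; the user list is hypothetical, and the output goes inside the <configuration> element of core-site.xml.

```python
import xml.etree.ElementTree as ET


def proxyuser_properties(users, hosts="*", groups="*"):
    """Build <property> elements for hadoop.proxyuser.<user>.hosts and .groups."""
    props = []
    for user in users:
        for suffix, value in (("hosts", hosts), ("groups", groups)):
            prop = ET.Element("property")
            ET.SubElement(prop, "name").text = f"hadoop.proxyuser.{user}.{suffix}"
            ET.SubElement(prop, "value").text = value
            props.append(prop)
    return props


for p in proxyuser_properties(["hue"]):
    print(ET.tostring(p, encoding="unicode"))
```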

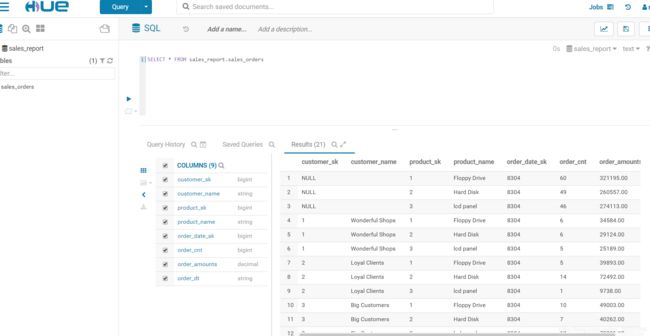

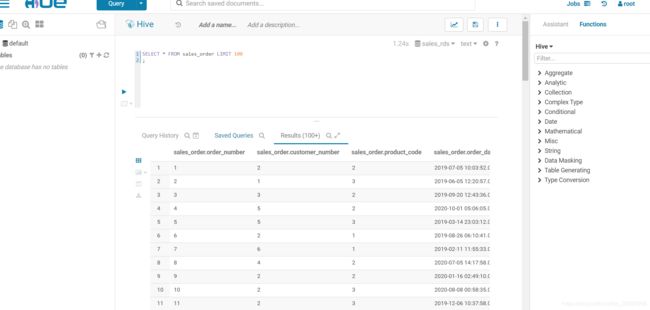

Basic usage