06 TensorFlow 2.0: CNN CIFAR-100 in Practice

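In white robes on horseback, a dashing youth

A peerless beauty glances back with a faint smile

Jade flute notes drift beneath the bright moon

She dances lightly, her silhouette graceful

Beneath the marriage tree, together we pray to Yue Lao

Hand in hand, dusk after dusk, dawn after dawn

"Mu Xia" (《慕夏》)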

import os

os.environ['TF_CPP_MIN_LOG_LEVEL']='2'

import tensorflow as tf

from tensorflow.keras import layers, optimizers, datasets, Sequential

print(tf.__version__)

gpu_ok = tf.test.is_gpu_available()

print("\nuse GPU",gpu_ok)

tf.random.set_seed(1234)

lr = 1e-4

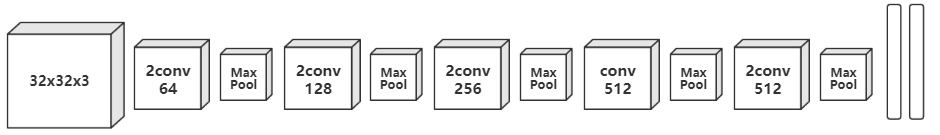

conv_layer = [
    # unit 1: 64 filters, 32x32 -> 16x16
    layers.Conv2D(filters=64, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.Conv2D(filters=64, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),
    # unit 2: 128 filters, 16x16 -> 8x8
    layers.Conv2D(filters=128, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.Conv2D(filters=128, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),
    # unit 3: 256 filters, 8x8 -> 4x4
    layers.Conv2D(filters=256, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.Conv2D(filters=256, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),
    # unit 4: 512 filters, 4x4 -> 2x2
    layers.Conv2D(filters=512, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.Conv2D(filters=512, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),
    # unit 5: 512 filters, 2x2 -> 1x1
    layers.Conv2D(filters=512, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.Conv2D(filters=512, kernel_size=[3, 3], strides=(1, 1), padding='same', activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same')
]

fc_layer = [
    layers.Dense(units=256, activation=tf.nn.relu),
    layers.Dense(units=128, activation=tf.nn.relu),
    # no activation: the last layer outputs raw logits for the 100 classes
    layers.Dense(units=100, activation=None)
]

conv_net = Sequential(conv_layer)

conv_net.build(input_shape=[None, 32, 32, 3])

fc_net = Sequential(fc_layer)

fc_net.build(input_shape=[None, 512])

optimizer = optimizers.Adam(learning_rate=lr)

# summary() prints the table itself and returns None, so no print() wrapper is needed
conv_net.summary()

fc_net.summary()
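
The `input_shape=[None, 512]` passed to `fc_net.build` follows from the convolutional stack: every `Conv2D` uses stride 1 with `padding='same'` and keeps the spatial size, while each of the five `MaxPool2D` layers with stride 2 and `padding='same'` maps a side of length n to ceil(n / 2). A minimal sketch of that arithmetic in plain Python is shown here; the helper name `same_pool_out` is hypothetical and not part of TensorFlow.

```python
import math

def same_pool_out(size, stride=2):
    """Output side length of a pooling layer that uses padding='same'."""
    return math.ceil(size / stride)

side = 32                      # CIFAR-100 images are 32x32
sides = [side]
for _ in range(5):             # five MaxPool2D layers in conv_layer
    side = same_pool_out(side)
    sides.append(side)

channels = 512                 # filters of the last Conv2D
flat_features = side * side * channels

print(sides)
print(flat_features)
```

The side length shrinks to 1 after the fifth pooling layer, so reshaping the `[b, 1, 1, 512]` output to `[-1, 512]` loses nothing and matches the input width of `fc_net`.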

def normlize_data(x, y):
    # scale pixel values from [0, 255] to [0, 1] and cast labels to int32
    x = tf.cast(x, dtype=tf.float32)/255.
    y = tf.cast(y, dtype=tf.int32)
    return x, y

(x, y),(x_test, y_test) = datasets.cifar100.load_data()

y = tf.squeeze(y, axis=1)

y_test = tf.squeeze(y_test, axis=1)

train_db = tf.data.Dataset.from_tensor_slices((x, y))

train_db = train_db.shuffle(2000).map(normlize_data).batch(128)

test_db = tf.data.Dataset.from_tensor_slices((x_test, y_test))

test_db = test_db.map(normlize_data).batch(128)

print(type(train_db))

sample = next(iter(train_db))

print(sample[0].shape)

print(sample[1].shape)

print(tf.reduce_max(sample[0]), tf.reduce_min(sample[0]))

print(tf.reduce_max(sample[1]), tf.reduce_min(sample[1]))

variables = conv_net.trainable_variables+fc_net.trainable_variables

def execute():
    for epoch in range(3):
        for step, (x, y) in enumerate(train_db):
            with tf.GradientTape() as tape:
                conv_net_out = conv_net(x)  # [b, 32, 32, 3] -> [b, 1, 1, 512]
                conv_net_out = tf.reshape(conv_net_out, shape=[-1, 512])
                out = fc_net(conv_net_out)  # [b, 512] -> [b, 100]
                y_one_hot = tf.one_hot(y, depth=100)
                loss = tf.losses.categorical_crossentropy(y_one_hot, out, from_logits=True)
                loss = tf.reduce_mean(loss)
            grads = tape.gradient(loss, variables)
            optimizer.apply_gradients(zip(grads, variables))
            if step % 100 == 0:
                print(epoch, step, 'loss:', float(loss))

        # after every epoch, compute accuracy on the test set
        total_num = 0
        total_correct = 0
        for test_x, test_y in test_db:
            conv_net_test_out = conv_net(test_x)
            conv_net_test_out = tf.reshape(conv_net_test_out, shape=[-1, 512])
            test_out = fc_net(conv_net_test_out)
            prob = tf.nn.softmax(test_out, axis=1)
            pred = tf.argmax(prob, axis=1)
            pred = tf.cast(pred, dtype=tf.int32)
            # compare with the test labels, not with the last training batch
            correct = tf.reduce_sum(tf.cast(tf.equal(pred, test_y), dtype=tf.int32))
            total_num += test_x.shape[0]
            total_correct += int(correct)
        acc = total_correct / total_num
        print(epoch, 'acc:', acc)

execute()
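
The training loop passes raw logits to `categorical_crossentropy` with `from_logits=True`, and the evaluation loop applies `softmax` before `argmax`. As a rough illustration of what those two steps compute for a single example, here is a pure-Python sketch. The helpers `log_softmax`, `cross_entropy_from_logits`, and `argmax` are hypothetical names written for this example rather than TensorFlow functions, and the logits are made-up values.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax of a list of floats."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]

def cross_entropy_from_logits(logits, label):
    """Cross-entropy against a one-hot target, i.e. -log p[label]."""
    return -log_softmax(logits)[label]

def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])

logits = [2.0, 0.5, -1.0]   # made-up scores for a 3-class toy problem
label = 0                   # index of the true class

loss = cross_entropy_from_logits(logits, label)
probs = [math.exp(v) for v in log_softmax(logits)]

# softmax is monotonic, so it never changes which class has the top score
same_prediction = argmax(probs) == argmax(logits)

print(loss, probs, same_prediction)
```

Since softmax preserves the ordering of the logits, the `tf.nn.softmax` call in the evaluation loop is optional when only the predicted class is needed.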