Deep Learning Notes (25): Course 4, Week 2, Assignment 2

Preface

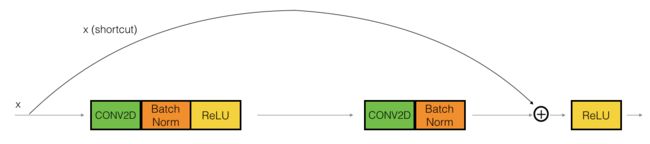

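This assignment implements a residual network (ResNet). The key idea of a residual network is the skip connection: the input of a block is added back to its output. This makes it easier for gradients to propagate through a very deep network, so the training error of a deep network can keep decreasing and a better model can be trained. Through this assignment we get to know the structure of ResNet50 and its basic building blocks, and we build the network using the parameters given in the paper. The assignment is also good practice with Keras, and makes it easier to build or reproduce whatever network you have in mind.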
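The skip idea can be illustrated without any framework. The following is a minimal sketch in plain Python, assuming a toy main path that merely scales its input by a weight `w` (`toy_main_path` and `residual_block` are hypothetical names, not part of the assignment). When the weight is zero, the block output `relu(F(x) + x)` reduces to `relu(x)`, so an extra block can easily behave like the identity.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, a) for a in v]


def toy_main_path(x, w):
    """Hypothetical stand-in for the main path F(x): scales every element by w."""
    return [w * a for a in x]


def residual_block(x, w):
    """Compute relu(F(x) + x), the pattern shared by both ResNet blocks."""
    fx = toy_main_path(x, w)
    return relu([f + a for f, a in zip(fx, x)])


# Non-negative input, as if it came out of an earlier ReLU.
x = [0.5, 2.0, 0.0]
print(residual_block(x, 0.0))  # zero weights: x passes through unchanged
print(residual_block(x, 0.1))  # small weights: a small correction on top of x
```

The real blocks replace the toy main path with three CONV + BatchNorm stages, but the final add-then-ReLU step is the same.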

Code

```python
# Imports used by the code in this post
from keras import layers
from keras.layers import (Input, Dense, Activation, ZeroPadding2D, BatchNormalization,
                          Flatten, Conv2D, AveragePooling2D, MaxPooling2D)
from keras.models import Model
from keras.initializers import glorot_uniform

# GRADED FUNCTION: identity_block

def identity_block(X, f, filters, stage, block):
    """
    Implementation of the identity block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    stage -- integer, used to name the layers, depending on their position in the network
    block -- string/character, used to name the layers, depending on their position in the network

    Returns:
    X -- output of the identity block, tensor of shape (m, n_H, n_W, n_C)
    """

    # defining name basis
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'

    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value. You'll need this later to add back to the main path.
    X_shortcut = X

    # First component of main path
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    ### START CODE HERE ###

    # Second component of main path (≈3 lines)
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same',
               name=conv_name_base + '2b', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # Third component of main path (≈2 lines); no ReLU here, it is applied after the addition
    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2c', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # Final step: Add shortcut value to main path, and pass it through a RELU activation (≈2 lines)
    # F3 must equal the number of input channels, otherwise the addition fails.
    X = layers.add([X, X_shortcut])
    X = Activation('relu')(X)

    ### END CODE HERE ###

    return X
```

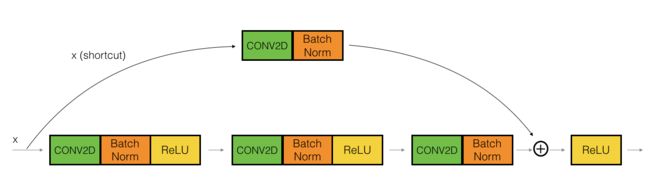

```python
# GRADED FUNCTION: convolutional_block

def convolutional_block(X, f, filters, stage, block, s=2):
    """
    Implementation of the convolutional block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    stage -- integer, used to name the layers, depending on their position in the network
    block -- string/character, used to name the layers, depending on their position in the network
    s -- Integer, specifying the stride to be used

    Returns:
    X -- output of the convolutional block, tensor of shape (m, n_H, n_W, n_C)
    """

    # defining name basis
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'

    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value
    X_shortcut = X

    ##### MAIN PATH #####
    # First component of main path
    X = Conv2D(F1, (1, 1), strides=(s, s), name=conv_name_base + '2a', padding='valid',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    ### START CODE HERE ###

    # Second component of main path (≈3 lines)
    X = Conv2D(F2, (f, f), strides=(1, 1), name=conv_name_base + '2b', padding='same',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # Third component of main path (≈2 lines)
    X = Conv2D(F3, (1, 1), strides=(1, 1), name=conv_name_base + '2c', padding='valid',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    ##### SHORTCUT PATH #### (≈2 lines)
    # Project the shortcut to F3 channels with the same stride so both paths have equal shapes.
    X_shortcut = Conv2D(F3, (1, 1), strides=(s, s), name=conv_name_base + '1', padding='valid',
                        kernel_initializer=glorot_uniform(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis=3, name=bn_name_base + '1')(X_shortcut)

    # Final step: Add shortcut value to main path, and pass it through a RELU activation (≈2 lines)
    X = layers.add([X, X_shortcut])
    X = Activation('relu')(X)

    ### END CODE HERE ###

    return X
```
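To see why the identity block can add `X_shortcut` directly while the convolutional block needs a CONV layer on the shortcut, it can help to work out the output shapes by hand. The sketch below uses the standard output-size formulas for `'valid'` and `'same'` padding. The helper names (`conv_out`, `main_path_shape`, `shortcut_shape`) are made up for this illustration and are not part of the assignment.

```python
import math


def conv_out(n, k, s, padding):
    """Spatial output size of a convolution over n pixels (kernel k, stride s)."""
    if padding == 'same':
        return math.ceil(n / s)
    return (n - k) // s + 1  # 'valid'


def main_path_shape(h, w, f, filters, s=1):
    """Shape after the three CONV layers of the main path (stride s on the first one)."""
    F1, F2, F3 = filters
    h, w = conv_out(h, 1, s, 'valid'), conv_out(w, 1, s, 'valid')  # 1x1 CONV, stride s
    h, w = conv_out(h, f, 1, 'same'), conv_out(w, f, 1, 'same')    # fxf CONV, 'same'
    h, w = conv_out(h, 1, 1, 'valid'), conv_out(w, 1, 1, 'valid')  # 1x1 CONV, stride 1
    return (h, w, F3)


def shortcut_shape(h, w, c, filters=None, s=1):
    """Shortcut shape: untouched in the identity block, 1x1 CONV with stride s otherwise."""
    if filters is None:
        return (h, w, c)
    return (conv_out(h, 1, s, 'valid'), conv_out(w, 1, s, 'valid'), filters[2])


# Identity block: the shapes agree only because F3 equals the input channel count.
print(main_path_shape(15, 15, 3, [64, 64, 256]), shortcut_shape(15, 15, 256))

# Convolutional block with s = 2: the raw input (15, 15, 256) would not match,
# but the projected shortcut does.
print(main_path_shape(15, 15, 3, [128, 128, 512], s=2),
      shortcut_shape(15, 15, 256, [128, 128, 512], s=2))
```

In both cases the two printed shapes are equal, which is exactly the condition `layers.add` needs.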

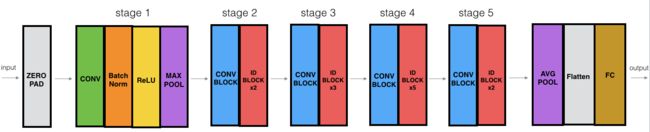

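- Zero-padding pads the input with a pad of (3,3).
- Stage 1:
    - The 2D convolution has 64 filters of shape (7,7) and uses a stride of (2,2). Its name is "conv1".
    - BatchNorm is applied to the channels axis of the input.
    - MaxPooling uses a (3,3) window and a (2,2) stride.
- Stage 2:
    - The convolutional block uses three sets of filters of size [64,64,256], "f" is 3, "s" is 1, and the block is "a".
    - The 2 identity blocks use three sets of filters of size [64,64,256], "f" is 3, and the blocks are "b" and "c".
- Stage 3:
    - The convolutional block uses three sets of filters of size [128,128,512], "f" is 3, "s" is 2, and the block is "a".
    - The 3 identity blocks use three sets of filters of size [128,128,512], "f" is 3, and the blocks are "b", "c" and "d".
- Stage 4:
    - The convolutional block uses three sets of filters of size [256, 256, 1024], "f" is 3, "s" is 2, and the block is "a".
    - The 5 identity blocks use three sets of filters of size [256, 256, 1024], "f" is 3, and the blocks are "b", "c", "d", "e" and "f".
- Stage 5:
    - The convolutional block uses three sets of filters of size [512, 512, 2048], "f" is 3, "s" is 2, and the block is "a".
    - The 2 identity blocks use three sets of filters of size [256, 256, 2048], "f" is 3, and the blocks are "b" and "c". (The grader only accepts [512, 512, 2048] here, which is also what the original ResNet-50 uses.)
- The 2D average pooling uses a window of shape (2,2) and its name is "avg_pool".
- The Flatten layer has no hyperparameters or name.
- The fully connected (Dense) layer reduces its input to the number of classes using a softmax activation. Its name is 'fc' + str(classes).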
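The list above can be turned into a quick sanity check of the tensor shapes and of the name "ResNet50". This is only a sketch in plain Python, assuming the default 64x64x3 input of the assignment. `STAGES`, `trace_resnet50`, and `count_weight_layers` are hypothetical names introduced here, and only the output channel count (F3) of each stage is stored.

```python
def valid_out(n, k, s):
    """Output size of a 'valid' convolution or pooling (window k, stride s)."""
    return (n - k) // s + 1


# (output channels F3, stride of the convolutional block, number of identity blocks)
STAGES = [
    (256, 1, 2),   # stage 2
    (512, 2, 3),   # stage 3
    (1024, 2, 5),  # stage 4
    (2048, 2, 2),  # stage 5
]


def trace_resnet50(size=64, classes=6):
    """Return (layer, output shape) pairs for a square input of the given size."""
    shapes = []
    n = size + 2 * 3                    # ZeroPadding2D((3, 3))
    n = valid_out(n, 7, 2)              # conv1: 7x7 filters, stride 2
    shapes.append(('conv1', (n, n, 64)))
    n = valid_out(n, 3, 2)              # MaxPooling2D: 3x3 window, stride 2
    shapes.append(('max_pool', (n, n, 64)))
    channels = 64
    for stage, (channels, s, _) in enumerate(STAGES, start=2):
        n = valid_out(n, 1, s)          # only the convolutional block changes the size
        shapes.append(('stage%d' % stage, (n, n, channels)))
    n = valid_out(n, 2, 2)              # AveragePooling2D: 2x2, stride defaults to pool size
    shapes.append(('avg_pool', (n, n, channels)))
    shapes.append(('flatten', (n * n * channels,)))
    shapes.append(('fc%d' % classes, (classes,)))
    return shapes


def count_weight_layers():
    """conv1 + three main-path CONV layers per block + the dense layer."""
    blocks = sum(1 + n_identity for _, _, n_identity in STAGES)  # 3 + 4 + 6 + 3
    return 1 + 3 * blocks + 1


for name, shape in trace_resnet50():
    print(name, shape)
print('weight layers:', count_weight_layers())
```

For a 64x64 input the feature map is 2x2x2048 before average pooling and 1x1x2048 after it. The count follows the usual convention of ignoring the CONV layers on the shortcut paths.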

```python
# GRADED FUNCTION: ResNet50

def ResNet50(input_shape=(64, 64, 3), classes=6):
    """
    Implementation of the popular ResNet50 with the following architecture:
    CONV2D -> BATCHNORM -> RELU -> MAXPOOL -> CONVBLOCK -> IDBLOCK*2 -> CONVBLOCK -> IDBLOCK*3
    -> CONVBLOCK -> IDBLOCK*5 -> CONVBLOCK -> IDBLOCK*2 -> AVGPOOL -> TOPLAYER

    Arguments:
    input_shape -- shape of the images of the dataset
    classes -- integer, number of classes

    Returns:
    model -- a Model() instance in Keras
    """

    # Define the input as a tensor with shape input_shape
    X_input = Input(input_shape)

    # Zero-Padding
    X = ZeroPadding2D((3, 3))(X_input)

    # Stage 1
    X = Conv2D(64, (7, 7), strides=(2, 2), name='conv1',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name='bn_conv1')(X)
    X = Activation('relu')(X)
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # Stage 2
    X = convolutional_block(X, f=3, filters=[64, 64, 256], stage=2, block='a', s=1)
    X = identity_block(X, 3, [64, 64, 256], stage=2, block='b')
    X = identity_block(X, 3, [64, 64, 256], stage=2, block='c')

    ### START CODE HERE ###

    # Stage 3 (≈4 lines)
    # The convolutional block uses three sets of filters of size [128,128,512], "f" is 3, "s" is 2 and the block is "a".
    # The 3 identity blocks use the same filters, "f" is 3 and the blocks are "b", "c" and "d".
    X = convolutional_block(X, f=3, filters=[128, 128, 512], stage=3, block='a', s=2)
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='b')
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='c')
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='d')

    # Stage 4 (≈6 lines)
    # The convolutional block uses three sets of filters of size [256, 256, 1024], "f" is 3, "s" is 2 and the block is "a".
    # The 5 identity blocks use the same filters, "f" is 3 and the blocks are "b", "c", "d", "e" and "f".
    X = convolutional_block(X, f=3, filters=[256, 256, 1024], stage=4, block='a', s=2)
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='b')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='c')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='d')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='e')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='f')

    # Stage 5 (≈3 lines)
    # The convolutional block uses three sets of filters of size [512, 512, 2048], "f" is 3, "s" is 2 and the block is "a".
    X = convolutional_block(X, f=3, filters=[512, 512, 2048], stage=5, block='a', s=2)

    # The assignment text says [256, 256, 2048] for the 2 identity blocks "b" and "c",
    # but the grader only accepts [512, 512, 2048], which is also what the original ResNet-50 uses.
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='b')
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='c')

    # AVGPOOL (≈1 line). Use "X = AveragePooling2D(...)(X)"
    # The 2D Average Pooling uses a window of shape (2,2) and its name is "avg_pool".
    X = AveragePooling2D(pool_size=(2, 2), name='avg_pool')(X)

    ### END CODE HERE ###

    # output layer
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', name='fc' + str(classes),
              kernel_initializer=glorot_uniform(seed=0))(X)

    # Create model
    model = Model(inputs=X_input, outputs=X, name='ResNet50')

    return model
```

After that, just load the dataset and train the model.
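The post does not show the training step, so the following is only a sketch of what it might look like. The optimizer, loss, epoch count, and batch size are assumptions (common choices for a softmax output with one-hot labels), and `train_and_evaluate` is a hypothetical helper name. `compile`, `fit`, and `evaluate` are the standard Keras `Model` methods. The inputs are assumed to be already normalized images and one-hot encoded labels.

```python
def train_and_evaluate(model, X_train, Y_train, X_test, Y_test, epochs=2, batch_size=32):
    """Compile, fit, and evaluate a Keras model; returns (loss, accuracy) on the test set."""
    # Assumed settings: Adam optimizer and categorical cross-entropy for one-hot labels.
    model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

    # Train on the training set.
    model.fit(X_train, Y_train, epochs=epochs, batch_size=batch_size)

    # With one metric configured, evaluate returns [loss, accuracy].
    loss, accuracy = model.evaluate(X_test, Y_test)
    return loss, accuracy
```

It could be called as `train_and_evaluate(ResNet50(input_shape=(64, 64, 3), classes=6), X_train, Y_train, X_test, Y_test)`, where the four arrays come from whatever dataset loader is used.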