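- Kafka Raft notes

自东向西

Kafka notes, kafka, distributed systems

Background: Since Kafka 2.8, Kafka can run without Zookeeper, using its own Raft implementation, KRaft (an early-access mode in 2.8). Why remove Zookeeper? Zookeeper used to handle Controller election, Broker registration, TopicPartition registration and election, Consumer/Producer metadata management, load balancing, and more. In other words, it stored all kinds of metadata and ran all kinds of elections. But Zookeeper is not "fast", and once a cluster grows large it easily becomes a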

- Kafka topic、producer、consumer的基础使用

病妖

Kafkakafkabigdata分布式

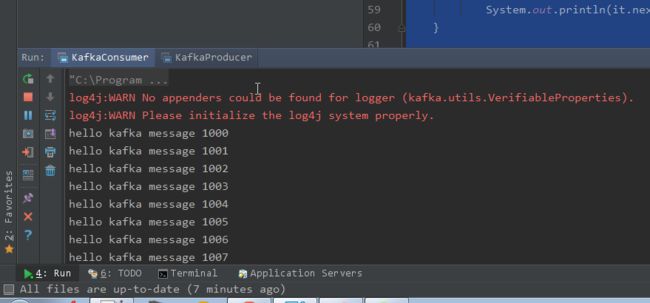

文章目录Kafka初级前言1.topic的增删改查2.生产者的消息发送3.消费者消费数据Kafka初级前言关于kafka的集群安装这里就先跳过,如果需要相关资料以及学习视频的可以在留言下留下联系信息(邮箱、微信、qq都可),我们直接从kafka的学习开始,这是初级阶段,这篇博主主要讲述kafka的命令行操作。1.topic的增删改查创建主题:切换到kafka的相关目录,进行以下命令行操作bin/k

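- Kafka consumer throughput stress test: using the official tools

ezreal_pan

kafka tools, kafka, distributed systems

Background: In an earlier business scenario, we found that Kafka's actual consumption throughput was far below expectations. We used the kafka-go library and ran tests (see the article "kafka-go: performance testing"), but could not pin down the cause of the low throughput. We suspected my machine's network bandwidth or some unknown setting in the Kafka deployment. To locate the root cause, we decided to stress-test Kafka's consumption capacity. In the article "Kafka Docker image usage instructions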

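- Kafka's producer and consumer model

Java资深爱好者

kafka, distributed systems

Kafka's producer and consumer model is a message-passing pattern, described in detail below. 1. Producer. Definition: a producer creates messages and publishes them to a Kafka topic. Function: a producer can send a message to a specific partition or let Kafka choose the partition; it can also control message serialization and the partitioning strategy. How it works: a producer communicates with the Kafka cluster through the API Kafka provides and sends messages asynchronously to the specified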

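- Stage 1: Kafka fundamentals

AI航海家(Ethan)

distributed systems, kafka

Key points. Kafka's three core roles: Producer: pushes data to a Kafka topic; think of it as the originator of the data stream. Broker: a Kafka server node that stores the data stream; a Kafka cluster consists of multiple brokers. Consumer: reads and processes data from a Kafka topic; it can be a log-analysis service, a database server, and so on. Core concepts: Topic: Kafka's basic unit, similar to a database table, used to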

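- Now open source: loading data into Apache Cloudberry™ with Kafka FDW

databases, open-source software

Apache Cloudberry™ (Incubating), created by core developers of Greenplum Database, is a leading, mature open-source massively parallel processing (MPP) database. It is derived from the open-source version of Pivotal Greenplum Database® but uses a newer PostgreSQL kernel and offers more advanced enterprise features. Cloudberry can be used as a data warehouse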

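- RabbitMQ, RocketMQ, Kafka: a comparison of messaging models

Java架构设计

java, Java programmers, messaging models, programming languages, programmer life

Messaging models. The evolution of message queues. The message queue model: early message queues were designed around the "queue" data structure. A producer generates a message and enqueues it; a consumer receives a message, which dequeues it; the message container on the server is called the message queue. There may be more than one consumer, in which case the consumers compete: once one consumer has consumed a message, the others cannot get it. The publish-subscribe model: if one message needs to be consumed by multiple consumers at the same time, the queue above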
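The difference between the two models in the teaser above can be sketched in a few lines of Python. This is only an in-memory illustration: the class names `QueueModel` and `PubSubModel` are made up for this sketch and do not correspond to any RabbitMQ, RocketMQ, or Kafka API.

```python
from collections import deque


class QueueModel:
    """Queue model: each message is delivered to exactly one consumer."""

    def __init__(self):
        self.messages = deque()

    def publish(self, message):
        self.messages.append(message)

    def consume(self):
        # Dequeuing removes the message, so competing consumers never see it.
        return self.messages.popleft() if self.messages else None


class PubSubModel:
    """Publish-subscribe model: every subscriber receives its own copy."""

    def __init__(self):
        self.inboxes = {}

    def subscribe(self, name):
        self.inboxes[name] = deque()

    def publish(self, message):
        # Fan the message out to every subscriber's private inbox.
        for inbox in self.inboxes.values():
            inbox.append(message)

    def consume(self, name):
        inbox = self.inboxes[name]
        return inbox.popleft() if inbox else None
```

With `QueueModel`, a second `consume()` after a single `publish()` returns `None`; with `PubSubModel`, each subscriber can read the same message once.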

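- Exploring Kafka log files: a complete guide from data parsing to troubleshooting

磐基Stack专业服务团队

Kafka, kafka, distributed systems

# Author: 猎人. Contents: 1. Viewing basic information about a log file; 2. Index file sanity check (--index-sanity-check); 3. Dumping files (--max-message-size); 4. Offset decoding (--offsets-decoder); 5. Parsing log data (--transaction-log-decoder); 6. Querying specific data in a log file (--print-data-log); 7. Viewing the contents of an index file; 8. Viewing the ti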

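- A brief introduction to message queues

八二年的栗子

java

A message queue is an important component of distributed systems. Its general use case can be summed up simply: when you do not need the result immediately but do need to control concurrency, that is about when you need a message queue. Message queues mainly address application coupling, asynchronous processing, and traffic peak shaving. Widely used message queues include RabbitMQ, RocketMQ, ActiveMQ, Kafka, ZeroMQ, and MetaMq, and some databases such as Redis, Mysql, and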

- Dubbo

java

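Dubbo is a high-performance distributed service framework that provides several invocation strategies to optimize the performance and reliability of service calls. 1. Load-balancing strategies: Random: picks a service provider at random; suitable when weights are adjusted dynamically. RoundRobin: picks service providers in turn; suitable when requests are evenly distributed, but requests may accumulate. LeastActive: picks the provider with the fewest active calls, so that slow providers receive fewer requests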
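The three strategies named above can be sketched as follows. This is a simplified, unweighted illustration in plain Python, not Dubbo's implementation; `Provider`, `pick_random`, `make_round_robin`, and `pick_least_active` are hypothetical names invented for the sketch.

```python
import itertools
import random


class Provider:
    """A service provider with a counter of in-flight (active) calls."""

    def __init__(self, name):
        self.name = name
        self.active = 0


def pick_random(providers, rng=random):
    # Random: every provider is equally likely (weights are ignored here).
    return rng.choice(providers)


def make_round_robin(providers):
    # RoundRobin: hand out providers in a fixed rotating order.
    rotation = itertools.cycle(providers)
    return lambda: next(rotation)


def pick_least_active(providers):
    # LeastActive: prefer the provider with the fewest in-flight calls,
    # so a slow provider that holds calls longer is chosen less often.
    return min(providers, key=lambda p: p.active)
```

A caller would increment `active` before invoking a provider and decrement it when the call returns; that bookkeeping is what makes the least-active choice favor faster providers.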

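- Setting up Kafka

瀟湘夜雨-秋雨梧桐

kafka, distributed systems, zookeeper, big data, data warehouse

Contents: Preface; 1. What is Kafka?; 2. Use cases: 1. Real-time data processing, 2. Log aggregation and analysis, 3. Event-driven architecture; 3. Kafka setup procedure: 1. Confirm the environment, 2. List the machines, 3. Decide component versions, 4. Install the JDK, 5. Install Kafka (5.1 master installation, 5.2 slave_1 node installation, 5.3 slave_2 node installation, 5.4 configure environment variables, 5.5 start Kafka, 5.5 one-command Kafka cluster management script); Summary. Preface: With its excellent performance, high reliability, and scalability, Kafka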

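- Integrating Kafka with Spring Boot in detail

码农爱java

Kafka, Kafka, MQ, SpringBoot, microservices, middleware, 1024 Programmer's Day

Preface: The previous post covered Kafka's basic concepts and use cases; this one shows how to use Kafka in a Spring Boot project. Kafka series links: Introduction to Kafka and its core concepts; Integrating Kafka with Spring Boot. Adding the Kafka dependency: add the Kafka dependency to the project's pom.xml as follows: org.springframework.cloud, spring-cloud-starter-stream-kafka, 3.1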

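- Setting up a Kafka cluster on Linux

节点。csn

linux, kafka, operations

Contents: 1. Environment preparation; 2. File configuration; 3. Starting the cluster. 1. Environment preparation. 1. I prepared three servers: node1 192.168.72.132, node2 192.168.72.133, node3 192.168.72.134. 2. Install the JDK: see "Installing the JDK on Linux_openjdk1.8.0_345-CSDN blog". 3. Download the Kafka package: wget--nhttps://downloads.apache.org/kaf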

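- [Redis series] Installing and using Redis

m0_74825409

interviews, learning roadmap, Alibaba, redis, databases, caching

Welcome to my blog, glad to meet you here! I hope you find a relaxed atmosphere, pick up interesting content and knowledge, and feel free to share your thoughts. Recommended: kwan's homepage: keep learning, keep summarizing, improve together, never stop learning. Navigation: the 檀越剑指大厂 series: a full summary of core Java topics such as collections, JVM, concurrent programming, redis, kafka, Spring, microservices, and Netty. Common development tools series: a list of common development tools such as IDE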

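- Computing a windowed average in Flink and alerting when it exceeds a threshold

小的~~

flink, average, algorithms, big data

1. Requirements: compute statistics on temperature and humidity data collected by IoT devices; if the average over 5 minutes exceeds a threshold (30 °C), emit an alert. The window size and threshold must be editable in the admin console at any time and take effect in real time (the new configuration applies to the next window once the current one finishes). Device data is read from kafka and configuration data from mysql; an admin console adjusts the window and threshold. 2. Approach: use a two-stream join in flink, with the configuration data as a broadcast stream and the device data as a regular stream. 3. Implementation code: package cu.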
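The core of the requirement (a fixed-size window average compared against a threshold) can be illustrated without Flink. The sketch below is a plain-Python batch approximation: it does not model the broadcast configuration stream or real-time updates, and `window_alerts` and its parameters are hypothetical names chosen for this example.

```python
from collections import defaultdict


def window_alerts(readings, window_seconds=300, threshold=30.0):
    """Group (timestamp_seconds, temperature) pairs into tumbling windows
    and return (window_start, mean) for each window whose mean exceeds
    the threshold."""
    buckets = defaultdict(list)
    for timestamp, temperature in readings:
        # Align each reading to the start of its window.
        window_start = timestamp - timestamp % window_seconds
        buckets[window_start].append(temperature)

    alerts = []
    for window_start in sorted(buckets):
        temperatures = buckets[window_start]
        mean = sum(temperatures) / len(temperatures)
        if mean > threshold:
            alerts.append((window_start, mean))
    return alerts
```

In a streaming job the window size and threshold would come from the configuration stream and be swapped in at window boundaries; here they are simply function arguments.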

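- [Dynamic routing] Consolidating system web URL resources (backend implementation) [apisix implementation]

飞火流星02027

URL consolidation, apisix reverse proxy, apisix gateway, web resource consolidation with apisix, system URL resource consolidation, apisix routing by request parameter, apisix routing by request header, APISIX Dashboard

Requirements. Functional requirement: reverse proxy (description: apollo, the eureka console, apisix, sentinel, Prometheus, kibana, timetask, grafana, hbase, skywalking-ui, pinpoint, the cmak UI, kafka-map, nacos, gateway, elasticsearch, the oa-portal business application, and other web resources may only be reached through a limited number of proxy addresses); SSO is out of scope. Software quality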

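- Apache ZooKeeper, a distributed coordination service

slovess

distributed systems, apache, zookeeper

1. ZooKeeper overview. 1.1 Definition and positioning. Core positioning: a coordination service for distributed systems, providing strongly consistent configuration management, naming, distributed locks, and cluster management. Core model: a key-value store based on a tree of nodes (ZNodes), with a Watcher notification mechanism. Ecosystem role: a core dependency of ecosystems such as Hadoop and Kafka, and an infrastructure-level component of distributed systems. 1.2 Design goals. Strong consistency: data on all nodes is eventually consistent (based on the ZAB protocol). High availability: the cluster can serve requests as long as more than half of its nodes are alive. Ordering: globally unique, increasing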

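- Understanding Kafka in depth: how to guarantee exactly-once semantics

AI天才研究院

Python in practice, natural language processing, artificial intelligence, language models, programming practice, programming languages, architecture design

Author: 禅与计算机程序设计艺术. 1. Introduction. Kafka is a high-throughput, distributed, partitioned, multi-replica messaging system. It is very flexible to use and can serve as an alternative to Pulsar or RabbitMQ. But it also brings complexity and problems, such as exactly-once semantics. In essence, exactly-once means that as long as the data a consumer reads is not lost, it is fully processed once and is never processed again. Guaranteeing exactly-once semantics has always been something enterprise applications must consider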

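- [kafka series] Producers

漫步者TZ

kafka, kafka, databases, big data

Contents: Send flow: 1. Flow logic analysis (Phase 1: main-thread processing; Phase 2: asynchronous sending by the Sender thread; core design ideas), 2. Summary of key points in the flow. Important parameters: 1. Required core parameters, 2. Reliability parameters, 3. Performance-tuning parameters, 4. Advanced configuration, 5. Security configuration (optional), 6. Error handling and monitoring. Typical configuration example. Key caveats. Send flow. Serialization and partitioning: the Partitioner selects the target partition for a message (round-robin or hash by default), and after serialization the message is added to the RecordAccumulator buffer. Batch merging: Sende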

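- [kafka series] Brokers

漫步者TZ

kafka, databases, distributed systems, kafka

Contents: Flow analysis of how a broker receives producer messages and returns messages to consumers; Core flow for handling producer messages; Core flow for handling consumer messages; Summary of key points. Flow analysis of how a broker receives producer messages and returns messages to consumers. Core flow for handling producer messages. Receiving the request: the broker's SocketServer receives the ProduceRequest from the producer (based on the Reactor network model). Request parsing and validation: parse the request header (Topic, P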

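- [kafka series] How to choose a delivery semantic?

漫步者TZ

kafka, kafka, distributed systems, databases, big data

Contents: Business trade-offs; How to choose a delivery semantic?

Business trade-offs

| Dimension | At-Most-Once | At-Least-Once | Exactly-Once |
| --- | --- | --- | --- |
| Message loss risk | High | Low | None |
| Message duplication risk | None | High | None |
| Network overhead | Lowest (no retries) | Medium (possible retries) | Highest (transactions + coordination) |
| Use cases | Real-time data streams that tolerate loss | Log collection that must not lose data | Financial transactions, exact statistics |

How to choose a delivery semantic? At-Most-Once: prioritize performance and low latency and accept data loss (for example, real-time monitoring). At-Least-Once: prioritize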
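One common way to see where loss and duplication come from is the order of "commit the offset" and "process the message" on the consumer side. The simulation below is a sketch under that assumption; it is plain Python with no Kafka client involved, and `consume`, `commit_before_processing`, and `crash_at` are hypothetical names for this example.

```python
def consume(messages, commit_before_processing, crash_at):
    """Simulate one consumer that crashes once while handling the message
    at index crash_at, then restarts from the last committed offset.
    Returns the list of messages that were actually processed."""
    processed = []
    committed = 0      # next offset to read after a restart
    crashed = False
    while committed < len(messages):
        offset = committed
        if commit_before_processing:
            committed = offset + 1          # at-most-once: commit first
        if offset == crash_at and not crashed:
            crashed = True
            if not commit_before_processing:
                # Work was done, but the crash prevents the commit.
                processed.append(messages[offset])
            continue                        # restart from committed offset
        processed.append(messages[offset])
        if not commit_before_processing:
            committed = offset + 1          # at-least-once: commit last
    return processed
```

Committing first skips the message that was in flight during the crash (loss, no duplicates); committing last replays it after the restart (no loss, possible duplicates). Exactly-once needs additional machinery, such as the transactions mentioned in the table, which this sketch does not attempt to model.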

- Dynamically listening to Kafka topics

S Y H

microservice components, kafka, linq, distributed systems

Simple version: import org.springframework.beans.factory.annotation.Autowired; import org.springframework.kafka.core.ConsumerFactory; import org.springframework.kafka.listener.ConcurrentMessageListenerContainer; import

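- [kafka series] Exactly-once semantics

漫步者TZ

kafka, kafka, databases, big data, distributed systems

Contents: 1. Definition of exactly-once semantics; 2. How Kafka implements exactly-once; 3. End-to-end exactly-once example: scenario description, 3.1 producer configuration and code, 3.2 consumer configuration and code; 4. Failure scenarios and exactly-once guarantees: Scenario 1: the producer crashes after sending a message, Scenario 2: the consumer crashes after processing a message, Scenario 3: a broker crashes; 5. Key implementation details; 6. Summary. 1. Definition of exactly-once semantics. Exactly-Once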

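- [Golang learning journey] Go microservice architecture in practice (gRPC, Kafka, Docker, K8s)

程序员林北北

architecture, golang, learning, microservices, cloud native, kafka

Contents: 1. Preface: why build a microservice architecture in Go: 1.1 The rise and challenges of microservice architecture, 1.2 Why choose Go; 2. Introduction to Go: 2.1 Features and applications of Go, 2.2 The Go ecosystem; 3. gRPC in a microservice architecture: 3.1 What is gRPC?, 3.2 Implementing gRPC in Go. 1. Preface: why build a microservice architecture in Go. 1.1 The rise and challenges of microservice architecture. With the rapid development of internet technology, and especially the spread of cloud computing, microservice architecture has become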

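- zipkin notes

dzl84394

springboot, learning log, java, zipkin

Server installation: https://zipkin.io/pages/quickstart.html lists several installation methods. It can also store its data in cassandra, kafka, es, and other backends. On the server, download directly with curl -sSL https://zipkin.io/quickstart.sh | bash -s, which gives you zipkin.jar. Start it with: nohup /usr/local/jdk17/bin/java -jar zipkin.ja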

- org.apache.kafka.common.errors.TimeoutException

一张假钞

apache, kafka, distributed systems

Personal blog: org.apache.kafka.common.errors.TimeoutException | 一张假钞的真实世界. When writing data to a remote Kafka with kafka-console-producer.sh, the following error occurred: $ bin/kafka-console-producer.sh --broker-list 172.16.72.202:9092 --topic test This is a message [20

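- How to use Kafka's kafka-console-consumer.sh and kafka-console-producer.sh

WilsonShiiii

kafka, distributed systems

1. Comparing the two tools. Purpose: kafka-console-consumer.sh is a simple command-line consumer that prints messages consumed from a Kafka topic to the console; it suits debugging tasks such as checking whether a producer is sending messages correctly or inspecting message formats. kafka-consumer-perf-test.sh is designed for testing Kafka consumer performance: under specified conditions (message count, thread count, and so on) it measures metrics such as consumer throughput, which helps with performance evaluation, tuning, and capacity planning. Parameters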

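- How do RocketMQ and Kafka handle message backlog?

一个儒雅随和的男子

RocketMQ, rocketmq, kafka, distributed systems

Preface. Put simply, message backlog means the MQ holds a large amount of data that cannot be consumed quickly. The main cause is "more coming in than going out": production is too fast and consumption cannot keep up. With that in mind, we mainly describe how to avoid backlog through up-front development settings. We cover how two products, RocketMQ and Kafka, address it and how they differ; the differences come down to the different storage structures of RocketMQ and Kafka. The basic approach is still how to control production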

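- A quick chat about MQ: basic use of Kafka and RabbitMQ

天天向上杰

kafka, rabbitmq, distributed systems

(Note: there is a lot of content; read the contents first and pick what you need.) Usage examples for Kafka and RabbitMQ. Kafka usage example. Installation and startup: download the Kafka run scripts from the Apache Kafka website. After unpacking, start Zookeeper from the command line (Kafka depends on Zookeeper to run). Start the Kafka server process. Basic functionality: Producer: start a producer process and send messages to a given topic. Consumer: start a consumer process and receive from the given topic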

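- A quick chat about MQ: persistence strategies of Kafka, RabbitMQ, ActiveMQ, and RocketMQ

天天向上杰

kafka, rabbitmq, activemq, rocketmq, java

Below are the persistence strategies of the mainstream message queues (Kafka, RabbitMQ, ActiveMQ, RocketMQ) with real-world examples. 1. Kafka persistence strategy. Core mechanisms: Segmented log storage: each topic partition corresponds to one physical log (written sequentially). Segment policy: by default a new segment is created every 1 GB or 7 days (log.segment.bytes / log.roll.hours). Index files: .index (offset index) and .timeindex (timestamp ind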
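The segment policy quoted above (roll at 1 GB or 7 days) amounts to a simple size-or-age check. The following is a minimal sketch of that decision using the two limits from the teaser; `should_roll` and its parameter names are hypothetical, and Kafka's actual comparison logic may differ in its details.

```python
SEGMENT_BYTES = 1024 ** 3        # 1 GiB, the size limit quoted above
ROLL_SECONDS = 7 * 24 * 3600     # 7 days, the age limit quoted above


def should_roll(segment_size, segment_age_seconds, incoming_bytes,
                max_bytes=SEGMENT_BYTES, max_age=ROLL_SECONDS):
    """Return True when the active segment should be closed and a new
    one started before appending incoming_bytes."""
    would_overflow = segment_size + incoming_bytes > max_bytes
    too_old = segment_age_seconds >= max_age
    return would_overflow or too_old
```

Either condition alone triggers a roll, which is why a low-traffic partition still gets a new segment once the age limit is reached.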

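- What happens if an enum constructor throws an exception?

bylijinnan

java, enum, singleton

Let's start with implementing a singleton using an enum.

Why use an enum to implement a singleton?

This article (http://javarevisited.blogspot.sg/2012/07/why-enum-singleton-are-better-in-java.html) gives three reasons:

1. An enum singleton is simple and easy, taking only a few lines of code: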

public enum Singleton {

INSTANCE;

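- CMake tutorial

aigo

C++

Reposted from: http://xiang.lf.blog.163.com/blog/static/127733322201481114456136/

CMake is a cross-platform build tool that is much more convenient than writing Makefiles yourself.

Introduction: http://baike.baidu.com/view/1126160.htm

This document does not cover CMake's basic syntax; here is a good introductory tutorial: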

http:

- cvc-complex-type.2.3: Element 'beans' cannot have character

Cb123456

spring, Webgis

cvc-complex-type.2.3: Element 'beans' cannot have character

Line 33 in XML document from ServletContext resource [/WEB-INF/backend-servlet.xml] is i

- jQuery example: automatically loading content as the page scrolls

120153216

jquery

<script language="javascript">

$(function (){

var i = 4;$(window).bind("scroll", function (event){

// distance from the scrollbar to the top of the page; works in IE, Firefox, and Chrome

var top = document.documentElement.s

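- Converting database data into a dbs file

何必如此

sql, dbs

The 旗正 rule engine manages databases through its database configurator (DataBuilder). It supports Oracle and other mainstream databases, and the workflow is the same for all of them. The configurator is used to edit database schema information and manage table data, and it can execute SQL statements. Its main functions are as follows.

1) Generating table schema information from a database:

This mainly generates the database configuration file (.conf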

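- Configuring SQL IN clauses in IBATIS

357029540

ibatis

When configuring SQL queries in IBATIS, we inevitably need IN queries. There are two ways to pass the parameter for an IN query: String and List. They are used as follows:

1. String: define a String parameter userIds and pass it into the IBATIS SQL mapping file; the SQL statement can then be written like this: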

<select id="getForms" param

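- Spring3 MVC notes (part 1)

7454103

spring, mvc, bean, REST, JSF

Ever since the MVC concept was introduced: struts1.X, struts2.X, jsf ...

View-layer technologies keep coming one after another! I have used them all, and I would not call any of them the absolute best.

It depends on the business and the overall design.

Recently my company asked us to develop a new system.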

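- Scheduled execution with Timer and Spring Quartz

darkranger

spring, bean, work, quartz

Sometimes a task needs to be triggered on a schedule. In fact, since JDK 1.3 the Java SDK has provided this through java.util.Timer. A simple example shows how to use it: 1. First, we create a task; it must extend java.util.TimerTask: package com.test; import java.text.SimpleDateFormat; import java.util.Date;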

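- Big-endian/little-endian conversion: le32_to_cpu and cpu_to_le32

aijuans

C language

Big-endian/little-endian conversion, le32_to_cpu and cpu_to_le32; byte order

http://oss.org.cn/kernel-book/ldd3/ch11s04.html

Be careful not to make assumptions about byte order. PCs store multibyte values low byte first (little end first, hence little-endian), while some high-level platforms do it the other way (big-endian)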
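The idea behind `cpu_to_le32` and `le32_to_cpu` (convert between a host integer and a fixed little-endian 4-byte layout, regardless of the host's own byte order) can be illustrated in Python. These are analogues written for this sketch, not the kernel macros themselves.

```python
import sys


def cpu_to_le32(value):
    """Encode an unsigned 32-bit integer as 4 bytes, low byte first."""
    return (value & 0xFFFFFFFF).to_bytes(4, "little")


def le32_to_cpu(raw):
    """Decode 4 little-endian bytes into a host integer."""
    if len(raw) != 4:
        raise ValueError("expected exactly 4 bytes")
    return int.from_bytes(raw, "little")


if __name__ == "__main__":
    # sys.byteorder reports the host's native order ("little" or "big");
    # the two functions above give the same result on either kind of host.
    print(sys.byteorder, cpu_to_le32(0x12345678).hex())
```

Because the byte order is stated explicitly in both directions, the code makes no assumption about the machine it runs on, which is the point of the warning above.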

- Nginx load-balancing configuration explained with examples

avords

Load balancing is something every high-traffic website needs. Below I show how to configure load balancing on an Nginx server; I hope it helps anyone who needs it.

Load balancing

First, a quick look at what load balancing is

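- Random ramblings

houxinyou

frameworks, agile development, software testing

For a long time people have been studying frameworks, development methods, software engineering, and a lot more! I, for one, cannot make sense of it all.

These past couple of days I have seen many people studying the agile model and the waterfall model, and I have not really figured those out either.

But it feels much like a programming language:

waterfall is sequence, agile is a loop.

Waterfall walks through requirements, analysis, design, coding, and testing one step at a time. Agile loops over modules, or iterations, and each loop likewise walks through requirements, analysis, design, coding, and testing one step at a time.

Software development can also be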

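- The value of appreciation: a short story

bijian1013

effective coaching, appreciation, the value of appreciation

At her first parent-teacher meeting, the kindergarten teacher said: "Your son has ADHD; he cannot sit on his stool for even three minutes. You had better take him to a hospital." On the way home, her son asked what the teacher had said. Her nose stung and she nearly cried, because of the 30 children in the class he alone had done the worst, and he alone drew the teacher's disdain. Yet she told her son: "The teacher praised you. She said you used to be unable to sit on your stool for one minute and now you can sit for three. The other mothers all envy me, because in the whole class only you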

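- Resolving package conflicts

bingyingao

eclipse, maven, exclusions, package conflicts

Package conflicts are a very common problem during development.

The symptoms are:

1. Eclipse can clearly resolve a class, yet at runtime a class-not-found error is reported.

2. Eclipse can clearly resolve a method of a class, yet at runtime a method-not-found error is reported.

3. The class and method both exist and have compiled correctly into .class files, and everything runs fine locally, but once deployed to the test or production environment the following exception is thrown: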

java.lang.NoClassDefFoundError: Could not in

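- [Spark 75] Integrating Spark Streaming with Flume-NG, part 3: hooking up log4j

bit1129

Stream

First, some preamble:

In practice, business systems mostly write their logs to files with Log4j. The crux of the problem is that business log formats are chaotic, which makes statistical analysis of the log files extremely difficult, or basically impossible. Everyone has different logging habits, so log lines come in all sorts of formats, and in the end you can only grep for specific keywords to narrow things down. In a cluster, though, you have to grep and analyze on every machine; you can imagine how inefficient that is, and a lot of good time is wasted on it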

- sudoku solver in Haskell

bookjovi

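sudoku, haskell

I have not had much to do these past few days and wanted to write something practical in a functional language. Things like fib and prime are not worth mentioning (they are one-liners), so what to write? While browsing the web I came across sudoku. I have known about sudoku for over a decade, and as a student I thought about writing an intelligent solver in C/Java, but it never got finished, mainly because doing it in C/Java is a lot of trouble.

Nowadays when I write programs I have a habit of mind: I always want the program more compact, more refined, with the fewest lines of code, so now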

- java apache ftpClient

bro_feng

java

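I recently used Apache's ftpclient to implement FTP downloads and ran into a few problems, summarized below.

1. Uploads block: in a series of uploads one of them blocks, or storeFile returns false. Some research showed that FTP has an active mode and a passive mode. Switching the transfer mode to passive with ftp.enterLocalPassiveMode(); fixed it.

After reading introductions online, I am still fairly vague about the difference between active and passive mode, and I do not really understand passive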

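- Reading 《研磨设计模式》: code notes on the Factory Method pattern

bylijinnan

java, design patterns

Note: this post is only for my own reference and understanding; for detailed analysis and source code, please visit the original author's blog http://chjavach.iteye.com/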

package design.pattern;

/*

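* Factory Method pattern: defer instantiation of a class to subclasses

* Once, at work, I ended up using the Factory Method pattern without realizing it (Template Method pattern would be the more accurate name. 2012-10-29):

* There are many different products, and they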

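- Notes from conducting an interview

chenyu19891124

recruiting

Perhaps, whatever you grow into on a platform, it really has to come from your own effort. Given a good platform to show yourself, you should work hard. Today was the first time I interviewed someone on my own; I was a little nervous and stumbled over my words. Filling in the interview evaluation form afterwards was really hard, especially describing the candidate's situation.

Today's candidate was a former colleague of a current colleague: this colleague is about to leave and referred a former colleague to interview. The candidate interviewed for configuration management. At first, judging from the résumé, they seemed well suited to it, but in today's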

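- Fire Workflow 1.0 final is finally released

comsci

work, workflow, Google

Fire Workflow is another open-source workflow engine from China; its author is the well-known 非也, haha....

Official website: http://www.fireflow.org

Thanks to everyone's efforts, Fire Workflow 1.0 final has finally been released.

Main changes in the final release:

1. Added the IWorkItem.jumpToEx(...) method, removing the restriction that the current activity and the target activity must be on the same execution path, which makes free-flow routing even freer

2. Added IT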

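- Passing arguments to a Python script

daizj

python, script arguments

If you want to pass arguments to a Python script, what are Python's equivalents of argc and argv (C's command-line arguments)?

Module needed: sys

Number of arguments (including the script name): len(sys.argv)

Script name: sys.argv[0]

Argument 1: sys.argv[1]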

Argument 2: sys.argv[
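The list above can be tried out with a short script. This is a minimal sketch; `describe_args` is a hypothetical helper introduced only for the example.

```python
import sys


def describe_args(argv):
    """Split an argv-style list into the pieces listed above."""
    return {
        "count": len(argv),     # analogous to C's argc (script name included)
        "script": argv[0],      # the script name
        "args": argv[1:],       # the actual arguments, in order
    }


if __name__ == "__main__":
    print(describe_args(sys.argv))
```

Saved as, say, `show_args.py` and run as `python show_args.py a b`, the count is 3 because the script name itself occupies `sys.argv[0]`.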

- gpasswd, the command for managing user groups

dongwei_6688

passwd

NAME: gpasswd - administer the /etc/group file

SYNOPSIS:

gpasswd group

gpasswd -a user group

gpasswd -d user group

gpasswd -R group

gpasswd -r group

gpasswd [-A user,...] [-M user,...] g

- Course notes on Professor 郝斌's data structures lectures

dcj3sjt126com

data structures and algorithms


- yii2 cgridview: adding checkboxes for bulk actions

dcj3sjt126com

GridView

Page code

<?=Html::beginForm(['controller/bulk'],'post');?>

<?=Html::dropDownList('action','',[''=>'Mark selected as: ','c'=>'Confirmed','nc'=>'No Confirmed'],['class'=>'dropdown',])

- linux mysql

fypop

linux

enquiry mysql version in centos linux

yum list installed | grep mysql

yum -y remove mysql-libs.x86_64

enquiry mysql version in yum repository: yum list | grep mysql or yum -y list mysql*

install mysq

- Scramble String

hcx2013

String

Given a string s1, we may represent it as a binary tree by partitioning it to two non-empty substrings recursively.

Below is one possible representation of s1 = "great":

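- Index post for the 跟我学Shiro series

jinnianshilongnian

跟我学shiro

After about three months, the 《跟我学Shiro》 tutorial series is complete, and there is nothing more to add for now, so I have generated a PDF version for download. My project is busy lately, so I have no time to answer questions and cannot reply for the time being; my apologies. You can join the groups (334194438/348194195) to discuss questions together.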


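- Rotating nginx logs and collecting them with flume-ng

liyonghui160com

nginx log files have no built-in rotate feature. If you do nothing, the log files keep growing; fortunately we can write an nginx log-rotation script to rotate them automatically. The first step is to rename the log file. There is no need to worry that nginx will lose logs because it cannot find the file after the rename: until you reopen a log file under the original name, nginx keeps writing to the renamed file, because Linux locates a file by its file descriptor rather than its file name. The second step is to send the nginx master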
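The claim about the first step (a process keeps writing to a renamed file because it holds a file descriptor, not a name) can be checked with a small experiment. The sketch below assumes a POSIX system such as Linux; `write_across_rename` and the file names are made up for the demonstration and no nginx process is involved.

```python
import os
import tempfile


def write_across_rename(directory):
    """Write to a file, rename it while it is still open, keep writing,
    and report where the data ended up."""
    original = os.path.join(directory, "access.log")
    rotated = os.path.join(directory, "access.log.1")
    with open(original, "w") as log:
        log.write("before rename\n")
        log.flush()
        os.rename(original, rotated)     # step one of the rotation
        log.write("after rename\n")      # same descriptor, new name
    with open(rotated) as rotated_file:
        lines = rotated_file.read().splitlines()
    return lines, os.path.exists(original)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as directory:
        print(write_across_rename(directory))
```

Both lines land in the renamed file and no file reappears under the original name, which is why a rotation script needs a further step before the server starts writing to a fresh log.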

- Fixing Oracle deadlocks

pda158

oracle

select p.spid,c.object_name,b.session_id,b.oracle_username,b.os_user_name from v$process p,v$session a, v$locked_object b,all_objects c where p.addr=a.paddr and a.process=b.process and c.object_id=b.

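- Sorting a List in Java

shiguanghui

list, sorting

The List implementations defined in the Java Collections Framework include Vector, ArrayList, and LinkedList. These collections provide indexed access to groups of objects and support adding and removing elements. However, they have no built-in support for sorting elements. You can sort the elements of a List with the sort() method of the java.util.Collections class. You can pass the method either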

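- Servlets: single instance, multiple threads

utopialxw

singleton, multithreading, servlet

Reposted from http://www.cnblogs.com/yjhrem/articles/3160864.html

and http://blog.chinaunix.net/uid-7374279-id-3687149.html

Servlet: single instance, multiple threads

How does a Servlet handle concurrent requests? By default the Servlet container handles multiple requests with a single instance and multiple threads: 1. When the web server starts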