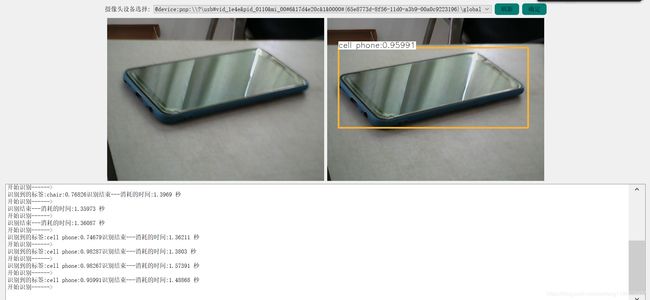

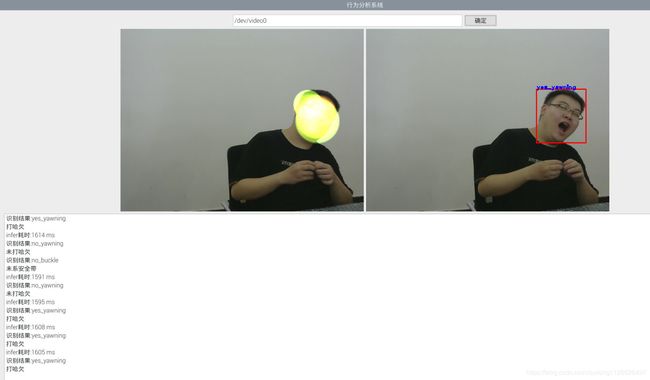

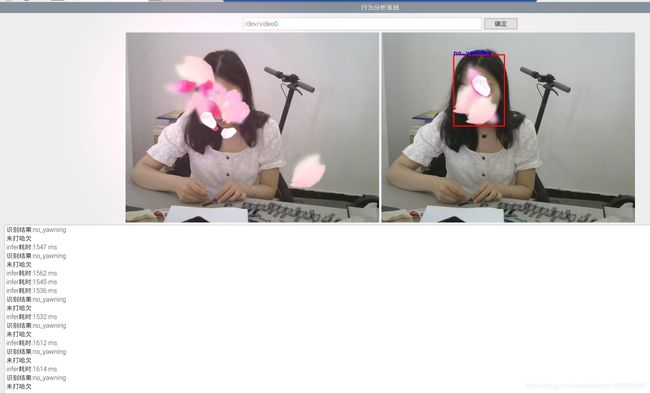

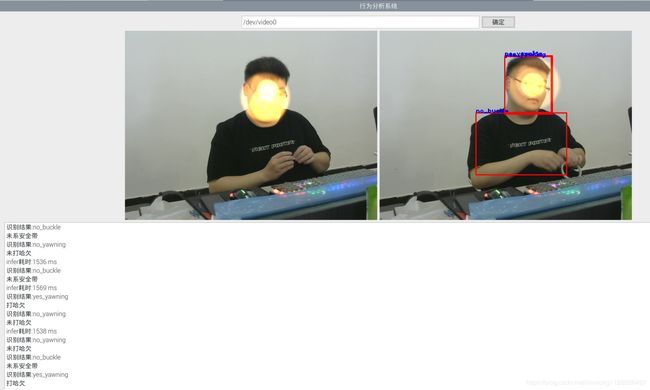

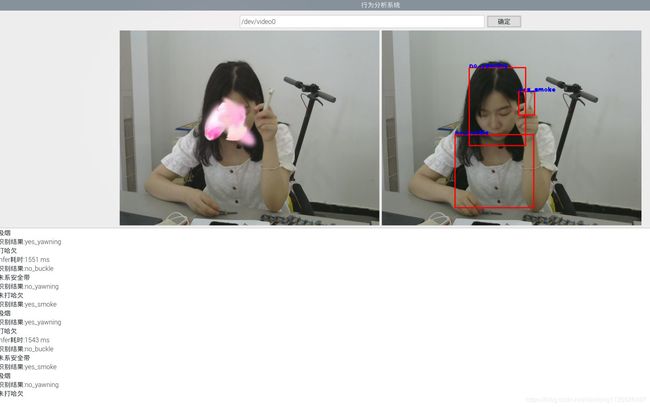

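Deep Learning: Driver Behavior Analysis

1. Features and Environment

Program overview: the models are trained with YOLO and run through OpenCV to detect yawning, phone use, smoking, seat-belt use, and mask wearing.

Tested operating systems: Windows, Linux, 32-bit embedded Linux, and 64-bit embedded Linux.

Tested hardware: ordinary laptops (i3, i5, i7), RK3399, and Raspberry Pi 4B.

YOLO setup guide: https://pjreddie.com/darknet/yolo/

darknet framework installation guide: https://pjreddie.com/darknet/install/

2. OpenCV Calling Code

2.1 Header file (.h)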

#ifndef SDK_THREAD_H

#define SDK_THREAD_H

#include <QThread>

#include <QImage>

#include "opencv2/core/core.hpp"

#include "opencv2/core/core_c.h"

#include "opencv2/objdetect.hpp"

#include "opencv2/highgui.hpp"

#include "opencv2/imgproc.hpp"

#include <opencv2/dnn.hpp>

#include <fstream>

#include <string>

#include <vector>

#include <cstring>

#include <cmath>

#include <algorithm>

using namespace cv;

using namespace std;

using namespace dnn;

// Detection thread: runs YOLO inference on incoming frames

class SDK_Thread: public QThread

{

Q_OBJECT

public:

void postprocess(Mat& frame, const vector<Mat>& outs, float confThreshold, float nmsThreshold);

void drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat& frame);

vector<String> getOutputsNames(Net& net);

QImage Mat2QImage(const Mat& mat);

Mat QImage2cvMat(QImage image);

protected:

void run();

signals:

void LogSend(QString text);

void VideoDataOutput(QImage); // output signal carrying the annotated frame

};

extern QImage save_image;

extern bool sdk_run_flag;

extern string names_file;

extern String model_def;

extern String weights;

#endif // SDK_THREAD_H

2.2 Source file (.cpp)

#include "sdk_thread.h"

QImage save_image; // image to run behavior analysis on

bool sdk_run_flag=true;

Mat SDK_Thread::QImage2cvMat(QImage image)

{

Mat mat;

switch(image.format())

{

case QImage::Format_ARGB32:

case QImage::Format_RGB32:

case QImage::Format_ARGB32_Premultiplied:

mat = Mat(image.height(), image.width(), CV_8UC4, (void*)image.constBits(), image.bytesPerLine());

break;

case QImage::Format_RGB888:

mat = Mat(image.height(), image.width(), CV_8UC3, (void*)image.constBits(), image.bytesPerLine());

cvtColor(mat, mat, COLOR_BGR2RGB);

break;

case QImage::Format_Indexed8:

mat = Mat(image.height(), image.width(), CV_8UC1, (void*)image.constBits(), image.bytesPerLine());

break;

}

return mat;

}

QImage SDK_Thread::Mat2QImage(const Mat& mat)

{

// 8-bits unsigned, NO. OF CHANNELS = 1

if(mat.type() == CV_8UC1)

{

QImage image(mat.cols, mat.rows, QImage::Format_Indexed8);

// Set the color table (used to translate colour indexes to qRgb values)

image.setColorCount(256);

for(int i = 0; i < 256; i++)

{

image.setColor(i, qRgb(i, i, i));

}

// Copy input Mat

uchar *pSrc = mat.data;

for(int row = 0; row < mat.rows; row ++)

{

uchar *pDest = image.scanLine(row);

memcpy(pDest, pSrc, mat.cols);

pSrc += mat.step;

}

return image;

}

// 8-bits unsigned, NO. OF CHANNELS = 3

else if(mat.type() == CV_8UC3)

{

// Copy input Mat

const uchar *pSrc = (const uchar*)mat.data;

// Create QImage with same dimensions as input Mat

QImage image(pSrc, mat.cols, mat.rows, mat.step, QImage::Format_RGB888);

return image.rgbSwapped();

}

else if(mat.type() == CV_8UC4)

{

// Copy input Mat

const uchar *pSrc = (const uchar*)mat.data;

// Create QImage with same dimensions as input Mat

QImage image(pSrc, mat.cols, mat.rows, mat.step, QImage::Format_ARGB32);

return image.copy();

}

else

{

return QImage();

}

}
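The single-channel branch of Mat2QImage copies the image one row at a time because the two buffers can have different row strides: `mat.step` is the source row length in bytes, while QImage aligns each scan line to a 32-bit boundary. The following is a minimal sketch of that idea in plain C++ with no Qt or OpenCV dependency. The names `copyRows` and `alignedStride` are hypothetical helpers introduced only for illustration.

```cpp
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical helper: copy `rows` rows of `rowBytes` payload bytes each from a
// source buffer with stride `srcStep` into a destination with stride `dstStep`.
// Padding bytes at the end of each destination row are left untouched.
void copyRows(const unsigned char* src, std::size_t srcStep,
              unsigned char* dst, std::size_t dstStep,
              std::size_t rows, std::size_t rowBytes)
{
    for (std::size_t r = 0; r < rows; ++r) {
        std::memcpy(dst + r * dstStep, src + r * srcStep, rowBytes);
    }
}

// Hypothetical helper: round a row length up to a multiple of 4 bytes,
// which is the scan-line alignment assumed here for the destination.
std::size_t alignedStride(std::size_t rowBytes)
{
    return (rowBytes + 3) / 4 * 4;
}
```

For a 5-pixel-wide grayscale image the source rows are 5 bytes apart but the destination rows are 8 bytes apart, so one `memcpy` over the whole buffer would shear the image. Copying per row avoids that.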

vector<string> classes;

vector<String> SDK_Thread::getOutputsNames(Net& net)

{

static vector<String> names;

if (names.empty())

{

//Get the indices of the output layers, i.e. the layers with unconnected outputs

vector<int> outLayers = net.getUnconnectedOutLayers();

//get the names of all the layers in the network

vector<String> layersNames = net.getLayerNames();

// Get the names of the output layers in names

names.resize(outLayers.size());

for (size_t i = 0; i < outLayers.size(); ++i)

names[i] = layersNames[outLayers[i] - 1];

}

return names;

}
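The `- 1` in getOutputsNames is easy to overlook: the ids returned by `getUnconnectedOutLayers()` are offset by one relative to the list returned by `getLayerNames()`. Here is a small sketch of the same lookup using only standard containers. `outputNames` and the layer names in the usage note are hypothetical, and the bounds check is an addition that the original function does not perform.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for the lookup in getOutputsNames():
// `layerNames` plays the role of net.getLayerNames() and `outLayerIds`
// the role of net.getUnconnectedOutLayers(), whose ids start at 1.
std::vector<std::string> outputNames(const std::vector<std::string>& layerNames,
                                     const std::vector<int>& outLayerIds)
{
    std::vector<std::string> names;
    names.reserve(outLayerIds.size());
    for (int id : outLayerIds) {
        // Skip ids that fall outside the name list (extra safety, an assumption).
        if (id < 1 || static_cast<std::size_t>(id) > layerNames.size()) continue;
        names.push_back(layerNames[static_cast<std::size_t>(id) - 1]);
    }
    return names;
}
```

With made-up layer names `{"conv_0", "yolo_1", "conv_2", "yolo_3"}` and ids `{2, 4}`, the helper returns the second and fourth names.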

void SDK_Thread::drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat& frame)

{

//Draw a rectangle displaying the bounding box

rectangle(frame, Point(left, top), Point(right, bottom), Scalar(255, 178, 50), 3);

//Get the label for the class name and its confidence

string label = format("%.5f", conf);

if (!classes.empty())

{

CV_Assert(classId < (int)classes.size());

label = classes[classId] + ":" + label;

}

LogSend(tr("Detected label: %1").arg(QString::fromStdString(label)));

//Display the label at the top of the bounding box

int baseLine;

Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

top = max(top, labelSize.height);

rectangle(frame, Point(left, top - round(1.5*labelSize.height)), Point(left + round(1.5*labelSize.width), top + baseLine), Scalar(255, 255, 255), FILLED);

putText(frame, label, Point(left, top), FONT_HERSHEY_SIMPLEX, 0.75, Scalar(0, 0, 0), 1);

}

void SDK_Thread::postprocess(Mat& frame, const vector<Mat>& outs, float confThreshold, float nmsThreshold)

{

vector<int> classIds;

vector<float> confidences;

vector<Rect> boxes;

for (size_t i = 0; i < outs.size(); ++i)

{

// Scan through all the bounding boxes output from the network and keep only the

// ones with high confidence scores. Assign the box's class label as the class

// with the highest score for the box.

float* data = (float*)outs[i].data;

for (int j = 0; j < outs[i].rows; ++j, data += outs[i].cols)

{

Mat scores = outs[i].row(j).colRange(5, outs[i].cols);

Point classIdPoint;

double confidence;

// Get the value and location of the maximum score

minMaxLoc(scores, 0, &confidence, 0, &classIdPoint);

if (confidence > confThreshold)

{

int centerX = (int)(data[0] * frame.cols);

int centerY = (int)(data[1] * frame.rows);

int width = (int)(data[2] * frame.cols);

int height = (int)(data[3] * frame.rows);

int left = centerX - width / 2;

int top = centerY - height / 2;

classIds.push_back(classIdPoint.x);

confidences.push_back((float)confidence);

boxes.push_back(Rect(left, top, width, height));

}

}

}

// Perform non maximum suppression to eliminate redundant overlapping boxes with

// lower confidences

vector<int> indices;

NMSBoxes(boxes, confidences, confThreshold, nmsThreshold, indices);

for (size_t i = 0; i < indices.size(); ++i)

{

int idx = indices[i];

Rect box = boxes[idx];

drawPred(classIds[idx], confidences[idx], box.x, box.y,

box.x + box.width, box.y + box.height, frame);

}

}
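postprocess does two things: it turns each normalized detection (center and size as fractions of the frame) into a pixel rectangle, and it passes the candidates to `NMSBoxes` to drop overlapping duplicates. The sketch below reproduces the box arithmetic and shows a simplified greedy non-maximum suppression in plain C++. It is meant to illustrate the idea and is not OpenCV's implementation. `Box`, `decodeBox`, `iou`, and `greedyNms` are hypothetical names.

```cpp
#include <algorithm>
#include <cstddef>
#include <numeric>
#include <vector>

struct Box { int x, y, w, h; };   // left, top, width, height in pixels

// Convert one normalized detection (center x/y, width, height in [0,1])
// into a pixel box, mirroring the arithmetic in postprocess().
Box decodeBox(float cx, float cy, float w, float h, int frameW, int frameH)
{
    int centerX = static_cast<int>(cx * frameW);
    int centerY = static_cast<int>(cy * frameH);
    int width   = static_cast<int>(w * frameW);
    int height  = static_cast<int>(h * frameH);
    return { centerX - width / 2, centerY - height / 2, width, height };
}

// Intersection over union of two boxes.
float iou(const Box& a, const Box& b)
{
    int x1 = std::max(a.x, b.x), y1 = std::max(a.y, b.y);
    int x2 = std::min(a.x + a.w, b.x + b.w), y2 = std::min(a.y + a.h, b.y + b.h);
    int inter = std::max(0, x2 - x1) * std::max(0, y2 - y1);
    int uni = a.w * a.h + b.w * b.h - inter;
    return uni > 0 ? static_cast<float>(inter) / uni : 0.0f;
}

// Simplified greedy NMS: visit boxes by descending score and keep a box only
// if it overlaps no already-kept box by more than nmsThreshold.
std::vector<int> greedyNms(const std::vector<Box>& boxes, const std::vector<float>& scores,
                           float scoreThreshold, float nmsThreshold)
{
    std::vector<int> order(boxes.size());
    std::iota(order.begin(), order.end(), 0);
    std::stable_sort(order.begin(), order.end(),
                     [&](int a, int b) { return scores[a] > scores[b]; });
    std::vector<int> keep;
    for (int i : order) {
        if (scores[i] <= scoreThreshold) continue;   // below confidence threshold
        bool suppressed = false;
        for (int k : keep) {
            if (iou(boxes[i], boxes[k]) > nmsThreshold) { suppressed = true; break; }
        }
        if (!suppressed) keep.push_back(i);
    }
    return keep;
}
```

With the thresholds used in run() (0.5 and 0.4), two heavily overlapping boxes collapse to the higher-scoring one, while a separate box elsewhere in the frame survives.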

string names_file;

String model_def;

String weights;

void SDK_Thread::run()

{

Mat frame,frame_src,blob;

int inpWidth, inpHeight;

//String names_file = "D:/linux-share-dir/yolo_v3/car/yolo.names";

//String model_def = "D:/linux-share-dir/yolo_v3/car/yolov3.cfg";

//String weights = "D:/linux-share-dir/yolo_v3/car/yolov3.weights";

double thresh = 0.5;

double nms_thresh = 0.4; //0.4 0.25

inpWidth = inpHeight = 320; //416 608

//read names

ifstream ifs(names_file.c_str());

string line;

while(getline(ifs, line))classes.push_back(line);

// Initialize the model

Net net = readNetFromDarknet(model_def, weights);

net.setPreferableBackend(DNN_BACKEND_OPENCV);

net.setPreferableTarget(DNN_TARGET_CPU);

QImage use_image;

LogSend("Behavior analysis started.\n");

//VideoCapture capture(0); // VideoCapture: OpenCV class that captures video for display

while(sdk_run_flag)

{

if(save_image.isNull()) { msleep(10); continue; } // wait briefly for a frame instead of spinning

LogSend(tr("Recognition started ------>\n"));

//capture >> frame;

// Get the source image

//use_image.load("D:/linux-share-dir/car_1.jpg");

frame_src=QImage2cvMat(save_image);

cvtColor(frame_src, frame, COLOR_RGB2BGR);

//frame=imread("D:/linux-share-dir/car_1.jpg");//

//frame=imread("D:/linux-share-dir/5.jpg");

//in_w=save_image.width();

//in_h=save_image.height();

blobFromImage(frame, blob, 1/255.0, Size(inpWidth, inpHeight), Scalar(0,0,0), true, false);

vector<Mat> mat_blob;

imagesFromBlob(blob, mat_blob);

//Sets the input to the network

net.setInput(blob);

// Run a forward pass to get the outputs of the output layers

vector<Mat> outs;

net.forward(outs, getOutputsNames(net));

postprocess(frame, outs, thresh, nms_thresh);

vector<double> layersTimes;

double freq = getTickFrequency() / 1000;

double t = net.getPerfProfile(layersTimes) / freq;

// string label = format("time : %.2f ms", t);

//putText(frame, label, Point(0, 15), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

LogSend(tr("Recognition finished --- elapsed time: %1 s\n").arg(t/1000));

// Get the processed image

use_image=Mat2QImage(frame);

use_image=use_image.rgbSwapped();

VideoDataOutput(use_image.copy());

}

}
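run() fills `classes` by reading the names file line by line, so the line index becomes the class id. Since the program is tested on both Windows and Linux, one detail worth sketching is line endings: a names file saved with CRLF and read on Linux leaves a trailing `\r` on every label. The helper below is a hypothetical variant that strips it. `readClassNames` and the labels in the test data are assumptions for illustration, not part of the original program.

```cpp
#include <istream>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper: read one class name per line from any input stream.
// Empty lines are kept so that the line index still matches the class id.
std::vector<std::string> readClassNames(std::istream& in)
{
    std::vector<std::string> names;
    std::string line;
    while (std::getline(in, line)) {
        // Drop a trailing carriage return left over from CRLF line endings.
        if (!line.empty() && line.back() == '\r') line.pop_back();
        names.push_back(line);
    }
    return names;
}
```

Because the helper returns a fresh vector, assigning its result to `classes` would also avoid appending the same names twice if the thread were started more than once.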