Ten Practical Scikit-learn Features

Author | Rebecca Vickery

Translator | 弯月, Editor | 屠敏

Cover image | Paid download by CSDN from Visual China

Produced by | CSDN (ID: CSDNnews)

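Scikit-learn is one of the most widely used Python machine learning libraries. It offers a simple, standardized interface for preprocessing data and for training, tuning, and evaluating models.

The project began as a Google Summer of Code project by David Cournapeau and was first publicly released in 2010. Since then, the library has grown into a rich ecosystem for developing machine learning models.

Over time, the project has gained many convenient features that make it easier and easier to use. In this article, I will introduce 10 of the most useful features that you may not be familiar with.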

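Scikit-learn has built-in datasets

The Scikit-learn API ships with a variety of toy and real-world datasets. Each can be loaded with a single line of code, which is very handy when you are learning or want to try out a new feature quickly.

You can also easily create synthetic datasets with the generators, such as make_regression() for regression data, make_blobs() for clustering data, and make_classification() for classification data.

All of the loading functions offer the option of returning the data already split into X (features) and y (target), so that the result can be used directly to train a model.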

# Toy regression dataset loading

# load_boston was removed in scikit-learn 1.2, so load_diabetes is used here instead

from sklearn.datasets import load_diabetes

X, y = load_diabetes(return_X_y=True)

# Synthetic regression dataset loading

from sklearn.datasets import make_regression

X, y = make_regression(n_samples=10000, noise=100, random_state=0)
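The other two generators mentioned above work the same way. The following is a minimal sketch; the sample counts, feature counts, and variable names are arbitrary choices made for illustration.

```python
from sklearn.datasets import make_blobs, make_classification

# Clustering data: 300 points in 2 dimensions, grouped around 3 centers
X_blobs, y_blobs = make_blobs(n_samples=300, centers=3, n_features=2, random_state=0)

# Classification data: 500 samples, 10 features, 4 of which are informative
X_clf, y_clf = make_classification(
    n_samples=500, n_features=10, n_informative=4, n_classes=2, random_state=0
)

print(X_blobs.shape, y_blobs.shape)
print(X_clf.shape, y_clf.shape)
```

Fixing random_state makes the generated data reproducible from run to run.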

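Easy access to third-party public datasets

If you want to access a wide range of public datasets directly from Scikit-learn, there is a convenient function that imports data straight from the openml.org website. The site hosts more than 21,000 datasets that can be used in machine learning projects.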

from sklearn.datasets import fetch_openml

X, y = fetch_openml("wine", version=1, as_frame=True, return_X_y=True)

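Ready-made classifiers for training baseline models

When developing a machine learning model, it is sensible to start by creating a baseline model. This is essentially a "dumb" model, for example one that always predicts the most frequent class. It gives you a benchmark for your "smart" model, so you can check that the latter really performs better than naive guessing.

Scikit-learn includes DummyClassifier() for classification tasks and DummyRegressor() for regression problems.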

from sklearn.dummy import DummyClassifier

# Fit the model on the wine dataset and return the model score

dummy_clf = DummyClassifier(strategy="most_frequent", random_state=0)

dummy_clf.fit(X, y)

dummy_clf.score(X, y)

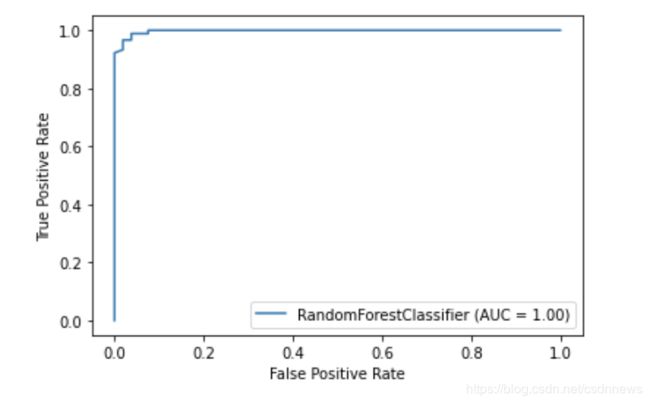
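DummyRegressor() plays the same role for regression. The sketch below compares it with a linear regression on synthetic data; the dataset parameters and variable names are assumptions made for illustration and do not come from the original article.

```python
from sklearn.datasets import make_regression
from sklearn.dummy import DummyRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

X_reg, y_reg = make_regression(n_samples=1000, n_features=10, noise=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X_reg, y_reg, random_state=0)

# Baseline: always predicts the mean of the training targets
dummy_reg = DummyRegressor(strategy="mean").fit(X_train, y_train)

# Candidate model that should beat the baseline
lin_reg = LinearRegression().fit(X_train, y_train)

dummy_r2 = dummy_reg.score(X_test, y_test)
linear_r2 = lin_reg.score(X_test, y_test)
print(f"baseline R^2: {dummy_r2:.3f}, linear regression R^2: {linear_r2:.3f}")
```

A constant prediction cannot achieve a positive R^2 on held-out data, so any real model should score clearly above the baseline.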

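Scikit-learn has its own plotting API

Scikit-learn has a built-in plotting API, so you can visualize model performance without a separate visualization library (the plots are drawn with matplotlib under the hood). It currently includes the following plotting tools: partial dependence plots, confusion matrices, precision-recall curves, and ROC curves.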

import matplotlib.pyplot as plt

from sklearn import metrics, model_selection

from sklearn.ensemble import RandomForestClassifier

from sklearn.datasets import load_breast_cancer

X, y = load_breast_cancer(return_X_y=True)

X_train, X_test, y_train, y_test = model_selection.train_test_split(X, y, random_state=0)

clf = RandomForestClassifier(random_state=0)

clf.fit(X_train, y_train)

# plot_roc_curve was removed in scikit-learn 1.2; RocCurveDisplay.from_estimator (1.0+) replaces it

metrics.RocCurveDisplay.from_estimator(clf, X_test, y_test)

plt.show()

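Scikit-learn has built-in feature selection methods

One technique for improving model performance is to train the model on only the best subset of features, or to remove redundant features. This process is known as feature selection.

Scikit-learn has a number of tools for feature selection. One example is SelectPercentile(), which scores every feature with a chosen statistical test and keeps the features whose scores fall in the top X percent.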

from sklearn.datasets import load_wine

from sklearn.feature_selection import SelectPercentile, chi2

X, y = load_wine(return_X_y=True)

# Keep the 60% of features with the highest chi-squared scores

X_transformed = SelectPercentile(chi2, percentile=60).fit_transform(X, y)
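To see which features survive the selection, the fitted selector can be inspected. The following is a small sketch on the same wine data; the variable names are chosen for illustration.

```python
from sklearn.datasets import load_wine
from sklearn.feature_selection import SelectPercentile, chi2

wine = load_wine()
X_wine, y_wine = wine.data, wine.target

# Fit the selector, then ask which columns it kept
selector = SelectPercentile(chi2, percentile=60).fit(X_wine, y_wine)
mask = selector.get_support()  # boolean array, True for kept features
X_selected = selector.transform(X_wine)

kept = [name for name, keep in zip(wine.feature_names, mask) if keep]
print(X_wine.shape, "->", X_selected.shape)
print(kept)
```

Note that chi2 requires non-negative feature values, which holds for this dataset.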

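Pipelines chain together all the steps of a machine learning workflow

Besides a wide range of machine learning algorithms, Scikit-learn offers many tools for preprocessing and transforming data. To make machine learning workflows simpler and more reproducible, Scikit-learn provides pipelines, which chain any number of preprocessing steps together with the model training stage.

A pipeline stores all the steps of the workflow as a single entity that can be used through its fit and predict methods. When you call fit on a pipeline object, the preprocessing steps and the model training are executed automatically, in order.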

from sklearn import model_selection

from sklearn.ensemble import RandomForestClassifier

from sklearn.datasets import load_breast_cancer

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

X_train, X_test, y_train, y_test = model_selection.train_test_split(X, y, random_state=0)

# Chain together scaling the variables with the model

pipe = Pipeline([('scaler', StandardScaler()), ('rf', RandomForestClassifier())])

pipe.fit(X_train, y_train)

pipe.score(X_test, y_test)

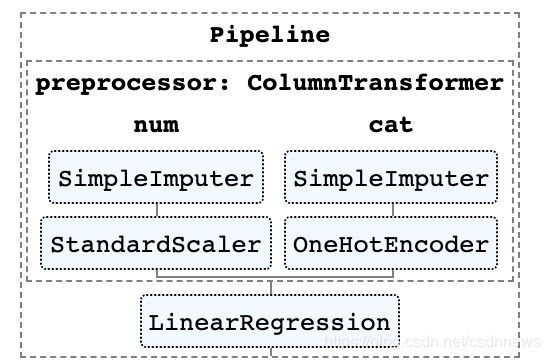
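Because a pipeline behaves like a single estimator, it can also be passed to utilities such as cross_val_score, in which case the scaler is re-fitted on the training part of every fold. A minimal sketch follows; the 5-fold setting and the variable names are assumptions made for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X_bc, y_bc = load_breast_cancer(return_X_y=True)

pipe = Pipeline([
    ("scaler", StandardScaler()),
    ("rf", RandomForestClassifier(random_state=0)),
])

# Each fold fits the scaler and the model on that fold's training data only
scores = cross_val_score(pipe, X_bc, y_bc, cv=5)
print("mean accuracy: %.3f" % scores.mean())

# Individual fitted steps stay accessible by name after fitting
pipe.fit(X_bc, y_bc)
print(pipe.named_steps["scaler"].mean_[:3])
```

Keeping preprocessing inside the pipeline is what prevents statistics from the validation fold leaking into training.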

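Use ColumnTransformer to apply different preprocessing to different features

In many datasets, different types of features need different preprocessing steps. For example, with a mix of categorical and numeric data you may want to convert the categorical features to numeric values through one-hot encoding and to scale the numeric variables.

Scikit-learn provides a class called ColumnTransformer, usable inside a pipeline, that lets you specify by index or by column name which preprocessing should be applied to which columns.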

from sklearn import model_selection

from sklearn.linear_model import LinearRegression

from sklearn.datasets import fetch_openml

from sklearn.compose import ColumnTransformer

from sklearn.pipeline import Pipeline

from sklearn.impute import SimpleImputer

from sklearn.preprocessing import StandardScaler, OneHotEncoder

# Load auto93 data set which contains both categorical and numeric features

X, y = fetch_openml("auto93", version=1, as_frame=True, return_X_y=True)

# Create lists of numeric and categorical features

numeric_features = X.select_dtypes(include=['int64', 'float64']).columns

# With as_frame=True, nominal columns are returned with the pandas 'category' dtype

categorical_features = X.select_dtypes(include=['object', 'category']).columns

X_train, X_test, y_train, y_test = model_selection.train_test_split(X, y, random_state=0)

# Create a numeric and categorical transformer to perform preprocessing steps

numeric_transformer = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='median')),

    ('scaler', StandardScaler())])

categorical_transformer = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='constant', fill_value='missing')),

    ('onehot', OneHotEncoder(handle_unknown='ignore'))])

# Use the ColumnTransformer to apply to the correct features

preprocessor = ColumnTransformer(

    transformers=[

        ('num', numeric_transformer, numeric_features),

        ('cat', categorical_transformer, categorical_features)])

# Append regressor to the preprocessor

lr = Pipeline(steps=[('preprocessor', preprocessor),

    ('regressor', LinearRegression())])

# Fit the complete pipeline

lr.fit(X_train, y_train)

print("model score: %.3f" % lr.score(X_test, y_test))
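To see what ColumnTransformer does without downloading anything, here is a minimal sketch on a tiny hand-made DataFrame. The column names and values are invented for illustration.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical toy data: two numeric columns and one categorical column
df = pd.DataFrame({
    "age": [25, 32, 47, 51],
    "income": [40.0, 52.0, 88.0, 61.0],
    "city": ["Paris", "Lyon", "Paris", "Nice"],
})

preprocessor = ColumnTransformer(
    transformers=[
        ("num", StandardScaler(), ["age", "income"]),
        ("cat", OneHotEncoder(handle_unknown="ignore"), ["city"]),
    ],
    sparse_threshold=0,  # always return a dense array
)

X_out = preprocessor.fit_transform(df)
print(X_out.shape)  # (4, 5): 2 scaled numeric columns + 3 one-hot columns
```

The output columns follow the order of the transformers list: the scaled numeric columns first, then one column per city.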

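Easily output an HTML representation of a pipeline

Pipelines can become quite complex, especially when working with real-world data. Scikit-learn therefore provides a very convenient way to render the steps of a pipeline as an HTML diagram.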

from sklearn import set_config

set_config(display='diagram')

lr

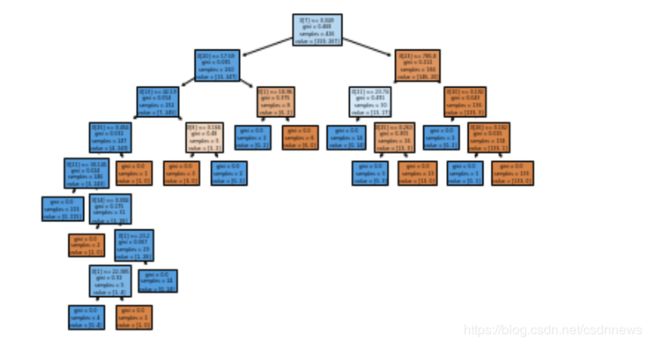
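Outside a notebook, the same diagram can be obtained as an HTML string. The sketch below uses estimator_html_repr from sklearn.utils on a small stand-in pipeline; the pipeline contents and the output file name are assumptions made for illustration.

```python
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.utils import estimator_html_repr

# A small stand-in pipeline; any estimator or pipeline works here
pipe = Pipeline([("scaler", StandardScaler()), ("regressor", LinearRegression())])

# Build the HTML diagram as a string
html = estimator_html_repr(pipe)

# Save it so it can be opened in a browser; "pipeline.html" is a hypothetical file name
with open("pipeline.html", "w", encoding="utf-8") as f:
    f.write(html)
```

This is useful for attaching a pipeline diagram to a report or for inspecting it from a plain script.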

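A plotting function for visualizing trees

You can use the plot_tree() function to draw a diagram of the decision steps (splits) in a decision tree model.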

import matplotlib.pyplot as plt

from sklearn import metrics, model_selection

from sklearn.tree import DecisionTreeClassifier, plot_tree

from sklearn.datasets import load_breast_cancer

X, y = load_breast_cancer(return_X_y=True)

X_train, X_test, y_train, y_test = model_selection.train_test_split(X, y, random_state=0)

clf = DecisionTreeClassifier()

clf.fit(X_train, y_train)

# Draw the fitted tree, coloring each node by its majority class

plot_tree(clf, filled=True)

plt.show()
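When a figure is inconvenient, for example in a terminal or a log file, the same tree can be printed as text with export_text(). This is a minimal sketch; max_depth=2 is an assumption chosen only to keep the output short.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.tree import DecisionTreeClassifier, export_text

data = load_breast_cancer()

# A shallow tree keeps the printed rules readable
clf = DecisionTreeClassifier(max_depth=2, random_state=0)
clf.fit(data.data, data.target)

# Render the split rules as indented text, using the real feature names
tree_text = export_text(clf, feature_names=list(data.feature_names))
print(tree_text)
```

Each indented line is one split condition, and the leaves report the predicted class.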

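Extend Scikit-learn with third-party libraries

Many third-party libraries can be used together with Scikit-learn and extend its functionality.

Two examples:

- The category-encoders library provides a wide range of preprocessing methods for categorical features: http://contrib.scikit-learn.org/category_encoders/

- The ELI5 package provides better model interpretability: https://eli5.readthedocs.io/en/latest/

Both packages can be used directly within Scikit-learn pipelines.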

# Pipeline using an encoder from category_encoders

# Note: the original example used ce.woe.WOEEncoder (Weight of Evidence), which expects a binary target;

# since the target here is continuous, ce.TargetEncoder is used instead

from sklearn import model_selection

from sklearn.linear_model import LinearRegression

from sklearn.datasets import fetch_openml

from sklearn.compose import ColumnTransformer

from sklearn.pipeline import Pipeline

from sklearn.impute import SimpleImputer

from sklearn.preprocessing import StandardScaler

import category_encoders as ce

# Load auto93 data set which contains both categorical and numeric features

X, y = fetch_openml("auto93", version=1, as_frame=True, return_X_y=True)

# Create lists of numeric and categorical features

numeric_features = X.select_dtypes(include=['int64', 'float64']).columns

# With as_frame=True, nominal columns are returned with the pandas 'category' dtype

categorical_features = X.select_dtypes(include=['object', 'category']).columns

X_train, X_test, y_train, y_test = model_selection.train_test_split(X, y, random_state=0)

# Create a numeric and categorical transformer to perform preprocessing steps

numeric_transformer = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='median')),

    ('scaler', StandardScaler())])

categorical_transformer = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='constant', fill_value='missing')),

    ('target', ce.TargetEncoder())])

# Use the ColumnTransformer to apply to the correct features

preprocessor = ColumnTransformer(

    transformers=[

        ('num', numeric_transformer, numeric_features),

        ('cat', categorical_transformer, categorical_features)])

# Append regressor to the preprocessor

lr = Pipeline(steps=[('preprocessor', preprocessor),

    ('regressor', LinearRegression())])

# Fit the complete pipeline

lr.fit(X_train, y_train)

print("model score: %.3f" % lr.score(X_test, y_test))

Thanks for reading!

Original article: https://towardsdatascience.com/10-things-you-didnt-know-about-scikit-learn-cccc94c50e4f

This article was translated by CSDN. Please credit the source when reposting.