Why do we need a Schema Registry?

References:

https://www.confluent.io/blog/how-i-learned-to-stop-worrying-and-love-the-schema-part-1/

https://www.confluent.io/blog/schema-registry-kafka-stream-processing-yes-virginia-you-really-need-one/

https://www.confluent.io/blog/stream-data-platform-2/

Use Avro as Your Data Format

We think Avro is the best choice for a number of reasons:

- It has a direct mapping to and from JSON

- It has a very compact format. The bulk of JSON, repeating every field name with every single record, is what makes JSON inefficient for high-volume usage.

- It is very fast.

- It has great bindings for a wide variety of programming languages so you can generate Java objects that make working with event data easier, but it does not require code generation so tools can be written generically for any data stream.

- It has a rich, extensible schema language defined in pure JSON

- It has the best notion of compatibility for evolving your data over time.

One of the critical features of Avro is the ability to define a schema for your data. For example, an event that represents the sale of a product might look like this:

    {
      "time": 1424849130111,
      "customer_id": 1234,
      "product_id": 5678,
      "quantity": 3,
      "payment": "mastercard"
    }

It might have a schema like this that defines these five fields:

    {
      "type": "record",
      "doc": "This event records the sale of a product",
      "name": "ProductSaleEvent",
      "fields": [
        {"name": "time", "type": "long", "doc": "The time of the purchase"},
        {"name": "customer_id", "type": "long", "doc": "The customer"},
        {"name": "product_id", "type": "long", "doc": "The product"},
        {"name": "quantity", "type": "int"},
        {"name": "payment",
         "type": {"type": "enum",
                  "name": "payment_types",
                  "symbols": ["cash", "mastercard", "visa"]},
         "doc": "The method of payment"}
      ]
    }
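To make the compactness claim concrete, here is a rough sketch that encodes the sale event by hand, following the Avro binary encoding rules as I understand them (int and long as zigzag varints, an enum as the index of its symbol, a record as its fields concatenated in schema order), and compares the size with compact JSON. The helper names `zigzag_varint` and `encode_sale` are made up for this note; a real application would use an Avro library instead.

```python
import json


def zigzag_varint(n: int) -> bytes:
    """Encode a signed 64-bit integer as a zigzag value in base-128 varint form."""
    z = (n << 1) ^ (n >> 63)  # zigzag: small magnitudes become small unsigned values
    out = bytearray()
    while True:
        low7 = z & 0x7F
        z >>= 7
        if z:
            out.append(low7 | 0x80)  # high bit set: more bytes follow
        else:
            out.append(low7)
            return bytes(out)


# Symbol order taken from the enum in the schema above.
PAYMENT_SYMBOLS = ["cash", "mastercard", "visa"]

event = {
    "time": 1424849130111,
    "customer_id": 1234,
    "product_id": 5678,
    "quantity": 3,
    "payment": "mastercard",
}


def encode_sale(e: dict) -> bytes:
    """Concatenate the fields in schema order; no field names are written."""
    return b"".join([
        zigzag_varint(e["time"]),
        zigzag_varint(e["customer_id"]),
        zigzag_varint(e["product_id"]),
        zigzag_varint(e["quantity"]),
        zigzag_varint(PAYMENT_SYMBOLS.index(e["payment"])),
    ])


json_bytes = json.dumps(event, separators=(",", ":")).encode("utf-8")
avro_bytes = encode_sale(event)
print("JSON bytes:", len(json_bytes), "Avro-style bytes:", len(avro_bytes))
```

The binary form carries only values, which is why the reader needs the schema to know which bytes belong to which field.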

Here is how these schemas will be put to use. You will associate a schema like this with each Kafka topic. You can think of the schema much like the schema of a relational database table, giving the requirements for data that is produced into the topic as well as giving instructions on how to interpret data read from the topic.

The schemas end up serving a number of critical purposes:

- They let the producers or consumers of data streams know which fields are needed in an event and what type each field is.

- They document the usage of the event and the meaning of each field in the “doc” fields.

- They protect downstream data consumers from malformed data, as only valid data will be permitted in the topic.
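As an illustration of the third point, the sketch below checks a record against the sale schema before it would be produced. It is a deliberately simplified stand-in, not the Avro library: it only understands `int`, `long`, and `enum` fields, and the names `SALE_SCHEMA` and `validate` are invented for this note.

```python
SALE_SCHEMA = {
    "type": "record",
    "name": "ProductSaleEvent",
    "fields": [
        {"name": "time", "type": "long"},
        {"name": "customer_id", "type": "long"},
        {"name": "product_id", "type": "long"},
        {"name": "quantity", "type": "int"},
        {"name": "payment",
         "type": {"type": "enum", "name": "payment_types",
                  "symbols": ["cash", "mastercard", "visa"]}},
    ],
}

# Value ranges of the two integer types handled by this sketch.
INT_RANGES = {"int": (-2**31, 2**31 - 1), "long": (-2**63, 2**63 - 1)}


def validate(record: dict, schema: dict) -> list:
    """Return a list of problems; an empty list means the record fits the schema."""
    errors = []
    known = set()
    for field in schema["fields"]:
        name, ftype = field["name"], field["type"]
        known.add(name)
        if name not in record:
            errors.append(f"missing field: {name}")
            continue
        value = record[name]
        if isinstance(ftype, dict) and ftype.get("type") == "enum":
            if value not in ftype["symbols"]:
                errors.append(f"{name}: {value!r} is not one of {ftype['symbols']}")
        elif ftype in INT_RANGES:
            lo, hi = INT_RANGES[ftype]
            # bool is a subclass of int in Python, so reject it explicitly.
            if isinstance(value, bool) or not isinstance(value, int) or not lo <= value <= hi:
                errors.append(f"{name}: {value!r} is not a valid {ftype}")
        else:
            errors.append(f"{name}: type {ftype!r} is not handled by this sketch")
    for name in record:
        if name not in known:
            errors.append(f"unexpected field: {name}")
    return errors


good = {"time": 1424849130111, "customer_id": 1234, "product_id": 5678,
        "quantity": 3, "payment": "mastercard"}
bad = dict(good, quantity="3", payment="bitcoin")

print(validate(good, SALE_SCHEMA))
print(validate(bad, SALE_SCHEMA))
```

A producer that refuses to send records with a non-empty error list is what keeps malformed data out of the topic.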

The Need For Schemas

Robustness

One of the primary advantages of this type of architecture where data is modeled as streams is that applications are decoupled.

Clarity and Semantics

Without schemas, the actual meaning of the data becomes obscure and is often misunderstood by different applications, because there is no canonical documentation for the meaning of the fields. One person interprets a field one way and populates it accordingly, and another interprets it differently.

Compatibility

Schemas also help solve one of the hardest problems in organization-wide data flow: modeling and handling change in data format. Schema definitions just capture a point in time, but your data needs to evolve with your business and with your code.

Schemas give a mechanism for reasoning about which format changes will be compatible (and hence won’t require reprocessing) and which won’t.
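One way to picture this kind of reasoning is a small check in the spirit of Avro's schema resolution: a reader schema can decode data written with an older schema if every field it expects is either present in the writer schema with a readable type or has a default. This is a simplified sketch under that assumption; the function names and the example schemas `v1`, `v2`, `v3` are hypothetical, and many real rules (unions, aliases, nested records) are left out.

```python
# Numeric promotions a reader may apply to the writer's type.
PROMOTIONS = {
    "int": {"long", "float", "double"},
    "long": {"float", "double"},
    "float": {"double"},
}


def type_readable(writer_type, reader_type) -> bool:
    """True if data written as writer_type can be read as reader_type."""
    if writer_type == reader_type:
        return True
    return (isinstance(writer_type, str) and isinstance(reader_type, str)
            and reader_type in PROMOTIONS.get(writer_type, set()))


def can_read(reader: dict, writer: dict) -> list:
    """Return reasons why `reader` cannot decode `writer` data (empty means compatible)."""
    writer_fields = {f["name"]: f for f in writer["fields"]}
    problems = []
    for field in reader["fields"]:
        old = writer_fields.get(field["name"])
        if old is None:
            # Field is new to the reader: old data can only be read if a default exists.
            if "default" not in field:
                problems.append(f"new field {field['name']!r} has no default")
        elif not type_readable(old["type"], field["type"]):
            problems.append(f"field {field['name']!r} changed type incompatibly")
    # Writer fields the reader does not know about are simply skipped.
    return problems


v1 = {"type": "record", "name": "ProductSaleEvent", "fields": [
    {"name": "time", "type": "long"},
    {"name": "quantity", "type": "int"},
]}
v2 = {"type": "record", "name": "ProductSaleEvent", "fields": [
    {"name": "time", "type": "long"},
    {"name": "quantity", "type": "long"},                       # int promoted to long
    {"name": "currency", "type": "string", "default": "USD"},   # added with a default
]}
v3 = {"type": "record", "name": "ProductSaleEvent", "fields": [
    {"name": "time", "type": "long"},
    {"name": "quantity", "type": "int"},
    {"name": "store_id", "type": "long"},                       # added without a default
]}

print(can_read(v2, v1))
print(can_read(v3, v1))
```

Here `v2` can still read everything written with `v1`, so no reprocessing is needed, while `v3` cannot.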

Schemas are a Conversation

However, data streams are different; they are a broadcast channel. Unlike an application’s database, the writer of the data is, almost by definition, not the reader. And worse, there are many readers, often in different parts of the organization. These two groups of people, the writers and the readers, need a concrete way to describe the data that will be exchanged between them, and schemas provide exactly this.

Schemas Eliminate The Manual Labor of Data Science

It is almost a truism that data science, which I am using as a short-hand here for “putting data to effective use”, is 80% parsing, validation, and low-level data munging.

KIP-69 - Kafka Schema Registry

Status: pending. This KIP will probably be cancelled, because Confluent already provides an equivalent solution:

https://github.com/confluentinc/schema-registry

http://docs.confluent.io/3.0.1/schema-registry/docs/index.html

Impressively, someone has even built a UI for it:

https://www.landoop.com/blog/2016/08/schema-registry-ui/

Using it is fairly simple: schemas are read and written over HTTP.
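A minimal sketch of what those HTTP calls look like, using only the Python standard library. The two paths (`POST /subjects/<subject>/versions` to register, `GET /schemas/ids/<id>` to fetch) and the content type come from the Schema Registry REST API as I remember it; the base URL `http://localhost:8081` and the subject name `sales-value` are assumptions for illustration. The requests are built separately from sending, so they can be inspected without a running registry.

```python
import json
import urllib.request

CONTENT_TYPE = "application/vnd.schemaregistry.v1+json"


def build_register_request(base_url: str, subject: str, schema: dict) -> urllib.request.Request:
    """Build the POST that registers a schema under a subject."""
    # The schema travels as a JSON string inside a JSON envelope.
    body = json.dumps({"schema": json.dumps(schema)}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/subjects/{subject}/versions",
        data=body,
        headers={"Content-Type": CONTENT_TYPE},
        method="POST",
    )


def build_lookup_request(base_url: str, schema_id: int) -> urllib.request.Request:
    """Build the GET that fetches a schema by its global id."""
    return urllib.request.Request(
        f"{base_url}/schemas/ids/{schema_id}",
        headers={"Accept": CONTENT_TYPE},
        method="GET",
    )


def send(request: urllib.request.Request) -> dict:
    """Send a request and decode the JSON response (needs a reachable registry)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    schema = {"type": "record", "name": "ProductSaleEvent",
              "fields": [{"name": "time", "type": "long"}]}
    req = build_register_request("http://localhost:8081", "sales-value", schema)
    print(req.get_method(), req.full_url)
    # With a registry running, send(req) should return the assigned id,
    # which can then be passed to build_lookup_request.
```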

Design

Reference: http://docs.confluent.io/3.0.1/schema-registry/docs/design.html

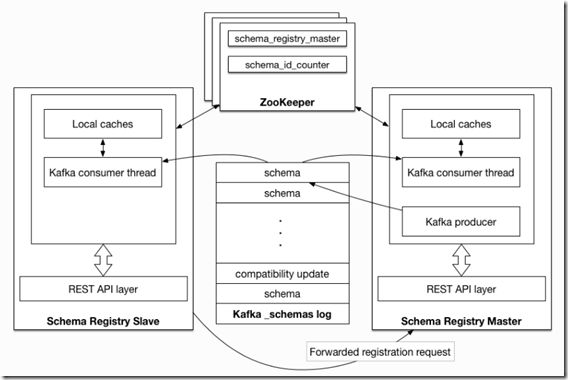

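It uses a master/standby architecture, with the master elected through ZooKeeper.

Each schema needs a unique ID, and ZooKeeper is also used to guarantee that these IDs keep increasing.

Schemas are stored in a special Kafka topic, _schemas, which has a single partition.

My understanding is that schema registration and lookup are served from local caches, and the Kafka topic can be replayed to rebuild those caches.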
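To check that understanding, here is a toy model of the idea, not the real implementation: a plain Python list stands in for the single-partition `_schemas` topic, lookups are served from in-memory dictionaries, and a fresh instance rebuilds its cache by replaying the log. The class name `ToyRegistry` is invented, and a simple counter replaces the ZooKeeper-backed ID allocation.

```python
import json


class ToyRegistry:
    """In-memory schema cache backed by an append-only log."""

    def __init__(self, log: list):
        self.log = log            # stand-in for the _schemas topic
        self.by_id = {}           # id -> canonical schema string
        self.id_by_schema = {}    # canonical schema string -> id
        for entry in log:         # replay the log to rebuild the cache
            self._apply(entry)

    def _apply(self, entry: dict) -> None:
        self.by_id[entry["id"]] = entry["schema"]
        self.id_by_schema[entry["schema"]] = entry["id"]

    def register(self, schema: dict) -> int:
        canonical = json.dumps(schema, sort_keys=True)
        existing = self.id_by_schema.get(canonical)
        if existing is not None:
            return existing       # already registered: reuse the id
        # Assumption: a local counter instead of ZooKeeper-coordinated ids.
        new_id = max(self.by_id, default=0) + 1
        entry = {"id": new_id, "schema": canonical}
        self.log.append(entry)    # write to the log first, then update the cache
        self._apply(entry)
        return new_id

    def lookup(self, schema_id: int) -> dict:
        return json.loads(self.by_id[schema_id])


log = []
primary = ToyRegistry(log)
sale_id = primary.register({"type": "record", "name": "ProductSaleEvent", "fields": []})
click_id = primary.register({"type": "record", "name": "ClickEvent", "fields": []})

# A restarted (or standby) instance sees the same state after replaying the log.
restarted = ToyRegistry(log)
print(restarted.lookup(click_id)["name"])
```

With a single partition, every instance replays the entries in the same order, which is presumably why the topic is not partitioned further.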