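- Python crawler project (12): Scraping the charts of major music platforms and analyzing music genre trends

人工智能_SYBH

Crawler (free preview), 2025 Crawler Handbook: 100 Hands-On Articles from Beginner to Expert, python, crawler, programming language, python crawler projects, python crawler

Contents: 1. Project overview; 2. Tools and techniques; 3. Scraping music platform chart data; 3.1 Scraping the NetEase Cloud Music charts with requests and BeautifulSoup; 3.2 Scraping the QQ Music charts; 4. Data processing; 4.1 Merging the data; 5. Analyzing music genre trends; 5.1 Matching genres by keyword; 6. Data visualization; 6.1 Plotting the genre distribution; 6.2 Plotting the trend over time; 7. Summary. Scraping the charts of major music platforms and analyzing genre trends is an interesting and worthwhile project. We can carry it out in the following steps: 1. Project overview. This project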
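The outline above mentions matching genres by keyword (step 5.1). The excerpt does not show the article's code, so the following is only a minimal sketch of the idea; the keyword table and the function names `guess_genre` and `genre_counts` are hypothetical.

```python
from collections import Counter

# Hypothetical keyword table; a real project would tune these lists.
GENRE_KEYWORDS = {
    "rock": ["rock", "摇滚"],
    "hip-hop": ["rap", "hip hop", "说唱"],
    "folk": ["folk", "民谣"],
}


def guess_genre(text):
    """Return the first genre whose keyword occurs in the text, else 'unknown'."""
    lowered = text.lower()
    for genre, keywords in GENRE_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return genre
    return "unknown"


def genre_counts(titles):
    """Count how many titles fall into each guessed genre."""
    return Counter(guess_genre(title) for title in titles)
```

Plain substring matching is crude (for example, "rap" also matches "wrap"), so treat the counts as a rough signal rather than a classification.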

- pycharm2018

qq_35581867

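Installation guide

Because of a company requirement, I had to scrape information such as recently released films, train numbers, flight numbers, airports, and stations, so I needed to build a crawler project. Java can do crawling too, but it is not as convenient as Python, so I started learning Python. To do a good job, one must first sharpen one's tools. Here I offer three activation methods: license-server activation (suitable for beginners, done in one step, but the server is easily blocked); activation-code activation (suitable for beginners, works on Windows, Mac, and Linux with no other side effects, recommended); crack-patch activation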

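- [Python crawler] Scraping public transport stop data

Anchenry

Python crawler, python, beautifulsoup

First, a look at the libraries the bus-stop scraper imports. requests: a library that sends HTTP requests to web pages and receives the responses. BeautifulSoup: a library for parsing HTML and XML documents that simplifies page parsing and information extraction. json: a library for handling JSON data. xlwt: a library for writing data to Excel files. Coordin_transformlat: a custom coordinate-conversion library. In this crawler project it is used to convert what Amap (高德地图) provides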

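- Building a Python crawler project from scratch: from requirements analysis to deployment

西攻城狮北

python, crawler, hands-on case studies

I. Project overview; II. Requirements analysis; III. Setting up the development environment; IV. Implementation: 1. Crawler basics, 2. Data parsing and storage, 3. Dealing with anti-crawler mechanisms, 4. Multi-page crawling; V. Deployment and running: 1. Scheduled tasks, 2. Cloud server deployment; VI. Troubleshooting common problems; VII. Summary. With the rapid growth of the internet, obtaining information has become an indispensable part of daily life and work. Collecting information by hand, however, is inefficient, hard to keep accurate, and cannot meet large-scale data needs. Python crawler technology emerged in response: it can automatically gather large amounts of data from the internet, for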

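- [Python Crawler (94)] A crawler survival guide: identifying risks and response strategies

奔跑吧邓邓子

Python crawler, python, crawler, programming language

About the [Python Crawler] column: this column is a comprehensive treatment of Python crawling in 100 chapters. It starts from basic Python syntax and introductory crawler knowledge, then goes deep into advanced topics such as anti-crawler measures, multithreading, and distributed crawling. Backed by many examples, it covers scraping web pages, images, audio, and other kinds of data, and also touches on data processing and analysis. Both beginners and more advanced developers can learn from it, master core crawler skills, and broaden their technical horizons. Contents: I. Identifying risks in crawler projects; 1.1 Crawler failure caused by anti-crawler measures; 1.2 Data leakage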

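- Crawler and reverse engineering tutorial: column introduction and contents

数据知道

2025 crawler and reverse engineering tutorial, crawler, python, data collection, web crawler, reverse engineering

Contents: I. Crawler basics and advanced topics; II. App data collection; III. Crawler projects; IV. Crawler interviews. This column is a learning space for crawling and reverse engineering, tailored to beginners and more advanced developers. It offers comprehensive, in-depth guidance on crawler and reverse engineering techniques, from introductory to advanced and from basic theory to hands-on practice. By working through it you should be able to develop and optimize crawlers independently and gain reverse-analysis and project-development skills. (This column is being updated continuously… early subscribers pay only 9.9 yuan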

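- The Python crawler workflow and an introduction to the robots protocol

流沙丶

Python projects, crawler practice

The Python crawler workflow and an introduction to the robots protocol. **A web crawler (spider) is an efficient way to collect data; well-known search engines such as Baidu and Google are, in effect, very large crawler projects.** Crawling has roughly four stages. Choosing the target: the web pages we want to crawl. Data collection: fetching the HTML of those pages. Data extraction: pulling the data we want out of the HTML. Data storage: saving the extracted data to a database, a JSON file, and so on. The robots protocol: a simple, plain-text txt file that tells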
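To make the robots protocol concrete, here is a small sketch using Python's standard `urllib.robotparser`. The robots.txt content, the user agent name `my-crawler`, and the example.com URLs are made up for illustration; a real crawler would download the site's own robots.txt before the data collection stage.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_parser(robots_text):
    """Parse robots.txt text into a RobotFileParser without any network access."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
# A path outside /private/ is allowed; a path under /private/ is not.
print(parser.can_fetch("my-crawler", "https://example.com/index.html"))
print(parser.can_fetch("my-crawler", "https://example.com/private/data.html"))
```

Checking `can_fetch` before each request is a simple way to respect the rules a site publishes.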

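- Python crawler project (198): E-commerce user behavior analysis and recommender system; scraping user behavior data from e-commerce platforms

人工智能_SYBH

Crawler (free preview), 2025 Crawler Handbook: 100 Hands-On Articles from Beginner to Expert, python, crawler, data analysis, programming language, information visualization, okhttp

On modern e-commerce platforms, user behavior data is essential for improving the user experience, increasing sales, and personalizing recommendations. By scraping and analyzing users' browsing, clicking, and purchasing behavior, a platform can better understand user preferences, recommend relevant products, and increase engagement and purchase intent. This post describes in detail how to scrape user behavior data from e-commerce platforms with crawler techniques and, combined with data analysis and recommendation algorithms, build a simple recommender system. Contents: I. E-commerce user behavior data; II. Crawler implementation; 2.1 Site analysis; 2.2 Using Seleni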

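- Python web crawler project development in practice: how to handle CAPTCHAs

好知识传播者

Python example development in practice, python, crawler, programming language, CAPTCHA handling, web crawler

Note: the downloadable tutorial for this article overlaps with the approach of the following article in some respects and differs in others; the goal is simply to help readers master the topic from several angles. Download: Python网络爬虫项目开发实战_验证码处理_编程案例解析实例详解课程教程.pdf. I. Introduction to CAPTCHA handling. In Python web crawler projects, CAPTCHA handling is a common challenge, because many sites use CAPTCHAs to verify that requests are legitimate, in order to prevent abuse by automated scripts and protect user accounts. Below is an introduction to CAPTCHA handling, inclu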

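- Finish these 96 crawler project examples in 30 days and turn things around! Earn over 10,000 a month from freelance jobs with ease!

小天才学习机打游戏

crawler, python, programming language, artificial intelligence, cloud computing

Under the current economic climate, everyone is competing harder and harder, so a fixed salary alone is clearly not enough. Who doesn't have some side income these days? Python crawling has become the go-to skill to learn. I believe many people learning Python struggle to find projects to practice on. In my view, however well you learn the basics, they count for little without hands-on experience. These projects are well suited to beginners learning Python crawling. Although crawling is one of the simpler topics in Python, it is used a great deal in everyday life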

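- A Scrapy distributed crawler system

ivwdcwso

DevOps, scrapy, distributed, crawler, python, development

I. Overview. In this post we describe how to use Docker to deploy a Scrapy distributed crawler system consisting of three core components: Scrapyd, Logparser, and Scrapyweb. This deployment approach suits both Scrapy projects and Scrapy-Redis distributed crawler projects. Components to install: Scrapyd: server side, runs the packaged crawler code; must be installed on every crawler machine. Logparser: server side, parses crawler logs and works with Scrapyweb for real-time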

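- Douban Movie Top 250 crawler project

诚信爱国敬业友善

crawler, python

Below is a Python-based Douban Movie Top 250 crawler project example, with a full explanation of the underlying techniques, an analysis of the key concepts, and the project source code. It uses an object-oriented design and covers core topics such as handling anti-crawler mechanisms, data parsing, and storage. Douban Movie Top 250 crawler project. I. Requirements analysis. Target site: https://movie.douban.com/top250. Data to scrape: film title; director and lead actors; release year; country of production; genre; rating; number of ratings; one-line quote. Technical challenges: request header validation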

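- Python web crawling in practice: scraping articles from the Youth Prose column of 中国散文网

智算菩萨

python, programming language, crawler

I. Introduction. In today's digital age, web crawling has become an important tool for obtaining and analyzing large-scale online data. This article presents a practical crawler project: scraping every article in the Youth Prose column of 中国散文网. The site was chosen because it is a well-known Chinese prose platform, and its Youth Prose column in particular gathers many fine works by a new generation of writers, giving it literary and research value. The main goal is to fetch all articles in the column and save them as txt files for later text analysis and research. In order to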

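- Python crawler project (100): An e-commerce product price monitoring system

人工智能_SYBH

Crawler (free preview), 2025 Crawler Handbook: 100 Hands-On Articles from Beginner to Expert, python, crawler, programming language, information visualization, artificial intelligence

Introduction. With the rapid growth of e-commerce, more and more consumers shop online. Product prices on e-commerce platforms fluctuate frequently, and shoppers often want to track price changes for items they like in real time so they can order at the best moment. To meet this need, this article describes how to build a price monitoring system for e-commerce sites, including how to scrape product prices, store and analyze the data, and automate the monitoring. We focus on the crawler part, using up-to-date techniques and tools, and provide detailed implementation code. Contents: Introduction; 1. System design overview; 1.1 Choosing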

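- [MapReduce] The MapReduce distributed computing framework

桥路丶

Big data, Hadoop quick start, bigdata

The MapReduce distributed computing framework. What is MapReduce? MapReduce originated with the MapReduce paper that Google published in 2004 (presented at OSDI in December); Mike Cafarella then implemented MapReduce in Nutch (a crawler project). It was designed to solve the problem of processing large volumes of web page data in parallel for search engines, and later became a core subproject of Apache Hadoop. It is a batch-oriented distributed computing framework; in a distributed environment, MapRedu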
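The excerpt describes MapReduce only at a high level. As a rough illustration of the programming model, not of Hadoop's API, here is a single-process word count split into map, shuffle, and reduce steps; all function names are hypothetical.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values of one key into a single result."""
    return key, sum(values)


def word_count(documents):
    """Run the three phases in order, in one process."""
    pairs = (pair for doc in documents for pair in map_phase(doc))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())
```

In a real cluster the map and reduce calls run on different machines and the framework performs the shuffle; this sketch only shows the data flow.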

- 【爬虫】使用 Scrapy 框架爬取豆瓣电影 Top 250 数据的完整教程

web15085096641

爬虫scrapy

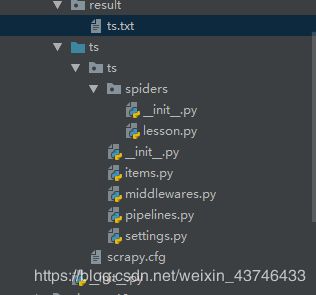

前言在大数据和网络爬虫领域,Scrapy是一个功能强大且广泛使用的开源爬虫框架。它能够帮助我们快速地构建爬虫项目,并高效地从各种网站中提取数据。在本篇文章中,我将带大家从零开始使用Scrapy框架,构建一个简单的爬虫项目,爬取豆瓣电影Top250的电影信息。Scrapy官方文档:ScrapyDocumentation豆瓣电影Top250:豆瓣电影Top250本文的爬虫项目配置如下:系统:Windo

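- 1 Project overview

40岁的系统架构师

WeChat Mini Program

The project series walks everyone through building projects, covering product design, architecture design, and front-end and back-end development. We build the projects together. We begin with a few small projects that involve almost no architecture design. Later projects draw on the architecture design knowledge covered earlier, so that most of the applicable technologies get used at least once. First we build an unlimited-level referral commission system, some small side-income projects, some game scripts (mainly 按键精灵 and C++), and some crawler projects. These projects can all bring in income. Writing them takes effort, so these projects may all require payment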

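- The requests module: the timeout parameter

李乾星

crawler self-study notes, programming language, python, web crawler, network protocols

Why the timeout parameter matters and how to use it. When browsing the web or developing a crawler project, we often run into network fluctuations and requests that take too long. A request may still have no result after a long wait, which drags down the efficiency of the whole project. To address this, we can use the timeout parameter to require the request to return within a given time; otherwise an exception is raised. How to use the timeout parameter: while learning crawling and the requests module, we frequently use requests.get(url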
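With requests the timeout is passed as a keyword argument, for example `requests.get(url, timeout=3)`. So that the sketch runs without third-party packages, the version below swaps in the standard library's `urllib.request.urlopen`, whose `timeout` argument plays the same role; the helper name `fetch_with_timeout` is hypothetical.

```python
import socket
import urllib.error
import urllib.request


def fetch_with_timeout(url, timeout=3.0):
    """Return the response body, or None if the request times out or fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.read()
    except (TimeoutError, socket.timeout, urllib.error.URLError):
        # A timed-out connect or read ends up here instead of hanging.
        return None
```

Returning `None` keeps the caller simple; a crawler that needs to distinguish timeouts from other failures would catch the exceptions separately.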

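- Python crawler project (82): Scraping user reviews from travel guide websites to build a tourist attraction recommender system

人工智能_SYBH

Crawler (free preview), 2025 Crawler Handbook: 100 Hands-On Articles from Beginner to Expert, python, crawler, travel, programming language, finance, information visualization

Building a tourist attraction recommender system can help users choose destinations based on their preferences and other users' reviews. In this project we scrape user review data from travel guide websites, analyze it, and use recommendation algorithms such as collaborative filtering to build a basic recommender system. This article describes the whole process, covering both the crawler and the recommender. Contents: Outline; I. Project background and goals; Project goals; II. Target site analysis and data requirements; Data requirements; Target sites; III. Choosing crawler technology; Installing the required libraries; IV. Using Scrapy to scrape user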

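- [Crawler] A complete tutorial on scraping Douban Movie Top 250 data with the Scrapy framework

m0_74825360

interview, learning path, Alibaba, crawler, scrapy

Preface. In the field of big data and web crawling, Scrapy is a powerful and widely used open-source crawler framework. It helps us build crawler projects quickly and extract data from websites efficiently. In this article I start from scratch with Scrapy and build a simple crawler project that scrapes film information from the Douban Movie Top 250. Scrapy official documentation: Scrapy Documentation. Douban Movie Top 250: Douban Movie Top 250. The crawler project in this article is configured as follows: OS: Windo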

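- Core Python web crawler interview questions

闲人编程

programmer interviews, python, crawler, programming language, interviews, network programming

Web crawlers. 1. How do you handle failed requests in a crawler project? 2. Explain persistent and non-persistent connections in HTTP. 3. What are persistent cookies and session cookies in HTTP? 4. How do you detect and handle network jitter and packet loss in a crawler project? 5. How can HEAD requests improve efficiency in a crawler project? 6. How do you rate-limit HTTP requests in a crawler project? 7. Explain the main improvements of HTTP/2 over HTTP/1.1. 8. How do you simulate HTTP retries and redirects in a crawler project? 9. What is COR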

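- Python crawler project collection: 200 Python crawler projects to take you from beginner to expert

人工智能_SYBH

Crawler (free preview), 2025 Crawler Handbook: 100 Hands-On Articles from Beginner to Expert, python, crawler, data analysis, information visualization, complete crawler project list, Python crawler project collection, crawler from beginner to expert, projects

Who this is for: whether you are a beginner new to programming or a developer with some Python background who wants to go deeper into web data collection, this column offers a systematic learning path. Through step-by-step theory, code examples, and practice projects, you will build solid crawler development skills that adapt to data collection needs in different scenarios. Column features: from basic to advanced, with comprehensive coverage. The column starts with crawler fundamentals and how crawlers work, then gradually covers practical techniques such as scraping static pages, dynamic pages, and API data. Later it goes deep into anti-crawler mechanisms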

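- [Crawler] A complete tutorial on scraping Douban Movie Top 250 data with the Scrapy framework

brhhh_sehe

crawler, scrapy

Preface. In the field of big data and web crawling, Scrapy is a powerful and widely used open-source crawler framework. It helps us build crawler projects quickly and extract data from websites efficiently. In this article I start from scratch with Scrapy and build a simple crawler project that scrapes film information from the Douban Movie Top 250. Scrapy official documentation: Scrapy Documentation. Douban Movie Top 250: Douban Movie Top 250. The crawler project in this article is configured as follows: OS: Windo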

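- Python crawler tutorial for beginners: build your first web crawler from scratch

m0_74825223

interview, learning path, Alibaba, python, crawler, programming language

A web crawler is an automated program that fetches data from websites. With its rich libraries and simple syntax, Python is an ideal language for building crawlers. This article takes you through the basics of Python crawling from scratch and implements a simple crawler project. 1. What is a web crawler? A web crawler is a program that retrieves web page content over network protocols (such as HTTP/HTTPS) and extracts useful information from it. Common uses include: collecting product prices and reviews; scraping news or blog content; statistical data analysis.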

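- Python crawler project | 2. Daily weather forecast

聪明的墨菲特i

Python crawler projects, python, crawler, programming language

Contents: 1. Overview; 1.1 Approach; 1.2 Code; 1.3 Result; 1.3.1 Output printed in the editor; Result in actual use; 2. Detailed walkthrough; 2.1 Python libraries used; 2.2 Code explanation; 2.2.1 Fetching the weather forecast; 2.2.2 Getting today's date and formatting the output; 2.2.3 Calling the functions and printing the result; 2.3 Demonstration; 3 Summary. 1. Overview. Continuing to learn Python crawling with a simple example: sending a daily weather forecast. 1.1 Approach. This article uses the commonly used Python requests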

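- Python crawler tutorial for beginners: build your first web crawler from scratch

m0_66323401

python, crawler, programming language

A web crawler is an automated program that fetches data from websites. With its rich libraries and simple syntax, Python is an ideal language for building crawlers. This article takes you through the basics of Python crawling from scratch and implements a simple crawler project. 1. What is a web crawler? A web crawler is a program that retrieves web page content over network protocols (such as HTTP/HTTPS) and extracts useful information from it. Common uses include: collecting product prices and reviews; scraping news or blog content; statistical data analysis.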

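- PyCharm activation

你尧大爷

PyCharm

Original: https://blog.csdn.net/u014044812/article/details/78727496 Differences between the Community and Professional editions: Because of a company requirement, I had to scrape information such as recently released films, train numbers, flight numbers, airports, and stations, so I needed to build a crawler project. Java can do crawling too, but it is not as convenient as Python, so I started learning Python. To do a good job, one must first sharpen one's tools. Here I offer three activation methods: license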

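- Python crawler projects (with source code): 70 Python crawler practice examples!

硬核Python

career and development, python, programming, python, crawler, programming language

Contents: 70 Python crawler projects (1): beginner level; (2): pyspider; (3): scrapy; (4): mobile scraping; (5): advanced crawling; (6): CAPTCHA recognition; (7): anti-crawler techniques. Reader bonus: 1. Learning paths for every Python specialization; 2. Python course videos; 3.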

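- Game industry insight: a case study of a distributed open-source crawler project in data collection and analysis

思通数科x

games, web crawler, hill-climbing algorithm, crawler

Preface. While leading a project that provided data collection services to a major player in the game industry, we faced the challenges of real-time data demands and large-scale data processing. We built an automation platform based on open-source distributed crawler technology and achieved efficient, accurate data collection. Using natural language processing, we ensured data quality and consistency, and a distributed architecture greatly increased processing speed. In the end, our solution not only met the client's need for real-time market insight but also advanced data-driven decision-making across the game industry. In my roles as project manager, account manager, and product manager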

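- Deleting an Eclipse workspace

小小曾爱读书

eclipse, java

In a recent crawler project I found the build process very slow, so I switched to another workspace, but it was still slow and eventually failed. I then wanted to delete that workspace. I tried deleting the corresponding workspace folder on drive F, but puzzlingly Eclipse could still switch to it. I finally found the way to delete it completely: remove the entries in order, and it worked: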

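- [Star Wars] Anakin's betrayal

comsci

Originally, the elders of the Jedi Temple did not agree to let Anakin be trained.........

But for political reasons the council gave in... and this gave the forces of evil their chance

So...... the modern Earth Federation has learned this lesson... and will never let certain young people into the academy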

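- Understand this and you can play however you like!

aijuans

JavaScript

JavaScript is a standard skill for front-end development. If you have not mastered its three key features (1. prototypes, 2. scope, 3. closures), how can you say you have learned the language? And if you have not mastered the standard skills, how can you play freely? To check whether you have learned these three fundamentals, I found a nice piece of code and modified it slightly; if you can read it, you are free to play.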

function jClass(b

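- Jodd, a toolkit of common Java utilities

Kai_Ge

java, jodd

Jodd is an open-source Java toolkit containing utility classes and small frameworks. Simple, yet powerful! Jodd = Tools + IoC + MVC + DB + AOP + TX + JSON + HTML < 1.5 Mb

Jodd is split into many modules that you pick as needed, among which

the utility modules are:

jodd-core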

- Spring MVC file download

120153216

springMVC

@RequestMapping(value = WebUrlConstant.DOWNLOAD)

public void download(HttpServletRequest request,HttpServletResponse response,String fileName) {

OutputStream os = null;

InputStream is = null;

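- Summary of Python standard exceptions

2002wmj

python

Summary of Python standard exceptions

AssertionError: an assert statement failed

AttributeError: attempt to access an unknown object attribute

EOFError: the user entered the end-of-file marker EOF (Ctrl+D)

FloatingPointError: floating-point calculation error

GeneratorExit: raised when generator.close() is called

ImportError: failure to import a module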
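A short sketch of how three of the exceptions listed above arise in practice. The helper `raised` and the deliberately missing module name are made up for the example.

```python
def raised(action):
    """Call `action` and return the exception it raises, or None if it succeeds."""
    try:
        action()
    except Exception as exc:
        return exc
    return None


def failed_assertion():
    # An assert whose condition is false raises AssertionError
    # (unless Python runs with -O, which strips assert statements).
    assert 1 + 1 == 3, "arithmetic is broken"


def missing_attribute():
    # Accessing an attribute the object does not have raises AttributeError.
    return object().no_such_attribute


def missing_module():
    # Importing a module that does not exist raises ModuleNotFoundError,
    # which is a subclass of ImportError.
    __import__("no_such_module_for_this_example")
```

`GeneratorExit` is not caught by `except Exception`, because it derives directly from `BaseException`.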

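- Returning temp-table-style data from a SQL function for use in a query

357029540

SQL Server

Over the last couple of days I was writing a query. One of its conditions uses a cursor over two other tables to produce multiple rows of INT values, which then go into an IN condition. Querying those two tables requires externally supplied parameters, so I thought of using the return value of a SQL function, and did so. Because multiple rows are returned, I concatenated the INT values into a string, and that is where the problem arose: since the condition in the query is an INT value, SQL's CAST and CONVERT both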

- Java date formatting | comparison | time zones: personal notes

7454103

javaeclipsetomcatcMyEclipse

Personal notes! Please forgive any mistakes!

1.0 How to set Tomcat's time zone:

Location: (in catalina.bat, add below JAVA_OPTS)

set JAVA_OPT

- Using Calendar to get dates

adminjun

Calendar, time

/**

* Get the time a given number of days ago

* @param d

* @param day

* @return

*/

public static Date getDateBefore(Date d,int day){

Calend
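The Java method above is cut off in this excerpt. For comparison only, the same idea (the moment a given number of days before a date) can be sketched in Python with the standard `datetime` module; the function name `date_before` is hypothetical and is not a completion of the original code.

```python
from datetime import datetime, timedelta


def date_before(moment, days):
    """Return the datetime that lies `days` days before `moment`."""
    return moment - timedelta(days=days)
```

Subtracting a `timedelta` handles month and leap-year boundaries, so no manual field arithmetic is needed.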

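- A first look at the JVM and its settings

aijuans

java

JVM is short for Java Virtual Machine. The JVM is a specification for computing devices; it is an abstract computer implemented by emulating various computer functions on a real machine. The Java Virtual Machine comprises a bytecode instruction set, a set of registers, a stack, a garbage-collected heap, and a method area. The JVM hides platform-specific details of the operating system, so that Java programs only need to be compiled to target code (bytecode) that runs on the JVM, and can then run on many platforms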

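- The difference between ON and WHERE in SQL

avords

The difference between ON and WHERE in SQL

When a database joins two or more tables to return records, it conceptually builds an intermediate temporary table and then returns that table to the user. With a left join, the ON and WHERE conditions differ as follows: 1. The ON condition is applied while the temporary table is being built; regardless of whether the ON condition is true, the rows of the left table are returned.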
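The behavior can be checked with a small experiment. The sketch below uses Python's built-in `sqlite3` module with two made-up tables, `authors` and `posts`; the same filter is placed once in ON and once in WHERE.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER, name TEXT);
    CREATE TABLE posts (author_id INTEGER, title TEXT);
    INSERT INTO authors VALUES (1, 'ann'), (2, 'bob');
    INSERT INTO posts VALUES (1, 'sql tips');
""")

# Filter inside ON: every left-table row survives, unmatched columns are NULL.
on_rows = conn.execute("""
    SELECT a.name, p.title FROM authors a
    LEFT JOIN posts p ON p.author_id = a.id AND p.title = 'sql tips'
    ORDER BY a.id
""").fetchall()

# Filter inside WHERE: applied after the join, so the NULL row is dropped.
where_rows = conn.execute("""
    SELECT a.name, p.title FROM authors a
    LEFT JOIN posts p ON p.author_id = a.id
    WHERE p.title = 'sql tips'
    ORDER BY a.id
""").fetchall()

print(on_rows)     # [('ann', 'sql tips'), ('bob', None)]
print(where_rows)  # [('ann', 'sql tips')]
```

The row for `bob` appears only in the first result, which is the difference the text describes.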

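- On confidence

houxinyou

work, life

Confidence comes from two sources: strength, and the mind. Strength is an overall assessment that includes your own abilities, the resources you can draw on, and so on. If I want to go to the moon, for instance, I need excellent physical fitness, a spaceship, and a whole series of other things. These are all part of strength. The mind is different: it only has to be simple enough! To get to the moon, you reason: one jump gets me 1 meter, so if I keep jumping for a few years I should get there! What? You say I will fall back down? Don't be silly! Just find something to step on!

Whether at work or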

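- Setting the WebLogic transaction timeout

bijian1013

weblogic, jta, transaction timeout

While the system was computing statistics, the call to the statistics procedure ran longer than the time configured in WebLogic, and the following error appeared:

Error computing statistics!

Cause: The transaction is no longer active - status: 'Rolling Back. [Reason=weblogic.transaction.internal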

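- Two years on: another look at how to fit into a new team quickly

bingyingao

java, internet, fitting in, architecture, new team

In a rare spare moment, I came across a post from two years ago

on how to fit into a new team quickly. It struck a chord, so I am writing this down as a reference for two years from now.

Today, two and a half years later, rereading that old post has a flavor of its own. I left that team in March this year and joined another project group. In the time after that 2011 post I fit into that team well, and I am still on very good terms with those colleagues. In a short year and a half together we went through a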

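- [Spark 77] Analyzing Nginx and Apache access.log with Spark

bit1129

apache

When analyzing Nginx and Apache access.log files with Spark, the first problem is parsing the files line by line, and the way to do that is with regular expressions:

Regular expression for parsing the Nginx access.log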

val PATTERN = """([^ ]*) ([^ ]*) ([^ ]*) (\\[.*\\]) (\&q
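The Scala pattern above is cut off in this excerpt. As a separate illustration of line-by-line parsing, here is a sketch in Python's `re` module; the pattern, the field names, and the sample line are assumptions for the common access-log layout, not the article's own expression.

```python
import re

# Assumed layout: ip ident user [time] "request" status size
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) (?P<ident>\S+) (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+)'
)


def parse_line(line):
    """Return the fields of one access-log line as a dict, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    return match.groupdict() if match else None


sample = '127.0.0.1 - - [10/Oct/2000:13:55:36 -0700] "GET /index.html HTTP/1.1" 200 2326'
fields = parse_line(sample)
```

Returning `None` for lines that do not match lets a job count or skip malformed records instead of failing.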

- Erlang patch

bookjovi

erlang

Five patches in total committed to Erlang/OTP, all of them small.

IMO, Erlang really is an interesting programming language; I really like its concurrency features.

but the functional programming style

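- Adding the date to the log4j log path

bro_feng

java, log4j

Goal: log with log4j so that the log path contains the current date and a new file is started once a file reaches 5 MB.

Implementation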

log4j:

<appender name="serviceLog"

class="org.apache.log4j.RollingFileAppender">

<param name="Encoding" v

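- Reading 《研磨设计模式》: code notes on the Bridge pattern

bylijinnan

java, design patterns

Note: this article is only for my own reference and understanding; for the detailed analysis and source code, please visit the original author's blog: http://chjavach.iteye.com/

/**

* In my view, the crayon-and-brush example is the most apt illustration of the Bridge pattern: http://www.cnblogs.com/zhenyulu/articles/67016.html

* Pen and color are separable; a crayon couples the two: one crayon has only one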

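- Installing SVN and the Eclipse plugin on Windows 7

chenyu19891124

eclipse, plugin

I spent a whole day on installing SVN and the Eclipse plugin and finally got it working. There are two ways to integrate the SVN plugin with Eclipse: download the plugin package directly, or install it through Eclipse's online update. Because my Eclipse version and the SVN plugin version did not match, the install kept failing until I found compatible versions online. Environment: OS: Windows 7; JDK: 1.7; SVN plugin package version: 1.8.16; Eclipse: 3.7.2. Download links: Eclipse download: htt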

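- [Repost] Design ideas for a workflow engine

comsci

design patterns, work, application server, workflow, enterprise applications

As a fellow practitioner in China, I very much hope to exchange ideas on process design with everyone. I just came across a good article (such a good one, and I only found it now, a pity) on some principles of process design. I feel it takes a higher and broader view and goes deeper than my own articles, so I repost it below.

=================================================================================

Since I started this blog, friends have kept coming to discuss how a workflow engine should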

- How to check memory, CPU, and disk size on Linux

daizj

linux, cpu, memory, disk size

I. Commands for viewing CPU information

[root@R4 ~]# cat /proc/cpuinfo |grep "model name" && cat /proc/cpuinfo |grep "physical id"

model name : Intel(R) Xeon(R) CPU X5450 @ 3.00GHz

model name :

- Kicking a logged-in user off Linux

dongwei_6688

linux

Two steps:

1. Use the w command to find the user to kick off, for example:

[root@localhost ~]# w

18:16:55 up 39 days, 8:27, 3 users, load average: 0.03, 0.03, 0.00

USER TTY FROM LOGIN@ IDLE JCPU PCPU WHAT

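- 《放手吧,就像不曾拥有过一样》 (Let go, as if you had never had it)

dcj3sjt126com

Synopsis:

《放手吧就像不曾拥有过一样》, compiled by 静悠悠, collects the best of the "most soothing stories in the Chinese-speaking world", touching life's deepest emotions, and is dedicated to hundreds of millions of readers worldwide. The author sincerely wishes every reader a reason to start over and to put down everything painful that you have been shouldering and carrying! Replace a haggard face with a gentle smile, turn heavy steps into notes on spring's musical staff, and step to a light rhythm, drifting at ease on the sea of life, enjoying tranquility and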

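- What binary safety means in PHP

dcj3sjt126com

PHP

PHP has the concept of a string.

In a string, each character is one byte in size (by contrast, each character in Java is a char, a 16-bit UTF-16 code unit, and in C the size of a character can be chosen at compile time).

A byte may hold an ASCII character such as ABC, 123, or abc, as well as special characters such as carriage return and backspace.

Many special characters cannot be displayed. Or rather, there is no standard way of displaying them, whereas code 65 is the letter A everywhere, and code 97 is everywhere the character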

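- Disabling the integrated touchpad of the T440s and X240 on Linux

gashero

linux, ThinkPad, touchpad

Ever since I bought a ThinkPad T440s in January I have been very annoyed with it, and nothing is more infuriating than the touchpad.

One of the ThinkPad classics is the little red dot (TrackPoint). But the TrackPoint only moves the pointer; you still need left and right mouse buttons. Starting with the T440s and similar models, an integrated touchpad was introduced and the physical buttons were removed. That would be fine if it worked well.

In practice the touchpad has a pile of problems, such as jittery positioning and pointer drift when pressing. As a result, clicks often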

- graph_dfs

hcx2013

Graph

package edu.xidian.graph;

class MyStack {

private final int SIZE = 20;

private int[] st;

private int top;

public MyStack() {

st = new int[SIZE];

top = -1;

}

public void push(i
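The Java listing above is cut off right after its stack class begins. As a separate sketch of the same technique, here is an iterative depth-first search in Python that uses a list as the explicit stack; the adjacency-list dictionary format is an assumption made for the example.

```python
def dfs(graph, start):
    """Return the nodes reachable from `start` in depth-first preorder."""
    visited = []
    seen = set()
    stack = [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # already visited through another path
        seen.add(node)
        visited.append(node)
        # Push neighbors in reverse so the first listed neighbor is popped first.
        for neighbor in reversed(graph.get(node, [])):
            if neighbor not in seen:
                stack.append(neighbor)
    return visited
```

Marking a node as seen when it is popped, rather than when it is pushed, keeps the visiting order identical to the recursive version.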

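- New in Spring 4.1: the Spring core and more

jinnianshilongnian

spring 4.1

Contents

New in Spring 4.1: overview

New in Spring 4.1: the Spring core and more

New in Spring 4.1: Spring cache framework enhancements

New in Spring 4.1: exception handling for asynchronous calls and the event mechanism

New in Spring 4.1: script initialization for database integration tests

New in Spring 4.1: Spring MVC enhancements

New in Spring 4.1: the page automation test framework Spring MVC T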

- Configuring HiveServer2 security: custom username/password authentication

liyonghui160com

For details, see online:

http://doc.mapr.com/display/MapR/Using+HiveServer2#UsingHiveServer2-ConfiguringCustomAuthentication

LDAP Authentication using OpenLDAP

Setting

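- Career lessons from a programmer in his thirties

pda158

programming, work, life, consulting

1. Customers only truly understand their requirements after they have come into contact with the product.

I learned this in my first job. Only when we show customers the product do they realize what is essential. A functional prototype is far better than a long written list. 2. Given enough time, every security defense will fail.

Security is a major topic and challenge that the whole world is watching. We must keep improving it at all times, because an attacker needs to succeed only once to defeat you completely. 3.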

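- The evolution of distributed web service architecture

自由的奴隶

linux, Web, application server, internet

At the very beginning, out of some idea or other, you set up a website on the internet. At that point the host might even be rented, but since this article is only about how the architecture evolves, assume that you already have one hosted machine and some bandwidth. Because the site has some distinctive features, it attracts some visitors. Gradually you notice that the load on the system keeps rising and responses keep getting slower. What stands out at this point is that the database and the application affect each other: when the application has a problem the database easily runs into trouble too, and when the database has a problem the application also easily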

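- A first look at the Druid connection pool, part 2: slow SQL logging

xingsan_zhang

logging, connection pool, druid, slow SQL

For work reasons, I am skipping the database connection configuration for now (to be added later) and going straight to slow SQL logging.

1. Add the following configuration to applicationContext.xml: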

<bean abstract="true" id="mysql_database" class="com.alibaba.druid.pool.DruidDataSourc