Installing a Kubernetes Web UI and Monitoring

Note: this is material I learned on the job at my first internet company after graduating from university.

Background

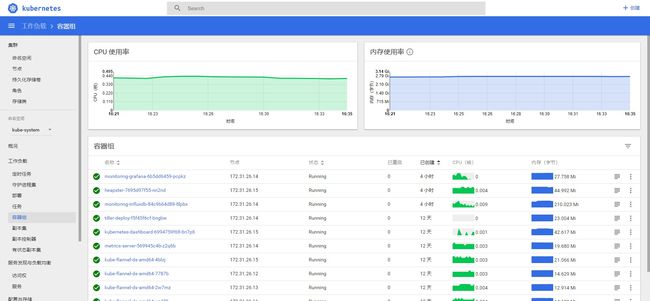

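I had just finished deploying a Kubernetes (k8s below) environment for load testing. The testers needed to load-test our projects, and I had to put together a monitoring system to help them measure each project's performance. After searching Baidu and comparing a number of approaches, I settled on Kubernetes-dashboard + heapster + influxdb + grafana.

Requirements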

| Component | Version | targetPort | nodePort |
|---|---|---|---|
| kubernetes | 1.15 | | |
| kubernetes-dashboard | v1.10.1 | 9090 | 32666 |
| heapster-amd64 | v1.5.4 | 8082 | |
| heapster-grafana-amd64 | v5.0.4 | 3000 | 31234 |
| heapster-influxdb-amd64 | v1.5.2 | 8086 | 30444 |

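Make sure each nodePort is unique across the whole k8s cluster. The k8s version and the kubernetes-dashboard version also have to correspond to each other.

I did not set up access control for this version of kubernetes-dashboard because it is fairly troublesome; if you need it, you will have to configure it yourself.

heapster-grafana-amd64 is there to monitor finer-grained usage, such as CPU and memory, for each container. If the basic monitoring shown directly on the kubernetes-dashboard pages is enough for you, installing heapster-influxdb-amd64 and heapster-amd64 is sufficient.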
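
The nodePort uniqueness requirement is easy to get wrong when several manifests are edited by hand. Below is a minimal sketch of a pre-flight check over the planned values from the table above. The function name `check_node_ports` is my own, and the 30000-32767 range is the Kubernetes default, which I assume has not been changed on your cluster.

```python
from collections import Counter

# Default NodePort range of the API server (assumed unchanged on this cluster).
NODE_PORT_MIN, NODE_PORT_MAX = 30000, 32767


def check_node_ports(planned):
    """Return a list of problems found in a {service_name: nodePort} plan."""
    problems = []
    counts = Counter(planned.values())
    for name, port in sorted(planned.items()):
        if not NODE_PORT_MIN <= port <= NODE_PORT_MAX:
            problems.append(f"{name}: {port} is outside {NODE_PORT_MIN}-{NODE_PORT_MAX}")
        if counts[port] > 1:
            problems.append(f"{name}: {port} is used more than once")
    return problems


# nodePort values from the table above, keyed by the Service names in the manifests.
planned = {
    "kubernetes-dashboard-external": 32666,
    "monitoring-grafana": 31234,
    "monitoring-influxdb": 30444,
}
print(check_node_ports(planned) or "no conflicts in the plan")
```

This only checks the plan against itself. Ports already taken by other Services in the cluster still have to be looked up, for example with `kubectl get svc --all-namespaces`.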

Introduction

Kubernetes-dashboard

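Kubernetes Dashboard is a general-purpose, web-based UI for Kubernetes clusters. It lets users manage and troubleshoot the applications running in the cluster, as well as manage the cluster itself.

Because of breaking changes between Kubernetes API versions, some features may not work properly in the dashboard, which makes kubernetes-dashboard compatibility a real problem. It is best to pair each Kubernetes version with the dashboard release that is most stable for it.

For example, the newer kubernetes-dashboard v2.0.0 is compatible with Kubernetes 1.18 and later, but not with earlier versions.

If you want to install a newer version, see the article "Deploying Kubernetes-Dashboard v2.0.0 on Kubernetes".

This article uses kubernetes-dashboard v1.10.1, which works with Kubernetes 1.15.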

Heapster

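Heapster is a monitoring and performance analysis tool for container clusters, with native support for Kubernetes and CoreOS.

Kubernetes has a well-known monitoring agent, cAdvisor. It runs on every Kubernetes node and collects monitoring data for the host and its containers (CPU, memory, filesystem, network, uptime). In newer versions, cAdvisor is built into the kubelet, and each node can be accessed directly over the web.

Heapster is a collector: it gathers the cAdvisor data from every node and aggregates it by Kubernetes resource type, such as Pod and Namespace, so that CPU, memory, network, and disk metrics are available for each. The default metric aggregation interval is one minute. The data can also be exported to third-party tools such as InfluxDB.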
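
To make the aggregation step concrete, here is a small sketch of rolling per-container samples up to pod and namespace level. It is not Heapster's code: the sample tuples, the pod names, and the `aggregate` function are all invented for illustration.

```python
from collections import defaultdict

# Hypothetical per-container samples, as a collector might gather them from
# each node: (namespace, pod, container, cpu_millicores, memory_bytes).
samples = [
    ("default", "web-1", "nginx", 120, 64 * 2**20),
    ("default", "web-1", "sidecar", 30, 16 * 2**20),
    ("default", "web-2", "nginx", 100, 60 * 2**20),
    ("kube-system", "heapster-x", "heapster", 50, 200 * 2**20),
]


def aggregate(samples, level):
    """Sum CPU and memory per pod (level='pod') or per namespace (any other value)."""
    totals = defaultdict(lambda: {"cpu_millicores": 0, "memory_bytes": 0})
    for namespace, pod, _container, cpu, mem in samples:
        key = (namespace, pod) if level == "pod" else namespace
        totals[key]["cpu_millicores"] += cpu
        totals[key]["memory_bytes"] += mem
    return dict(totals)


by_pod = aggregate(samples, "pod")
by_namespace = aggregate(samples, "namespace")
print(by_pod[("default", "web-1")])
print(by_namespace["default"])
```

The same container-level data feeds both views, which is why one collector can serve the node, namespace, and pod dashboards at once.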

The monitoring charts in the native Kubernetes dashboard come from Heapster. Horizontal Pod Autoscaling has also relied on Heapster, with HPA using it as the Resource Metrics API to fetch metrics.

InfluxDB

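InfluxDB is an open-source time series database written in Go. It is particularly well suited to processing and analyzing time-ordered data such as resource monitoring metrics. Its built-in functions, for example standard deviation, random sampling, and rate of change, make statistics and real-time analysis convenient.

Time series databases were created to address the shortcomings of traditional relational databases in storing and analyzing time series data. They optimize the write, storage, and query paths around the characteristics of that data: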

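- Storage cost:
  - Exploit the fact that timestamps increase monotonically, dimensions repeat, and metric values change smoothly to choose suitable encoding and compression algorithms and raise the compression ratio.
  - Downsample historical data ahead of time by aggregating it, which saves storage space.
- High-concurrency writes:
  - Write data in batches to reduce network overhead.
  - Write data to memory first, then periodically dump it to immutable files.
- Low query latency and high query concurrency:
  - Optimize common query patterns and use techniques such as indexing to reduce query latency.
  - Use techniques such as caching and routing to raise query concurrency.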
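
The downsampling idea from the storage-cost item can be sketched in a few lines. This is a generic illustration, not how InfluxDB implements it; the `downsample` function and the sample points are my own.

```python
from collections import defaultdict


def downsample(points, bucket_seconds):
    """Average (timestamp, value) points into fixed-width time buckets.

    Returns a list of (bucket_start, mean_value) sorted by bucket_start.
    """
    buckets = defaultdict(list)
    for ts, value in points:
        bucket_start = ts - ts % bucket_seconds
        buckets[bucket_start].append(value)
    return [(start, sum(vals) / len(vals)) for start, vals in sorted(buckets.items())]


# Six one-minute samples (timestamps in seconds) reduced to five-minute buckets.
raw = [(0, 10.0), (60, 20.0), (120, 30.0), (180, 40.0), (240, 50.0), (300, 70.0)]
print(downsample(raw, 300))  # [(0, 30.0), (300, 70.0)]
```

Six stored points become two, at the cost of losing the per-minute detail, which is usually an acceptable trade for old data.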

Grafana

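Grafana is a cross-platform, open-source metrics analytics and visualization tool. It queries the collected data, visualizes it, and sends timely notifications. It has six main features:

- Visualization: fast, flexible client-side charts. Panel plugins offer many ways to visualize metrics and logs, and the official library has a rich set of dashboard plugins such as heatmaps, line charts, and graphs.
- Data sources: Graphite, InfluxDB, OpenTSDB, Prometheus, Elasticsearch, CloudWatch, KairosDB, and others.
- Alerting: define alert rules visually for your most important metrics. Grafana evaluates them continuously and sends notifications through Slack, PagerDuty, and similar channels when data reaches a threshold.
- Mixed data sources: combine different data sources in the same chart. The data source can be specified per query, and custom data sources are supported.
- Annotations: annotate charts with rich events from different data sources. Hovering over an event shows its full metadata and tags.
- Filters: ad-hoc filters let you create new key/value filters on the fly, and they are applied automatically to every query that uses that data source.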

Deployment

Download and install

Link to the project files

There are five YAML files and two JSON files in total.

[root@vm-26-11 ~]# tree
├── kubernetes-dashboard
│   ├── grafana.yaml
│   ├── heapster-rbac.yaml
│   ├── heapster.yaml
│   ├── kubernetes-dashboard.yaml
│   └── influxdb.yaml

Check that the YAML files in the kubernetes-dashboard directory are correct (ignore the JSON files for now).
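
Before creating anything, a quick check that all five manifests are actually present can save a half-applied deployment. The sketch below assumes the directory layout shown in the tree output above; `missing_manifests` is a helper name I made up, and it only checks file names, not their contents.

```python
from pathlib import Path

# The five manifest names from the tree listing above.
EXPECTED = {
    "grafana.yaml",
    "heapster-rbac.yaml",
    "heapster.yaml",
    "kubernetes-dashboard.yaml",
    "influxdb.yaml",
}


def missing_manifests(directory):
    """Return the expected manifest names that are absent from directory."""
    root = Path(directory)
    present = {p.name for p in root.glob("*.yaml")} if root.is_dir() else set()
    return sorted(EXPECTED - present)


# An empty list means every expected file is there.
print(missing_manifests("kubernetes-dashboard"))
```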

[root@vm-26-11 ~]# kubectl create -f kubernetes-dashboard

kubernetes-dashboard URL:

http://nodeip:32666/

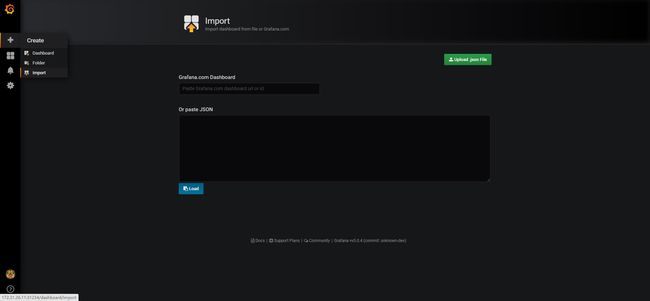

Configuring grafana

grafana URL: http://nodeip:31234/

Choose to add the dashboard templates (the JSON files downloaded above):

kubernetes-node-statistics_rev1.json

kubernetes-pod-statistics_rev1.json

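Troubleshooting

I ran into several problems; here is what I did to get past each of them.

kubernetes-dashboard

The dashboard has no access control enabled, so the only requirement is that the image can be pulled from within China. I used the Alibaba Cloud image here: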

registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.1

heapster

- Access control

E0409 06:05:27.448890 1 reflector.go:190] k8s.io/heapster/metrics/util/util.go:30: Failed to list *v1.Node: nodes is forbidden: User "system:serviceaccount:kube-system:heapster" cannot list nodes at the cluster scope

The error means the heapster service account lacks permission. Creating the resources in heapster-rbac.yaml fixes it.

- Port unreachable

E0302 06:11:05.004391 1 manager.go:101] Error in scraping containers from kubelet:172.16.8.12:10255: failed to get all container stats from Kubelet URL "http://172.16.8.12:10255/stats/container/": Post http://172.16.8.12:10255/stats/container/: dial tcp 172.16.8.12:10255: getsockopt: connection refused

The error means port 10255 is unreachable. 10255 is the kubelet's read-only HTTP port, which is not open on this cluster; the kubelet serves HTTPS on port 10250 by default.
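
A "connection refused" like this can be confirmed from any machine that can reach the node, without involving Heapster at all. Here is a minimal TCP probe as a sketch; `port_is_open` is my own helper, and the demo runs against a throwaway local listener so it works anywhere.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Demo against a temporary listener on the loopback interface.
with socket.socket() as server:
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    demo_port = server.getsockname()[1]
    print(port_is_open("127.0.0.1", demo_port))
```

On the cluster from the log above, the calls would be `port_is_open("172.16.8.12", 10255)` and `port_is_open("172.16.8.12", 10250)`. A successful TCP connect only shows that something is listening; it says nothing about TLS or authorization.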

Change heapster's

- --source=kubernetes:https://kubernetes.default

to

- --source=kubernetes:https://kubernetes.default?useServiceAccount=true&kubeletHttps=true&kubeletPort=10250&insecure=true

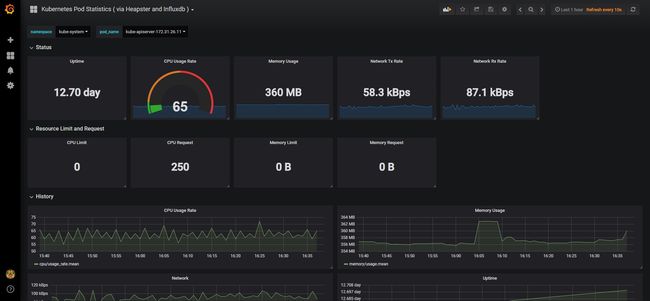
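
The `--source` value is just a URL with query parameters, so a typo in it is easy to make and hard to spot. The sketch below assembles the same string from its parts; `heapster_source` is a hypothetical helper, and the parameter names are the ones used in this article's heapster.yaml.

```python
from urllib.parse import urlencode


def heapster_source(api_server="https://kubernetes.default", kubelet_port=10250):
    """Build the value of Heapster's --source flag for an HTTPS kubelet."""
    params = {
        "useServiceAccount": "true",  # authenticate with the pod's service account
        "kubeletHttps": "true",       # talk to the kubelet over HTTPS
        "kubeletPort": str(kubelet_port),
        "insecure": "true",           # as I understand it, skips certificate verification
    }
    return f"--source=kubernetes:{api_server}?{urlencode(params)}"


print(heapster_source())
```

Since `insecure=true` trades certificate checking for convenience, I would treat it as acceptable for a load-testing cluster like this one rather than as a production setting.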

grafana

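After importing the two JSON files, the overall node and namespace resources were visible, but pod resources were not: the dashboard showed none for the pods in every namespace.

I found this strange, but I did not know this monitoring component well.

After a long search, I found this in kubernetes-pod-statistics_rev1.json: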

"query": "SHOW TAG VALUES FROM \"uptime\" WITH KEY=pod_name WHERE \"namespace_name\"='$ns'",

"refresh": 1,

"regex": "/^(.*)-\\d{9,}-\\w{5,}$/",

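I do not know why the author filters the pod query results a second time (I really don't),

but changing "regex": "/^(.*)-\\d{9,}-\\w{5,}$/" to "regex": "" and saving makes the pod resource monitoring show up.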
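
One plausible explanation for the empty pod list, which I have not verified against the template author's intent: the regex only accepts pod names whose middle segment is nine or more digits, the way older Deployments named their pods, while newer Kubernetes releases generate an alphanumeric pod-template-hash. The sketch below applies the same pattern with Python's `re` module; the pod names are made up for illustration.

```python
import re

# The filter from kubernetes-pod-statistics_rev1.json, without the JSON escaping.
POD_FILTER = re.compile(r"^(.*)-\d{9,}-\w{5,}$")

# Illustrative pod names (not taken from a real cluster).
pod_names = [
    "nginx-3926361459-x7k2p",  # all-digit hash segment
    "nginx-7bb7cd8db5-x7k2p",  # alphanumeric hash segment
    "etcd-master",             # static pod, no hash at all
]

for name in pod_names:
    match = POD_FILTER.match(name)
    print(name, "->", match.group(1) if match else None)
```

Only the first name matches and is reduced to `nginx`. If every pod in the cluster looks like the second or third name, the template variable ends up with no values, which would match the "none" I saw. Clearing the regex lists the raw pod names instead.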

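Summary

It took me nearly a full day to work through all of these pitfalls. Most of the Chinese-language articles I found were reposts with little substance; when it mattered, reading the official documentation was what uncovered the problems. In the end I still finished the task on time. Perfect!

Appendix

If the download is slow, you can paste the YAML files below directly.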

kubernetes-dashboard.yaml

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# Configuration to deploy release version of the Dashboard UI compatible with
# Kubernetes 1.8.
#
# Example usage: kubectl create -f
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    # Allows editing resource and makes sure it is created first.
    addonmanager.kubernetes.io/mode: EnsureExists
  name: kubernetes-dashboard-certs
  namespace: kube-system
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    # Allows editing resource and makes sure it is created first.
    addonmanager.kubernetes.io/mode: EnsureExists
  name: kubernetes-dashboard-key-holder
  namespace: kube-system
type: Opaque
---
# ------------------- Dashboard Service Account ------------------- #
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-admin
  namespace: kube-system
---
# ------------------- Dashboard Role & Role Binding ------------------- #
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard-admin
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: cluster-watcher
rules:
- apiGroups:
  - '*'
  resources:
  - '*'
  verbs:
  - 'get'
  - 'list'
- nonResourceURLs:
  - '*'
  verbs:
  - 'get'
  - 'list'
- apiGroups: [""]
  resources: ["secrets"]
  resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]
  verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  resourceNames: ["kubernetes-dashboard-settings"]
  verbs: ["get", "update"]
  # Allow Dashboard to get metrics from heapster.
- apiGroups: [""]
  resources: ["services"]
  resourceNames: ["heapster"]
  verbs: ["proxy"]
---
# ------------------- Dashboard Deployment ------------------- #
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
        seccomp.security.alpha.kubernetes.io/pod: 'docker/default'
    spec:
      priorityClassName: system-cluster-critical
      containers:
      - name: kubernetes-dashboard
        # image: registry.cn-beijing.aliyuncs.com/kubernetes-cn/kubernetes-dashboard-amd64:v1.10.1
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.1
        resources:
          #limits:
          #  cpu: 100m
          #  memory: 300Mi
          requests:
            cpu: 50m
            memory: 200Mi
        ports:
        - containerPort: 9090
          protocol: TCP
        args:
          #- --auto-generate-certificates
        volumeMounts:
        - name: kubernetes-dashboard-certs
          mountPath: /certs
        - name: tmp-volume
          mountPath: /tmp
        livenessProbe:
          httpGet:
            scheme: HTTP
            path: /
            port: 9090
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: kubernetes-dashboard-certs
        secret:
          secretName: kubernetes-dashboard-certs
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard-admin
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
---
# ------------------- Dashboard Service ------------------- #
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  ports:
  - port: 443
    targetPort: 9090
  selector:
    k8s-app: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-external
  namespace: kube-system
spec:
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 32666
  type: NodePort
  selector:
    k8s-app: kubernetes-dashboard

influxdb.yaml

# extensions/v1beta1 Deployments are still served by Kubernetes 1.15.
# From 1.16 on, use apps/v1 and add a spec.selector that matches the pod labels.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: monitoring-influxdb
  namespace: kube-system
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: influxdb
    spec:
      containers:
      - name: influxdb
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/heapster-influxdb-amd64:v1.5.2
        volumeMounts:
        - mountPath: /data
          name: influxdb-storage
      volumes:
      - name: influxdb-storage
        emptyDir: {}
---
apiVersion: v1
kind: Service
metadata:
  labels:
    task: monitoring
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-influxdb
  name: monitoring-influxdb
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 8086
    targetPort: 8086
    nodePort: 30444
    name: http
  selector:
    k8s-app: influxdb

heapster.yaml

# extensions/v1beta1 Deployments are still served by Kubernetes 1.15.
# From 1.16 on, use apps/v1 and add a spec.selector that matches the pod labels.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: heapster
  namespace: kube-system
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: heapster
    spec:
      serviceAccountName: heapster
      containers:
      - name: heapster
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/heapster-amd64:v1.5.4
        imagePullPolicy: IfNotPresent
        command:
        - /heapster
        - --source=kubernetes:https://kubernetes.default?useServiceAccount=true&kubeletHttps=true&kubeletPort=10250&insecure=true
        - --sink=influxdb:http://monitoring-influxdb:8086
---
apiVersion: v1
kind: Service
metadata:
  labels:
    task: monitoring
    # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
    # If you are NOT using this as an addon, you should comment out this line.
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: Heapster
  name: heapster
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 8082
  selector:
    k8s-app: heapster

heapster-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: heapster
  namespace: kube-system
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: heapster
subjects:
- kind: ServiceAccount
  name: heapster
  namespace: kube-system
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io

grafana.yaml

# extensions/v1beta1 Deployments are still served by Kubernetes 1.15.
# From 1.16 on, use apps/v1 and add a spec.selector that matches the pod labels.
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: kube-system
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: grafana
    spec:
      containers:
      - name: grafana
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/heapster-grafana-amd64:v5.0.4
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/ssl/certs
          name: ca-certificates
          readOnly: true
        - mountPath: /var
          name: grafana-storage
        env:
        - name: INFLUXDB_HOST
          value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT
          value: "3000"
          # The following env variables are required to make Grafana accessible via
          # the kubernetes api-server proxy. On production clusters, we recommend
          # removing these env variables, setup auth for grafana, and expose the grafana
          # service using a LoadBalancer or a public IP.
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          # If you're only using the API Server proxy, set this value instead:
          # value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy
          value: /
      volumes:
      - name: ca-certificates
        hostPath:
          path: /etc/ssl/certs
      - name: grafana-storage
        emptyDir: {}
---
apiVersion: v1
kind: Service
metadata:
  labels:
    # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
    # If you are NOT using this as an addon, you should comment out this line.
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  name: monitoring-grafana
  namespace: kube-system
spec:
  # In a production setup, we recommend accessing Grafana through an external Loadbalancer
  # or through a public IP.
  # type: LoadBalancer
  # You could also use NodePort to expose the service at a randomly-generated port
  type: NodePort
  ports:
  - port: 80
    targetPort: 3000
    nodePort: 31234
  selector:
    k8s-app: grafana
