[k8s-kubeadm cluster deployment] Deploying a k8s cluster on CentOS 7 with kubeadm 1.18.3

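There are currently two ways to install a k8s cluster. The first is the original approach, which installs the required components from binary packages or compiles them from source. It requires creating a CA and a number of certificates for the various components by hand, which is tedious, so it is not recommended for learning. The second is a simplified installation with an open-source installer. Several such tools are popular, and the most widely used is kubeadm, the official Kubernetes tool. This guide shows how to deploy a k8s cluster with kubeadm. The project is hosted on GitHub at https://github.com/kubernetes/kubeadm.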

I. Virtual machine configuration (same on all machines)

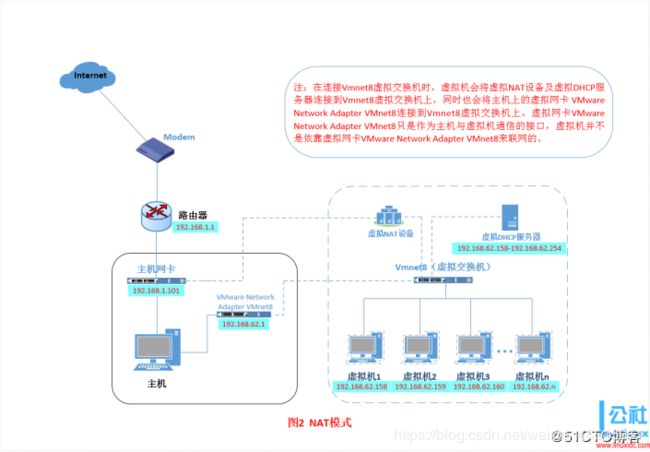

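The VMs in this guide are created with VMware. CentOS has good support in the k8s ecosystem, so the OS image is CentOS 7 from the Aliyun mirror site. The VM network is set to NAT mode, as shown in the figure above.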

II. Install docker, kubectl, kubeadm and kubelet (same on all machines)

From here on, all commands are run as root on the VMs.

- Install docker:

Import the docker-ce .repo file from the Aliyun mirror site:

cd /etc/yum.repos.d/

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Run yum repolist to check that the docker-ce repository has been imported:


Then run yum install -y docker-ce. Once it is installed, run the following so that docker can be used without sudo:

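groupadd -f docker # create the docker group (-f: succeed if it already exists, which is usual after installing docker-ce)

gpasswd -a ${USER} docker # add the user to the docker group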

Restart the docker service so the change takes effect:

systemctl restart docker

If the change still has not taken effect, log out and back in, or reboot the VM.

Enable docker to start on boot:

systemctl enable docker
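kubeadm init later warns (the IsDockerSystemdCheck line in its output) that docker uses the cgroupfs cgroup driver while systemd is recommended. The default works for this guide. If you would rather follow the recommendation, here is a minimal sketch that writes an /etc/docker/daemon.json containing only the cgroup-driver option. It assumes no daemon.json exists yet and refuses to overwrite one. Apply it before kubeadm init so that docker and the kubelet agree on the driver. The helper name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: write a docker daemon.json that switches the cgroup
# driver to systemd (the driver recommended by kubeadm's preflight check).
# Usage: write_docker_daemon_json [path]   (default: /etc/docker/daemon.json)
write_docker_daemon_json() {
    path="${1:-/etc/docker/daemon.json}"
    # Refuse to clobber an existing config; merge such files by hand instead.
    if [ -e "$path" ]; then
        echo "$path already exists; add exec-opts to it manually" >&2
        return 1
    fi
    mkdir -p "$(dirname "$path")"
    cat > "$path" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

After running write_docker_daemon_json, restart docker with systemctl restart docker. The Cgroup Driver line of docker info should then report systemd.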

- Install kubectl, kubeadm and kubelet:

Install them following the instructions on the Aliyun mirror site:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

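setenforce 0 # put SELinux into permissive mode (until the next reboot)

yum install -y kubelet-1.18.3 kubeadm-1.18.3 kubectl-1.18.3 # pin the version used in this guide

systemctl enable kubelet # start kubelet on boot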
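setenforce 0 only changes the running system, and the setting is lost on reboot. Here is a minimal sketch for making permissive mode persistent. It assumes the stock CentOS 7 /etc/selinux/config with a SELINUX=enforcing line and uses the same sed edit as the upstream kubeadm install docs. The helper name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: keep SELinux in permissive mode across reboots by
# rewriting the SELINUX= line of the config file (setenforce 0 is runtime only).
# Usage: persist_selinux_permissive [config]   (default: /etc/selinux/config)
persist_selinux_permissive() {
    cfg="${1:-/etc/selinux/config}"
    # Only an "enforcing" value is changed; "disabled" or "permissive" stay as they are.
    sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' "$cfg"
}
```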

III. Configure the installation environment

- Configure host names:

Change the hostnames of the three machines to master, node01 and node02 respectively, and use the same hosts file on all of them:

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.244.130 master

192.168.244.131 node01

192.168.244.132 node02

199.232.68.133 raw.githubusercontent.com # domain needed later during the installation
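Here is a minimal sketch of applying the name settings above on one machine. The helper names are hypothetical. hostnamectl is the standard systemd tool on CentOS 7, and the IP/name pairs are the ones used in this guide. The raw.githubusercontent.com line is left out because that domain's address can change. An entry is appended only if the exact line is missing, so the function can be re-run safely.

```shell
#!/bin/sh
# Hypothetical helpers for the host name configuration above.

# Set this machine's hostname (run with master, node01 or node02).
set_node_hostname() {
    hostnamectl set-hostname "$1"
}

# Append the cluster's name entries to a hosts file unless already present.
# Usage: add_cluster_hosts [hosts_file]   (default: /etc/hosts)
add_cluster_hosts() {
    hosts="${1:-/etc/hosts}"
    for entry in "192.168.244.130 master" \
                 "192.168.244.131 node01" \
                 "192.168.244.132 node02"; do
        # -x: whole-line match, -F: fixed string (dots are not regex wildcards)
        grep -qxF "$entry" "$hosts" || echo "$entry" >> "$hosts"
    done
}
```

For example, on the first machine run set_node_hostname master followed by add_cluster_hosts.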

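- Configure the system environment (same on all three machines):

Stop the firewall and disable it at boot; otherwise the worker nodes may be unable to join the cluster later: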

systemctl stop firewalld

systemctl disable firewalld

Edit /etc/sysconfig/kubelet so that it contains the following line. This stops kubelet from failing when swap is enabled:

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

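Check that /proc/sys/net/bridge/bridge-nf-call-iptables and /proc/sys/net/bridge/bridge-nf-call-ip6tables both contain 1. Otherwise, traffic crossing the Linux bridge bypasses iptables and the iptables rules that k8s sets up do not apply to it. kubeadm's preflight check also requires bridge-nf-call-iptables to be 1. If a file contains 0, set it to 1: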

echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

echo 1 > /proc/sys/net/bridge/bridge-nf-call-ip6tables
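The echo commands above take effect immediately but are lost on reboot. The two /proc files also exist only once the br_netfilter kernel module is loaded. Here is a minimal sketch of a persistent setup that follows the approach of the upstream kubeadm install docs: a drop-in file under /etc/sysctl.d plus a module list under /etc/modules-load.d. The file name k8s.conf and the helper name are assumptions.

```shell
#!/bin/sh
# Hypothetical helper: persist the bridge netfilter settings kubelet needs.
# Usage: write_k8s_net_config [root]   (default: empty, i.e. the real /etc;
#        another root directory is handy for testing)
write_k8s_net_config() {
    root="${1:-}"
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"
    # Load br_netfilter at boot so /proc/sys/net/bridge/* exists.
    echo br_netfilter > "$root/etc/modules-load.d/k8s.conf"
    # Let iptables see bridged IPv4 and IPv6 traffic.
    cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}
```

On a real node, follow write_k8s_net_config with modprobe br_netfilter and sysctl --system to apply the settings without rebooting.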

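kubeadm runs the k8s components as pods, i.e. containers, so it has to pull an image for each component. The official images, however, live in the k8s.gcr.io registry, which for reasons that cannot be described (ha) is unreachable. So we pull the images from the Aliyun mirror in advance and then retag them:

kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.18.3

Retag the images in bulk. Here XXX stands for the Aliyun repository used above (registry.aliyuncs.com/google_containers):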

docker images | grep XXX | awk '{print "docker tag", $1":"$2, $1":"$2}' | sed -e 's#XXX#k8s.gcr.io#2' | sh -x

Remove the old Aliyun tags. The images themselves stay, because they now also carry the k8s.gcr.io tags:

docker images | grep XXX | awk '{print "docker rmi", $1":"$2}' | sh -x
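The two pipelines above treat XXX as a regular expression in grep and sed, so the dots in the registry name match any character. They also rely on sed's "2" flag to rewrite only the second occurrence on each line. Here is a sketch of the same retag step that matches the repository prefix literally instead. It assumes the Aliyun repository used above and docker's --format option for docker images. The helper name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read image references (repo:tag, one per line) on stdin
# and print a "docker tag" command for each one under the source repository.
# Usage: retag_cmds <source-repo> <target-repo>
retag_cmds() {
    src="$1"
    dst="$2"
    while IFS= read -r ref; do
        case "$ref" in
            # The quoted "$src" is matched literally; only the /* part is a glob.
            "$src"/*) printf 'docker tag %s %s\n' "$ref" "$dst/${ref#"$src"/}" ;;
        esac
    done
}
```

To reproduce the retag step, pipe docker images --format '{{.Repository}}:{{.Tag}}' into retag_cmds registry.aliyuncs.com/google_containers k8s.gcr.io | sh -x.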

Then run kubeadm config images list to see which image versions are required, and check that they match the images pulled locally. My list is:

k8s.gcr.io/kube-apiserver:v1.18.3

k8s.gcr.io/kube-controller-manager:v1.18.3

k8s.gcr.io/kube-scheduler:v1.18.3

k8s.gcr.io/kube-proxy:v1.18.3

k8s.gcr.io/pause:3.2

k8s.gcr.io/etcd:3.4.3-0

k8s.gcr.io/coredns:1.6.7
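The comparison can also be scripted. Here is a minimal sketch that assumes both lists are first saved to files. The file names and the helper name are hypothetical. The function prints every required image that has no exact match among the local ones, so empty output means nothing is missing.

```shell
#!/bin/sh
# Hypothetical helper: list images from <required-file> that are absent from
# <local-file>. Both files hold one repo:tag per line.
# Usage: missing_images <required-file> <local-file>
missing_images() {
    # -v: invert, -x: whole line, -F: fixed strings, -f: patterns from file
    grep -vxF -f "$2" "$1"
    # grep exits 1 when nothing is printed (all images present); treat that
    # as success and only propagate real errors (exit status 2).
    [ $? -le 1 ]
}
```

Fill the two files with kubeadm config images list --kubernetes-version v1.18.3 > required.txt and docker images --format '{{.Repository}}:{{.Tag}}' > local.txt, then run missing_images required.txt local.txt.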

IV. Initialize the cluster

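On the master node, run the init command and ignore the preflight error about swap still being on. kubelet 1.18 does not support swap, but kubelet was configured above with --fail-swap-on=false, so this error can simply be ignored: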

kubeadm init --apiserver-advertise-address=192.168.244.130 --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16 --ignore-preflight-errors=Swap

Output:

W0615 13:26:55.076198 2603 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.3

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.10.0.1 192.168.244.130]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.244.130 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.244.130 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0615 13:27:03.346742 2603 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W0615 13:27:03.350403 2603 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 20.006546 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: as91zi.upv68j8n1kiatpoe

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.244.130:6443 --token as91zi.upv68j8n1kiatpoe \

--discovery-token-ca-cert-hash sha256:2ece5b293fde50aa51a748467c9e4de449003322e3c7fe1e2847b7fa635eb32d
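The kubeadm init options above can also be kept in a configuration file instead of being passed as flags, which makes them easier to review and reuse. Here is a minimal sketch that assumes the kubeadm.k8s.io/v1beta2 config API read by kubeadm 1.18. The file name and helper name are hypothetical. Use it with kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=Swap, since kubeadm accepts the preflight flag alongside --config. ClusterConfiguration also has an imageRepository field. Pointing it at registry.aliyuncs.com/google_containers is an alternative to retagging the images, though this guide does not use it.

```shell
#!/bin/sh
# Hypothetical helper: write a kubeadm config equivalent to the init flags
# used above (v1beta2 config API, as read by kubeadm 1.18).
# Usage: write_kubeadm_config [path]   (default: ./kubeadm-config.yaml)
write_kubeadm_config() {
    out="${1:-kubeadm-config.yaml}"
    cat > "$out" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.244.130   # --apiserver-advertise-address
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.18.3
networking:
  serviceSubnet: 10.10.0.0/16         # --service-cidr
  podSubnet: 10.122.0.0/16            # --pod-network-cidr
EOF
}
```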

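After init succeeds, run the following commands as instructed in its output:

mkdir -p $HOME/.kube # create the kubectl config directory

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config # kubeconfig that kubectl uses to talk to the cluster (CA certificate, API server address and port, credentials)

chown $(id -u):$(id -g) $HOME/.kube/config # make the current user the owner of the config file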

Check the current nodes:

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady master 7m v1.18.3

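The status is NotReady because no pod network has been installed yet. The pod network is independent of k8s itself and has to be installed separately. This guide uses flannel, whose documentation installs it by applying kube-flannel.yml directly from raw.githubusercontent.com.

However, raw.githubusercontent.com cannot be reached directly from within China. Look up its IP address on ipaddress.com and add it to the hosts file, as shown in the hosts file in the first subsection of Part III. In addition, the default flannel manifest pulls its image from quay.io, which fails from within China, so the flannel deployment fails. A working approach is: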

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml # download the manifest first

sed -i 's#quay.io#quay-mirror.qiniu.com#g' kube-flannel.yml # switch the image registry to a mirror

kubectl apply -f kube-flannel.yml # deploy the network

Output:

[root@master ~]# kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created
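One point to check when adapting this step is that the upstream kube-flannel.yml ships with "Network": "10.244.0.0/16" in its net-conf.json, while the cluster above was initialized with --pod-network-cidr=10.122.0.0/16. The pods here did receive 10.122.x.x addresses. Even so, the kubeadm documentation of that era told flannel users to pass --pod-network-cidr=10.244.0.0/16, i.e. to keep the two values in agreement. Here is a sketch that rewrites the manifest's Network value before kubectl apply. The helper name is hypothetical, and GNU sed -i is assumed.

```shell
#!/bin/sh
# Hypothetical helper: set the "Network" CIDR in a kube-flannel.yml manifest
# so that it matches kubeadm init --pod-network-cidr.
# Usage: set_flannel_network <manifest> <cidr>
set_flannel_network() {
    manifest="$1"
    cidr="$2"
    # Replace whatever CIDR follows "Network": inside net-conf.json.
    sed -i "s#\"Network\": \"[0-9./]*\"#\"Network\": \"$cidr\"#" "$manifest"
}
```

Run set_flannel_network kube-flannel.yml 10.122.0.0/16 after downloading the manifest and before kubectl apply.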

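After a while, the flannel image has been pulled into the local image store. Checking the node status again with kubectl get nodes shows that the node has changed to Ready. Checking the pods with kubectl get pods -n kube-system gives: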

NAME READY STATUS RESTARTS AGE

coredns-66bff467f8-9s8wl 1/1 Running 0 28m

coredns-66bff467f8-z5cnj 1/1 Running 0 28m

etcd-master 1/1 Running 0 28m

kube-apiserver-master 1/1 Running 0 28m

kube-controller-manager-master 1/1 Running 0 28m

kube-flannel-ds-amd64-6hz6l 1/1 Running 0 2m2s

kube-proxy-kmstv 1/1 Running 0 28m

kube-scheduler-master 1/1 Running 0 28m

The -n flag specifies the namespace. kubectl get namespace lists the existing namespaces:

[root@master ~]# kubectl get namespace

NAME STATUS AGE

default Active 30m

kube-node-lease Active 30m

kube-public Active 30m

kube-system Active 30m

V. Join the worker nodes to the cluster

On node01 and node02, run the join command that kubeadm init printed on the master, adding the option to ignore the Swap error:

kubeadm join 192.168.244.130:6443 --token as91zi.upv68j8n1kiatpoe \

--discovery-token-ca-cert-hash sha256:2ece5b293fde50aa51a748467c9e4de449003322e3c7fe1e2847b7fa635eb32d --ignore-preflight-errors=Swap

Output on a worker node:

W0615 14:17:26.371105 3606 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.18" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
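The token in the join command is a bootstrap token that expires after 24 hours by default. If it has expired, running kubeadm token create --print-join-command on the master prints a fresh join command. The --discovery-token-ca-cert-hash value can also be recomputed from the cluster CA. The sketch below wraps the openssl pipeline given in the kubeadm join documentation. The function name is hypothetical, and it assumes an RSA CA key, which is kubeadm's default.

```shell
#!/bin/sh
# Hypothetical helper: compute the sha256 hash of a CA certificate's public key
# in the form expected by kubeadm join --discovery-token-ca-cert-hash.
# Usage: ca_cert_hash [ca.crt]   (default: /etc/kubernetes/pki/ca.crt)
ca_cert_hash() {
    crt="${1:-/etc/kubernetes/pki/ca.crt}"
    # Extract the public key, convert it to DER, hash it, keep only the hex digest.
    hash=$(openssl x509 -pubkey -noout -in "$crt" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //')
    printf 'sha256:%s\n' "$hash"
}
```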

On the master, check the node status with kubectl get nodes. Then run kubectl get pods -n kube-system -o wide to see details of all pods:

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-66bff467f8-9s8wl 1/1 Running 0 52m 10.122.0.2 master <none> <none>

coredns-66bff467f8-z5cnj 1/1 Running 0 52m 10.122.0.3 master <none> <none>

etcd-master 1/1 Running 0 53m 192.168.244.130 master <none> <none>

kube-apiserver-master 1/1 Running 0 53m 192.168.244.130 master <none> <none>

kube-controller-manager-master 1/1 Running 0 53m 192.168.244.130 master <none> <none>

kube-flannel-ds-amd64-6hz6l 1/1 Running 0 26m 192.168.244.130 master <none> <none>

kube-flannel-ds-amd64-knfqj 1/1 Running 0 78s 192.168.244.132 node02 <none> <none>

kube-flannel-ds-amd64-nvl2b 1/1 Running 0 2m51s 192.168.244.131 node01 <none> <none>

kube-proxy-d2nj9 1/1 Running 0 78s 192.168.244.132 node02 <none> <none>

kube-proxy-kmstv 1/1 Running 0 52m 192.168.244.130 master <none> <none>

kube-proxy-lgrxh 1/1 Running 0 2m51s 192.168.244.131 node01 <none> <none>

kube-scheduler-master 1/1 Running 0 53m 192.168.244.130 master <none> <none>
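With more pods, scanning listings like the one above by eye gets tedious. Here is a small sketch that filters kubectl get pods output and prints the pods that are not Running or not fully ready. It assumes the default column order shown above (NAME READY STATUS ...), which also holds for -o wide. The helper name is hypothetical. Pods in a Completed state would also be listed.

```shell
#!/bin/sh
# Hypothetical helper: read "kubectl get pods" output on stdin and print the
# names of pods whose STATUS is not Running or whose READY count is incomplete.
# Usage: kubectl get pods -n kube-system | unhealthy_pods
unhealthy_pods() {
    awk 'NR > 1 {
        split($2, ready, "/")    # READY column, e.g. "1/1"
        if ($3 != "Running" || ready[1] != ready[2]) print $1
    }'
}
```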

Finally, the cluster can be torn down by running kubeadm reset on each worker and on the master.
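kubeadm reset does not clean up everything. Its own output notes that the CNI configuration in /etc/cni/net.d, the iptables rules and the $HOME/.kube/config file are left for you to remove. Here is a dry-run sketch that only prints a teardown plan for this guide's node names, draining and deleting each worker from the master first. The helper name is hypothetical. The drain flags are the ones kubectl 1.18 accepts; --delete-local-data was later renamed --delete-emptydir-data.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the commands to tear down the cluster.
# Usage: teardown_plan node01 node02
teardown_plan() {
    echo "# on the master:"
    for node in "$@"; do
        echo "kubectl drain $node --delete-local-data --force --ignore-daemonsets"
        echo "kubectl delete node $node"
    done
    echo "# then on every node (workers first, master last):"
    echo "kubeadm reset -f"
    echo "rm -rf /etc/cni/net.d \$HOME/.kube/config"
    echo "iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X"
}
```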