[Hadoop] 3. Hadoop Serialization and a Serialization Example

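Why serialize

Objects live in memory, so they are lost when the machine shuts down or loses power. An object can also be used only by the local process that created it; it cannot be sent as-is to another computer over the network. Serialization solves both problems: it lets objects be stored and sent to remote machines.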

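What is serialization

Serialization: converting an in-memory object into a byte sequence (or another data transfer format) so that it can be stored (persisted) and transmitted over the network.

Deserialization: converting a received byte sequence (or another data transfer format), or data persisted on disk, back into an in-memory object.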
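The two directions can be sketched with nothing but java.io. This is only an illustration: RoundTripDemo, its method names, and the sample values are made up for the example and are not part of Hadoop.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class RoundTripDemo {

    // Serialization: in-memory values -> byte sequence.
    static byte[] serialize(long id, String name) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            DataOutputStream out = new DataOutputStream(buffer);
            out.writeLong(id);   // 8 bytes
            out.writeUTF(name);  // 2-byte length prefix + encoded characters
            return buffer.toByteArray();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Deserialization: byte sequence -> in-memory values, read in the same order.
    static String deserialize(byte[] bytes) {
        try {
            DataInputStream in = new DataInputStream(new ByteArrayInputStream(bytes));
            long id = in.readLong();
            String name = in.readUTF();
            return id + ":" + name;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        byte[] bytes = serialize(7L, "hadoop");
        // prints "16 bytes -> 7:hadoop"
        System.out.println(bytes.length + " bytes -> " + deserialize(bytes));
    }
}
```

The byte array could just as well be written to a file or a socket; the in-memory buffer only keeps the sketch self-contained.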

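Why not use Java serialization

Java's built-in serialization (Serializable) is a heavyweight framework. A serialized object carries a lot of extra information (checksums, headers, the class hierarchy, and so on), which makes it inefficient to send over the network. Hadoop therefore developed its own serialization mechanism (Writable), which is compact and efficient.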
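To get a feel for the overhead, the sketch below serializes the same two long values twice: once with Java's ObjectOutputStream and once by writing only the field values through DataOutputStream, which is roughly what a Writable does. SizeComparison and Pair are hypothetical names used only for this comparison; the exact Java-serialized size depends on the class, so the code prints it rather than assuming a number.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;

class SizeComparison {

    // Hypothetical record with two long fields.
    static class Pair implements Serializable {
        private static final long serialVersionUID = 1L;
        final long first;
        final long second;

        Pair(long first, long second) {
            this.first = first;
            this.second = second;
        }
    }

    // Size with Java's built-in object serialization (stream header, class descriptor, fields).
    static int javaSerializedSize(Pair pair) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
                out.writeObject(pair);
            }
            return buffer.size();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Size when only the field values are written, Writable-style.
    static int rawFieldSize(Pair pair) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            DataOutputStream out = new DataOutputStream(buffer);
            out.writeLong(pair.first);
            out.writeLong(pair.second);
            return buffer.size();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Pair pair = new Pair(2481L, 24681L);
        System.out.println("Java serialization: " + javaSerializedSize(pair) + " bytes");
        System.out.println("Raw fields only:    " + rawFieldSize(pair) + " bytes");
    }
}
```

The raw form is exactly 16 bytes (two 8-byte longs); the Java-serialized form is larger because it also describes the class.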

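Why serialization matters to Hadoop

Hadoop needs serialization whenever nodes in a cluster communicate or make RPC calls, and that serialization has to be fast, produce small output, and use little bandwidth. This is why understanding Hadoop's serialization mechanism is essential.

Serialization and deserialization show up in two areas of distributed data processing: interprocess communication and persistent storage. Communication between Hadoop nodes is implemented with remote procedure calls (RPC), so an RPC serialization format should have the following properties:

- Compact: a compact format makes the best use of network bandwidth, the scarcest resource in a data center.

- Fast: interprocess communication forms the backbone of a distributed system, so keeping serialization and deserialization overhead as low as possible is a basic requirement.

- Extensible: protocols change over time to meet new requirements, so it should be straightforward to evolve the protocol in a controlled way for clients and servers, and the existing serialization format should be able to carry the new protocol messages.

- Interoperable: clients and servers written in different languages should be able to interact.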

Common serialization data types

| Java type | Hadoop Writable type |
|---|---|
| boolean | BooleanWritable |
| byte | ByteWritable |
| int | IntWritable |
| float | FloatWritable |
| long | LongWritable |
| double | DoubleWritable |
| String | Text |
| Map | MapWritable |
| array | ArrayWritable |

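Implementing the serialization interface (Writable) in a custom bean

Making a custom bean serializable takes the following steps:

- The bean must implement the Writable interface.

- Deserialization calls the no-argument constructor via reflection, so the bean must have one.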

public Bean(){

super();

}

- Override the serialization method.

@Override

public void write(DataOutput out) throws IOException{

out.writeLong(param1);

out.writeLong(param2);

// For a String field, use writeUTF

out.writeUTF(param3);

}

- Override the deserialization method.

@Override

public void readFields(DataInput in) throws IOException{

param1 = in.readLong();

param2 = in.readLong();

// Read the String field

param3 = in.readUTF();

}

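- The deserialization order must exactly match the serialization order. The byte stream behaves like a queue: fields come out in the order they went in (first in, first out).

- To write the result to a file, override toString(). Separating the fields with "\t" makes the output easy to process later.

- To use the custom bean as a key, it must also implement Comparable (in Hadoop, through the WritableComparable interface), because the Shuffle phase of the MapReduce framework requires keys to be sortable.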

@Override

public int compareTo(Bean o){

// Descending order, largest first; returns 0 when the values are equal

return Long.compare(o.getParam1(), this.param1);

}
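Putting the steps above together, here is a minimal sketch that runs without Hadoop on the classpath. SimpleWritable is a stand-in defined here that only mirrors the two methods of Hadoop's Writable; DemoBean, BeanDemo, and the sample values are likewise hypothetical. In a real job the bean would implement org.apache.hadoop.io.Writable (or WritableComparable for keys) instead.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Stand-in for Hadoop's Writable (assumption: same two method signatures).
interface SimpleWritable {
    void write(DataOutput out) throws IOException;
    void readFields(DataInput in) throws IOException;
}

class DemoBean implements SimpleWritable, Comparable<DemoBean> {
    private long param1;
    private long param2;
    private String param3 = "";

    DemoBean() { }  // no-argument constructor, needed for reflective creation

    DemoBean(long param1, long param2, String param3) {
        this.param1 = param1;
        this.param2 = param2;
        this.param3 = param3;
    }

    long getParam1() { return param1; }

    @Override
    public void write(DataOutput out) throws IOException {
        out.writeLong(param1);
        out.writeLong(param2);
        out.writeUTF(param3);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        // Same order as write(): the stream is consumed first in, first out.
        param1 = in.readLong();
        param2 = in.readLong();
        param3 = in.readUTF();
    }

    @Override
    public int compareTo(DemoBean o) {
        return Long.compare(o.param1, this.param1);  // descending by param1
    }

    @Override
    public String toString() {
        return param1 + "\t" + param2 + "\t" + param3;
    }
}

class BeanDemo {
    // Serializes a bean to bytes and rebuilds a fresh instance from them.
    static DemoBean copyViaBytes(DemoBean source) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            source.write(new DataOutputStream(buffer));
            DemoBean target = new DemoBean();
            target.readFields(new DataInputStream(new ByteArrayInputStream(buffer.toByteArray())));
            return target;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(copyViaBytes(new DemoBean(10L, 20L, "abc")));
        List<DemoBean> beans = new ArrayList<>(Arrays.asList(
                new DemoBean(1L, 0L, "a"), new DemoBean(3L, 0L, "c"), new DemoBean(2L, 0L, "b")));
        Collections.sort(beans);
        System.out.println(beans.get(0).getParam1());  // largest param1 comes first
    }
}
```

If readFields read param2 before param1, the code would still run but the two values would silently swap, which is why the order rule matters.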

Serialization example

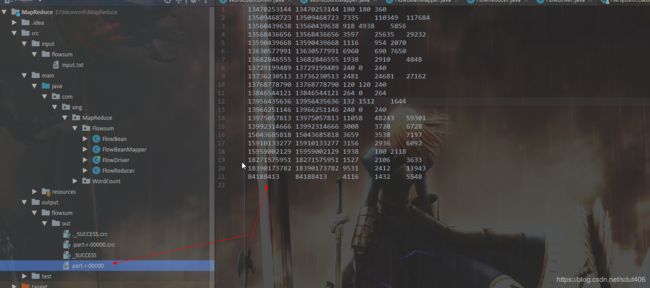

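Requirement: for each phone number, compute the total upstream traffic, the total downstream traffic, and the overall total.

Columns: ID, phone number, IP address, domain (absent in some rows), upstream traffic, downstream traffic, status code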

1 13736230513 192.196.100.1 www.atguigu.com 2481 24681 200

2 13846544121 192.196.100.2 264 0 200

3 13956435636 192.196.100.3 132 1512 200

4 13966251146 192.168.100.1 240 0 404

5 18271575951 192.168.100.2 www.atguigu.com 1527 2106 200

6 84188413 192.168.100.3 www.atguigu.com 4116 1432 200

7 13590439668 192.168.100.4 1116 954 200

8 15910133277 192.168.100.5 www.hao123.com 3156 2936 200

9 13729199489 192.168.100.6 240 0 200

10 13630577991 192.168.100.7 www.shouhu.com 6960 690 200

11 15043685818 192.168.100.8 www.baidu.com 3659 3538 200

12 15959002129 192.168.100.9 www.atguigu.com 1938 180 500

13 13560439638 192.168.100.10 918 4938 200

14 13470253144 192.168.100.11 180 180 200

15 13682846555 192.168.100.12 www.qq.com 1938 2910 200

16 13992314666 192.168.100.13 www.gaga.com 3008 3720 200

17 13509468723 192.168.100.14 www.qinghua.com 7335 110349 404

18 18390173782 192.168.100.15 www.sogou.com 9531 2412 200

19 13975057813 192.168.100.16 www.baidu.com 11058 48243 200

20 13768778790 192.168.100.17 120 120 200

21 13568436656 192.168.100.18 www.alibaba.com 2481 24681 200

22 13568436656 192.168.100.19 1116 954 200
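Before looking at the MapReduce code, it may help to see the intended computation in plain Java. The sketch below is not the job itself; FlowTotals is a hypothetical helper, and it splits on whitespace because that is how the sample appears on this page (the actual input file is assumed to be tab-separated). Since the domain column is sometimes missing, upstream and downstream are taken by counting from the end of the row.

```java
import java.util.Map;
import java.util.TreeMap;

class FlowTotals {

    // Returns phone -> {upstream total, downstream total, overall total}.
    static Map<String, long[]> sumByPhone(String[] lines) {
        Map<String, long[]> totals = new TreeMap<>();
        for (String line : lines) {
            String[] fields = line.trim().split("\\s+");
            String phone = fields[1];
            // Count from the end so rows with and without a domain both work.
            long up = Long.parseLong(fields[fields.length - 3]);
            long down = Long.parseLong(fields[fields.length - 2]);
            long[] t = totals.computeIfAbsent(phone, p -> new long[3]);
            t[0] += up;
            t[1] += down;
            t[2] += up + down;
        }
        return totals;
    }

    public static void main(String[] args) {
        // Rows 21 and 22 of the sample share the same phone number.
        String[] lines = {
            "21 13568436656 192.168.100.18 www.alibaba.com 2481 24681 200",
            "22 13568436656 192.168.100.19 1116 954 200"
        };
        for (Map.Entry<String, long[]> e : sumByPhone(lines).entrySet()) {
            long[] t = e.getValue();
            // prints "13568436656	3597	25635	29232" (tab-separated)
            System.out.println(e.getKey() + "\t" + t[0] + "\t" + t[1] + "\t" + t[2]);
        }
    }
}
```

In the MapReduce version, the grouping by phone number is done by the shuffle, and the summing happens in the reducer.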

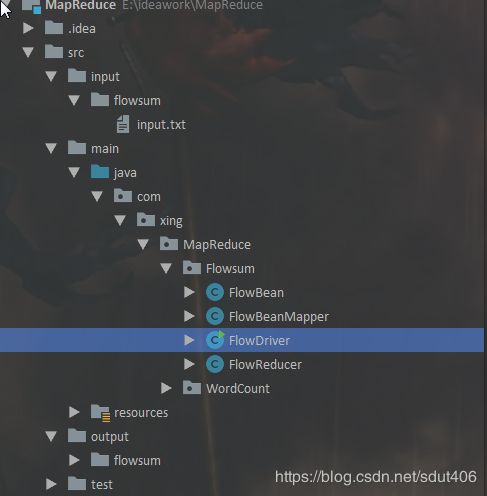

package com.xing.MapReduce.Flowsum;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class FlowBean implements Writable {

private long upFlow;

private long downFlow;

private long sumFlow;

private String phone;

public FlowBean(){

super();

}

public FlowBean(long upFlow,long downFlow,String phone){

super();

this.upFlow = upFlow;

this.downFlow = downFlow;

this.phone = phone;

this.sumFlow = upFlow + downFlow;

}

// Despite its name, this sets every field at once, so the mapper can reuse a single instance

public void setUpFlow(long upFlow,long downFlow,String phone) {

this.upFlow = upFlow;

this.downFlow = downFlow;

this.phone = phone;

this.sumFlow = upFlow + downFlow;

}

public long getUpFlow() {

return upFlow;

}

public long getDownFlow() {

return downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public String getPhone() {

return phone;

}

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

// writeUTF stores a length prefix, so readUTF can recover the whole string

dataOutput.writeUTF(phone);

}

@Override

public void readFields(DataInput dataInput) throws IOException {

// Read the fields in exactly the order they were written

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

this.phone = dataInput.readUTF();

}

@Override

public String toString() {

return phone + "\t" + upFlow + "\t" + downFlow + "\t" + sumFlow;

}

}
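A note on the String field: the write and read calls for phone have to be a matched pair. The sketch below, using only java.io, contrasts writeBytes followed by readByte (which loses the string) with writeUTF followed by readUTF (which restores it). StringFieldDemo and its method names are made up for this illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class StringFieldDemo {

    // Mismatched pair: writeBytes emits one byte per character with no length,
    // and readByte then consumes only the first of those bytes.
    static String writeBytesThenReadByte(String value) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            new DataOutputStream(buffer).writeBytes(value);
            DataInputStream in = new DataInputStream(new ByteArrayInputStream(buffer.toByteArray()));
            return String.valueOf(in.readByte());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Matched pair: writeUTF stores a 2-byte length followed by the characters,
    // so readUTF knows exactly how much to read back.
    static String writeUTFThenReadUTF(String value) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            new DataOutputStream(buffer).writeUTF(value);
            DataInputStream in = new DataInputStream(new ByteArrayInputStream(buffer.toByteArray()));
            return in.readUTF();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        String phone = "13736230513";
        System.out.println(writeBytesThenReadByte(phone));  // prints "49", the byte value of '1'
        System.out.println(writeUTFThenReadUTF(phone));     // prints "13736230513"
    }
}
```

With the mismatched pair, the leftover bytes also stay in the stream, so whatever is deserialized next would start reading from the wrong position.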

FlowBeanMapper:

package com.xing.MapReduce.Flowsum;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowBeanMapper extends Mapper<LongWritable,Text,Text,FlowBean> {

FlowBean v = new FlowBean();

Text k = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// Split the line into fields; -1 keeps empty fields

String[] strings = value.toString().split("\t",-1);

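// Extract the fields we need; traffic columns are indexed from the end because the domain column may be missing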

String phone = strings[1];

long up = Long.parseLong(strings[strings.length-3]);

long down = Long.parseLong(strings[strings.length-2]);

k.set(phone);

v.setUpFlow(up,down ,phone );

// Emit the key/value pair

context.write(k,v);

}

}
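The indexing in the mapper is worth a closer look. Some rows have a domain column and some do not, so the upstream and downstream values are taken relative to the end of the array. The sketch below isolates that parsing step in plain Java; LineParsing is a hypothetical helper, and the tab-separated sample rows are assumptions about how the input file is laid out.

```java
class LineParsing {

    // Returns {phone, upstream, downstream} for one tab-separated input row.
    static String[] parse(String line) {
        // The -1 limit keeps empty fields instead of dropping them.
        String[] fields = line.split("\t", -1);
        return new String[] {
            fields[1],
            fields[fields.length - 3],
            fields[fields.length - 2]
        };
    }

    public static void main(String[] args) {
        String withDomain = "1\t13736230513\t192.196.100.1\twww.atguigu.com\t2481\t24681\t200";
        String withoutDomain = "2\t13846544121\t192.196.100.2\t264\t0\t200";
        String emptyDomain = "2\t13846544121\t192.196.100.2\t\t264\t0\t200";
        for (String line : new String[] { withDomain, withoutDomain, emptyDomain }) {
            String[] parsed = parse(line);
            System.out.println(parsed[0] + " up=" + parsed[1] + " down=" + parsed[2]);
        }
    }
}
```

All three row shapes yield the same columns, whether the domain is present, missing entirely, or present as an empty field.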

FlowReducer:

package com.xing.MapReduce.Flowsum;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<Text,FlowBean,Text ,FlowBean> {

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

long upsum = 0L;

long downsum = 0L;

// Sum the upstream and downstream traffic for this phone number

for (FlowBean value : values) {

upsum += value.getUpFlow();

downsum += value.getDownFlow();

}

FlowBean flowBean = new FlowBean(upsum, downsum,key.toString());

context.write(key,flowBean);

}

}

FlowDriver:

package com.xing.MapReduce.Flowsum;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

// Local Windows development setup; adjust these paths for your environment

System.setProperty("hadoop.home.dir", "E:\\hadoop-2.7.1");

String in = "E:\\ideawork\\MapReduce\\src\\input\\flowsum\\input.txt";

String out = "E:\\ideawork\\MapReduce\\src\\output\\flowsum\\out";

Path inPath = new Path(in);

Path outPath = new Path(out);

Configuration configuration = new Configuration();

FileSystem fs = FileSystem.get(configuration);

// Check preconditions: the input path must exist and be a file

if (!fs.exists(inPath) || !fs.isFile(inPath)){

System.out.println(" not exists infile");

System.exit(-1);

}

if (fs.exists(outPath)){

// true means recursive: delete the directory together with its contents

boolean rel = fs.delete(outPath,true);

if (rel){

System.out.println("success delete outfile");

} else {

System.err.println("error delete outfile ");

System.exit(-1);

}

}

Job job = Job.getInstance(configuration);

job.setJobName("flowsnum");

job.setMapperClass(FlowBeanMapper.class);

job.setReducerClass(FlowReducer.class);

job.setJarByClass(FlowDriver.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(FlowBean.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

FileInputFormat.setInputPaths(job,new Path(in));

FileOutputFormat.setOutputPath(job, new Path(out));

boolean rel = job.waitForCompletion(true);

// Report the result and pass it on through the process exit code

System.out.println(rel ? "success" : "failed");

System.exit(rel ? 0 : 1);

}

}