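Training a CNN with TensorFlow to recognize CAPTCHAs (with SVM + OCR)

Environment: Python 3.6 + Windows 10 x64 + tensorflow-gpu 1.11.0

The CAPTCHA of the academic affairs system at Xiamen University Tan Kah Kee College is used as the example.

Sample: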

![]()

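Image preprocessing

- Read the image directly as grayscale with OpenCV-Python

- Apply global Otsu binarization

- Remove noise specks with a DFS (depth-first search) over connected pixels

- Split the letters with the projection method

- Pad each letter to a uniform 16*16 with cv2.copyMakeBorder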
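The noise-removal step in the list above can also be written without recursion, which avoids Python's recursion limit on larger blobs. The following is only a sketch under assumptions: `remove_small_regions` is a hypothetical helper (not part of the post's code), it treats 0 as black and 255 as white like the binarized images here, and it uses 4-connectivity.

```python
import numpy as np

def remove_small_regions(img, min_area):
    """Turn black (0) 4-connected regions smaller than min_area white (255)."""
    out = img.copy()
    h, w = out.shape
    seen = np.zeros((h, w), dtype=bool)
    for r0 in range(h):
        for c0 in range(w):
            if out[r0, c0] != 0 or seen[r0, c0]:
                continue
            # Collect one connected region with an explicit stack (iterative DFS)
            stack = [(r0, c0)]
            seen[r0, c0] = True
            region = []
            while stack:
                r, c = stack.pop()
                region.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    inside = 0 <= nr < h and 0 <= nc < w
                    if inside and not seen[nr, nc] and out[nr, nc] == 0:
                        seen[nr, nc] = True
                        stack.append((nr, nc))
            # Regions below the area threshold are treated as noise
            if len(region) < min_area:
                for r, c in region:
                    out[r, c] = 255
    return out

demo = np.full((5, 6), 255, dtype=np.uint8)
demo[1:4, 1:3] = 0   # a 3x2 block of 6 black pixels (kept)
demo[0, 5] = 0       # an isolated black pixel (removed as noise)
cleaned = remove_small_regions(demo, min_area=5)
```

The input array is left untouched and a cleaned copy is returned, which makes it easy to compare the image before and after.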

import cv2

# The CAPTCHA alphabet has no I, O or Q, which leaves 23 letters
word_num = 'ABCDEFGHJKLMNPRSTUVWXYZ'
word_num = list(word_num)
word_number = {}  # class index -> letter
for i in range(len(word_num)):
    word_number[i] = word_num[i]

def process(img, min_area):
    _, img = cv2.threshold(img, 0, 255, cv2.THRESH_OTSU)  # global Otsu binarization
    img = clear_background(img, min_area)  # remove noise specks
    return img

def mark_clear_area(img, data, col, row, dire, flag):
    # Depth-first search over connected black pixels.
    # flag=False: mark the region with 127 and count its pixels.
    # flag=True:  paint a previously marked region white.
    # dire names the direction that leads back to the caller, so it is skipped:
    #   dire = 1 = 0001 is up
    #   dire = 2 = 0010 is down
    #   dire = 4 = 0100 is left
    #   dire = 8 = 1000 is right
    # "Up" here means row + 1, i.e. the next row down in image coordinates.
    if row >= img.shape[0] or col >= img.shape[1] or col < 0 or row < 0:
        return data
    if not flag:
        if img[row, col] == 0:
            img[row, col] = 127  # mark the pixel
            data += 1  # number of connected pixels so far
            if dire & 1 != 1:
                data = mark_clear_area(img, data, col, row + 1, 2, flag)  # search "up" (row + 1)
            if dire & 8 != 8:
                data = mark_clear_area(img, data, col + 1, row, 4, flag)  # search right
            if dire & 2 != 2:
                data = mark_clear_area(img, data, col, row - 1, 1, flag)  # search "down" (row - 1)
            if dire & 4 != 4:
                data = mark_clear_area(img, data, col - 1, row, 8, flag)  # search left
    else:
        if img[row, col] == 127:
            img[row, col] = 255  # set to the background colour
            if dire & 1 != 1:
                data = mark_clear_area(img, data, col, row + 1, 2, flag)  # search "up" (row + 1)
            if dire & 8 != 8:
                data = mark_clear_area(img, data, col + 1, row, 4, flag)  # search right
            if dire & 2 != 2:
                data = mark_clear_area(img, data, col, row - 1, 1, flag)  # search "down" (row - 1)
            if dire & 4 != 4:
                data = mark_clear_area(img, data, col - 1, row, 8, flag)  # search left
    return data

def clear_background(image, num):  # remove noise specks
    for row in range(0, image.shape[0]):
        for col in range(0, image.shape[1]):
            if image[row, col] == 0:
                number = mark_clear_area(image, 0, col, row, 0, False)  # size of the connected region
                # print(number)
                if number < num:
                    mark_clear_area(image, 0, col, row, 0, True)  # erase the connected region
    # Regions that survived are still marked 127; turn them back to black
    for row in range(0, image.shape[0]):
        for col in range(0, image.shape[1]):
            if image[row, col] == 127:
                image[row, col] = 0
    return image

def horizontal(image, hor_num):  # horizontal projection
    img = image.copy()
    (h, w) = img.shape  # height and width
    # print(h, w)
    H = [0 for z in range(0, h)]
    # Count the black pixels in every row
    for i in range(0, h):  # for each row
        for j in range(0, w):  # for each column in that row
            if img[i, j] != 255:  # the pixel is black
                H[i] += 1  # increment the counter of this row
    Hei = []
    i = 0
    while i != h:  # record start and length of every non-zero run in the projection
        if H[i] != 0:
            start = i
            count = 0
            while i != h:
                if H[i] == 0:
                    break
                else:
                    count += 1
                i += 1
            Hei.append([start, count])
        else:
            i += 1
    index = 0
    while index < len(Hei):  # drop runs shorter than the threshold
        if Hei[index][1] < hor_num:
            del Hei[index]
            index -= 1
        index += 1
    return H, Hei

def vertical(image, ver_num):  # vertical projection
    img = image.copy()
    (h, w) = img.shape  # height and width
    # print(h, w)
    W = [0 for z in range(0, w)]
    # Count the black pixels in every column
    for j in range(0, w):  # for each column
        for i in range(0, h):  # for each row in that column
            if img[i, j] != 255:  # the pixel is black
                W[j] += 1  # increment the counter of this column
    Wid = []
    i = 0
    while i != w:  # record start and length of every non-zero run in the projection
        if W[i] != 0:
            start = i
            count = 0
            while i != w:
                if W[i] == 0:
                    break
                else:
                    count += 1
                i += 1
            Wid.append([start, count])
        else:
            i += 1
    index = 0
    while index < len(Wid):  # drop runs shorter than the threshold
        if Wid[index][1] < ver_num:
            del Wid[index]
            index -= 1
        index += 1
    return W, Wid
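The projection functions above count pixels one at a time. As a rough sketch of the same idea with NumPy, the column profile can be computed in one call and the runs found in a single pass. The names `find_runs` and `vertical_runs` are hypothetical and not part of the post's module; the sketch assumes the same convention that anything other than 255 counts as black.

```python
import numpy as np

def find_runs(profile, min_len):
    """Return [start, length] for each run of non-zero entries at least min_len long."""
    runs = []
    start = None
    for i, v in enumerate(list(profile) + [0]):  # trailing 0 closes a run at the edge
        if v != 0 and start is None:
            start = i
        elif v == 0 and start is not None:
            if i - start >= min_len:
                runs.append([start, i - start])
            start = None
    return runs

def vertical_runs(img, min_len):
    profile = (img != 255).sum(axis=0)  # black pixels per column
    return profile, find_runs(profile, min_len)

demo = np.full((4, 10), 255, dtype=np.uint8)
demo[:, 1:4] = 0   # first glyph, 3 columns wide
demo[1, 5] = 0     # a 1-column speck, shorter than min_len
demo[:, 7:9] = 0   # second glyph, 2 columns wide
profile, runs = vertical_runs(demo, min_len=2)
```

Summing over `axis=1` instead of `axis=0` would give the row profile used for the horizontal cut.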

if __name__ == '__main__':
    import os
    import matplotlib.pyplot as plt
    from matplotlib import animation
    import seaborn as sns
    import cv2

    dir_path = './imgcode2'
    # [2] picks the third file in the folder; [0] would be the first image
    image = cv2.imread(os.path.join(dir_path, os.listdir(dir_path)[2]), 0)
    _, image = cv2.threshold(image, 0, 255, cv2.THRESH_OTSU)  # global Otsu binarization
    sns.set_style("whitegrid")  # set the plot style
    # Create the canvas
    fig = plt.figure()
    im = plt.imshow(image, cmap='gray')
    plt.grid(False)

    def animate(i):
        for row in range(0, image.shape[0]):
            for col in range(0, image.shape[1]):
                if image[row, col] == 0:
                    number = mark_clear_area(image, 0, col, row, 0, False)  # size of the connected region
                    if number < 5:
                        mark_clear_area(image, 0, col, row, 0, True)  # erase the connected region
        im.set_array(image)
        return [im]

    ani = animation.FuncAnimation(fig, animate, frames=50, interval=500, blit=False)
    plt.show()

    image = clear_background(image, 5)
    w, wid = vertical(image, 5)
    plt.bar([i + 1 for i in range(len(w))], w)
    plt.show()

    error_img = 0
    fig = plt.figure()
    ax = []
    for i in range(3):
        ax_ = []
        for j in range(1, 5):
            ax_.append(fig.add_subplot(3, 4, i*4+j))
        ax.append(ax_)
    for i in range(len(wid)):
        pic = image[:, wid[i][0]:wid[i][0] + 9]  # vertical cut, 9 columns per letter
        ax[0][i].imshow(pic)
        ax[0][i].grid(False)
        h, hei = horizontal(pic, 8)
        h = h[::-1]
        ax[1][i].barh([i + 1 for i in range(len(h))], h)
        ax[1][i].grid(False)
        cut_img = pic[hei[0][0]:hei[0][0] + 11, :]  # horizontal cut, 11 rows per letter
        # Pad the 11x9 letter to 16x16 with a white border
        cut_img = cv2.copyMakeBorder(cut_img, 3, 2, 4, 3, cv2.BORDER_CONSTANT,
                                     value=[255, 255, 255])
        ax[2][i].imshow(cut_img)
        ax[2][i].grid(False)
    plt.show()

Creating the training set

- Recognize the images with pytesseract + tesseract-ocr first, then correct the results by hand to build the training set
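Because the OCR guesses still have to be corrected by hand, it can help to flag the suspicious ones automatically. The snippet below is a small sketch of that idea and not the author's code: `clean_ocr_guess` is a hypothetical helper that takes the raw OCR string, keeps only letters, and marks the guess for review when it does not have four letters from the 23-letter alphabet used by this CAPTCHA.

```python
import re

ALPHABET = 'ABCDEFGHJKLMNPRSTUVWXYZ'  # the 23 letters used by this CAPTCHA

def clean_ocr_guess(raw, expected_len=4):
    """Keep letters only, upper-case them, and flag guesses that need manual review."""
    letters = re.sub(r'[^A-Za-z]', '', raw).upper()
    wrong_length = len(letters) != expected_len
    outside_alphabet = any(ch not in ALPHABET for ch in letters)
    return letters, wrong_length or outside_alphabet

good = clean_ocr_guess(' k7b\nx M')   # four valid letters once the noise is stripped
bad = clean_ocr_guess('a0q')          # too short, and Q is not in the alphabet
```

Guesses flagged this way could be written to the error folder instead of a letter folder, so only those need a manual look.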

import cv2
import os
import improcessing as im
import numpy as np
import matplotlib.pyplot as plt

method = 1
method_name = ['svm', 'ocr']
if method_name[method] == 'svm':
    import pic_svm
elif method_name[method] == 'ocr':
    from PIL import Image
    import re
    try:
        import pytesseract as ocr
    except ImportError:
        method = 0
        import pic_svm

word_count = {}

def img_pro(dir_path, file_path, save_path):
    ver_num = 5   # vertical projection threshold
    hor_num = 8   # horizontal projection threshold
    min_area = 5  # connected-region area threshold
    img = cv2.imread(os.path.join(dir_path, file_path), flags=0)
    img = im.process(img, min_area)
    word = []
    if method_name[method] == 'ocr':
        word_list = ocr.image_to_string(Image.fromarray(img), lang='eng', config='digits')  # OCR the image
        word_list = ''.join(re.split(r'[^A-Za-z]', word_list))  # keep letters only
        word_list = word_list.upper()  # convert to upper case
        word_list = list(word_list)
        word = word_list
    __, wid = im.vertical(img, ver_num)
    pic = []
    cut_img = []
    error_img = 0
    for i in range(len(wid)):
        try:
            pic.append(img[:, wid[i][0]:wid[i][0] + 9])
            ___, hei = im.horizontal(pic[i], hor_num)
            # print(hei)
            cut_img.append(pic[i][hei[0][0]:hei[0][0] + 11, :])
            save_img = cv2.copyMakeBorder(cut_img[i], 3, 2, 4, 3, cv2.BORDER_CONSTANT, value=[255, 255, 255])
            error_img = save_img
            if method_name[method] == 'ocr':
                count = word_count[word_list[i]]
                count += 1
                word_count[word_list[i]] = count  # per-letter counter
                cv2.imwrite(save_path + '/' + word_list[i] + '/' + str(word_count[word_list[i]]) + '.bmp', save_img)
            elif method_name[method] == 'svm':
                x = np.array(np.mat(pic_svm.get_feature(save_img)))  # extract the image features
                number = int(pic_svm.predict(x)[0])  # classify with the SVM
                simple_word = im.word_number[number]  # convert the class index to a letter
                word.append(simple_word)
                count = word_count[simple_word]
                count += 1
                word_count[simple_word] = count  # per-letter counter
                cv2.imwrite(save_path + '/' + simple_word + '/' + str(word_count[simple_word]) + '.bmp', save_img)  # save the image
        except IndexError:
            print(hei)
            # word_count only has letter keys, so failures get their own 'error' key
            # (the original indexed word_count[26], which raises KeyError)
            word_count['error'] = word_count.get('error', 0) + 1
            cv2.imwrite(save_path + '/error/' + str(word_count['error']) + '.bmp', error_img)
    print(''.join(word) + '\t', end='')
    print(word_count)

if __name__ == '__main__':
    dir_path = './imgcode'
    save_path = './pic'
    if not os.path.exists(save_path):  # create the output folder
        os.mkdir(save_path)
    for ch, i in zip(range(ord('A'), ord('Z') + 1), range(26)):  # create one folder per letter
        word_count[chr(ch)] = 0
        path = save_path + '/' + chr(ch)
        if not os.path.exists(path):
            os.mkdir(path)
    error_path = save_path + '/error'
    if not os.path.exists(error_path):  # create the error folder
        os.mkdir(error_path)
    for file_path in os.listdir(dir_path):  # walk through the image folder
        print(file_path + '\t->\t', end='')
        img_pro(dir_path, file_path, save_path)

Once an initial training set is available, it can be used to train an SVM, which can then label further CAPTCHAs in bulk.

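The SVM training is also given here; skip ahead if you only want the TensorFlow part.

Training the SVM

- Import SVC from sklearn.svm

- Use the number of black pixels in each row and in each column as the features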
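As a compact illustration of the feature described above, the row and column counts can be computed with two NumPy sums. This is a sketch rather than the post's implementation: `projection_features` is a hypothetical name, and it assumes a binarized image in which any value other than 255 counts as black.

```python
import numpy as np

def projection_features(img):
    """Black-pixel count of every row followed by that of every column."""
    black = (img != 255)
    row_counts = black.sum(axis=1)  # one value per row
    col_counts = black.sum(axis=0)  # one value per column
    return np.concatenate([row_counts, col_counts])

demo = np.full((16, 16), 255, dtype=np.uint8)
demo[2:5, 3] = 0  # a vertical stroke three pixels tall in column 3
features = projection_features(demo)
```

For a 16*16 letter this gives a 32-dimensional vector: 16 row counts followed by 16 column counts.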

def get_feature(img):  # extract the image features
    height, width = img.shape  # shape is (rows, columns)
    pixel_cnt_list = []
    for y in range(height):  # black pixels in each row
        pix_cnt_x = 0
        for x in range(width):
            if img[y, x] != 255:  # black pixel
                pix_cnt_x += 1
        pixel_cnt_list.append(pix_cnt_x)
    for x in range(width):  # black pixels in each column
        pix_cnt_y = 0
        for y in range(height):
            if img[y, x] != 255:  # black pixel
                pix_cnt_y += 1
        pixel_cnt_list.append(pix_cnt_y)
    return pixel_cnt_list

- Convert the training set into labeled data for the SVM

def get_files(filename):  # collect file paths and labels from the class folders
    class_train = []
    label_train = []
    word = 'ABCDEFGHJKLMNPRSTUVWXYZ'
    word = list(word)
    word_dirt = {}  # letter -> class index
    for i in range(len(word)):
        word_dirt[word[i]] = i
    for train_class in os.listdir(filename):
        for pic in os.listdir(filename + '/' + train_class):
            class_train.append(filename + '/' + train_class + '/' + pic)
            label_train.append(train_class)
    temp = np.array([class_train, label_train])
    temp = temp.transpose()
    # after transpose, images is in dimension 0 and label in dimension 1
    image_list = list(temp[:, 0])
    label_list = list(temp[:, 1])
    label_list = [word_dirt[i] for i in label_list]
    # print(label_list)
    return image_list, label_list

def batches(image_path, label):  # build the labeled data for the SVM
    x = []
    y = []
    for path, i in zip(image_path, label):
        image = cv2.imread(path, flags=0)
        datalist = get_feature(image)
        x.append(datalist)
        y.append(i)
    return np.array(y), np.array(x)

- Train the SVM and save the model

import numpy as np
import cv2
import os
from sklearn.svm import SVC  # import the SVM classifier
try:
    import joblib  # standalone package, needed with scikit-learn 0.23 and later
except ImportError:
    from sklearn.externals import joblib  # older scikit-learn releases

def trainSVM(y, x):
    clf = SVC(kernel='linear')
    rf = clf.fit(x, y)
    score_linear = clf.score(x, y)  # accuracy on the training data itself
    print("The score of linear is : %f" % score_linear)
    joblib.dump(rf, 'word_svm.model')

def predict(x):
    RF = joblib.load('word_svm.model')
    return RF.predict(x)

if __name__ == '__main__':
    array = get_files('./train_data')
    array = batches(array[0], array[1])
    trainSVM(array[0], array[1])

Once training is finished, passing a feature vector to predict(x) returns the predicted class.

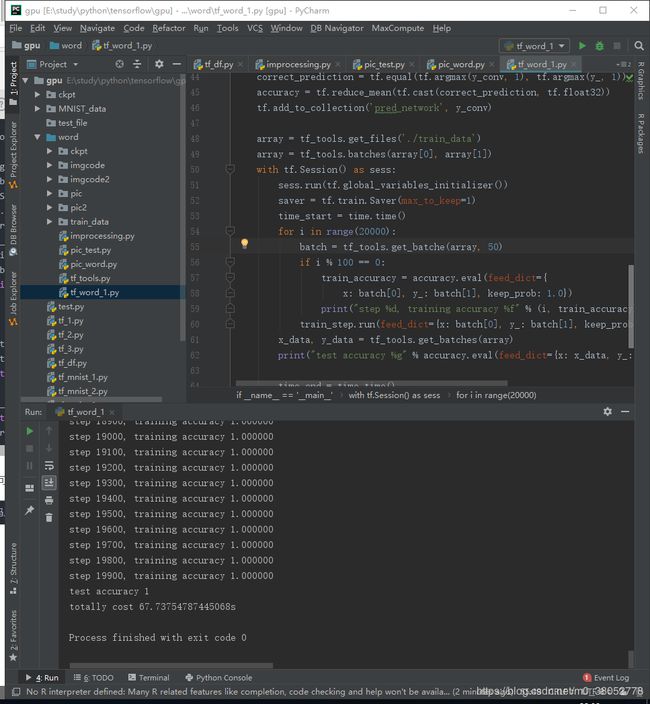

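Training the CNN with TensorFlow

- Build a convolutional neural network

The model from the "Deep MNIST" tutorial on the Chinese TensorFlow site, http://www.tensorfly.cn/tfdoc/tutorials/mnist_pros.html, can be used.

In essence, you draw the computation graph in Python and set up the evaluation function and the backpropagation (training) step. Once that is done, you create a session to talk to the C++ backend. When sess.run() is called, the backend fills in the actual values and executes the graph.

Because the CAPTCHAs contain no I, O or Q, the output is set to a 23-dimensional vector.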
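To make the 23-dimensional output concrete, here is a minimal sketch of how a letter maps to a one-hot target vector and back. The helper names `one_hot` and `decode` are hypothetical; only the alphabet string is taken from the post.

```python
ALPHABET = 'ABCDEFGHJKLMNPRSTUVWXYZ'  # 26 letters minus I, O and Q
LETTER_TO_INDEX = {ch: i for i, ch in enumerate(ALPHABET)}

def one_hot(letter):
    """Return a 23-element list with a single 1 at the letter's class index."""
    vec = [0] * len(ALPHABET)
    vec[LETTER_TO_INDEX[letter]] = 1
    return vec

def decode(vec):
    """Return the letter whose position holds the largest value."""
    best = max(range(len(vec)), key=vec.__getitem__)
    return ALPHABET[best]

target = one_hot('J')
```

Since I is skipped, J sits at index 8 rather than 9, which is why the lookup goes through the alphabet string instead of using `ord(letter) - ord('A')`.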

import time
import tensorflow as tf
import os
import numpy as np
from PIL import Image
import random

def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

def conv_2d(x, w):
    return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],
                          strides=[1, 2, 2, 1], padding='SAME')

def get_files(filename):  # collect file paths and labels from the class folders
    class_train = []
    label_train = []
    word = 'ABCDEFGHJKLMNPRSTUVWXYZ'
    word = list(word)
    word_dirt = {}  # letter -> class index
    for i in range(len(word)):
        word_dirt[word[i]] = i
    for train_class in os.listdir(filename):
        for pic in os.listdir(filename + '/' + train_class):
            class_train.append(filename + '/' + train_class + '/' + pic)
            label_train.append(train_class)
    temp = np.array([class_train, label_train])
    temp = temp.transpose()
    # after transpose, images is in dimension 0 and label in dimension 1
    image_list = list(temp[:, 0])
    label_list = list(temp[:, 1])
    label_list = [word_dirt[i] for i in label_list]
    # print(label_list)
    return image_list, label_list

def batches(image_path, label):  # build the labeled data for the CNN
    x = []
    for path, i in zip(image_path, label):
        image = np.array(Image.open(path).convert('L'))
        image_list = []
        rows = image.shape[0]
        cols = image.shape[1]
        image = abs(255 - image)  # invert so that the strokes become bright
        max_px = np.max(image)
        for row in range(rows):
            for col in range(cols):
                image_list.append(image[row, col] / max_px)  # scale to 0..1
        image_list.insert(0, i)  # the label goes in front of the pixels
        x.append(image_list)
    return x

def get_batches(batches):  # convert every sample to (pixels, one-hot label)
    x = []
    y = []
    for iter in batches:
        out = [0 for i in range(23)]
        out[iter[0]] = 1
        y.append(out)
        x.append(iter[1:])
    return np.array(x), np.array(y)

def get_batche(batches, num):  # draw a random mini-batch of num samples
    batch = random.sample(batches, num)
    x = []
    y = []
    for iter in batch:
        out = [0 for i in range(23)]
        out[iter[0]] = 1
        y.append(out)
        x.append(iter[1:])
    return np.array(x), np.array(y)

if __name__ == '__main__':
    # Create the model
    # placeholder
    x = tf.placeholder(tf.float32, shape=[None, 16*16], name='input_x')
    y_ = tf.placeholder(tf.float32, shape=[None, 23], name='input_y')

    # first
    W_conv1 = weight_variable([5, 5, 1, 32])
    b_conv1 = bias_variable([32])
    x_image = tf.reshape(x, [-1, 16, 16, 1])
    # conv_2d and get_batches are defined in this file, so they are called
    # directly (the original wrote tf_tools.conv_2d without importing tf_tools)
    h_conv1 = tf.nn.relu(conv_2d(x_image, W_conv1) + b_conv1)
    h_pool1 = max_pool_2x2(h_conv1)

    # second
    W_conv2 = weight_variable([5, 5, 32, 64])
    b_conv2 = bias_variable([64])
    h_conv2 = tf.nn.relu(conv_2d(h_pool1, W_conv2) + b_conv2)
    h_pool2 = max_pool_2x2(h_conv2)

    # two 2x2 poolings reduce 16x16 to 4x4
    W_fc1 = weight_variable([4 * 4 * 64, 1024])
    b_fc1 = bias_variable([1024])
    h_pool2_flat = tf.reshape(h_pool2, [-1, 4 * 4 * 64])
    h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

    # dropout
    keep_prob = tf.placeholder(tf.float32, name='keep_prob')
    h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

    # softmax
    W_fc2 = weight_variable([1024, 23])
    b_fc2 = bias_variable([23])
    y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

    cross_entropy = -tf.reduce_sum(y_*tf.log(y_conv))
    train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
    correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    tf.add_to_collection('pred_network', y_conv)

    array = get_files('./train_data')
    array = batches(array[0], array[1])

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        saver = tf.train.Saver(max_to_keep=1)
        time_start = time.time()
        for i in range(2000):
            batch = get_batche(array, 50)  # number of samples per batch
            if i % 100 == 0:
                train_accuracy = accuracy.eval(feed_dict={
                    x: batch[0], y_: batch[1], keep_prob: 1.0})
                print("step %d, training accuracy %f" % (i, train_accuracy))
            train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})  # train the model
        x_data, y_data = get_batches(array)
        print("test accuracy %g" % accuracy.eval(feed_dict={x: x_data, y_: y_data, keep_prob: 1.0}))
        time_end = time.time()
        print('totally cost ' + str(time_end-time_start) + 's')
        saver.save(sess, './ckpt/mnist.ckpt', global_step=0)  # save the model

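100% accuracy! (CNNs rock!) Keep in mind that the script above measures this on the same images it trained on, so it is a training accuracy rather than a held-out test result.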
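One way to get a number that says more about unseen CAPTCHAs is to hold some samples back before training. The following is a sketch under assumptions, not part of the original script: `split_samples` is a hypothetical helper, and the rows mimic the `[label, pixel, pixel, ...]` layout that `batches()` returns.

```python
import random

def split_samples(samples, test_fraction=0.2, seed=0):
    """Shuffle a copy of the samples and split it into (train, test) lists."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

# Placeholder rows: a label followed by 16*16 pixel values
samples = [[label] + [0.0] * 256 for label in range(10)]
train, test = split_samples(samples)
```

Training would then draw its mini-batches from `train` only, and the final accuracy would be evaluated on `test`.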

Once training is finished, the model can be tried on new data.

Load the model with saver.restore

ckpt = tf.train.get_checkpoint_state('./ckpt/')
saver = tf.train.import_meta_graph(ckpt.model_checkpoint_path + '.meta')
print(ckpt.model_checkpoint_path)
with tf.Session() as sess:
    saver.restore(sess, ckpt.model_checkpoint_path)

Test code

import cv2

import os

import tensorflow as tf

import tf_tools as tf_t

import improcessing as im

import numpy as np

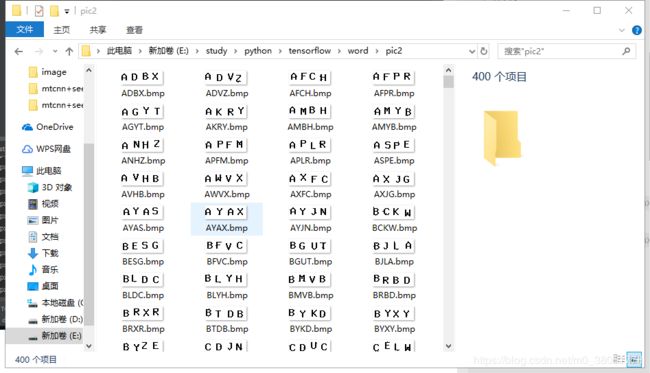

if __name__ == '__main__':
    dir_path = './imgcode2'
    save_path = './pic2'
    if not os.path.exists(save_path):
        os.mkdir(save_path)
    ckpt = tf.train.get_checkpoint_state('./ckpt/')
    saver = tf.train.import_meta_graph(ckpt.model_checkpoint_path + '.meta')
    print(ckpt.model_checkpoint_path)
    array = tf_t.get_files('./train_data')
    array = tf_t.batches(array[0], array[1])
    with tf.Session() as sess:
        saver.restore(sess, ckpt.model_checkpoint_path)
        y = tf.get_collection('pred_network')[0]
        graph = tf.get_default_graph()
        input_x = graph.get_operation_by_name('input_x').outputs[0]
        keep_prob = graph.get_operation_by_name('keep_prob').outputs[0]
        ver_num = 5   # vertical projection threshold
        hor_num = 8   # horizontal projection threshold
        min_area = 5  # connected-region area threshold
        for file_path in os.listdir(dir_path):  # walk through the image folder
            print(file_path + '\t->\t', end='')
            img = cv2.imread(os.path.join(dir_path, file_path), flags=0)  # read the image
            img = im.process(img, min_area)
            __, wid = im.vertical(img, ver_num)  # get the vertical projection runs
            pic = []
            cut_img = []
            test_word = ''
            datalist = []
            for i in range(len(wid)):  # extract the inputs for the four letters
                pic.append(img[:, wid[i][0]:wid[i][0] + 9])  # vertical cut
                ___, hei = im.horizontal(pic[i], hor_num)  # get the horizontal projection runs
                # print(hei)
                cut_img.append(pic[i][hei[0][0]:hei[0][0] + 11, :])  # horizontal cut
                save_img = cv2.copyMakeBorder(cut_img[i], 3, 2, 4, 3, cv2.BORDER_CONSTANT,
                                              value=[255, 255, 255])  # pad the image to 16*16
                save_img = np.abs(255 - save_img)  # invert, as in training
                data = save_img / np.max(save_img)  # scale to 0..1
                xt = []
                for row in range(data.shape[0]):
                    for col in range(data.shape[1]):
                        xt.append(data[row, col])
                datalist.append(xt)
            x = np.array(datalist)
            result = sess.run(y, feed_dict={input_x: x, keep_prob: 1.0})
            for iter in result:
                i = np.where(iter == np.max(iter))[0][0]  # index of the largest probability
                test_word += im.word_number[i]  # convert the class index to a letter
            print(test_word)
            cv2.imwrite(save_path + '/' + test_word + '.bmp', img)  # save the result
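The decoding step at the end of the loop above picks the most likely class for each letter. As a small sketch of the same step, `np.argmax` does this for all rows at once, and the lowest winning probability can serve as a rough confidence for the whole word. The name `decode_rows` and the made-up probabilities are assumptions for illustration only, not output from the trained model.

```python
import numpy as np

ALPHABET = 'ABCDEFGHJKLMNPRSTUVWXYZ'

def decode_rows(probs):
    """Map each row of class probabilities to a letter; also return the weakest confidence."""
    best = np.argmax(probs, axis=1)  # winning class index per letter
    word = ''.join(ALPHABET[i] for i in best)
    confidence = float(probs[np.arange(len(best)), best].min())
    return word, confidence

# Made-up softmax-like rows for a four-letter CAPTCHA
probs = np.full((4, 23), 0.01)
for row, cls, p in zip(range(4), [0, 8, 22, 12], [0.9, 0.8, 0.7, 0.6]):
    probs[row, cls] = p
word, confidence = decode_rows(probs)
```

Words whose confidence falls below some chosen threshold could be saved to a separate folder for manual checking.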