CNN Models | 2 AlexNet

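Contents

- The AlexNet Network

- Design Ideas Behind AlexNet

- Main Design Advances and Contributions

- The ReLU Activation Function

- Dropout Regularization

- Core Architecture

- PyTorch Implementation of AlexNet

- Keras Implementation of AlexNet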

The AlexNet Network

AlexNet was published at NIPS 2012 by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton.

Design Ideas Behind AlexNet

Main Design Advances and Contributions

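- 5 convolutional layers + 3 fully connected layers, with about 60 million parameters and 650,000 neurons

- Adopted ReLU as the activation function

- Introduced local response normalization (LRN), which normalizes each activation across neighboring feature maps to improve performance

- Dropout, a regularization method that prevents overfitting

- A softmax classifier, trained with the softmax (cross-entropy) loss

- Overlapping pooling: the pooling window (3×3) is larger than the stride (2), so adjacent windows overlap and the feature map is sampled more densely; earlier networks downsampled with non-overlapping windows, which is coarser and can miss some local responses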
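To make the overlapping-pooling point concrete, here is a small sketch in plain Python along one spatial dimension. The helper names (`out_size`, `windows`, `shared_positions`) are made up for illustration, and the 55-wide input is assumed to be the output of the first convolution on a 227-pixel image.

```python
def out_size(n, k, s):
    """Number of pooling windows of size k and stride s that fit in n positions."""
    return (n - k) // s + 1


def windows(n, k, s):
    """Inclusive (start, end) index range of every pooling window."""
    return [(i * s, i * s + k - 1) for i in range(out_size(n, k, s))]


def shared_positions(n, k, s):
    """Count input positions that fall into more than one window."""
    cover = [0] * n
    for start, end in windows(n, k, s):
        for i in range(start, end + 1):
            cover[i] += 1
    return sum(1 for c in cover if c > 1)


n = 55  # assumed width of the conv1 feature map
for k, s in [(3, 2), (2, 2)]:
    print(f"window={k} stride={s}: output={out_size(n, k, s)}, "
          f"positions shared by two windows={shared_positions(n, k, s)}")

print(windows(7, 3, 2))  # neighbouring windows share their border position
```

Both settings reduce 55 positions to 27, but only the 3/2 setting lets neighbouring windows see a common input position, which is the sense in which the pooling "overlaps".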

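The ReLU Activation Function

- Speeds up the convergence of gradient descent

- An activation function applies a nonlinearity to the convolution output and keeps its values under control, which helps a great deal when training convolutional networks: the deeper the network, the wider the range of values and the more the gradients vary, which makes learning difficult. ReLU, f(x) = max(0, x), zeroes negative values and passes positive values through with gradient 1, so it does not saturate for positive inputs

- Many neurons can "die" during training (they output 0 for every input and stop receiving gradient); lowering the learning rate helps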
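The points above can be illustrated with a few lines of plain Python. This is only a sketch: the comparison with tanh and the weight and bias values of the "dead" neuron are assumptions chosen for illustration, not taken from the original network.

```python
import math


def relu(x):
    return max(0.0, x)


def relu_grad(x):
    # Derivative of ReLU: 1 for positive inputs, 0 otherwise.
    return 1.0 if x > 0 else 0.0


def tanh_grad(x):
    # Derivative of tanh: shrinks toward 0 as |x| grows (saturation).
    return 1.0 - math.tanh(x) ** 2


for x in (-5.0, -1.0, 0.5, 5.0):
    print(f"x={x:+.1f}  relu={relu(x):.2f}  "
          f"relu'={relu_grad(x):.0f}  tanh'={tanh_grad(x):.5f}")

# A "dead" neuron: hypothetical weight and bias (e.g. after an oversized update)
# that make the pre-activation negative for every input it sees.
inputs = [0.2, 0.5, 1.0, 3.0]
w, b = 1.0, -10.0
dead = all(relu_grad(w * x + b) == 0.0 for x in inputs)
print("neuron is dead:", dead)
```

For large positive inputs the ReLU gradient stays at 1 while the tanh gradient nearly vanishes, which is one common explanation for faster convergence. The dead neuron receives zero gradient on every input, so it cannot recover by itself; smaller updates make it less likely to land there.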

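Dropout Regularization

- Reduces complex co-adaptation (mutual dependence) between neurons

- Randomly drops neurons with a given probability during training (their outputs are set to 0, so they have no effect on later layers), which reduces the number of active parameters in each update and the degree of overfitting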

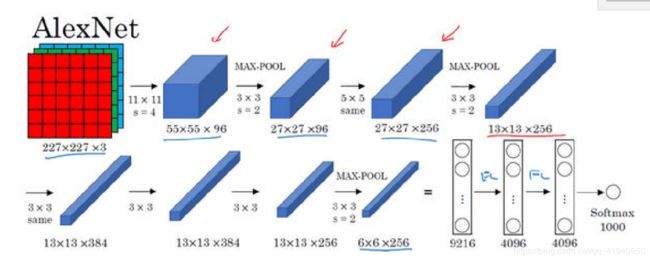
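A minimal sketch of the mechanism in plain Python, assuming the "inverted" variant that current frameworks use (survivors are scaled by 1/(1-p) during training, so nothing needs to change at inference time). The function name `dropout` and the fixed seed are illustrative choices.

```python
import random


def dropout(activations, p=0.5, training=True, rng=None):
    """Inverted dropout: zero each activation with probability p and
    scale the survivors by 1 / (1 - p); identity when not training."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(activations)
    rng = rng or random.Random()
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


rng = random.Random(0)          # fixed seed so the run is repeatable
x = [1.0] * 10000

y_train = dropout(x, p=0.5, training=True, rng=rng)
y_eval = dropout(x, p=0.5, training=False)

dropped = sum(1 for v in y_train if v == 0.0) / len(y_train)
mean_train = sum(y_train) / len(y_train)
print(f"fraction dropped: {dropped:.3f}, mean after dropout: {mean_train:.3f}")
print("unchanged at inference:", y_eval == x)
```

About half of the activations are zeroed and the mean stays close to 1, so the expected input to the next layer is preserved. The original AlexNet formulation instead keeps training outputs unscaled and multiplies outputs by 0.5 at test time; the two conventions are equivalent in expectation.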

Core Architecture

Following Andrew Ng's explanation in his deep learning course, the basic flow of the AlexNet network is:

PyTorch Implementation of AlexNet

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class AlexNet(nn.Module):
        """AlexNet for 3 x 227 x 227 inputs (single stream, without LRN)."""

        def __init__(self, num_classes):
            super().__init__()
            # Feature extractor: 5 convolutions and 3 overlapping max-pools.
            self.conv1 = nn.Conv2d(in_channels=3, out_channels=96, kernel_size=11, stride=4, padding=0)
            self.pool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            self.conv2 = nn.Conv2d(in_channels=96, out_channels=256, kernel_size=5, stride=1, padding=2)
            self.pool2 = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            self.conv3 = nn.Conv2d(in_channels=256, out_channels=384, kernel_size=3, stride=1, padding=1)
            self.conv4 = nn.Conv2d(in_channels=384, out_channels=384, kernel_size=3, stride=1, padding=1)
            self.conv5 = nn.Conv2d(in_channels=384, out_channels=256, kernel_size=3, stride=1, padding=1)
            self.pool3 = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            # Classifier: 3 fully connected layers.
            self.fc1 = nn.Linear(6 * 6 * 256, 4096)
            self.fc2 = nn.Linear(4096, 4096)
            self.fc3 = nn.Linear(4096, num_classes)

        def forward(self, x):
            x = self.pool1(F.relu(self.conv1(x)))  # -> 96 x 27 x 27
            x = self.pool2(F.relu(self.conv2(x)))  # -> 256 x 13 x 13
            x = F.relu(self.conv3(x))              # -> 384 x 13 x 13
            x = F.relu(self.conv4(x))              # -> 384 x 13 x 13
            x = self.pool3(F.relu(self.conv5(x)))  # -> 256 x 6 x 6
            x = torch.flatten(x, 1)                # -> 9216 features per sample
            # training=self.training disables dropout after model.eval().
            x = F.dropout(x, p=0.5, training=self.training)
            x = F.relu(self.fc1(x))
            x = F.dropout(x, p=0.5, training=self.training)
            x = F.relu(self.fc2(x))
            # Returns class probabilities. When training with nn.CrossEntropyLoss,
            # return the raw self.fc3(x) logits instead, since that loss applies
            # log-softmax itself.
            return F.softmax(self.fc3(x), dim=1)


    net = AlexNet(1000)
    print(net)
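As a sanity check on the layer settings above, the following sketch traces the feature-map sizes and counts parameters with plain Python, using the usual output-size formula floor((n + 2p - k) / s) + 1. The `layers` table simply restates the kernel, stride, and padding values from the PyTorch code; the helper names are illustrative.

```python
def conv_out(n, k, s=1, p=0):
    """Output width of a convolution or pooling layer on an n-wide input."""
    return (n + 2 * p - k) // s + 1


# (name, kind, out_channels, kernel, stride, padding), as in the PyTorch model
layers = [
    ("conv1", "conv", 96, 11, 4, 0),
    ("pool1", "pool", None, 3, 2, 0),
    ("conv2", "conv", 256, 5, 1, 2),
    ("pool2", "pool", None, 3, 2, 0),
    ("conv3", "conv", 384, 3, 1, 1),
    ("conv4", "conv", 384, 3, 1, 1),
    ("conv5", "conv", 256, 3, 1, 1),
    ("pool3", "pool", None, 3, 2, 0),
]


def trace(size=227, channels=3):
    """Return per-layer output shapes and the number of conv parameters."""
    params, shapes = 0, []
    for name, kind, out_c, k, s, p in layers:
        if kind == "conv":
            params += channels * out_c * k * k + out_c  # weights + biases
            channels = out_c
        size = conv_out(size, k, s, p)
        shapes.append((name, channels, size, size))
    return shapes, params


shapes, conv_params = trace()
for shape in shapes:
    print(shape)

flat = shapes[-1][1] * shapes[-1][2] * shapes[-1][3]
fc_sizes = [flat, 4096, 4096, 1000]
fc_params = sum(i * o + o for i, o in zip(fc_sizes, fc_sizes[1:]))
total = conv_params + fc_params
print("flattened features:", flat)
print("total parameters:", total)
```

The trace ends at 256 x 6 x 6 = 9216 features, which is where the `6*6*256` input size of `fc1` comes from; with a 224-pixel input the same arithmetic ends at 5 x 5, so the model expects 227 x 227 images. The count comes to about 62.4 million, slightly above the roughly 60 million quoted earlier; a likely reason is that this single-stream version does not split some convolutions across two GPUs as the original did.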

Keras Implementation of AlexNet

    from keras.models import Sequential
    from keras.layers import (Activation, BatchNormalization, Conv2D, Dense,
                              Dropout, Flatten, MaxPooling2D)

    num_classes = 2  # binary classification for now

    model = Sequential()

    # Part 1: conv1, normalization, overlapping max-pooling
    model.add(Conv2D(filters=96, kernel_size=(11, 11), strides=(4, 4), padding='valid',
                     input_shape=(227, 227, 3), activation='relu'))
    model.add(BatchNormalization())  # stands in for local response normalization
    model.add(MaxPooling2D(pool_size=(3, 3), strides=2, padding='valid'))

    # Part 2: conv2, normalization, overlapping max-pooling
    model.add(Conv2D(filters=256, kernel_size=(5, 5), strides=(1, 1), padding='same', activation='relu'))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=(3, 3), strides=2, padding='valid'))

    # Part 3: conv3 to conv5, then the last max-pooling
    model.add(Conv2D(filters=384, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu'))
    model.add(Conv2D(filters=384, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu'))
    model.add(Conv2D(filters=256, kernel_size=(3, 3), strides=(1, 1), padding='same', activation='relu'))
    model.add(MaxPooling2D(pool_size=(3, 3), strides=(2, 2), padding='valid'))

    # Part 4: fully connected classifier
    model.add(Flatten())
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(num_classes))
    model.add(Activation('softmax'))

    model.summary()