1. MiniGUIMain(int argc, const char* argv[])

video_record_init_lock(); // initialize the locks

parameter_init(); // initialize the parameters

rk_fb_set_lcd_backlight(); // turn on the LCD backlight

hMainWnd = CreateMainWindow(&CreateInfo);

RegisterMainWindow(hMainWnd);

initrec(hMainWnd);

while(GetMessage(&Msg, hMainWnd)) {

TranslateMessage(&Msg);

DispatchMessage(&Msg);

}

2. initrec(hMainWnd) {

......

video_record_init(&front, &back, &cif);

for (i = 0; i < MAX_VIDEO_DEVICE; i++)

video_record_addvideo(i, front, back, cif, 0, 0);

......

}

3. video_record_addvideo(int id,

struct ui_frame* front,

struct ui_frame* back,

struct ui_frame* cif,

char rec_immediately,

char check_record_init) {

......

video = (struct Video*)calloc(1, sizeof(struct Video));

// initialize the video object

if (video_record_query_businfo(video, id)) // query the device bus info (isp/usb/cif)

// fill in video->type according to the bus type

type:

VIDEO_TYPE_ISP

VIDEO_TYPE_USB

VIDEO_TYPE_CIF

// decide between FRONT and CIF from the bus info; here it is FRONT (isp)

// take the image width, height and frame rate from the input parameters

width = front->width;

height = front->height;

fps = front->fps;

video->front = true;

video->fps_n = 1;

video->fps_d = fps;

video->hal = new hal();

video->deviceid = id;

// call the init function that matches the device type video->type

if(video->type == VIDEO_TYPE_ISP) {

printf("video%d is isp\n", video->deviceid);

isp_video_init(video, id, width, height, fps) {

frm_info_t in_frmFmt = {

.frmSize = {width, height},

.frmFmt = HAL_FRAME_FMT_NV12,

.fps = fps

};

with_mp: 1, with_sp: 1, with_adas: 0;

if (with_sp) {

if (with_mp) {

frm_info_t spfrmFmt = {

.frmSize = {ISP_SP_WIDTH, ISP_SP_HEIGHT}, // 640*360

.frmFmt = HAL_FRAME_FMT_NV12, // pixel format

.fps = fps,

};

memcpy(&video->spfrmFmt, &spfrmFmt, sizeof(frm_info_t));

}

memcpy(&video->frmFmt, &in_frmFmt, sizeof(frm_info_t));

video->type = VIDEO_TYPE_ISP;

video->width = video->frmFmt.frmSize.width;

video->height = video->frmFmt.frmSize.height;

}

}

}

video->pre = 0;

video->next = 0;

// append this video object to video_list

video_record_addnode(video) {

struct Video* video_cur;

video_cur = getfastvideo(); // this function simply returns video_list

if (video_cur == NULL) {

video_list = video; // first call: the node becomes the list head

} else {

while (video_cur->next) { // walk to the tail of the list

video_cur = video_cur->next;

}

video->pre = video_cur; // append the node at the tail

video_cur->next = video;

}

}

// encoder initialization

video_encode_init(video) {

fps = 30;

if (video->type == VIDEO_TYPE_ISP) {

fps = video->fps_d;

MediaConfig config;

VideoConfig& vconfig = config.video_config;

vconfig.fmt = PIX_FMT_NV12;

vconfig.width = SCALED_WIDTH; // 640

vconfig.height = SCALED_HEIGHT; // 384

vconfig.bit_rate = SCALED_BIT_RATE; // 800000

vconfig.frame_rate = fps; // 30 fps

vconfig.level = 51;

vconfig.gop_size = 30; // I-frame interval

vconfig.profile = 100;

vconfig.quality = MPP_ENC_RC_QUALITY_MEDIUM; // quality

vconfig.qp_step = 6;

vconfig.qp_min = 18;

vconfig.qp_max = 48;

vconfig.rc_mode = MPP_ENC_RC_MODE_CBR;

// create the encode handler ts_handler

ScaleEncodeTSHandler* ts_handler = new ScaleEncodeTSHandler(config);

vconfig.width = video->width;

vconfig.height = video->height;

if (!ts_handler || ts_handler->Init(config)) {

fprintf(stderr, "create ts handler failed\n");

return -1;

}

video->ts_handler = ts_handler;

}

// create the encode handler

EncodeHandler* handler = EncodeHandler::create(

video->deviceid, video->type, video->usb_type, video->width,

video->height, fps, audio_id);

if (handler) {

handler->set_global_attr(&global_attr);

handler->set_audio_mute(enableaudio ? false : true);

video->encode_handler = handler;

return 0;

}

if (rec_immediately) // rec_immediately is 0 here: recording does not start right away but is triggered by a key press (see section x below)

start_record(video) {

EncodeHandler* handler = vnode->encode_handler;

if (handler) {

assert(!(vnode->encode_status & RECORDING_FLAG));

if (!handler->get_audio_capture())

global_audio_ehandler.AddPacketDispatcher(handler->get_h264aac_pkt_dispatcher());

handler->send_record_mp4_start(global_audio_ehandler.GetEncoder()) {

send_message(START_RECODEMP4, aac_enc) { // push START_RECODEMP4 (the message id) onto the message queue msg_queue

Message* msg = Message::create(id);

msg_queue->Push(msg);

}

}

vnode->encode_status |= RECORDING_FLAG;

}

}

// create the video_record thread

pthread_create(&video->record_id, &attr, video_record, video)

}

......

}

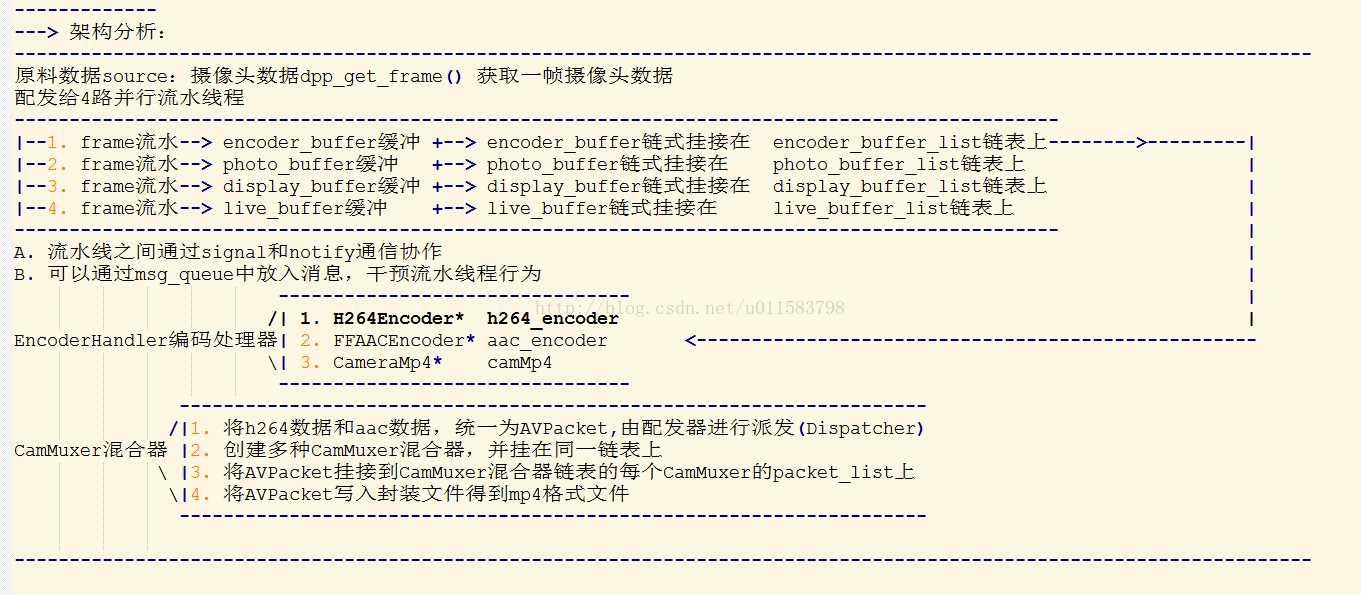

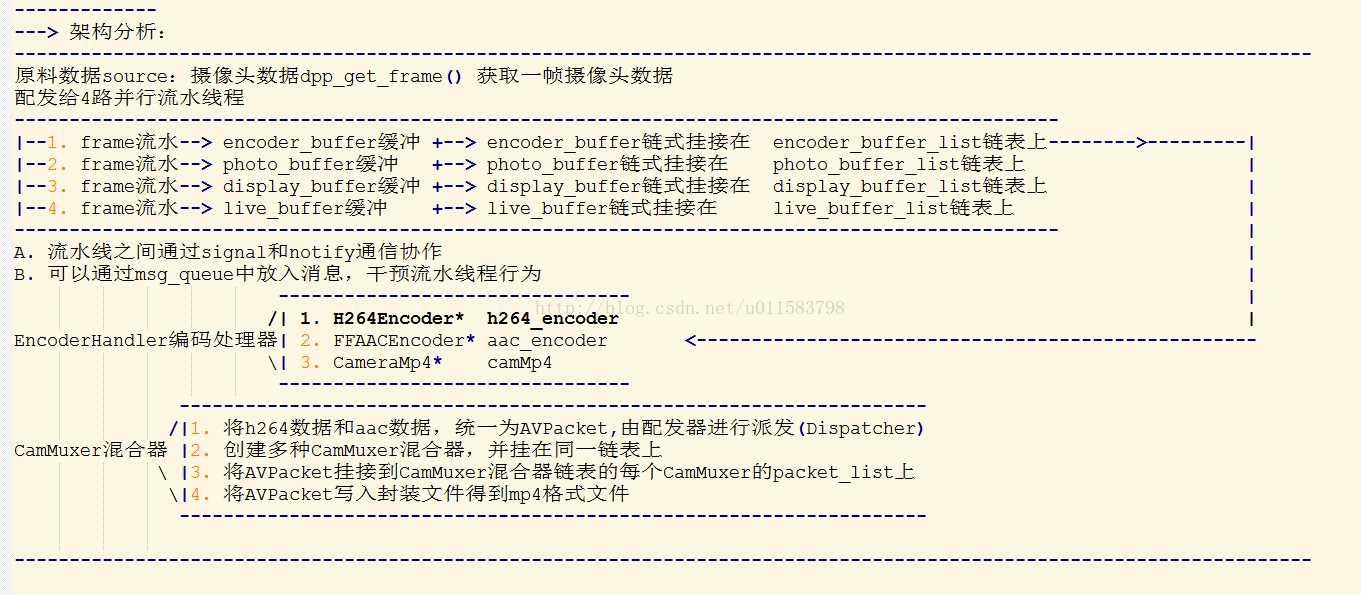
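
The tail insertion performed by video_record_addnode in section 3 can be looked at in isolation. The following is a minimal C++ sketch, not the project's code: `Node`, `append_node` and `list_length` are hypothetical names, and `Node` keeps only the `pre`/`next` links and a device id from `struct Video`.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical, trimmed-down stand-in for struct Video.
struct Node {
    int deviceid = 0;
    Node* pre = nullptr;   // previous node, nullptr for the head
    Node* next = nullptr;  // next node, nullptr for the tail
};

// Append `node` at the tail of the list whose head pointer is `*head`.
// An empty list is handled by making `node` the new head.
void append_node(Node** head, Node* node) {
    node->pre = nullptr;
    node->next = nullptr;
    if (*head == nullptr) {
        *head = node;
        return;
    }
    Node* cur = *head;
    while (cur->next) {  // walk to the current tail
        cur = cur->next;
    }
    node->pre = cur;
    cur->next = node;
}

// Count the nodes reachable from `head`.
std::size_t list_length(const Node* head) {
    std::size_t n = 0;
    for (const Node* cur = head; cur; cur = cur->next) {
        ++n;
    }
    return n;
}
```

Each append walks the whole list, which is fine for the handful of devices bounded by MAX_VIDEO_DEVICE; a longer list would normally keep a tail pointer instead.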

4. video_record(void* arg) {

struct Video* video = (struct Video*)arg;

if (video->type == VIDEO_TYPE_ISP && with_mp) {

// xxdk005

video_dpp_init(video) {

video->dpp = new video_dpp();

video->dpp->context = 0;

video->dpp->dpp_thread = 0;

video->dpp->encode_thread = 0;

video->dpp->photo_thread = 0;

video->dpp->display_thread = 0;

video->dpp->live_thread = 0;

ret = dpp_create((DppCtx*)&video->dpp->context, DPP_FUN_3DNR);

if (DPP_OK != ret) {

printf(">> Test dpp_create failed.\n");

return -1;

}

dpp_start(video->dpp->context);

video_dpp_buffer_list_init(&video->dpp->encode_buffer_list);

video_dpp_buffer_list_init(&video->dpp->photo_buffer_list);

if (!USE_SP_DISPLAY)

video_dpp_buffer_list_init(&video->dpp->display_buffer_list);

video_dpp_buffer_list_init(&video->dpp->live_buffer_list);

// create the video_dpp_thread_func thread xxdk006

pthread_create(&video->dpp->dpp_thread, &global_attr,

video_dpp_thread_func, video);

}

}

}

5.

video_dpp_thread_func(void* arg) {

struct Video* video = (struct Video*)arg;

// Create encode thread

// create the encode thread------------------------------------->encode, see section 6

if (with_mp) {

if (is_record_mode) {

// create the video_encode_thread_func thread xxdk007

if (pthread_create(&video->dpp->encode_thread, &global_attr,

video_encode_thread_func, video)) {

printf("Encode thread create failed!\n");

goto out;

}

}

// create the video_live_thread_func thread--------------------------------->live

if (pthread_create(&video->dpp->live_thread, &global_attr,

video_live_thread_func, video)) {

printf("Live thread create failed!\n");

goto out;

}

}

// Create photo thread: video_photo_thread_func------------------------->photo

if (pthread_create(&video->dpp->photo_thread, &global_attr,

video_photo_thread_func, video)) {

printf("Photo pthread create failed!\n");

goto out;

}

// Create display thread: video_display_thread_func--------------------->display

if (!USE_SP_DISPLAY) {

if (pthread_create(&video->dpp->display_thread, &global_attr,

video_display_thread_func, video)) {

printf("Display pthread create failed!\n");

goto out;

}

}

// DPP thread main loop. Get a frame from dpp, then construct buffers

// pushed into next level threads for further processing.--------------->main dpp thread

printf("DPP thread main loop start.\n");

while (video->pthread_run && !video->high_temp && !video->dpp->exit) {

DppFrame frame = NULL;

// 1. get one frame from dpp

DPP_RET ret = dpp_get_frame(video->dpp->context, (DppFrame*)&frame); // context and frame are both typedefs of void*

struct dpp_buffer* encode_buffer = NULL;

struct dpp_buffer* photo_buffer = NULL;

struct dpp_buffer* display_buffer = NULL;

struct dpp_buffer* live_buffer = NULL;

// 2. encode_thread was created above, so this condition holds

if (video->dpp->encode_thread) {

// 2.1

video_dpp_buffer_create_from_frame(&encode_buffer, frame) {

dpp_buffer* buf = NULL;

// create the dpp_buffer buf

video_dpp_buffer_create(&buf) {

(*buf) = (struct dpp_buffer*)calloc(1, sizeof(*buf));

}

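// 3. extract data from the frame obtained from dpp into encode_buffer

if (buf) {

// guess: take the buffer out of the frame obtained from dpp

buf->buffer = dpp_frame_get_buffer(frame); // library function, /external/dpp/src/inc/dpp_frame.h

dpp_buffer_inc_ref(buf->buffer); // increment the reference count

// guess: take the pts out of the frame obtained from dpp

buf->pts = dpp_frame_get_pts(frame);

// guess: take the ISP metadata (data describing the frame's properties) out of the frame obtained from dpp

buf->isp_meta = (struct HAL_Buffer_MetaData*)dpp_frame_get_private_data(frame);

// guess: take the noise information out of the frame obtained from dpp

dpp_frame_get_noise(frame, buf->noise);

// guess: take the sharpness information out of the frame obtained from dpp

dpp_frame_get_sharpness(frame, &buf->sharpness);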

return 0;

}

return -1;

}

// 2.2 push encode_buffer onto encode_buffer_list and wake the waiting thread

video_dpp_buffer_list_push(&video->dpp->encode_buffer_list, encode_buffer) {

pthread_mutex_lock(&list->mutex); // protect against races

list->buffers.push_back(buffer); // append encode_buffer at the tail of encode_buffer_list

pthread_cond_signal(&list->condition); // wake the thread waiting to process encode_buffer

pthread_mutex_unlock(&list->mutex);

}

}

// 3. extract data from the frame obtained from dpp into photo_buffer

if (video->photo.state == PHOTO_ENABLE && video->dpp->photo_thread) {

video->photo.state = PHOTO_BEGIN;

video_dpp_buffer_create_from_frame(&photo_buffer, frame) {

dpp_buffer* buf = NULL;

video_dpp_buffer_create(&buf);

// 3.1 extract the data

if (buf) {

buf->buffer = dpp_frame_get_buffer(frame);

dpp_buffer_inc_ref(buf->buffer);

buf->pts = dpp_frame_get_pts(frame);

buf->isp_meta = (struct HAL_Buffer_MetaData*)dpp_frame_get_private_data(frame);

dpp_frame_get_noise(frame, buf->noise);

dpp_frame_get_sharpness(frame, &buf->sharpness);

(*buffer) = buf;

return 0;

}

}

// 3.2 push photo_buffer onto photo_buffer_list

video_dpp_buffer_list_push(&video->dpp->photo_buffer_list, photo_buffer);

}

// 4. extract data from the frame obtained from dpp into display_buffer

if (video->dpp->display_thread) {

video_dpp_buffer_create_from_frame(&display_buffer, frame);

// 4.2 push display_buffer onto display_buffer_list

video_dpp_buffer_list_push(&video->dpp->display_buffer_list,

display_buffer);

}

// 5. extract data from the frame obtained from dpp into live_buffer

if (video->dpp->live_thread) {

video_dpp_buffer_create_from_frame(&live_buffer, frame);

// 5.2 push live_buffer onto live_buffer_list

video_dpp_buffer_list_push(&video->dpp->live_buffer_list,

live_buffer);

}

dpp_frame_deinit((DppFrame*)frame);

}

//------------------------end of the main dpp thread------------------------------------

}
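
The push in step 2.2 and the blocking pop used by the consumer threads follow the usual mutex plus condition variable pattern. Below is a minimal C++ sketch of that pattern, assuming a list of raw pointers. `BufferList`, `push` and `pop` are illustrative names, and the real video_dpp_buffer_list_pop may behave differently (for example, it may return without a buffer when the thread is asked to stop, which this sketch does not model).

```cpp
#include <cassert>
#include <condition_variable>
#include <list>
#include <mutex>

// Hypothetical thread-safe FIFO of buffer pointers.
template <typename T>
class BufferList {
public:
    // Producer side: append at the tail and wake one waiting consumer.
    void push(T* buffer) {
        std::lock_guard<std::mutex> lk(mutex_);
        buffers_.push_back(buffer);
        condition_.notify_one();
    }

    // Consumer side: take the front element.
    // With wait == true the call blocks until an element is available.
    // With wait == false it returns nullptr when the list is empty.
    T* pop(bool wait) {
        std::unique_lock<std::mutex> lk(mutex_);
        if (wait) {
            // The predicate guards against spurious wakeups.
            condition_.wait(lk, [this] { return !buffers_.empty(); });
        }
        if (buffers_.empty()) {
            return nullptr;
        }
        T* buffer = buffers_.front();
        buffers_.pop_front();
        return buffer;
    }

private:
    std::mutex mutex_;
    std::condition_variable condition_;
    std::list<T*> buffers_;
};
```

In the trace, the dpp thread plays the producer for four such lists (encode, photo, display, live), and each consumer thread pops from its own list.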

6.

// the encode thread------------------------------------->encode

static void* video_encode_thread_func(void* arg) {

struct Video* video = (struct Video*)arg;

while (video->pthread_run && !video->dpp->stop_flag) {

struct dpp_buffer* buffer = NULL;

// 6.1 take one encode_buffer from the encode_buffer_list

video_dpp_buffer_list_pop(&video->dpp->encode_buffer_list, &buffer, true);

if (!buffer)

continue;

int fd = dpp_buffer_get_fd(buffer->buffer);

size_t size = dpp_buffer_get_size(buffer->buffer);

assert(fd > 0);

if (video->save_en) {

// 6.2 build the pre-processing config object

MppEncPrepCfg precfg;

precfg.change = MPP_ENC_PREP_CFG_CHANGE_SHARPEN;

precfg.sharpen.enable_y = buffer->sharpness.src_shp_l;

precfg.sharpen.enable_uv = buffer->sharpness.src_shp_c;

precfg.sharpen.threshold = buffer->sharpness.src_shp_thr;

precfg.sharpen.div = buffer->sharpness.src_shp_div;

precfg.sharpen.coef[0] = buffer->sharpness.src_shp_w0;

precfg.sharpen.coef[1] = buffer->sharpness.src_shp_w1;

precfg.sharpen.coef[2] = buffer->sharpness.src_shp_w2;

precfg.sharpen.coef[3] = buffer->sharpness.src_shp_w3;

precfg.sharpen.coef[4] = buffer->sharpness.src_shp_w4;

if (video->encode_handler)

// 6.3 call the encode handler's control interface

video->encode_handler->h264_encode_control(MPP_ENC_SET_PREP_CFG,

(void*)&precfg);

// 6.4 xxdk008

h264_encode_process(video, NULL, fd, NULL, size, buffer->pts) {

int ret = -1;

video->mp4_encoding = true;

if (!video->pthread_run)

goto exit_h264_encode_process;

if (video->encode_handler) {

BufferData input_data;

input_data.vir_addr_ = virt;

input_data.ion_data_.fd_ = fd;

input_data.ion_data_.handle_ = (ion_user_handle_t)hnd;

input_data.mem_size_ = size;

input_data.update_timeval_ = time_val;

const Buffer input_buffer(input_data);

// xxdk009 -----------------------------------------------------------see section 7 for the analysis

ret = video->encode_handler->h264_encode_process(input_buffer, fmt);

}

exit_h264_encode_process:

video->mp4_encoding = false;

return ret;

}

}

video_dpp_buffer_destroy(buffer);

}

pthread_exit(NULL);

}
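
Section 5 takes one reference on the underlying dpp buffer for every consumer list (dpp_buffer_inc_ref), and the encode thread ends each iteration with video_dpp_buffer_destroy. The trace does not show what the destroy call does. Assuming it drops the reference taken earlier, the lifetime rule looks roughly like the sketch below. `FrameBuffer`, `buffer_inc_ref` and `buffer_dec_ref` are hypothetical stand-ins, not dpp library functions.

```cpp
#include <atomic>
#include <cassert>

// Hypothetical reference-counted frame buffer.
struct FrameBuffer {
    std::atomic<int> refs{1};  // the producer holds the first reference
    bool released = false;     // set once the last reference is dropped
};

// A consumer that keeps the buffer takes its own reference.
void buffer_inc_ref(FrameBuffer* buf) {
    buf->refs.fetch_add(1);
}

// Drop one reference. Returns true when this call released the buffer,
// that is, when it removed the last outstanding reference.
bool buffer_dec_ref(FrameBuffer* buf) {
    if (buf->refs.fetch_sub(1) == 1) {
        buf->released = true;  // a real implementation would free memory here
        return true;
    }
    return false;
}
```

Under this assumption the frame memory stays valid until the slowest of the encode, photo, display and live consumers has finished with it, regardless of the order in which they finish.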

7.

// TODO, move msg_queue handler to thread which create video

int EncodeHandler::h264_encode_process(const Buffer& input_buf,

const PixelFormat& fmt) {

int recorded_sec = 0;

bool del_recoder_mp4 = false;

bool video_save = false;

Message* msg = NULL;

int fd = input_buf.GetFd();

struct timeval& time_val = input_buf.GetTimeval();

unsigned short tl_interval = parameter_get_time_lapse_interval();

if (h264_config.video_config.fmt != fmt) {

// usb camera: Only know the fmt after the first input mjpeg pixels

// decoded successfully.

// CameraMp4* cameramp4; a member of the EncodeHandler object

if (!cameramp4)

h264_config.video_config.fmt = fmt;

else

printf("<%s>warning: fmt change from [%d] to [%d]\n", __func__,

h264_config.video_config.fmt, fmt);

}

assert(msg_queue);

if (!msg_queue->Empty()) {

int msg_ret = 0;

msg = msg_queue->PopFront();

EncoderMessageID msg_id = msg->GetID();

switch (msg_id) {

case START_ENCODE:

if (0 > h264aac_encoder_ref())

msg_ret = -1;

break;

case STOP_ENCODE:

h264aac_encoder_unref();

do_notify(msg->arg2, msg->arg3);

break;

case START_CACHE:

if (msg->arg2) {

MUXER_ALLOC(CameraCacheMuxer, cameracache,

h264aac_encoder_ref, h264aac_encoder_unref, NULL,

h264_encoder, (FFAACEncoder*)msg->arg2);

} else {

MUXER_ALLOC(CameraCacheMuxer, cameracache,

h264aac_encoder_ref, h264aac_encoder_unref, NULL,

h264_encoder, aac_encoder);

}

cameracache->SetCacheDuration((int)msg->arg1);

// h264aac_pkt_dispatcher.AddHandler(cameracache);

h264aac_pkt_dispatcher.AddSpecialMuxer(cameracache);

AudioEncodeHandler::recheck_enc_pcm_always_ = true;

break;

case STOP_CACHE:

// h264aac_pkt_dispatcher.RemoveHandler(cameracache);

h264aac_pkt_dispatcher.RemoveSpecialMuxer(cameracache);

AudioEncodeHandler::recheck_enc_pcm_always_ = true;

MUXER_FREE(cameracache, h264aac_encoder_unref);

do_notify(msg->arg2, msg->arg3);

break;

//---------------------------- MUXER(H264 + AAC) => MP4 -----------------

// this message is put on msg_queue after the record key is pressed

case START_RECODEMP4:

assert(!cameramp4);

// build the file name: path + date and time (year, month, day, hour, minute, second) + (A/B/C...).mp4; A, B, C indicate which device it is

video_record_getfilename(filename, sizeof(filename),

fs_storage_folder_get_bytype(video_type, VIDEOFILE_TYPE),

device_id, "mp4");

memset(&start_timestamp, 0, sizeof(struct timeval));

memset(&pre_frame_time, 0, sizeof(pre_frame_time));

if (msg->arg1) {

// external aac encoder

assert(!aac_encoder);

MUXER_ALLOC(CameraMp4, cameramp4, h264aac_encoder_ref,

h264aac_encoder_unref, filename, h264_encoder,

(FFAACEncoder*)msg->arg1);

} else {

// #define MUXER_ALLOC(CLASSTYPE, VAR, ENCODER_REF_FUNC, ENCODER_UNREF_FUNC,

// URI, VIDEOENCODER, AUDIOENCODER)

// 1. H264Encoder* h264_encoder;

// 2. FFAACEncoder* aac_encoder;

// 3. CameraMp4* cameramp4; all three are private members of EncodeHandler

MUXER_ALLOC(CameraMp4, cameramp4, h264aac_encoder_ref,

h264aac_encoder_unref, filename, h264_encoder,

aac_encoder)

{

h264aac_encoder_ref();

CameraMp4* muxer = CameraMp4::create() {

CameraMp4* pRet = new CameraMp4() {

CameraMp4::CameraMp4() { // constructor

snprintf(format, sizeof(format), "mp4");

direct_piece = true;

exit_id = MUXER_NORMAL_EXIT;

no_async = true; // no cache packet list and no thread

memset(&lbr_pre_timeval, 0, sizeof(struct timeval));

low_frame_rate_mode = false;

}

}

pRet->init() {

int CameraMuxer::init() {

run_func = muxer_process;

return 0;

}

}

return pRet;

}

muxer->init_uri(filename, h264_encoder->

GetVideoConfig().frame_rate) {

......

Message* msg =

Message::create(MUXER_START, HIGH_PRIORITY);

......

msg->arg1 = uri_str;

msg_queue.Push(msg); // enqueue MUXER_START

}

muxer->set_encoder(h264_encoder, aac_encoder);

muxer->start_new_job(global_attr) {

std::unique_lock lk(w_tidlist_mutex);

// already pushed start msg

assert(!msg_queue.Empty() && write_tid == 0); // should not call too wayward

if (no_async) { // set to true in CameraMp4::CameraMp4()

Message* msg = getMessage();

char* uri = NULL;

assert(msg && msg->GetID() == MUXER_START);

global_processor.pre_video_pts = -1;

global_processor.got_first_audio = false;

global_processor.got_first_video = false;

uri = (char*)msg->arg1;

delete msg;

muxer_init(this, &uri, global_processor)) {

muxer->init_muxer_processor(&processor) {

int CameraMuxer::init_muxer_processor(MuxProcessor* process) {

int ret = 0;

AVFormatContext* oc = NULL;

FFContext* ff_ctx = static_cast<FFContext*>(v_encoder->GetHelpContext());

AVStream* video_stream = ff_ctx->GetAVStream();

ff_ctx = a_encoder ? static_cast<FFContext*>(a_encoder->GetHelpContext()) : NULL;

AVStream* audio_stream = ff_ctx ? ff_ctx->GetAVStream() : NULL;

avformat_network_init();

// av_log_set_level(AV_LOG_TRACE);

/* allocate the output media context */

// getOutFormat() ==> mp4

ret = avformat_alloc_output_context2(&oc, NULL, getOutFormat(), NULL);

if (!oc) {

fprintf(stderr, "avformat_alloc_output_context2 failed for %s, ret: %d\n",

getOutFormat(), ret);

return -1;

}

process->av_format_context = oc;

if (video_stream) {

AVStream* st = add_output_stream(oc, video_stream);

if (!st) {

return -1;

}

process->video_avstream = st;

}

if (audio_stream) {

AVStream* st = add_output_stream(oc, audio_stream);

if (!st) {

return -1;

}

process->audio_avstream = st;

}

return 0;

}

}

// uri: /mnt/sdcard/VIDEO-FRONT/20160121_131010_A.mp4

muxer->muxer_write_header(processor.av_format_context(oc), uri) {

int av_ret = 0;

av_dump_format(oc, 0, url, 1) {

void av_dump_format(AVFormatContext *ic(oc), int index,

const char *url, int is_output)

{

int i;

uint8_t *printed = ic->nb_streams ? av_mallocz(ic->nb_streams) : NULL;

if (ic->nb_streams && !printed)

return;

// Output #0, mp4, to '/mnt/sdcard/VIDEO-FRONT/20160121_131010_A.mp4':

av_log(NULL, AV_LOG_INFO, "%s #%d, %s, %s '%s':\n",

is_output ? "Output" : "Input",

index,

is_output ? ic->oformat->name : ic->iformat->name,

is_output ? "to" : "from", url);

dump_metadata(NULL, ic->metadata, " ");

......

// Stream #0:0: Video: h264, nv12, 1920x1080, q=4-48, 10000 kb/s, 30 tbn, 30 tbc

// Stream #0:1: Audio: aac (libfdk_aac), 44100 Hz, stereo, s16, 64 kb/s

for (i = 0; i < ic->nb_streams; i++)

if (!printed[i])

dump_stream_format(ic, i, index, is_output(1));

av_free(printed);

}

}

if (!(oc->oformat->flags & AVFMT_NOFILE)) {

av_ret = avio_open(&oc->pb, url,

AVIO_FLAG_WRITE | (direct_piece ? AVIO_FLAG_RK_DIRECT_PIECE : 0));

} else {

if (url)

strncpy(oc->filename, url, strlen(url));

}

av_ret = avformat_write_header(oc, NULL);

return 0;

}

}//#end muxer_init

processor_ready = true;

} else if (run_func) {

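// this branch is not taken here:

// it creates the muxer_process thread, which then handles packets through wait_pop_packet

// and waits to be woken by packet_list_cond.notify_one() in CameraMuxer::push_packet

// that is: when wait_pop_packet in the muxer_process thread finds the packet list empty, it sleeps and waits

// push_packet adds a packet and then wakes muxer_process to handle it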

pthread_t tid = 0;

if (pthread_create(&tid, attr, run_func, (void*)this)) {

printf("%s create writefile pthread err\n", __func__);

return -1;

}

write_enable = true;

PRINTF("created muxer tid: 0x%x\n", tid);

write_tid = tid;

w_tid_list.push_back(write_tid);

}

}

cameramp4 = muxer;

}

}

if (tl_interval > 0)

cameramp4->set_low_frame_rate(MODE_NORMAL_FIX_TIME, time_val);

thumbnail_gen = true;

// class PacketDispatcher : public Dispatcher

// member object of EncodeHandler: PacketDispatcher h264aac_pkt_dispatcher;

// cameramp4, a muxer object, is added in the role of a handler through the AddHandler interface

// to the list held in std::list<T*> handlers;

h264aac_pkt_dispatcher.AddHandler(cameramp4) {

void AddHandler(T* handler) {

std::lock_guard<std::mutex> lk(list_mutex);

UNUSED(lk);

handlers.push_back(handler);

}

}

break;

case STOP_RECODEMP4:

del_recoder_mp4 = (bool)msg->arg1;

if (!cameramp4) {

if (del_recoder_mp4) {

do_notify(msg->arg2, msg->arg3);

}

break;

}

mp4_encode_stop_current_job();

if (del_recoder_mp4) {

// delete the mp4 muxer

cameramp4->sync_jobs_complete();

delete cameramp4;

cameramp4 = NULL;

h264aac_encoder_unref();

do_notify(msg->arg2, msg->arg3);

PRINTF("del cameramp4 done.\n");

} else if (!del_recoder_mp4 && (bool)msg->arg2) {

// restart immediately

if (0 > mp4_encode_start_job()) {

msg_ret = -1;

break;

}

thumbnail_gen = true;

h264_encoder->SetForceIdrFrame();

}

break;

case SAVE_MP4_IMMEDIATELY:

#ifdef CACHE_ENCODEDATA_IN_MEM

{

char file_path[128];

video_record_getfilename(

file_path, sizeof(file_path),

fs_storage_folder_get_bytype(video_type, LOCKFILE_TYPE), device_id,

"mp4");

assert(cameracache);

cameracache->StoreCacheData(file_path, global_attr);

}

#else

video_save = true;

#endif

break;

case STOP_SAVE_CACHE:

if (cameracache)

cameracache->StopCurrentJobImmediately();

do_notify(msg->arg2, msg->arg3);

break;

case ATTACH_USER_MUXER: {

CameraMuxer* muxer = (CameraMuxer*)msg->arg1;

char* uri = (char*)msg->arg2;

uint32_t val = (uint32_t)msg->arg3;

bool need_video = (val & 1) ? true : false;

bool need_audio = (val & 2) ? true : false;

attach_user_muxer(muxer, uri, need_video, need_audio);

break;

}

case DETACH_USER_MUXER: {

CameraMuxer* muxer = (CameraMuxer*)msg->arg1;

detach_user_muxer(muxer);

do_notify(msg->arg2, msg->arg3);

break;

}

case ATTACH_USER_MDP: {

VPUMDProcessor* p = (VPUMDProcessor*)msg->arg1;

MDAttributes* attr = (MDAttributes*)msg->arg2;

attach_user_mdprocessor(p, attr);

break;

}

case DETACH_USER_MDP: {

VPUMDProcessor* p = (VPUMDProcessor*)msg->arg1;

detach_user_mdprocessor(p);

do_notify(msg->arg2, msg->arg3);

break;

}

case RECORD_RATE_CHANGE: {

VPUMDProcessor* p = (VPUMDProcessor*)msg->arg1;

bool low_frame_rate_mode = (bool)msg->arg2;

if (!low_frame_rate_mode) {

if (cameramp4 && cameramp4->is_low_frame_rate())

video_save = true; // low->normal, save file immediately

} else {

//...

}

if (cameramp4 && fd >= 0) {

cameramp4->set_low_frame_rate(MODE_ONLY_KEY_FRAME, time_val);

}

if (md_handler.IsRunning())

p->set_low_frame_rate(low_frame_rate_mode ? 1 : 0);

break;

}

case GEN_IDR_FRAME:

if (h264_encoder)

h264_encoder->SetForceIdrFrame();

break;

default:

// assert(0);

;

}

#ifdef TRACE_FUNCTION

printf("[%d x %d] ", h264_config.width, h264_config.height);

PRINTF("msg_ret : %d\n\n", msg_ret);

#endif

if (msg_ret < 0) {

printf("error: handle msg < %d > failed\n", msg->GetID());

delete msg;

return msg_ret;

}

}

if (msg)

delete msg;

// fd < 0 is normal for usb when pulling out usb connection

if (fd < 0)

return 0;

if (cameramp4 && thumbnail_gen) // the condition holds

thumbnail_gen = !thumbnail_handler.Process(fd, h264_config.video_config, filename);

if (cameracache)

cameracache->TryGenThumbnail(thumbnail_handler, fd,

h264_config.video_config);

md_handler.Process(fd, h264_config.video_config);

if (h264_encoder) {

int64_t usec = 0;

if (start_timestamp.tv_sec != 0 || start_timestamp.tv_usec != 0) {

usec = (time_val.tv_sec - start_timestamp.tv_sec) * 1000000LL +

(time_val.tv_usec - start_timestamp.tv_usec);

} else {

start_timestamp = time_val;

pre_frame_time = time_val;

}

recorded_sec = usec / 1000000LL;

if (cameramp4) {

if (recorded_sec < 0)

printf("**recorded_sec: %d\n", recorded_sec);

assert(recorded_sec >= 0);

// printf("**recorded_sec: %d\n", recorded_sec);

if (record_time_notify)

record_time_notify(this, recorded_sec);

if (tl_interval > 0) {

usec = (time_val.tv_sec - pre_frame_time.tv_sec) * 1000000LL +

(time_val.tv_usec - pre_frame_time.tv_usec);

if (usec > 0 && usec < tl_interval * 1000000LL)

return 0;

pre_frame_time = time_val;

}

}

// create an EncodedPacket object pkt

EncodedPacket* pkt = new EncodedPacket();

if (!pkt) {

printf("alloc EncodedPacket failed\n");

assert(0);

return -1;

}

pkt->is_phy_buf = true; // -------------- true; used later

int encode_ret = -1;

// if the camera outputs H264 directly, no encoding is needed: the data goes straight into the AVPacket (a member object of EncodedPacket)

if (usb_type == USB_TYPE_H264) {

encode_ret = FFContext::PackEncodedDataToAVPacket(input_buf, pkt->av_pkt);

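} else { // otherwise the VPU is used (through the mpp API) to encode to H264, and the result goes into the AVPacket (a member object of EncodedPacket)

// the encoded data is placed in dst_buf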

Buffer dst_buf(dst_data);

#ifdef USE_WATERMARK

if (parameter_get_video_mark() && watermark->type > 0)

h264_encode_control(MPP_ENC_SET_OSD_DATA_CFG,

&watermark->mpp_osd_data[watermark->buffer_index]);

#endif

// xxdk000 --------------------------------------------------------------see section 8

// return 0 means success!

encode_ret = h264_encoder->EncodeOneFrame(const_cast<Buffer*>(&input_buf),

&dst_buf, nullptr);

if (!encode_ret) {

assert(dst_buf.GetValidDataSize() > 0);

// pack the encoded data into the AVPacket

// the last argument is omitted, so its default value is used: false

encode_ret = FFContext::PackEncodedDataToAVPacket(dst_buf, pkt->av_pkt) {

int FFContext::PackEncodedDataToAVPacket(const Buffer& buf,

AVPacket& out_pkt,

const bool copy) {

AVPacket pkt;

av_init_packet(&pkt);

pkt.data = static_cast<uint8_t*>(buf.GetVirAddr()); // virtual address of the a/v data

pkt.size = buf.GetValidDataSize(); // size of the valid data

if (copy) { // false

int ret = 0;

if (0 != av_copy_packet(&out_pkt, &pkt)) {

printf("in %s, av_copy_packet failed!\n", __func__);

ret = -1;

}

av_packet_unref(&pkt);

return ret;

} else { // ok

out_pkt = pkt;

return 0;

}

}

}

}

if (!encode_ret) {

if (dst_buf.GetUserFlag() & MPP_PACKET_FLAG_INTRA)

pkt->av_pkt.flags |= AV_PKT_FLAG_KEY;

// rk guarantee that return only one slice, and start with 00 00 01

pkt->av_pkt.flags |= AV_PKT_FLAG_ONE_NAL;

}

}

if (!encode_ret) {

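pkt->type = VIDEO_PACKET; // marks the AVPacket as a VIDEO_PACKET

pkt->time_val = time_val; // struct timeval& time_val = input_buf.GetTimeval(); at the top of the function

// guess: the AVPacket (EncodedPacket) produced by compression is dispatched through the Dispatch method of the h264aac_pkt_dispatcher dispatcher

// (to whichever interface, thread or buffer pool is interested in this pkt)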

h264aac_pkt_dispatcher.Dispatch(pkt) {

// member object of EncodeHandler: PacketDispatcher h264aac_pkt_dispatcher;

// class PacketDispatcher : public Dispatcher

// first guess: the EncodedPacket object is dispatched to CameraMuxer

// Dispatch calls the _Dispatch method directly

// the arguments are members of Dispatcher

// std::mutex list_mutex;

// std::list<T*> handlers; (T = CameraMuxer), the element on this list (a handler object) is the cameramp4 added earlier

// std::list<CameraMuxer*> special_muxers_; is a member of the subclass PacketDispatcher

_Dispatch(list_mutex, pkt, &handlers, &special_muxers_) {

static void _Dispatch(std::mutex& list_mutex,

EncodedPacket* pkt,

std::list<CameraMuxer*>* muxers,

std::list<CameraMuxer*>* except_muxers)

{

assert(muxers);

std::lock_guard<std::mutex> lk(list_mutex);

if (pkt->is_phy_buf) { // 为真

bool sync = true;

// std::list<T*> handlers; walk the handler list

for (CameraMuxer* muxer : *muxers) {

if (except_muxers &&

std::find(except_muxers->begin(), except_muxers->end(), muxer) !=

except_muxers->end())

continue;

if (muxer->use_data_async()) { // returns false

sync = false;

break;

}

}

if (!sync) // not executed

pkt->copy_av_packet(); //need copy buffer

}

// std::list<T*> handlers; walk the handler list

for (CameraMuxer* muxer : *muxers) {

if (except_muxers &&

std::find(except_muxers->begin(), except_muxers->end(), muxer) !=

except_muxers->end())

continue;

// push the pkt obtained from encoding one frame onto the packet_list member of every element

// (a CameraMuxer* object) of the list held in std::list<T*> handlers

muxer->push_packet(pkt) {

void CameraMuxer::push_packet(EncodedPacket* pkt) {

std::unique_lock lk(packet_list_mutex);

if (max_video_num > 0) { // this branch is not taken

if (!write_enable)

return;

if (video_packet_num > max_video_num) {

int i = 0;

#ifdef DEBUG

if (strlen(format)) {

// too many packets, write file is so slow

printf(

"write packet is so slow, %s, paket_list size: %d, video num: %d\n",

class_name, packet_list->size(), video_packet_num);

}

#endif

// we need drop one video packet at front, until the next video packet

while (!packet_list->empty()) {

// printf("video->packet_list->size: %d\n", video->packet_list->size());

EncodedPacket* front_pkt = packet_list->front();

PacketType type = front_pkt->type;

if (type == VIDEO_PACKET &&

(front_pkt->av_pkt.flags & AV_PKT_FLAG_KEY)) {

i++;

}

if (i < 2) {

// printf("type: %d, front_pkt: %p\n", type, front_pkt);

packet_list->pop_front();

if (front_pkt->type == VIDEO_PACKET) {

video_packet_num--;

#if 0 // def DEBUG

packet_total_size -= front_pkt->av_pkt.size;

printf("packet_total_size: %d\n", packet_total_size);

#endif

}

front_pkt->unref();

} else {

break;

}

}

}

pkt->ref(); // add one ref in muxer's packet_list

if (pkt->type == VIDEO_PACKET) {

video_packet_num++;

#if 0 // def DEBUG

packet_total_size += pkt->av_pkt.size;

printf("packet_total_size: %d\n", packet_total_size);

#endif

}

packet_list->push_back(pkt);

// printf("video->video_packet_num: %d, video->packet_list->size: %d, pkt:

// %p\n",

// video->video_packet_num, video->packet_list->size(), pkt);

// private member of CameraMuxer: std::condition_variable packet_list_cond;

// notify whoever waits on this condition variable that a pkt is ready to be processed

packet_list_cond.notify_one();

} else { // here the packet is written straight into the container file, producing the MP4

if (!processor_ready)

return;

pkt->ref(); // add one ref

int ret = muxer_write_free_packet(&global_processor, pkt) {

AVPacket avpkt = pkt->av_pkt;

// got packet, write it to file

if (pkt->type == VIDEO_PACKET) {

int64_t frame_rate = v_encoder->GetConfig().video_config.frame_rate;

struct timeval tval = {0, 0};

if (pkt->lbr_time_val.tv_sec != 0 || pkt->lbr_time_val.tv_usec != 0) {

tval = pkt->lbr_time_val;

} else {

tval = pkt->time_val;

// PRINTF_FUNC_LINE;

}

int64_t usec = (tval.tv_sec - process->v_first_timeval.tv_sec) * 1000000LL +

(tval.tv_usec - process->v_first_timeval.tv_usec);

if (usec < 0) {

usec = 0;

}

int64_t pts = usec * frame_rate / 1000000LL;

if (pts <= process->pre_video_pts) {

pts = process->pre_video_pts + 1;

}

avpkt.dts = avpkt.pts = pts;

process->pre_video_pts = pts;

assert(pts >= 0);

ret = muxer_write_packet(process->av_format_context,

process->video_avstream, &avpkt) {

int CameraMuxer::muxer_write_packet(AVFormatContext* ctx,

AVStream* st,

AVPacket* pkt) {

int ret = write_frame(ctx, &c->time_base, st, pkt) {

static int write_frame(AVFormatContext* fmt_ctx,

const AVRational* time_base,

AVStream* st,

AVPacket* pkt) {

/* rescale output packet timestamp values from codec to stream timebase */

av_packet_rescale_ts(pkt, *time_base, st->time_base);

pkt->stream_index = st->index;

/* Write the compressed frame to the media file. */

// log_packet(fmt_ctx, pkt);

// return av_interleaved_write_frame(fmt_ctx, pkt);

// ---------------------------------------ok

return av_write_frame(fmt_ctx, pkt);

}

}

}

}

} else if (pkt->type == AUDIO_PACKET) {

assert(a_encoder);

int64_t input_nb_samples = a_encoder->GetConfig().audio_config.nb_samples;

avpkt.pts =

(pkt->audio_index - process->audio_first_index) * input_nb_samples;

avpkt.pts -= 2 * input_nb_samples;

avpkt.dts = avpkt.pts;

// same as for video data

ret = muxer_write_packet(process->av_format_context,

process->audio_avstream, &avpkt);

}

}

}

}

}//#end push_packet

}

}

} // #end Dispatch

}

if (cameracache)

h264aac_pkt_dispatcher.DispatchToSpecial(pkt);

if (cameramp4 && cameramp4->is_low_frame_rate()) {

usec = (pkt->lbr_time_val.tv_sec - start_timestamp.tv_sec) * 1000000LL +

(pkt->lbr_time_val.tv_usec - start_timestamp.tv_usec);

recorded_sec = usec / 1000000LL;

}

}

pkt->unref(); // drop one reference

}

if (cameramp4 && !del_recoder_mp4) { // does not hold

if (recorded_sec >= parameter_get_recodetime() || video_save) {

// h264aac_encoder_unref();

mp4_encode_stop_current_job();

// if (0 > h264aac_encoder_ref()) {

// return -1;

//}

// cameramp4->set_encoder(h264_encoder, aac_encoder);

// restart immediately

if (0 > mp4_encode_start_job()) {

return -1;

}

thumbnail_gen = true;

h264_encoder->SetForceIdrFrame();

}

}

#ifdef TRACE_FUNCTION

printf("usb_type: %d, [%d x %d] ", usb_type, h264_config.width,

h264_config.height);

PRINTF_FUNC_OUT;

#endif

return 0;

}
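
The video timestamp arithmetic inside muxer_write_free_packet (elapsed microseconds scaled by the frame rate, then forced to increase strictly) can be isolated as follows. This is a sketch under stated assumptions: `TimeVal` stands in for `struct timeval`, and `elapsed_usec` and `next_video_pts` are names invented for this example.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical stand-in for struct timeval.
struct TimeVal {
    int64_t tv_sec;
    int64_t tv_usec;
};

// Microseconds elapsed between `first` and `now` (may be negative).
int64_t elapsed_usec(const TimeVal& now, const TimeVal& first) {
    return (now.tv_sec - first.tv_sec) * 1000000LL +
           (now.tv_usec - first.tv_usec);
}

// Compute the pts of a video packet in units of 1/frame_rate seconds.
// `pre_pts` holds the pts of the previous packet (-1 before the first one)
// and is updated in place, so the returned values increase strictly.
int64_t next_video_pts(const TimeVal& now, const TimeVal& first,
                       int64_t frame_rate, int64_t* pre_pts) {
    int64_t usec = elapsed_usec(now, first);
    if (usec < 0) {
        usec = 0;  // clamp timestamps that precede the first frame
    }
    int64_t pts = usec * frame_rate / 1000000LL;
    if (pts <= *pre_pts) {
        pts = *pre_pts + 1;  // never repeat or go backwards
    }
    *pre_pts = pts;
    return pts;
}
```

With a frame rate of 30, a frame that arrives 100 ms after the first one maps to pts 3 (100000 * 30 / 1000000), while two frames that fall into the same 1/30 s slot get consecutive values because of the strict-increase rule.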

8.

// xxdk000

int MPPH264Encoder::EncodeOneFrame(Buffer* src, Buffer* dst, Buffer* mv) {

int ret = 0;

VideoConfig& vconfig = GetVideoConfig();

/*

typedef struct {

MppCodingType video_type; // MPP_VIDEO_CodingAVC h264/avc

MppCtx ctx; // void*

MppApi* mpi;

MppPacket packet;

MppFrame frame;

MppBuffer osd_data;

}RkMppEncContext;

*/

RkMppEncContext* rkenc_ctx = &mpp_enc_ctx_;

// MppBuffer == void*

MppBuffer pic_buf = NULL;

MppBuffer str_buf = NULL;

MppBuffer mv_buf = NULL;

MppTask task = NULL; // void*

MppBufferInfo info;

if (!src)

return 0;

if (CheckConfigChange())

return -1;

mpp_frame_init(&rkenc_ctx->frame);

mpp_frame_set_width(rkenc_ctx->frame, vconfig.width);

mpp_frame_set_height(rkenc_ctx->frame, vconfig.height);

mpp_frame_set_hor_stride(rkenc_ctx->frame, UPALIGNTO16(vconfig.width));

mpp_frame_set_ver_stride(rkenc_ctx->frame, UPALIGNTO16(vconfig.height));

// Import frame_data

memset(&info, 0, sizeof(info));

info.type = MPP_BUFFER_TYPE_ION;

get_expect_buf_size(vconfig, &info.size);

info.fd = src->GetFd();

info.ptr = src->GetVirAddr();

if (!info.size || info.size > src->Size()) {

fprintf(stderr,

"input src data size wrong, info.size: %lu, buf_size: %lu\n",

info.size, src->Size());

ret = -1;

goto ENCODE_FREE_FRAME;

}

ret = mpp_buffer_import(&pic_buf, &info);

if (ret) {

fprintf(stderr, "pic_buf import buffer failed\n");

goto ENCODE_FREE_FRAME;

}

mpp_frame_set_buffer(rkenc_ctx->frame, pic_buf);

// Import pkt_data

memset(&info, 0, sizeof(info));

info.type = MPP_BUFFER_TYPE_ION;

if (dst) {

info.size = dst->Size();

info.fd = dst->GetFd();

info.ptr = dst->GetVirAddr();

}

if (info.size > 0) {

ret = mpp_buffer_import(&str_buf, &info);

if (ret) {

fprintf(stderr, "str_buf import buffer failed\n");

goto ENCODE_FREE_PACKET;

}

mpp_packet_init_with_buffer(&rkenc_ctx->packet, str_buf);

}

// Import mv_data

memset(&info, 0, sizeof(info));

info.type = MPP_BUFFER_TYPE_ION;

if (mv) {

info.size = mv->Size();

info.fd = mv->GetFd();

info.ptr = mv->GetVirAddr();

}

if (info.size > 0) {

ret = mpp_buffer_import(&mv_buf, &info);

if (ret) {

fprintf(stderr, "mv_buf import buffer failed\n");

goto ENCODE_FREE_MV;

}

}

// Encode process

// poll and dequeue empty task from mpp input port

ret = rkenc_ctx->mpi->poll(rkenc_ctx->ctx, MPP_PORT_INPUT, MPP_POLL_BLOCK);

if (ret) {

mpp_err_f("input poll ret %d\n", ret);

goto ENCODE_FREE_PACKET;

}

ret = rkenc_ctx->mpi->dequeue(rkenc_ctx->ctx, MPP_PORT_INPUT, &task);

if (ret || NULL == task) {

fprintf(stderr, "mpp task input dequeue failed\n");

goto ENCODE_FREE_PACKET;

}

mpp_task_meta_set_frame(task, KEY_INPUT_FRAME, rkenc_ctx->frame);

mpp_task_meta_set_packet(task, KEY_OUTPUT_PACKET, rkenc_ctx->packet);

mpp_task_meta_set_buffer(task, KEY_MOTION_INFO, mv_buf);

ret = rkenc_ctx->mpi->enqueue(rkenc_ctx->ctx, MPP_PORT_INPUT, task);

if (ret) {

fprintf(stderr, "mpp task input enqueue failed\n");

goto ENCODE_FREE_PACKET;

}

task = NULL;

// poll and dequeue the finished task from the mpp output port

ret = rkenc_ctx->mpi->poll(rkenc_ctx->ctx, MPP_PORT_OUTPUT, MPP_POLL_BLOCK);

if (ret) {

mpp_err_f("output poll ret %d\n", ret);

goto ENCODE_FREE_PACKET;

}

ret = rkenc_ctx->mpi->dequeue(rkenc_ctx->ctx, MPP_PORT_OUTPUT, &task);

if (ret || !task) {

fprintf(stderr, "ret %d mpp task output dequeue failed\n", ret);

goto ENCODE_FREE_PACKET;

}

if (task) {

MppFrame frame_out = NULL;

MppPacket packet_out = NULL;

mpp_task_meta_get_packet(task, KEY_OUTPUT_PACKET, &packet_out);

ret = rkenc_ctx->mpi->enqueue(rkenc_ctx->ctx, MPP_PORT_OUTPUT, task);

if (ret) {

fprintf(stderr, "mpp task output enqueue failed\n");

goto ENCODE_FREE_PACKET;

}

task = NULL;

ret = rkenc_ctx->mpi->poll(rkenc_ctx->ctx, MPP_PORT_INPUT, MPP_POLL_BLOCK);

if (ret) {

mpp_err_f("input poll ret %d\n", ret);

goto ENCODE_FREE_PACKET;

}

// Dequeue task from MPP_PORT_INPUT

ret = rkenc_ctx->mpi->dequeue(rkenc_ctx->ctx, MPP_PORT_INPUT, &task);

if (ret) {

fprintf(stderr, "failed to dequeue from input port ret %d\n", ret);

goto ENCODE_FREE_PACKET;

}

mpp_task_meta_get_frame(task, KEY_INPUT_FRAME, &frame_out);

ret = rkenc_ctx->mpi->enqueue(rkenc_ctx->ctx, MPP_PORT_INPUT, task);

if (ret) {

fprintf(stderr, "mpp task input enqueue failed\n");

goto ENCODE_FREE_PACKET;

}

task = NULL;

if (rkenc_ctx->packet != NULL) {

assert(rkenc_ctx->packet == packet_out);

dst->SetValidDataSize(mpp_packet_get_length(rkenc_ctx->packet));

dst->SetUserFlag(mpp_packet_get_flag(rkenc_ctx->packet));

// printf("~~~ got h264 data len: %d kB\n",

// mpp_packet_get_length(rkenc_ctx->packet) / 1024);

}

if (mv_buf) {

assert(packet_out);

mv->SetUserFlag(mpp_packet_get_flag(packet_out));

mv->SetValidDataSize(mv->Size());

}

if (packet_out && packet_out != rkenc_ctx->packet)

mpp_packet_deinit(&packet_out);

}

ENCODE_FREE_PACKET:

if (pic_buf)

mpp_buffer_put(pic_buf);

if (str_buf)

mpp_buffer_put(str_buf);

mpp_packet_deinit(&rkenc_ctx->packet);

rkenc_ctx->packet = NULL;

ENCODE_FREE_FRAME:

mpp_frame_deinit(&rkenc_ctx->frame);

rkenc_ctx->frame = NULL;

ENCODE_FREE_MV:

if (mv_buf)

mpp_buffer_put(mv_buf);

if (rkenc_ctx->osd_data) {

mpp_buffer_put(rkenc_ctx->osd_data);

rkenc_ctx->osd_data = NULL;

}

return ret;

}
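
The stride setup and the source-size check at the top of `EncodeOneFrame` can be illustrated on their own. This is a sketch under stated assumptions: `UPALIGNTO16` is taken to round up to the next multiple of 16 (as its name suggests), and the expected size is taken to be that of an NV12 frame with aligned strides. The real `get_expect_buf_size` is not shown in these notes and may differ. All function names below are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed behaviour of UPALIGNTO16: round up to the next multiple of 16.
static uint32_t up_align_to_16(uint32_t v) { return (v + 15u) & ~15u; }

// Assumed size of one NV12 frame: a full-resolution luma plane plus a
// half-height interleaved chroma plane, both using the aligned strides.
static size_t nv12_buffer_size(uint32_t width, uint32_t height) {
  const size_t hor_stride = up_align_to_16(width);
  const size_t ver_stride = up_align_to_16(height);
  return hor_stride * ver_stride * 3 / 2;
}

// Mirrors the check `!info.size || info.size > src->Size()`: the expected
// size must be non-zero and must fit inside the source buffer.
static bool source_buffer_ok(size_t expected, size_t actual) {
  return expected != 0 && expected <= actual;
}

// Usage: a 1920x1080 frame needs a vertical stride of 1088 lines.
static void demo_stride() {
  assert(up_align_to_16(1080) == 1088);
  const size_t need = nv12_buffer_size(1920, 1080);
  assert(source_buffer_ok(need, need));
  assert(!source_buffer_ok(need, need - 1));
}
```

Under these assumptions a 1080-line frame is padded to 1088 lines, so a source buffer sized for exactly 1920x1080 would fail the check.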

-------------------------------------------------------------------------------------

-------------------------------------------------------------------------------------

static int CameraWinProc(HWND hWnd, int message, WPARAM wParam, LPARAM lParam) {

case MSG_SDCHANGE: {

if (SetMode == MODE_RECORDING) {

if (sdcard == 1) {

if (parameter_get_video_autorec())

startrec(hWnd) {

startrec0(hWnd);

}

} else {

stoprec(hWnd);

}

}

}

......

}
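
The `MSG_SDCHANGE` branch above reduces to a three-way decision. The sketch below restates it as a pure function so the cases are easy to see. `Mode`, `SdAction`, `on_sd_change` and `demo_sd_change` are hypothetical names, and it assumes the `else` pairs with `if (sdcard == 1)`, as the braces in the notes suggest.

```cpp
#include <cassert>

// Only MODE_RECORDING matters for this message; everything else is "Other".
enum class Mode { Recording, Other };
enum class SdAction { None, StartRecording, StopRecording };

// Decide what the window procedure does when the SD card state changes.
//   sdcard == 1: card present; start only if auto-record is enabled.
//   otherwise  : card removed; stop recording.
static SdAction on_sd_change(Mode mode, int sdcard, bool autorec) {
  if (mode != Mode::Recording) return SdAction::None;
  if (sdcard == 1) {
    return autorec ? SdAction::StartRecording : SdAction::None;
  }
  return SdAction::StopRecording;
}

// Usage: the three outcomes in recording mode.
static void demo_sd_change() {
  assert(on_sd_change(Mode::Recording, 1, true) == SdAction::StartRecording);
  assert(on_sd_change(Mode::Recording, 1, false) == SdAction::None);
  assert(on_sd_change(Mode::Recording, 0, true) == SdAction::StopRecording);
}
```

Inserting a card with auto-record disabled does nothing, while removing the card stops recording regardless of that setting.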

static void startrec0(HWND hWnd) {

if (video_rec_state)

return;

InvalidateRect (hWnd, &msg_rcWatermarkTime, FALSE);

InvalidateRect (hWnd, &msg_rcWatermarkLicn, FALSE);

InvalidateRect (hWnd, &msg_rcWatermarkImg, FALSE);

// gc_sd_space();

// test code start

audio_sync_play("/usr/local/share/sounds/VideoRecord.wav");

// test code end

if (video_record_startrec() == 0) {

video_rec_state = true;

FlagForUI_ca.video_rec_state_ui = video_rec_state;

sec = 0;

}

}

extern "C" int video_record_startrec(void) {

int ret = -1;

// 1. lock

pthread_rwlock_rdlock(&notelock);

{

// 2. walk video_list

Video* video = getfastvideo();

while (video) {

EncodeHandler* ehandler = video->encode_handler;

if (ehandler &&

!(video->encode_status & RECORDING_FLAG) &&

video_is_active(video)) {

enablerec += 1;

if (!ehandler->get_audio_capture())

// ???????????????????????????????????????

global_audio_ehandler.AddPacketDispatcher(

ehandler->get_h264aac_pkt_dispatcher());

ehandler->send_record_mp4_start(global_audio_ehandler.GetEncoder()) {

void EncodeHandler::send_record_mp4_start(FFAACEncoder* aac_enc) {

// put a START_RECODEMP4 message into msg_queue

send_message(START_RECODEMP4, aac_enc);

}

}

video->encode_status |= RECORDING_FLAG;

ret = 0;

}

video = video->next;

}

}

pthread_rwlock_unlock(&notelock);

PRINTF("%s, enablerec: %d\n", __func__, enablerec);

PRINTF_FUNC_OUT;

return ret;

}
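
The control flow of `video_record_startrec` (lock, walk the list, post a start message to every idle and active entry, report whether anything was started) can be sketched without the SDK types. Everything below is hypothetical: `VideoNode` stands in for `struct Video`, a `std::mutex` stands in for the `notelock` rwlock, `kRecordingFlag` is an assumed bit value, and a plain `std::queue` stands in for the handler's `msg_queue`. The audio dispatcher wiring is left out.

```cpp
#include <cassert>
#include <mutex>
#include <queue>

constexpr unsigned kRecordingFlag = 0x1;  // assumed bit value
enum Message { kStartRecordMp4 };         // stands in for START_RECODEMP4

struct VideoNode {
  bool has_handler = false;       // stands in for video->encode_handler != NULL
  bool active = false;            // stands in for video_is_active(video)
  unsigned encode_status = 0;
  std::queue<Message> msg_queue;  // stands in for the handler's message queue
  VideoNode* next = nullptr;
};

// Returns 0 if at least one node was asked to start recording, -1 otherwise.
static int start_recording_all(VideoNode* head, std::mutex& list_lock) {
  int ret = -1;
  std::lock_guard<std::mutex> guard(list_lock);  // held for the whole walk
  for (VideoNode* v = head; v != nullptr; v = v->next) {
    const bool idle = !(v->encode_status & kRecordingFlag);
    if (v->has_handler && idle && v->active) {
      v->msg_queue.push(kStartRecordMp4);  // asynchronous start request
      v->encode_status |= kRecordingFlag;  // makes a second call a no-op
      ret = 0;
    }
  }
  return ret;
}

// Usage: one eligible node, one inactive node.
static void demo_start_recording() {
  VideoNode front, back;
  front.has_handler = true;
  front.active = true;
  back.has_handler = true;  // inactive, so it is skipped
  front.next = &back;
  std::mutex lock;
  assert(start_recording_all(&front, lock) == 0);
  assert(start_recording_all(&front, lock) == -1);  // already recording
}
```

Because the flag is set as soon as the message is queued, calling the function twice does not queue a second start request. The second call returns -1, which `startrec0` treats as "nothing started".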