Software versions

Operating system: CentOS Linux release 7.4.1708

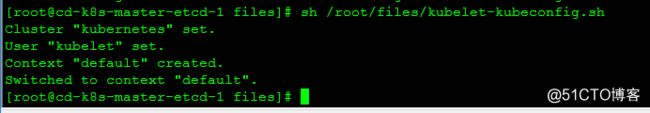

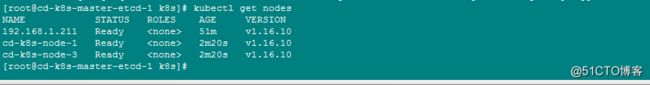

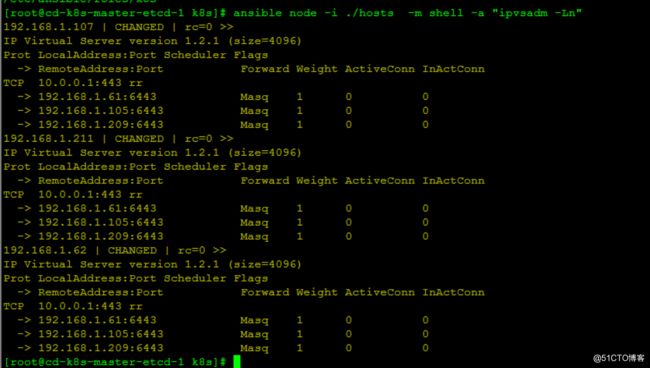

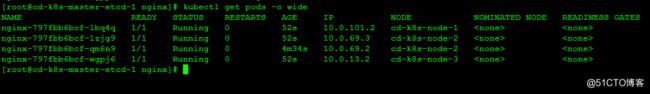

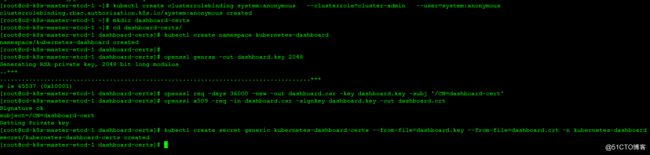

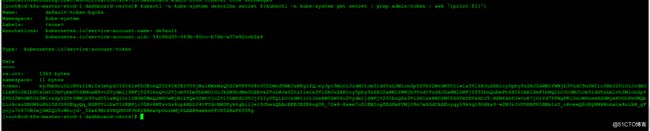

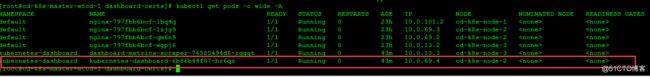

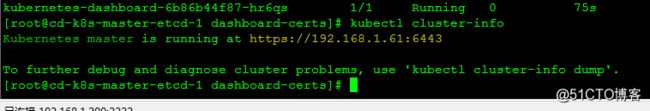

ansible: 2.9.10

etcd: 3.3.22

docker: 19.03.5

kubernetes: v1.16.10

Basic information

| Hostname | IP address | Installed software | Notes |
|---|---|---|---|
| cd-k8s-master-etcd-1 | 192.168.1.61 | ansible, docker, etcd, kube-apiserver, kube-scheduler, kube-controller-manager, flannel, HAProxy, Keepalived | Ansible is installed on this host; unless stated otherwise, all operations are run from here |
| cd-k8s-master-etcd-2 | 192.168.1.105 | ansible, docker, etcd, kube-apiserver, kube-scheduler, kube-controller-manager, flannel, HAProxy, Keepalived | |
| cd-k8s-master-etcd-3 | 192.168.1.209 | ansible, docker, etcd, kube-apiserver, kube-scheduler, kube-controller-manager, flannel, HAProxy, Keepalived | |
| cd-k8s-node-1 | 192.168.1.107 | docker, kubelet, kube-proxy, flannel | |
| cd-k8s-node-2 | 192.168.1.211 | docker, kubelet, kube-proxy, flannel | |
| cd-k8s-node-3 | 192.168.1.62 | docker, kubelet, kube-proxy, flannel | |
| | 192.168.1.3 | VIP | VIP |

I. Initial configuration

1. Configure the hostnames of all hosts

    mkdir -p /root/files/
    cd /root/files/
    cat > /root/files/hosts << EOF
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.1.61 cd-k8s-master-etcd-1
    192.168.1.105 cd-k8s-master-etcd-2
    192.168.1.209 cd-k8s-master-etcd-3
    192.168.1.107 cd-k8s-node-1
    192.168.1.211 cd-k8s-node-2
    192.168.1.62 cd-k8s-node-3
    EOF
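This file later replaces /etc/hosts on every node, so a quick check for duplicated IP addresses or hostnames before distributing it may save some debugging. A minimal sketch; the `check_hosts` function is a hypothetical helper and not part of the original procedure:

```shell
#!/bin/sh
# check_hosts FILE: print any IP address or hostname that appears on more than one entry.
# Hypothetical helper for illustration only.
check_hosts() {
    # Skip comments, short lines and the loopback entries, then count
    # column 1 (IP) and column 2 (first hostname) separately.
    awk '!/^#/ && NF >= 2 && $1 != "127.0.0.1" && $1 != "::1" {
             ip[$1]++; name[$2]++
         }
         END {
             for (i in ip)   if (ip[i]   > 1) print "duplicate IP: " i
             for (n in name) if (name[n] > 1) print "duplicate hostname: " n
         }' "$1"
}

# Example: an empty result means no duplicates were found.
# check_hosts /root/files/hosts
```

The function only reads the file, so it can be run on the control host at any time.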

2. Create the Ansible inventory

    mkdir -p /etc/ansible/roles/k8s/

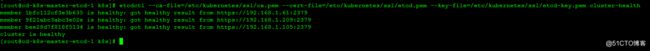

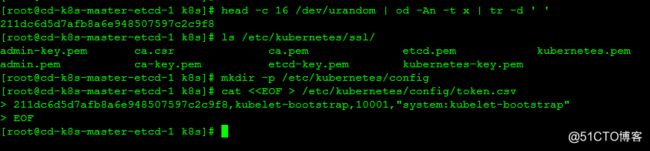

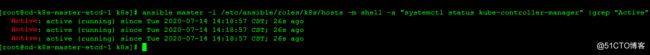

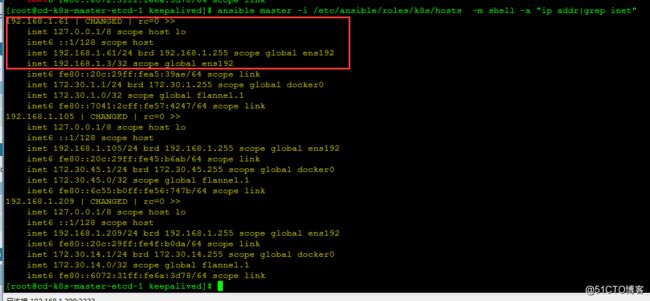

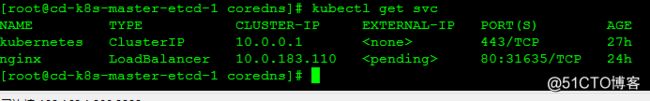

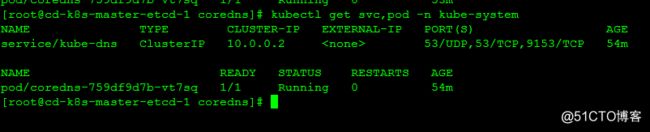

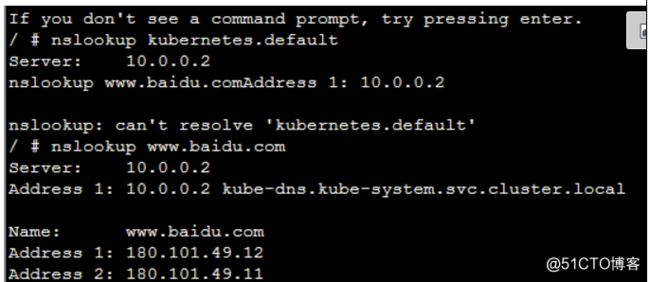

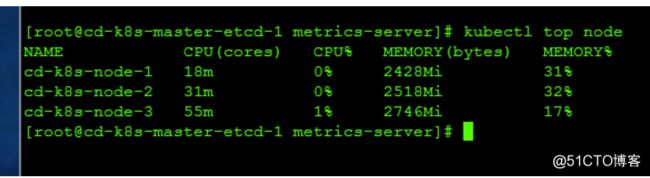

    cat > /etc/ansible/roles/k8s/hosts << EOF
    [all]
    192.168.1.61
    192.168.1.105
    192.168.1.209
    192.168.1.107
    192.168.1.211
    192.168.1.62

    [master]
    192.168.1.61
    192.168.1.105
    192.168.1.209

    [etcd]
    192.168.1.61
    192.168.1.105
    192.168.1.209

    [node]
    192.168.1.107
    192.168.1.211
    192.168.1.62

    [test]
    192.168.1.62
    EOF

3. Initialize every node

    cat > /root/files/k8s.conf << EOF
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.ipv4.ip_nonlocal_bind = 1
    net.ipv4.ip_forward = 1
    vm.swappiness = 0
    EOF

    cat > /etc/ansible/roles/k8s/init.yaml << EOF
    ---
    - name: Init K8S env
      hosts: all
      gather_facts: False
      tasks:
        - name: close swapoff
          shell: swapoff -a
        - name: close firewalld
          shell: systemctl stop firewalld.service && systemctl disable firewalld.service
        # - name: close selinux
        #   shell: setenforce 0
        - name: sed selinux
          shell: sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config
        - name: copy k8s.conf
          copy: src=/root/files/k8s.conf dest=/etc/sysctl.d/k8s.conf owner=root group=root force=yes
        - name: sysctl -p
          shell: sysctl --system
        - name: copy /etc/hosts
          copy: src=/root/files/hosts dest=/etc/hosts owner=root group=root force=yes
        - name: yum install -y wget
          shell: yum install -y wget
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/init.yaml

II. Install Docker

    cat > /etc/ansible/roles/k8s/docker_init.yaml << EOF
    ---
    - name: Install Docker
      hosts: all
      gather_facts: False
      tasks:
        - name: remove old docker version
          shell: yum remove -y docker docker-ce docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-engine
        - name: add yum tools
          shell: yum install -y yum-utils
        - name: add docker yum repos
          shell: yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo
        - name: Install docker
          shell: yum install -y docker-ce-19.03.5 docker-ce-cli-19.03.5 containerd.io
        - name: start docker
          shell: systemctl enable docker && systemctl start docker
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/docker_init.yaml

5. Install the CFSSL tools

For an introduction to CFSSL certificates, see https://github.com/cloudflare/cfssl

    mkdir -p /root/soft/ && cd /root/soft/
    wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
    chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
    mv cfssl_linux-amd64 /usr/bin/cfssl
    mv cfssljson_linux-amd64 /usr/bin/cfssljson
    mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

III. Generate the CA certificate and distribute it to all nodes

    mkdir -p /opt/k8s/certs/ && cd /opt/k8s/certs/

    cat > /opt/k8s/certs/ca-config.json << EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ],
            "expiry": "87600h"
          }
        }
      }
    }
    EOF

    cat > /opt/k8s/certs/ca-csr.json << EOF
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca

    cat > /etc/ansible/roles/k8s/copy_ca.yaml << EOF
    ---
    - name: Copy CA file to all machine
      hosts: all
      gather_facts: False
      tasks:
        - name: mkdir -p /etc/kubernetes/ssl/
          shell: mkdir -p /etc/kubernetes/ssl/
        - name: copy ca files
          copy: src=/opt/k8s/certs/ca.pem dest=/etc/kubernetes/ssl/ca.pem
        - name: copy ca-key files
          copy: src=/opt/k8s/certs/ca-key.pem dest=/etc/kubernetes/ssl/ca-key.pem
        - name: copy ca.csr files
          copy: src=/opt/k8s/certs/ca.csr dest=/etc/kubernetes/ssl/ca.csr
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_ca.yaml

IV. Install the etcd cluster

1. Create the certificate

    cd /opt/k8s/certs/

    cat > /opt/k8s/certs/etcd-csr.json << EOF
    {
      "CN": "etcd",
      "hosts": [
        "127.0.0.1",
        "192.168.1.61",
        "192.168.1.105",
        "192.168.1.209",
        "192.168.1.3",
        "cd-k8s-master-etcd-1",
        "cd-k8s-master-etcd-2",
        "cd-k8s-master-etcd-3"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF
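The `hosts` array of the etcd CSR ends up as the certificate's subject alternative names, so an address that is missing there will fail TLS verification when a peer or client connects through it. A minimal sketch of a pre-flight check, assuming a plain-text match is good enough; `missing_sans` is a hypothetical helper name:

```shell
#!/bin/sh
# missing_sans CSR_JSON HOST...: print each HOST that does not appear as a
# quoted string in the CSR file. Hypothetical helper; it does a fixed-string
# match and does not parse JSON, so it cannot tell "hosts" from other fields.
missing_sans() {
    csr=$1
    shift
    for h in "$@"; do
        grep -qF "\"$h\"" "$csr" || echo "$h"
    done
}

# Example (run on the control host once etcd-csr.json exists):
# missing_sans /opt/k8s/certs/etcd-csr.json 192.168.1.61 192.168.1.105 192.168.1.209
```

An empty result means every address passed on the command line was found in the file.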
    cd /opt/k8s/certs
    cfssl gencert -ca=/opt/k8s/certs/ca.pem -ca-key=/opt/k8s/certs/ca-key.pem -config=/opt/k8s/certs/ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

    cat > /root/files/etcd.service << EOF
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target
    Documentation=https://github.com/coreos

    [Service]
    Type=notify
    WorkingDirectory=/var/lib/etcd/
    ExecStart=/usr/bin/etcd \\
      --data-dir=/var/lib/etcd \\
      --name=HOSTNAME \\
      --cert-file=/etc/kubernetes/ssl/etcd.pem \\
      --key-file=/etc/kubernetes/ssl/etcd-key.pem \\
      --trusted-ca-file=/etc/kubernetes/ssl/ca.pem \\
      --peer-cert-file=/etc/kubernetes/ssl/etcd.pem \\
      --peer-key-file=/etc/kubernetes/ssl/etcd-key.pem \\
      --peer-trusted-ca-file=/etc/kubernetes/ssl/ca.pem \\
      --peer-client-cert-auth \\
      --client-cert-auth \\
      --listen-peer-urls=https://IP:2380 \\
      --initial-advertise-peer-urls=https://IP:2380 \\
      --listen-client-urls=https://IP:2379,http://127.0.0.1:2379 \\
      --advertise-client-urls=https://IP:2379 \\
      --initial-cluster-token=etcd-cluster-0 \\
      --initial-cluster=cd-k8s-master-etcd-1=https://192.168.1.61:2380,cd-k8s-master-etcd-2=https://192.168.1.105:2380,cd-k8s-master-etcd-3=https://192.168.1.209:2380 \\
      --initial-cluster-state=new \\
      --auto-compaction-mode=periodic \\
      --auto-compaction-retention=1 \\
      --max-request-bytes=33554432 \\
      --quota-backend-bytes=6442450944 \\
      --heartbeat-interval=250 \\
      --election-timeout=2000
    Restart=on-failure
    RestartSec=5
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

Note: the --initial-cluster value (highlighted in red in the original post) must list the hostname and IP address of every etcd cluster member.

2. Distribute the certificates and start the etcd cluster

    cat > /etc/ansible/roles/k8s/etcd_init.yaml << EOF
    ---
    - name: Init etcd Cluster
      hosts: etcd
      gather_facts: False
      tasks:
        # - name: add etcd user
        #   shell: useradd -s /sbin/nologin etcd
        - name: mkdir etcd
          shell: mkdir -p /var/lib/etcd && mkdir -p /etc/kubernetes/ssl && chown -R etcd:etcd /var/lib/etcd
        - name: copy etcd files
          copy: src=/root/files/etcd dest=/usr/bin/etcd owner=root group=root force=yes mode=755
        - name: copy etcdctl file
          copy: src=/root/files/etcdctl dest=/usr/bin/etcdctl owner=root group=root force=yes mode=755
        - name: copy etcd.service file
          copy: src=/root/files/etcd.service dest=/etc/systemd/system/etcd.service owner=root group=root force=yes mode=755
        - name: replace etcd.service info
          shell: IP=\`ip addr|grep "192.168.1."|awk '{print \$2}'|awk -F '/' '{print \$1}'\` && sed -i 's#IP#'\${IP}'#g' /etc/systemd/system/etcd.service && hostname=\`hostname\` && sed -i 's#HOSTNAME#'\${hostname}'#g' /etc/systemd/system/etcd.service
        - name: copy cert
          copy: src=/opt/k8s/certs/ca.pem dest=/etc/kubernetes/ssl/ca.pem owner=root group=root force=yes mode=755
        - name: copy ca-key.pem
          copy: src=/opt/k8s/certs/ca-key.pem dest=/etc/kubernetes/ssl/ca-key.pem owner=root group=root force=yes mode=755
        - name: copy etcd.pem
          copy: src=/opt/k8s/certs/etcd.pem dest=/etc/kubernetes/ssl/etcd.pem owner=root group=root force=yes mode=755
        - name: copy etcd-key.pem
          copy: src=/opt/k8s/certs/etcd-key.pem dest=/etc/kubernetes/ssl/etcd-key.pem owner=root group=root force=yes mode=755
        - name: start etcd.service
          shell: systemctl daemon-reload && systemctl enable etcd.service && systemctl restart etcd.service
    EOF

    ansible etcd -i /etc/ansible/roles/k8s/hosts -m group -a 'name=etcd'
    ansible etcd -i /etc/ansible/roles/k8s/hosts -m user -a 'name=etcd group=etcd comment="etcd user" shell=/sbin/nologin home=/var/lib/etcd createhome=no'
    ansible etcd -i /etc/ansible/roles/k8s/hosts -m file -a 'path=/var/lib/etcd state=directory owner=etcd group=etcd'
    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/etcd_init.yaml

3. Check the etcd cluster status

    # For etcd 3.3, run:
    etcdctl --ca-file=/etc/kubernetes/ssl/ca.pem --cert-file=/etc/kubernetes/ssl/etcd.pem --key-file=/etc/kubernetes/ssl/etcd-key.pem cluster-health

V. Install the master components

1. Create the certificate

    mkdir -p /opt/k8s/certs/

    cat > /opt/k8s/certs/admin-csr.json << EOF
    {
      "CN": "admin",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "system:masters",
          "OU": "System"
        }
      ]
    }
    EOF

    cd /opt/k8s/certs/
    cfssl gencert -ca=/opt/k8s/certs/ca.pem -ca-key=/opt/k8s/certs/ca-key.pem -config=/opt/k8s/certs/ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

    cat > /etc/ansible/roles/k8s/master_init.yaml << EOF
    ---
    - name: Copy k8s master files
      hosts: master
      gather_facts: False
      tasks:
        - name: copy kube-apiserver
          copy: src=/root/files/kube-apiserver dest=/usr/bin/kube-apiserver owner=root group=root mode=755
        - name: copy kube-scheduler
          copy: src=/root/files/kube-scheduler dest=/usr/bin/kube-scheduler owner=root group=root mode=755
        - name: copy kubectl file
          copy: src=/root/files/kubectl dest=/usr/bin/kubectl owner=root group=root mode=755
        - name: copy kube-controller-manager
          copy: src=/root/files/kube-controller-manager dest=/usr/bin/kube-controller-manager owner=root group=root mode=755
        - name: copy admin.pem
          copy: src=/opt/k8s/certs/admin.pem dest=/etc/kubernetes/ssl/admin.pem owner=root group=root
        - name: copy admin-key.pem
          copy: src=/opt/k8s/certs/admin-key.pem dest=/etc/kubernetes/ssl/admin-key.pem owner=root group=root
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/master_init.yaml

    cat > /etc/ansible/roles/k8s/node_init.yaml << EOF
    ---
    - name: Copy k8s node files
      hosts: node
      gather_facts: False
      tasks:
        - name: copy kube-proxy
          copy: src=/root/files/kube-proxy dest=/usr/bin/kube-proxy owner=root group=root mode=755
        - name: copy kubelet
          copy: src=/root/files/kubelet dest=/usr/bin/kubelet owner=root group=root mode=755
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/node_init.yaml

    kubectl config set-cluster kubernetes \
      --certificate-authority=/etc/kubernetes/ssl/ca.pem \
      --embed-certs=true \
      --server=https://127.0.0.1:6443

    # Set the client credentials
    kubectl config set-credentials admin \
      --client-certificate=/etc/kubernetes/ssl/admin.pem \
      --embed-certs=true \
      --client-key=/etc/kubernetes/ssl/admin-key.pem

    # Set the context
    kubectl config set-context admin@kubernetes \
      --cluster=kubernetes \
      --user=admin

    # Set the default context
    kubectl config use-context admin@kubernetes

2. Distribute the kubeconfig file

    cat > /etc/ansible/roles/k8s/copy_kubeconfig.yaml << EOF
    ---
    - name: Copy k8s kubeconfig files
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir -p home kube dir
          shell: mkdir -p /root/.kube
        - name: copy kubeconfig
          copy: src=/root/.kube/config dest=/root/.kube/config owner=root group=root
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_kubeconfig.yaml

3. Deploy the kube-apiserver component

    cat > /opt/k8s/certs/kubernetes-csr.json << EOF
    {
      "CN": "kubernetes",
      "hosts": [
        "127.0.0.1",
        "192.168.1.61",
        "192.168.1.105",
        "192.168.1.209",
        "192.168.1.3",
        "10.0.0.1",
        "localhost",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cd /opt/k8s/certs/
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

    cat > /etc/ansible/roles/k8s/copy_master_k8s-key.yaml << EOF
    ---
    - name: Copy Kubernetes key files
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir -p /etc/kubernetes/ssl
          shell: mkdir -p /etc/kubernetes/ssl/
        - name: copy kubernetes.pem file
          copy: src=/opt/k8s/certs/kubernetes.pem dest=/etc/kubernetes/ssl/kubernetes.pem owner=root group=root
        - name: copy kubernetes-key.pem file
          copy: src=/opt/k8s/certs/kubernetes-key.pem dest=/etc/kubernetes/ssl/kubernetes-key.pem owner=root group=root
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_master_k8s-key.yaml

Problem: `kubectl get cs` may fail with: The connection to the server 127.0.0.1:6443 was refused - did you specify the right host or port?
Workaround: temporarily edit the kubeconfig (vi $HOME/.kube/config) and change server: https://127.0.0.1:6443 to server: https://192.168.1.105:6443. This has to be changed on every node. kube-scheduler may also fail at startup with an error such as "failed to acquire lease kube-system/kube-scheduler".

Configure the token file used by kube-apiserver clients. First create the TLS Bootstrapping token:

    [root@cd-k8s-master-etcd-1 k8s]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '
    211dc6d5d7afb8a6e948507597c2c9f8
    [root@cd-k8s-master-etcd-1 k8s]# mkdir -p /etc/kubernetes/config
    # The target path was lost in the original; it is inferred from the playbook below.
    [root@cd-k8s-master-etcd-1 k8s]# cat > /etc/kubernetes/config/token.csv << EOF
    211dc6d5d7afb8a6e948507597c2c9f8,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
    EOF

    cat > /etc/ansible/roles/k8s/copy_master_k8s-key.yaml << EOF
    ---
    - name: Copy token to master
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir -p /etc/kubernetes/config
          shell: mkdir -p /etc/kubernetes/config/
        - name: copy token file to master
          copy: src=/etc/kubernetes/config/token.csv dest=/etc/kubernetes/config/token.csv owner=root group=root
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_master_k8s-key.yaml

Create the apiserver configuration file:

    # The target path was lost in the original; it is inferred from the
    # copy_master_kube-apiserver.yaml playbook, which reads /root/files/kube-apiserver.
    # That path is also where the kube-apiserver binary was kept, and the binary
    # has already been distributed by master_init.yaml.
    cat > /root/files/kube-apiserver << EOF
    KUBE_APISERVER_OPTS="--logtostderr=true \\
    --v=4 \\
    --etcd-servers=https://192.168.1.61:2379,https://192.168.1.105:2379,https://192.168.1.209:2379 \\
    --bind-address=IP \\
    --secure-port=6443 \\
    --advertise-address=IP \\
    --allow-privileged=true \\
    --service-cluster-ip-range=10.0.0.0/16 \\
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \\
    --authorization-mode=RBAC,Node \\
    --enable-bootstrap-token-auth \\
    --token-auth-file=/etc/kubernetes/config/token.csv \\
    --service-node-port-range=30000-50000 \\
    --tls-cert-file=/etc/kubernetes/ssl/kubernetes.pem \\
    --tls-private-key-file=/etc/kubernetes/ssl/kubernetes-key.pem \\
    --client-ca-file=/etc/kubernetes/ssl/ca.pem \\
    --service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \\
    --etcd-cafile=/etc/kubernetes/ssl/ca.pem \\
    --etcd-certfile=/etc/kubernetes/ssl/kubernetes.pem \\
    --etcd-keyfile=/etc/kubernetes/ssl/kubernetes-key.pem"
    EOF
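The bootstrap token created earlier in this step has to be identical in token.csv and in the kubelet bootstrap kubeconfig, so it can help to generate it once and format the line in one place. A minimal sketch under that assumption; the two function names are hypothetical, while the user, uid and group values are the ones this guide uses:

```shell
#!/bin/sh
# new_bootstrap_token: print 16 random bytes as 32 lowercase hex characters.
new_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# token_csv_line TOKEN: format one static-token entry as token,user,uid,"group".
token_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

# Example (writes the file that the playbook above distributes):
# token_csv_line "$(new_bootstrap_token)" > /etc/kubernetes/config/token.csv
```

Using `od -tx1` with `tr -d ' \n'` avoids depending on how `od` groups bytes into words.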
Create the kube-apiserver unit file:

    cat > /root/files/kube-apiserver.service << EOF
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=/etc/kubernetes/config/kube-apiserver
    ExecStart=/bin/kube-apiserver \$KUBE_APISERVER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    cat > /etc/ansible/roles/k8s/copy_master_kube-apiserver.yaml << EOF
    ---
    - name: Copy kube-apiserver file to master
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir /etc/kubernetes/config/
          shell: mkdir -p /etc/kubernetes/config/
        - name: copy kube-apiserver config file
          copy: src=/root/files/kube-apiserver dest=/etc/kubernetes/config/kube-apiserver owner=root group=root force=yes
        - name: replace kube-apiserver config info
          shell: IP=\`ip addr|grep "192.168.1."|awk '{print \$2}'|awk -F '/' '{print \$1}'\` && sed -i 's#IP#'\${IP}'#g' /etc/kubernetes/config/kube-apiserver
        - name: copy kube-apiserver.service
          copy: src=/root/files/kube-apiserver.service dest=/usr/lib/systemd/system/kube-apiserver.service owner=root group=root force=yes mode=755
        - name: systemctl reload && add reboot machine && start kube-apiserver.service
          shell: systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_master_kube-apiserver.yaml

    # Check that the service started
    [root@cd-k8s-master-etcd-1 k8s]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl status kube-apiserver"|grep "Active"

4. Deploy the kube-scheduler component

    cat > /root/files/kube-scheduler << EOF
    KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect"
    EOF

Parameter notes: --master connects to the local apiserver; --leader-elect enables automatic leader election when several instances of the component are running (HA).

    cat > /root/files/kube-scheduler.service << EOF
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/etc/kubernetes/config/kube-scheduler
    ExecStart=/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    cat > /etc/ansible/roles/k8s/copy_master_kube-scheduler.yaml << EOF
    ---
    - name: Copy kube-scheduler file to master
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir /etc/kubernetes/config/
          shell: mkdir -p /etc/kubernetes/config/
        - name: copy kube-scheduler config
          copy: src=/root/files/kube-scheduler dest=/etc/kubernetes/config/kube-scheduler owner=root group=root force=yes
        - name: copy kube-scheduler.service
          copy: src=/root/files/kube-scheduler.service dest=/usr/lib/systemd/system/kube-scheduler.service owner=root group=root force=yes mode=755
        - name: systemctl reload && add reboot machine && start kube-scheduler.service
          shell: systemctl daemon-reload && systemctl enable kube-scheduler && systemctl start kube-scheduler.service
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_master_kube-scheduler.yaml

    # Check the kube-scheduler status
    [root@cd-k8s-master-etcd-1 k8s]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl status kube-scheduler"|grep "Active"

5. Deploy the controller-manager component

    cat > /root/files/kube-controller-manager << EOF
    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\
    --v=4 \\
    --master=127.0.0.1:8080 \\
    --leader-elect=true \\
    --address=127.0.0.1 \\
    --service-cluster-ip-range=10.0.0.0/24 \\
    --cluster-name=kubernetes \\
    --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \\
    --cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \\
    --root-ca-file=/etc/kubernetes/ssl/ca.pem \\
    --service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem"
    EOF

    cat > /root/files/kube-controller-manager.service << EOF
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=/etc/kubernetes/config/kube-controller-manager
    ExecStart=/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    cat > /etc/ansible/roles/k8s/copy_master_kube-controller-manager.yaml << EOF
    ---
    - name: Copy Kube-controller-manager file to master
      hosts: master
      gather_facts: False
      tasks:
        - name: mkdir /etc/kubernetes/config/
          shell: mkdir -p /etc/kubernetes/config/
        - name: copy kube-controller-manager config file
          copy: src=/root/files/kube-controller-manager dest=/etc/kubernetes/config/kube-controller-manager owner=root group=root force=yes
        - name: copy kube-controller-manager.service
          copy: src=/root/files/kube-controller-manager.service dest=/usr/lib/systemd/system/kube-controller-manager.service owner=root group=root force=yes mode=755
        - name: systemctl reload && add reboot machine && start kube-controller-manager.service
          shell: systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl start kube-controller-manager.service
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_master_kube-controller-manager.yaml

    # Check the kube-controller-manager status
    [root@cd-k8s-master-etcd-1 k8s]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl status kube-controller-manager" |grep "Active"

VI. Master high availability (HAProxy + Keepalived)

Install HAProxy and Keepalived. The kube-master backends below use the master IP addresses from the host table.

    cat > /root/files/haproxy.cfg << EOF
    global
        log /dev/log local0
        log /dev/log local1 notice
        chroot /var/lib/haproxy
        stats socket /var/run/haproxy-admin.sock mode 660 level admin
        stats timeout 30s
        user haproxy
        group haproxy
        daemon
        nbproc 1

    defaults
        log global
        timeout connect 5000
        timeout client 10m
        timeout server 10m

    listen admin_stats
        bind 0.0.0.0:10080
        mode http
        log 127.0.0.1 local0 err
        stats refresh 30s
        stats uri /status
        stats realm welcome login\ Haproxy
        stats auth admin:123456
        stats hide-version
        stats admin if TRUE

    listen kube-master
        bind 0.0.0.0:8443
        mode tcp
        option tcplog
        balance roundrobin
        server 192.168.1.61 192.168.1.61:6443 check inter 2000 fall 2 rise 2 weight 1
        server 192.168.1.105 192.168.1.105:6443 check inter 2000 fall 2 rise 2 weight 1
        server 192.168.1.209 192.168.1.209:6443 check inter 2000 fall 2 rise 2 weight 1
    EOF

    cat > /etc/ansible/roles/k8s/install_ha.yaml << EOF
    ---
    - name: Install Haproxy && KeepAlive software
      hosts: master
      gather_facts: False
      tasks:
        - name: Install Haproxy
          shell: yum install -y haproxy
        - name: Install KeepAlive
          shell: yum install -y keepalived
        # - name: backup haproxy config
        #   shell: mv /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak
        - name: copy haproxy config
          copy: src=/root/files/haproxy.cfg dest=/etc/haproxy/haproxy.cfg owner=haproxy group=haproxy force=yes
        - name: start haproxy
          shell: systemctl enable haproxy && systemctl restart haproxy
        # - name: backup KeepAlived config
        #   shell: cp /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/install_ha.yaml

    # Check the HAProxy status
    [root@cd-k8s-master-etcd-1 k8s]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl status haproxy"|grep "Active"

Configure Keepalived:

    [root@cd-k8s-master-etcd-1 keepalived]# vi /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived
    global_defs {
        router_id master1
    }
    vrrp_instance VI_1 {
        state MASTER
        interface ens192
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.1.3
        }
    }

Notes:

1) In global_defs, keep only router_id (it must differ on every node).

2) Adjust interface (the NIC the VIP is bound to) and virtual_ipaddress (the VIP address and prefix length).

3) On the other nodes, only change state to BACKUP and set priority below 100.

The complete configuration on all masters:

    [root@cd-k8s-master-etcd-1 keepalived]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "cat /etc/keepalived/keepalived.conf"
    192.168.1.105 | CHANGED | rc=0 >>
    ! Configuration File for keepalived
    global_defs {
        router_id master2
    }
    vrrp_instance VI_1 {
        state BACKUP
        interface ens192
        virtual_router_id 51
        priority 82
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.1.3
        }
    }
    192.168.1.61 | CHANGED | rc=0 >>
    ! Configuration File for keepalived
    global_defs {
        router_id master1
    }
    vrrp_instance VI_1 {
        state MASTER
        interface ens192
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.1.3
        }
    }
    192.168.1.209 | CHANGED | rc=0 >>
    ! Configuration File for keepalived
    global_defs {
        router_id master3
    }
    vrrp_instance VI_1 {
        state BACKUP
        interface ens192
        virtual_router_id 51
        priority 83
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.1.3
        }
    }

    # Start the Keepalived service
    [root@cd-k8s-master-etcd-1 keepalived]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl enable keepalived && systemctl start keepalived"

    # Check the VIP
    [root@cd-k8s-master-etcd-1 keepalived]# ansible master -i /etc/ansible/roles/k8s/hosts -m shell -a "ip addr|grep inet"

VII. Deploy the worker nodes

Once TLS authentication is enabled on the master apiserver, the kubelet on each node must present a valid certificate signed by the CA before it can talk to the apiserver and join the cluster. Signing certificates by hand becomes tedious when there are many nodes, which is what the TLS Bootstrapping mechanism addresses: the kubelet requests a certificate from the apiserver automatically as a low-privilege user, and the apiserver signs the kubelet certificate dynamically.

1. Create the kubelet bootstrap kubeconfig file

    [root@cd-k8s-master-etcd-1 files]# cat /etc/kubernetes/config/token.csv
    211dc6d5d7afb8a6e948507597c2c9f8,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

    cat > /root/files/environment.sh << EOF
    # Create the kubelet bootstrapping kubeconfig
    BOOTSTRAP_TOKEN=211dc6d5d7afb8a6e948507597c2c9f8
    KUBE_APISERVER="https://192.168.1.3:8443"
    # Set the cluster parameters
    kubectl config set-cluster kubernetes \\
      --certificate-authority=/etc/kubernetes/ssl/ca.pem \\
      --embed-certs=true \\
      --server=\${KUBE_APISERVER} \\
      --kubeconfig=bootstrap.kubeconfig
    # Set the client credentials
    kubectl config set-credentials kubelet-bootstrap \\
      --token=\${BOOTSTRAP_TOKEN} \\
      --kubeconfig=bootstrap.kubeconfig
    # Set the context
    kubectl config set-context default \\
      --cluster=kubernetes \\
      --user=kubelet-bootstrap \\
      --kubeconfig=bootstrap.kubeconfig
    # Set the default context
    kubectl config use-context default --kubeconfig=bootstrap.kubeconfig
    EOF

    [root@cd-k8s-master-etcd-1 files]# sh /root/files/environment.sh
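The generated scripts in this guide are written through unquoted heredocs (`<< EOF`), so the shell expands `${...}` and processes backslashes while the file is being written. That is why variables meant for the generated script must be escaped as `\$` and line continuations as `\\`. Quoting the delimiter (`<< 'EOF'`) switches that expansion off. A small sketch of the difference; `DEMO_TOKEN` and the temporary files are made up for illustration:

```shell
#!/bin/sh
# Demonstrates heredoc expansion; DEMO_TOKEN and the temp files are illustrative only.
DEMO_TOKEN=from-writer-shell
unquoted=$(mktemp)
quoted=$(mktemp)

# Unquoted delimiter: ${DEMO_TOKEN} is expanded now, \${DEMO_TOKEN} is deferred.
cat > "$unquoted" << EOF
now=${DEMO_TOKEN}
later=\${DEMO_TOKEN}
EOF

# Quoted delimiter: the body is written verbatim, no escaping needed.
cat > "$quoted" << 'EOF'
later=${DEMO_TOKEN}
EOF

cat "$unquoted" "$quoted"
```

With an unquoted delimiter, an unescaped variable that is not set in the writing shell is written out as an empty string, which is easy to miss until the generated script runs.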
2. Create the kubelet.kubeconfig file

    cat > /root/files/envkubelet.kubeconfig.sh << EOF
    # Create the kubelet kubeconfig
    BOOTSTRAP_TOKEN=211dc6d5d7afb8a6e948507597c2c9f8
    KUBE_APISERVER="https://192.168.1.3:8443"
    # Set the cluster parameters
    kubectl config set-cluster kubernetes \\
      --certificate-authority=/etc/kubernetes/ssl/ca.pem \\
      --embed-certs=true \\
      --server=\${KUBE_APISERVER} \\
      --kubeconfig=kubelet.kubeconfig
    # Set the client credentials
    kubectl config set-credentials kubelet \\
      --token=\${BOOTSTRAP_TOKEN} \\
      --kubeconfig=kubelet.kubeconfig
    # Set the context
    kubectl config set-context default \\
      --cluster=kubernetes \\
      --user=kubelet \\
      --kubeconfig=kubelet.kubeconfig
    # Set the default context
    kubectl config use-context default --kubeconfig=kubelet.kubeconfig
    EOF

    [root@cd-k8s-master-etcd-1 files]# sh /root/files/envkubelet.kubeconfig.sh

3. Bind the kubelet-bootstrap user to the system cluster role

    kubectl create clusterrolebinding kubelet-bootstrap \
      --clusterrole=system:node-bootstrapper \
      --user=kubelet-bootstrap

4. Create the kubelet parameter template files

    cat > /root/files/kubelet << EOF
    KUBELET_OPTS="--logtostderr=true \\
    --v=4 \\
    --hostname-override=HOSTNAME \\
    --kubeconfig=/etc/kubernetes/config/kubelet.kubeconfig \\
    --bootstrap-kubeconfig=/etc/kubernetes/config/bootstrap.kubeconfig \\
    --config=/etc/kubernetes/config/kubelet-config.yaml \\
    --cert-dir=/etc/kubernetes/ssl \\
    --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
    EOF

    cat > /root/files/bootstrap.kubeconfig << EOF
    apiVersion: v1
    clusters:
    - cluster:
        certificate-authority: /etc/kubernetes/ssl/ca.pem
        server: https://192.168.1.3:8443
      name: kubernetes
    contexts:
    - context:
        cluster: kubernetes
        user: kubelet-bootstrap
      name: default
    current-context: default
    kind: Config
    preferences: {}
    users:
    - name: kubelet-bootstrap
      user:
        token: 211dc6d5d7afb8a6e948507597c2c9f8
    EOF

    cat > /root/files/kubelet-config.yaml << EOF
    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: 0.0.0.0
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDNS:
    - 10.0.0.2
    clusterDomain: cluster.local
    failSwapOn: false
    authentication:
      anonymous:
        enabled: true
      webhook:
        cacheTTL: 2m0s
        enabled: true
      x509:
        clientCAFile: /etc/kubernetes/ssl/ca.pem
    authorization:
      mode: Webhook
      webhook:
        cacheAuthorizedTTL: 5m0s
        cacheUnauthorizedTTL: 30s
    evictionHard:
      imagefs.available: 15%
      memory.available: 100Mi
      nodefs.available: 10%
      nodefs.inodesFree: 5%
    maxOpenFiles: 1000000
    maxPods: 110
    EOF

    cat > /root/files/kubelet.kubeconfig << EOF
    apiVersion: v1
    clusters:
    - cluster:
        certificate-authority: /etc/kubernetes/ssl/ca.pem
        server: https://192.168.1.3:8443
      name: default-cluster
    contexts:
    - context:
        cluster: default-cluster
        namespace: default
        user: default-auth
      name: default-context
    current-context: default-context
    kind: Config
    preferences: {}
    users:
    - name: default-auth
      user:
        client-certificate: /etc/kubernetes/ssl/kubelet-client-current.pem
        client-key: /etc/kubernetes/ssl/kubelet-client-current.pem
    EOF

    cat > /root/files/kubelet.service << EOF
    [Unit]
    Description=Kubernetes Kubelet
    After=docker.service
    Requires=docker.service

    [Service]
    EnvironmentFile=/etc/kubernetes/config/kubelet
    ExecStart=/bin/kubelet \$KUBELET_OPTS
    Restart=on-failure
    KillMode=process

    [Install]
    WantedBy=multi-user.target
    EOF

    cat > /etc/ansible/roles/k8s/copy_node-kubelet.yaml << EOF
    ---
    - name: Copy Node files to nodes
      hosts: node
      gather_facts: False
      tasks:
        - name: mkdir /etc/kubernetes/config/
          shell: mkdir -p /etc/kubernetes/config/
        - name: copy kubelet file
          copy: src=/root/files/kubelet dest=/etc/kubernetes/config/kubelet owner=root group=root force=yes
        - name: copy bootstrap.kubeconfig file
          copy: src=/root/files/bootstrap.kubeconfig dest=/etc/kubernetes/config/bootstrap.kubeconfig owner=root group=root force=yes
        - name: copy kubelet-config.yaml file
          copy: src=/root/files/kubelet-config.yaml dest=/etc/kubernetes/config/kubelet-config.yaml owner=root group=root force=yes
        - name: copy kubelet.kubeconfig file
          copy: src=/root/files/kubelet.kubeconfig dest=/etc/kubernetes/config/kubelet.kubeconfig owner=root group=root force=yes
        - name: copy kubelet.service file
          copy: src=/root/files/kubelet.service dest=/usr/lib/systemd/system/kubelet.service owner=root group=root force=yes mode=755
        - name: replace kubelet info
          shell: HOSTNAME=\`hostname\` && sed -i 's#HOSTNAME#'\${HOSTNAME}'#g' /etc/kubernetes/config/kubelet
        - name: add reboot and start kubelet.service
          shell: systemctl enable kubelet.service && systemctl start kubelet.service
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_node-kubelet.yaml

    [root@cd-k8s-master-etcd-1 k8s]# kubectl get csr
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-Arp8iLv-iuvwaRnKx0K-EFa1vBXh-byRJzqxqj5jFAs   3s    kubelet-bootstrap   Pending
    node-csr-w8UjzWe9HAz583WTP0iI7kY8bojJaX0s0cEAyPrx4qo   50m   kubelet-bootstrap   Approved,Issued
    node-csr-zqE1LTAyJ3POfd0wUuYdvfhWsyucuEB4I43LP-5jAxI   2s    kubelet-bootstrap   Pending

    [root@cd-k8s-master-etcd-1 k8s]# kubectl certificate approve node-csr-Arp8iLv-iuvwaRnKx0K-EFa1vBXh-byRJzqxqj5jFAs node-csr-w8UjzWe9HAz583WTP0iI7kY8bojJaX0s0cEAyPrx4qo node-csr-zqE1LTAyJ3POfd0wUuYdvfhWsyucuEB4I43LP-5jAxI
    certificatesigningrequest.certificates.k8s.io/node-csr-Arp8iLv-iuvwaRnKx0K-EFa1vBXh-byRJzqxqj5jFAs approved
    certificatesigningrequest.certificates.k8s.io/node-csr-w8UjzWe9HAz583WTP0iI7kY8bojJaX0s0cEAyPrx4qo approved
    certificatesigningrequest.certificates.k8s.io/node-csr-zqE1LTAyJ3POfd0wUuYdvfhWsyucuEB4I43LP-5jAxI approved

    [root@cd-k8s-master-etcd-1 k8s]# kubectl get csr
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-Arp8iLv-iuvwaRnKx0K-EFa1vBXh-byRJzqxqj5jFAs   74s   kubelet-bootstrap   Approved,Issued
    node-csr-w8UjzWe9HAz583WTP0iI7kY8bojJaX0s0cEAyPrx4qo   52m   kubelet-bootstrap   Approved,Issued
    node-csr-zqE1LTAyJ3POfd0wUuYdvfhWsyucuEB4I43LP-5jAxI   73s   kubelet-bootstrap   Approved,Issued

    [root@cd-k8s-master-etcd-1 k8s]# kubectl get nodes
    NAME            STATUS   ROLES   AGE   VERSION
    192.168.1.211   Ready
    cd-k8s-node-1   Ready
    cd-k8s-node-3   Ready

5. Create the kube-proxy certificate

    cat > /opt/k8s/certs/kube-proxy-csr.json << EOF
    {
      "CN": "system:kube-proxy",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cd /opt/k8s/certs/
    cfssl gencert -ca=/opt/k8s/certs/ca.pem \
      -ca-key=/opt/k8s/certs/ca-key.pem \
      -config=/opt/k8s/certs/ca-config.json \
      -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

    ansible node -i /etc/ansible/roles/k8s/hosts -m copy -a "src=/opt/k8s/certs/kube-proxy.pem dest=/etc/kubernetes/ssl/kube-proxy.pem force=yes"
    ansible node -i /etc/ansible/roles/k8s/hosts -m copy -a "src=/opt/k8s/certs/kube-proxy-key.pem dest=/etc/kubernetes/ssl/kube-proxy-key.pem force=yes"

6. Create the kube-proxy kubeconfig file

    cat > /root/files/kube-proxy.conf << EOF
    KUBE_PROXY_OPTS="--logtostderr=false \\
    --v=4 \\
    --log-dir=/var/log/kubernetes/logs \\
    --config=/etc/kubernetes/config/kube-proxy-config.yaml"
    EOF

    cat > /root/files/kube-proxy.kubeconfig << EOF
    apiVersion: v1
    clusters:
    - cluster:
        certificate-authority: /etc/kubernetes/ssl/ca.pem
        server: https://192.168.1.3:8443
      name: kubernetes
    contexts:
    - context:
        cluster: kubernetes
        user: kube-proxy
      name: default
    current-context: default
    kind: Config
    preferences: {}
    users:
    - name: kube-proxy
      user:
        client-certificate: /etc/kubernetes/ssl/kube-proxy.pem
        client-key: /etc/kubernetes/ssl/kube-proxy-key.pem
    EOF

    cat > /root/files/kube-proxy-config.yaml << EOF
    kind: KubeProxyConfiguration
    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    address: 0.0.0.0
    metricsBindAddress: 0.0.0.0:10249
    clientConnection:
      kubeconfig: /etc/kubernetes/config/kube-proxy.kubeconfig
    hostnameOverride: HOSTNAME
    clusterCIDR: 10.0.0.0/24
    mode: ipvs
    ipvs:
      scheduler: "rr"
    iptables:
      masqueradeAll: true
    EOF

    cat > /root/files/kube-proxy.service << EOF
    [Unit]
    Description=Kubernetes Proxy
    After=network.target

    [Service]
    EnvironmentFile=/etc/kubernetes/config/kube-proxy.conf
    ExecStart=/bin/kube-proxy \$KUBE_PROXY_OPTS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

    cat > /etc/ansible/roles/k8s/copy_node-kube-proxy.yaml << EOF
    ---
    - name: Copy Node files to nodes
      hosts: node
      gather_facts: False
      tasks:
        - name: mkdir /etc/kubernetes/config/
          shell: mkdir -p /etc/kubernetes/config/
        - name: mkdir /var/log/kubernetes/logs
          shell: mkdir -p /var/log/kubernetes/logs
        - name: copy kube-proxy.conf file
          copy: src=/root/files/kube-proxy.conf dest=/etc/kubernetes/config/kube-proxy.conf owner=root group=root force=yes
        - name: copy kube-proxy.kubeconfig file
          copy: src=/root/files/kube-proxy.kubeconfig dest=/etc/kubernetes/config/kube-proxy.kubeconfig owner=root group=root force=yes
        - name: copy kube-proxy-config.yaml file
          copy: src=/root/files/kube-proxy-config.yaml dest=/etc/kubernetes/config/kube-proxy-config.yaml owner=root group=root force=yes
        - name: copy kube-proxy.service file
          copy: src=/root/files/kube-proxy.service dest=/usr/lib/systemd/system/kube-proxy.service owner=root group=root force=yes mode=755
        - name: replace kube-proxy-config.yaml info
          shell: HOSTNAME=\`hostname\` && sed -i 's#HOSTNAME#'\${HOSTNAME}'#g' /etc/kubernetes/config/kube-proxy-config.yaml
        - name: add reboot and start
          shell: systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_node-kube-proxy.yaml
    ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl restart kube-proxy"
    ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a "yum install -y ipvsadm"
    ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a "ipvsadm -Ln"

VIII. Configure the Flannel network

1. Create the flannel certificate and private key

    cd /opt/k8s/certs

    cat > flanneld-csr.json << EOF
    {
      "CN": "flanneld",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "ShangHai",
          "L": "ShangHai",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=/opt/k8s/certs/ca.pem \
      -ca-key=/opt/k8s/certs/ca-key.pem \
      -config=/opt/k8s/certs/ca-config.json \
      -profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

    cat > /root/files/flanneld.service << EOF
    [Unit]
    Description=Flanneld overlay address etcd agent
    After=network.target
    After=network-online.target
    Wants=network-online.target
    After=etcd.service
    Before=docker.service

    [Service]
    Type=notify
    ExecStart=/usr/bin/flanneld --ip-masq \\
      -etcd-cafile=/etc/kubernetes/ssl/ca.pem \\
      -etcd-certfile=/etc/kubernetes/ssl/flanneld.pem \\
      -etcd-keyfile=/etc/kubernetes/ssl/flanneld-key.pem \\
      -etcd-endpoints=https://192.168.1.61:2379,https://192.168.1.105:2379,https://192.168.1.209:2379 \\
      -etcd-prefix=/coreos.com/network/
    ExecStartPost=/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    RequiredBy=docker.service
    EOF

    cat > /etc/ansible/roles/k8s/copy_flannel.yaml << EOF
    ---
    - name: Copy flannel files to all hosts
      hosts: all
      gather_facts: False
      tasks:
        - name: copy flanneld.pem file
          copy: src=/opt/k8s/certs/flanneld.pem dest=/etc/kubernetes/ssl/flanneld.pem owner=root group=root force=yes
        - name: copy flanneld-key.pem file
          copy: src=/opt/k8s/certs/flanneld-key.pem dest=/etc/kubernetes/ssl/flanneld-key.pem owner=root group=root force=yes
        - name: copy flanneld file
          copy: src=/root/files/flanneld dest=/usr/bin/flanneld owner=root group=root force=yes mode=755
        - name: copy mk-docker-opts.sh file
          copy: src=/root/files/mk-docker-opts.sh dest=/usr/bin/mk-docker-opts.sh owner=root group=root force=yes mode=755
        - name: copy /root/files/flanneld.service file
          copy: src=/root/files/flanneld.service dest=/usr/lib/systemd/system/flanneld.service owner=root group=root force=yes mode=755
    EOF

    ansible-playbook -i /etc/ansible/roles/k8s/hosts /etc/ansible/roles/k8s/copy_flannel.yaml

    # For etcd 3.3, run:
    etcdctl --ca-file=/etc/kubernetes/ssl/ca.pem --cert-file=/etc/kubernetes/ssl/etcd.pem --key-file=/etc/kubernetes/ssl/etcd-key.pem mkdir /coreos.com/network/
    etcdctl --ca-file=/etc/kubernetes/ssl/ca.pem --cert-file=/etc/kubernetes/ssl/etcd.pem --key-file=/etc/kubernetes/ssl/etcd-key.pem mk
  /coreos.com/network/config '{"Network":"10.0.0.0/16","SubnetLen":24,"Backend":{"Type":"vxlan"}}'

ansible all -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl daemon-reload && systemctl enable flanneld && systemctl start flanneld"

# Check the subnets allocated to every host in the cluster
etcdctl --ca-file=/etc/kubernetes/ssl/ca.pem --cert-file=/etc/kubernetes/ssl/etcd.pem --key-file=/etc/kubernetes/ssl/etcd-key.pem ls /coreos.com/network/subnets

2. Configure Docker to start on the subnet assigned by flannel

cat > /root/files/docker.service << EOF
[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
BindsTo=containerd.service
After=network-online.target firewalld.service containerd.service
Wants=network-online.target
Requires=docker.socket

[Service]
Type=notify
# the default is not to use systemd for cgroups because the delegate issues still
# exists and systemd currently does not support the cgroup feature set required
# for containers run by docker
#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock
EnvironmentFile=/run/flannel/subnet.env
ExecStart=/usr/bin/dockerd \$DOCKER_NETWORK_OPTIONS
ExecReload=/bin/kill -s HUP \$MAINPID
TimeoutSec=0
RestartSec=2
Restart=always

# Note that StartLimit* options were moved from "Service" to "Unit" in systemd 229.
# Both the old, and new location are accepted by systemd 229 and up, so using the old location
# to make them work for either version of systemd.
StartLimitBurst=3

# Note that StartLimitInterval was renamed to StartLimitIntervalSec in systemd 230.
# Both the old, and new name are accepted by systemd 230 and up, so using the old name to make
# this option work for either version of systemd.
StartLimitInterval=60s

# Having non-zero Limit*s causes performance problems due to accounting overhead
# in the kernel. We recommend using cgroups to do container-local accounting.
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity

# Comment TasksMax if your systemd version does not support it.
# Only systemd 226 and above support this option.
TasksMax=infinity

# set delegate yes so that systemd does not reset the cgroups of docker containers
Delegate=yes

# kill only the docker process, not all processes in the cgroup
KillMode=process

[Install]
WantedBy=multi-user.target
EOF

ansible all -i /etc/ansible/roles/k8s/hosts -m copy -a "src=/root/files/docker.service dest=/usr/lib/systemd/system/docker.service mode=755"
ansible all -i /etc/ansible/roles/k8s/hosts -m shell -a "systemctl daemon-reload && systemctl restart docker.service"

3. Create a pod to test the cluster

kubectl run nginx --image=nginx:1.16.0
kubectl expose deployment nginx --port 80 --type LoadBalancer
kubectl get pods -o wide
kubectl get svc

Then open the service in a browser through a node IP and the NodePort reported by kubectl get svc (31635 in this run):

http://192.168.1.211:31635/

4. Scale the application with the scale command

kubectl scale deployments/nginx --replicas=4
kubectl get pods -o wide

IX. Deploy Dashboard v2.0 (beta5)

1. Download and modify the Dashboard deployment manifest

mkdir -p $HOME/dashboard-certs && cd $HOME/dashboard-certs
wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta5/aio/deploy/recommended.yaml

### Edit recommended.yaml as follows:

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort        # added
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001   # added
  selector:
    k8s-app: kubernetes-dashboard

# Many browsers reject the auto-generated certificate, so we create our own;
# comment out the declaration of the kubernetes-dashboard-certs object
#apiVersion: v1
#kind: Secret
#metadata:
#  labels:
#    k8s-app: kubernetes-dashboard
#  name: kubernetes-dashboard-certs
#  namespace: kubernetes-dashboard
#type: Opaque

2. Create the certificate

mkdir -p $HOME/dashboard-certs && cd $HOME/dashboard-certs

# Create the key file
openssl genrsa -out dashboard.key 2048

# Create the certificate signing request
openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=dashboard-cert'

# Self-sign the certificate (the validity period is set at signing time)
openssl x509 -req -days 36000 -in dashboard.csr -signkey dashboard.key -out dashboard.crt

# Create the namespace
kubectl create namespace kubernetes-dashboard
kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous
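Before the certificate is stored in a Secret, it can be worth confirming how long dashboard.crt is actually valid, since the validity period depends on where -days is passed. The following is a minimal sketch, not part of the original procedure; cert_valid_for_days is a hypothetical helper name, and it relies only on the -checkend option of openssl x509.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if a certificate stays valid for at least N more days.
# cert_valid_for_days is a hypothetical helper name, not an openssl command.
cert_valid_for_days() {
  local cert="$1" days="$2"
  # -checkend takes seconds and exits 0 when the certificate
  # will NOT have expired by then, non-zero otherwise.
  openssl x509 -in "$cert" -noout -checkend "$(( days * 86400 ))" > /dev/null
}

# Example calls against the file created in this step:
#   cert_valid_for_days dashboard.crt 365 && echo "valid for at least one year"
#   openssl x509 -in dashboard.crt -noout -subject -enddate
```

If the check fails for a period you expected to be covered, re-sign the CSR with an explicit -days value before creating the Secret.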
# Create the kubernetes-dashboard-certs object
kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt -n kubernetes-dashboard

3. Install the Dashboard

# Install
kubectl create -f ./recommended.yaml

# Check the result
kubectl get pods -A -o wide
kubectl get service -n kubernetes-dashboard -o wide

4. Create the service account

cat > ./dashboard-admin.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: dashboard-admin
  namespace: kubernetes-dashboard
EOF

kubectl apply -f dashboard-admin.yaml

5. Create the cluster role binding

cat > ./dashboard-admin-bind-cluster-role.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: dashboard-admin-bind-cluster-role
  labels:
    k8s-app: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: dashboard-admin
  namespace: kubernetes-dashboard
EOF

kubectl apply -f dashboard-admin-bind-cluster-role.yaml

6. Get the login token

The dashboard-admin service account lives in the kubernetes-dashboard namespace, so its token secret is looked up there:

kubectl -n kubernetes-dashboard describe secret $(kubectl -n kubernetes-dashboard get secret | grep dashboard-admin-token | awk '{print $1}')

7. Check the Dashboard status

kubectl get pods -o wide -A

8. Log in to the Dashboard to verify the service

kubectl cluster-info

Open one of the following URLs (use Firefox):

https://192.168.1.3:8443/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/#/login

or

https://192.168.1.211:30001/#/overview?namespace=default

X. Install the CoreDNS service

1. Download the required files

mkdir /opt/coredns && cd /opt/coredns/
wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/deploy.sh
wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed
chmod +x deploy.sh

# Check the service network range
kubectl get svc

2. Modify the deployment file

Set the $DNS_DOMAIN and $DNS_SERVER_IP variables to their actual values, and change the image referenced after image:.

Here the deploy.sh script is used to make the changes directly:

./deploy.sh -s -r 10.0.0.0/16 -i 10.0.0.2 -d cluster.local > coredns.yaml

Note: the network range is 10.0.0.0/16 (the same as the service-cluster-ip-range value defined for the apiserver, not the cluster-cidr value in kube-proxy), and the DNS address is set to 10.0.0.2.

./deploy.sh -i 10.0.0.2 -d cluster.local > coredns.yaml

The result after the changes:

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
            lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
            pods insecure
            upstream
            fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf
        cache 30
        loop
        reload
        loadbalance
    }
.......
        image: harbor.ttsingops.com/coredns/coredns:1.5.0
.......

kubectl apply -f coredns.yaml
kubectl get svc,pod -n kube-system

3. Change the kubelet DNS parameters

Run this against all node hosts:

ansible node -i /etc/ansible/roles/k8s/hosts -m lineinfile -a 'dest=/etc/kubernetes/config/kubelet regexp="pause-amd64" line="--cluster-dns=10.0.0.2 \ --cluster-domain=cluster.local. \ --resolv-conf=/etc/resolv.conf \ --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0\" " '

ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a 'systemctl daemon-reload && systemctl restart kubelet && systemctl status kubelet'

4. Verify CoreDNS name resolution

kubectl run busybox --image busybox:1.28 --restart=Never -it busybox -- sh

# Run on cd-k8s-node-1
docker exec -it busybox sh

kubectl get pods -A -o wide

/ # nslookup kubernetes.default
/ # nslookup www.baidu.com
/ # cat /etc/resolv.conf

5. Install metrics-server

mkdir -p /root/metrics-server && cd /root/metrics-server
wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.3.6/components.yaml

Change

        imagePullPolicy: IfNotPresent
        args:
          - --cert-dir=/tmp
          - --secure-port=4443

to:

        imagePullPolicy: IfNotPresent
        args:
          - --cert-dir=/tmp
          - --kubelet-insecure-tls
          - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
          - --secure-port=4443

6. Pull the image on the nodes

ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a 'docker pull bluersw/metrics-server-amd64:v0.3.6'
ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a 'docker tag bluersw/metrics-server-amd64:v0.3.6 k8s.gcr.io/metrics-server-amd64:v0.3.6'
ansible node -i /etc/ansible/roles/k8s/hosts -m shell -a 'docker images'

kubectl create -f components.yaml
kubectl get pods \
  --all-namespaces

# Verify
kubectl top node

Log in to the Dashboard web page and check whether monitoring data is displayed.

If the following error appears:

[root@cd-k8s-master-etcd-1 ~]# kubectl top node
Error from server (NotFound): the server could not find the requested resource (get services http:heapster:)

then, on every master node that runs kube-apiserver, edit the configuration file /etc/kubernetes/config/kube-apiserver and add:

--enable-aggregator-routing=true

Finally, restart kube-apiserver.
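The flag can also be added without opening an editor. The following is a minimal sketch under stated assumptions: it assumes the options file keeps all flags in one double-quoted shell variable, the way kube-proxy.conf does with KUBE_PROXY_OPTS. The variable name KUBE_APISERVER_OPTS and the helper name add_opt_flag are illustrative and are not taken from this guide, so check the actual file before using it.

```shell
#!/usr/bin/env bash
# Sketch: idempotently prepend a flag to an options variable such as
#   KUBE_APISERVER_OPTS="--flag-a \
#   --flag-b"
# add_opt_flag and the variable name passed to it are illustrative assumptions.
add_opt_flag() {
  local file="$1" var="$2" flag="$3"
  # Skip the edit when the flag is already present, so reruns are harmless.
  if grep -qF -- "$flag" "$file"; then
    return 0
  fi
  # Insert the flag directly after the opening quote of VAR="...
  # (sed -i in this form is GNU sed, which is what CentOS ships).
  # If no line starts with VAR=" the file is left unchanged.
  sed -i "s#^${var}=\"#&${flag} #" "$file"
}

# Example call on a master node (variable name is an assumption):
#   add_opt_flag /etc/kubernetes/config/kube-apiserver KUBE_APISERVER_OPTS --enable-aggregator-routing=true
#   systemctl restart kube-apiserver
```

Prepending right after the opening quote avoids having to find the last flag and its trailing backslash, which is why the sketch inserts at the start of the variable rather than the end.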